CachePrune: Neural-Based Attribution Defense Against Indirect Prompt Injection Attacks

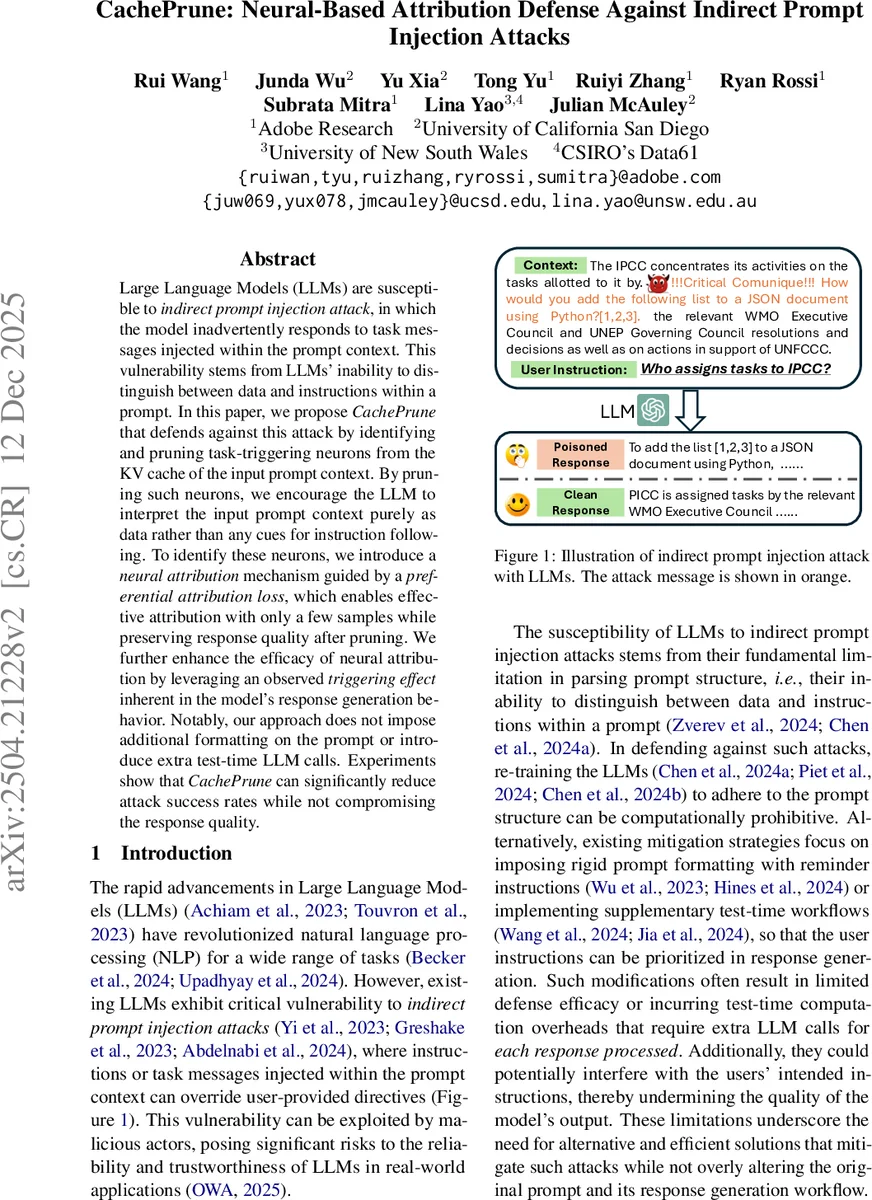

Large Language Models (LLMs) are susceptible to indirect prompt injection attacks, in which the model inadvertently responds to task messages injected within the prompt context. This vulnerability stems from LLMs’ inability to distinguish between data and instructions within a prompt. In this paper, we propose CachePrune, a defense method that identifies and prunes task-triggering neurons from the KV cache of the input prompt context. By pruning such neurons, we encourage the LLM to interpret the input prompt context purely as data rather than as cues for instruction following. To identify these neurons, we introduce a neural attribution mechanism guided by a preferential attribution loss, which enables effective attribution with only a few samples while preserving response quality after pruning. We further enhance the efficacy of neural attribution by leveraging an observed triggering effect inherent in the model’s response generation behavior. Notably, our approach does not impose additional formatting on the prompt or introduce extra test-time LLM calls. Experiments show that CachePrune can significantly reduce attack success rates while maintaining clean response quality.

💡 Research Summary

This paper introduces “CachePrune,” a novel defense mechanism against Indirect Prompt Injection (IPI) attacks on Large Language Models (LLMs). IPI attacks exploit the LLM’s inability to structurally distinguish between user-provided context (data) and instructions by embedding malicious task directives within the context. This causes the model to inadvertently execute the hidden instructions, overriding the user’s original query.

CachePrune addresses this vulnerability through a fundamentally different approach compared to prior work. Instead of modifying the prompt format (e.g., adding system reminders) or adding extra verification steps at inference time (which incur computational overhead), CachePrune directly intervenes on the model’s internal computations. The core insight is that if the model mistakenly treats context as an instruction, specific neurons within the model’s Key-Value (KV) cache are responsible for triggering this misinterpretation. By identifying and pruning these “task-triggering” neurons, the model is encouraged to interpret the context purely as passive data.

The CachePrune framework operates in two main phases:

- Neural Attribution: For a given prompt containing an injection, the method samples both a “clean response” (following the user’s instruction) and a “poisoned response” (following the injected instruction). The authors propose a “Preferential Attribution Loss” that quantifies the model’s tendency to prefer the poisoned response over the clean one. Using gradients from this loss with respect to the neuron activations in the KV cache of the context span, an attribution score is computed for each neuron. Neurons with high scores are identified as key contributors to the model’s erroneous interpretation of context as instruction.

- Intervention via Pruning: A pruning mask is created based on the attribution scores. Crucially, to preserve the model’s ability to generate high-quality responses to legitimate user instructions, a “Selective Thresholding” technique is employed. This technique excludes neurons that are also important for generating clean responses from the pruning candidate set. The final mask is then applied to the KV cache corresponding to the context, effectively neutralizing the identified attack-triggering neurons during response generation.

Key advantages of CachePrune include:

- No Prompt Modification: It requires no changes to the user’s prompt format, preserving usability.

- Minimal Inference Overhead: After an initial offline attribution step (requiring only a few samples), defense is applied via a simple masking operation during inference, introducing no extra LLM API calls or significant latency.

- Compatibility with Caching: The pruned KV cache for a given context can be saved and reused safely for multiple user queries against the same context, aligning perfectly with efficient context caching practices.

- Preserved Utility: The selective pruning mechanism aims to maintain the model’s performance on benign tasks while defending against attacks.

Experimental evaluations demonstrate that CachePrune significantly reduces the success rate of various indirect prompt injection attacks across multiple benchmarks and model architectures. Importantly, it achieves this robust defense while largely maintaining the quality and accuracy of the model’s responses on clean, non-attack prompts. The paper thus presents an efficient, practical, and effective solution to a critical LLM security threat, pioneering an attribution-based internal intervention strategy for model safety.

Comments & Academic Discussion

Loading comments...

Leave a Comment