Empowering Dynamic Urban Navigation with Stereo and Mid-Level Vision

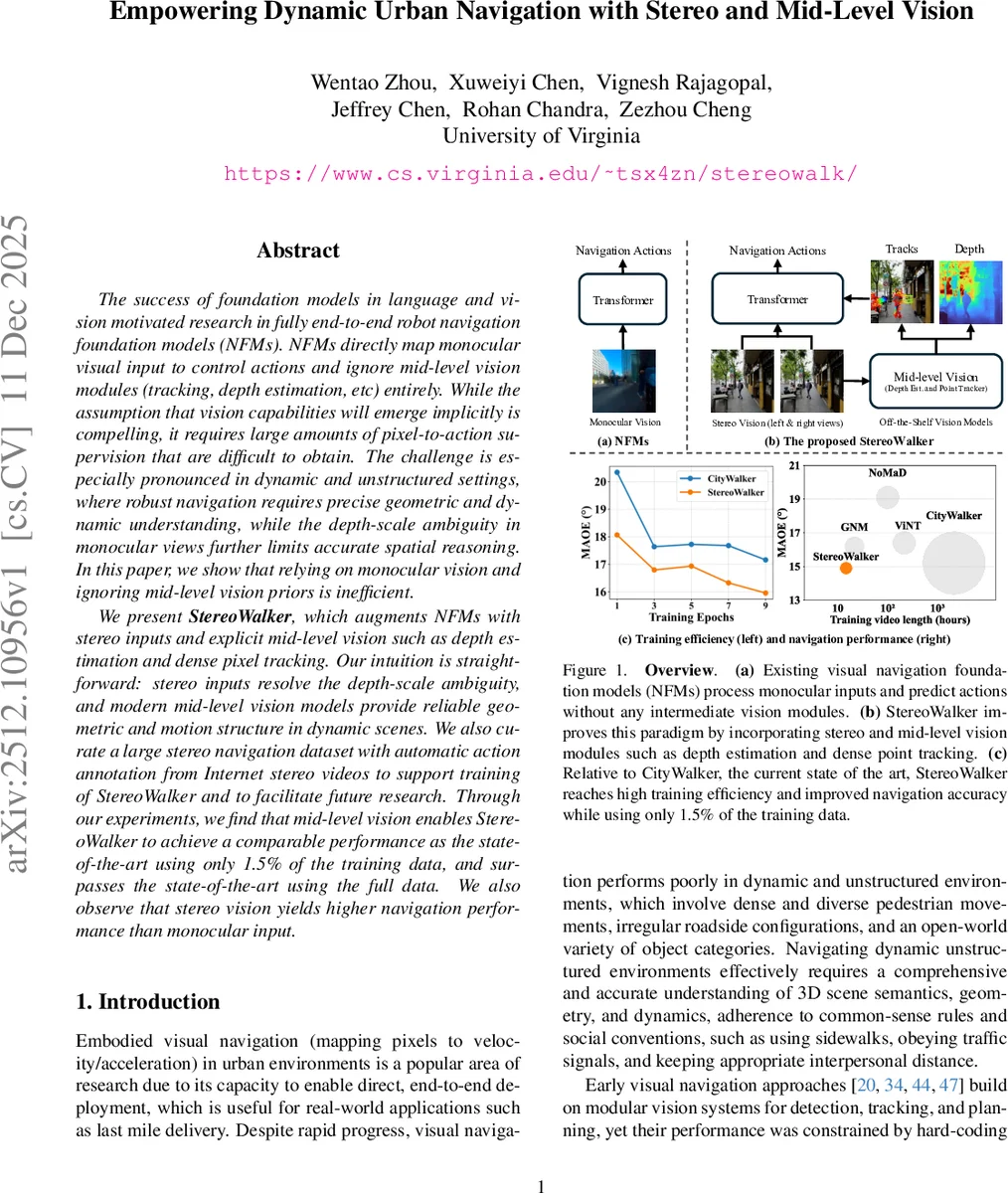

The success of foundation models in language and vision motivated research in fully end-to-end robot navigation foundation models (NFMs). NFMs directly map monocular visual input to control actions and ignore mid-level vision modules (tracking, depth estimation, etc) entirely. While the assumption that vision capabilities will emerge implicitly is compelling, it requires large amounts of pixel-to-action supervision that are difficult to obtain. The challenge is especially pronounced in dynamic and unstructured settings, where robust navigation requires precise geometric and dynamic understanding, while the depth-scale ambiguity in monocular views further limits accurate spatial reasoning. In this paper, we show that relying on monocular vision and ignoring mid-level vision priors is inefficient. We present StereoWalker, which augments NFMs with stereo inputs and explicit mid-level vision such as depth estimation and dense pixel tracking. Our intuition is straightforward: stereo inputs resolve the depth-scale ambiguity, and modern mid-level vision models provide reliable geometric and motion structure in dynamic scenes. We also curate a large stereo navigation dataset with automatic action annotation from Internet stereo videos to support training of StereoWalker and to facilitate future research. Through our experiments, we find that mid-level vision enables StereoWalker to achieve a comparable performance as the state-of-the-art using only 1.5% of the training data, and surpasses the state-of-the-art using the full data. We also observe that stereo vision yields higher navigation performance than monocular input.

💡 Research Summary

This paper introduces “StereoWalker,” a novel framework designed to address the core challenges of visual robot navigation in dynamic urban environments. It critiques the prevailing trend in Navigation Foundation Models (NFMs), which rely solely on monocular RGB input and pursue a purely end-to-end approach from pixels to actions, omitting explicit mid-level vision processing like depth estimation and tracking. The authors identify two fundamental limitations of this paradigm: 1) the inherent depth-scale ambiguity of monocular vision, which hampers accurate spatial reasoning, and 2) the strong and often inefficient assumption that necessary geometric and dynamic understanding will emerge implicitly from vast amounts of pixel-action supervision, which is scarce and costly to obtain.

To overcome these issues, StereoWalker proposes a hybrid paradigm that powerfully augments NFMs with stereo vision and explicit mid-level vision modules. The core intuition is straightforward: stereo input resolves depth-scale ambiguity, providing metric geometric cues, while modern, pre-trained mid-level vision models offer reliable, off-the-shelf representations of scene structure and motion. This explicit injection of knowledge drastically reduces the learning burden on the navigation policy.

The work makes three primary contributions. First, the authors curate and release a large-scale stereo navigation dataset mined from publicly available VR180 first-person walking videos on YouTube. They implement an automated filtering pipeline using a vision-language model (Qwen2-VL) to retain only clips depicting goal-directed walking, resulting in roughly 60 hours of high-quality, diverse stereo footage from global cities. Action labels (trajectories) are automatically generated using a state-of-the-art stereo visual odometry method (MAC-VO), which proves more accurate than the monocular method used by prior work.

Second, they present the StereoWalker model architecture. It takes a short history of stereo (or monocular) image pairs, past trajectory coordinates, and a current sub-goal as input. Instead of compressing each frame into a single global feature, StereoWalker retains fine-grained spatial information by extracting dense patch tokens from three frozen foundation models: DINOv2 for appearance, DepthAnythingV2 for depth, and CoTracker-v3 for point tracks across time. These tokens, concatenating appearance and depth features, are processed through a three-stage transformer block: 1) Tracking-guided attention (inspired by TrackTention) to maintain temporal correspondence and model motion, 2) Global attention to integrate contextual information across the scene, and 3) Target-token attention to focus all relevant information onto a goal-specific token. This token is finally decoded into an arrival probability and immediate control actions (e.g., velocity).

Third, through extensive experiments, the paper demonstrates StereoWalker’s superior data efficiency and performance. The most striking result is that StereoWalker, equipped with mid-level vision, achieves performance comparable to the state-of-the-art monocular NFM (CityWalker) using only 1.5% of the training data. When trained on the full dataset, it surpasses CityWalker’s performance. Ablation studies confirm that stereo vision consistently yields higher navigation accuracy than monocular input. Evaluations are conducted on the established CityWalker benchmark, a new StereoWalker benchmark, and in real-world environments, showcasing the model’s robustness.

In summary, this work provides a compelling case for moving beyond purely end-to-end approaches in embodied AI. By explicitly integrating stereo geometry and powerful mid-level visual priors, StereoWalker achieves unprecedented data efficiency and state-of-the-art performance, offering a scalable and robust pathway toward reliable navigation in complex, dynamic real-world settings. The released dataset and model establish a valuable foundation for future research in this direction.

Comments & Academic Discussion

Loading comments...

Leave a Comment