ClusIR: Towards Cluster-Guided All-in-One Image Restoration

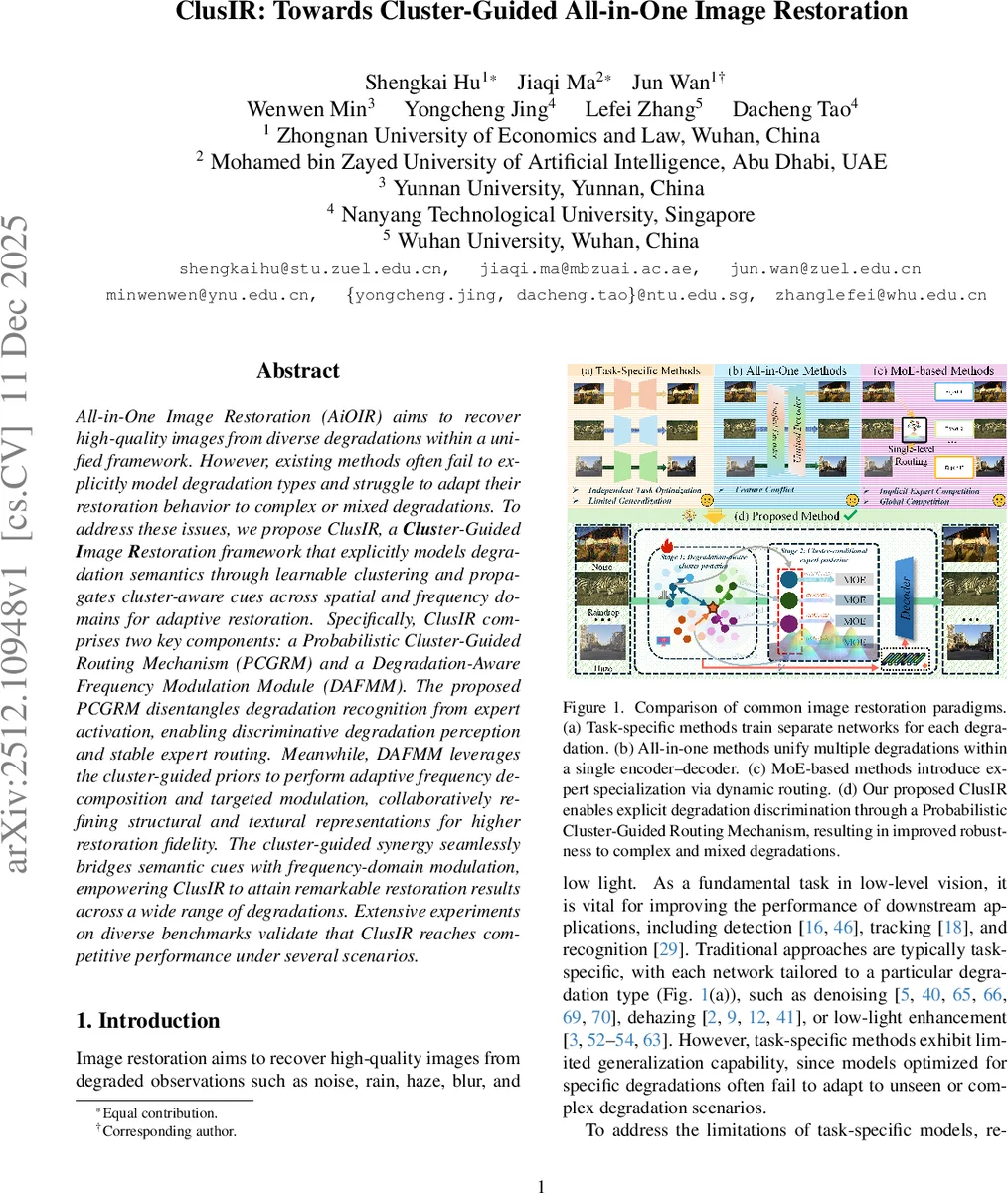

All-in-One Image Restoration (AiOIR) aims to recover high-quality images from diverse degradations within a unified framework. However, existing methods often fail to explicitly model degradation types and struggle to adapt their restoration behavior to complex or mixed degradations. To address these issues, we propose ClusIR, a Cluster-Guided Image Restoration framework that explicitly models degradation semantics through learnable clustering and propagates cluster-aware cues across spatial and frequency domains for adaptive restoration. Specifically, ClusIR comprises two key components: a Probabilistic Cluster-Guided Routing Mechanism (PCGRM) and a Degradation-Aware Frequency Modulation Module (DAFMM). The proposed PCGRM disentangles degradation recognition from expert activation, enabling discriminative degradation perception and stable expert routing. Meanwhile, DAFMM leverages the cluster-guided priors to perform adaptive frequency decomposition and targeted modulation, collaboratively refining structural and textural representations for higher restoration fidelity. The cluster-guided synergy seamlessly bridges semantic cues with frequency-domain modulation, empowering ClusIR to attain remarkable restoration results across a wide range of degradations. Extensive experiments on diverse benchmarks validate that ClusIR reaches competitive performance under several scenarios.

💡 Research Summary

The paper “ClusIR: Towards Cluster-Guided All-in-One Image Restoration” addresses key limitations in All-in-One Image Restoration (AiOIR), which aims to handle diverse image degradations (e.g., noise, rain, haze, blur, low-light) within a single unified model. The authors observe that existing methods, including early unified frameworks and more recent Mixture-of-Experts (MoE) based approaches, often fail to model degradation types explicitly. These methods either rely on implicit feature sharing or induce global competition among experts, which leads to suboptimal performance, semantic entanglement, and instability on complex or mixed degradations.

To overcome these challenges, the authors propose ClusIR, a novel Cluster-Guided Image Restoration framework. The core innovation of ClusIR lies in its two synergistic components designed to bridge semantic degradation understanding with frequency-domain processing.

The first component is the Probabilistic Cluster-Guided Routing Mechanism (PCGRM). PCGRM restructures expert routing in MoE architectures as a hierarchical two-stage procedure. Instead of predicting expert activations directly from input features with a single softmax (which assumes a unimodal distribution), PCGRM first infers a degradation-aware cluster posterior by comparing multi-scale feature representations against a set of learnable, layer-specific cluster prototypes that capture degradation semantics. This first stage explicitly identifies the degradation types likely to be present in the input. In the second stage, a cluster-conditional expert posterior is computed, and only the experts associated with the selected clusters are activated. This factorization separates “recognizing what degradation is present” from “selecting which experts to use for it.” It mitigates global expert interference, stabilizes training, and provides a more interpretable routing path, which is especially beneficial for mixed degradations that are inherently multimodal.
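The two-stage routing described above can be sketched roughly as follows. This is a minimal NumPy illustration, not the authors' implementation: the function name `cluster_guided_routing`, the use of cosine similarity against the prototypes, the linear gate `expert_weights`, the fixed `cluster_to_experts` grouping, and the `top_k` and `tau` parameters are all assumptions made for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def cluster_guided_routing(feat, prototypes, expert_weights,
                           cluster_to_experts, top_k=1, tau=1.0):
    """Hypothetical two-stage router.

    feat:               (D,) pooled feature descriptor
    prototypes:         (C, D) cluster prototypes (learnable in a real model)
    expert_weights:     (E, D) linear gate, one row per expert (assumption)
    cluster_to_experts: list of C lists of expert indices (assumption)
    Returns the cluster posterior (C,) and the expert routing weights (E,).
    """
    # Stage 1: cluster posterior from cosine similarity to each prototype.
    f = feat / (np.linalg.norm(feat) + 1e-8)
    p = prototypes / (np.linalg.norm(prototypes, axis=1, keepdims=True) + 1e-8)
    cluster_post = softmax(p @ f / tau)

    # Keep the top_k most likely clusters and renormalise their mass.
    selected = np.argsort(cluster_post)[::-1][:top_k]
    sel_prob = cluster_post[selected] / cluster_post[selected].sum()

    # Stage 2: softmax only over the experts tied to each selected cluster,
    # weighted by that cluster's (renormalised) probability.
    logits = expert_weights @ feat
    routing = np.zeros(expert_weights.shape[0])
    for c, pc in zip(selected, sel_prob):
        idx = np.asarray(cluster_to_experts[c])
        routing[idx] += pc * softmax(logits[idx])
    return cluster_post, routing
```

Under these assumptions, experts outside the selected clusters receive exactly zero weight, so they never compete with the active ones; with `top_k > 1` the routing weights form a mixture over clusters, which is one way to represent a mixed degradation.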

The second component is the Degradation-Aware Frequency Modulation Module (DAFMM). Because different degradations affect different frequency components (e.g., haze mainly alters low-frequency structures, whereas noise corrupts high-frequency textures), DAFMM uses the cluster-aware semantic prompts generated by PCGRM to guide fine-grained frequency-domain restoration. It first decomposes features into a low-frequency subband (LL) and three high-frequency subbands (LH, HL, HH) using the Discrete Wavelet Transform (DWT). Since a standard DWT does not fully separate structural from textural information, a sub-module called the Frequency Self-Mining Block (FSB) adaptively refines the low-frequency component to extract purer structural information along with the residual detail. All frequency components are then modulated under the guidance of the cluster prompts and recombined by the inverse transform. This allows structures and textures to be enhanced selectively according to the identified degradation type.
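As a rough illustration of the decompose, modulate, and reconstruct pipeline, the sketch below implements a single-level Haar DWT in NumPy and scales each subband by a gain derived from the cluster posterior. It rests on several assumptions: the Haar wavelet is chosen only for simplicity, the scalar `gains` table is a hypothetical stand-in for the learned prompt-guided modulation, and the FSB refinement step is omitted entirely.

```python
import numpy as np

def haar_dwt2(x):
    """Single-level 2D Haar DWT of an (H, W) array with even H and W."""
    a, b = x[0::2, 0::2], x[0::2, 1::2]
    c, d = x[1::2, 0::2], x[1::2, 1::2]
    ll = (a + b + c + d) / 2.0   # low-pass in both directions
    lh = (a - b + c - d) / 2.0   # difference along the width axis
    hl = (a + b - c - d) / 2.0   # difference along the height axis
    hh = (a - b - c + d) / 2.0   # diagonal detail
    # Note: LH/HL naming conventions differ between libraries.
    return ll, lh, hl, hh

def haar_idwt2(ll, lh, hl, hh):
    """Inverse of haar_dwt2."""
    h, w = ll.shape
    x = np.empty((2 * h, 2 * w), dtype=ll.dtype)
    x[0::2, 0::2] = (ll + lh + hl + hh) / 2.0
    x[0::2, 1::2] = (ll - lh + hl - hh) / 2.0
    x[1::2, 0::2] = (ll + lh - hl - hh) / 2.0
    x[1::2, 1::2] = (ll - lh - hl + hh) / 2.0
    return x

def modulate(x, cluster_post, gains):
    """Scale each subband by its expected gain under the cluster posterior.

    cluster_post: (C,) cluster probabilities
    gains:        (C, 4) hypothetical per-cluster gains for (LL, LH, HL, HH)
    """
    g = cluster_post @ gains                     # (4,) blended gains
    bands = haar_dwt2(x)
    return haar_idwt2(*(gi * band for gi, band in zip(g, bands)))
```

With all gains set to one, `modulate` returns its input unchanged, since the Haar transform is perfectly invertible. A cluster whose gains suppress LH, HL, and HH would act as a smoother, while one that boosts them would sharpen texture; in the actual module these adjustments are learned rather than fixed scalars.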

Experiments on multiple benchmarks covering denoising, dehazing, deraining, and low-light enhancement show that ClusIR achieves state-of-the-art or highly competitive performance relative to existing AiOIR and MoE-based methods. The gains are reported to be most visible in complex and mixed degradation scenarios, where the model shows improved robustness and generalization. In conclusion, ClusIR advances AiOIR by combining explicit, cluster-based degradation modeling with adaptive frequency-domain processing, yielding more stable, interpretable, and effective restoration across a wide range of image degradations.
