LDP: Parameter-Efficient Fine-Tuning of Multimodal LLM for Medical Report Generation

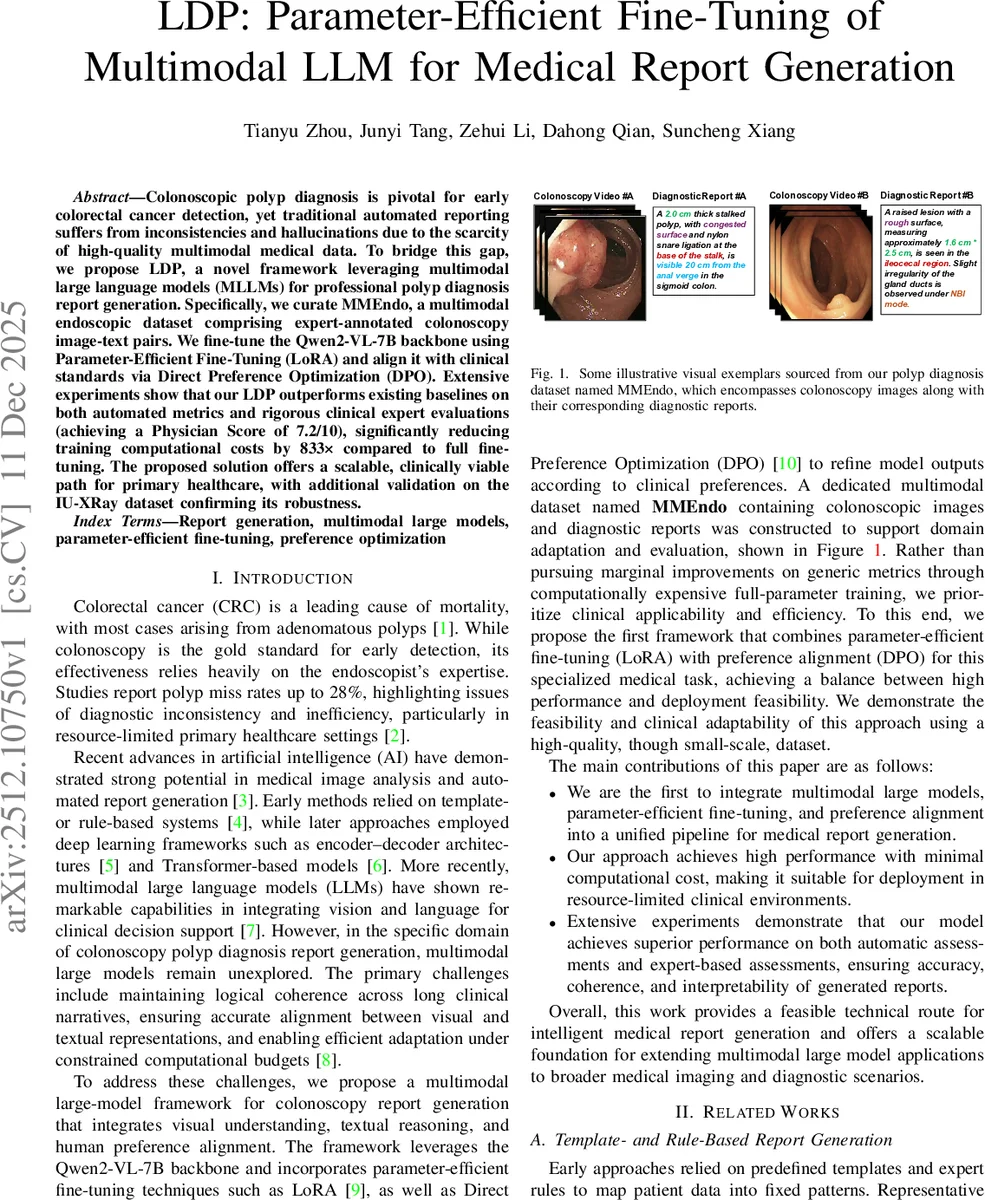

Colonoscopic polyp diagnosis is pivotal for early colorectal cancer detection, yet traditional automated reporting suffers from inconsistencies and hallucinations due to the scarcity of high-quality multimodal medical data. To bridge this gap, we propose LDP, a novel framework leveraging multimodal large language models (MLLMs) for professional polyp diagnosis report generation. Specifically, we curate MMEndo, a multimodal endoscopic dataset comprising expert-annotated colonoscopy image-text pairs. We fine-tune the Qwen2-VL-7B backbone using Parameter-Efficient Fine-Tuning (LoRA) and align it with clinical standards via Direct Preference Optimization (DPO). Extensive experiments show that our LDP outperforms existing baselines on both automated metrics and rigorous clinical expert evaluations (achieving a Physician Score of 7.2/10), significantly reducing training computational costs by 833x compared to full fine-tuning. The proposed solution offers a scalable, clinically viable path for primary healthcare, with additional validation on the IU-XRay dataset confirming its robustness.

💡 Research Summary

This paper introduces LDP, a novel framework for automated generation of professional colonoscopic polyp diagnosis reports, addressing key challenges of inconsistency and hallucinations in traditional methods. Colorectal cancer often originates from adenomatous polyps, and colonoscopy is the gold standard for early detection. However, the effectiveness of diagnosis heavily relies on the endoscopist’s expertise, leading to variability, especially in resource-limited primary healthcare settings.

The core innovation of LDP lies in its unified pipeline that efficiently adapts a powerful Multimodal Large Language Model (MLLM) to a specialized medical domain while aligning its outputs with clinical standards. The framework is built upon three pillars. First, the authors curated MMEndo, a high-quality multimodal dataset comprising 2,314 expert-annotated colonoscopy image-text pairs. This dataset was constructed through meticulous keyframe extraction, data cleaning, and a “frame-to-sentence” alignment strategy to ensure precise correspondence between visual findings and textual descriptions.

Second, for domain adaptation, the pre-trained Qwen2-VL-7B model was fine-tuned using a Parameter-Efficient Fine-Tuning (PEFT) technique called LoRA (Low-Rank Adaptation). Instead of retraining all model parameters—a computationally prohibitive process—LoRA freezes the original weights and injects trainable low-rank matrices into the self-attention layers. This approach drastically reduces the number of trainable parameters, making it feasible to adapt the large model with limited resources (e.g., four NVIDIA RTX 4090 GPUs).

Third, to enhance the clinical quality and relevance of the generated reports beyond basic fine-tuning, the framework employs Direct Preference Optimization (DPO). DPO aligns the model with human expert preferences by learning from a dataset of “preferred” reports (written by senior endoscopists) and “non-preferred” reports (generated by the base model, which may contain hallucinations). Unlike complex Reinforcement Learning from Human Feedback (RLHF), DPO directly optimizes the policy, simplifying training and effectively steering the model towards concise, accurate, and professionally styled outputs.

Extensive experiments were conducted on the proprietary MMEndo dataset and the public IU-XRay dataset to evaluate generalization. Performance was measured using standard NLP metrics (BLEU, METEOR, ROUGE-L, CIDEr) and a novel Physician Score (PS), a rigorous manual evaluation by seven expert clinicians rating reports on clinical accuracy, factual completeness, and terminology appropriateness.

The results demonstrate that the full LDP framework (integrating LoRA and DPO) outperforms all strong baselines, including the vanilla Qwen2-VL-7B, models with parameter initialization, and other PEFT methods like AdaLoRA. Crucially, LDP achieved a top Physician Score of 7.2 out of 10, indicating its outputs are considered “Good” and clinically useful by experts. This represents a significant improvement over the LoRA-only model (PS: 6.7), highlighting the added value of preference optimization. Furthermore, the approach achieved this high performance while reducing computational cost by a factor of 833 compared to full fine-tuning.

In conclusion, LDP presents a scalable and clinically viable pathway for deploying advanced AI assistance in primary healthcare. By successfully combining parameter-efficient fine-tuning with direct preference alignment, it offers a practical blueprint for adapting large multimodal models to specialized, resource-constrained medical imaging tasks beyond colonoscopy, such as chest X-ray reporting.

Comments & Academic Discussion

Loading comments...

Leave a Comment