Video Depth Propagation

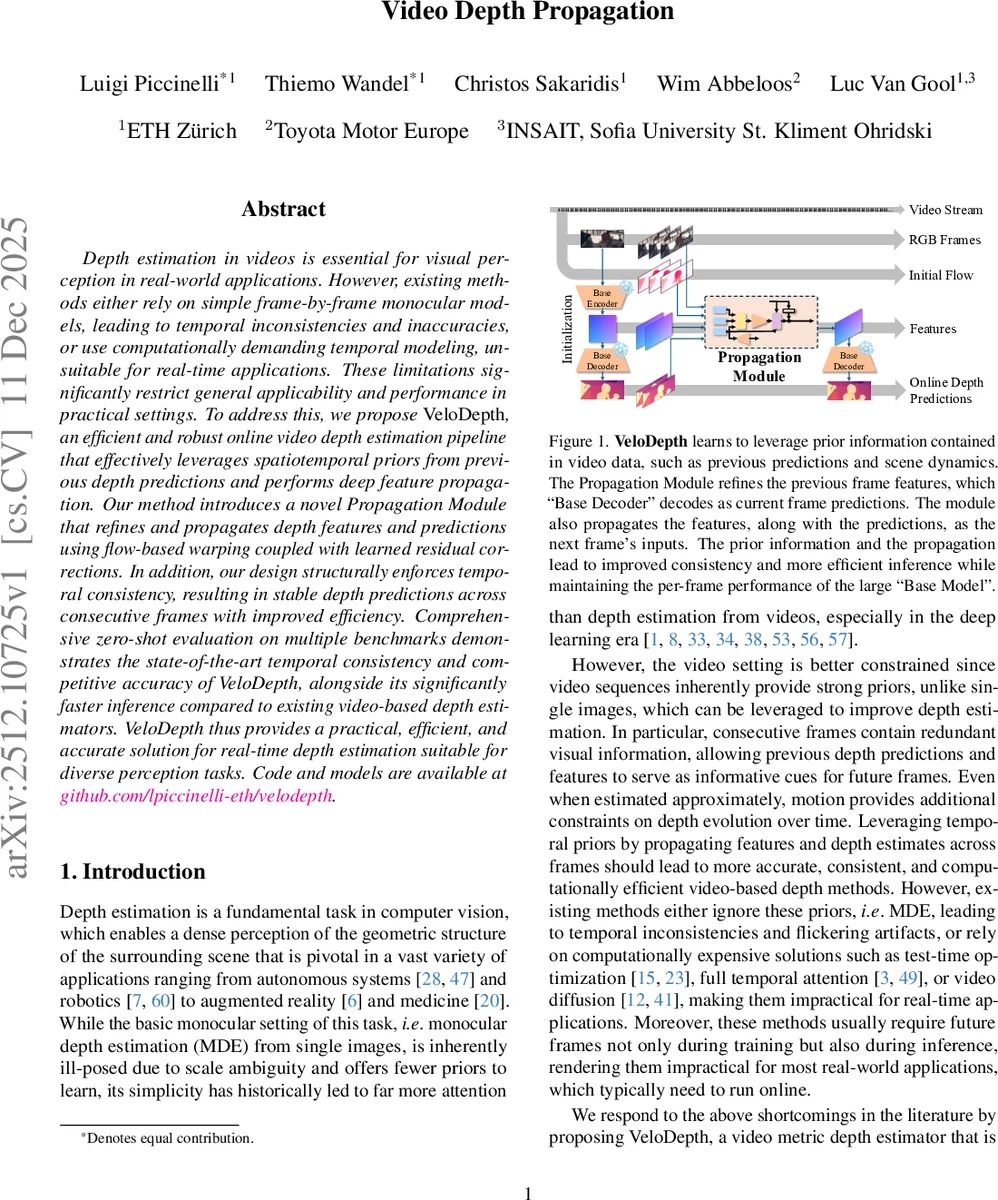

Depth estimation in videos is essential for visual perception in real-world applications. However, existing methods either rely on simple frame-by-frame monocular models, leading to temporal inconsistencies and inaccuracies, or use computationally demanding temporal modeling, unsuitable for real-time applications. These limitations significantly restrict general applicability and performance in practical settings. To address this, we propose VeloDepth, an efficient and robust online video depth estimation pipeline that effectively leverages spatiotemporal priors from previous depth predictions and performs deep feature propagation. Our method introduces a novel Propagation Module that refines and propagates depth features and predictions using flow-based warping coupled with learned residual corrections. In addition, our design structurally enforces temporal consistency, resulting in stable depth predictions across consecutive frames with improved efficiency. Comprehensive zero-shot evaluation on multiple benchmarks demonstrates the state-of-the-art temporal consistency and competitive accuracy of VeloDepth, alongside its significantly faster inference compared to existing video-based depth estimators. VeloDepth thus provides a practical, efficient, and accurate solution for real-time depth estimation suitable for diverse perception tasks. Code and models are available at https://github.com/lpiccinelli-eth/velodepth

💡 Research Summary

The paper “Video Depth Propagation” introduces VeloDepth, a novel pipeline for efficient and robust online video depth estimation. It addresses the critical limitations of existing approaches: frame-by-frame monocular methods suffer from temporal inconsistencies and flickering, while video-based methods often rely on computationally heavy offline processing or future frame information, making them unsuitable for real-time applications.

VeloDepth’s core innovation is the “Propagation Module,” which is designed to leverage spatiotemporal priors inherent in video sequences. The module operates on a simple yet powerful principle: instead of predicting depth for each frame from scratch, it propagates and refines information from the previous frame to bootstrap the estimation for the current frame. The process begins with an initial optical flow estimate between consecutive frames, which is then refined by a lightweight network. This refined flow is used to warp the previous frame’s depth prediction and, more importantly, its intermediate feature maps (from the encoder “neck”) forward in time. These warped features serve as a strong geometric prior for the current frame.

However, simple warping is prone to errors in occluded regions or where flow is inaccurate. To mitigate this, the Propagation Module employs a sophisticated multi-modal fusion and gating mechanism. It takes three inputs: the current RGB frame, the warped depth features, and the flow features. These are fused, and the network learns to output a “residual correction” tensor. Crucially, this correction is gated by signals derived from the flow features. One gate controls how much of the warped depth information to trust during fusion, and another gate controls the application of the final residual correction to the warped previous features. This dual-gating allows the model to adaptively decide when to rely on propagated information and when to apply corrections, preventing error accumulation.

The corrected features are then passed to a pre-trained, powerful “Base Decoder” (e.g., from ZoeDepth or Metric3D) to generate the final depth map for the current frame. This architecture structurally enforces temporal consistency because the current prediction is directly influenced by the previous one. It also achieves high efficiency because the Propagation Module only needs to learn the simpler residual mapping, while the heavy lifting of depth decoding is handled by the frozen Base Decoder.

Furthermore, the paper proposes an improved temporal consistency loss for training. Unlike previous losses that directly compare depth values without considering camera motion, VeloDepth’s loss operates on metric radial distance, which is invariant to camera rotation. It also aligns the median of 3D point clouds between frames to compensate for translation, enabling robust consistency enforcement without requiring explicit camera poses.

Extensive zero-shot evaluations on multiple benchmarks (KITTI, NYU-Depth-v2, DDAD, Sintel) demonstrate VeloDepth’s strengths. It maintains competitive per-frame accuracy compared to state-of-the-art monocular models while achieving superior temporal consistency scores. Most notably, it offers a dramatic speed advantage, running significantly faster than other video-based depth estimators, making it a practical solution for real-time perception tasks. The work also introduces a keyframe strategy to skip redundant computations in static scenes, further boosting efficiency. In summary, VeloDepth successfully balances accuracy, temporal stability, and inference speed, presenting a compelling approach for online video depth estimation.

Comments & Academic Discussion

Loading comments...

Leave a Comment