Mastering Diverse, Unknown, and Cluttered Tracks for Robust Vision-Based Drone Racing

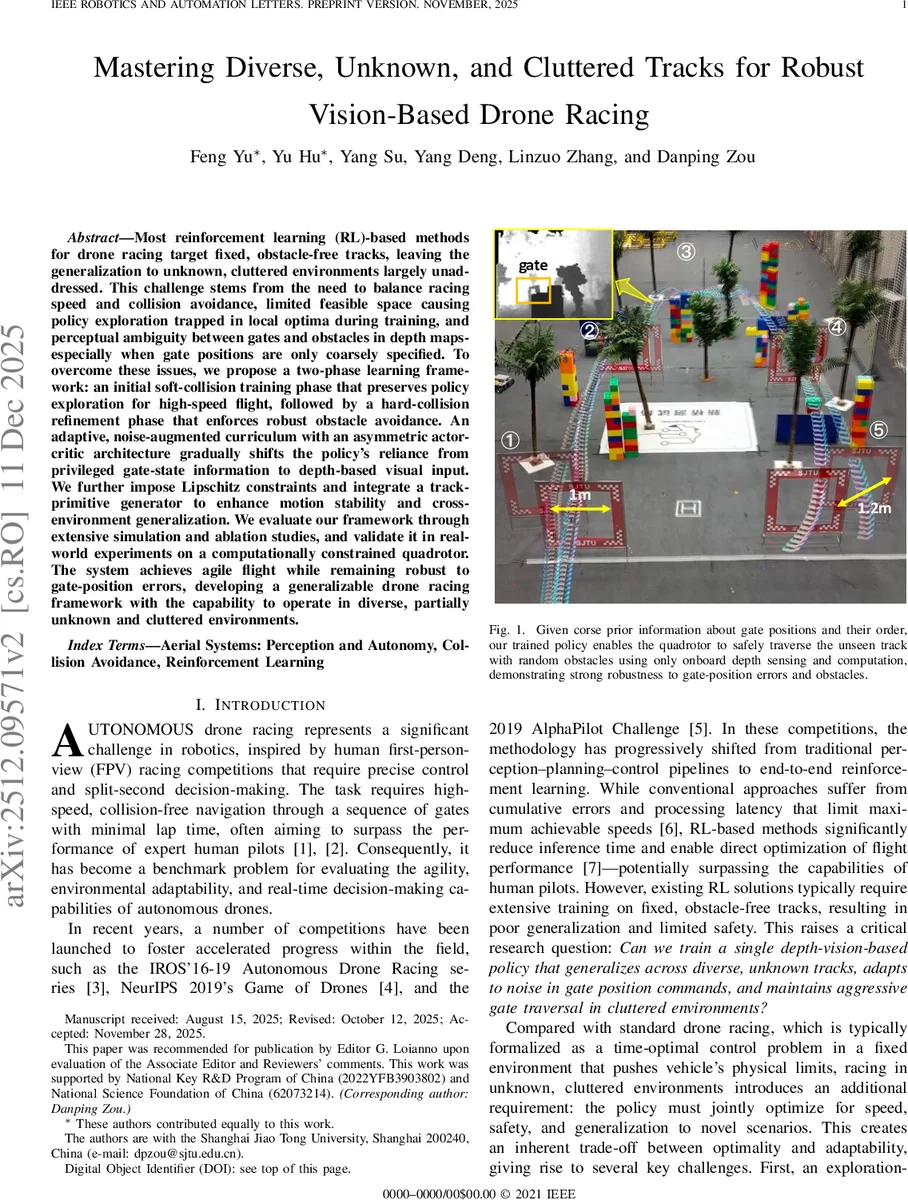

Most reinforcement learning (RL)-based methods for drone racing target fixed, obstacle-free tracks, leaving generalization to unknown, cluttered environments largely unaddressed. This challenge stems from three factors: the need to balance racing speed against collision avoidance; the limited feasible space, which traps policy exploration in local optima during training; and the perceptual ambiguity between gates and obstacles in depth maps, especially when gate positions are only coarsely specified. To overcome these issues, we propose a two-phase learning framework: an initial soft-collision training phase that preserves policy exploration for high-speed flight, followed by a hard-collision refinement phase that enforces robust obstacle avoidance. An adaptive, noise-augmented curriculum with an asymmetric actor-critic architecture gradually shifts the policy’s reliance from privileged gate-state information to depth-based visual input. We further impose Lipschitz constraints and integrate a track-primitive generator to enhance motion stability and cross-environment generalization. We evaluate our framework through extensive simulation and ablation studies, and validate it in real-world experiments on a computationally constrained quadrotor. The system achieves agile flight while remaining robust to gate-position errors, yielding a generalizable drone racing framework capable of operating in diverse, partially unknown, and cluttered environments. https://yufengsjtu.github.io/MasterRacing.github.io/

💡 Research Summary

This paper tackles a long‑standing limitation of reinforcement‑learning (RL)-based drone racing: its inability to generalize to unknown, cluttered tracks while maintaining high speed. The authors propose a comprehensive two‑phase learning framework that explicitly separates the acquisition of aggressive racing skills from the development of robust obstacle avoidance.

In the first “soft‑collision” phase, the drone is allowed to intersect obstacles without triggering a physical collision response; instead, a mild penalty proportional to the number of penetrated collision points is applied. This design prevents early episode termination, enabling long trajectories and rich exploration of high‑speed flight dynamics. The second “hard‑collision” phase re‑introduces realistic rigid‑body collisions, imposing a large negative reward and immediate episode termination upon impact. By fine‑tuning the policy under these stricter conditions, the agent learns precise avoidance maneuvers while retaining the speed‑focused behaviors learned earlier.
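The difference between the two phases lies only in the collision term of the reward and in whether a collision ends the episode. The minimal Python sketch below illustrates that switch. The names `collision_reward` and `StepResult` and both weight values are hypothetical placeholders chosen for illustration; they are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    reward: float  # collision contribution to the per-step reward
    done: bool     # whether the episode terminates at this step


def collision_reward(phase: str, n_penetrated: int,
                     soft_weight: float = 0.05,
                     hard_penalty: float = 10.0) -> StepResult:
    """Collision term for the soft- and hard-collision phases.

    soft_weight and hard_penalty are hypothetical values, not the paper's.
    """
    if phase == "soft":
        # Soft phase: obstacles are penetrable; apply a mild penalty
        # proportional to the number of penetrated collision points and
        # keep the episode running so exploration is not cut short.
        return StepResult(reward=-soft_weight * n_penetrated, done=False)
    if phase == "hard":
        # Hard phase: any contact is a real collision, giving a large
        # fixed penalty and immediate termination.
        if n_penetrated > 0:
            return StepResult(reward=-hard_penalty, done=True)
        return StepResult(reward=0.0, done=False)
    raise ValueError(f"unknown phase: {phase!r}")
```

For example, `collision_reward("soft", 4)` yields a small negative reward with `done=False`, while `collision_reward("hard", 1)` ends the episode. Keeping the episode alive in the soft phase is what lets long, high-speed trajectories accumulate before the stricter termination rule is introduced.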

To bridge the gap between privileged gate‑state information (available during training) and raw depth perception (available at deployment), the authors introduce an adaptive noise‑augmented curriculum on the next‑gate position commands. A uniform noise distribution U(‑µ, µ) is applied to each command, and µ is increased when the agent successfully passes more than three gates, decreased when it fails, and left unchanged otherwise. This performance‑driven schedule gradually degrades the reliability of the command signal, forcing the policy to rely increasingly on depth images.
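The performance-driven update of µ can be sketched as a small Python class. The step size, the bounds on µ, and the class name `GateNoiseCurriculum` are hypothetical. The summary also does not say what happens when an episode passes more than three gates and then crashes. This sketch assumes success takes precedence in that case.

```python
import random


class GateNoiseCurriculum:
    """Adaptive bound mu for U(-mu, mu) noise on next-gate commands.

    Hypothetical sketch: step, mu_min, mu_max are illustrative values.
    """

    def __init__(self, mu_init=0.0, step=0.05, mu_min=0.0, mu_max=1.0,
                 gate_threshold=3):
        self.mu = mu_init
        self.step = step
        self.mu_min = mu_min
        self.mu_max = mu_max
        self.gate_threshold = gate_threshold

    def update(self, gates_passed: int, failed: bool) -> float:
        # More than `gate_threshold` gates counts as success (checked first,
        # an assumption); otherwise a failure lowers mu; else mu is unchanged.
        if gates_passed > self.gate_threshold:
            self.mu = min(self.mu + self.step, self.mu_max)
        elif failed:
            self.mu = max(self.mu - self.step, self.mu_min)
        return self.mu

    def perturb(self, gate_pos, rng=random):
        # Add independent U(-mu, mu) noise to each coordinate of the command.
        return [x + rng.uniform(-self.mu, self.mu) for x in gate_pos]
```

Because µ only grows while the agent keeps succeeding, the command signal becomes unreliable at roughly the pace the policy can tolerate, which is what pushes it toward the depth input.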

The curriculum is coupled with an asymmetric actor‑critic architecture. The actor receives both noisy command inputs and depth observations, but as the noise grows, it is compelled to extract gate‑relevant features directly from the depth map. The critic, however, continues to use the ground‑truth gate positions, providing a stable learning signal and preventing divergence. This asymmetric design enables a smooth transition from command‑driven to perception‑driven control.
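The split between actor and critic inputs can be made concrete with a short Python sketch. The field layout of `StepObs` and the helper names are hypothetical. The sketch also assumes the critic omits the depth image entirely; the summary only states that the critic uses ground‑truth gate positions.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class StepObs:
    depth: List[float]      # flattened (downsampled) depth image
    gate_true: List[float]  # ground-truth next-gate position (privileged)
    state: List[float]      # proprioceptive state, e.g. velocity/attitude


def actor_input(obs: StepObs, mu: float, rng: random.Random) -> List[float]:
    # Actor: noisy gate command plus depth. As mu grows, the command is less
    # trustworthy, so gate information must increasingly come from depth.
    noisy_gate = [g + rng.uniform(-mu, mu) for g in obs.gate_true]
    return obs.state + noisy_gate + obs.depth


def critic_input(obs: StepObs) -> List[float]:
    # Critic: used only during training in simulation, so it can read the
    # exact gate position (assumed here to exclude depth).
    return obs.state + obs.gate_true
```

Only the actor is needed at deployment, so the critic's privileged input never has to be available on the real drone.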

To ensure smooth, safe motions, a Lipschitz continuity regularizer is added to the network loss, limiting the rate of change of the policy output with respect to its inputs. Additionally, a “track primitive generator” creates a diverse set of training tracks by randomly combining three basic primitives—circular, zig‑zag, and elliptical—each with variations in direction and curvature. This diversity mitigates over‑fitting to a single layout and promotes transferability to novel environments.
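One common way to express a Lipschitz regularizer is to penalize any local slope of the policy that exceeds a chosen bound L. The Python sketch below estimates that slope by finite differences under small random input perturbations. This is a swapped‑in, sampling‑based variant for illustration. The paper's exact formulation (for example, a gradient‑norm penalty) may differ. The names and the bound `lip_bound` are hypothetical. The sketch only evaluates the penalty value. In actual training it would be computed inside an autodiff framework so it can be back‑propagated through the policy network.

```python
import math
import random
from typing import Callable, List, Optional, Sequence

Vec = List[float]


def _norm(v: Sequence[float]) -> float:
    return math.sqrt(sum(x * x for x in v))


def lipschitz_penalty(policy: Callable[[Vec], Vec], inputs: Sequence[Vec],
                      lip_bound: float, eps: float = 1e-3,
                      rng: Optional[random.Random] = None) -> float:
    """Sampled finite-difference Lipschitz penalty (hypothetical sketch).

    For each input x, draw a small Gaussian perturbation d, estimate the
    local slope ||pi(x + d) - pi(x)|| / ||d||, and average the squared
    excess over lip_bound. Slopes at or below the bound cost nothing.
    """
    if rng is None:
        rng = random.Random(0)
    total = 0.0
    for x in inputs:
        d = [rng.gauss(0.0, eps) for _ in x]
        dn = _norm(d)
        if dn == 0.0:
            continue  # degenerate sample; skip it
        y0 = policy(list(x))
        y1 = policy([xi + di for xi, di in zip(x, d)])
        slope = _norm([b - a for a, b in zip(y0, y1)]) / dn
        total += max(0.0, slope - lip_bound) ** 2
    return total / max(len(inputs), 1)
```

A linear policy that scales its input by 2 incurs no penalty under a bound of 3, whereas one that scales by 5 is penalized by roughly (5 − 3)² = 4 per sample. The one‑sided hinge leaves gentle policies untouched and only discourages abrupt output changes.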

Training is performed in DiffLab, a high‑fidelity, massively parallel simulator built on NVIDIA Isaac Lab. DiffLab supports domain randomization, system identification, and realistic aerodynamics, thereby narrowing the sim‑to‑real gap. After training, the policy is deployed on a lightweight quadrotor equipped with an Intel RealSense D435i depth camera and a Raspberry Pi Zero 3W for onboard inference, with an external VICON motion‑capture system providing state estimation.

Extensive simulation studies demonstrate that the two‑phase approach reduces average lap time by roughly 12 % and cuts collision rates by over 90 % compared to baseline RL methods that train only on obstacle‑free tracks. Real‑world experiments confirm that the policy tolerates gate‑position errors up to ±0.3 m and navigates through randomly placed obstacles without significant performance degradation.

In summary, the paper contributes (1) a novel soft‑to‑hard collision training schedule that balances exploration and safety, (2) an adaptive noise curriculum combined with an asymmetric actor‑critic to shift reliance from privileged commands to raw depth perception, and (3) Lipschitz regularization plus a primitive‑based track generator to enforce smooth control and broad generalization. The resulting system operates in real time on a computationally constrained platform, achieving agile, safe, and generalizable drone racing in diverse, partially unknown, and cluttered environments.
