From Generated Human Videos to Physically Plausible Robot Trajectories

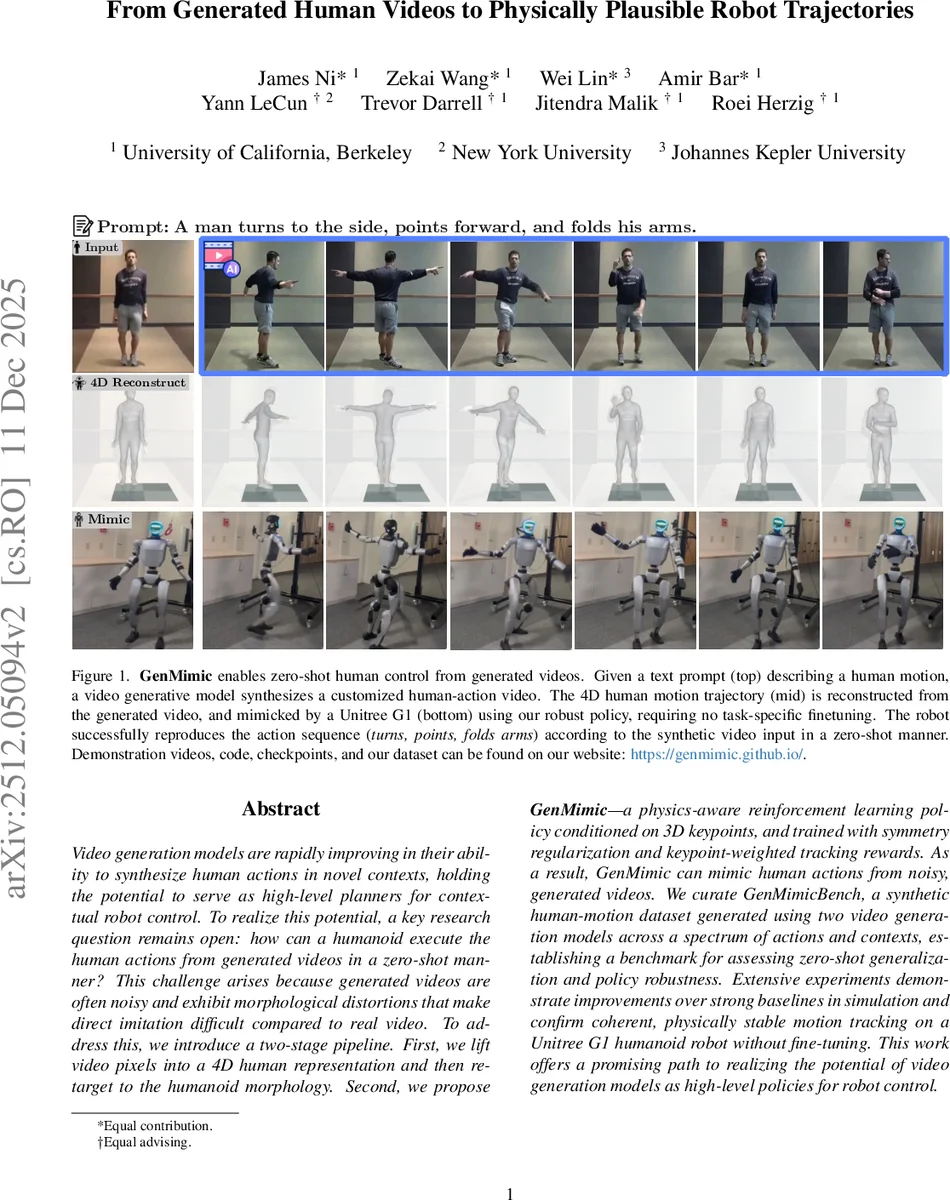

Video generation models are rapidly improving in their ability to synthesize human actions in novel contexts, holding the potential to serve as high-level planners for contextual robot control. To realize this potential, a key research question remains open: how can a humanoid execute the human actions from generated videos in a zero-shot manner? This challenge arises because generated videos are often noisy and exhibit morphological distortions that make direct imitation difficult compared to real video. To address this, we introduce a two-stage pipeline. First, we lift video pixels into a 4D human representation and then retarget to the humanoid morphology. Second, we propose GenMimic-a physics-aware reinforcement learning policy conditioned on 3D keypoints, and trained with symmetry regularization and keypoint-weighted tracking rewards. As a result, GenMimic can mimic human actions from noisy, generated videos. We curate GenMimicBench, a synthetic human-motion dataset generated using two video generation models across a spectrum of actions and contexts, establishing a benchmark for assessing zero-shot generalization and policy robustness. Extensive experiments demonstrate improvements over strong baselines in simulation and confirm coherent, physically stable motion tracking on a Unitree G1 humanoid robot without fine-tuning. This work offers a promising path to realizing the potential of video generation models as high-level policies for robot control.

💡 Research Summary

The rapid advancement of video generation models has opened new frontiers for robotics, particularly in using synthesized human actions as high-level planners for humanoid control. However, a significant gap exists between the visual output of these models and the physical requirements of robots. Generated videos often suffer from morphological distortions, pixel-level noise, and temporal inconsistencies, which make direct imitation by a robot physically dangerous or impossible. This paper introduces “GenMimic,” a robust two-stage pipeline designed to bridge this gap by transforming noisy, generated human videos into physically plausible and stable robot trajectories.

The proposed methodology consists of two sophisticated stages. The first stage focuses on structural transformation: lifting 2D video pixels into a 4D human representation and subsequently retargeting this motion to the specific morphology of a humanoid robot. This process effectively filters out much of the visual noise by converting unstructured pixels into structured geometric data. The second stage introduces the GenMimic policy, a physics-aware reinforcement learning (RL) framework. To combat the inherent artifacts of generative models, the authors implement three critical technical innovations: 3D keypoint conditioning to provide a structural guide, symmetry regularization to correct the asymmetrical distortions common in generative outputs, and keypoint-weighted tracking rewards to prioritize the accuracy of essential joints. This ensures that the robot maintains physical stability even when the input video is visually flawed.

To rigorously evaluate the effectiveness of GenMimic, the researchers developed “GenMimicBench,” a comprehensive synthetic dataset generated from two different video generation models, covering a wide array of human actions and environmental contexts. The experimental results demonstrate that GenMimic significantly outperforms existing strong baselines in simulated environments. Most impressively, the policy demonstrates remarkable zero-shot generalization capabilities; when deployed on a real-world Unitree G1 humanoid robot without any additional fine-tuning, the robot exhibited coherent and physically stable motion tracking.

In conclusion, this research provides a transformative pathway for integrating generative AI with robotics. By treating video generation models as high-level behavioral planners, GenMimic demonstrates that the “reality gap” caused by generative artifacts can be overcome through physics-aware learning and structural retargeting. This work paves the way for a future where robots can learn complex, context-aware tasks simply by observing or being provided with synthesized video demonstrations, fundamentally changing the paradigm of robot motion learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment