mmWEAVER: Environment-Specific mmWave Signal Synthesis from a Photo and Activity Description

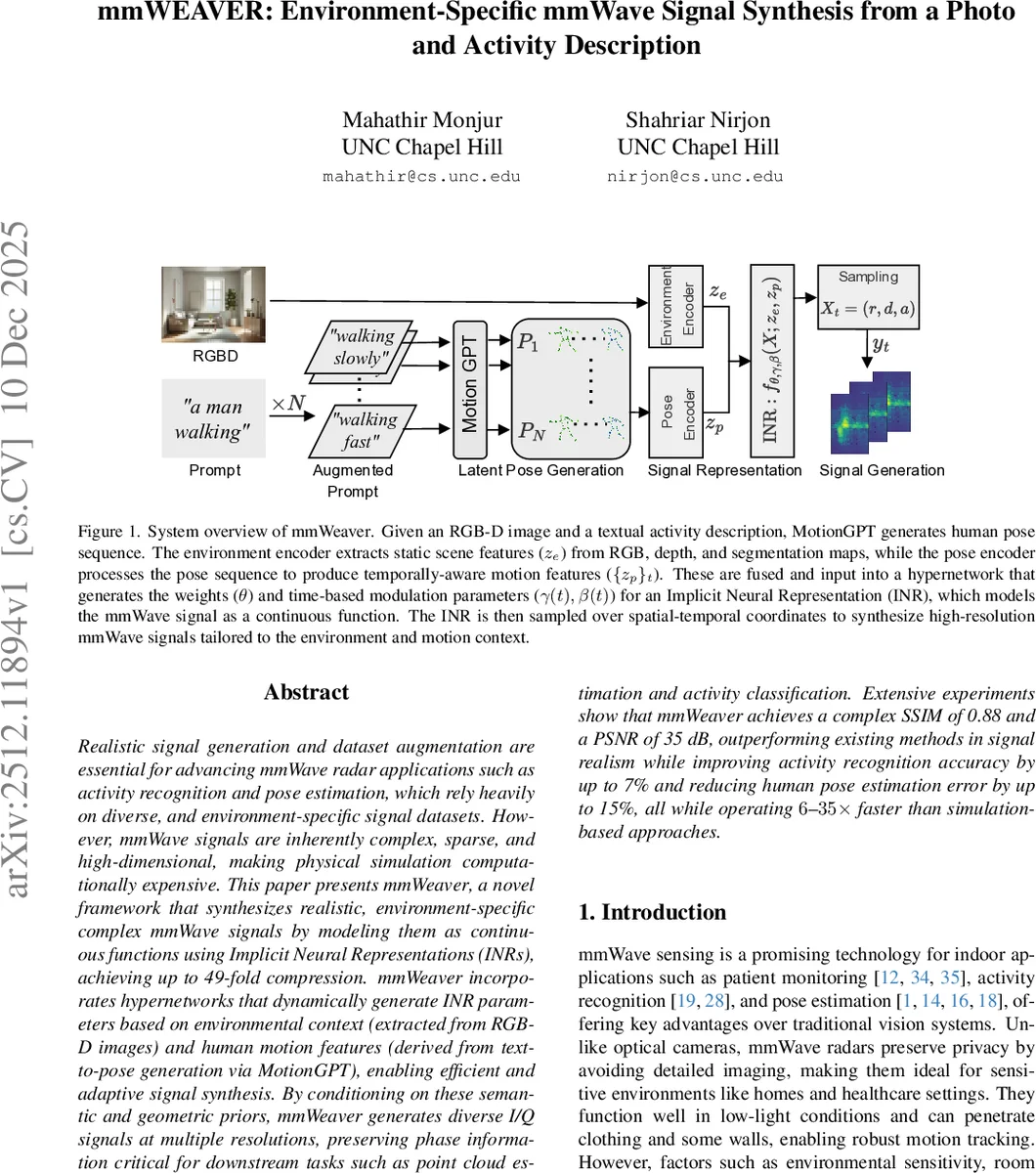

Realistic signal generation and dataset augmentation are essential for advancing mmWave radar applications such as activity recognition and pose estimation, which rely heavily on diverse, and environment-specific signal datasets. However, mmWave signals are inherently complex, sparse, and high-dimensional, making physical simulation computationally expensive. This paper presents mmWeaver, a novel framework that synthesizes realistic, environment-specific complex mmWave signals by modeling them as continuous functions using Implicit Neural Representations (INRs), achieving up to 49-fold compression. mmWeaver incorporates hypernetworks that dynamically generate INR parameters based on environmental context (extracted from RGB-D images) and human motion features (derived from text-to-pose generation via MotionGPT), enabling efficient and adaptive signal synthesis. By conditioning on these semantic and geometric priors, mmWeaver generates diverse I/Q signals at multiple resolutions, preserving phase information critical for downstream tasks such as point cloud estimation and activity classification. Extensive experiments show that mmWeaver achieves a complex SSIM of 0.88 and a PSNR of 35 dB, outperforming existing methods in signal realism while improving activity recognition accuracy by up to 7% and reducing human pose estimation error by up to 15%, all while operating 6-35 times faster than simulation-based approaches.

💡 Research Summary

**

mmWEAVER addresses two major bottlenecks in millimeter‑wave (mmWave) radar research: the scarcity of diverse, environment‑specific training data and the high computational cost of physics‑based simulation. The proposed system takes as input a single RGB‑D image of a room and a textual description of a human activity (e.g., “walking slowly”). From the image, an environment encoder extracts a 512‑dimensional latent vector that captures geometry, material properties, and object layout. From the textual prompt, MotionGPT—a transformer‑based motion synthesis model—generates a temporally coherent 3D pose sequence. This pose sequence is encoded by a two‑stage transformer (spatial then temporal self‑attention) into a motion latent vector.

Both latent vectors are concatenated and fed into a hypernetwork. The hypernetwork dynamically produces the weights of an Implicit Neural Representation (INR) together with time‑dependent modulation parameters (γ(t), β(t)). The INR maps spatial coordinates (range, Doppler, antenna index) to complex I/Q values, while the modulation parameters inject temporal dynamics. Positional encoding with eight Fourier frequencies expands the input space, allowing the network to represent both low‑frequency structure and high‑frequency details of the radar signal. Because the INR is a continuous function, it can be sampled at arbitrary resolutions, enabling super‑resolution synthesis without retraining.

Training proceeds on a newly collected dataset comprising 10 indoor scenes, 6 participants, and 12 activities, yielding over 12 000 synchronized radar‑camera sequences. The loss combines complex SSIM, MSE, and a perceptual term derived from a custom radar feature extractor. After 1 000 epochs (Adam, lr = 1e‑4), the model achieves a complex SSIM of 0.88 and a PSNR of 35 dB on held‑out scenes—substantially higher than prior RF‑Genesis or RF‑Diffusion baselines.

Downstream evaluations demonstrate practical impact. When synthetic data generated by mmWEAVER are mixed with real data, activity‑recognition accuracy improves by up to 7 %, and 3‑D pose estimation error (MPJPE) drops by 15 %. On the public HuPR dataset, similar gains of around 10 % are observed. Inference is extremely fast: a full activity’s radar signal is synthesized in an average of 3.4 seconds, which is 6‑35× faster than conventional ray‑tracing simulators that require 20 seconds to 2 minutes per sample. Moreover, the INR‑based representation compresses the full‑resolution signal by up to 49×, reducing storage and transmission costs.

The paper also discusses limitations. The environment encoder relies on static RGB‑D captures, so dynamic changes (moving furniture, lighting shifts) are not directly modeled. The hypernetwork‑INR parameter set (~7 MB) may be too large for ultra‑low‑power edge devices, suggesting future work on model pruning, quantization, or knowledge distillation. Finally, online adaptation mechanisms could enable the system to update its environment latent vector in real time as the scene evolves.

In summary, mmWEAVER is the first framework that unifies continuous INR‑based radar modeling with a context‑driven hypernetwork that conditions on both visual scene information and synthesized human motion. It delivers high‑fidelity, multi‑resolution mmWave signals, dramatically accelerates data generation, and demonstrably boosts the performance of downstream perception tasks. The approach opens a path toward scalable, privacy‑preserving radar datasets for smart home, healthcare, and security applications, where rapid, environment‑specific data synthesis is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment