YOPO-Nav: Visual Navigation using 3DGS Graphs from One-Pass Videos

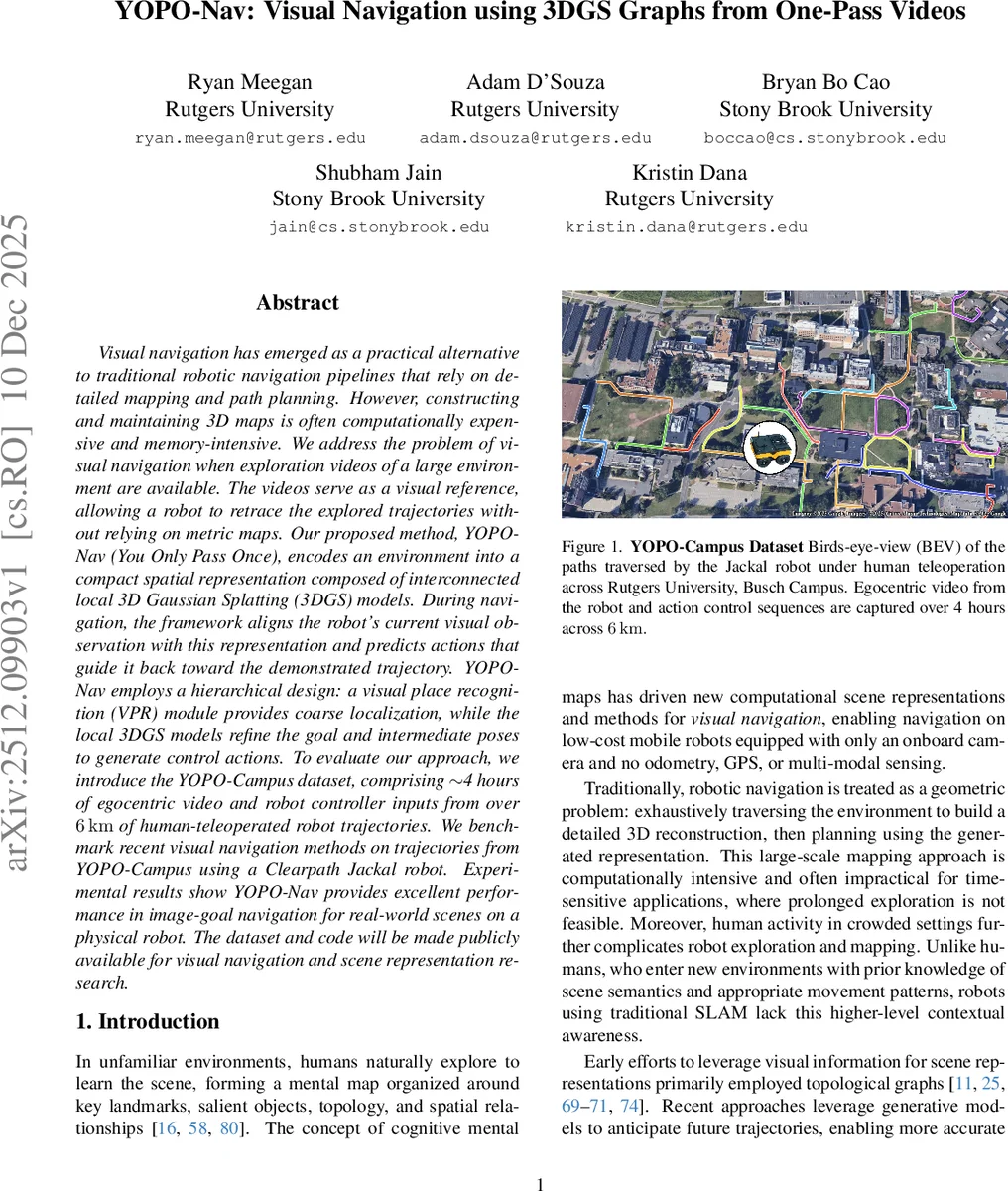

Visual navigation has emerged as a practical alternative to traditional robotic navigation pipelines that rely on detailed mapping and path planning. However, constructing and maintaining 3D maps is often computationally expensive and memory-intensive. We address the problem of visual navigation when exploration videos of a large environment are available. The videos serve as a visual reference, allowing a robot to retrace the explored trajectories without relying on metric maps. Our proposed method, YOPO-Nav (You Only Pass Once), encodes an environment into a compact spatial representation composed of interconnected local 3D Gaussian Splatting (3DGS) models. During navigation, the framework aligns the robot’s current visual observation with this representation and predicts actions that guide it back toward the demonstrated trajectory. YOPO-Nav employs a hierarchical design: a visual place recognition (VPR) module provides coarse localization, while the local 3DGS models refine the goal and intermediate poses to generate control actions. To evaluate our approach, we introduce the YOPO-Campus dataset, comprising 4 hours of egocentric video and robot controller inputs from over 6 km of human-teleoperated robot trajectories. We benchmark recent visual navigation methods on trajectories from YOPO-Campus using a Clearpath Jackal robot. Experimental results show YOPO-Nav provides excellent performance in image-goal navigation for real-world scenes on a physical robot. The dataset and code will be made publicly available for visual navigation and scene representation research.

💡 Research Summary

The paper “YOPO-Nav: Visual Navigation using 3DGS Graphs from One-Pass Videos” presents a novel framework for enabling robots to navigate large-scale environments using only visual references from previously captured exploration videos, without relying on metric maps, odometry, or GPS. The core problem addressed is the computational expense and impracticality of traditional detailed 3D mapping and path planning for time-sensitive applications or in dynamic human spaces.

The proposed solution, YOPO-Nav (You Only Pass Once), constructs a compact spatial representation of the environment in the form of a graph composed of interconnected local 3D Gaussian Splatting (3DGS) models. This representation is built directly from a single-pass exploration video, treating the video as a “breadcrumb trail” that defines a traversable path. Inspired by human cognitive mapping, the method partitions the environment into manageable local regions (nodes), each represented by its own 3DGS model trained on roughly 50-55 video frames. Edges between nodes are established based on frame continuity within a video or visual similarity across different videos, creating a navigable topological structure.

The navigation framework operates hierarchically. First, a Visual Place Recognition (VPR) module, dubbed YOPO-Loc, provides coarse global localization by matching the robot’s current live view against a database of images from the exploration video, identifying the most likely graph node. Subsequently, within that localized node’s 3DGS model, a precise 6-DoF pose of the robot (p’) is estimated using Perspective-n-Point (PnP) with RANSAC. This estimated pose is compared to the desired pose (p) stored from the original demonstration trajectory within that node. The transformation difference between p’ and p is then used to generate low-level control actions (discrete forward/backward and rotation commands) that guide the robot back onto the demonstrated path.

To rigorously evaluate this approach, the authors introduce the YOPO-Campus dataset, a substantial real-world collection effort. It comprises approximately 4 hours of egocentric RGB-D video and synchronized robot controller inputs, GPS, and compass data, captured by a human-teleoperated Clearpath Jackal robot traversing over 6 km of sidewalk paths across a university campus. The dataset includes 35 unique trajectories, designed to support benchmarking for visual navigation tasks.

Experimental validation on physical hardware using trajectories from YOPO-Campus demonstrates that YOPO-Nav achieves excellent performance in the image-goal navigation task. It outperforms recent generalist visual navigation foundation models, indicating that when one or more exploration videos are available for a specific environment, a tailored representation like YOPO-Nav’s graph of 3DGS models can be highly effective. Furthermore, the paper shows strong transfer learning for the VPR component; a model pre-trained on the geographically distinct Global Navigation Dataset (GND) generalizes well to YOPO-Campus, underscoring the practicality of the approach.

In summary, YOPO-Nav contributes a lightweight, interpretable, and scalable framework for visual navigation that bypasses the need for heavy global 3D reconstruction. Its key innovations are the fusion of topological graph concepts with local, photorealistic 3DGS representations, and a hierarchical global-local navigation strategy. The release of the YOPO-Campus dataset and code aims to foster further research in scene representation and embodied visual navigation.

Comments & Academic Discussion

Loading comments...

Leave a Comment