Chat with UAV -- Human-UAV Interaction Based on Large Language Models

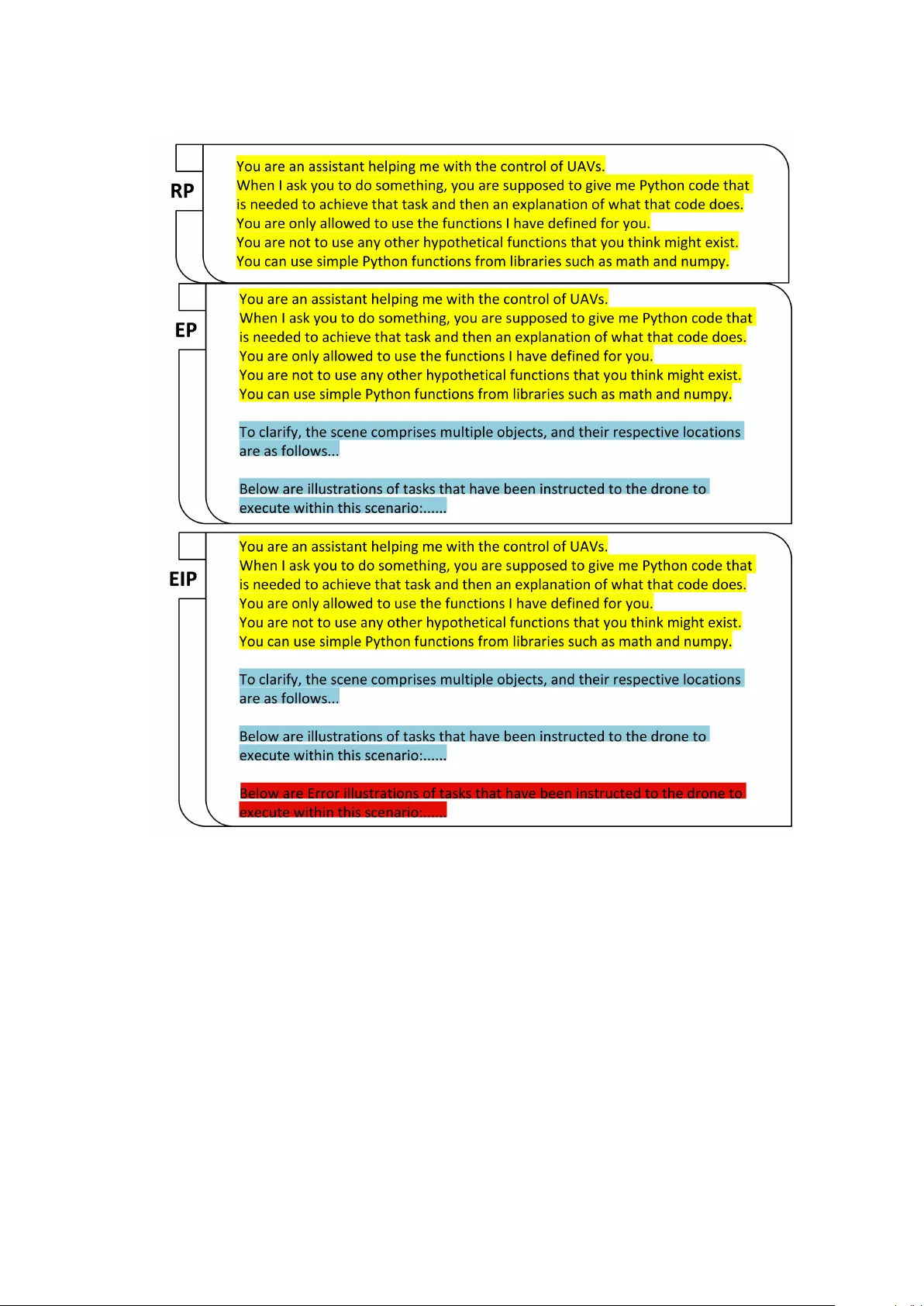

UAV interaction systems are shifting from engineer-driven to user-driven design, with the aim of replacing traditional predefined Human-UAV Interaction schemes. This shift focuses on enabling more personalized task planning and design, thereby achievin…

Authors: Haoran Wang, Zhuohang Chen, Guang Li