ESPADA: Execution Speedup via Semantics Aware Demonstration Data Downsampling for Imitation Learning

Behavior-cloning based visuomotor policies enable precise manipulation but often inherit the slow, cautious tempo of human demonstrations, limiting practical deployment. However, prior studies on acceleration methods mainly rely on statistical or heu…

Authors: Byungju Kim, Jinu Pahk, Chungwoo Lee

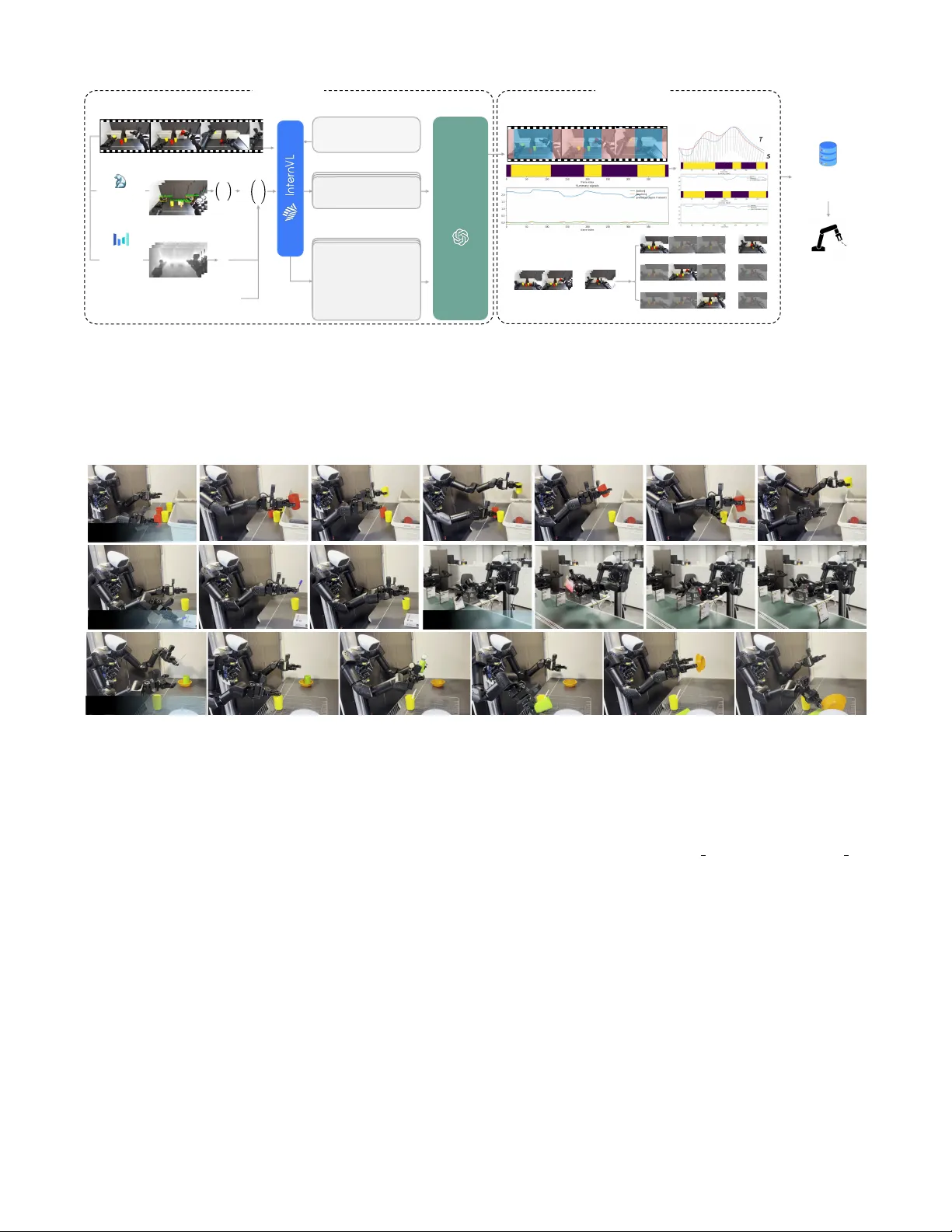

Byungju Kim (1,2,*), Jinu Pahk (1,2,*), Chungwoo Lee (1,*), Jaejoon Kim (1,3,*), Jangha Lee (1,3,*), Theo Taeyeong Kim (1,3), Kyuhwan Shim (2), Jun Ki Lee (4,†), Byoung-Tak Zhang (3,†)

Abstract: Behavior-cloning based visuomotor policies enable precise manipulation but often inherit the slow, cautious tempo of human demonstrations, limiting practical deployment. However, prior studies on acceleration methods mainly rely on statistical or heuristic cues that ignore task semantics and can fail across diverse manipulation settings. We present ESPADA, a semantic and spatially aware framework that segments demonstrations using a VLM–LLM pipeline with 3D gripper–object relations, enabling aggressive downsampling only in non-critical segments while preserving precision-critical phases, without requiring extra data, architectural modifications, or any form of retraining. To scale from a single annotated episode to the full dataset, ESPADA propagates segment labels via Dynamic Time Warping (DTW) on dynamics-only features. Across both simulation and real-world experiments with ACT and DP baselines, ESPADA achieves approximately a 2× speedup while maintaining success rates, narrowing the gap between human demonstrations and efficient robot control.

I. INTRODUCTION

Imitation learning (IL) has emerged as a central paradigm in robot learning [1]–[7], offering a practical alternative to reinforcement learning by bypassing explicit reward design and costly online exploration. By leveraging expert demonstrations, IL enables robots to acquire manipulation skills in a data-efficient manner. While early applications were limited to simple pick-and-place tasks, recent advances have extended IL to long-horizon [3], contact-rich [8], and visually complex manipulation [1], [9].
Widely used policies such as Action Chunking Transformer (ACT) [1] and Diffusion Policy (DP) [2] illustrate this practicality and serve as strong baselines for imitation-based manipulation. Despite these successes, IL deployments often suffer from insufficient execution speed. Human demonstrators tend to act slowly and cautiously to ensure safety and maximize task success. Moreover, prior studies have intentionally adopted slow demonstrations for three main reasons: (i) camera frame-rate constraints, (ii) findings that slower motions can improve training stability, and (iii) the anthropomorphism gap between human kinematics and robotic morphology [10]. Together, these factors bias human operators toward conservative motions, producing trajectories that are far more temporally dense than necessary and causing learned policies to inherit this slow tempo at execution time [11].

[Figure 1: Raw Demonstrations → Naïve Acceleration (no semantic awareness) → Over-accelerated Dataset → out-of-distribution failure; Raw Demonstrations → ESPADA semantics-based segmentation (semantic cues, spatial relations, gripper distance; casual vs. precision) → Speedup Dataset → trained policy success.]

Fig. 1: Naïve and heuristic-based acceleration breaks precision behavior in manipulation tasks. Our model, ESPADA, uses semantics and 3D spatial cues to preserve contact-critical phases while accelerating transit motions.

Affiliations: 1 Tommoro Robotics; 2 Interdisciplinary Program in Artificial Intelligence, Seoul National University; 3 Department of Computer Science and Engineering, Seoul National University; 4 Artificial Intelligence Institute at Seoul National University. * Equal contribution. † Corresponding authors.
Simply replaying demonstrations faster or uniformly subsampling observations can push trajectories out of distribution, inducing compounding error and degraded performance. In response, several methods have sought to improve execution efficiency: SAIL [12] leverages AWE [13] features with DBSCAN [14] clustering to identify coarse phases, and DemoSpeedup [15] identifies casual segments by estimating action-distribution entropy with a pre-trained proxy policy, treating high-entropy regions as safe to accelerate. However, these approaches encode scenario-specific assumptions as handcrafted heuristics, and the narrow range of scenarios they cover makes them fragile to even mild deviations.

SAIL [12], for instance, implicitly assumes that precision-critical behavior manifests as densely sampled regions in trajectory space and implements this assumption via clustering, but a density-based view of precision holds only in highly restricted scenarios. DemoSpeedup [15], on the other hand, assumes that high action entropy signals accelerable segments, but entropy is not a reliable indicator of precision: (1) multimodal strategies arising from scenario variability (e.g., random object initialization) can yield high entropy despite strict precision demands, and (2) repetitive, path-fixed motions may have low entropy without actually requiring precision, causing accelerable segments to be sacrificed.

Fundamentally, both approaches rely on scenario-dependent assumptions and attempt to infer precision from motion statistics rather than task semantics. This limits their ability to distinguish accelerable from precision-critical phases and prevents them from scaling robustly in a task- and scenario-agnostic manner. Accordingly, we introduce ESPADA, a semantics-driven trajectory segmentation framework that selectively accelerates demonstrations without extra hardware, additional data, or additional policy training.
Prior methods rely on heuristic motion statistics, such as density clusters or action entropy, to implicitly approximate precision. In contrast, ESPADA replaces these assumptions with explicit scene semantics and gripper–object 3D relations. These cues reveal the task intent (e.g., approach, align, adjust) and the actual interaction state between the gripper and the target object, enabling the system to determine where acceleration is safe while preserving genuine precision-critical phases.

Concretely, we extract per-frame 3D coordinates of grippers and key objects using open-vocabulary segmentation [16], [17] and video-based depth estimation [18], [19]. We prefer video depth models over single-image estimators because depth can be computed offline, and video models exploit temporal context across the entire sequence, yielding more stable and semantically coherent geometry. In addition, to remain compatible with the standard visuomotor imitation-learning formulation, in which demonstrations rely solely on monocular onboard observations without auxiliary sensing, we derive all 3D cues from monocular video rather than requiring extra depth sensors.

These geometric cues, together with image observations, are summarized by a vision–language model (VLM) [20] into semantic scene descriptions. We further convert all spatial and semantic observations into a compact language representation so that a large language model (LLM), currently among the strongest general-purpose reasoning modules, can perform segment classification in a token-efficient and structurally interpretable form. The LLM then reasons over these descriptions and the temporal trend of the gripper–object distance to classify segments as casual (aggressively accelerable) or precision. Finally, we accelerate the casual segments via replicate-before-downsample with geometric consistency [15], reducing temporal density while preserving task success.
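To make the "compact language representation" concrete, here is a minimal Python sketch of the float rounding, whitespace-free JSON serialization, and token-budgeted even sampling that Section IV describes for long episodes. It is an illustration under stated assumptions, not the authors' implementation: the function names, the 3-digit rounding, and measuring the serialized sample directly (rather than per-frame lengths) are our choices. The binary search assumes the serialized length grows with the number of samples, which holds approximately when per-frame records have similar sizes.

```python
import json
from typing import Any, Dict, List


def round_floats(obj: Any, ndigits: int = 3) -> Any:
    """Recursively round every float in nested dicts/lists."""
    if isinstance(obj, float):
        return round(obj, ndigits)
    if isinstance(obj, dict):
        return {key: round_floats(val, ndigits) for key, val in obj.items()}
    if isinstance(obj, list):
        return [round_floats(val, ndigits) for val in obj]
    return obj


def to_compact_jsonl(frames: List[Dict[str, Any]], ndigits: int = 3) -> str:
    """One whitespace-free JSON record per line (separators drop the spaces)."""
    return "\n".join(
        json.dumps(round_floats(frame, ndigits), separators=(",", ":"))
        for frame in frames
    )


def evenly_spaced(n_frames: int, k: int) -> List[int]:
    """k indices spread over [0, n_frames - 1], including both endpoints."""
    if k >= n_frames:
        return list(range(n_frames))
    if k <= 0:
        return []
    if k == 1:
        return [0]
    step = (n_frames - 1) / (k - 1)
    return [round(i * step) for i in range(k)]


def max_samples_under_budget(frames: List[Dict[str, Any]], char_budget: int) -> int:
    """Binary search for the largest k whose evenly spaced sample fits the budget."""
    lo, hi = 0, len(frames)
    while lo < hi:
        mid = (lo + hi + 1) // 2
        sample = [frames[i] for i in evenly_spaced(len(frames), mid)]
        if len(to_compact_jsonl(sample)) <= char_budget:
            lo = mid  # mid samples fit, so try more
        else:
            hi = mid - 1  # too long, so try fewer
    return lo
```

For example, `to_compact_jsonl([{"t": 1, "r": 0.123456789}])` yields `{"t":1,"r":0.123}`, with no spaces after separators.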
Our contributions are threefold: (i) the first semantics- and 3D-relation-aware policy acceleration framework via demonstration downsampling that requires no additional sensor data or retraining; (ii) a scalable label transfer scheme that propagates segment labels from a single annotated episode to the rest of the dataset using banded DTW; and (iii) experimental validation in simulation and real-world settings, with up to a 3.6× execution speedup while maintaining or improving success rates.

II. RELATED WORK

Speeding up imitation learning execution: While modern visuomotor policies such as ACT [1] and DP [2] provide strong manipulation performance, they typically inherit the slow, human-paced timing of demonstrations. Uniform downsampling or increasing the control rate can speed up execution, but both risk pushing observations out of distribution (OOD) and amplifying errors. Recent work learns when it is safe to compress time. DemoSpeedup estimates action-distribution entropy from a proxy policy and applies replicate-before-downsample with geometric constraints, reporting up to ∼3× acceleration without success loss [15]. In parallel, SAIL estimates “motion complexity” from waypoints and uses DBSCAN to segment trajectories; segments with lower complexity are accelerated while higher-complexity segments are preserved [12]. While effective, these methods rely on narrow, scenario-specific assumptions (often tied to clustering hyperparameters, or to entropy as a coarse proxy), which limits their robustness. Our approach replaces entropy and feature clustering with semantic reasoning, yielding segments that better align with manipulation intent.

Temporal segmentation and phase discovery: Classical phase-discovery pipelines often rely on fixed features and clustering (e.g., DBSCAN [14]) to recover phases from kinematics or vision [12], [13], sometimes assisted by motion primitives.
These pipelines can be brittle across tasks and cameras because phase boundaries depend on feature scaling and neighborhood thresholds. Other approaches use latent structure learning for phase discovery [6], [7], but they still struggle to distinguish precision-critical contact phases from benign transits. ESPADA instead uses 3D gripper–object distance trends as grounded signals and defers semantic interpretation to a large language model (LLM), which produces coherent manipulation chunks.

Vision–language for robotics: VLMs and LLMs provide complementary capabilities: grounded perception from images and structured reasoning over text. This modularity has been explored for generalist robots [9], [21]–[23]. Instead of training bespoke video-understanding models, we adopt a VLM → LLM pipeline: category-free segmentation (Grounding DINO + SAM [16], [24]), depth estimation [18], [19], and semantic summaries (InternVL [20]), followed by LLM-based segmentation. The motivation for this modular design is that spatial relations, such as gripper–object geometry, must be explicitly surfaced as linguistic cues for downstream reasoning, which is difficult to guarantee with monolithic video-understanding models. By converting spatial structure into interpretable language tokens, the LLM can perform fine-grained temporal and semantic reasoning with greater reliability and controllability. The design is auditable, improves as foundation models improve, and transfers to an online variant for fast/precise mode switching.

Positioning: ESPADA addresses specific limitations of DemoSpeedup and SAIL. While those methods assume that high entropy or low complexity reliably indicates “casual” motion, ESPADA detects when that assumption breaks by consulting explicit relational and semantic cues, namely gripper–object distance trends and scene semantics.
Unlike entropy alone, our segmentation tends to produce stable, coherent boundaries and avoids misclassifying fine, contact-critical motions as safe to downsample. Empirically, ESPADA produces fewer fragmented boundaries and more coherent motion chunks, simplifying per-segment compression-factor selection and reducing reliance on delicate clustering hyperparameters.

III. PROBLEM SETUP

We consider a dataset of robot manipulation demonstrations $\mathcal{D} = \{(o_t, a_t)\}_{t=1}^{T}$, where $o_t$ are observations (RGB images, proprioception) and $a_t$ are low-level actions (joint position commands). Demonstrations are collected at control frequencies $f_{\mathrm{ctrl}} \in [30, 50]$ Hz, producing temporally dense trajectories. Policies such as ACT [1] and DP [2] predict fixed-horizon action chunks $A_t = \{a_t, \ldots, a_{t+K-1}\}$ from recent observations.

A core issue is that human demonstrations are performed slowly and cautiously, yielding oversampled sequences. Uniform downsampling often pushes trajectories out of distribution because aggressive temporal thinning alters the local action–state transitions seen during training, introduces temporal aliasing in contact-rich or high-curvature segments, and disrupts the smoothness assumptions under which behavior-cloned policies generalize. Our goal is to accelerate demonstrations offline by selectively reducing temporal density in casual phases while applying only mild reduction in precision-critical phases, without modifying the runtime control loop or the policy architecture.

Formally, we segment each trajectory as $\mathcal{S} = \{(s_i, e_i, y_i)\}_{i=1}^{M}$ with $y_i \in \{\text{casual}, \text{precision}\}$. We then transform $\mathcal{D}$ by

$$\mathcal{T}(\mathcal{D}, \mathcal{S}, N) = \bigcup_{i=1}^{M} \mathrm{RBD}\big(\mathcal{D}[s_i : e_i],\, N_{y_i}\big),$$

where RBD denotes downsampling with replicate-before-downsample [15], ensuring that all original frames are preserved across replicas.
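A minimal Python sketch of this transform, assuming a trajectory is a plain list of frames and segments are inclusive $(s_i, e_i, y_i)$ triples. The names `rbd` and `accelerate` are hypothetical; the sketch returns the replicas per segment and deliberately leaves out both how replicas are stitched into training episodes and the geometric-consistency step.

```python
from typing import Dict, List, Sequence, Tuple, TypeVar

Frame = TypeVar("Frame")
Segment = Tuple[int, int, str]  # inclusive (s_i, e_i, y_i)


def rbd(frames: Sequence[Frame], s: int, e: int, n: int) -> List[List[Frame]]:
    """Replicate-before-downsample on the inclusive span [s, e] with factor n.

    Replica m keeps the frames t with (t - s) mod n == m, so the n replicas
    partition the span and together contain every original frame exactly once.
    """
    return [[frames[t] for t in range(s, e + 1) if (t - s) % n == m] for m in range(n)]


def accelerate(
    frames: Sequence[Frame], segments: List[Segment], factors: Dict[str, int]
) -> List[Tuple[str, List[List[Frame]]]]:
    """Apply RBD segment by segment with the label-specific factor N_{y_i}."""
    return [(label, rbd(frames, s, e, factors[label])) for s, e, label in segments]
```

With the factors used in the experiments (2× for precision, 4× for casual), a 4-frame casual span becomes four 1-frame replicas and an 8-frame precision span becomes two 4-frame replicas; no frame is discarded.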
In this formulation, casual denotes segments that can be safely downsampled without compromising task fidelity, while precision denotes precision-critical spans that are retained at near-full resolution, with only minimal acceleration applied when safe. $N_{y_i}$ denotes the number of replicas in RBD (equivalently, the downsampling factor) for label $y_i$, determined by the maximum acceleration ratio. For stability, we enforce geometric consistency [15] by adjusting the accelerated chunk horizon $K'$ so that the spatial displacement $\sum_{k=0}^{K'-1} \|\Delta x_{t+k}\|$ matches that of the original horizon $K$.

IV. METHOD

Our pipeline converts raw demonstrations into semantically and spatially informed segments that can be selectively accelerated, then constructs an acceleration-aware training set via replicate-before-downsample (RBD) with geometric consistency. Figure 2 provides an overview.

A. Context- and Spatial-Aware Segmentation via VLM → LLM

a) Object tracking with interactive keyframe seeding. First, we obtain open-vocabulary tracks from demonstration videos using Grounded-SAM2 [16], [17]. In addition to text prompts, users can provide sparse keyframe annotations (boxes or point groups) via a lightweight UI. We maintain a label ↔ ID mapping across keyframes and perform IoU-based association to propagate user labels to SAM2 track IDs. During propagation, we use a keep-alive strategy (bounding-box carry-over for short outages) and periodic re-detection with Grounding DINO, reconnecting lost tracks via a score that mixes IoU and color-histogram similarity. This reduces fragmentation and preserves object identity across occlusions.

To bootstrap object grounding, we first sample ∼10 representative frames from episode 0 and feed them into InternVL 3.5 [20] to obtain a compact language description of the overall task. For the same frames, we apply Grounding DINO v2 to detect and segment task-relevant entities such as the left gripper, the right gripper, and target objects (e.g., yellow cup).
If bounding-box predictions fail for some frames, we allow lightweight manual correction (bounding boxes only) through the UI. The corrected boxes serve as anchors for SAM2, which then propagates object masks and bounding boxes consistently across the entire episode. This hybrid strategy (automatic detection + sparse manual fallback + SAM2 propagation) ensures that every frame obtains reliable per-object segmentation, even under occlusion or detector failure.

b) Depth estimation and 3D back-projection. We estimate per-frame depth with VDA/DA2 [18], [19] (metric or relative; optionally scaled by a factor $z_{\text{scale}}$). Having obtained the pixel coordinates $(u, v)$ of each object of interest in the previous step, and given the corresponding depth $Z$, we recover its 3D position in the camera coordinate frame via standard back-projection:

$$p = Z\, K^{-1} [u, v, 1]^{\top}, \qquad (1)$$

where $K$ here denotes the camera intrinsic matrix (not the chunk horizon). This yields a center_3d entry for each tracked mask. We then compute frame-wise gripper–object distances

$$r_t(g, o) = \big\| p_t^{(g)} - p_t^{(o)} \big\|_2, \qquad (2)$$

for $g \in \{\text{gripper\_left}, \text{gripper\_right}\}$ and task-relevant objects $o$. For multi-view sequences, we build per-camera relations_3d from the set of $r_t(g, o)$ values and prefer the head camera if present; otherwise we select the camera with the most valid relations at a frame. We rely on temporal trends in $r_t$ rather than absolute scale, avoiding the need for extrinsics.

c) LLM-Based Segmentation Conditioned on VLM Summaries. From the sampled frames (typically 4–8) and their structured 3D cues, we query a VLM (InternVL-3.5 8B [20]) for a strict-JSON, chronologically ordered episode summary.

[Prompt excerpt: Prefer camera "head". If absent, use the camera that has the most relations per frame. For each frame, collect all `r` values (gripper–object distances) for the chosen camera ...]
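Equations (1) and (2) and the head-camera preference can be sketched in a few lines of NumPy. This is an illustrative sketch, not the authors' code: the intrinsic matrix `K`, the layout of per-frame centers as a dictionary keyed by entity name, and the function names are assumptions.

```python
from typing import Dict, Iterable, Optional, Tuple

import numpy as np


def back_project(u: float, v: float, depth: float, K: np.ndarray) -> np.ndarray:
    """Eq. (1): p = Z * K^{-1} [u, v, 1]^T, expressed in the camera frame."""
    # Solving K x = [u, v, 1] avoids forming the explicit inverse of K.
    return depth * np.linalg.solve(K, np.array([u, v, 1.0]))


def gripper_object_distances(
    centers: Dict[str, np.ndarray], grippers: Iterable[str], objects: Iterable[str]
) -> Dict[Tuple[str, str], float]:
    """Eq. (2): r_t(g, o) = ||p_t^(g) - p_t^(o)||_2 for every pair visible in this frame."""
    objects = list(objects)  # iterated once per gripper below
    return {
        (g, o): float(np.linalg.norm(centers[g] - centers[o]))
        for g in grippers
        for o in objects
        if g in centers and o in centers
    }


def choose_camera(relations_per_camera: Dict[str, Dict]) -> Optional[str]:
    """Prefer the head camera if present; else the camera with the most relations."""
    if "head" in relations_per_camera:
        return "head"
    if not relations_per_camera:
        return None
    return max(relations_per_camera, key=lambda cam: len(relations_per_camera[cam]))
```

Because only the temporal trend of $r_t$ is used downstream, an unknown global depth scale (relative depth) changes the magnitudes but not the shape of these distance curves.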
[Figure 2: pipeline diagram. Module 1: object/gripper tracking with Grounded SAM 2, depth estimation with Video Depth Anything, projection to 3D. Module 2: VLM scene description (e.g., "Robotic arms manipulate yellow plastic cups and blue pen on black table as part of an activity or experiment") and LLM (GPT-5 Thinking) semantics-based segmentation into casual and precise spans; banded DTW label transfer from Episode_0 to Episode_1, Episode_2, ...; replicate-before-downsample resampling with N_casual producing a speeded-up dataset for policy imitation learning.]

Fig. 2: Overview of ESPADA. We use Grounded-SAM2 and Video Depth Anything (VDA) to extract 3D object–gripper relations, summarize the episode with a VLM, and segment trajectories with an LLM into precision and casual spans. Segment-wise downsampling is then applied with replicate-before-downsample and geometric consistency, producing faster yet safe demonstrations for imitation learning. To reduce annotation cost, we annotate only episode 0 via the VLM → LLM pipeline and propagate its labels to other episodes with banded DTW label transfer, which aligns action sequences under temporal variation while refining boundaries.

[Figure 3 panels: Sort, Pen in cup, Conveyor, Kitchenware.]

Fig. 3: Real-world evaluation of ESPADA on the AI Worker robot across four representative manipulation tasks.
(i) Sort: classifying colored objects into bins, (ii) Pen in cup: placing a pen into a cup, (iii) Conveyor: transferring curry into a basket along a moving belt, and (iv) Kitchenware: handling bowls and cups.

We then attach this VLM-produced summary as a task descriptor to the LLM prompt. To enable the LLM (GPT-5 Thinking) to infer manipulation intent directly from raw 3D relations, we incorporate a compact set of few-shot exemplars into the system and user prompts. These exemplars encode canonical temporal patterns, such as near-contact plateaus for precision and monotonic approach or retreat for coarse transit. They anchor the model's relational reasoning and guide it to interpret variations in $r_t$ as semantically meaningful interaction states rather than unstructured numeric fluctuations. This lightweight conditioning substantially stabilizes the LLM's behavior and allows the subsequent segmentation to rely on consistent, spatially grounded intent predictions across long trajectories.

Leveraging both the few-shot-conditioned relational prior and the task description, the LLM then infers segment boundaries as follows. The LLM receives (i) a JSONL stream with frame-wise center_3d and relations_3d for the full episode, and (ii) the VLM summary descriptor. It outputs non-overlapping, inclusive index ranges labeled precision or casual. We encode policy hints to favor robust, human-like chunks:
• Intent criteria. Sustained near-contact plateaus and low-variance micro-adjustments ⇒ precision; long approach/retreat or persistent far separation ⇒ casual.
• Stability. Minimum segment length $L_{\min} = 8$; merge same-label segments across gaps shorter than $G_{\min} = 5$; require ≥3 consecutive frames to switch labels (hysteresis); ignore micro-oscillations shorter than $L_{\text{micro}} = 6$.
• Parsimony. Prefer 3–4 segments unless strong evidence suggests otherwise.
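The stability rules above can also be enforced deterministically, for example when they are re-applied after coverage completion. The following is a minimal sketch assuming per-frame labels arrive as a list of strings; the function names are hypothetical. With only two labels, absorbing every run shorter than $L_{\min} = 8$ into its longer neighbour also removes micro-oscillations (fewer than 6 frames), switches lasting fewer than 3 frames, and same-label gaps shorter than 5 frames, so this single pass is a simplification of the four rules, and the parsimony preference is not modelled.

```python
from typing import List, Tuple

Segment = Tuple[int, int, str]  # inclusive (start, end, label)

L_MIN = 8  # minimum segment length quoted in the text


def to_segments(labels: List[str]) -> List[Segment]:
    """Collapse per-frame labels into maximal inclusive runs."""
    runs: List[List] = []
    for t, label in enumerate(labels):
        if runs and runs[-1][2] == label:
            runs[-1][1] = t  # extend the current run
        else:
            runs.append([t, t, label])  # start a new run
    return [(s, e, y) for s, e, y in runs]


def absorb_short_runs(labels: List[str], min_len: int = L_MIN) -> List[Segment]:
    """Repeatedly relabel the shortest run below min_len with the label of its
    longer neighbour until every run is at least min_len frames long."""
    labels = list(labels)
    while True:
        segs = to_segments(labels)
        if len(segs) <= 1:
            return segs
        i = min(range(len(segs)), key=lambda j: segs[j][1] - segs[j][0])
        s, e, _ = segs[i]
        if e - s + 1 >= min_len:
            return segs
        neighbours = [segs[j] for j in (i - 1, i + 1) if 0 <= j < len(segs)]
        donor = max(neighbours, key=lambda seg: seg[1] - seg[0])
        labels[s : e + 1] = [donor[2]] * (e - s + 1)
```

Each iteration merges at least one run into a neighbour, so the loop always terminates.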
Because the model may leave small gaps when its confidence is low, we run a deterministic coverage-completion pass: gaps are filled by extending the nearest high-confidence neighbor that best matches the local $r_t$ trend, and the stability rules are then re-applied. The final set $\mathcal{S} = \{(s_i, e_i, y_i)\}_{i=1}^{M}$ provides full frame coverage with $y_i \in \{\text{precision}, \text{casual}\}$ and a per-segment confidence.

Finally, to respect LLM context limits for long demonstrations, we apply token-budgeted sampling and JSON slimming. Demonstrations often have thousands of frames, easily exceeding LLM context limits. We therefore compute the maximum feasible sample count (denoted $K$ here, unrelated to the chunk horizon) by binary search over the measured per-frame JSON length and select $K$ evenly spaced indices, ensuring trajectory-wide coverage under a fixed character budget. We further compact prompts by float rounding and whitespace-free JSON serialization, reducing token overhead by ∼30–40% without changing semantics.

B. Banded DTW Label Transfer from Episode-0

For datasets where only episode 0 is labeled, we propagate its segment labels (precision/casual) to the remaining episodes via banded Dynamic Time Warping (DTW).

a) Proprioceptive DTW Alignment. From each episode, we build a per-frame feature vector using only proprioception and actions. Concretely, we concatenate z-scored features to form $\phi_t \in \mathbb{R}^D$:

$$\phi_t = \big[\, a_t,\ \Delta a_t,\ v_t,\ \Delta v_t,\ \|a_t\|,\ \|v_t\|,\ \|\Delta a_t\|,\ \|\Delta q_t\|,\ \|\Delta v_t\|,\ \angle(a_t, a_t + \Delta a_t),\ \angle(v_t, v_t + \Delta v_t) \,\big], \qquad (3)$$

where $a_t$ are actions, $q_t$ are joint positions, $v_t$ are joint velocities if available (otherwise $\Delta q_t$ is used as a proxy), and $\angle(\cdot, \cdot)$ is the angle between successive vectors. Given episode-0 features $X_0 \in \mathbb{R}^{T_0 \times D}$ and target features $X_k \in \mathbb{R}^{T_k \times D}$ for episode $k$, we run DTW with a Sakoe–Chiba band of half-width $b = \lfloor \rho \cdot \max(T_0, T_k) \rfloor$, with $\rho \in [0.05, 0.10]$ (default $\rho = 0.08$). This yields an alignment path $P \subset [1, T_0] \times [1, T_k]$.
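A minimal NumPy sketch of the banded alignment step. The Sakoe–Chiba half-width follows the text; centring the band on the diagonal rescaled to the two episode lengths, the Euclidean per-frame cost, and the guard $b \geq 1$ are our assumptions. For simplicity it allocates the full cost matrix, although only about $T_0 (2b + 1)$ cells are ever filled.

```python
from typing import List, Tuple

import numpy as np


def banded_dtw(X0: np.ndarray, Xk: np.ndarray, rho: float = 0.08) -> List[Tuple[int, int]]:
    """DTW restricted to a Sakoe-Chiba band; returns the 0-indexed alignment path."""
    T0, Tk = len(X0), len(Xk)
    b = max(1, int(np.floor(rho * max(T0, Tk))))  # the b >= 1 guard is our addition
    D = np.full((T0 + 1, Tk + 1), np.inf)
    D[0, 0] = 0.0
    for i in range(1, T0 + 1):
        # Band centre follows the diagonal rescaled to the two sequence lengths.
        centre = int(round(i * Tk / T0))
        for j in range(max(1, centre - b), min(Tk, centre + b) + 1):
            cost = float(np.linalg.norm(X0[i - 1] - Xk[j - 1]))
            D[i, j] = cost + min(D[i - 1, j - 1], D[i - 1, j], D[i, j - 1])
    if not np.isfinite(D[T0, Tk]):
        raise ValueError("band too narrow to connect the two sequences")
    # Backtrack from the end cell to (1, 1), always taking the cheapest predecessor.
    i, j = T0, Tk
    path = [(i - 1, j - 1)]
    while (i, j) != (1, 1):
        i, j = min([(i - 1, j - 1), (i - 1, j), (i, j - 1)], key=lambda ij: D[ij])
        path.append((i - 1, j - 1))
    return path[::-1]
```

The returned path starts at `(0, 0)`, ends at `(T0 - 1, Tk - 1)`, and advances by at most one index in each sequence per step, which is the input the monotone index map below is built from.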
We convert the alignment path $P$ into a monotone index map $m: \{1, \ldots, T_0\} \to \{1, \ldots, T_k\}$ by averaging all matched target indices per source frame and enforcing non-decreasingness.

b) Segment-wise Label Transfer and Refinement. For each episode-0 labeled segment in $\mathcal{S}_0 = \{(s_i, e_i, y_i)\}_{i=1}^{M}$ with label $y_i \in \{\text{precision}, \text{casual}\}$, we obtain the target spans $\mathcal{S}_k = \{(m(s_i), m(e_i), y_i)\}_{i=1}^{M}$ and snap both ends within a local window of $\pm W$ frames (default $W = 12$) by minimizing the $\ell_2$ distance between short mean-pooled feature summaries. Mapped segments are sorted and trimmed to remove overlaps while preserving order. If a path break occurs, we drop only the affected segment. Any uncovered frames default to precision when expanded to per-frame labels.

The banded DTW runtime is $O(\max(T_0, T_k) \cdot b)$, which, because $b$ is a small fraction $\rho$ of the sequence length, is far cheaper than the $O(T_0 T_k)$ cost of unconstrained DTW. With 50 Hz episodes (∼500–2k frames), transfers run quickly on CPU and require no proxy models.

C. Segment-wise Downsampling and Dataset Compilation

Given the final segmentation $\mathcal{S}$, we construct an acceleration-aware dataset by applying replicate-before-downsample with a larger downsampling factor for casual spans and a smaller one for precision spans.

a) Replicate-before-downsample. To maintain full state coverage under temporal compression, we adopt a replicate-before-downsample strategy [15]. For a segment $[s, e]$ and downsampling factor $N$, we create $N$ replicas with offsets $m \in \{0, \ldots, N-1\}$, where replica $m$ retains the frames $\{t \in [s, e] \mid (t - s) \bmod N = m\}$. Taking the union across $m$ recovers the original support, thereby preserving full state diversity in the downsampled dataset and preventing loss of observation coverage during model training.

b) Geometric Consistency for Chunked Policies. Temporal acceleration alters the per-chunk spatial displacement, undermining the horizon $K$ at which the policy has been optimized to perform best.
To maintain geometric fidelity under accelerated demonstrations, we adopt the geometry-consistent downsampling scheme [15] and rescale the effective chunk horizon $K'$ so that its spatial displacement remains consistent with the original:

$$\sum_{k=0}^{K'-1} \|\Delta x_{t+k}\| \approx \sum_{k=0}^{K-1} \|\Delta x_{t+k}\|, \qquad (4)$$

where $x_t$ denotes the end-effector pose, the left-hand sum runs over steps of the accelerated (downsampled) trajectory, and the right-hand sum runs over the original one. In practice, $K' \approx \tfrac{1}{2}K$ performs well and approximately satisfies Eq. (4) across tasks.

c) Gripper Event Precision Forcing. To prevent contact-rich phases from being over-accelerated, we force precision labels around gripper events. For each trajectory, we detect gripper movements from changes in the normalized gripper command $g_t$ and mark frame $t$ as a candidate event if $|g_{t+4} - g_t| \geq 0.03$. All marked frames are then clustered along the temporal axis using DBSCAN [14]. For each cluster, we take the minimum and maximum frame indices, pad them by two frames on both sides, and override the corresponding window to precision on top of the base LLM segmentation.

V. EXPERIMENTS

Our experimental evaluation is guided by the following research questions:
• RQ1. Does ESPADA achieve a higher success rate across diverse manipulation tasks, even under more aggressive acceleration settings, compared to baselines?
• RQ2. How accurately does ESPADA distinguish precision-critical from casual segments compared to entropy-based segmentation methods?
• RQ3. What are the respective roles of the 3D gripper–object distance $r_t$ and VLM-generated scene descriptions in improving segmentation quality?

A. Setup

We evaluate our approach in both simulation and real-world settings using ACT and DP [1], [2] as the baseline policy architectures, and compare our accelerated models against policies trained on the original dataset and those using the entropy-based acceleration method DemoSpeedup [15] under each architecture.

Simulation.
In the ALOHA simulation [4], we evaluate two representative manipulation tasks, Transfer Cube and Insertion, each provided with 50 expert demonstrations at 50 Hz. Policies are trained from single head-camera observations. Experiments use precision/casual acceleration factors of (2×, 4×). In BiGym [25], we evaluate 7 long-horizon manipulation tasks that involve target reaching and articulated-object interaction in home-like environments. Policies are trained with different numbers of demonstrations per task, and failed episodes are filtered out.

Real-world. Experiments are conducted on the ROBOTIS AI-Worker [26], a dual-gripper humanoid robot equipped with two wrist-mounted cameras and a head-mounted camera. We evaluate four representative tasks, Sort (bin sorting), Pen in Cup (insertion), Kitchenware (bowl and cup handling), and Conveyor (dynamic transfer), as shown in Fig. 3, measuring both throughput and episode length across models. All policies follow the baseline-matched hyperparameters [15] for both training and inference, and the accelerated segments use a chunk horizon of roughly half the original. DP exhibited limited robustness to large out-of-distribution deviations during preliminary experiments. To avoid conflating this effect with the impact of temporal acceleration, we reset the initial robot pose to lie within the training-time distribution for all tasks except Conveyor.

Metrics. We evaluate whether time efficiency can be improved without compromising task success. We report the task completion success rate and the average episode execution length; in real-world experiments, an episode is counted as a failure if the robot cannot proceed within 10 seconds.

B. Simulation Results

In the ALOHA simulation, as shown in Table II, naïve 2× acceleration lowers success rates, whereas our method improves success on Insertion (ACT: 21% → 28%, DP: 16% → 26%) and preserves it on Transfer Cube with ACT (72%), although DP on Transfer Cube drops from 66% to 58%, while achieving up to a 2.64× speedup over the original.
Relative to DemoSpeedup, it matches performance on Insertion for both policies while providing a similar level of acceleration, and achieves the highest success on Transfer Cube (ACT) while being slightly less aggressive in shortening episodes.

High Segmentation Quality Under Random Scenarios. Random initialization of object positions in the ALOHA environment increases entropy during the approach–grasp phase, leading the entropy-based baseline to mislabel this interaction-critical region as a casual segment. To evaluate segmentation quality, we compare segmentation outputs against ground truth manually annotated by human evaluators using explicit physical-interaction criteria. Against this reference, our method achieves higher IoU (0.1989 vs. 0.1745 for Insertion and 0.2649 vs. 0.2013 for Transfer Cube), demonstrating robustness to initialization-induced variability. ALOHA Sim also reports subtask-level success metrics; in the initial interaction-detection subtask, which is particularly sensitive to randomness in object placement, our method attains 91% success compared to 87% for the entropy-based baseline, further indicating the stability of semantic grounding in early-phase boundary identification.

[Figure 4: Conveyor episode timeline comparing DemoSpeedup (DBSCAN-based entropy segmentation: fast, slow, fast) with ESPADA (Ours, semantics-based segmentation using the gripper–object distance r to mark precision spans).]

Fig. 4: Precision-phase estimation in the conveyor scenario based on low entropy (DemoSpeedup, black regions) versus semantics (Ours, red regions). DemoSpeedup misclassifies repetitive and relatively simple segments, such as grasping curry on the conveyor, as precision-critical due to low action entropy. In contrast, our semantic analysis correctly identifies these spans as accelerable.

Long-Horizon Speedup and Sensitivity to Unstable Visual Scenes.
In BiGym, our method achieves substantially higher success rates than the naive 2× baseline (ACT: 66% → 73%, DP: 47% → 60%) while remaining comparable to the original 1× policy (74% and 58%) and providing up to 2.3× acceleration. Interestingly, acceleration and task success were not inversely related: faster execution often improved success by reducing compounding errors and preventing drift into out-of-distribution (OOD) states. While our approach performs on par with DemoSpeedup on most tasks, it still fails in some cases, likely because of unstable visual observations: as the robot moves, the camera view often leaves the object scene, which undermines gripper–object recognition and VLM semantic grounding. We leave improving semantic grounding through more stable viewpoints and richer multimodal signals, such as joint states and haptics, to future work.

TABLE I: BiGym Simulation Results. Each task cell gives success rate (↑), episode length (↓); the Averaged column gives success rate (↑), speed-up (↑).

Method           Sandwich    Remove More Plate   Load Cups   Put Cups
ACT              53%, 368    54%, 157            61%, 319    61%, 288
ACT-2x           46%, 193    46%, 119            50%, 195    54%, 141
ACT+DemoSpeedup  77%, 156    53%, 91             59%, 176    62%, 132
ACT+Ours         80%, 176    24%, 91             54%, 173    60%, 149
DP               52%, 352    52%, 170            15%, 419    12%, 386
DP-2x            51%, 247    41%, 125            11%, 177    7%, 243
DP+DemoSpeedup   54%, 217    49%, 113            38%, 171    21%, 205
DP+Ours          46%, 200    40%, 79             34%, 162    38%, 218

Method           Saucepan to Hob   Drawers Close   Cupboard Open   Averaged
ACT              86%, 383          100%, 119       100%, 146       74%, 1.0×
ACT-2x           81%, 224          87%, 84         96%, 103        66%, 1.7×
ACT+DemoSpeedup  92%, 163          100%, 63        100%, 81        78%, 2.1×
ACT+Ours         94%, 148          100%, 56        100%, 81        73%, 2.3×
DP               79%, 324          96%, 114        100%, 181       58%, 1.0×
DP-2x            41%, 242          81%, 65         94%, 161        47%, 1.5×
DP+DemoSpeedup   79%, 169          89%, 59         100%, 103       61%, 1.9×
DP+Ours          76%, 148          88%, 56         100%, 116       60%, 2.0×

TABLE II: Aloha-Sim Simulation Results. Each cell gives success rate (↑), episode length (↓).

Method                   Insertion   Transfer Cube
ACT                      21%, 452    72%, 291
ACT-2x                   13%, 238    70%, 162
ACT+DemoSpeedup (repro)  28%, 166    66%, 127
ACT+Ours                 28%, 171    72%, 141
DP                       16%, 431    66%, 281
DP-2x                    12%, 245    61%, 146
DP+DemoSpeedup (repro)   26%, 173    64%, 137
DP+Ours                  26%, 193    58%, 121

C. Real-world Results

As shown in Table III, ESPADA achieves the highest overall success rates while providing strong acceleration across all tasks. Under the 2×/4× setting (2× acceleration in precision ranges, 4× in casual ranges), ACT+Ours achieves 90.0% success at a 2.21× speedup, whereas ACT+DemoSpeedup drops to 45.0% despite a higher speedup (2.59×) obtained by aggressively classifying many spans as casual. This over-acceleration is most evident in the Conveyor task, where DemoSpeedup collapses to 1/20 success while ESPADA maintains 18–19/20. A similar trend holds for DP: ESPADA attains both the best success rate (85.4%) and the largest speedup (2.41×), outperforming DP+DemoSpeedup (79.6%, 2.12×).

Casual-exploiting Segmentation. By combining temporal trends in the gripper–object distance with VLM-generated scene descriptions, and by leveraging the reasoning capability of an LLM to interpret these cues, ESPADA reliably identifies genuine precision phases, such as near-contact adjustments, while aggressively compressing spans that are truly casual. In contrast, entropy-based segmentation implicitly treats low action entropy as a proxy for precision.
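The effect of this proxy on achievable speedup can be illustrated with a short Python sketch. Everything in it is hypothetical: the 40-frame entropy trace, the 0.3 threshold, the hand-written "semantic" labels, and the use of plain per-segment stride subsampling (rather than the replicate-before-downsample scheme of [15]) are assumptions for illustration, not DemoSpeedup's entropy estimator or ESPADA's pipeline. The default rates of 2 for precision frames and 4 for casual frames mirror the 2×/4× setting.

```python
from itertools import groupby

PRECISION, CASUAL = "precision", "casual"


def entropy_proxy_labels(action_entropy, threshold):
    """Hypothetical toy proxy: a frame is precision-critical iff its entropy is below threshold."""
    return [PRECISION if h < threshold else CASUAL for h in action_entropy]


def downsample_by_label(labels, precision_rate=2, casual_rate=4):
    """Return indices of frames kept after per-segment stride downsampling.

    Within each maximal run of identical labels, keep every `precision_rate`-th
    frame of a precision run and every `casual_rate`-th frame of a casual run.
    """
    kept, start = [], 0
    for label, run in groupby(labels):
        length = sum(1 for _ in run)
        step = precision_rate if label == PRECISION else casual_rate
        kept.extend(range(start, start + length, step))
        start += length
    return kept


def speedup(labels, precision_rate=2, casual_rate=4):
    """Original episode length divided by downsampled length."""
    return len(labels) / len(downsample_by_label(labels, precision_rate, casual_rate))


# Hypothetical 40-frame demo: approach (0-9), near-contact grasp (10-19),
# repetitive scooping (20-39). Entropy values are made up for illustration.
entropy = [0.8] * 10 + [0.10] * 10 + [0.15] * 20
semantic = [CASUAL] * 10 + [PRECISION] * 10 + [CASUAL] * 20

proxy = entropy_proxy_labels(entropy, threshold=0.3)
print(proxy.count(PRECISION))       # 30: scooping is flagged as precision too
print(round(speedup(proxy), 2))     # 2.22
print(round(speedup(semantic), 2))  # 3.08
```

Because the repetitive scooping span has low entropy, the proxy flags it as precision-critical and only halves it, whereas the semantic labels let it be compressed 4×. Under the same assumptions, the 1/3 setting would presumably correspond to `precision_rate=1, casual_rate=3`. The sketch encodes exactly the assumption at issue: low action entropy is read as a demand for precision.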
This assumption fails in repetitive motions: entropy often remains low even when no fine control is required, causing DemoSpeedup to systematically overestimate precision-critical spans. As shown in Fig. 4 for the Conveyor task, this misclassification marks large portions of repetitive scooping as non-accelerable, restricting potential speed gains and obscuring accelerable casual segments that ESPADA correctly recovers. In other words, low entropy does not necessarily imply high precision demands. Quantitatively, the same pattern appears in the 1/3 acceleration setting, where the trial counts yield stable statistics: ESPADA achieves slightly shorter episode lengths, not by compressing true precision phases but by avoiding DemoSpeedup's overextension of low-entropy repetitive segments. Across tasks, ESPADA consistently preserves success-critical precision phases without compromising success rate, while identifying accelerable casual spans more accurately.

Precision-preserving Segmentation. In Kitchenware (ACT, 2×/4×), ESPADA achieves 16/20 successes versus 1/20 for DemoSpeedup, showing that ESPADA reliably maintains precision-critical phases even under high acceleration. In contact-rich manipulation, near-contact spans must not be downsampled: ESPADA preserves this precision-critical interaction, whereas DemoSpeedup downsamples the delicate cup-grasp phase too aggressively, leading to only 1/20 success. Feeding the gripper–object distance trend into the LLM allows it to infer phase intent (approach → align → close) while also conservatively gating gripper events, thereby retaining precision spans and avoiding compression of precise motion.

Robustness in Dynamic Scenario. The Conveyor task shows that manipulation in a dynamic scene exposes fundamental limitations of entropy-based acceleration.
When the target object (curry) first enters the camera view, the system must hold the arm still and wait for the correct picking configuration. However, action entropy is naturally high during this early transient, causing DemoSpeedup to repeatedly misclassify this span as casual and to trigger the arm's descent earlier than intended. Consequently, given ACT's strong tendency to condition action chunks on the joint state, the arm collapses into an unrecoverable joint configuration, leading to failures. In contrast, ESPADA explicitly identifies this waiting phase as a precision phase by leveraging semantic cues from VLM descriptions together with the temporal trend of the gripper–object distance. The model correctly holds the arm still until the object reaches the appropriate pickup zone, preventing early descent and ensuring stable execution even under irregular conveyor timing.

TABLE III: Real-world Results. Each task cell gives successes/trials (↑), episode length (↓); the Averaged column gives success rate (↑), speed-up (↑). Suffixes denote the acceleration setting (e.g., 2/4: 2× in precision ranges, 4× in casual ranges).

Method               Pen in Cup     Sort           Kitchenware    Conveyor      Averaged
ACT                  29/30, 18.67   27/30, 37.52   8/20, 38.68    13/20, 9.89   72.9%, 1.0x
ACT+DemoSpeedup 1/3  29/30, 15.52   29/30, 29.29   10/20, 25.62   4/20, 7.55    65.8%, 1.34x
ACT+DemoSpeedup 2/4  27/30, 5.36    24/30, 13.23   1/20, 17.39    1/20, 4.49    45.0%, 2.59x
ACT+Ours 1/3         29/30, 15.32   29/30, 29.32   10/20, 22.76   19/20, 7.36   84.6%, 1.40x
ACT+Ours 2/4         29/30, 6.57    28/30, 15.56   16/20, 20.72   18/20, 4.51   90.0%, 2.21x
DP                   11/15, 21.55   10/15, 48.29   0/15, x        0/20, x       35.0%, 1.0x
DP+DemoSpeedup 2/4   15/15, 8.66    13/15, 23.58   10/15, 27.50   13/20, 6.15   79.6%, 2.12x
DP+Ours 2/4          15/15, 5.83    15/15, 21.54   10/15, 23.15   15/20, 7.41   85.4%, 2.41x

D. Ablations

We ablate the effect of the gripper–object distance r and the VLM scene description using four variants: w/o r, w/o description, w/o both, and our full model (Table IV). We report IoU and the predicted number of segments with respect to the ground-truth segmentation. For Insertion, removing r collapses IoU (0.5166 → 0.0224), indicating that r is essential for alignment-sensitive interactions. For Transfer Cube, dropping the description sharply reduces IoU (0.3064 → 0.0584), suggesting that textual cues help disambiguate phases with similar geometry. On Insertion all variants recover the correct number of segments, and on Transfer Cube only the full model does, but in both tasks only our full model achieves tight temporal alignment. Overall, this ablation confirms that r encodes precise temporal segmentation cues while the scene description provides semantic grounding, and that both are necessary for high-fidelity alignment.

TABLE IV: Ablation on IoU and number of segments.

Method        Insertion (IoU, #Seg.)   Transfer Cube (IoU, #Seg.)
w/o r         0.0224, 3/3              0.2791, 1/2
w/o Desc.     0.1024, 3/3              0.0584, 3/2
w/o r, Desc.  0.1111, 3/3              0.0693, 3/2
Ours          0.5166, 3/3              0.3064, 2/2

VI. CONCLUSION

We presented ESPADA (Execution Speedup via Semantics Aware Demonstration Data Downsampling), a semantic segmentation framework that accelerates demonstrations without requiring additional data, hardware, or policy retraining. By exploiting scene semantics and gripper–object spatial relations, ESPADA distinguishes accelerable from precision-critical segments, producing motion-aligned chunks and reducing temporal redundancy via replicate-before-downsample [15] with geometric consistency.
Integrated with ACT and DP, ESPADA achieves natural motion chunking, preserves task success, and generalizes across both simulation and real hardware.

Limitations. ESPADA still faces challenges: inaccurate masks or object tracking may distort spatial relations, monocular depth estimation introduces noise, and further validation is needed for large-scale deployment. Addressing these issues will be crucial for advancing ESPADA as a reliable and general framework for safe and efficient policy acceleration.

REFERENCES

[1] T. Z. Zhao, V. Kumar, S. Levine, and C. Finn, “Learning fine-grained bimanual manipulation with low-cost hardware,” arXiv preprint arXiv:2304.13705, 2023.
[2] C. Chi, Z. Xu, S. Feng, E. Cousineau, Y. Du, B. Burchfiel, R. Tedrake, and S. Song, “Diffusion policy: Visuomotor policy learning via action diffusion,” The International Journal of Robotics Research, p. 02783649241273668, 2023.
[3] K. Black, N. Brown, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, L. Groom, K. Hausman, B. Ichter et al., “π0: A vision-language-action flow model for general robot control,” arXiv preprint arXiv:2410.24164, 2024.
[4] T. Z. Zhao, J. Tompson, D. Driess, P. Florence, K. Ghasemipour, C. Finn, and A. Wahid, “Aloha unleashed: A simple recipe for robot dexterity,” arXiv preprint, 2024.
[5] Y. Ze, G. Zhang, K. Zhang, C. Hu, M. Wang, and H. Xu, “3d diffusion policy: Generalizable visuomotor policy learning via simple 3d representations,” arXiv preprint, 2024.
[6] A. Mandlekar et al., “robomimic: A framework for robot learning from demonstration,” Conference on Robot Learning (CoRL), 2021.
[7] F. Ebert et al., “Bridge data: A large-scale dataset for robotic imitation learning,” Conference on Robot Learning (CoRL), 2022.
[8] J. J. Liu, Y. Li, K. Shaw, T. Tao, R. Salakhutdinov, and D. Pathak, “Factr: Force-attending curriculum training for contact-rich policy learning,” arXiv preprint arXiv:2502.17432v1, 2025.
[9] A. Brohan et al., “Open-x embodiment: Extending rt-x to diverse robots,” arXiv preprint arXiv:2306.08592, 2023.
[10] C. Chi, Z. Xu, C. Pan, E. Cousineau, B. Burchfiel, S. Feng, R. Tedrake, and S. Song, “Universal manipulation interface: In-the-wild robot teaching without in-the-wild robots,” in Robotics: Science and Systems, 2024.
[11] J. Xie, Z. Wang, J. Tan, H. Lin, and X. Ma, “Subconscious robotic imitation learning,” arXiv preprint, 2024.
[12] N. Ranawaka Arachchige, Z. Chen, W. Jung, W. C. Shin, R. Bansal, P. Barroso, Y. H. He, Y. C. Lin, B. Joffe, S. Kousik et al., “Sail: Faster-than-demonstration execution of imitation learning policies,” arXiv e-prints, pp. arXiv–2506, 2025.
[13] L. X. Shi, A. Sharma, T. Z. Zhao, and C. Finn, “Waypoint-based imitation learning for robotic manipulation,” arXiv preprint arXiv:2307.14326, 2023.
[14] M. Ester, H.-P. Kriegel, J. Sander, X. Xu et al., “A density-based algorithm for discovering clusters in large spatial databases with noise,” in kdd, vol. 96, no. 34, 1996, pp. 226–231.
[15] L. Guo, Z. Xue, Z. Xu, and H. Xu, “Demospeedup: Accelerating visuomotor policies via entropy-guided demonstration acceleration,” arXiv preprint arXiv:2506.05064, 2025.
[16] S. Liu, Z. Zeng, T. Ren, F. Li, H. Zhang, J. Yang, C. Li, J. Yang, H. Su, J. Zhu et al., “Grounding dino: Marrying dino with grounded pre-training for open-set object detection,” arXiv preprint arXiv:2303.05499, 2023.
[17] N. Ravi, V. Gabeur, Y.-T. Hu, R. Hu, C. Ryali, T. Ma, H. Khedr, R. Rädle, C. Rolland, L. Gustafson et al., “Sam 2: Segment anything in images and videos,” arXiv preprint, 2024.
[18] S. Chen, H. Guo, S. Zhu, F. Zhang, Z. Huang, J. Feng, and B. Kang, “Video depth anything: Consistent depth estimation for super-long videos,” in Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 22831–22840.
[19] Z. Yang et al., “Depth anything v2,” arXiv preprint, 2024.
[20] W. Wang, Z. Gao, L. Gu, H. Pu, L. Cui, X. Wei, Z. Liu, L. Jing, S. Ye, J. Shao et al., “Internvl3.5: Advancing open-source multimodal models in versatility, reasoning, and efficiency,” arXiv preprint arXiv:2508.18265, 2025.
[21] A. Brohan et al., “Rt-1: Robotics transformer for real-world control at scale,” Robotics: Science and Systems (RSS), 2023.
[22] M. Ahn et al., “Do as i can, not as i say: Grounding language in robotic affordances,” Robotics: Science and Systems (RSS), 2022.
[23] D. Driess et al., “Palm-e: An embodied multimodal language model,” International Conference on Learning Representations (ICLR), 2023.
[24] A. Kirillov et al., “Segment anything,” International Conference on Computer Vision (ICCV), 2023.
[25] N. Chernyadev, N. Backshall, X. Ma, Y. Lu, Y. Seo, and S. James, “Bigym: A demo-driven mobile bi-manual manipulation benchmark,” arXiv preprint arXiv:2407.07788, 2024.
[26] ROBOTIS, “Introduction to ai worker,” https://ai.robotis.com/ai_worker/introduction_ai_worker.html/, 2025, accessed: 2025-12-02.