DuGI-MAE: Improving Infrared Mask Autoencoders via Dual-Domain Guidance

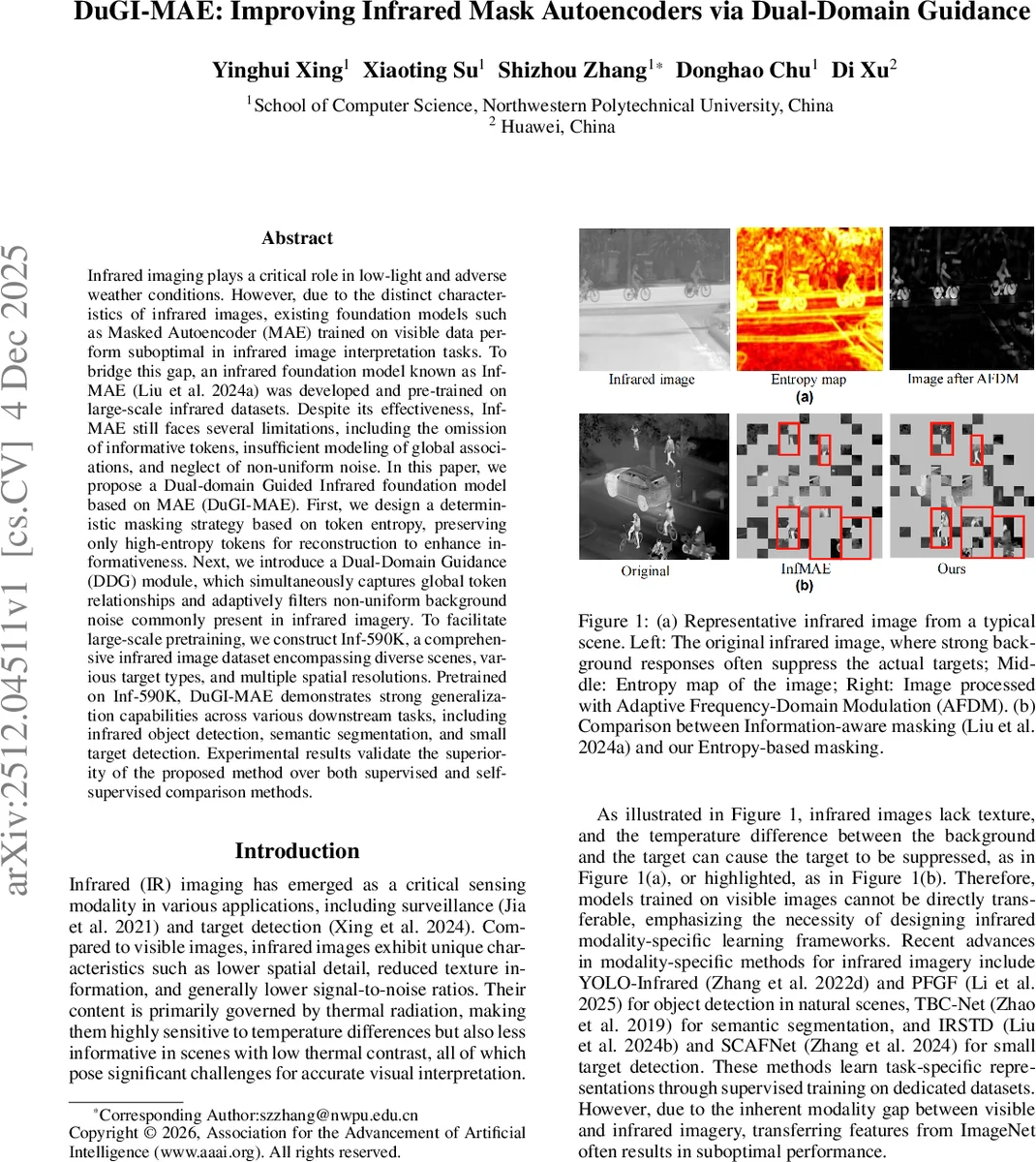

Infrared imaging plays a critical role in low-light and adverse weather conditions. However, due to the distinct characteristics of infrared images, existing foundation models such as Masked Autoencoder (MAE) trained on visible data perform suboptimal in infrared image interpretation tasks. To bridge this gap, an infrared foundation model known as InfMAE was developed and pre-trained on large-scale infrared datasets. Despite its effectiveness, InfMAE still faces several limitations, including the omission of informative tokens, insufficient modeling of global associations, and neglect of non-uniform noise. In this paper, we propose a Dual-domain Guided Infrared foundation model based on MAE (DuGI-MAE). First, we design a deterministic masking strategy based on token entropy, preserving only high-entropy tokens for reconstruction to enhance informativeness. Next, we introduce a Dual-Domain Guidance (DDG) module, which simultaneously captures global token relationships and adaptively filters non-uniform background noise commonly present in infrared imagery. To facilitate large-scale pretraining, we construct Inf-590K, a comprehensive infrared image dataset encompassing diverse scenes, various target types, and multiple spatial resolutions. Pretrained on Inf-590K, DuGI-MAE demonstrates strong generalization capabilities across various downstream tasks, including infrared object detection, semantic segmentation, and small target detection. Experimental results validate the superiority of the proposed method over both supervised and self-supervised comparison methods. Our code is available in the supplementary material.

💡 Research Summary

DuGI‑MAE (Dual‑Domain Guided Infrared Masked AutoEncoder) addresses three fundamental shortcomings of existing infrared foundation models such as InfMAE: (1) loss of informative tokens due to random or fixed‑interval sampling, (2) insufficient modeling of global token relationships, and (3) neglect of the non‑uniform noise that frequently contaminates infrared imagery. The authors propose two complementary mechanisms. First, an entropy‑based deterministic masking strategy computes the Shannon entropy of each image patch (token) and retains the top (1‑λ) fraction (λ=0.75) with the highest entropy, thereby guaranteeing that the most informative, typically target‑containing regions are kept for reconstruction. This “non‑sampling” mask eliminates the chance of discarding crucial thermal signals. Second, a Dual‑Domain Guidance (DDG) module injects frequency‑domain information into the MAE pipeline. The input image is transformed to the frequency domain via FFT, where a learnable radial filter H(u,v)=α·exp(−β·‖D(u,v)‖²) selectively attenuates low‑frequency components that usually host temperature‑drift noise while preserving mid‑to‑high‑frequency thermal details. The filtered spectrum is inverse‑FFT‑ed back to the spatial domain, embedded as key‑value pairs, and used to guide the spatial token queries from the encoder through cross‑attention. This design simultaneously (a) strengthens global association among dispersed high‑entropy tokens and (b) reduces non‑uniform background noise, improving the signal‑to‑noise ratio of the learned representations.

To enable large‑scale pre‑training, the authors assemble Inf‑590K, a 590,700‑image infrared dataset covering 445 distinct resolutions (50×34 to 6912×576) and a wide variety of scenes (urban, rural, maritime, aerial), weather conditions, and target categories (vehicles, pedestrians, ships, aircraft, infrastructure). Redundancy is reduced by cosine‑similarity based filtering (threshold 0.85) and automatic cropping of black borders in visible‑infrared video pairs.

Extensive experiments demonstrate that DuGI‑MAE, pre‑trained on Inf‑590K, outperforms both supervised and self‑supervised baselines across three downstream tasks: infrared object detection, semantic segmentation, and small‑target detection. Compared with InfMAE, DuGI‑MAE yields +4.2 % AP on object detection, +3.8 % mIoU on segmentation, and a +6.5 % detection rate for tiny targets. Ablation studies confirm that entropy‑based masking alone contributes ~2.5 % performance gain, DDG alone ~3.3 %, and their combination provides a synergistic boost beyond the sum of individual effects.

In summary, DuGI‑MAE introduces (i) a deterministic, information‑preserving masking scheme, (ii) a dual‑domain guidance mechanism that fuses adaptive frequency filtering with transformer attention, and (iii) a massive, diverse infrared pre‑training corpus. Together these innovations set a new benchmark for infrared foundation models and open avenues for future work such as multi‑scale DDG integration or multimodal (visible‑infrared) extensions.

Comments & Academic Discussion

Loading comments...

Leave a Comment