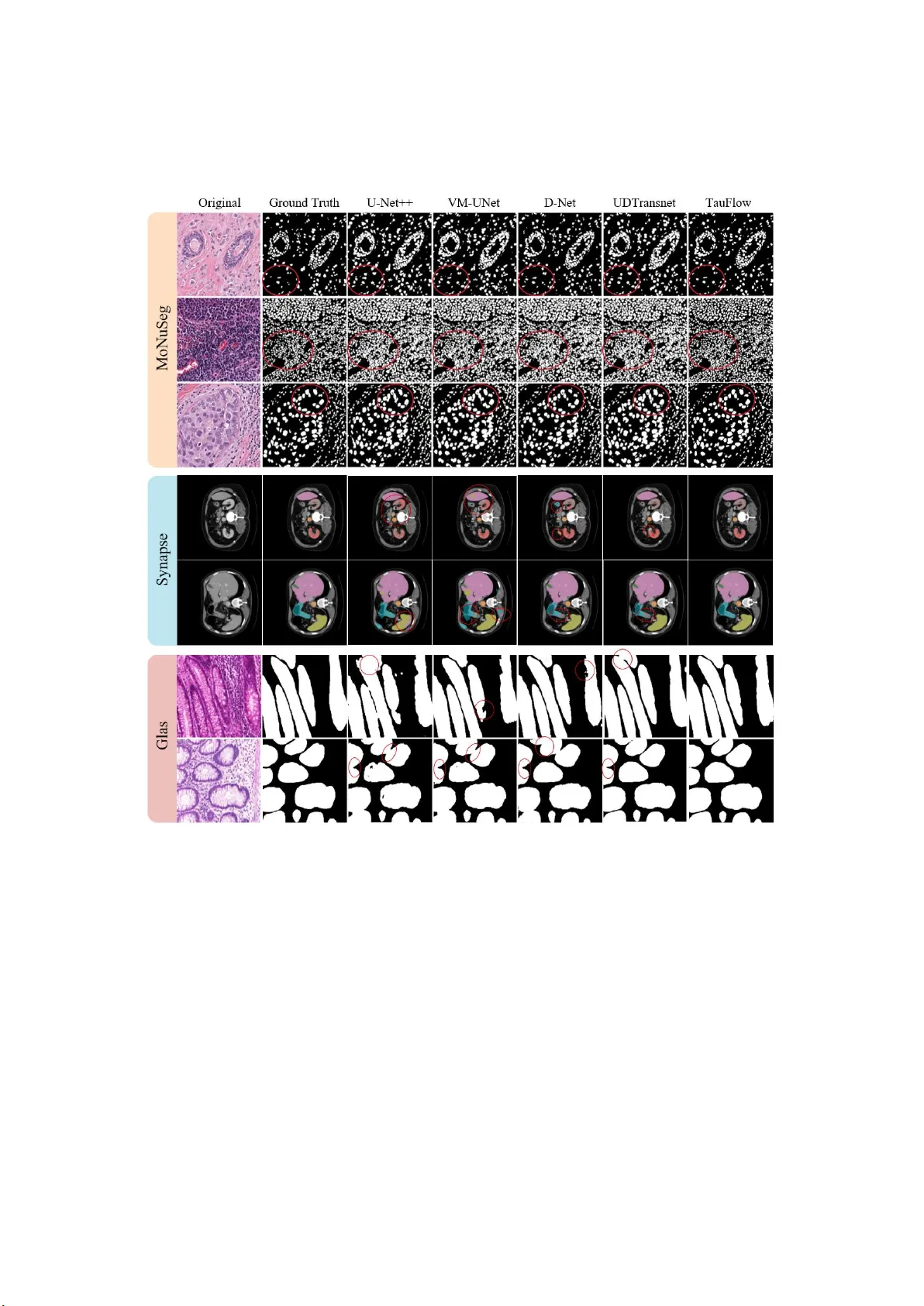

TauFlow: Dynamic Causal Constraint for Complexity-Adaptive Lightweight Segmentation

Deploying lightweight medical image segmentation models on edge devices presents two major challenges: 1) efficiently handling the stark contrast between lesion boundaries and background regions, and 2) the sharp drop in accuracy that occurs when pur…

Authors: Zidong Chen, Fadratul Hafinaz Hassan