A convolutional neural network deep learning method for model class selection

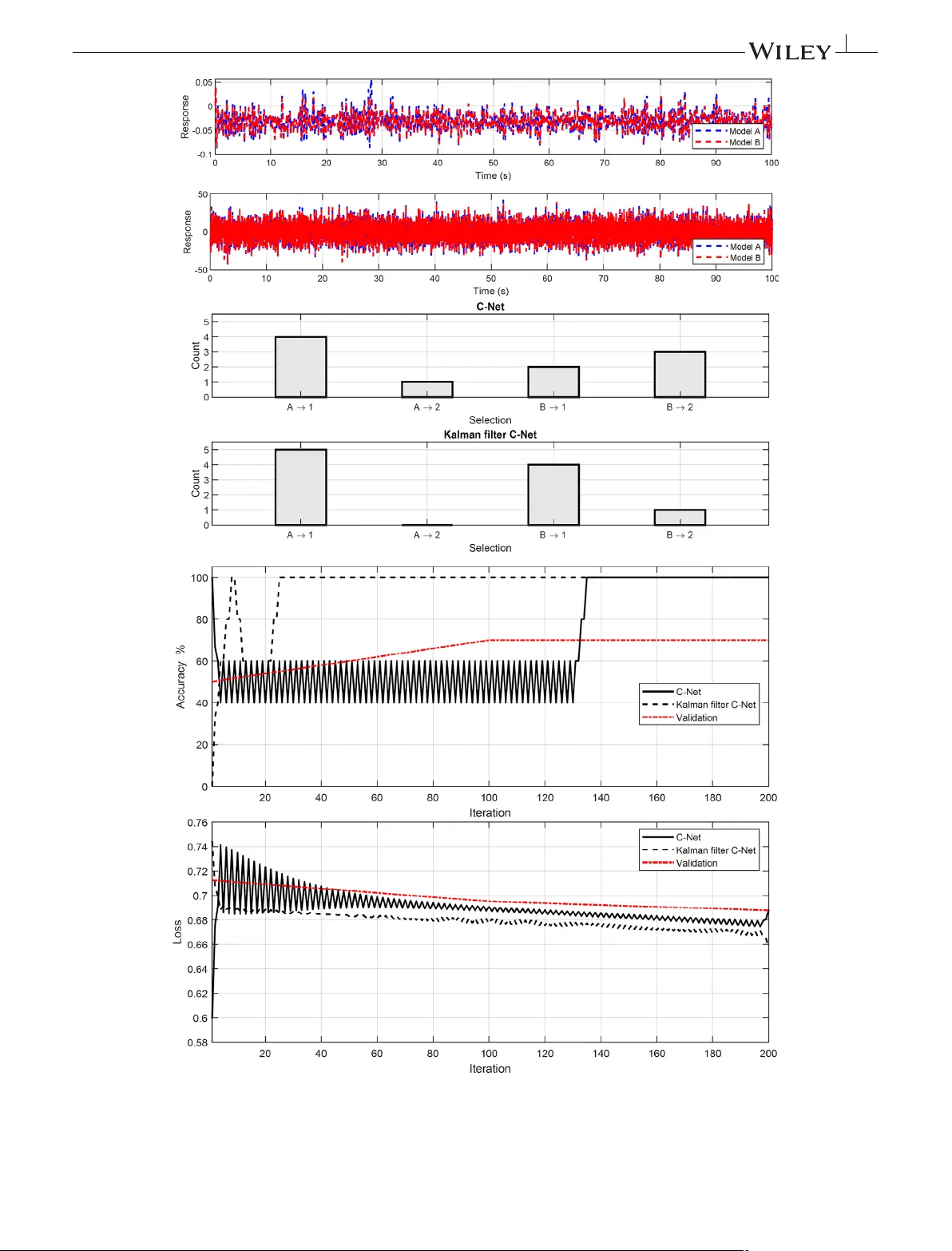

The response-only model class selection capability of a novel deep convolutional neural network method is examined herein in a simple yet effective manner. Specifically, the responses from a unique degree of freedom along with their class informati…

Authors: Marios Impraimakis