From Failure Modes to Reliability Awareness in Generative and Agentic AI System

This chapter bridges technical analysis and organizational preparedness by tracing the path from layered failure modes to reliability awareness in generative and agentic AI systems. We first introduce an 11-layer failure stack, a structured framework…

Authors: Janet, Lin, Liangwei Zhang

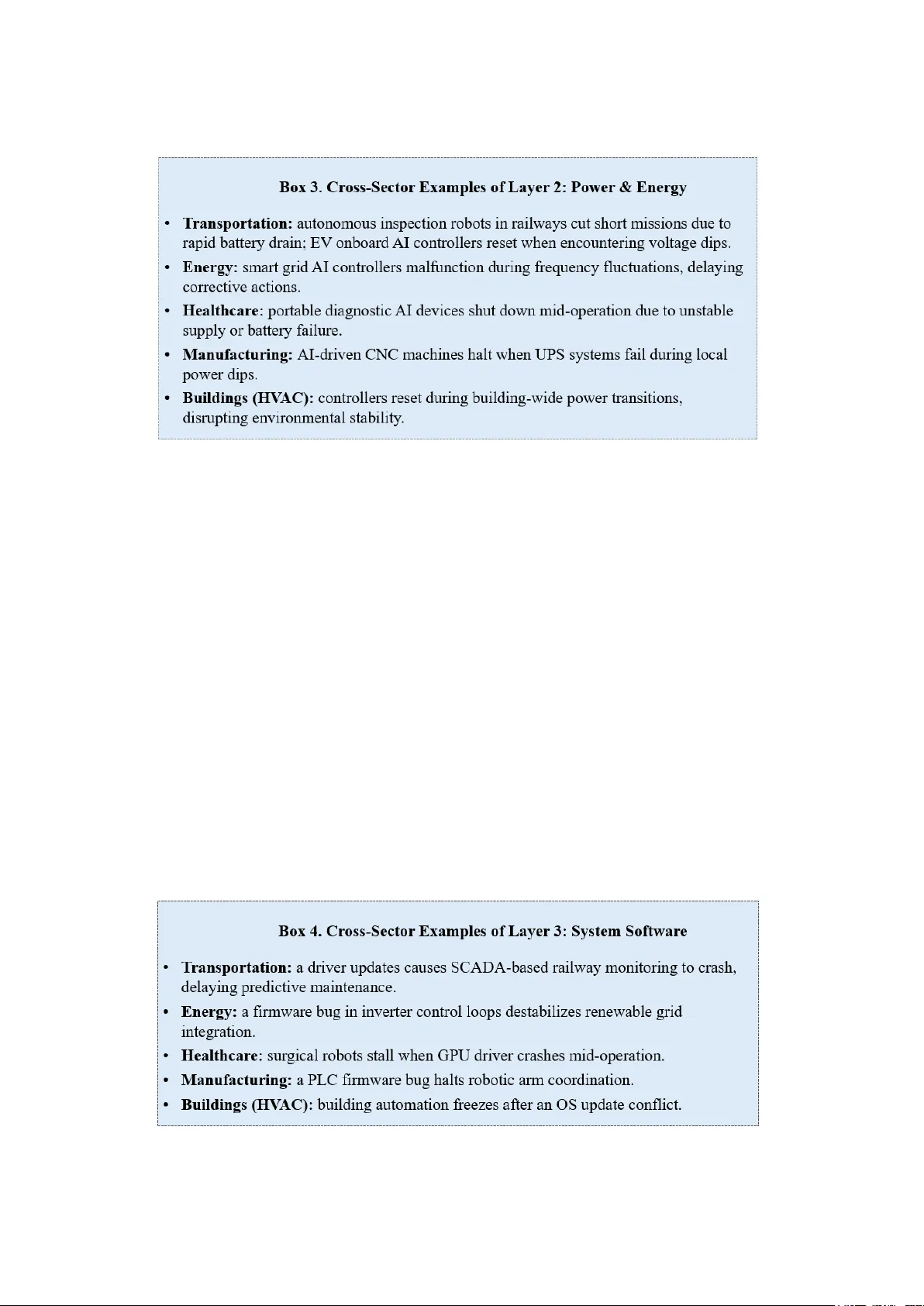

1 This is a preprint of a chapter accepted for publicatio n in Genera tive and Agentic AI Reliability : Architectures, Challenges, and Trust for Autonomous Systems , published by Spri nger Nature. From Failure Modes to Reli ability Awareness in Generative and A gentic AI System Janet (J ing) Lin 1 , Liangwei Zhang 2 1 Division of Op eration and Maintenance , Lu leå University of Technolog y, Luleå, Sweden 2 Department o f Industrial Engineering, Dong guan University of Techno logy, Dongguan, China Abstract This chapter bridges technical analysis an d o rganizational preparedness by tracing the path from layered failure modes to reliability awareness in generative and ag entic AI systems. We first introduce an 11-lay er failure stack, a structured framework for iden tifying vulnerabilities ranging from ha rdware and power foundations to adaptive learning and agentic reasoning. Building on this, the ch apter demonstrates how failures r arely occur in isolation but propagate across layers, creating cascading effects with systemic co nsequences. To complemen t this diagnostic lens, we develop the concept of awareness mapping: a maturity -or iented framework that quantifie s how well individuals and organ izations recognize reliability risks across the AI stack. Awareness is tre ated not only as a diagnostic score but also as a strategic inpu t for AI governance, guiding improvement and resilience planning. By linking layered failu res to awareness levels and further integrating th is into Dependability-Centred A sset Management (DCAM), the chapter positions awareness mapping as bo th a measur ement tool and a road map for trustworthy and sustainable AI deploy ment acro ss mission-critical do mains. Keywords AI Reliability , Awareness Ma pping , Dep endability-Centred Asset Management , Generative an d Agentic AI Systems 1 Introduction AI systems ar e in creasingly deployed in s afety- and mission-critical domains such as transportation, energy, healthcare, manufacturing, and the built en vironment. Reliability has lon g bee n a ce ntral concern for what we call conventional AI systems — mach ine learning applicatio ns designed for tasks such as clas sification, p rediction, optimization, and control. These systems face mu lti -lev el vulnerab ilities, spanning hardware stability, power q uality, data integ rity, model robustness, and application in tegration. The arriv al of generative AI expan ds this landscape. By producing open -ended outputs — text, images, co de, or d esigns — gener ative systems in troduce new reliability challenges, including hallucination s, factual erro rs, tox ic or biased content, and potential m isuse through d eepfakes o r disinformation . T hese r isks extend beyond conven tional performance concer ns to qu estions of trustworthiness, safety , and governance (Joshi, 2 025). The next wav e, agentic AI, deepens th e ch allenge f urther. Combining autonomy, p lanning, reasoning, memory, and multi-agen t in teraction, ag entic systems can pursue goals and in itiating actions with system-wide consequ ences. Their f ailure m odes in clude goal misalignment, flawed planning, emergent conflicts between agen ts, and breakdowns in human – AI collaboration (Acharya, Kuppan and Divya, 2025) . This chapter addresses th ese paradigms in con tinuity, asking: where a nd how can conventional, generative, and ag entic AI systems fail — and h ow aware a re we of these vuln erabilities? To answer, the ch apter introduces two co mplementary contribu tions: 2 • The 11 -layer failure stack , a structured f ramework tracing vulnerabilities f rom physical computation and energy thro ugh data, models, and applications, up to learning, reasoning, and multi-agen t coordination. • The concept of awar eness mapping , which assesses how well indiv iduals and organizations recognize risks acro ss these layers and position s awareness itself as a dimen sion of reliability. Case vig nettes d rawn f rom tr ansportation, energy, healthcare, manufacturing, and the built environment illustrate both layer -specific vulnerabilities and cascad ing, cross-layer ef fects. Finally , the chapter situates these tools within the paradigm of Depen dab ility-Centred Asset Manag ement (DCAM), linking technical failure analysis to lifecycle strategies for trustwor thy and sustainable deployment o f generative and agentic AI systems. 2 Reliability as a Moving Target in Conventional, Generative, and Agentic AI Reliability has traditionally been treated as someth ing that could b e designed for and verified — a destination rather than a journey. In pr actice, however, even in classical engineering domain s, reliability has always been a moving target. It shifts over time with o perating conditions, usage patterns, main tenance pr actices, and unex pected interactions. What was once “reliable” in a test environment m ay not hold under long -term use or in new contexts. This dynamism becom es ev en m ore pronounced in AI systems. Unlike physical comp onents whose d egradation can of ten be mod elled in predictab le ways, AI systems continuously interact with changing data, evolving en vironments, and — in th e case of agentic AI — with other ag ents and human stakeho lders. Reliability here is not a static p roperty but a dynamic relatio nship, shap ed by m acro-level reg ulations and institutions, m eso- level organization al practices, and m icro-level component behaviours (Lin and Silfvenius, 2025) . To ground this discussion, we turn first to established def initions. I nternational stand ards such as ISO, IEC, and IE EE define reliability as: “ The a bility of a system or co mponent to perform its required functio ns under stated con ditions for a specified period o f time. ” (Zhang et al. , 2017) This classical definition provides a solid foundation, bu t its m eaning shifts as we move from traditional physical and software systems to conven tional AI , generativ e AI, and agentic AI . Each paradigm for ces us to reinterpret what co unts as the “inten ded function” and which vulnerabilities matter most. This pro gression d emonstrates that reliability is cumulative . Each paradigm inherits the concerns o f the ones belo w — p hysical durab ility, computational stability, data and mo del robustness — while intro ducing new dimension s shaped by its sco pe. Conventional AI dep ends on reliable infrastructure but adds sensitivit y to data and models. Generative AI bu ilds on th ese layers 3 while demanding co ntent reliability and safety . Ag entic AI inherits all the above, extending reliability into g oal alignment, adaptive learning, and emergent multi -agent behav iour (Fig. 1). Fig. 1. Reliability a s an expanding, cumulative concept. Each paradigm inherits the reliability concerns of the ones below whil e i ntroducing new dimensions shaped by its scope. Traditional reliability e mphasizes physical and software dependability; conventional A I a dds data a nd model robustness; generative AI extends to trustworthy content; and agentic AI expands further into reason ing, adaptation, and int eraction. In this sense, AI d oes not change the fact that reliability is a moving target — it am plifies it. By redefining what counts as an “intended function ,” AI systems shift the trajectory of r eliability challenges and require continuous adaptation in engineering practice (Table 1). At the same time, the very features that co mplicate reliability — adaptivity, perception , and reasoning — also create new o pportunities, from ad vanced d iagnostics to self -healing mech anisms. AI makes r eliability both harder and more achievable: harder, b ecause it multiplies potential failure modes; more achievable, because it equips us with intelligen t tools to anticipate and mitigate them in real time. Table 1 Evolving interpretatio ns of reliability acro ss system paradigms System Type How the Standard Defini tion Applies Characteristic Failure Emph ases Traditional systems (Physical assets & classical software) Performing intended functio ns under stated conditions = predictable operation of compone nts or code over time, managed by design quality, testing, a nd maintenance. Wear-out, fatigue, corrosion, software bugs, maintenance errors. Conventional AI systems Intended function = providing consistent and accurate predictions, classifications, or optimizations, supported by reliable infrastructure. Data drift, mislabelling, model instability, hardware/software faults, silent monitoring failures. Generative AI systems Intended function = producing trustworthy, factual, safe, and contextually appropriate outputs, sta ble at scale. Hallucinations, factual inconsistency, bias, unsafe content, misuse (d eepfakes), scaling failures. Agentic AI systems Intended function = reasoning, planning, adapting, and safel y pursuing go als in dynamic, multi-agent conte xts. Goal misalignment, flawed reasoning, unsafe adaptation, emergent conflicts, cascading systemic fail ures. Yet AI is not only an amplifier of e xisting reliability dynamics. It can also s hift or misdirect the trajectory of how reliab ility is un derstood and man aged. By redef ining what counts as reliable 4 behaviour — for example, emph asizing output plausibility rather than operatio nal stability — AI risks encourag ing organizations to und eremphasize traditional depend ability concerns or overestimate AI’s self - correcting capacities. As argued in earlier work on the intrinsic mec hanisms of reliability im provement (Lin and Silfven ius, 2025) , reliability mu st b e seen as a continuou sly evolving system property, requiring deliberate and ongoing en hancement. This perspective reinforces wh y a structured, layered approach is essential: it no t only catalog s failure modes b ut also provides guidance for sustaining and improving reliability in the er a of co nventional, generative, and agentic AI. Thus, the stan dards-based definition remains essential, bu t for AI systems it must be extended into a layer ed and dynamic conce ption of reliability . Th is prepares the groun d for Section 3, where we introd uce the 11 -layer failure stack an d map how vulnerabilities man ifest differ ently acro ss conventional, generative, and agentic AI sy stems. 3. Layered Failure Modes Across Conventional, Generative, and Agentic AI Systems Failures in AI systems rarely occu r in isolation. They emerge from multiple interacting layers, spanning from phy sical computation and infrastructure through data pipelines, mo dels, and applications, up to adap tive learning and multi -agent coordination. To capture this comp lexity, we adopt an 11- layer failure stack: a structured fram ework that traces potential vulnerabilities across the full spectru m of AI systems. This failure stack provides a baseline lens fo r analysing reliability challenges. Yet its manifestations are not uniform. In conventional AI, failures often concentrate around data quality, model robustness, an d integration into domain applications. In g enerative AI, reliab ility hinges on the factuality, appropriateness, and safe use of generated content. In agentic AI, ch allenges extend further into goal alignment, reasoning fidelity , adaptation in dynamic environments, and emergen t multi-agen t behaviours. To organize th is analysis, the section unfo lds in three steps: • 3.1 introduce s the 11-layer failure stack as a g eneral framework, prov iding an ov erview o f its structure and logic. • 3.2 develops a comparative view, showing how each l ayer manifests differently across conventional, generative, and agentic AI sy stems. • 3.3 offers paradigm spotlights, highligh ting distinctive reliability challen ges and illustrativ e vignettes that d emonstrate how failures un fold in real -world contex ts. • 3.4 dr aws the thr eads togeth er by ex amining cross -layer risks an d cascadin g effects, showing how localized faults propagate through the stack and amplify into systemic failures. Taken together, this layered perspective reveals both shared vulnerabilities and paradigm - specific risks, u nderscoring that AI reliability cannot be s af eguarded at a single layer alone. It requires a cross-lay er, paradigm- sensitive approach th at ad apts to the ev olving nature o f AI systems (Ale et al. , 2 025). 3.1 The 11-Layer Failure Stack: A General Framework Ensuring the reliab ility o f AI systems req uires more than verifyin g isolated components. Failures often arise from complex interactions acro ss multiple layer s, wh ere v ulnerabilities at one lev el propagate upward or d ownward, creating systemic risks. To cap ture this co mplexity, we propose an 11 -layer failure stack, wh ich o rganizes potential vuln erabilities f rom the phy sical substrate of computation to the highest levels of reason ing, adaptation, and multi -agent interaction. The 11 layers can be grouped into four broad dom ains: 1. Foundation al layers (hardware, power, system software, AI framewor ks) — the phy sical and computation al substrate. 2. Core intelligen ce layers (models and data) — the learning and knowled ge base. 5 3. Operational layers (applications, execution, monitoring, l ea rning) — the deplo yment an d adaptation m echanisms. 4. Agentic lay er (reasoning, g oal alignment, multi- agent coordinatio n) — the level where autonomy and decision- making unfold. Each lay er has a distinct r ole, typ ical failure mod es, and sectoral manifestations. Table 2 provides a high -level summary. Table 2 Overview of the 11-Layer Failure Stack Layer Function Representative Failur e Modes 1. Hardware Computing substrate: processors, memory, storage, interconnects Overheating, memory bi t flips, device wear-out, interconnect degradation 2. Power & Energy Stable power s upply: PSUs, UPS, energy management Voltage instability, surges/ spikes, thermal overload, battery depletio n 3. System Software OS, drivers, virtualizati on, firmware Kernel panics, d river incompatibility, firmware bugs, virtualization o verhead 4. AI Frameworks ML/DL libraries and pi pelines Dependency conflicts, numerical instability, non-deterministic training 5. Models Encoded AI knowledge and decision rules Overfitting, underfitting, adversarial attacks, hallucinations 6. Data Input pip elines, stor age, labelling Data drift, noise, mi slabelled data , integratio n errors, unreliable synth etic data 7. Applications Domain-specific AI use cas es API downtime, poor int egration, UI errors 8. Execution Real-time orchestration: cloud/edge, load balancin g Latency spikes, cold starts , resource starvation 9. Monitoring Observability, logging, alerts Silent failure, undetected drift, alert fatigue 10. Learning Continuous learning and adaptation Concept drift, misaligned feedback lo ops, retraining on biased dat a 11. Agentic AI Reasoning, planning, multi- agent coordination Goal misalignment, e mergent conflicts, opaque decision-making Why Layers Matter To gether The 11-layer stack is not ju st a checklist — it emphasizes that failures are lay ered and interdependen t. A data error (Layer 6 ) can cascade into faulty mo dels (Layer 5), misguide applications (Layer 7), an d distort agentic co ordination (Layer 11). Conversely, poor r easoning at the ag entic level can impose stress o n infr astructure, amplifying faults downward . Thus, the 11 - layer stack pr ovides both: • a diagnostic lens (where can failures o ccur?), and • a design pr inciple (how should systems be ar chitected to contain cascadin g risks?). In the nex t section (3.2), we extend this framewo rk into a compar ative analysis, showing how the same layer s manifest differently ac ross conventional, generative, and agentic AI systems. 3.1.1 Layer 1: Hardware The hardware lay er provides the physical fo undation for all AI systems. It e ncompasses processors (CPUs, GPU s, TPUs), memor y, storag e devices, and interconnects that carry ou t and s ustain computation. Without reliab le h ardware, higher levels of the AI stac k ca nnot operate co rrectly, as every model, data flow, and decision ultimately depend on the integr ity of the physical substrate. Hardware failu res often emerge from: • Thermal stress, such as overheating in processors or acceler ators. • Wear-out an d fatigue, including deg raded solder joints or storage m edia failure. • Transient faults, such as memory b it flips from cosmic rad iation or electromagnetic interference . • Environmen tal stress, including vibr ation, dust, or humidity damag ing sensitive circuits. These f ailures can be either catastrophic (system crash es) or silent (bit errors that propagate unnoticed). 6 Classical hardware r eliability engineering has a long tradition of mitigation strategies, including : • Redundancy ( backup processors, fault -toleran t architectures). • Error- Correcting Codes (ECC) to d etect and correct memory errors. • Thermal man agement through active coo ling and environmental design. However, the demands of AI hard ware introduce new ch allenges. Accelerato rs like GPUs and TPUs operate at much higher utilization and parallelism, making them more susceptible to ther mal cycling and componen t stress. Neuromorphic and edge -AI chips present em erging failur e behaviours that a re less well understood. Research is e xploring self-monitor ing har dware, ad aptive voltage/frequen cy scalin g, and AI -driven prognostics to predict hard ware d egradation (Shankar and Muralidh ar, 2025). Yet, dep loyment in safety- critical contexts such as healthcare o r transportation remains limited. Link to Higher Layers Hardware failures often propagate invisibly . A silent memory error at this lev el can corrupt model weights (Layer 5), leading to systematic misclassificatio ns. A processor g litch can trig ger operating system crashes (Layer 3) , cascading into down time at th e app lication or agen tic layer s. Thus, ensu ring robustness at th e hardware layer is n ot only foundation al b ut also essential f or preserving reliab ility throughout the entire AI stack. 3.1.2 Layer 2: Power & Energy The power and energy layer supplies the stable electrical found ation that enables AI h ardware to function. This incl u des power supply units (PSUs), batteries, uninterruptible power s upplies (UPS), and energy management systems. Reliability here ensur es not only continuous operatio n b ut also protection against fluctuation s and interruptio ns that ca n compromise computation (Huang et al. , 2025). Power & Energy failures of ten emerge from: • Voltage instability d ue to grid fluctuation s. • Thermal overlo ad of power compon ents. • Surge or sp ike damage from transient events. • Battery depletion in mobile or edge devices. • Grounding or wirin g faults in deployed env ironments. 7 Reliability engineering for power systems em phasizes redundant power supplies, surge protection, and battery management systems. In data centers, advan ced en ergy - aware schedu ling balances AI workload against power draw. In edge-AI, low-power ch ips and adaptive energ y managemen t extend dev ice life. Yet, as AI systems mov e into mission -critical contexts, even min or instabilities are unaccep table. Research is shifting toward AI -en abled power monitoring (predicting battery life, detecting instability) an d integ ration of re newable energy sources, though these approaches are not yet mature for safety -critical deplo yment. Link to Higher Layers Failures here p ropagate rapidly upward. A tr ansient p ower loss can cause f irmware corruption (Layer 3), data p ipeline interruption (Layer 6), or unexpected resets that undermine multi -agent coordination ( Layer 11). Reliable AI req uires stable power as a no n-negotiable foundation. 3.1.3 Layer 3: System Software System softwar e sits b etween hardware and h igher -level framewo rks, provid ing the operatin g environment fo r AI workloads. It inclu des operating systems, dev ice drivers, virtualization layers, and f irmware. This layer en sures that ph ysical comp onents are acc essible and stable for applications above. Typical System Software Failu res include (Ebad, 2018): • Kernel pan ics or OS crashes under load. • Driver inco mpatibility with accelerators (e.g ., GPU drivers). • Firmware bu gs that destabilize contro l systems. • Virtualization ov erhead or clock skew, af fecting real -time tasks. • Poorly timed updates introducing regressions. 8 System software reliability is usually addr essed through certification, lo ng -term support ker nels, and redundan t control firmware. Virtualization ad ds isolation but also complexity, raising n ew risks. In AI, t h e challenge is heigh tened because drivers and firmware must keep pace with rap idly evolving hardware accelerators. Research explores lightweight hypervisors, formally verified kernels, and continuous integ ration pipelines for system software. However, man y industries still face gaps betwe en rapidly updated AI stacks and conserv ative operational environmen ts (e. g., energy or healthca re). Link to Higher Layers System software i s the glue between phy sical hardware an d AI framewor ks. Failures here can ripple upward, render ing f rameworks unusable (L ayer 4), halting mo del inference (Layer 5), or causing app lication downtime (Layer 7). Ensuring resilien ce at this lay er requires balan cing stability and adaptability. 3.1.4 Layer 4: AI Frameworks AI frameworks provide the libraries and pipelines that enable develop ers to build, train, and deploy models. Th is in cludes deep learning platforms (e.g., TensorFlow, PyTo rch), optimization toolkits, and inference runtimes. Fra meworks standardize acce ss to hardwar e accelerators and simplify large - scale model train ing, but they also introdu ce dependencies and complex ity (Weber, 2022 ). Typical AI Frameworks Failures include: • Dependen cy conflicts between framewo rk versions. • Numerical instab ilities during training ( e.g., exploding/vanishing gradien ts). • Non-deter ministic behaviour , making models difficu lt to reproduce. • Poor back ward compatibility when upgrad ing frameworks. Current best prac tices emphasize contain erization, dependency pinnin g, and continuous integration testing . Open-source ecosystems like PyTo rch and TensorFlow evolve rapidly, enabling cutting-ed ge applications but creating instability for safety- critical industries. Research focuses on deterministic training, lightweight inferen ce runtimes, and fram ework cer tification f or high - assurance d omains. Ad option in reg ulated in dustries remains slow, as frameworks are rarely validated for d ependability. Link to Higher Layers If frameworks fail, models (Layer 5) cannot run or reproduce reliably, undermining application - level dep endability (La yer 7 ). This layer thus acts as a key stone connecting computational resources to usable intelligen ce. 3.1.5 Layer 5: Models Models en code the learned intelligen ce of AI systems, transforming data into predictions, classifications, or decisions. This includes con ventional ML models, d eep neu ral netwo rks, foundation mod els, and large languag e models (LLMs). 9 Typical Mo dels Failures include: • Overfitting o r underfitting, reducing generaliza bility. • Adversarial vu lnerabilities, where small per turbations trigger misclassification. • Hallucinations, p articularly in generative models producing plausible bu t false outputs. • Model drif t, as performance degrades under new data distributions. Research on r obustness, fairness, and explain ability has grown rapidly. Techniques such as adversarial training, calibration methods, and un certainty quantification aim to improve reliability. Yet, the rise of foundation and generative mod els introduces new risks: hallucinations, misaligned goals, and opaque decision -making ( T. Zhang et al. , 2 025). Current progress includes fine -tuning guardrails, alignm ent method s such as Reinforcement Learning from Hu man Feedback (RLHF), and certificatio n efforts, but practical assurance for mission -critical u se is limited. Link to Higher Layers Model failures propag ate directly to the application lay er (7) and can misguide mu lti -agent coordination (Layer 11). B ecause m odels form the intelligence core, reliability at th is layer is h ighly visible to end -users, often overshadowin g vulnerabilities in lower lay ers. 3.1.6 Layer 6: Data Data fo rms th e lifeblo od of AI systems. This layer includes data pipelines, storage, collection devices, labelling infrastructures, and syn thetic d ata generation. Reliable AI depends on the q uality, integrity, and timelin ess of data. Typical Data Failures include: • Data drift, wher e input distributions shift o ver time. • Noise or cor ruption from faulty sensors. • Labelling errors, especially in superv ised learning. • Integration err ors when fusing multiple data so urces. • Synthetic data unreliability, if generation processes introduce hidden biases. Data reliab ility resear ch emphasizes data v alidation pip elines, anomaly detection, and active data curation (Shar ma, Kumar and Kaswan, 2021). Advances in synthetic data generation promise coverage of rare ev ents but risk introducing unrepresentative d istributions. Ind ustry practice o ften underestimates the difficulty of main taining high -quality, real-time data pipelines, especially when 10 integrating legacy sensors. Ongoin g research explores data provenance tracking and AI -dr iven labelling q uality assurance. Link to Higher Layers Faulty d ata under mines m odel training (Layer 5), disrupts applications (Layer 7 ), and erodes monitoring reliability (Layer 9). In many cases, data-layer failures propagate invisibly, making this one of the mo st critical and underestimated points of failure in AI systems. 3.1.7 Layer 7: Application The application layer represents the domain - specific integration of AI into workflows and real - world decision -making. It connects m odels to oper ators, assets, and en d -users through d ashboards, APIs, or autom ation systems. This is where AI ’s predictions bec o me actionable. Typical Ap plication Failur es include: • Poor integr ation with legacy systems. • API downtime that interrupts functionality . • User interface er rors leading to misinterpr etation of outputs. • Context mismatch , where AI decisions ar e applied inappropriately. Application reliab ility is supported through API testing, m odular integration, and resilience design (Kothamali, 2 025). However, in practice, applications often d epend on brittle mid dleware and poo rly monitored interfaces. Current r esearch emphasizes h uman -centred d esign, trust- calibrated interfac es, an d explainable outpu ts to stren gthen the reliability of AI -assisted decision - making . Link to Higher Layers Application failur es o ften obscure root causes: oper ators may b lame the application ev en when the true fault lies in d ata (Layer 6) or models (Lay er 5). This makes r obust app lication design cr itical as the final tou chpoint between AI an d human trust. 3.1.8 Layer 8: Execution The ex ecution layer g overns the r eal-tim e orchestration of AI workloads. This includes cloud/ed ge scheduling, parallel execution, and load balancing (Alsadie and Alsulam i, 2024) . Reliability here ensures that AI models and application s can run under constraints of latency, scale, and computation al resources. Typical Failures include: • Latency spikes d egrading real-time performance. • Cold starts in serverless arch itectures causing delays. • Resource starvation when workloads exce ed system capacity. • Communication bottlenecks between distribu ted nodes. 11 Solutions include container orchestration ( Kubernetes), real-time sch edulers, and edg e-cloud hybrid architectu res. However, performance assurance for safety - critical AI is still immature. Research explores deter ministic execution fram eworks and AI -dr iven workload optimization, b ut adoption lags o utside data center environments. Link to Higher Layers Execution issues are highly visible: even reliable models (Layer 5) or applications (Layer 7) fail if latency or availability breaks th e pipeline. Thus, execution reliability is key to m aking AI dependable in real-world operation s. 3.1.9 Layer 9: Monitoring The monitoring layer p rovides observ ability into AI systems, including lo gging, anomaly detection, drift detection, and alerting (Aghaei et al. , 2025). Its role is to ensure that failures and degradations are identified in time to act. Typical Mo nitoring Failures include: • Silent failure, wh ere monitoring stops witho ut notice. • Undetected d rift, allowing model degradation to persist. • Alert fatigue, where too many false alarms cau se warnings to be ignor ed. • Insufficient v isibility, leaving blind spots in performance monitoring. Progress in cludes drift d etection algor ithms, explainab le monitoring d ashboards, and f ederated observability p latforms. Yet, industry practice often f alls sh ort — many sy stems lack effective monitoring of AI -specific risks. Emer ging r esearch explores self - mo nitoring AI agen ts capable of explaining their own uncertainty. Link to Higher Layers Without reliable monitoring, failures propagate unchecked. This layer serves as the immune system of AI system s, making its reliability critical to long -term safety and trust. 3.1.10 Layer 10: Learning 12 The lear ning lay er governs ad aptation and co ntinuous imp rovement. It includes retraining pi pelines, online learning, reinfo rcement learning, a n d feedback integration. Unlike static s ystems, AI systems evolve after dep loyment — m aking this layer uniquely dyn amic. Typical Lea rning Failures in clude: • Concept drif t, where learned rules no longer fit current conditions. • Feedback lo op misalignment, where learning amplifies errors. • Biased retraining data, reinforcing systemic wea knesses. • Forgetting rar e but critical cases during adap tation. Research on continual learning, reinforcement learning safety , and robust retraining is advancing rapidly. Industrial p ractice includ es shadow training p ipelines and o ffline validation before deployment (Bay ram and Ahm ed, 2025) , but these safeg uards are resource intensive . T rue safe lifelong lear ning remains unsolved in critical domains. Link to Higher Layers Errors in learning undermine trust at the agentic level (Layer 11), as agents adapt in unintended ways. Continuous learning thus transforms r eliability from a static property into a moving target that must be ac tively managed. 3.1.11 Layer 11: AI Agent The agent lay er introduces the hig hest-lev el intelligence: reasoning, planning, c o mmunication, goal alignment, and multi -agent interaction . This is wher e AI systems become agentic, acting autonomously to pursue objectives in comp lex environments. Typical AI Agent Failures include: • Goal misalignmen t, where agent objectiv es diverge from human inten t. • Multi-agent c onflicts, when agents com pete instead of cooperating . • Opaque rea soning, making it difficult to explain or correct decisions. • Cascading failures, as poor agentic choices a mplify lower -layer weak nesses. 13 Agent-based AI is an emer ging frontier. Current progress includ es goal alignm ent techniques (e.g., reward shapin g, constitutional AI (Sicari et al. , 202 4)), multi-ag ent coordin ation frameworks, and explain able planning methods. Howev er, reliability assurance h ere is im mature, with open challenges aro und emergent behav iours and human -agent collaboratio n. Link to Higher Layers The ag ent lay er sits at the top of the stack, b ut it reflects vu lnerabilities fr om ev ery lower layer. Poor data (Lay er 6 ) or unstable learning (Lay er 1 0) can distort agent reason ing. Failures here are most visible to society , as they directly af fect human trust, safety, and system-lev el outcomes. 3.2 Comparative View: Layered Failure Modes Across AI Paradigms The 11-lay er failure stack is universal: every AI system — from conventio nal classifiers to generative models to auto nomous agents — operates across th ese lay ers. Importan tly, failures can occur at any layer. The distinctions drawn here highlight where reliab ility challenges tend to concentrate or evolve, not where risks exclu sively reside (Table 3 , Fig. 2). Thus, the com parative analysis should b e understood as showing relativ e prominence and shifting mean ings, rather than the absence o f failures in less emphasized layers. Conventional AI: Pipeline -Centre d Risks Convention al AI systems are task -specific and domain-bound ed. Reliability risks concentrate in the data – model – application pip eline: • Layer 5 (Mod els): overfitting and br ittleness under unseen conditions. • Layer 6 (Data): d rift, noise, and labelling errors. • Layer 7 (App lications): integration failures d isrupting workflows. Other layers remain relevant — for instance, hard ware faults (Lay er 1) or wea k monitoring (Layer 9) — but they are less promin ent in defining conventional AI reliab ility. Generative AI: Content-Cent red Risks Generative AI ex pands conventional sy stems by pr oducing open -en ded content. Th is shifts reliability conce rns toward accuracy, saf ety, and appropriateness of gen erated outputs: • Layer 5 (Mod els): hallucinations, con trollability, and factual accuracy . • Layer 6 (Data): r eliance on web- scale corpora introdu ces copyright, bias, and toxicity issues. • Layer 9 (Mon itoring): active filters to detect harm ful or misleading content. • Layer 10 (Learnin g): risks of misalignment du ring fine -tuning or feed back-based ad aptation. Lower layers still matter: GPU failures (Layer 1) or orchestration b ottlenecks (Layer 8) can undermine gen erative systems as much as conventional ones. Agentic AI: Autono my- Centred Risks Agentic AI com bines generative capabilities with plann ing, reasoning, memory, and multi -agent interaction. Reliab ility here extends into alignment, safe adaptation , and emergent behav iours: • Layer 10 (Learnin g): lifelong adaptation , reinforcement drift, and catastrophic forgetting. • Layer 11 (AI Agen t): g oal m isalignment, multi -agent conf licts, op aque reasoning, erosion of human trust. These higher lay ers intensify the complex ity of reliability, but lower lay ers rem ain just as critical. A voltage fluctuation (Layer 2) o r b iased train ing dataset (Layer 6) can still cascade upward, destabilizin g autonomy. Table 3 Core Risks vs. Pa radigm-Specific Emphasis Layer Core Risks (All Paradigms) Conventional AI Emphasis Generative AI Emphasis Agentic AI Emphasis 1 Hardware Device wear-out, overheating Embedded systems Compute scaling for training/inference Energy-hungry autonomy at edge 2 Power & Energy Voltage instability, depletion Battery drains in robots Cloud/edge cost and energy use Grid-level load from fleets 14 3 System Software OS crashes, driver conflicts Firmware bugs in controllers Framework dependency fragility Autonomy requires robust OS for m ulti- agents 4 Frameworks Instability, version conflicts ML pipeline mismatches Model integration with APIs Multi-agent orchestration libraries 5 Models Overfitting, adversarial inputs Task-specific brittleness Hallucinations, controllability Reasoning errors, emergent strategies 6 Data Drift, noise, mislabelling Pipeline integrity Bias in web- scale/synthetic corpora Self-selected/adaptive data risks 7 Applications Poor integration, API fragility Workflow disruption Copilots, generative design Autonomous workflows and decision loops 8 Execution Latency, cold starts, overload Basic resource scheduling Inference s c aling, real - time streaming Fleet-level orchestration 9 Monitoring Silent failure, alert fatigue Limited logging Safety filters, toxicity/factuality check Goal intent and human oversight dashboards 10 Learning Misaligned retraining, drift Offline retraining RLHF misalignment, online fine-tuning Lifelong adaptation, safe reinforcement 11 AI Agent Goal conflicts, black-box reasoning Absent/minimal Limited prompting autonomy Full autonomy, multi- agent coordination risks Cautionary Note : While certain layers are m ore visible in one p aradigm, all lay ers remain relevant to reliability. Overlo oking foundational or less -pr ominent layers risks hidden faults cascading upward, whe re they amplify in to sy stem - or s ociety-lev el co nsequences. Effective reliability assurance therefore requires vig ilance across the entire stack, reg ardless of paradigm. Fig. 2 Reliability Prominence A c ross AI Paradigm s (11-Layer Stack) Fig . 2 illustrates how reliability co ncerns are distributed across the 11 -lay er failure stack for conventional, generative, and agen tic AI systems. Num bers (1 – 5) rep resent relative p rominence — not the existence of failures, but the degree to which r eliability e fforts and discussions hav e typically con centrated at each layer. In conven tional AI, r eliability attention has focused mo st on data, models, and applications (scores 4), r eflecting widespread wo rk on data quality, mo del robustness, and system integration. However, this does n ot im ply that oth er layers are free of risk; rath er, concerns at lower (hardwar e, power) or h igher ( monitoring, learn ing) lay ers h ave histor ically been less emphasized, even though they can produce significant cascading ef fects. In generativ e AI, prominence shifts u pward: models and data pipelin es reach the high est level (5), as issues like hallucinations, bias, an d content safety dominate. Frameworks and monitoring 15 also gain prominence bec ause of the need for decoding stability, safety filters, and real -time oversight. In agentic AI, em phasis extends further into higher layer s. Execution, learning, and ag ent -level reasoning em erge as top concerns (5), cap turing risks such as goal misalign ment, unsafe ad aptation, and emergen t multi-agent conflict. The progression demonstrates that while all layers remain vu lnerable, the center of gravity of reliability attention shifts with paradig m evolution: from mid -stack concerns in conventional AI, to content valid ity in generative AI, to align ment and coordina tion challen ges in agentic AI. 3.3 Paradigm Spotlights: Reliability in Conventional, Generative, and Agentic AI While all th ree par adigms shar e the same 11 -layer stack of vulnerabilities, their reliability personalities differ in important way s. Sectio n 3 .2 high lighted how emphasis shifts acro ss lay ers. Here, the fo cus turns to the systemic natu re of failures within each paradigm , sho wing how risks manifest in pr actice and how the resear ch and standards commun ities are b eg inning to respond. Convention al AI systems, such as predictive classifiers and optimization m odels, have long been applied in transportation, energy, healthcare, and manufacturing. Their reliability challenges are most visible in the rob ustness of models, the stability of da ta pipelin es, and the effectiveness of integration with domain -specific applications. Unlike catastrophic failur es, breakdowns here often emerge gradually, as p erformance degrades due to data drift or u nanticipated operating conditions. For instance, a railway maintenance system trained on older vibration data may fail to generalize when co nfronted with new materials, prod ucing false negatives that quietly u ndermine serv ice availability. To mitigate such risks, the state of the art emphasizes rigorou s valid ation and lifecycle managemen t through ML Ops framework s, alongside established safety standards such as ISO 26262 for automotive systems ( ISO, 2 018), IEC 6 1508 for functional safety (IEC, 2010), and IEEE 1633 for software reliab ility (IEEE, 2017) . Reliability in conventional AI is th us anchored in robustness, valid ation, and disciplined o perational practices. Generative AI represen ts a different reliability pr ofile, because it produces o pen -ended outputs rather than f ixed predictions. Here, the cen tral question is not merely wh ether the mod el generalizes correctly, b ut wheth er its outputs are f actually ac curate , trustworthy, and saf e for downstream u se (X. Zhang et al. , 2025). This raises risks o f hallucination , compounding errors wh en outputs are recycled into retr aining, and m isuse of generated content. A hea lthcare example illustrates the stakes: a generative assistant might produce a co nvincing but incorrec t radiology expl anation th at persuades clin icians to d elay n ecessary treatmen t. The problem is not just tech nical error, bu t the amplification of error through h uman trust. To address th ese risks, current approaches include the NIST AI Risk Managemen t Framewo rk (RMF) (Nist, 2023 ), which emphasizes transparen cy, documentation, and saf eguards, as well as practical tech niques such as red -teaming and output guardrails. The I EEE P7003 standar d on algorithmic bias consider ations (Koene, Dowth waite and Seth, 2018) also represents an emerging foundation f o r gov erning fairness and reliability in generative models. Reliability in gener ative AI therefore rests on controlling un predictability, ensuring f actual grounding, and embedding safeguards around open -ended b ehaviour. Agentic AI systems extend the r eliability challen ge even fu rther. By combining reasoning, planning, memory, and interac tion acr oss multiple agents, they introduce risks associated with goal alignment, emergent b ehaviours, and t h e erosion of human o versight. Failures here are rarely lo cal; instead, they em erge systemically, as au tonomous agents optimize loca l objectives in ways that destabilize global p erformance. A case in point ar ises in energy markets, wher e autonomous bidding a g ents act rationally in isolatio n but collectively undermine grid stability. Add ressing these risks requires g overnance as m uch as techn ical solutions, inclu ding human - in -the-loop oversigh t mechanisms, ongoing AI alignmen t research (Russell, 2 022), and em erging frameworks su ch as IEEE P7 009 for fail -safe d esign of autonomous and semi -autonomous sy stems ( IEEE, 2024) . In this sense, ag entic AI reliab ility is less about robustness or hallucination and mo re a bout managing 16 emergent complexity through alignment, governance, and systemic resilience (Zamb are, Thanikella an d Liu, 2025). Table 4 Comparative Reliabili ty Spotlights Across AI Paradigms Paradigm Systemic Reliability Risks Illustrative Example Current Responses / Standards Key Takeaway Conventional AI Data drift, brittle generalization, integration failures Railway predi ctive maintenance misclassifies faults in new materials ML Ops, I S O 26262, IEC 61508, IEEE 1633 Reliability anchored in robustness and validation Generative AI Hallucinations, compounding feedback errors, unsafe outputs Healthcare diagnostic assistant generates misleading radiology explanations NIST AI RMF, red-teaming, IEEE P7003 Reliability defined by trustworthiness and safeguards Agentic AI Goal misalignment, emergent b ehaviours, loss of oversight Autonomous bidding agents destabilize power grid markets Human- in -the- loop, AI alignment research, IEEE P7009 Reliability depends on alignment, governance, and resilience Taken together, these spotlights suggest that the trajectory of AI reliability reflects the evolution of paradigms themselv es. Conv entional AI struggles most with robustness an d life cycle maintenance , g enerative AI with fac tuality and safe use of outputs, and agentic AI with alignmen t and govern ance of emergent behaviou rs. Reliability engin eering must therefore adapt in step: from strengthenin g robustness, to embedd ing safeguards, to governing systemic complexity (Table 4) . 3.4 Cross-Layer Risks and Cascading Effects The 11-layer failure stack shows that vulnerab ilities exist at every stage of AI systems, from hardware foun dations to ag entic co ordination. Yet in practice, failu res rarely remain confined to a single layer. They propagate ac ross the stack, amplifying ris ks an d creating system- wide consequen ces. Th is proper ty distingu ishes AI reliability from many traditional engineering contexts, wher e failures are more localized and predictable (Elder et al. , 2024) . Three arch etypes of cross-lay er propagation are particularly salient: 1. Bottom-up cascades occur when low -level disturbances rise through the stack. For example, noisy sensor d ata (L ayer 6) ca n degrade model accuracy (Layer 5), wh ich then misguides decision-mak ing applications (Lay er 7) . In transportation, a mis -ca librated vibration sensor in a railway bogie may trigger false d efect predictions, resulting in unnecessary reschedulin g and systemic delays. 2. Top-down cascades emerge when high- level decisions o r agentic behaviours stress lower layers. In energ y system s, bidd ing strateg ies by autonomous m arket agents (Layer 11) may overburden control applications (Layer 7) and even destabilize physical infrastructure (Layer 2). Here, the cascad e b egins at the reasoning and coordination layer and reverberates downwar d, producing failures not fr om material fatigue but from em ergent behaviour . 3. Feedback loops are self -reinforcing cy cles that b lur the b oundary between layers. In healthcare, generative models retrained on their o wn outputs (Layer 10) can inject bias into data p ipelines (Layer 6), wh ich in turn wo rsens model reliability (L ayer 5). What begin s as a small d rift compound into systemic diagnostic inac curacies that ero de clinician trust (Lay er 11). These pattern s illustrate why AI reliab ility cannot be reduced to guarding individual components. Cascading failures amp lify r isks and often manifest in sur prising ways — turn ing local disturb ances into global instability. They also high light a gap in curr ent o rganizational practice: m any stakehold ers can iden tify obvious model or application failu res, but f ewer recognize the cross-lay er dynamics that cause small fau lts to grow into systemic risks. 17 This gap motivates the secon d framewor k of the chapter: awareness map ping. By evaluating how organizatio ns perceive and respond to layered and cross- layer risks, awareness mapp ing provides a maturity-o riented lens for designing dependab le AI architectures. 4. Awareness Mapping: From Failure Modes to Reliability Maturity Reliability in AI systems is not on ly a m atter of technical safeguards; it also depends on how well individuals an d o rganizations perceive, understand, and prepare for r isks. Vulnerabilities span all 11 layers of the failure stack, yet their im pact is stro n gly shap ed by awareness. In practice, awareness is un even: m ost practitioners ca n r ecognize failures at the model or application layer, b ut far fewer anticipate risks in data pipelines, adaptive learning dynamics, or multi -agen t interactions — wh ere some of the most conseq uential failures arise. To address this gap, we introduce awaren ess mapping: a structured approach for assessing how compreh ensively o rganizations un derstand reliability risks across AI systems. Awa reness mapping shifts the fo cus f rom failures themselves to the recognition o f those failures, positioning awareness as a critical dimension of reli ability. Mo re importan tly, it prov ides an ev idence - based foundation for strategic decision -mak ing, helpin g organizations review th eir current prep aredness, identify blind spots, and prioriti ze improvements in g overnance, training, monitoring, and lifecy cle managemen t. I n th is way , awa reness mapping serv es both as a diagnostic lens and as a practical tool for shapin g reliability strategies in co nventional, generative, an d agentic AI. This section dev elops the framework in four steps. Section 4.1 explain s how failures across the 11 -layer stack are oper ationalized in to specific reliab ility issues that can b e scored as points o f awareness. Section 4.2 in troduces a five-level maturity scale that translates th ese s cores into stages of organization al readiness. Section 4.3 presents empirical insights from practition er sur veys, highlighting cu rrent blind spots and un even awareness across sectors. Finally, Section 4.4 link s awareness mapp ing to Dependability- Centred Asset Manag ement (DCAM), showing how awareness maturity can guide lifecycle strateg ies for trustworthy and r esilient AI. Ultimately, awareness map ping transforms reliability from a reactive co ncern into a proactive strategic capability, enabling organizations to anticipate, govern, and continually improve AI systems in step with tech nological evolu tion. 4.1 Scoring Awareness Across Failure Modes Section 3 catalog ued the technical vulnerabilities of AI systems thr ough an 11 -layer failure stack. To move from technical vulnerabilities to measurable or ganizational maturity, we link each failure mode to its corresponding body of reliab ility stu dies. Th e idea is that awareness is not simply knowing that failures may occur, b ut being familiar with met hods, studies, or practices that address them. Each study area therefore becomes a poten tial awareness point . In our implementation, the 11-lay er stack is mapped o nto approximately 47 reliability studies drawn from convention al enginee ring, AI safety research, and emerg ing gen erative/agentic AI work. For example: • Hardware failures such as memor y corruption are linked to stu dies on ECC diagnostics and thermal agin g analysis. • Data-related risk s ar e link ed to m ethods for d rift d etection, data pipeline validation, and b ias auditing. • Model-lev el conce rns correspond to ad versarial r obustness testing , uncertainty quantification, or hallucin ation suppression. • At th e ag entic level, studies focus on alignment verificatio n, simulation - in -the-loop evaluation, and multi -agent stress testing. Table 5 su mmarizes this mapping. For each layer of the stack, it disting uishes between: • Baseline reliability studies in conv entional AI systems, • Additional fo cus areas introduced by gener ative AI, and • New reliability challen ges and studies specific to agentic AI. 18 When u sed in practice, respondents are asked to indicate which of these stud y areas they are aware of. E ach affirm ative response cou nts as one point toward an ov erall awareness score. The total score thus reflects not just recognition of risks, but also fam iliarity with concrete methods for addressing them . This scoring approach — assigning one point per identified study area, across 47 in total — is deliberately simplified . It does not capture the dep th of knowledge or implementation quality. However, it prov ides a transpar ent and actionable b aseline: organi zations can benchmark their maturity, iden tify blind spots, and prioritize cap acity building. Section 4 .2 builds on this by introdu cing a five -level maturity scale that translates awa reness scores into stages o f organizational readin ess. Table 5 Reliability Studie s Across Layers of Conventional, Generative, and Agentic AI Systems Layer Conventional AI – Reliability Studies Generative AI – Added Reliability Studies Agentic AI – Added Reliability Studies 1. Hardware 1. GPU/TPU diagnostics; 2. ECC error analysis; 3. in terconnect reliability; 4. th ermal aging studies; 5. vi bration stress testing Long-run accelerator reliability; mixed-precisio n error validation; sustained training/inference stress tests Robotics hard ware robustness; actuator/sensor degradation analysis; fleet-level redundancy evaluation 2. Power & Energy 6. Voltage fluctuation testing; 7. su rge/UPS resilience; 8. th ermal envelope validation; 9. bl ackout/brown out recovery Energy efficiency studies for large-scale t raining; power- capping effects on Q oS; cooling/thermal resilience in datacenters Battery SoH/SOC forecasting; energy-aware autonomy validation; safe degradation pathways under power loss 3. System Software 10. Kernel panic forensics; 11. container/VM stability tests; 12. firmware update safety; 13. clock skew validation CUDA/ROCm compatibility matrices; NUMA/I/O contention studies; G PU m emory allocation fault analysis RTOS determinism studies; watchdog & safety monitor reliability; secure update protocols in edge/IoT setti ngs 4. AI Frameworks 14. Dependency conflict resolution; 15. build reproducibility testing; 16. deterministic training benchmarking Tokenizer stability; decoding reproducibility; safety filter integration validation Agent-framework reliability (tool u se contracts, sandboxing); plug-in orchestration correctness studies 5. Models 17. OOD robustness testing; 18. calibration metrics; 19. adversarial robustness studies; 20. concept drift monitoring; 21. uncertainty quantification Hallucination detection benchmarks; factuality/toxicity red- teaming; controllability experiments (prompt constraints, decoding strategies) Reasoning & planning f idelity testing; goal alignment verification; emergent strategy audits in multi-agent setups 6. Data 22. Label quality audits; 23. data dri ft & leakage detection; 24. data pipeline validation; 25. synthetic data robustness; 26. provenance/lineage verification Web-scale bias detection; PII/copyright filtering studies; synthetic data robustness analysis Memory/log data integrity; self- generated interaction data validation; agent feedback loop consistency checks 19 7. Applications 27. API failu re recovery analysis; 28. latency/throughput impact studies; 29. user trust calibration 30. HMI resilience evaluation Guardrail UI testing; human trust calibration studies; post-processing verification frameworks Governance of end- to -end workflows; safe-abort/approval mechanisms; role/permission modelling validation 8. Execution 31. Chaos engineering; 32. Deployment fault injectioion; 33. orchestration stress tests; 34. autoscaling & rollback analysis; 35. environment drift control KV -cache stability; batching/streaming trade- off benchmarking; heterogeneous accelerator scheduling Real-time multi-agent orchestration testing; consensus/coordination stress tests; runaway loop pre vention 9. Monitoring 36. Observability coverage audits; 37. anomaly detection benchmarks; 38. drift detection latency analysis; 39. alert fatigue mitigation Hallucination/toxicity monitoring frameworks; content safety dashboards; multi-pass critique/verification pipelines Goal deviation monitors; plan - conformance v alidation; safety sentinels for multi-agent monitoring; escalation pat hways 10. Learning 40. Online learning romustness 41. Offline retraining hygiene studies; 42. drift-adaptive training validation; 43. rollback o f regressions RLHF/RLAIF s tability; online fine-tuning safety validation; preference shift monitoring Lifelong l earning safety validation; safe exploration benchmarks; c atastrophic forgetting protection; policy versioning safeguards 11. AI Agent — (minimal in conventional AI) Prompt-loop guardrail validation; limited autonomy safety checks Autonomy safety cases; alignment verification frameworks; simulation- in -the- loop testing; multi-agent ga me- theoretic stress tests Cross - cutting 44. Silent failu re detection studies; 45. cascading-fault modelling; 46. multi-layer resilience co -design; 47. security breach defense Red-teaming suites for generative models; usage policy enforcement; provenance/auditability of generated outputs Governance & oversight frameworks; auditability of plans/actions; inter -agent safety norms; societal/market impact constraints Table 5 translates the 1 1-layer failure stack into a set of reliability studies that serve as awareness points. For each lay er, the b aseline column reflects established reliability p ractices in con ventional AI, while the gen erative and agentic co lumns extend th ese wit h additional co ncerns unique to open - ended conten t generation and au tonomous reason ing. Each study co rresponds to a poin t of awareness: if a practitioner or organizatio n can recognize an d articulate the relevance of that study to their system , it cont ributes one point to their awareness scor e. In total, the 47 identified studies (baseline + ex tensions) define the assessment space. These awaren ess points form the backbone of the scoring m ethod described in Section 4.2, where the to tal nu mber of recognized po ints (0 – 47) is mapped o nto a five-lev el maturity scale. Th is mapping transforms awareness from a q ualitative impression into a stru ctured diagnostic tool, enabling organizations to b enchmark their prepar edness, iden tify b lind spots, and prioritize improvem ents in AI reliability strategies. 20 4.2 Awareness Maturity Levels The awar eness score provides a structured way to measu re how reliably individu als or organizations perceive risks across the AI system stack. It is derived from th e 47 diagnostic points identified in Section 4.1 , corresponding to failure m odes and reliabil ity stud ies spann ing all 11 layers — fro m hardware and data pipelines to adaptive learning and agentic reasoning. Each point r epresents a distinct reliability concern. Respondents are ask ed whether they are aware o f each issue; ever y positive respo nse contrib utes o ne point, pro ducing a maximum possible score of 47. The total reflects the brea dth of reliability risks that stakeh olders consciously r ecognize. It is important to emph asize that these 47 points are drawn f rom conventiona l AI system s . They capture well -established risks such as hardwar e degradation, power instability, data drift, model overfitting, or monitori ng b lind spots. Ho wever, th e framework is designed to be ex tensible . By integr ating g enerative-specific risks (e. g., hallu cinations, factu ality errors, unsafe content) and agentic-specif ic risks (e.g., goal misalignment, reasoning drift, emergent multi -agen t conf licts), the same approach can evolve into a mo re compreh ensive awa reness instru ment. I n this sense, the 47 points should be seen as a baseline example , not a closed set. To make scores meaningful, we map them onto a five-level maturity scale (Table 6) . This s cale captures no t only the number of risks rec ognized, but also the organizational posture implied by that recogn ition: • Level I (0 – 9 points): Unaware Organization s at this stage have not considered AI reliability in a s ystematic way. Only o bvious failures such as applicatio n crashes o r output er rors are n oticed. Reliability is absent from conversation s and practices, leaving system s highly exposed. • Level II (10 – 19 points): Fragmented Awareness Some failures are acknowledged, ty pically at the model or applicatio n level, bu t wit ho ut systematic measures. Risks in d eeper layers — such as data p ipelines, execution, or monitor ing — remain ov erlooked. Reliability effor ts are piecemeal and reactive. • Level III (20 – 29 points): Em erging Multi -Layer Awareness Awareness ex pands across multiple layers. Tea ms recognize that AI can fail beyond surface - level errors and begin to consider m itigation strategies. Data quality, mo del robustness, and infrastructur e reliab ility en ter the discussion, though responses remain mostly reactiv e an d un even. • Level IV (30 – 39 points): Proactive Systemic Awareness Reliability is actively monito red and tested across several layers. Govern ance mechanisms begin to take shape, supported by str uctured dependability practices. Blind spots remain — particularly in higher-ord er risks such as goal alignment or emergent multi-agen t behaviors — but awareness is no longer confin ed to isolated issues. • Level V (40 – 47 points): Compreh ensive Cross- Layer Reliability Table 6 summarizes these l evels, their score ranges, and their practical meaning. Level Score Range Descriptor Meaning in Practice I. Unaware 0 – 9 No consideration Reliability absents from discussions; only obvious failures (e.g., crashes) not iced. II. Fragmented Awareness 10 – 19 Isolated recognition Failures acknowledged mai nly at model or application level; no systematic measures for deeper risks. III. Emerging Multi-Layer Awareness 20 – 29 Expanding recognition Failures a t several layers are ackn owledged; some mitigations applied, tho ugh mostly reactive. IV. Proactive Systemic Awareness 30 – 39 Structured approach Reliability monitored a nd tested across multiple layers; governance mechanisms beginning to emerge. V. Comprehensive Cross - Layer Reliability 40 – 47 Full integration Reliability strategy spans a ll 11 layers, including generative and agentic ri sks; e mbedded in organizational culture. 21 Awareness span s virtually all diag nostic points, including those in reasoning, adaptation, and agentic coordination. Reliability is embedded in organizational culture, supported by systematic dependability en gineering, proactive go vernance, and lifecycle s trategies. This scoring metho d is delib erately simplified. It privileges brea dth of recognition (how many failure types are known) over d epth of understanding (how well those failures are mitigated). As such, it should be interpreted as an indicative measure of org an izational maturity rather than a definitive ev aluation of ca pability. Its stren gth lies in co mparability: b y using the same sco ring approach across team s, sectors, or domains, it highlights b lind spots, benchmarks prog ress, an d guides interv entions. Ultimately, this maturity framewor k turns awaren ess from a static description into a developm ental roadmap. Progression from Level I to Level V reflects n ot only wider recognition of risks, but also deeper institutional capacity to anticipate, mitigate, an d continu ally improve reliability across co nventional, generativ e, and agentic AI systems. 4.3 Empirical Insights from Practice To assess how the awareness map ping framework per forms in practice, we a pplied it du ring sever al invited key note talks across d omains includ ing transportation, ener gy, and manufac turing. In these sessions, participan ts — p rimarily en gineers, manag ers, and researchers engag ed with AI-enabled systems — were asked to complete a rap id diagnostic exercise. They were presen ted with a list of 47 reliability issues mapped to the 11 -lay er f ailure stack (see Section 3) and asked to ind icate which ones they had previously co nsidered in their work. Each identified issue counted as o ne point toward an overall awareness score. The results were s trikingly consistent. While participan ts readily identified a h andful of visible issues — such as model over fitting, data noise, o r application downtime — f ar fewer recognized vulnerab ilities at deeper layers such as power s tability, ex ecu tion latency, or adaptive learning drift . Scores clustered toward the lower end of the maturity ladder, with mo st individuals and organizatio ns falling within Level I (Unaware) or Level II (Frag mented Awaren ess). Only a minority reached Level III, and very few r espondents d emonstrated the cross- layer recognition required for Lev els IV or V. Fig. 3 Distribution of awareness scores from keynote session on AI reliability in the physical asset management and transportation sector. Conference A : 30th International and Me diterranean HDO (MeditM a int2025); HDO: Croatian Maintenance Society) conference, from 19th -22nd May, Rovinj, Croatian. Conference B: 1st International Conference on Transportati on Systems (TS2025), from 16th -18th June 2025, Lisbon, Portugal. Fig . 3 illustrate the distrib ution of scores from two keynote sessions. Despite differ ences in sectoral focu s, both show a similar skew: most particip ants recogn ized fewer than 20 of the 47 failure mo des, with only a very small g roup ach ieving awareness scores above 30 . Notably, b oth 0 5 10 15 20 25 0-10 11-20 2 1-30 31-40 41-47 Conference A Conference B 22 surveys confirmed the same misalign ment: failur es are m ost o ften noticed at the mod el and application layers, while the mo st conseq uential risks — such as cascading effects f rom data pipelines, exec ution environments, or ag entic coordination — are rarely ackno wledged. This evidence underscores two les sons. First, organizatio ns may be sy stematically underestimatin g reliability risks b y co ncentrating o n surf ace -level failu res. Secon d, ev en a simp le diagnostic instrumen t such as the 47 -item checklist can serve a dual role: no t only measuring awareness but also exp anding it. Participants often rep orted that encountering failur e modes outside their p rior experience — par ticularly in domain s such as power and en ergy managemen t or multi - agent coordin ation — reshap ed their perspective o n where reliability strategies sho uld focus. Taken tog ether, these findings hig hlight both the urgency of b roadening reliability awareness and the poten tial of structured mappin g to support this expansion in p ractice. 4.4 Linking Awareness to Dependability-Centred Asset Management (DCAM) The awareness mapping framework is more than a diagnostic tool for m easuring how organizations perceive AI r eliability r isks. It also align s directly with the broader paradigm of Dependability - Centred Asset Ma nagement (DCAM), which ex tends traditional asse t managem ent by embed ding reliability, av ailability, m aintainability, and safety (RAMS) across the lifecycle of both physical and digital assets (L in, 2025) . In this view, AI systems — whet her con ventional, generative, or agentic — are treated as critical organizational assets whose dependability must be m anaged alongside physical infr astructure. Awareness lev els serve as an entry point into DCAM practice. Organizations at Lev el I or II lack the readiness to integrat e AI r eliability into asset managem ent strategies, as risks r emain unrecognized and d ecision- making is rea ctive. By Level III, awa rene ss expands across multiple layers, allowing AI reliability consideration s to begin influencing operational strategies such as monitoring, anom aly detection, and lifecycle planning. At Lev el IV, systemic awareness begins to merge with D CAM structu res. AI reliab ility is embedded into gover nance and management processes, including cross - layer monitoring, predictive maintenance supported by AI diagnostics, an d structured validation proto co ls. At the highest level, Lev el V, awareness is compreh ensive and cou pled with institutionalized dependability practices. Here, DCAM an d awareness mapping converge: reliability strategies become pro active, emb edded into desig n, deployment, and contin uous improv ement, span ning both physical assets and AI-d riven systems. Crucially, awareness mapping provides a diagnostic b ridge b etween technical AI r eliability and organizatio nal maturity in asset managemen t. It reveals blind spots that man agers may overloo k, guiding targeted interventions. For example, a utility operator w ho recognizes model -level error s, but neg lects execution -layer risks may f ail to secure grid stability und er dynamic demand; awareness mapping d irects attention to these gap s and extends DCAM practices into dig ital infrastructur es. In this way, awa reness mapping en riches DCAM in two comp lementary ways: 1. By embedd ing AI reliability explicitly within the asset management lif ecycle. 2. By offering a stru ctured roadmap for organization s to progress from fragmented awareness to compreh ensive dependability. Together, th e two frameworks exten d the scope of asset man agement to meet the challenges of increasingly au tonomous, adaptive, an d agentic AI systems, en suring that reliability is no t an afterthought but a core principle of lifecy cle strategy. 5. Conclusion and Outlook This chapter has advanced a dual con tribution to the study of AI reliability. First, it introduced the 11 -layer failure stack — a structured framework for tracing vuln erabilities acro ss co nventional, generative, and agentic AI systems. By mo ving from physi ca l computation layers to reasoning and agentic coordination , the stack highlights that reliability challenges are not confined to m odels o r 23 applications but extend throughout the entire lifecycle of AI system s. Ca se vig nettes illu strated how failures at seemingly minor layers can cascade into systemic disruptions, underscoring the need for a cross-layer perspective. Second, the chapter developed the concept of awareness mapping — a meth od for evaluating how well organizations recognize and pre pare for reliability r isks. By translating failure modes into measurable awareness points, the framework provides a diagnostic lens into o rganizational maturity. The five-level maturity scale h elps practitioners id entify blind s pots, benchmark p rogress, and guide the integration o f reliability consid erations into strategy and governan ce. Th e alignm ent of awareness mapping with Dependability -Centred Ass et Managemen t (DCAM) further shows how reliability can b e embedded within broader life cycle practices for both physical an d digital assets. Together, the failure stack and awareness map ping demonstrate that reliability is not a fixed property but a moving targ et, shaped by technological evolutio n and organizational readiness. Conven tional AI, generativ e AI, and agentic AI ea ch bring distinct v ulnerabilities, yet the p rinciple is con sistent: safeguarding reliability r equires both technical safeguards a n d organizational awareness, reinforced across layers and over time. Looking forward, several avenues emer ge. Future wor k could refine awareness mapping beyond breadth of recognition to capture depth of under standing an d mitigation ca pacity. Compar ative studies across industries would provide empirical evid ence on sector-spec ific b lind spots and resilience strategies. In parallel, the failure stack could evolve as new p aradigms — such as embodied intelligence or h ybrid human – AI collectives — intr oduce a d ditional layers of complexity. Finally, integ ration with stan dards, regulation, and governance f rameworks will be essential to ensure that awareness an d dep endability principles scale wit h the societa l deployment o f g enerative and agentic AI. In conclusion, layered failu re analysis and awareness mapping together offer a foundation for moving from rea ctive responses to proactive reliability strategies. By situating AI reliability within a systemic, lifecy cle-orien ted framewo rk, they pr ovide both a d iagnostic lens an d a roadmap — supporting t he trustworthy , su stain able, and s afe deployment of AI in increasingly critical domains. References Acharya, D.B., Kuppan, K. an d Divya, B . (2025) ‘Agentic AI : Autonomous Intelligence for Complex Goals - A Comprehensive Survey’, IEEE Access , 13, pp. 18912 – 18936 . Available at: https://doi.org /10.1109/ACCESS.2025.353285 3. Aghaei, M. et al. (2025) ‘Au tonomous Intelligent Mon itoring of Photovoltaic Systems: An I n -Depth Multidisciplinary Review’, Prog ress in Photovolta ics: Research an d Applications , 33(3), pp . 381 – 409. Available at: http s://doi.org/10.1002/pip.385 9. Ale, L. et al. (2025 ) ‘Enhancing generative AI reliab ility via agen tic AI in 6G - enabled edge computing’, Nature Reviews Electrical En gineering , 2(7), pp. 441 – 4 43. Availab le at: https://doi.org /10.1038/s44287- 025-00169- 3. Alsadie, D. and Alsulami, M . ( 2024) ‘Enhancing work flow efficien cy with a modified Firef ly Algorithm for hybrid cloud edge environments’, Scientific Reports , 14(1), pp. 1 – 19. Available at: https://doi.org /10.1038/s41598 - 024 -75859- 3. Bayram, F. an d Ahmed, B.S. ( 2025) ‘Towards T rustworthy Machine Learning in Production: An Overview of the Robustness in MLOps Ap proach’, ACM Compu ting Surveys , 57 (5), pp. 1 – 35 . Available at: http s://doi.org/10.1145/3 708497. Ebad, S.A. ( 2018) ‘The influencin g causes of softwar e unavailability: A case study fr om industry’, Software: Practice and Experience , 48(5), pp. 1056 – 1076. Available at: https://doi.org /10.1002/spe.2569. Elder, H. et al. (2024) ‘Knowing When to Pass: The Effect of AI Reliab ility in Risky Decision Contexts’, Human Factors , 66(2), pp. 348 – 362. Available at: https://doi.org /10.1177/00187208221100691. 24 Huang, H. et al. (2025) ‘Power Managem ent Optimization for Data Cen ters: A Power Supply Perspective’, IEEE Transaction s on Sustaina ble Computing , 10(4), pp. 784 – 803. Av ailable at: https://doi.org /10.1109/TSUSC.2025. 3542779. IEC (2 010) Functional Safety of Electrical/Electronic/Progra mmable Electronic Safety -Related Systems , IEC 6150 8-1: 2010 . IEEE (2017) ‘IEEE Recomm ended Pra ctice on Software Reliability’, in IEEE Std 1 633-2016 , pp. 1 – 261. IEEE (2024) ‘IEEE Standard for Fail - Safe Design of Autonomou s and Semi- Autonomous Systems’, in IEEE Std 7009 -2024 , pp. 1 – 45. ISO (2018) ‘Ro ad vehicles — Functional safety’ , in ISO Std 262 62-1:2018 , pp. 1 – 23. Joshi, S. (2025) ‘Model Risk Managem ent in the Era o f Generative AI: Challen ges, Op portunities, and Future Directions’, Internationa l Journal o f Scientific and Research Publications , 15(5), pp. 299 – 30 9. Available at: https://doi.org /10.29322/ijsrp.15.05.2025 .p16133. Koene, A., Dowthwaite, L. and Seth, S. (2018) ‘IE EE P7003TM Standard for Algorithmic Bia s Considerations’, in 2018 IEEE/ACM In ternational Workshop on Software Fairn ess . IEEE/ACM, pp. 38 – 41. Available at: h ttps://doi.org/10.1145/31 94770.3194773. Kothamali, P.R. (2025) ‘ AI -Powered Quality Assuran ce: Revolutionizing Automation Fra meworks for Cloud Application s’, Journa l of Ad vanced Computing Systems , 5(3), pp . 1 – 25. Available at: https://doi.org /10.69987/JACS.2025.5030 1. Lin, J. (2025) ‘Dep endability -Centered Asset Management(DCAM): Toward Trustworthy and Sustainable System s in th e CPS Era’ , IEEE Relia bility Mag azine , 2(3), pp . 1 – 7 . Available at: https://doi.org /10.1109/MRL.2025 .3583632. Lin, J. and Silfven ius, C. (2025) ‘Some Critical Th inking o n Electric Vehicle Battery Reliability: From E nhancement to Optimization ’, B atteries , 11(2), p p. 1 – 33. Available at: https://doi.org /10.3390/batteries11020048 . Nist (2023) ‘NIST AI Risk Managemen t Framework (AI RMF 1.0) ’, in NIST AI 100-1 , pp. 1 – 48. Russell, S. (2 022) ‘Artificial intelligence and the problem of co ntrol’, in Perspectives on Digita l Humanism , p p. 19 – 24. Av ailable at: https://doi.org /10.1007/978 -3-030-86144- 5_9. Shankar, V. and Mur alidhar, N. (2025) ‘Securing Hardware with AI : Intrusion Detection, Threat Mitigation, and Tr ust Assurance’, In ternational Jou rnal of Computer Sciences and Engineering , 13(4), pp. 92 – 98. Available at: https://doi.o rg/10.26438/ijcse/v1 3i4.9298. Sharma, S., Kumar, N. and Ka swan, K.S. (2021) ‘Big data reliability: A critical review’, Journal of Intelligent and Fuzzy Systems , 40 (3), pp. 5501 – 5 516. Availab le at: https://doi.org /10.3233/JIFS-202 503. Sicari, S. et al. (20 24) ‘Open -Eth ical AI: Adv ancements in Open -Sou rce Human- Centric Neural Languag e Models’, ACM Compu ting Surveys , 57(4), pp. 1 – 47. Available at: https://doi.org /10.1145/3703454. Weber, N. ( 2022) SOL: Reducing the Maintenan ce Overhead for In tegrating Hardware Support into AI Fra meworks , arXiv p reprint a rXiv:2205 . Association fo r Com puting Ma chinery. Available at: http ://arxiv.org/abs/2205.10357 . Zambare, P., Thanikella, V.N. and Liu, Y. (2025) ‘Securing Agentic AI: Threat Modeling and Risk Analysis fo r Networ k Monitoring Agentic AI System’, arXiv preprint arXiv:2508 , p. 10043. Available at: http ://arxiv.org/abs/2508.10043 . Zhang, D. et al. ( 2017) ‘Reliability evaluation and component importance measure for manufacturing systems based on failu re losses’, Journa l of In telligent Manufacturing , 28(8), pp. 1859 – 1 869. Available at: https://do i.org/10.1007/s10 845-0 15-1073- 1. Zhang, T. et al. (2025) ‘From Data to Deploymen t: A Comprehen sive Analysis of Risks in Large Languag e Model Research and Development’ , IET Information Security , 2025(1), p. 7358963. Available at: http s://doi.org/10.1049/ise2 /7358963. Zhang, X. et al. (2025) ‘Reliability engineering, risk m anagement, and trustworthiness assurance for AI systems’, Journal of Reliability Scien ce and Engineering , 1(2), p. 022001. Availab le at: https://doi.org /10.1088/3050 -2454/adcef2.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment