X-CoT: Explainable Text-to-Video Retrieval via LLM-based Chain-of-Thought Reasoning

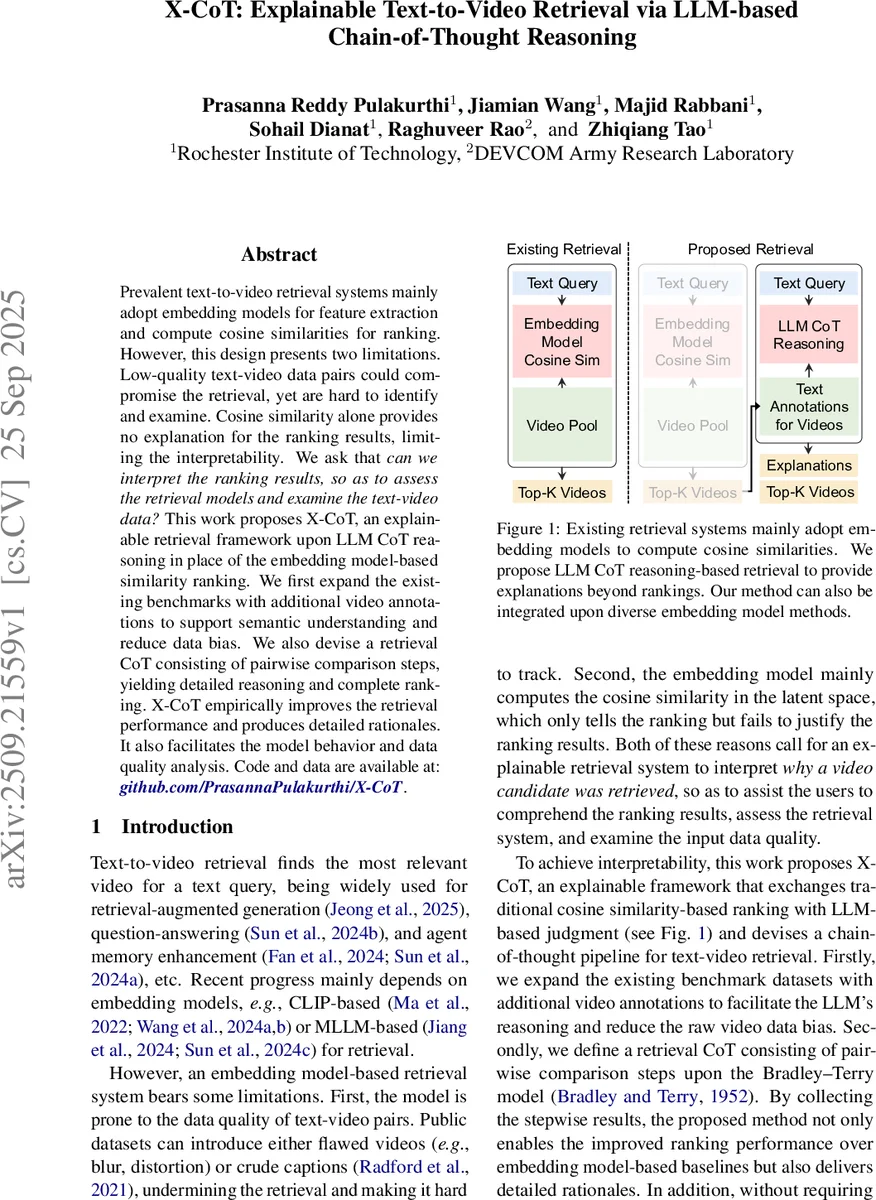

Prevalent text-to-video retrieval systems mainly adopt embedding models for feature extraction and compute cosine similarities for ranking. However, this design presents two limitations. Low-quality text-video data pairs could compromise the retrieval, yet are hard to identify and examine. Cosine similarity alone provides no explanation for the ranking results, limiting the interpretability. We ask that can we interpret the ranking results, so as to assess the retrieval models and examine the text-video data? This work proposes X-CoT, an explainable retrieval framework upon LLM CoT reasoning in place of the embedding model-based similarity ranking. We first expand the existing benchmarks with additional video annotations to support semantic understanding and reduce data bias. We also devise a retrieval CoT consisting of pairwise comparison steps, yielding detailed reasoning and complete ranking. X-CoT empirically improves the retrieval performance and produces detailed rationales. It also facilitates the model behavior and data quality analysis. Code and data are available at: https://github.com/PrasannaPulakurthi/X-CoT.

💡 Research Summary

The paper addresses two fundamental shortcomings of current text‑to‑video retrieval systems that rely on joint embedding models and cosine similarity ranking. First, the quality of the paired text‑video data in public benchmarks is often low; blurry frames or inaccurate captions corrupt the learned embeddings and degrade retrieval performance, yet there is no systematic way to detect or correct these noisy pairs. Second, cosine similarity scores provide no human‑readable justification for why a particular video is ranked higher, limiting interpretability and trust.

To overcome these issues, the authors propose X‑CoT (Explainable Chain‑of‑Thought), a framework that replaces the final similarity‑based ranking with reasoning performed by a large language model (LLM). The approach consists of three main components.

-

Structured video annotation – For each video in the benchmark, a multimodal LLM (Qwen2.5‑VL‑7B‑Captioner‑Relaxed) generates frame‑level captions. These captions are aggregated and re‑phrased into a structured representation containing objects, actions, scene tags, and a high‑level summary. A series of post‑processing steps (duplicate removal, noun/verb filtering, stop‑word elimination, normalization) ensures concise, semantically rich annotations that compensate for visual noise and provide the LLM with explicit grounding cues.

-

Retrieval Chain‑of‑Thought – An off‑the‑shelf embedding model (e.g., CLIP‑ViT‑B/32, Qwen2‑VL, or X‑Pool) first produces a coarse top‑K candidate pool (K < 25). For every unordered pair (vi, vj) within this pool, the LLM receives a prompt containing the text query and the two videos’ structured annotations and is asked to decide whether vi is more relevant than vj. The LLM returns a binary preference together with a natural‑language justification.

-

Bradley‑Terry aggregation – The collection of pairwise preferences is fitted to a Bradley‑Terry model via maximum‑likelihood estimation, yielding ability scores θi for each video. The final ranking is obtained by sorting videos in descending order of θi, which smooths out noisy or cyclic judgments that may arise from individual pairwise decisions.

Because the LLM reasoning step is independent of the embedding backbone, X‑CoT can be plugged into any existing retrieval pipeline. The authors evaluate the method on four widely used datasets (MSR‑VTT, MSVD, DiDeMo, LSMDC) using three embedding baselines. Across all metrics (Recall@1, @5, @10, Median Rank, Mean Rank) X‑CoT consistently improves performance, with gains ranging from 2 % to 6 % absolute recall. Notably, CLIP‑ViT‑B/32 on MSVD sees a +5.6 % increase in R@1, and X‑Pool on the same dataset improves by +1.9 % R@1.

Ablation studies demonstrate that (a) removing the chain‑of‑thought step and asking the LLM to rank K items directly leads to a substantial drop (≈ ‑2.9 % R@1), confirming that pairwise comparisons are easier for the model; (b) omitting the Bradley‑Terry refinement still yields improvements over the baseline, but the refinement further refines the ranking.

Beyond raw performance, X‑CoT provides interpretable explanations for each decision. The authors show examples where the LLM’s reasoning highlights semantic elements (e.g., the presence of a “man” or a “stop sign”) that the embedding model missed, enabling users to diagnose model blind spots. Moreover, by jointly inspecting the original caption, the retrieved video, and the LLM’s rationale, one can identify mislabeled or ambiguous data, offering a practical tool for dataset quality assessment and cleaning.

The paper acknowledges limitations: the quality of reasoning depends heavily on the LLM’s capacity and domain alignment; extremely long videos or highly specialized domains (medical, scientific) may exceed current LLM capabilities. Additionally, the pairwise comparison cost grows quadratically with K, so practical deployment requires careful selection of K or more efficient sampling strategies.

In conclusion, X‑CoT introduces a novel, plug‑and‑play paradigm that augments traditional embedding‑based text‑to‑video retrieval with LLM‑driven chain‑of‑thought reasoning. It simultaneously boosts retrieval accuracy, supplies human‑readable justifications, and offers a mechanism for data‑quality analysis, paving the way toward more trustworthy and explainable multimodal retrieval systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment