Blind Network Revenue Management and Bandits with Knapsacks under Limited Switches

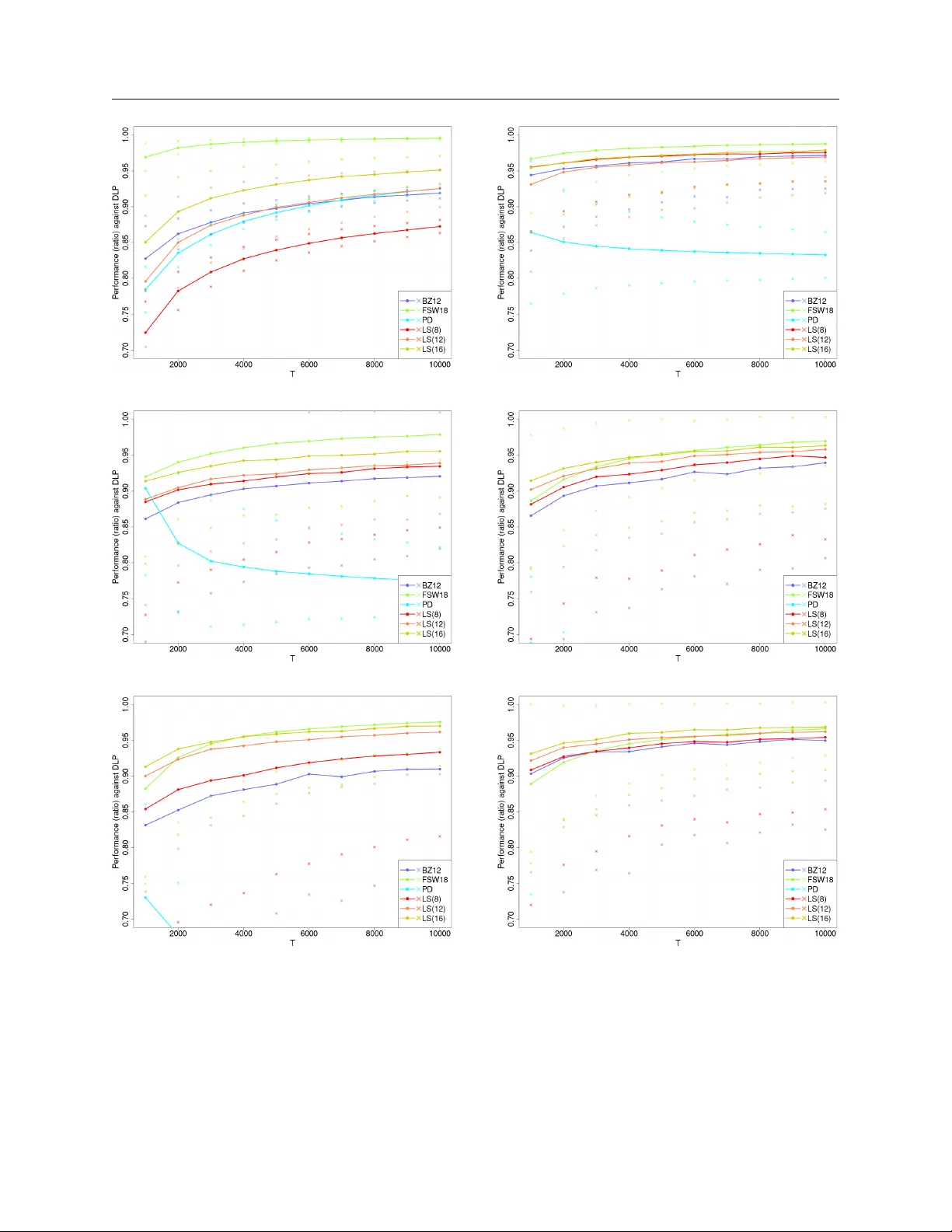

This paper studies the impact of limited switches on resource-constrained dynamic pricing with demand learning. We focus on the classical price-based blind network revenue management problem and extend our results to the bandits with knapsacks proble…

Authors: David Simchi-Levi, Yunzong Xu, Jinglong Zhao