DWIM: Towards Tool-aware Visual Reasoning via Discrepancy-aware Workflow Generation & Instruct-Masking Tuning

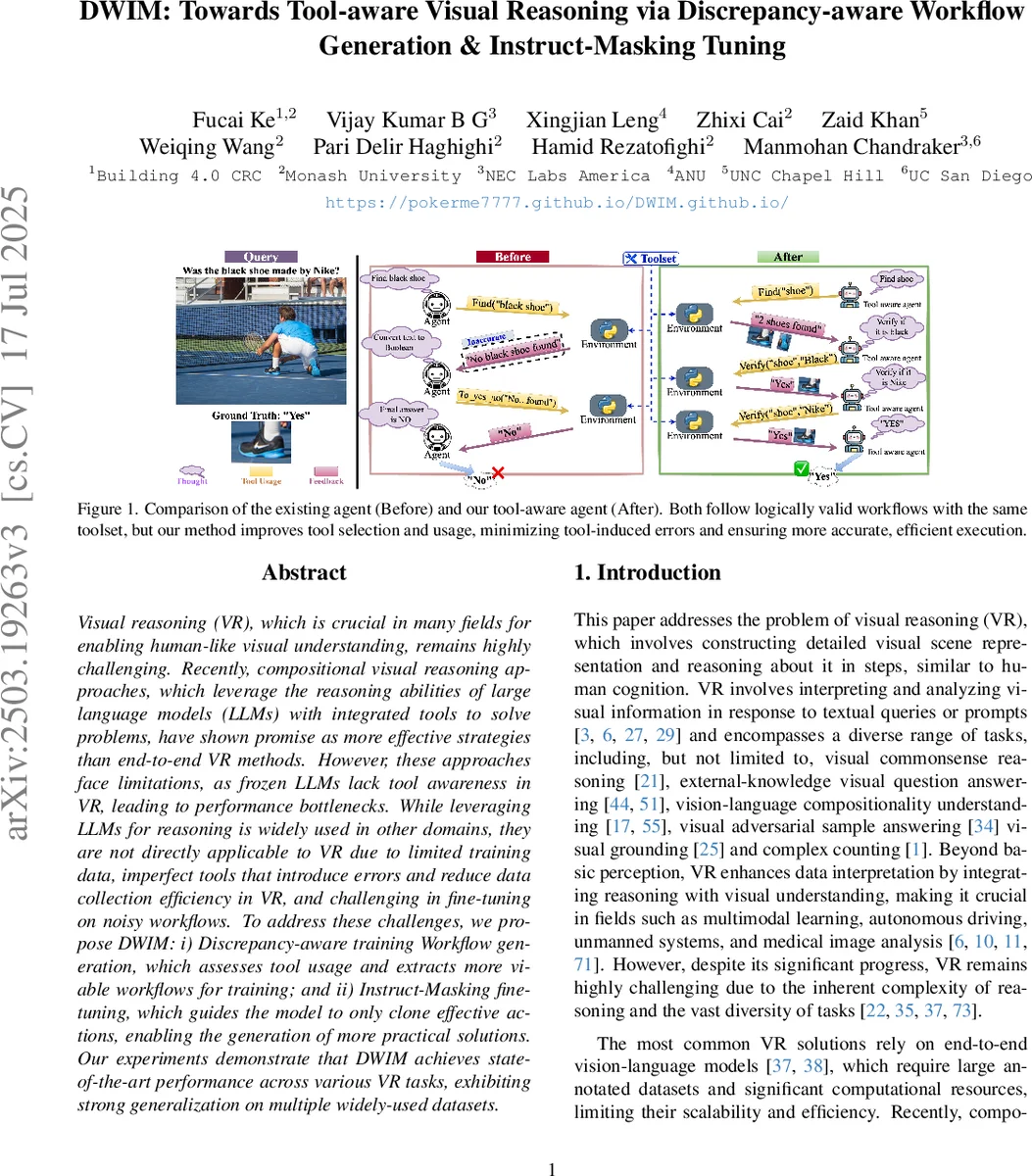

Visual reasoning (VR), which is crucial in many fields for enabling human-like visual understanding, remains highly challenging. Recently, compositional visual reasoning approaches, which leverage the reasoning abilities of large language models (LLMs) with integrated tools to solve problems, have shown promise as more effective strategies than end-to-end VR methods. However, these approaches face limitations, as frozen LLMs lack tool awareness in VR, leading to performance bottlenecks. While leveraging LLMs for reasoning is widely used in other domains, they are not directly applicable to VR due to limited training data, imperfect tools that introduce errors and reduce data collection efficiency in VR, and challenging in fine-tuning on noisy workflows. To address these challenges, we propose DWIM: i) Discrepancy-aware training Workflow generation, which assesses tool usage and extracts more viable workflows for training; and ii) Instruct-Masking fine-tuning, which guides the model to only clone effective actions, enabling the generation of more practical solutions. Our experiments demonstrate that DWIM achieves state-of-the-art performance across various VR tasks, exhibiting strong generalization on multiple widely-used datasets.

💡 Research Summary

The paper introduces DWIM, a novel framework for enhancing tool‑aware visual reasoning (VR) by addressing two major shortcomings of existing compositional approaches: the lack of tool awareness in frozen large language models (LLMs) and the reliance on single‑turn planning that ignores execution errors. DWIM consists of (i) Discrepancy‑aware Workflow Generation and (ii) Instruct‑Masking Fine‑tuning.

In Discrepancy‑aware Workflow Generation, an agentic LLM interacts with the environment over multiple turns. At each step the model receives feedback from a visual or external tool (e.g., object detector, OCR, search engine) and compares it with the known correct answer. When a mismatch (discrepancy) is detected, the model inserts a “Rethink” step, revises its reasoning, and may select alternative tools or parameters. This loop (think‑code‑done) continues until the answer matches the ground truth, thereby automatically collecting a set of high‑success‑rate workflows that include both successful actions and explicit failure analyses. Unlike prior “standard” workflow generation that filters only by final correctness, DWIM evaluates the effectiveness of each intermediate tool usage, dramatically improving data utilization.

Instruct‑Masking Fine‑tuning leverages the noisy workflow collection. The workflow is treated as a sequence of actions; effective tool invocations are masked, forcing the model to predict them from surrounding context and from the explicit failure explanations left unmasked. Consequently, the model learns why a tool failed and how to replace it, rather than blindly copying entire pipelines. This masking also serves as data augmentation, expanding the effective training set without additional human annotation.

Experiments on several benchmark VR datasets—including GQA, VCR, OK‑VQA, and complex counting tasks—show that DWIM outperforms state‑of‑the‑art methods such as HYDRA, VisProg, and VIsRep by 3–5 percentage points on average. The gains are especially pronounced in scenarios where tool errors are frequent, confirming that the discrepancy‑aware component successfully mitigates tool‑induced noise. Ablation studies reveal that removing either the discrepancy‑aware generation or the instruct‑masking step leads to substantial performance drops, underscoring their complementary roles.

Key contributions are: (1) a multi‑turn, discrepancy‑aware workflow generation process that automatically discovers and refines effective tool usage; (2) an instruct‑masking fine‑tuning strategy that teaches the LLM to clone only successful actions while learning from failure cases; and (3) a demonstration that tool‑aware LLMs can be trained with limited VR data, reducing reliance on extensive human prompt engineering.

The authors suggest future directions such as scaling the discrepancy detection to larger tool libraries, integrating reinforcement‑learning based reward signals for more nuanced policy learning, and combining human‑verified workflows with automatically generated ones to further improve safety and robustness. DWIM thus represents a significant step toward turning frozen LLMs into competent, tool‑aware agents for visual reasoning tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment