Loss functions incorporating auditory spatial perception in deep learning -- a review

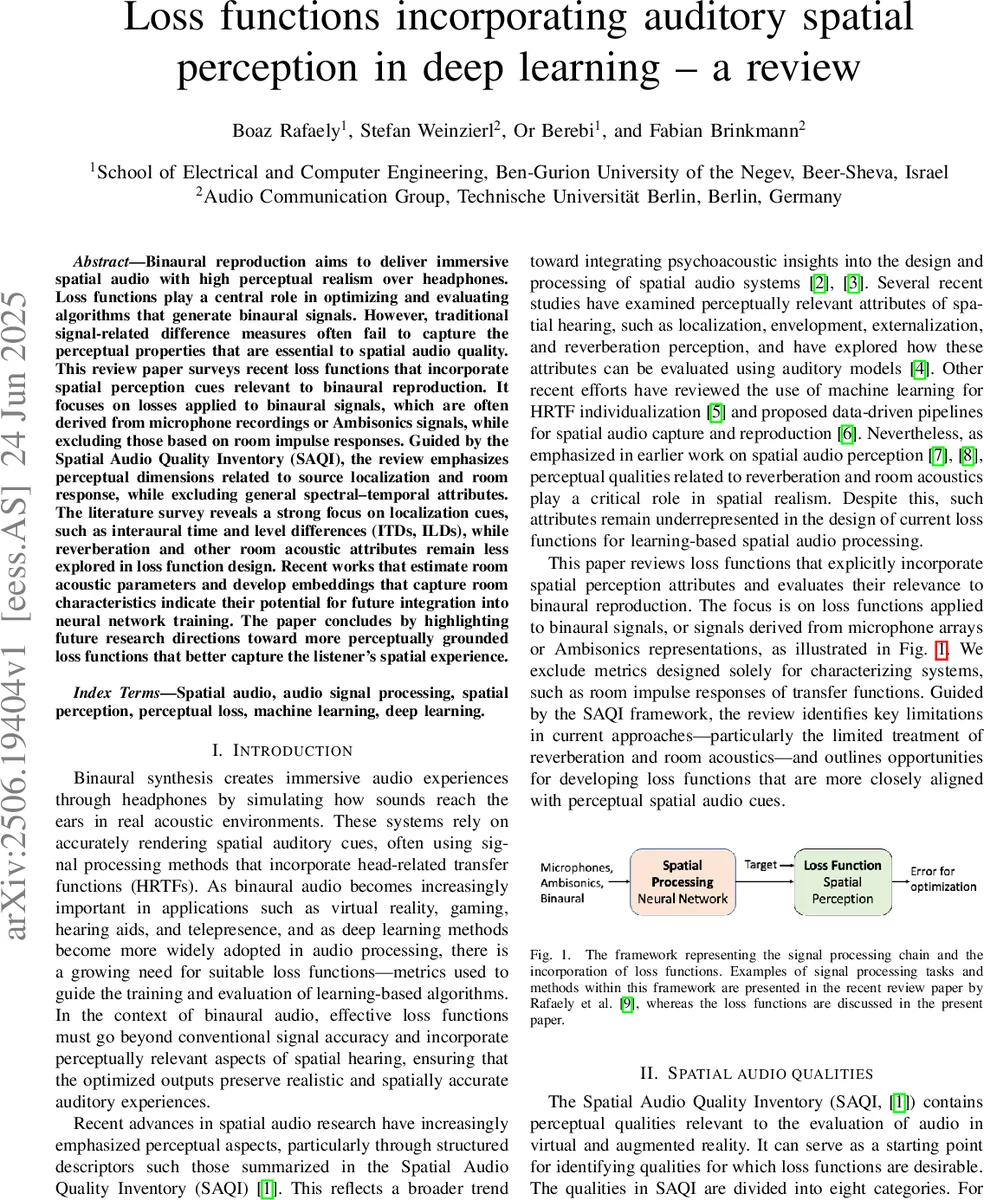

Binaural reproduction aims to deliver immersive spatial audio with high perceptual realism over headphones. Loss functions play a central role in optimizing and evaluating algorithms that generate binaural signals. However, traditional signal-related difference measures often fail to capture the perceptual properties that are essential to spatial audio quality. This review paper surveys recent loss functions that incorporate spatial perception cues relevant to binaural reproduction. It focuses on losses applied to binaural signals, which are often derived from microphone recordings or Ambisonics signals, while excluding those based on room impulse responses. Guided by the Spatial Audio Quality Inventory (SAQI), the review emphasizes perceptual dimensions related to source localization and room response, while excluding general spectral-temporal attributes. The literature survey reveals a strong focus on localization cues, such as interaural time and level differences (ITDs, ILDs), while reverberation and other room acoustic attributes remain less explored in loss function design. Recent works that estimate room acoustic parameters and develop embeddings that capture room characteristics indicate their potential for future integration into neural network training. The paper concludes by highlighting future research directions toward more perceptually grounded loss functions that better capture the listener’s spatial experience.

💡 Research Summary

The paper provides a comprehensive review of loss functions that explicitly incorporate auditory spatial perception for deep‑learning‑based binaural audio synthesis. It begins by highlighting the inadequacy of conventional waveform‑level error metrics (e.g., L2, L1) in capturing the perceptual realism required for immersive headphone playback. To ground the discussion in a perceptual framework, the authors adopt the Spatial Audio Quality Inventory (SAQI), focusing on two of its eight categories: “geometry” (source‑related spatial qualities) and “room” (environment‑related spatial qualities). From these categories, eight perceptual attributes are selected, ranging from horizontal direction (ITD, ILD) and vertical cues (spectral cues) to more complex measures such as apparent source width (ASW), interaural cross‑correlation (IACC), distance perception (DRR), and reverberation metrics (T60, EDT, DRR, LEV, LF).

The core contribution is a taxonomy of loss‑function types relevant to neural‑network training:

- SL‑NN (Spatial Loss for Neural Networks) – loss terms that have already been used as training objectives and directly encode spatial perception (e.g., ITD/ILD differences, embedding‑based distances).

- EST‑NN (Estimators with Neural Networks) – networks trained to estimate spatial audio parameters (distance, T60, etc.) that are not themselves loss functions but could be repurposed as perceptual regularizers.

- EMB‑NN (Embeddings for Neural Networks) – learned representations that implicitly capture spatial cues; examples include the Spatial Audio Quality Assessment Metric (SA QAM) and its attention‑enhanced variant HAPG‑SA QAM.

- SL‑CAN (Spatial Loss Candidate) – perceptually motivated metrics that have not yet been employed as training losses but show promise (e.g., BSD A, PBC‑2, Perceptually Enhanced Spectral Distance).

The review surveys a wide range of existing approaches. Overall‑quality predictors such as the Generative Machine Listener (GML) and SA QAM achieve high correlation (≥0.79) with human MUSHRA scores, suggesting they could be turned into differentiable loss functions (SL‑CAN). Coloration metrics are examined in depth: the simple Log Spectral Distortion (LSD) correlates poorly with perceived coloration, whereas perceptually smoothed measures like BSD A (ρ≈0.84) and PBC‑2 (ρ≈0.92) perform substantially better and can be implemented as loss terms similar to iMagLS.

Localization‑focused losses dominate the literature. Several works embed ITD/ILD errors directly into the loss, while others employ triplet‑embedding objectives that align source‑direction embeddings between reference and generated binaural signals (e.g., BSM‑iMagLS, MMagLS). EST‑NN models that predict distance or reverberation time are also discussed, highlighting their potential as auxiliary perceptual regularizers.

A critical observation is the strong bias toward source‑direction cues and the relative neglect of “room” attributes such as reverberation time, early decay, and listener envelopment. Although the SAQI inventory lists these qualities, few loss functions currently address them, limiting the perceptual fidelity of reproduced environments. The authors argue that future loss designs should incorporate reverberation‑related parameters (DRR, T60, EDT) either directly as differentiable terms or via learned embeddings that capture room acoustics.

The paper concludes with two forward‑looking research directions. First, develop multi‑task loss frameworks that jointly optimize source localization, room acoustics, coloration, and externalization, thereby reflecting the multidimensional nature of spatial audio quality. Second, leverage attention mechanisms and perceptual weighting schemes to align loss gradients with human judgment, as demonstrated by HAPG‑SA QAM’s improved correlation (0.83 overall, 0.77 spatial). By expanding beyond the current SL‑NN and SL‑CAN categories toward more integrated perceptual loss functions, the field can move closer to training neural networks that produce binaural audio indistinguishable from real acoustic scenes.

Comments & Academic Discussion

Loading comments...

Leave a Comment