Resolving the induction problem: Can we state with complete confidence via induction that the sun rises forever?

Induction is a form of reasoning that starts with a particular example and generalizes to a rule, namely, a hypothesis. However, establishing the truth of a hypothesis is problematic due to the potential occurrence of conflicting events, also known a…

Authors: Youngjo Lee

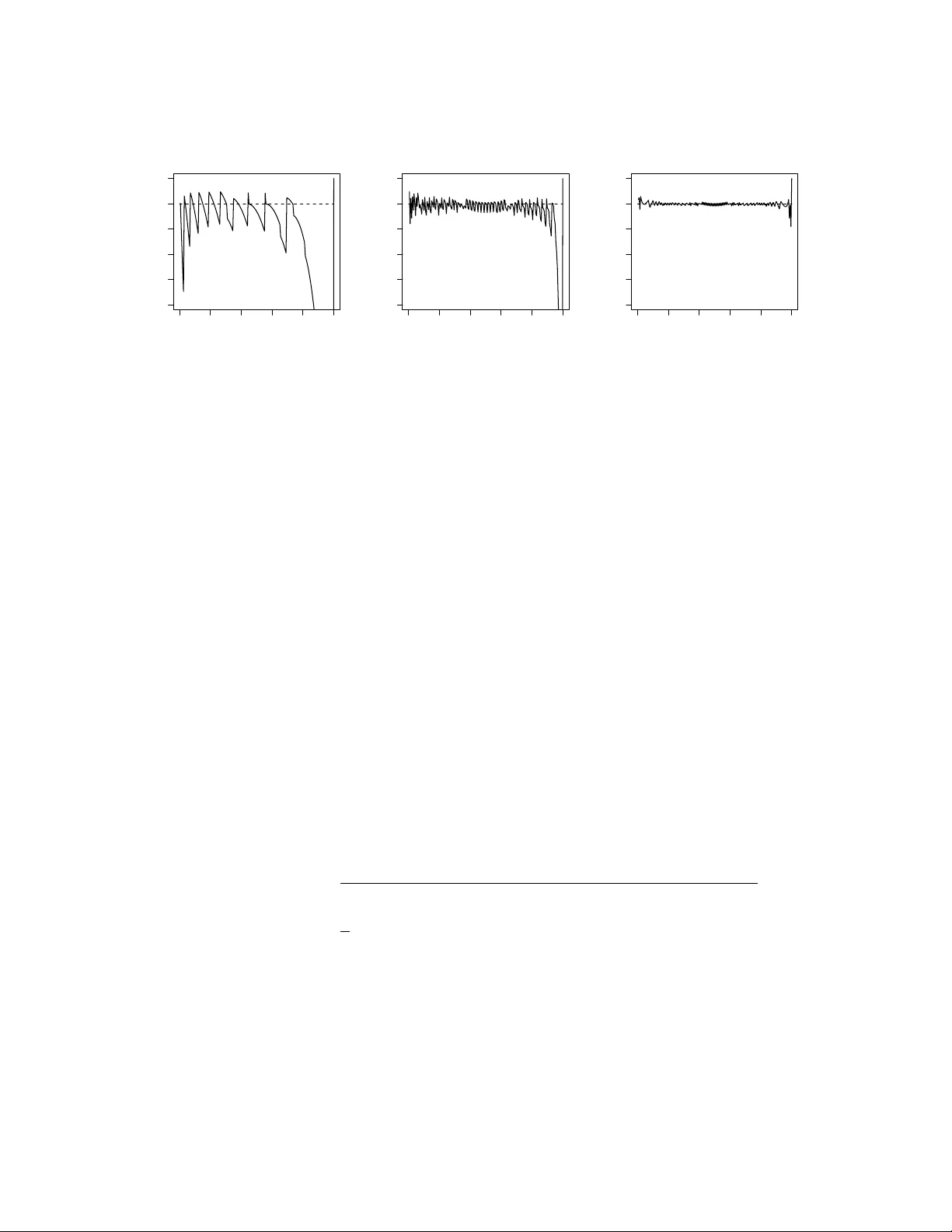

Resolving the Induction Problem: Can We State with Complete Confidence via Induction that the Sun Rises Forever?

Youngjo Lee
Department of Statistics, Seoul National University
March 3, 2025

Abstract

Induction is a form of reasoning that starts with a particular example and generalizes to a rule, namely, a hypothesis. However, establishing the truth of a hypothesis is problematic due to the potential occurrence of conflicting events, also known as the induction problem. The sunrise problem, first introduced by Laplace (1814), is a quintessential example of probability-based induction. In his solution, a zero probability is always assigned to the hypothesis that the sun rises forever, regardless of the number of observations made. This is a symptom of a fundamental deficiency of probability-based induction: a hypothesis can never be accepted via the Bayes-Laplace approach. Alternative priors have been proposed to address this issue, but they have failed to fully overcome the deficiency. We investigate why this occurs and demonstrate that the confidence does not exhibit such a deficiency, as it is not a probability and therefore does not adhere to Bayes' rule. Nor is the confidence a likelihood; it allows not only a reconciliation between epistemic and aleatory interpretations of probability but also a resolution in agreement with the evidence, by enabling us to accept a hypothesis with complete confidence as a rational decision.

1 Introduction

Induction from experience is crucial for drawing valid inferences. Induction is a form of reasoning that moves from particular examples to a rule, where one infers a hypothesis (proposition, scientific theory) based on data. Originally, the goal of science was to corroborate hypotheses such as ‘all ravens are black’ or to infer them from observational data.
However, the difficulty of deriving such inductive logic has been recognized as a problem of induction since classical times. The Pyrrhonian skeptic Sextus Empiricus (1933) questioned the validity of inductive reasoning, ‘positing that a universal rule could not be established from an incomplete set of particular instances. Specifically, to establish a universal rule from particular instances by means of induction, either all or some of the particulars can be reviewed. If only some of the instances are reviewed, the induction may not be definitive, as some of the instances omitted in the induction may contravene the universal fact; however, reviewing all the instances may be nearly impossible, as the instances are infinite and indefinite.’ Hume (1748) argued that inductive reasoning cannot be justified rationally because it presupposes that the future will resemble the past. In response to Hume's skepticism, Bayes (1763) proposed an inductive reasoning procedure to address the question of whether one can rationally accept a hypothesis. However, he might not have embraced the broad scope of application now known as Bayesian epistemology, which was pioneered and popularized by Laplace (1814) as inverse probability.

Probability has been used to represent uncertainties in inductive reasoning. Kolmogorov (1933) presented a formal definition of mathematical probability as the key measure of uncertainty. There have been two main strands in interpreting probability: epistemic and aleatory. In the epistemic interpretation, the probability represents the degree of belief in a hypothesis. Pawitan and Lee (2024) discussed four different epistemic interpretations applied to hypotheses: the subjective Bayesian, logical, objective Bayesian and fiducial interpretations. Savage (1954) provided an axiomatic basis for subjective probability as a degree of personal belief.
Keynes (1921) proposed a logical interpretation as a degree of rational belief. Though his theory was too vague to have a direct impact on statistics and mathematics, it may have influenced the logical probability of Carnap (1950), the objective Bayesian probability (Jeffreys, 1961) and the fiducial probability (FP; Fisher, 1930). In the aleatory interpretation, the probability is applied to random events, representing either a long-run frequency (Von Mises, 1928) or a propensity of the generating mechanism (Pawitan and Lee, 2024). For them, a non-repeatable single event such as the true status of a hypothesis does not have a probability. Fisher (1922) introduced likelihood as another measure of uncertainty for fixed unknowns. Recently, extended likelihood has been extensively developed for prediction of random unknowns (Lee and Nelder, 1996; Lee et al., 2017; Pawitan and Lee, 2024; Lee and Lee, 2024).

Royall (1997) paraphrased three types of conclusions about hypotheses $G$ and $H$ via inductive reasoning:

C1: I believe $G$ to be true.
C2: I should act as if $G$ were true.
C3: The observation is evidence supporting $G$ over $H$.

The Bayesian approach would be useful for C1, a frequentist approach such as the hypothesis testing of Neyman and Pearson (1933) for C2, and likelihood theories for C3 (Royall, 1997). Bayesian epistemic probabilities have been applied to all types of hypotheses in various fields (Paulos, 2011). We review how Bayesian epistemic probabilities can be applied to draw C1-C3. Fisher (1930) introduced the FP as an alternative to Bayesian epistemic probability without assuming a prior. Throughout his life, he believed that it was a true probability of the ‘early writers’ (Fisher, 1958) such as Bayes (Barnard, 1987); Fisher used the term ‘probability’ in the same sense as used by Bayes (Barnard, 1987).
However, it encountered substantial criticism, with ongoing controversy about its adherence to probability (Edwards, 1977). According to Savage (1961), Fisher's FP is ‘a bold attempt to make the Bayesian omelet without breaking the Bayesian egg’. The FP has recently become of interest as a form of confidence. The confidence is an extended likelihood (Pawitan and Lee, 2021), which is a distinct concept from both probability and likelihood. Extended likelihood has been introduced to deal with complex models with additional random unknowns. The confidence concept gives meaning outside the confidence-interval context. The extended likelihood can access the frequentist probability, an objective certification not directly available to the classical likelihood (Pawitan and Lee, 2024). For example, the confidence interpretation can be allowed for predictive intervals of random unknowns (Lee and Lee, 2024). In this paper, we argue that FP aligns with the extended likelihood, and that Bayes might be considered to have utilized an extended likelihood. Efron (1998) noted, “Maybe Fisher's biggest blunder will become a big hit in the 21st century!” In this paper, we demonstrate alternative ways to draw C1-C3 via likelihood-based inductive reasoning. The two distinct epistemic and aleatory interpretations of probability can be reconciled through the confidence. We emphasize synergy rather than conflict between the epistemic and aleatory interpretations.

2 Epistemic Probability for Inductive Logic

Current inductive logic is based on the idea that the probability represents a logical relation between the hypothesis and the relevant observations. Let $G$ be a hypothesis, such as ‘all ravens are black’ or ‘the sun rises forever’, and $E$ be a particular observation or event such as ‘the raven in front of me is black’ or ‘the sun will rise tomorrow’.
The deductive logic that $G$ implies $E$ can be represented by the conditional probabilities $P(E \mid G) = 1$ and $P(\text{not } E \mid G) = 0$, and its contrapositive logic, that not $E$ implies not $G$, by $P(\text{not } G \mid \text{not } E) = 1$ and $P(G \mid \text{not } E) = 0$. The probability can be quantified as a number between 0 and 1, where 0 indicates impossibility (false) and 1 indicates certainty (true). Thus, deductive reasoning allows us to predict a particular event and to falsify a hypothesis by a conflicting observation. A single observation of a non-black raven can certainly falsify the hypothesis. Inductive reasoning has been studied based on the Bayes (1763) rule, defined by the conditional probability

$$P(G \mid E) = \frac{P(E \mid G)\,P(G)}{P(E)} = \frac{P(E, G)}{P(E)}, \qquad (1)$$

where $P(G)$ is called a prior of hypothesis $G$, $P(E \mid G)$ is a likelihood and $P(G \mid E)$ is a posterior of $G$. This Bayes rule is implied by the coherency axiom in subjective probability theory (Savage, 1972), whereas it follows from the definition of conditional probability in the Kolmogorov system. Under the current system, probability obeys the Bayes rule. Provided that the denominator is not zero, and since $P(E \mid G) = 1$, this leads to

$$1 \ge P(G \mid E) = \frac{P(E \mid G)\,P(G)}{P(E)} = \frac{P(G)}{P(E)} \ge P(G) \ge 0.$$

Updating the degree of belief in $G$ from $P(G)$ to $P(G \mid E)$ via the Bayes rule is called ‘learning from the data’ in Bayesian epistemology. It is prohibited to assert that $P(G) = 0$ or $1$ because learning from the data then becomes impossible, as $P(G \mid E) = P(G)$. A subjective Bayesian would consider people rational if they maximize the expected utility of betting. De Finetti (1972) insisted that the betting quotient $P(G)$ represents a degree of belief for computing the expected utility. Assigning $P(G) = 1$ means that one is willing to bet everything on the hypothesis $G$.
Since we are rarely willing to bet everything on a hypothesis, we should avoid assigning $P(G) = 1$ (Olsson, 2018). The ‘Bayesian challenge’ means that the belief $P(G) = 1$ is not relevant to rational decision-making. Let $I(\cdot)$ be the indicator function such that $I(G) = 1$ if hypothesis $G$ is true and $I(G) = 0$ otherwise. The epistemic probability $P(G) = 1$ or $0$ is nevertheless equivalent to $I(G) = 1$ or $0$, respectively. Prohibiting a complete belief in a hypothesis, $P(G) = 1$, would be compulsory because it implies $I(G) = 1$, which can never be known. This would be what the Bayesian challenge avoids.

3 Bayesian Solutions to the Sunrise Problem

By using the sunrise problem, Laplace (1814) demonstrated how to update degrees of belief (epistemic probability) in a hypothesis based on the data. Let $\theta$ be the long-run frequency of sunrises, i.e., the sun rises on $100 \times \theta\,\%$ of days. Under the Bernoulli model, the hypothesis $G$ that the sun rises forever is equivalent to the hypothesis $\theta = 1$. Based on the finite observations ($E$) until the present day, can we attain $P(G \mid E) = 1$? Prior to the knowledge of any sunrise, let us suppose that one is completely ignorant of the value of $\theta$. Laplace (1814) represented this prior ignorance, following the principle of insufficient reason, by means of a uniform prior $P_0(\theta) = 1$ on $\theta \in (0, 1)$. This uniform prior was also proposed by Bayes (1763). Here, the probability of there being a sunrise tomorrow is $\theta$. However, we do not know the true value of $\theta$. Let $T_n$ be the number of sunrises in the last $n$ days. We are provided with the observed data that the sun has risen every day on record ($T_n = n$). Laplace, based on a young-earth creationist reading of the Bible, inferred the number of days according to the belief that the universe was created approximately 6000 years ago.
The Bayes-Laplace rule gives the posterior density

$$f(\theta \mid T_n = n) = \frac{P_0(\theta)\,\theta^n}{\int_0^1 P_0(\theta)\,\theta^n\,d\theta} = (n + 1)\,\theta^n. \qquad (2)$$

Consequently, the probability statements for $\theta$ can be established from this posterior. As shown in the Appendix, given $n = 6000 \times 365 = 2{,}190{,}000$ days of consecutive sunrises, we have

$$P(E \mid T_n = n) = \int_0^1 \theta\, f(\theta \mid T_n = n)\,d\theta = \frac{n + 1}{n + 2} = \frac{2190001}{2190002} \approx 0.9999995.$$

The probability of this particular event, that is, the sun rising the next day, approaches one as the number of observations increases. However, this aspect is not sufficient to accept the hypothesis $G$ that the sun rises forever. As shown in the Appendix, Broad (1918) showed that for any $t = 0, 1, \cdots, n$ and $n = 1, 2, \cdots$, $P(G \mid T_n = t) = 0$. Thus, the Bayes-Laplace solution cannot be used for a significance test with a null hypothesis $G: \theta = 1$, because it has a false-negative error of 1.

Jaynes (2003) argued that a beta prior, $Beta(\alpha, \beta)$ with $\alpha > 0$ and $\beta > 0$, describes the state of knowledge that $\alpha$ successes and $\beta$ failures were observed prior to the experiment. The Bayes-Laplace uniform (equivalent to $Beta(1, 1)$) prior means that one success and one failure were observed a priori. Having such a uniform prior indicates that the experiment is a true binary in terms of physical possibility, $0 < \theta < 1$, leading to the parameter space $\Theta = (0, 1)$. Thus, it cannot be an ignorant prior. This explains why one cannot accept $G$ ($P(G \mid E) = P(\theta = 1 \mid E) = 0$) by using the Bayes rule (1), as the Bayes-Laplace $Beta(1, 1)$ prior assumes $P(G) = 0$ a priori, i.e., $\theta = 1 \notin \Theta = (0, 1)$, meaning that $\theta = 1$ is outside the parameter space. Even with an experiment with only successes after a tremendously large number of trials, there is no way to accept $G: \theta = 1$ unless the prior of observing one failure is discarded. There is no way to attach even a moderate probability to $G$.
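The two Bayes-Laplace quantities above can be checked with a few lines of Python. This is a minimal sketch, not the paper's Appendix derivation: the function names `prob_sunrise_tomorrow` and `posterior_mass_above` are hypothetical, and the second function uses the closed form $P(\theta > c \mid T_n = n) = 1 - c^{\,n+1}$ obtained by integrating the posterior (2).

```python
from fractions import Fraction


def prob_sunrise_tomorrow(n):
    """Posterior mean of theta under the density (n + 1) * theta**n, as an exact fraction."""
    return Fraction(n + 1, n + 2)


def posterior_mass_above(cutoff, n):
    """P(theta > cutoff | T_n = n), from integrating (n + 1) * theta**n over (cutoff, 1)."""
    return 1.0 - cutoff ** (n + 1)


n = 6000 * 365  # Laplace's count of recorded days
p = prob_sunrise_tomorrow(n)
print(p, float(p))

# The posterior mass of the interval (1 - eps, 1) shrinks as eps shrinks,
# so no positive mass is left for the single point theta = 1.
for eps in (1e-3, 1e-6, 1e-9):
    print(eps, posterior_mass_above(1.0 - eps, n))
```

The loop illustrates probability dilution for fixed $n$: however many sunrises are recorded, the posterior mass assigned to ever smaller neighborhoods of $\theta = 1$ goes to zero, consistent with $P(G \mid T_n = n) = 0$.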
Thus, the Bayes-Laplace solution under a $Beta(1, 1)$ prior cannot overcome the degree of skepticism raised by Hume (1748). Thus, Broad (1918) stated that ‘induction is the glory of science but the scandal of philosophy’. If there is no way to accept any scientific hypothesis via data, it is also the scandal of science. This is a peculiar phenomenon, called the probability dilution when $G$ is true. Due to its simple nature, we use the sunrise problem to explain how to overcome this fundamental deficiency of probability-based induction.

Jeffreys's (1939) resolution was the use of another prior, which places a point mass of 1/2 on the null hypothesis $G: \theta = 1$ and a point mass of 1/2 on the alternative hypothesis $G^C: \theta \sim Beta(1, 1)$. Then, as described in the Appendix, we have

$$P(E \mid T_n = n) = \frac{(n + 1)(n + 3)}{(n + 2)^2} \quad \text{and} \quad P(G \mid T_n = n) = \frac{n + 1}{n + 2}.$$

Senn (2009) considered Jeffreys's (1939) work to be ‘a touch of genius, necessary to rescue the Laplacian formulation of induction’ because it overcomes probability dilution by allowing $P(G \mid T_n = n) > 0$. As $n$ increases, $P(G \mid T_n = n)$ eventually goes to one under $G: \theta = 1$. Under this resolution, the probability dilution is alleviated, but it cannot completely accept $G$ with finite observations because his prior places a point mass of $1/2$ on $G^C$. Is there a way to accept $G$ with finite observations? Jeffreys's great insight was to recognize the necessity of the point mass at $\theta = 1$ to avoid the probability dilution of $G$. Can we allow a point mass without assuming any prior?

According to Hartmann and Sprenger (2010), there are three pillars of Bayesian epistemology: (i) the Dutch book argument by Ramsey (1926) and de Finetti (1972), (ii) the principal principle of Lewis (1980) and (iii) Bayesian conditionalization.
A logical device called the Dutch book has been used to establish Bayesian subjective probability as an internally consistent personal betting price for a single event. The personal degree of belief about the hypothesis can be represented by betting odds. In de Finetti's (1972) framework, betting odds should obey probability axioms to avoid the Dutch book. In effect, his Dutch book argument assumes objective utility (money) and a coherent betting strategy to arrive at subjective probabilities. Von Neumann and Morgenstern (1947) assume that the aleatory probability and axioms of rational preferences lead to subjective utility. Savage (1954) combined the Dutch book argument and von Neumann and Morgenstern's (1947) preferences in game theory to unify subjective probability and subjective utility within a decision framework. His axiomatic approach has great theoretical implications: prior probability can be elicited from subjective preferences and then updated with observations by using the Bayes rule. The prior could reflect either ignorance about the hypothesis, in the objective Bayesian school, or one's fullest possible knowledge, reflecting personal preference, in the subjective Bayesian school. The coherent betting argument would be convincing only for the latter (Vovk, 1993).

Lewis's (1980) principal principle states that subjective probability must be set equal to objective probability if the latter exists. Logical, objective Bayesian and fiducial probabilities have tried to identify an objective epistemic probability.

Bayesian conditionalization is the use of the Bayes rule as learning from new observations. By setting the current posterior $P(G \mid E)$ as the prior $P(G)$, the Bayes rule can update the current degrees of belief to $P(G \mid E^*)$ in light of new observations $E^*$.
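Conditionalization under Jeffreys's point-mass prior from earlier in this section can be sketched as a day-by-day update. This is a hedged illustration rather than the Appendix calculation: the helper names `update_on_sunrise` and `posterior_after` are hypothetical, and the predictive probability $(k + 1)/(k + 2)$ under $G^C$ is the uniform-prior rule of succession after $k$ observed sunrises.

```python
from fractions import Fraction


def update_on_sunrise(p_g, n_seen):
    """One Bayes update of P(G) after observing one more sunrise.

    Under G (theta = 1) a sunrise has probability 1. Under not-G
    (theta ~ Beta(1, 1), already updated by n_seen sunrises) the
    predictive probability of a sunrise is (n_seen + 1) / (n_seen + 2).
    """
    like_g = Fraction(1)
    like_not_g = Fraction(n_seen + 1, n_seen + 2)
    evidence = like_g * p_g + like_not_g * (1 - p_g)
    return like_g * p_g / evidence


def posterior_after(n, prior):
    """Apply conditionalization n times, reusing each posterior as the next prior."""
    p = Fraction(prior)
    for k in range(n):
        p = update_on_sunrise(p, k)
    return p


for n in (1, 10, 1000):
    print(n, posterior_after(n, Fraction(1, 2)))

# Dogmatic priors never move: there is nothing to learn from the data.
print(posterior_after(1000, 0), posterior_after(1000, 1))
```

Starting from the prior $P(G) = 1/2$, the sequential updates reproduce $P(G \mid T_n = n) = (n + 1)/(n + 2)$, which stays strictly below one for every finite $n$, while the priors $0$ and $1$ are fixed points of the update.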
4 Confidence and Extended Likelihood

Neyman (1937) introduced the idea of confidence as the aleatory coverage probability of a confidence interval (CI) procedure. Recently, a surge of renewed interest in confidence has arisen (Schweder and Hjort, 2016). Let $T_n$ be a random variable generated from a model $P(T_n \mid \theta)$ with fixed unknown $\theta$, and let $\theta_0$ be the true but unknown value of $\theta$. Suppose that we construct the $(1 - \alpha)$ CI procedure $CI(T_n)$ for $\theta_0$. We consider a binary latent variable

$$U = U(\theta_0, T_n) = I(\theta_0 \in CI(T_n)),$$

representing the true status of the CI procedure, with

$$P(U = 1) = P(\theta_0 \in CI(T_n)) = 1 - \alpha$$

being the aleatory coverage probability with a significance level $\alpha$. Given an observation $T_n = t$, we construct a $(1 - \alpha) \times 100\%$ observed CI, namely $CI(t)$. Is there an objective epistemic concept to state our sense of uncertainty in a given observed $CI(t)$ without assuming a prior? For a given observation $T_n = t$, as an alternative to the Bayesian posterior $P(\theta \mid t)$, Fisher (1930) defined a confidence (fiducial) density

$$c(\theta; t) = \partial P(T_n^* \ge t \mid \theta) / \partial \theta, \qquad (3)$$

where $P(T_n^* \ge t \mid \theta)$ is the right-side P-value and $T_n^*$ is another data set with the same distribution as $T_n$. We may call $c(\theta; t)$ epistemic confidence among people with a common consensus theory $P(T_n \mid \theta)$. Given $T_n = t$, let $u = U(\theta_0, t) = I(\theta_0 \in CI(t))$ be the realized status of an observed $CI(t)$, which is either one or zero but still unknown because $\theta_0$ is unknown. Pawitan and Lee (2021) showed that the epistemic confidence of an observed CI can be computed as an extended likelihood of $u$,

$$\ell_e(u = 1; t) = C(\theta_0 \in CI(t)) = \int_{CI(t)} c(\theta; t)\,d\theta = 1 - \alpha, \qquad (4)$$

where $1 - \alpha$ is the confidence of an observed $CI(t)$. Care is necessary because it is not a probability.
Since

$$C(\theta_0 \in CI(t)) = 1 - \alpha \neq P(\theta_0 \in CI(t)) = I(\theta_0 \in CI(t)) = u,$$

where $u$ is either 0 or 1 but unknown, we use a distinct notation for the confidence to avoid probability-related controversies. For example, the expected utility cannot be based on the confidence (Pawitan and Lee, 2017). When $T_n$ is continuous and sufficient, under appropriate conditions, the confidence feature (Schweder and Hjort, 2016; Pawitan et al. 2023) holds:

$$P(\theta_0 \in CI(T_n)) = C(\theta_0 \in CI(t)), \qquad (5)$$

where the LHS is the aleatory coverage probability of the CI procedure $CI(T_n)$ and the RHS is the epistemic confidence of the observed interval $CI(t)$. Likelihood procedures have been criticized for providing exact inferences only asymptotically. Bayesian procedures provide exact interval estimation in finite samples but have been criticized for assuming an unverifiable prior. The confidence feature of an observed interval indicates that an exact interval estimation can be established for finite samples, but in a frequentist sense, by maintaining the aleatory coverage probability of the CI procedure. In the sunrise problem, the next section shows

$$c(\theta; t) = I(t = n)\,D(\theta) + I(t < n)\,c_+(\theta; t), \qquad (6)$$

where $D(\theta)$ denotes the Dirac delta function, which assigns a point mass of one at $\theta = 1$, and $c_+(\theta; t)$ is the $Beta(t + 1, n - t)$ density. Wilkinson (1977) called the point mass of confidence at $\theta = 1$ a paradoxical unassigned probability because he presumed $\Theta = (0, 1)$. The uniform prior leads to posteriors without a point mass, so they always allow a two-sided credible interval $I(t) = (a(t), b(t))$ with $0 < a(t) < b(t) < 1$, satisfying for all $0 < \alpha < 1$ and $t = 0, \cdots, n$

$$P(\theta \in I(t) \mid T_n = t) = 1 - \alpha.$$

This is reasonable if $\Theta = (0, 1)$. However, in this paper, we have $\Theta = (0, 1]$ to allow a hypothesis $G: \theta = 1$.
When $\theta_0 = 1 \in \Theta = (0, 1]$, the coverage probability of the two-sided credible interval becomes $P(\theta_0 \in I(t)) = 0$, because $\theta_0 = 1 \notin I(t) = (a(t), b(t))$ for all $t$. This explains the probability dilution at $\theta_0 = 1$. When $\Theta = (0, 1]$, the point mass at $\theta = 1$ in $c(\theta; t)$ is no longer an unassigned probability. Jeffreys's (1939) prior puts a point mass of 1/2 at $\theta = 1$, independent of the data $T_n = t$. However, confidence has a data-dependent point mass of one to give the complete confidence $C(\theta = 1; n) = C(G; n) = 1$ if $T_n = n$ and the null confidence $C(G; t) = 0$ if $T_n = t < n$.

Let $CI(t)$ be a two-sided observed CI if available. In the sunrise problem, $T_n$ is discrete, so the confidence feature (5) holds asymptotically. Figure 1 is a plot of the aleatory coverage probability $P(\theta_0 \in CI(T_n))$ of the equal-tailed two-sided 95% CI procedures, against the epistemic confidence $\ell_e(u = 1; t) = C(\theta_0 \in CI(t)) = 1 - \alpha = 0.95$ of the hypothesis $\theta_0 \in CI(t)$ for $n = 10$, $50$ and $500$ when $\Theta = (0, 1]$. When $T_n = t < n$, the confidence density $Beta(t + 1, n - t)$ can always produce the two-sided CI for $\theta_0$. When $\theta_0 < 1$, $T_n = t < n$ for some $n$. When $\theta_0 = 1$, $T_n = n$, which leads to a one-sided oracle 100% CI $\{1\}$. Because of the point mass at $\theta = 1$, the coverage probability of the CI is one when $\theta_0 = 1$. However, the complete confidence $C(G; n) = 1$ does not necessarily mean that $G: \theta = 1$ is true. For example, when $\theta_0 = 0.99$, even though $G$ is false, $C(\theta = 1; n) = C(G; n) = 1$ with probability $P(T_n = n \mid \theta = \theta_0)$, which can be quite large if $n$ is small. Consequently, from Figure 1, we can see that the coverage probability at $\theta_0 = 0.99$ can be considerably lower than the stated level 0.95 in small samples. However, consistency means that the stated level 0.95 will eventually be achieved at $\theta_0 = 0.99$ as $n$ increases.
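The coverage behaviour described above can be explored numerically with the standard library alone. The sketch below is an assumption-laden illustration, not the code behind Figure 1: the names `binom_pmf`, `confidence_in_G`, `covers` and `coverage` are hypothetical, the observed CI is taken to be the equal-tailed interval of $Beta(t + 1, n - t)$ for $t < n$ and the single point $\{1\}$ for $t = n$, and the Beta CDF is evaluated through the identity that the $Beta(t + 1, n - t)$ CDF at $\theta$ equals $P(X \ge t + 1)$ for $X \sim Binomial(n, \theta)$.

```python
from math import comb


def binom_pmf(k, n, theta):
    """P(T_n = k | theta) under the binomial model."""
    return comb(n, k) * theta ** k * (1.0 - theta) ** (n - k)


def confidence_in_G(t, n):
    """C(G; t): complete confidence if all n days saw a sunrise, null confidence otherwise."""
    return 1.0 if t == n else 0.0


def covers(theta0, t, n, alpha=0.05):
    """True if the observed equal-tailed (1 - alpha) CI for T_n = t contains theta0."""
    if t == n:
        return theta0 == 1.0  # the CI is the single point {1}
    # Beta(t + 1, n - t) CDF at theta0, via the binomial upper tail.
    cdf = sum(binom_pmf(k, n, theta0) for k in range(t + 1, n + 1))
    return alpha / 2 <= cdf <= 1 - alpha / 2


def coverage(theta0, n, alpha=0.05):
    """Aleatory coverage probability P(theta0 in CI(T_n)) of the CI procedure."""
    return sum(
        binom_pmf(t, n, theta0)
        for t in range(n + 1)
        if covers(theta0, t, n, alpha)
    )


for n in (10, 50, 500):
    print(n, coverage(0.5, n), coverage(0.99, n), coverage(1.0, n))
```

Under these assumptions the coverage at $\theta_0 = 1$ is exactly one for every $n$, while at $\theta_0 = 0.99$ it is far below 0.95 for small $n$ because $T_n = n$ then occurs with high probability and yields the interval $\{1\}$. The direct summation is quadratic in $n$ and is meant for moderate sample sizes only.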
In summary, Figure 1 shows that the epistemic confidence $C(\theta_0 \in CI(t))$ of an observed $CI(t)$ is a consistent estimator of the aleatory coverage probability $P(\theta_0 \in CI(T_n))$ of the CI procedure $CI(T_n)$ for all $\theta_0 \in (0, 1)$, i.e.,

$$\ell_e(u = 1; t) = 1 - \alpha \to P(\theta_0 \in CI(T_n)) \quad \text{as } n \to \infty.$$

When $\theta_0 = 1$, it is an oracle estimator

$$\ell_e(u = 1; n) = C(\theta_0 \in CI(T_n = n)) = C(G; n) = 1,$$

as if $\theta_0 = 1$ were known in advance. Due to its consistency, we can be assured of our confidence as the number of observations grows.

Figure 1: The coverage probability against the 95% confidence level at $n = 10, 50, 500$. (Three panels, one per $n$; horizontal axis $\theta$, vertical axis coverage probability.)

5 Confidence Resolution

In this section, we study how confidence can avoid a fundamental deficiency of probability-based induction, namely the probability dilution. Popper (1959) was a follower of the aleatory interpretation of probability. However, for him, the main drawback of the aleatory view was its failure to provide objective epistemic probabilities for single events. What Popper wanted, I believe, was the confidence. Let us consider a single event for a hypothesis $G$, $U = I(G)$. Hypothesis testing is a prediction problem for a discrete latent variable such as $U = I(G) = 0$ or $1$ (Lee and Bjørnstad, 2013). Given evidence $T_n = t$, let $u$ be the realized but unknown status of the hypothesis $G$. Then, we can compute the confidence (extended likelihood) for a hypothesis $G$ as

$$\ell_e(u = 1; t) = C(G; t). \qquad (7)$$

Popper (1959) saw falsifiability as a criterion for a scientific hypothesis; if a hypothesis is falsifiable, it is scientific, and if not, then it is unscientific.
The hypothesis $G: \theta = 1$ is then Popper scientific because it can be falsified if a conflicting observation, i.e., one day of no sunrise, occurs. Jeffreys's resolution corroborates Popper's theory that a hypothesis is never accepted: given $T_n = n$, $P(G \mid T_n = n) < 1$ for all $n$, and we set $P(G \mid T_n = n)$ as a new $P(G)$. If new conflicting evidence $X_{n+1} = 0$ arrives, we have a complete falsification $P(G \mid X_{n+1} = 0) = 0$. Suppose now that $P(G \mid T_n = n) = 1$, so that we set $P(G) = 1$; then

$$P(G \mid X_{n+1} = 0) = \frac{P(X_{n+1} = 0 \mid G)\,P(G)}{P(X_{n+1} = 0 \mid G)\,P(G) + P(X_{n+1} = 0 \mid \text{not } G)\,P(\text{not } G)} = \frac{0}{0} \quad \text{(undefined)}.$$

Thus, conflicting evidence cannot falsify the hypothesis with a prior $P(G) = 1$. To corroborate Popper's tentative acceptance theory, the prior and posterior should never be set to one. The Bayesian challenge is again required to support Popper's theory. Thus, probability cannot allow a paradigm shift (Kuhn, 2012) (switching from $P(G) = 1$ to $P(G) = 0$) to accept a new scientific theory. If probability is used for hypotheses, $P(G)$ is indeed either 0 or 1 but unknown. Popper's tentative acceptance theory prohibits $P(G) = 1$, so only $P(G) = 0$ is allowed. Popper (1959, Appendix vii) explained why he believed that $P(G) = 0$ and used it as an argument against probability-based induction, because there is nothing to learn from the data with $P(G) = 0$. Indeed, he believed $I(G) = 0$ a priori. This explains why the probability may not be adequate; he may object to the use of the Bayes rule for inductive reasoning.

Given $T_n = t$, the confidence density (Schweder and Hjort, 2016) can be written as

$$c(\theta; t) \propto c_0(\theta; t)\,L(\theta; t), \qquad (8)$$

where $c_0(\theta; t)$ is the implied prior, analogous to the Bayesian prior, and $L(\theta; t) = P(T_n = t \mid \theta)$ is the Fisher (1921) likelihood.
Thus, the confidence density $c(\theta; t)$ can be viewed as a Bayesian posterior under an implied prior $c_0(\theta; t)$. The Jeffreys invariant prior has been advocated to improve the Bayes-Laplace uniform ($Beta(1, 1)$) prior, leading to Bernardo's (1979) reference prior for multi-parameter cases. In single-parameter cases, the invariant (equivalent to reference) prior gives a posterior asymptotically close to $c(\theta; t)$ (Lindley, 1958; Welch and Peers 1963). These priors have been developed as robust priors in the objective Bayesian school. In the sunrise problem, the Jeffreys (reference) prior is $Beta(1/2, 1/2)$, but as shown in the Appendix, any $Beta(\alpha, \beta)$ prior with $\alpha > 0$ and $\beta > 0$ cannot overcome probability dilution; $P(G \mid T_n = n) = 0$ for all $n$. However, this problem has often been ignored because it occurs only at the point $\theta = 1$. In Stein's (1959) high-dimensional problem, the use of a uniform prior can lead to a probability dilution in the entire parameter space. As a result, the credible interval based on the uniform prior cannot maintain the stated level of confidence. The reference prior cannot overcome the probability dilution either, whereas the confidence overcomes probability dilution to allow a CI that maintains the stated level of coverage probability for all parameter values. The point mass of confidence (6) at the boundary prevents such a probability dilution (Lee and Lee, 2023).

In the Appendix, we show that $c_0(\theta; t) = 1/(1 - \theta)$ in the sunrise problem. Interpreting the implied prior $c_0(\theta; t) = 1/(1 - \theta)$ as $Beta(1, 0)$ means that only one success was observed prior to the experiment. This leaves room for the possibility of an experiment with successes only, i.e., $\theta = 1$. Consider a significance test with a null hypothesis $G$. Given $T_n = n$, confidence allows us to accept $G$ with complete confidence $C(G; n) = \ell_e(u = 1; n) = 1$.
As a measure of consistency with the null hypothesis $G$, $P(T_n < n \mid G) = 0$ gives the actual level of significance attained by the data (Cox, 1958). This explains why the confidence accepts $G$ with complete confidence, $C(G; T_1 = 1) = P(T_1 = 1 \mid G) = 1$, even with a single corroborating observation. However, the confidence is not a probability but an extended likelihood. Many controversies about confidence have stemmed from its confusion with probability (Pawitan and Lee, 2017; 2021; 2022; 2024). Even though we have the complete confidence $C(G; n) = 1$ for given $T_n = n$, in contrast to the Bayes rule, a single new conflicting observation $X_{n+1} = 0$ can alter the complete confidence $C(G; T_n = n) = 1$ to the null confidence $C(G; T_{n+1} = n) = 0$. This shows that the epistemic confidence allows a switch from 1 to 0. Current inductive reasoning uses the Bayes rule (1), by presuming that $X_{n+1}$ and $G$ have a joint probability. In particular, it implies that $G$ has an unverifiable prior probability $P(G)$. However, the degree of confidence in the hypothesis $G$ is based on the whole data, without using the conditional probability $P(G \mid X_{n+1})$. Consequently, the integrated confidence is no longer a confidence; it may not eliminate nuisance parameters by integration as the Bayesian approach does. Therefore, the marginalization paradox (Dawid et al., 1972) does not apply to confidence. Probability allows marginalization and computation of expected utility. The confidence $C(G; t) = 1$ is in fact simply a consistent estimator of $I(G) = 1$ based on an observation $T_n = t$, whereas the probability $P(G) = 1$ is equivalent to $I(G) = 1$.

6 Support Interval Versus Confidence Interval

Consistent with Carnap's (1950) corroboration theory, the Bayesian support interval can be constructed based on the change from the prior to the posterior (PTP; Wagenmakers et al. 2022),

$$PTP(\theta; t) = \frac{f(\theta \mid t)}{f(\theta)} = \frac{P(t \mid \theta)}{P(t)} \propto P(t \mid \theta) = L(\theta; t), \qquad (9)$$

where $P(t) = \int_\Theta P(t \mid \theta) f(\theta)\,d\theta$ and $L(\theta; t)$ is the Fisher likelihood. The likelihood is a relative concept since it is not a probability of $\theta$; $\int_\Theta L(\theta; t)\,d\theta \neq 1$. The Neyman-Pearson test is based on the likelihood ratio (LR)

$$LR(\theta_1, \theta_2; t) = \frac{P(T_n = t \mid \theta = \theta_1)}{P(T_n = t \mid \theta = \theta_2)} = \frac{L(\theta_1; t)}{L(\theta_2; t)}.$$

Thus, likelihood provides an immediate objective tool to compare hypotheses; $\theta_1$ is preferred over $\theta_2$ if $LR(\theta_1, \theta_2; t) > 1$. However, traditional likelihood inference requires a sort of probability-based calibration: see the examples in Pawitan and Lee (2024), showing how the likelihood value alone can be misleading. Because

$$L(\theta_1; t) / L(\theta_2; t) = PTP(\theta_1; t) / PTP(\theta_2; t),$$

all the evidence about $\theta$ in the data is in the PTP as well as in the likelihood (Birnbaum, 1962): $PTP(\theta; t)$ can give maximum likelihood estimation and the Neyman-Pearson test (Wagenmakers et al., 2022). Since the likelihood $L(\theta; t)$ is proportional to the posterior under the flat prior $f(\theta) = 1$, the likelihood-based support interval can be constructed based on the normalized likelihood

$$L_n(\theta; t) = \frac{L(\theta; t)}{\int_\Theta L(\theta; t)\,d\theta} \propto L(\theta; t).$$

Thus, the likelihood-based support interval can be interpreted as the credible interval under the flat prior on $\theta$.

The Bayes rule (conditional probability) would be meaningless unless a joint probability of $\theta$ and the data $y$ is defined. Instead of assuming a prior on $\theta$ in (8), in the sunrise problem, let $c_0(\theta; t) = |\partial \eta / \partial \theta| = 1/(1 - \theta)$ be the Jacobian term for a particular scale $\eta = \eta(\theta; t) = -\log(1 - \theta)$ to satisfy $c_0(\eta; t) \propto 1$.
Then, the confidence density is a normalized likelihood on the scale $\eta$,
$$c(\eta; t) = L_n(\eta; t),$$
where $\theta = \theta(\eta) = 1 - e^{-\eta}$,
$$c(\theta; t) = L_n(\theta; t)\, |\partial\eta/\partial\theta| = c(\eta; t)\, |\partial\eta/\partial\theta|,$$
and $L_n(\eta; t)$ is the normalized likelihood with respect to $\eta$ such that $\int_0^\infty L_n(\eta; t)\, d\eta = 1$. Normalized likelihoods are not invariant with respect to non-linear transformations of $\theta$ due to the Jacobian term. The CI is the likelihood-based support interval under the flat prior on a particular scale $\eta$, allowing the confidence-calibration
$$C(A; t) \equiv \int_A c(\theta; t)\, d\theta = \int_{A^*} L_n(\eta; t)\, d\eta,$$
where $A^* = \{\eta : \eta = \eta(\theta; t) \text{ for } \theta \in A\}$. Thus, the CI has an objective aleatory interpretation, by satisfying the confidence feature (5). In Stein's (1959) example, the scale $\eta$ depends upon the observed data (Lee and Lee, 2023).

The choice of prior may correspond to that of scale to define the likelihood-based support interval. For example, in the sunrise problem, the Jeffreys invariant (reference) prior, $c_0(\theta; t) = |\partial\eta/\partial\theta| = \theta^{-1/2}(1 - \theta)^{-1/2}$, leads to the posterior, which is a normalized likelihood in the scale
$$\eta = \mathrm{Beta}(\theta; 1/2, 1/2) = \int_0^\theta s^{-1/2}(1 - s)^{-1/2}\, ds,$$
where $\mathrm{Beta}(\theta; 1/2, 1/2)$ is the incomplete beta function with $\mathrm{Beta}(1; 1/2, 1/2) = \mathrm{Beta}(1/2, 1/2)$. Thus, all the credible intervals could be viewed as likelihood-based support intervals under the flat prior on some scales. Fraser and McDunnough (1984) showed that the asymptotic normal distribution of the maximum likelihood estimators and normalized likelihoods are approximations to the confidence density $c(\theta; t)$. Thus, all the support intervals satisfy the confidence feature asymptotically. Choice of scale is important for a good approximation in finite samples.
For example, the normalization transform of $\theta$ for the Wald interval based on asymptotic normality and the Jeffreys (reference) prior lead to good approximations to the confidence density (Welch and Peers, 1963).

A major contribution of Lee and Nelder (1996) is a specification of a scale of random parameters to define the h-likelihood among extended likelihoods for prediction of random unknowns. Now, consider Bayes's (1763) billiard example. Suppose $n + 1$ balls are randomly thrown onto a table of length 1, and let $\theta$ be the unobserved position of the first ball. Then, we observe whether the $n$ other balls come to rest to the left or right of the first ball. Among the $n$ balls, $T_n = t$ balls are observed to be to the left of the first ball. Bayes identified that $\theta$ is a scale of random parameter, which follows a uniform distribution, $\mathrm{Beta}(1, 1)$. Using extended likelihood, Lee and Lee (2024) showed that a predictive interval (equivalent to a credible interval) can be made for an unknown realized value $\theta_0$ of the random parameter $\theta$, which satisfies the confidence feature with a confidence interpretation (5). Thus, the billiard example can be accommodated by the confidence approach.

To have a confidence interval with full data evidence, Pawitan et al. (2023) proposed conditioning on ancillary statistics: for more discussion, see Pawitan and Lee (2024). Cox (1958) addressed the necessity of nuisance parameters for a better approximation to the truth and of robust models, less sensitive to model assumptions. Robust heavy-tailed models can be built by adding random effects in various dispersion parameters of the model (Lee et al., 2017). Recently, Lee and Lee (2024) extended the confidence density for fixed unknowns to models with additional random unknowns.
These extensions lead to multi-parameter models with nuisance parameters, in which the normalized likelihoods can give asymptotically exact support intervals in Wagenmakers et al. (2022). It is challenging to extend the current exact CI theory, developed mainly for a single parameter, to multi-parameter models.

7 Bayes Factor Versus Extended Likelihood Ratio

In this section, we study Bayesian and likelihood procedures for Neyman-Pearson type hypothesis testing. In the sunrise problem, the Jeffreys resolution leads to the Bayes factor (BF) for the simple null $G: \theta = 1$ versus a general alternative $G^C: \theta \sim \mathrm{Beta}(1, 1)$. The PTP for $G^C$ becomes
$$\mathrm{PTP}(G^C; t) = \frac{P(G^C \mid t)}{P(G^C)} = \frac{P(t \mid G^C)}{P(t)} \propto P(t \mid G^C),$$
which yields an extension of likelihood to a general hypothesis $G^C$. This leads to the BF
$$\mathrm{BF}(G, G^C; t) = \frac{P(t \mid G)}{P(t \mid G^C)} = \frac{P(G \mid t)}{P(G^C \mid t)} \cdot \frac{P(G^C)}{P(G)},$$
which is an extension of the LR for the general hypotheses, $G: \theta = 1$ versus $G^C: \theta \sim \mathrm{Beta}(1, 1)$. Thus, $\mathrm{BF}(G, G^C; t) \geq k$ means that the data support $G$ over $G^C$ by a factor of at least $k$. In the sunrise problem,
$$\mathrm{BF}(G, G^C; t) = \frac{P(T_n = t \mid \theta = 1)}{P(T_n = t \mid \theta \sim \mathrm{Beta}(1, 1))} = \begin{cases} n + 1 & \text{if } t = n, \\ 0 & \text{if } t < n. \end{cases}$$
Thus, the BF favors $G$ over $G^C$ if $t = n$, and it accepts $G^C$ if $t < n$. Thus, the BF can be used for testing general hypotheses.

Using the extended likelihood ratio (ELR), Lee and Bjørnstad (2013) obtained the optimal multiple test. Is there an extension of the LR to a composite alternative hypothesis $G_N: \theta < 1$? We may use the ELR
$$\mathrm{ELR}(G, G_N; t) = \frac{\ell_e(u = 1; t)}{\ell_e(u = 0; t)} = \frac{C(G; t)}{C(G_N; t)} = \frac{C(\{1\}; t)}{C((0, 1); t)} = \begin{cases} \infty & \text{if } t = n, \\ 0 & \text{if } t < n. \end{cases}$$
This ELR accepts $G$ if $t = n$ and accepts $G_N$ if $t < n$. Note that the BF and the ELR have different alternatives.
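The two test statistics of this section can be checked with a few lines of exact arithmetic. The following is only a minimal sketch and not part of the original derivation: the function names `marginal_uniform`, `bayes_factor` and `extended_lr` are hypothetical, and `extended_lr` simply encodes the statement that $C(G; t) = 1$ when $t = n$ and $0$ otherwise.

```python
from fractions import Fraction
from math import comb, factorial, inf


def marginal_uniform(n: int, t: int) -> Fraction:
    """P(T_n = t) when theta ~ Beta(1, 1).

    Integrating C(n, t) * theta^t * (1 - theta)^(n - t) over (0, 1) gives
    C(n, t) * t! * (n - t)! / (n + 1)!, which simplifies to 1 / (n + 1).
    """
    return Fraction(comb(n, t) * factorial(t) * factorial(n - t), factorial(n + 1))


def bayes_factor(n: int, t: int) -> Fraction:
    """BF(G, G^C; t) for G: theta = 1 against G^C: theta ~ Beta(1, 1)."""
    p_under_g = Fraction(1 if t == n else 0)  # theta = 1 forces T_n = n
    return p_under_g / marginal_uniform(n, t)


def extended_lr(n: int, t: int) -> float:
    """ELR(G, G_N; t) = C(G; t) / C(G_N; t), with C(G; t) = 1 if t == n else 0."""
    return inf if t == n else 0.0


if __name__ == "__main__":
    for n, t in [(1, 1), (10, 10), (10, 9)]:
        print(f"n={n}, t={t}: BF={bayes_factor(n, t)}, ELR={extended_lr(n, t)}")
```

Under these assumptions the BF stays finite for every finite $n$ with $t = n$, whereas the ELR is already infinite, which is the contrast between tentative and oracle acceptance drawn in the text.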
The Fisher (1921) likelihood $L(\theta; t) = P(T_n = t \mid \theta)$ represents evidence about $\theta$ in the observed data $T_n = t$. However, the P-value $P(T_n \geq t \mid \theta)$, and therefore the confidence, uses evidence in other possible data sets $T_n^* > t$ in (3), generated from the theory $P(T_n \mid \theta)$. Thus, it is not a likelihood but an extended likelihood, leading to confidence-based calibration,
$$C(G; t) + C(G_N; t) = C(\{1\}; t) + C((0, 1); t) = C(\Theta; t) = 1.$$
Confidence uses additional information on the data-generating process to allow a sort of probability-based calibration. Thus, the likelihood and the extended likelihood are very distinct concepts: see the discussion between Lavine and Bjørnstad (2022) and Pawitan and Lee (2022).

In this paper, we view both 'confidence' and Jeffreys's resolution as a consistent estimator (or predictor) of the unobservable variable $U = I(G)$, the true status of a hypothesis $G$. Both are asymptotically equivalent, so we compare them in finite samples. In hypothesis testing, the type 1 error is the rejection of a true null $G$, while the type 2 error is the rejection of a true alternative. In practice, these two errors are traded off against each other, i.e., an effort to reduce one usually increases the other. Since it is impossible to avoid both errors, it is natural to consider the amount of risk one is willing to take in deciding whether to accept the null or the alternative. When the null $G$ is true, the confidence resolution allows an oracle acceptance with a null type 1 error ($\mathrm{ELR}(G, G_N; n) = \infty$), whereas the Jeffreys resolution has a tentative acceptance ($\infty > \mathrm{BF}(G, G^C; n) > 1$) with a positive type 1 error. Because the alternative hypotheses of the two resolutions are different, the type 2 errors may not be directly compared. From a decision-making perspective, the confidence resolution would be preferred to Jeffreys's resolution due to its null type 1 error in finite samples.
The Laplace solution under a uniform prior has a type 1 error of one. As an alternative to probability-based reasoning, accepting a scientific hypothesis with complete confidence is legitimate, not irrational as the 'Bayesian challenge' proclaims.

The use of Haldane's prior $\mathrm{Beta}(0, 0)$ assumes the parameter space $\Theta = [0, 1]$ with an oracle property at both $\theta_0 = 0$ and $1$. It leads to decision making for three hypotheses: $\theta = 0$, $0 < \theta < 1$ and $\theta = 1$. The use of consonant belief has been proposed (Denoeux and Li, 2018) to overcome probability dilution. However, without plausibility (Dempster, 1968), a belief function alone may not be suitable for valid hypothesis testing, in which confidence is preferred (Lee and Lee, 2023).

8 Complementary Roles of Confidence

Epistemic probability may not be entirely epistemic because $P(G) = 1$ or $0$ is equivalent to the objective status $I(G) = 1$ or $0$, respectively. Fisher was against the aleatory interpretation of probability because the orthodox frequentist view is emphatic that it can be applied not to a single event such as $I(G)$ but to a repeatable procedure. Thus, the epistemic interpretation is unavailable or even denied in the frequentist school. However, an epistemic interpretation is compulsory in science because scientists may not repeat the same experiment again (Fisher, 1958), as the frequentist CI procedure presumes. Given that probability cannot suitably represent uncertainty about $I(G)$, the meaning of Fisher's confidence (FP) has been puzzling over the last century. Because of the confidence feature, for all $\theta_0 \in \Theta$ and for all $n$,
$$\ell_e(u = 1; t) \equiv C(\theta_0 \in CI(t)) \equiv P(U = 1) = P(\theta_0 \in CI(T_n)), \tag{10}$$
epistemic confidence of the observed CI can agree with the aleatory frequency of a repeatable procedure. Likelihood procedures have been criticized for providing exact inferences only asymptotically.
Bayesian procedures provide exact interval estimation in finite samples but have been criticized for assuming an unverifiable prior. Confidence can allow exact interval estimation from the frequentist perspective. Thus, the two distinct epistemic and aleatory interpretations of probability are reconciled with the confidence.

Confidence provides complementary alternatives for the three pillars of Bayesian epistemology. According to the Savage-Ramsey-de Finetti Dutch book, a betting strategy is coherent as long as it satisfies the probability laws. However, their definition of probability is personal because it deals with a bet between you and me. Pawitan et al. (2023) discussed how to use confidence as a betting price for an observed CI, protected from the Dutch book, and showed that it can play the role of objective probability in Lewis's (1980) principal principle by not allowing a relevant subset. Due to a consensus among scientists on the scientific theory $P(T_n \mid \theta)$, it leads to an inter-subjective (market) price for the hypothesis. Conceptual separation of personal and consensus market prices allows for both an epistemic and an objective aleatory meaning of confidence. It allows a logical interpretation as a degree of rational belief based on a consensus scientific theory: see Pawitan and Lee (2024) for more discussion. The Bayes rule is not used to update the confidence, which is simply based on the full data.

According to Kaplan (1996), a person with $P(G) = 1$ should be willing to bet on $G$ at any cost: one should not prefer the status quo (doing nothing) to a bet on $G$ in which $G$ being true yields nothing and $G$ being false results in the loss of a million dollars, because the personal expected utility of both actions is the same. However, this is irrational. Thus, Bayesians prohibit the term 'belief', namely, $P(G) = 1$.
In epistemic probability, $P(G) = 1$ is equivalent to $I(G) = 1$, which does not allow learning from conflicting data. However, in confidence, $C(G; T_n = n) = 1$ is an estimator of the unknown $I(G)$ with a risk (type 2 error). With confidence, the zero estimated expected utility of the bet on $G$ is susceptible to risk, so the status quo is preferred for having no risk. The consistency of complete confidence means that the risk of accepting $G$ vanishes eventually as $n \to \infty$, i.e., $C(G; n) \to I(G)$ as $n \to \infty$. Thus, an estimator $C(G; n + 1) = 1$ with $T_{n+1} = n + 1$ is more convincing than an estimator $C(G; n) = 1$ with $T_n = n$ because there is less risk for the former. In the confidence approach, given finite observations, oracle acceptance of the null hypothesis is rational in order to have a null type 1 error. Such an oracle acceptance can be altered to a complete rejection with a null type 2 error as a new conflicting observation arises. Thus, the use of an estimated expected utility without accounting for risk in estimation would be irrational. Economists have been aware of this problem in using an expected utility for decision making; various alternatives have been proposed, for example Kahneman and Tversky's (1979) prospect theory: see also Pawitan and Lee (2024) for more discussion.

9 Concluding Remarks

Frank (1954) studied a variety of reasons for the acceptance of scientific hypotheses. Among scientists, it is taken for granted that a scientific hypothesis should be accepted if and only if it is true ($I(G) = 1$). Frank indicated that the 'simplicity (or beauty) of a hypothesis' and 'agreement with the common sense' are important for a hypothesis to become the consensus scientific theory.
In practice, being true means being in 'agreement with observations' that can be logically derived from the hypothesis; this agreement can be established by the consistent oracle estimation of the true status of the hypothesis $I(G)$ via confidence $C(G; t)$.

Russell (1912) illustrated the induction problem: 'Domestic animals expect food when they see the person who usually feeds them. All these rather crude expectations of uniformity are liable to be misleading. The man who has fed the chicken every day throughout its life at last wrings its neck instead, showing that more refined views as to the uniformity of nature would have been useful to the chicken.' We agree with Russell's claim that the personal belief of the dead chicken, $P(G) = 1$, is wrong because in reality $I(G) = 0$. However, the rest of the chickens who witness the incident $E$ will switch their inter-subjective confidence from $C(G) = 1$ to $C(G; E) = 0$. Before encountering the incident $E$, confidence $C(G) = 1$ is not wrong, but is simply a bad estimator of $I(G)$, which can be altered correctly to $C(G; E) = 0$.

We have seen that inductive logic can be based on the confidence. The Laplace-Bayes probability-based solution has a probability dilution of $P(G \mid T_n = n) = 0$ even when $G$ is true. This is caused by the prior assumption of $P(G) = 0$, equivalently $I(G) = 0$. With Jeffreys's (1939) resolution, $T_n = n$ corroborates the hypothesis $G$ positively by putting a point mass $1/2$ on $G$ but never accepts $G$, $P(G \mid T_n = n) < 1$ with a finite $n$, because his prior retains, with mass $1/2$ a priori, the uniform component under which $P(G) = 0$. Confidence is not a probability, hence it does not have a probability dilution. The confidence resolution allows an oracle acceptance of $C(G; T_n = n) = 1$ when $G$ is true. It is a consistent oracle estimator of $I(G)$.
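The contrast among the three resolutions can be made concrete with a small sketch. It is only an illustration under the Bernoulli model of Appendix A, using the closed forms stated in the paper ($0$ for Laplace, $(n+1)/(n+2)$ for Jeffreys when $T_n = n$, and $1$ or $0$ for confidence); the function names are hypothetical, and requiring at least one observation for the confidence is an assumption of this sketch.

```python
from fractions import Fraction


def laplace_posterior(xs):
    """P(G | data) under Laplace's uniform prior: zero for any finite sample."""
    return Fraction(0)


def jeffreys_posterior(xs):
    """P(G | data) under Jeffreys's prior (mass 1/2 on theta = 1, uniform otherwise)."""
    n, t = len(xs), sum(xs)
    if t < n:
        return Fraction(0)  # a single failure rules out theta = 1
    return Fraction(n + 1, n + 2)


def confidence(xs):
    """C(G; data): 1 while all observations agree with G, 0 after a conflict."""
    if not xs:
        raise ValueError("at least one observation is assumed in this sketch")
    return 1 if all(x == 1 for x in xs) else 0


if __name__ == "__main__":
    history = [1] * 5              # five consecutive sunrises
    after_conflict = history + [0]  # one conflicting observation
    for data in (history, after_conflict):
        print(laplace_posterior(data), jeffreys_posterior(data), confidence(data))
```

Only the confidence reaches 1 on a finite run of agreeing observations, and it drops to 0 on the first conflicting observation, mirroring the chickens' switch from $C(G) = 1$ to $C(G; E) = 0$.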
The confidence is neither a probability nor a likelihood, which allows switching from 1 to 0 by not obeying the Bayes rule. It also gives a meaningful epistemic confidence interpretation for an observed CI by maintaining the confidence feature. With the confidence, to accept a hypothesis from particular instances without an unverifiable prior, inductive reasoning does not require reviewing all the instances but only having a consensus scientific theory $P(T_n \mid \theta)$ on the generation of instances. Then, we infer $G$ based on agreement with observed data, predicted from $G$. It is legitimate to predict the uniformity until it fails to hold. The general relativity theory was accepted in 1919 by observing light bending as predicted by relativity theory. This approach allows a dynamic paradigm shift (Kuhn, 2012) by accepting a new general relativity theory and rejecting the former Newtonian theory. Such an oracle acceptance of a new relativity theory with complete confidence is rational (C1). This way of rational acceptance and falsification of hypotheses is theoretically consistent decision making (C2) and agrees with evidence from the data (C3).

Appendix A: Bayesian approach

Laplace (1814) used the Bernoulli model for the sunrise problem. Let $X = (X_1, \cdots, X_n)$ be independent and identically distributed Bernoulli random variables with the success probability $\theta$. Once we have observed data $x = (x_1, \cdots, x_n)$, we have the likelihood
$$L(\theta; x) = P(X = x \mid \theta) \propto P(T_n = t \mid \theta) \propto \theta^t (1 - \theta)^{n - t} = L(\theta; t),$$
where $T_n = \sum X_i$ is a sufficient statistic and $t = \sum x_i$. Laplace (1814) used a uniform prior $P_0(\theta) = 1$ on $\theta \in (0, 1)$.
To find the logical conditional probability of $\theta$ given $T_n = t$, the Bayes-Laplace rule can be used: the conditional probability density of $\theta$ given the data $T_n = t$ is called the posterior
$$f(\theta \mid t) = \frac{P_0(\theta) L(\theta; t)}{\int L(\theta; t) P_0(\theta)\, d\theta} = \frac{P_0(\theta) L(\theta; t)}{P(T_n = t)}, \tag{11}$$
where
$$P(T_n = t) = \int L(\theta; t) P_0(\theta)\, d\theta = \int \theta^t (1 - \theta)^{n - t}\, d\theta = \mathrm{Beta}(t + 1, n - t + 1),$$
and $\mathrm{Beta}(\cdot, \cdot)$ is the beta function. Let $E$ be the particular event that the sun rises tomorrow. Then,
$$P(T_n = n) = \mathrm{Beta}(n + 1, 1) = \frac{1}{n + 1},$$
to give
$$P(E \mid \text{the sun has risen } n \text{ consecutive days}) = P(X_{n+1} = 1 \mid T_n = n) = P(T_{n+1} = n + 1 \mid T_n = n) = \frac{n + 1}{n + 2} = \frac{2190001}{2190002} = 0.9999995$$
for $n = 2190000$. This shows that $P(E \mid T_n = n) \to 1$ as $n \to \infty$. One observation increases the probability of the particular hypothesis $E$ from $\frac{n}{n+1}$ to $\frac{n+1}{n+2}$, so that the increment of probability is
$$\frac{n + 1}{n + 2} - \frac{n}{n + 1} = \frac{1}{(n + 1)(n + 2)}.$$
Thus, the probability of this particular hypothesis eventually becomes one. However, this is not enough to ensure that the hypothesis $G$ that the sun rises forever holds (Senn, 2003). The probability that the sun rises on the next $m$ consecutive days, given the previous $n$ consecutive sunrises, is
$$P(X_{n+1} = 1, \ldots, X_{n+m} = 1 \mid T_n = n) = P(T_{n+m} = n + m \mid T_n = n) = \frac{n + 1}{n + m + 1}.$$
As long as $n$ is finite, the probability of hypothesis $G$ becomes zero because for all $n > 0$
$$P(G \mid T_n = n) = \lim_{m \to \infty} P(T_{n+m} = n + m \mid T_n = n) = 0.$$

Let us consider Jeffreys's prior, which places probability $1/2$ on $\theta = 1$ and puts a uniform prior on $(0, 1)$ with probability $1/2$. Then,
$$P(T_n = n) = \frac{1}{2}\left(1 + \frac{1}{n + 1}\right)$$
to give
$$P(E \mid T_n = n) = P(T_{n+1} = n + 1 \mid T_n = n) = \frac{1 + 1/(n + 2)}{1 + 1/(n + 1)} = \frac{(n + 1)(n + 3)}{(n + 2)^2},$$
$$P(T_{n+m} = n + m \mid T_n = n) = \frac{1 + 1/(n + m + 1)}{1 + 1/(n + 1)} = \frac{(n + 1)(n + m + 2)}{(n + 2)(n + m + 1)}.$$
Thus,
$$P(G \mid T_n = n) = \frac{n + 1}{n + 2} \to 1 \quad \text{as } n \to \infty.$$

Appendix B: Confidence approach

Let us consider the right-side P-value
$$P(T_n^* \geq t \mid \theta) = \sum_{y=t}^{n} P(T_n^* = y \mid \theta) = \sum_{y=t}^{n} \frac{n!}{y!(n - y)!} \theta^y (1 - \theta)^{n - y} = \frac{\int_0^\theta x^{t-1}(1 - x)^{n-t}\, dx}{\mathrm{Beta}(t, n - t + 1)}.$$
This leads to the $\mathrm{Beta}(t, n - t + 1)$ density as the confidence density
$$c(\theta; t) = \frac{\theta^{t-1}(1 - \theta)^{n-t}}{\mathrm{Beta}(t, n - t + 1)}.$$
Here, the implied prior
$$c_0(\theta; t) \propto c(\theta; t)/L(\theta; t) \propto \theta^{-1},$$
namely, the $\mathrm{Beta}(0, 1)$ distribution, is improper as $\int_0^1 \theta^{-1}\, d\theta = \infty$. Necessary computations for $\mathrm{Beta}(0, 1)$ can be obtained as the limit of a proper $\mathrm{Beta}(a, 1)$ distribution for $a > 0$. $\mathrm{Beta}(a, 1)$ leads to
$$P(T_n = n) = \int_0^1 P(T_n = n \mid \theta)\, c_0(\theta; n)\, d\theta = \frac{\Gamma(a + 1)}{\Gamma(a)} \int_0^1 \theta^{n + a - 1}\, d\theta = \frac{\Gamma(a + 1)}{(n + a)\Gamma(a)}$$
and
$$P(X_{n+1} = 1 \mid T_n = n) = \frac{P(T_{n+1} = n + 1)}{P(T_n = n)} = \frac{n + a}{n + a + 1},$$
$$P(T_{n+m} = n + m \mid T_n = n) = \frac{P(T_{n+m} = n + m)}{P(T_n = n)} = \frac{n + a}{n + m + a}.$$
Thus,
$$\lim_{a \downarrow 0} P(T_{n+m} = n + m \mid T_n = n) = \frac{n}{n + m}.$$
Given $n$, the $\mathrm{Beta}(0, 1)$ implied prior leads to
$$C(G; T_n = n) = \lim_{m \to \infty} P(T_{n+m} = n + m \mid T_n = n) = 0.$$
This confidence cannot overcome the degree of skepticism yet.

Now, we apply the confidence to the transformed data by defining $Y_i = 0$ if the sun rises on the $i$th day, where $P(Y_i = 1 \mid \theta^*) = \theta^* = 1 - \theta$ and $\theta$ is the long-run frequency of sunrises. Then, $Y_i = 1 - X_i$, and $\theta^* = 1$ is equivalent to $\theta = 0$. Let $T_n^* = \sum Y_i = n - T_n$. Then, the right-side P-value function is
$$P(T_n^* \geq n - t \mid \theta^*) = P(T_n \leq t \mid \theta^*) = 1 - P(T_n \geq t + 1 \mid \theta^*)$$
for $t = 0, 1, \cdots, n - 1$. For $t = n$, we have for any $\theta^* \in [0, 1]$
$$P(T_n \leq n \mid \theta^*) = 1,$$
which gives point mass 1 at $\theta^* = 0$, equivalently $\theta = 1$.
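The predictive probabilities derived so far, $(n+1)/(n+m+1)$ under Laplace's uniform prior and $(n+a)/(n+m+a)$ under $\mathrm{Beta}(a, 1)$, can be checked numerically. The sketch below is an illustration only: it assumes the standard conjugate result that, under a proper $\mathrm{Beta}(\alpha, \beta)$ prior, $P(T_{n+m} = n+m \mid T_n = n)$ equals a ratio of beta functions, and the names `log_beta` and `prob_next_m_sunrises` are hypothetical. The improper $\mathrm{Beta}(0, 1)$ case is approximated by a small positive $a$.

```python
from math import exp, lgamma


def log_beta(a: float, b: float) -> float:
    """Natural logarithm of the beta function B(a, b)."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def prob_next_m_sunrises(n: int, m: int, alpha: float, beta: float) -> float:
    """P(T_{n+m} = n+m | T_n = n) under a proper Beta(alpha, beta) prior on theta.

    Computed as B(n + m + alpha, beta) / B(n + alpha, beta) on the log scale.
    """
    return exp(log_beta(n + m + alpha, beta) - log_beta(n + alpha, beta))


if __name__ == "__main__":
    n = 10
    for m in (1, 10, 1000, 100000):
        uniform = prob_next_m_sunrises(n, m, 1.0, 1.0)       # Laplace: (n+1)/(n+m+1)
        near_beta01 = prob_next_m_sunrises(n, m, 1e-9, 1.0)  # close to n/(n+m)
        print(m, uniform, near_beta01)
```

Both columns shrink toward zero as $m$ grows, which is the probability dilution described above for priors with $\beta > 0$.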
The confidence distribution for $t = n$ leads to
$$P(T_n^* = 0) = P(T_n = n) = 1, \qquad P(T_{n+m}^* = 0 \mid T_n^* = 0) = P(T_{n+m} = n + m \mid T_n = n) = 1,$$
and
$$C(G; T_n = n) = \lim_{m \to \infty} P(T_{n+m} = n + m \mid T_n = n) = 1.$$
Thus, we can say that the sun will rise forever with complete confidence. Because
$$c_0(\theta; t) = c_0^*(\theta^*; n - t) \propto \frac{c^*(\theta^*; n - t)}{L(\theta^*; n - t)} \propto \frac{1}{1 - \theta},$$
we can obtain the same result from the implied prior $\mathrm{Beta}(1, 0) \propto (1 - \theta)^{-1}$. The computations for $\mathrm{Beta}(1, 0)$ can be obtained as the limit of $\mathrm{Beta}(1, a)$ for $a > 0$, which leads to
$$\begin{aligned}
P(T_n = n) &= \int_0^1 P(T_n = n \mid \theta)\, c_0(\theta; n)\, d\theta = \frac{\Gamma(a + 1)}{\Gamma(a)\Gamma(1)} \int_0^1 \theta^n (1 - \theta)^{a - 1}\, d\theta \\
&= \frac{\Gamma(a + 1)}{\Gamma(a)\Gamma(1)} \cdot \frac{\Gamma(n + 1)\Gamma(a)}{\Gamma(n + a + 1)} \int_0^1 \frac{\Gamma(n + a + 1)}{\Gamma(n + 1)\Gamma(a)} \theta^n (1 - \theta)^{a - 1}\, d\theta \\
&= \frac{\Gamma(a + 1)\Gamma(n + 1)}{\Gamma(n + a + 1)} \to 1 \quad \text{as } a \downarrow 0.
\end{aligned}$$
Thus, $C(G; T_n = n) = 1$.

With a prior $\mathrm{Beta}(\alpha, \beta)$ where $\alpha > 0$ and $\beta > 0$,
$$P(X_{n+1} = 1 \mid T_n = n) = \frac{\mathrm{Beta}(n + 1 + \alpha, \beta)}{\mathrm{Beta}(n + \alpha, \beta)} = \frac{n + \alpha}{n + \alpha + \beta}.$$
Furthermore,
$$\log P(T_{n+m} = n + m \mid T_n = n) = \log\left(\prod_{i=0}^{m-1} \frac{n + i + \alpha}{n + i + \alpha + \beta}\right) = \sum_{i=0}^{m-1} \log\left(1 - \frac{\beta}{n + i + \alpha + \beta}\right) \leq -\beta \sum_{i=0}^{m-1} \frac{1}{n + i + \alpha + \beta},$$
to give
$$0 \leq C(G; T_n = n) = \lim_{m \to \infty} P(T_{n+m} = n + m \mid T_n = n) \leq \exp(-\infty) = 0,$$
provided $\beta > 0$.

References

Barnard, G. A. (1987). R. A. Fisher - a true Bayesian? International Statistical Review, 55, 182-189.
Bayes, T. (1763). An Essay Towards Solving a Problem in the Doctrine of Chances. By the late Rev. Mr. Bayes, F. R. S. communicated by Mr. Price, in a Letter to John Canton, A. M. F. R. S. Philosophical Transactions of the Royal Society of London, 53, 370-418.
Bernardo, J. M. (1979). Reference Posterior Distributions for Bayesian Inference. Journal of the Royal Statistical Society Series B: Statistical Methodology, 41, 113-128.
Birnbaum, A. (1962). On the foundation of statistical inference. Journal of the American Statistical Association, 57, 269-326.
Broad, C. D. (1918). On the Relation Between Induction and Probability (Part I.). Mind, 27, 389-404.
Cox, D. R. (1958). Some problems connected with statistical inference. The Annals of Mathematical Statistics, 29, 357-372.
Dawid, A. P., Stone, M. and Zidek, J. V. (1973). Marginalization paradoxes in Bayesian and structural inference. Journal of the Royal Statistical Society Series B: Statistical Methodology, 35, 189-233.
De Finetti, B. (1972). Probability, Induction and Statistics. (London: J. Wiley)
Dempster, A. P. (1968). Upper and lower probabilities generated by a random closed interval. The Annals of Mathematical Statistics, 39, 957-966.
Denoeux, T. and Li, S. (2018). Frequency-calibrated belief functions: Review and new insights. International Journal of Approximate Reasoning, 92, 232-254.
Empiricus, S. (1933). Outlines of Pyrrhonism. (Translated by R. G. Bury. London: W. Heinemann)
Fisher, R. A. (1921). On the 'probable error' of a coefficient of correlation deduced from a small sample. Metron, 1, 1-32.
Fisher, R. A. (1930). Inverse Probability. Mathematical Proceedings of the Cambridge Philosophical Society, 26, 528-535.
Fisher, R. A. (1958). The Nature of Probability. Centennial Review of Arts and Science, 2, 261-274.
Frank, P. G. (1954). The variety of reasons for the acceptance of scientific theories. The Scientific Monthly, 79, 139-145.
Fraser, D. A. S. and McDunnough, P. (1984). Further remarks on asymptotic normality of likelihood and conditional analyses. Canadian Journal of Statistics, 12, 183-190.
Hartmann, S. and Sprenger, J. (2010). Bayesian Epistemology. (In D. Pritchard and S. Bernecker (Eds.), The Routledge Companion to Epistemology. London: Routledge)
Hume, D. (1748). An Enquiry Concerning Human Understanding, P. Millican (Ed.). (Oxford: Oxford University Press)
Jaynes, E. T. (2003). Probability Theory: The Logic of Science, G. L. Bretthorst (Ed.). (Cambridge: Cambridge University Press)
Jeffreys, H. (1939). Theory of Probability. (New York: Oxford University Press)
Kahneman, D. and Tversky, A. (1979). Prospect theory: An analysis of decision under risk. Econometrica, 47, 263-292.
Kaplan, M. (1996). Decision Theory as Philosophy. (Cambridge: Cambridge University Press)
Kolmogorov, A. N. (1933). Foundations of Probability. (Translated by N. Morrison. New York: Chelsea Publishing Company)
Kuhn, T. S. (2012). The Structure of Scientific Revolutions, 4th ed. with I. Hacking (intro). (University of Chicago Press)
Laplace, P. S. (1814). A Philosophical Essay on Probabilities. (Translated by F. W. Truscott and F. L. Emory. New York: Dover)
Lavine, M. and Bjørnstad, J. F. (2022). Comments on Confidence as Likelihood by Pawitan and Lee in Statistical Science, November 2021. Statistical Science, 37, 625-627.
Lee, H. and Lee, Y. (2023). Point mass in the confidence distribution: Is it a drawback or an advantage? arXiv preprint arXiv:2310.09960.
Lee, H. and Lee, Y. (2024). On the Statistical Foundations of H-likelihood for Unobserved Random Variables. arXiv preprint arXiv:2310.09955.
Lee, Y. and Bjørnstad, J. F. (2013). Extended Likelihood Approach to Large-scale Multiple Testing. Journal of the Royal Statistical Society Series B: Statistical Methodology, 75, 553-575.
Lee, Y. and Nelder, J. A. (1996). Hierarchical Generalized Linear Models. Journal of the Royal Statistical Society Series B: Statistical Methodology, 58, 619-678.
Lee, Y., Nelder, J. A. and Pawitan, Y. (2017). Generalized Linear Models with Random Effects: Unified Analysis via H-likelihood, 2nd ed. (Boca Raton: CRC Press)
Lewis, D. (1980). A Subjectivist Guide to Objective Chance. (In W. L. Harper, R. Stalnaker and G. Pearce (Eds.), Ifs. Berkeley: University of California Press)
Lindley, D. V. (1958). Fiducial distributions and Bayes' theorem. Journal of the Royal Statistical Society Series B: Statistical Methodology, 20, 102-107.
Meng, X. L. (2009). Decoding the h-likelihood. Statistical Science, 24, 280-293.
Neyman, J. (1937). Outline of a theory of statistical estimation based on the classical theory of probability. Philosophical Transactions of the Royal Society of London. Series A, Mathematical and Physical Sciences, 236, 333-380.
Neyman, J. (1956). Note on an Article by Sir Ronald Fisher. Journal of the Royal Statistical Society Series B: Statistical Methodology, 18, 288-294.
Neyman, J. and Pearson, E. S. (1933). On the problem of the most efficient tests of statistical hypotheses. Philosophical Transactions of the Royal Society of London. Series A, Containing Papers of a Mathematical or Physical Character, 231, 289-337.
Olsson, E. J. (2018). Bayesian Epistemology. (In S. O. Hansson and V. F. Hendricks (Eds.), Introduction to Formal Philosophy)
Paulos, J. A. (2011). The Mathematics of Changing Your Mind. New York Times, 5 August 2011.
Pawitan, Y., Lee, H. and Lee, Y. (2023). Epistemic confidence in the observed confidence interval. Scandinavian Journal of Statistics, 50, 1859-1883.
Pawitan, Y. and Lee, Y. (2017). Wallet game: Probability, likelihood, and extended likelihood. The American Statistician, 71, 120-122.
Pawitan, Y. and Lee, Y. (2021). Confidence as Likelihood. Statistical Science, 36, 509-517.
Pawitan, Y. and Lee, Y. (2022). Rejoinder: Confidence as Likelihood. Statistical Science, 37, 628-629.
Pawitan, Y. and Lee, Y. (2024). Philosophies, Puzzles and Paradoxes: A Statistician's Search for Truth. (Boca Raton: CRC Press)
Popper, K. R. (1959). New Appendices. (In The Logic of Scientific Discovery. New York: Routledge)
Popper, K. R. (1972). Objective Knowledge. (Oxford: Oxford University Press)
Ramsey, F. P. (1926). Truth and Probability. (In R. B. Braithwaite (Ed.), The Foundations of Mathematics and other Logical Essays. London: Routledge and Kegan Paul)
Royall, R. M. (1997). Statistical Evidence: A Likelihood Paradigm, 2nd ed. (London: Chapman & Hall)
Russell, B. (1912). The Problems of Philosophy. (New York: Henry Holt and Co.)
Savage, L. J. (1954). The Foundations of Statistics. (New York: John Wiley and Sons)
Savage, L. J. (1961). The foundations of statistics reconsidered. In Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, Volume 1: Contributions to the Theory of Statistics (Vol. 4, pp. 575-587). (University of California Press)
Schweder, T. and Hjort, N. L. (2016). Confidence, Likelihood, Probability. (Cambridge University Press)
Senn, S. (2003). Dicing with Death: Chance, Risk and Health. (Cambridge University Press)
Senn, S. (2009). Comment on Harold Jeffreys's Theory of Probability Revisited. Statistical Science, 24, 185-186.
Stein, C. (1959). An example of wide discrepancy between fiducial and confidence intervals. The Annals of Mathematical Statistics, 30, 877-880.
Welch, B. L. and Peers, H. W. (1963). On formulae for confidence points based on integrals of weighted likelihoods. Journal of the Royal Statistical Society Series B: Statistical Methodology, 25, 318-329.
Wilkinson, G. N. (1977). On resolving the controversy in statistical inference. Journal of the Royal Statistical Society Series B: Statistical Methodology, 39, 119-144.
Von Mises, R. (1928). Probability, Statistics and Truth, 2nd English ed. (Translated by H. Geiringer. London: Allen and Unwin)
Von Neumann, J. and Morgenstern, O. (1947). Theory of Games and Economic Behavior, 2nd ed. (Princeton University Press)
Wagenmakers, E. J., Gronau, Q. F., Dablander, F. and Etz, A. (2022). The support interval. Erkenntnis, 87, 589-601.
