On the Sample Complexity of One Hidden Layer Networks with Equivariance, Locality and Weight Sharing

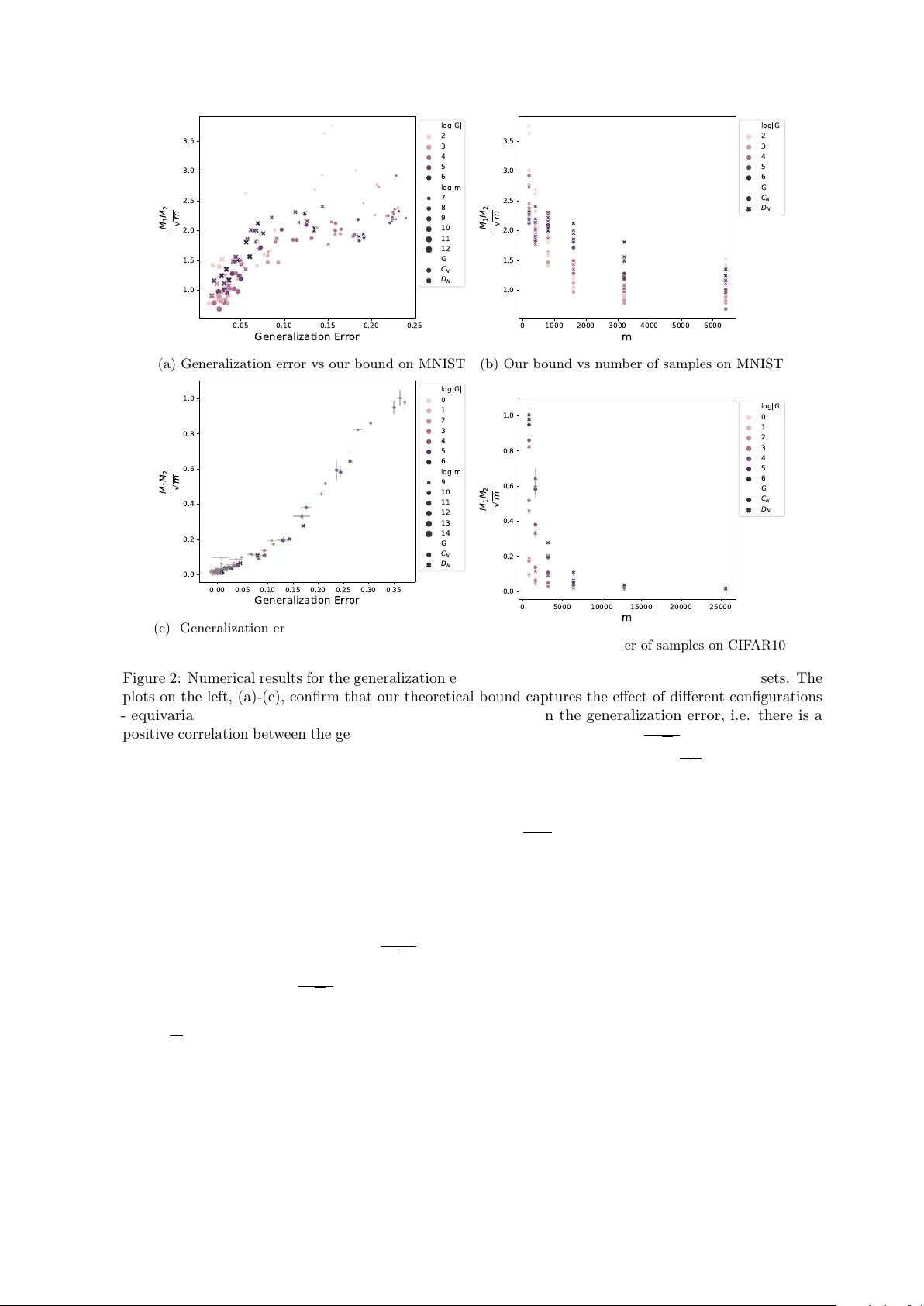

Weight sharing, equivariance, and local filters, as in convolutional neural networks, are believed to contribute to the sample efficiency of neural networks. However, it is unclear how each of these design choices contributes to the generalizat…

Authors: Arash Behboodi, Gabriele Cesa