A Scalable Variational Bayes Approach to Fit High-dimensional Spatial Generalized Linear Mixed Models

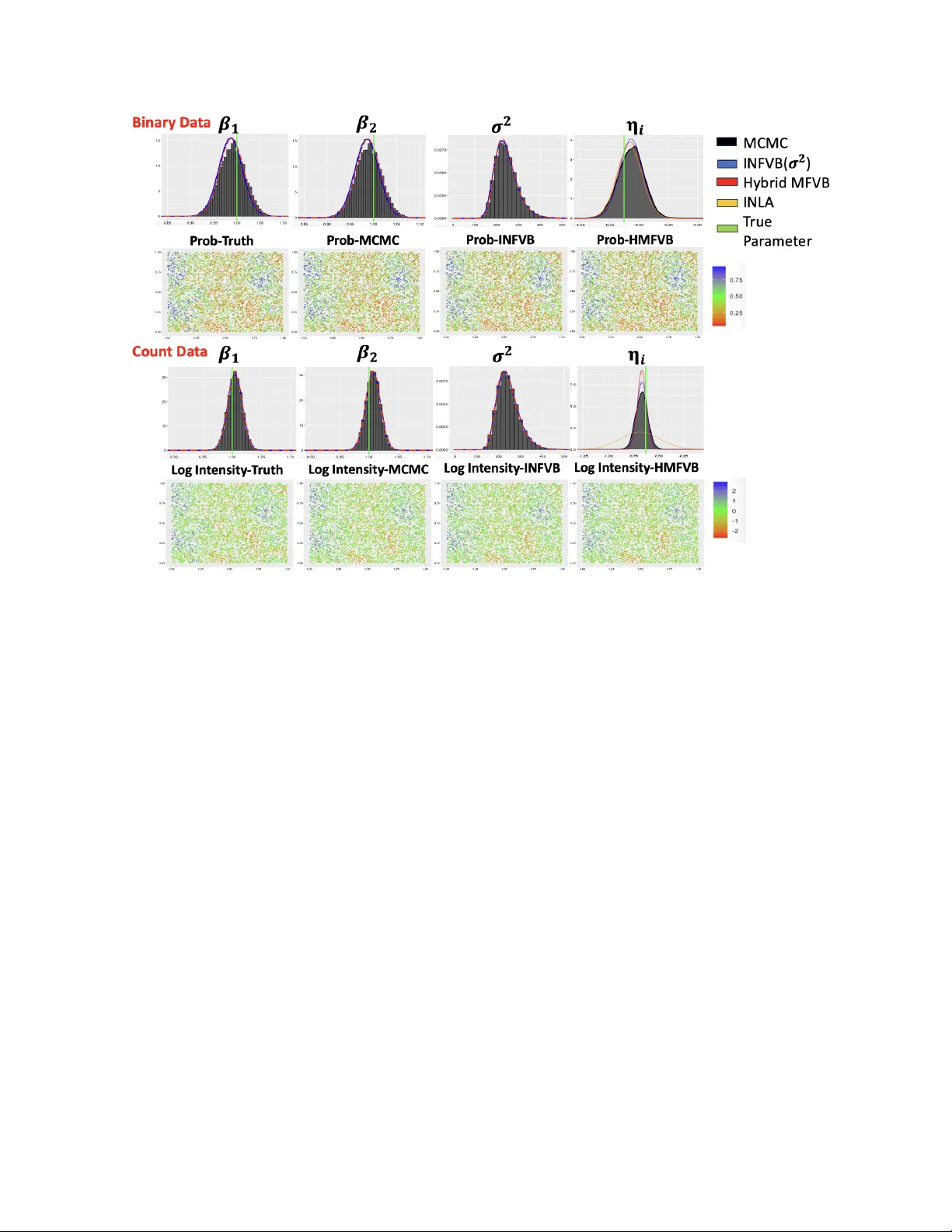

Gaussian and discrete non-Gaussian spatial datasets are common in fields such as public health, ecology, geosciences, and social sciences. Bayesian spatial generalized linear mixed models (SGLMMs) are a flexible class of models for analyzing such da…

Authors: Jin Hyung Lee, Ben Seiyon Lee