Isotonic propensity score matching

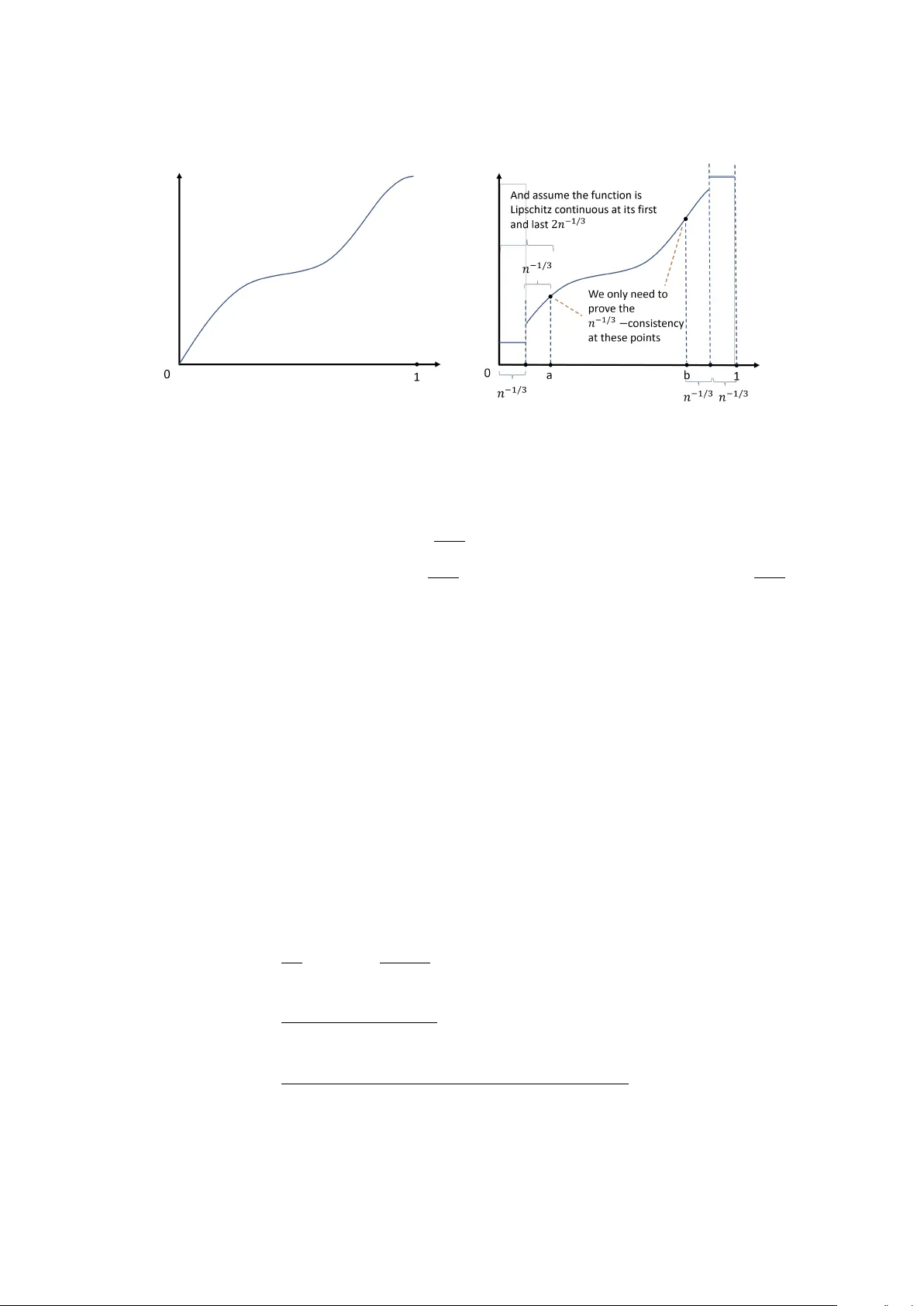

We propose a one-to-many matching estimator of the average treatment effect based on propensity scores estimated by isotonic regression. This approach is predicated on the assumption of monotonicity in the propensity score function, a condition that …
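The abstract does not give the estimator in full, so the following is only a minimal sketch under stated assumptions, not the authors' procedure. It assumes a single scalar covariate `x` and a propensity score that is nondecreasing in `x`, fitted by isotonic regression using the pool-adjacent-violators algorithm (PAVA). The matching rule pairs each unit with all units in the opposite treatment group whose fitted score is nearest to its own, keeping ties. That rule is an illustrative choice. The function names `isotonic_fit` and `matching_ate` are hypothetical. Because the isotonic fit is a step function, many units share exactly the same fitted score, so keeping ties naturally matches one unit to several.

```python
from typing import List, Sequence


def isotonic_fit(x: Sequence[float], d: Sequence[int]) -> List[float]:
    """Nondecreasing isotonic regression of a binary treatment d on a scalar
    covariate x via PAVA (assumed setup). Returns fitted propensity scores in
    the original input order."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    # Step 1: collapse tied x values into one weighted group, because tied
    # covariate values must receive the same fitted value.
    groups: List[list] = []  # each entry: [sum of d, count, original indices]
    for i in order:
        if groups and x[groups[-1][2][0]] == x[i]:
            groups[-1][0] += d[i]
            groups[-1][1] += 1
            groups[-1][2].append(i)
        else:
            groups.append([d[i], 1, [i]])
    # Step 2: pool adjacent blocks whose means violate monotonicity.
    blocks: List[list] = []
    for s, n, idx in groups:
        blocks.append([s, n, list(idx)])
        # mean(prev) > mean(last), compared by cross-multiplication.
        while len(blocks) > 1 and blocks[-2][0] * blocks[-1][1] > blocks[-1][0] * blocks[-2][1]:
            s2, n2, idx2 = blocks.pop()
            blocks[-1][0] += s2
            blocks[-1][1] += n2
            blocks[-1][2].extend(idx2)
    fitted = [0.0] * len(x)
    for s, n, idx in blocks:
        for i in idx:
            fitted[i] = s / n  # every unit in a block gets the block mean
    return fitted


def matching_ate(y: Sequence[float], d: Sequence[int], p: Sequence[float]) -> float:
    """Hypothetical one-to-many matching estimate of the average treatment
    effect: each unit's missing potential outcome is imputed by the mean
    outcome of ALL opposite-group units at the nearest fitted score."""
    n = len(y)
    if not any(d) or all(d):
        raise ValueError("need at least one treated and one control unit")
    total = 0.0
    for i in range(n):
        opposite = [j for j in range(n) if d[j] != d[i]]
        best = min(abs(p[j] - p[i]) for j in opposite)
        # Keep every tie, so one unit may be matched to several units.
        matches = [j for j in opposite if abs(p[j] - p[i]) == best]
        imputed = sum(y[j] for j in matches) / len(matches)
        # Treated: Y_i(1) - Yhat_i(0); control: Yhat_i(1) - Y_i(0).
        total += (y[i] - imputed) if d[i] == 1 else (imputed - y[i])
    return total / n


if __name__ == "__main__":
    x = [1, 2, 3, 4, 5, 6]
    d = [0, 1, 0, 0, 1, 1]
    y = [1.0, 4.0, 2.0, 3.0, 6.0, 8.0]
    p = isotonic_fit(x, d)  # units 2-4 are pooled into one block with score 1/3
    print(matching_ate(y, d, p))  # 2.75
```

In this toy example the treated unit with score 1/3 is matched to both controls in its block, which is where the one-to-many structure comes from. A real application would also need to handle multivariate covariates and inference, which this sketch does not attempt.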

Authors: Mengshan Xu, Taisuke Otsu