Spreadsheet Debugging

Spreadsheet programs, artifacts developed by non-programmers, are used for a variety of important tasks and decisions. Yet a significant proportion of them have severe quality problems. To address this issue, our previous work presented an interval-b…

Authors: Yirsaw Ayalew, Rol, Mittermeir

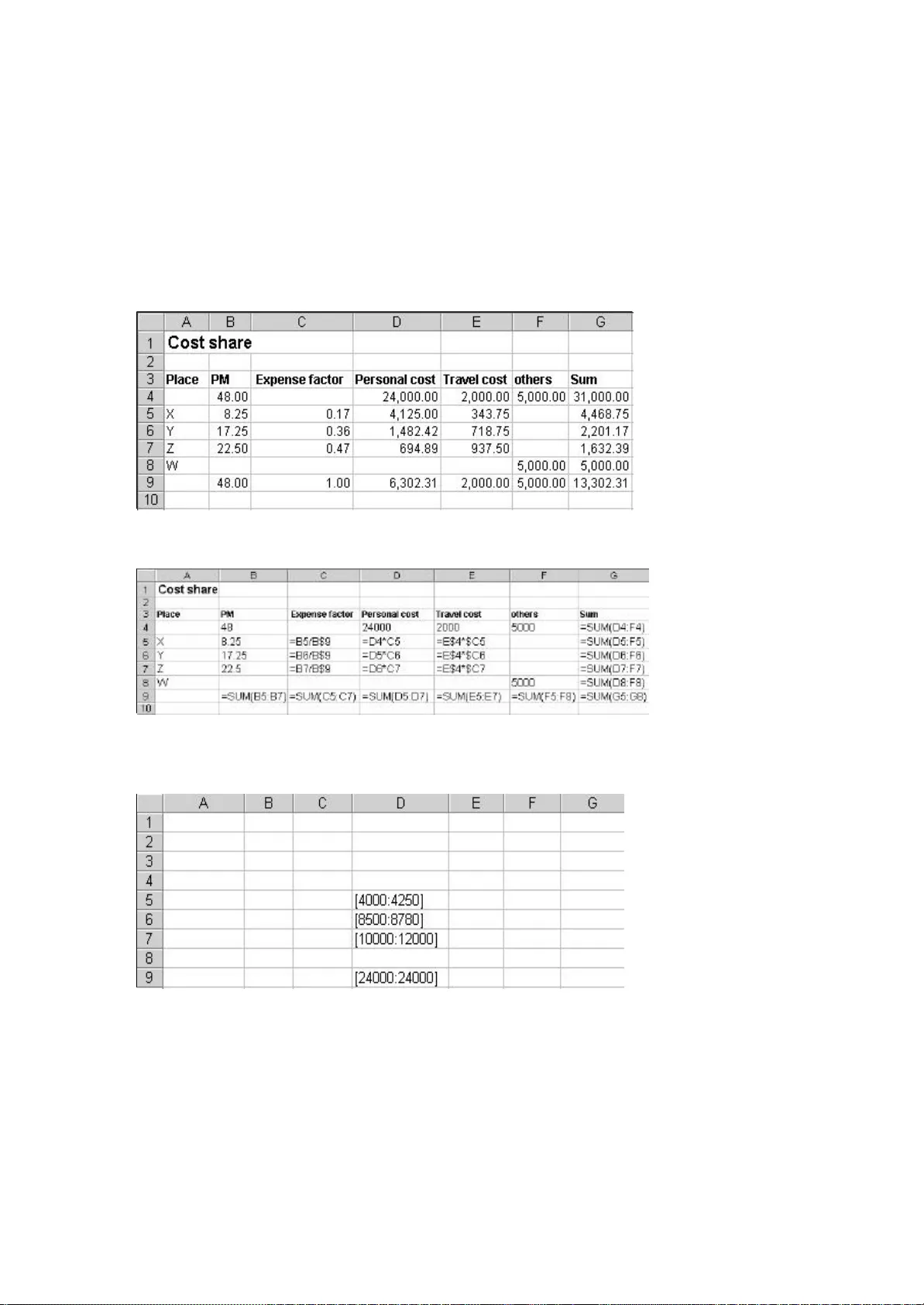

Spreadsheet Debugging Yirsaw Ayalew Department of Computer Science Addis Ababa University P.O.Box 1176 Addis Ababa, Ethiopia yirsawa@math.aau.edu.et Roland Mittermeir Institut of Informatics - Systems Univ ersity of Klagenfurt Universitätsstr. 65 - 67, A - 9020, Klagenfurt, Austria roland@isys.uni - klu.ac.at ABSTRACT Spreadsheet programs, artifacts developed by non - programmers, are used for a variety of impor tant tasks and decisions. Yet a significant proportion of them have severe quality problems. To address this issue, our previous work presented an interval - based testing methodology for spreadsheets. Interval - based testing rests on the observation that spr eadsheets are mainly used for numerical computations. It also incorporates ideas from symbolic testing and interval analysis. This paper addresses the issue of efficiently debugging spreadsheets. Based on the interval - based testing methodology, this paper presents a technique for tracing faults in spreadsheet programs. The fault tracing technique proposed uses the dataflow infor - mation and cell marks to identify the most influential faulty cell(s) for a given formula cell containing a propagated fault. Ke ywords: spreadsheet debugging, fault tracing, spreadsheets, end - user programming 1. INTRODUCTION Spreadsheet systems are widely used and highly popular end - user systems. They are used for a variety of important tasks such as mathematical modeling, scien tific computation, tabular and graphical data presentation, data analysis and decision - making. Many business applications are based on the results of spreadsheet computations and consequently important decisions are made based on spreadsheet results. As we cannot assume professionalism of spreadsheet developers in writing and testing their spreadsheet programs, we cannot assume professionalism in debugging. Therefore, this paper presents an approach to help users in identifying cells containing actually f aulty entries. The unifying property of spreadsheet applications is that they involve numeric computations. Numeric computations constitute the primary turf of spreadsheets, in spite of the fact that the spreadsheet model finds derived applications in othe r areas too. These are as diverse as information visualization [7], concurrent computation [22, 23], or user interface specifications [10], to name just a few. There is also a trend towards using the spreadsheet model as a general model for end - user progra mming [14]. Despite their popularity due to their ease of use and suitability for numerical computations, a significant proportion of spreadsheet programs have severe quality problems. In recent years, there has been an increased awareness of the potenti al impact of faulty spreadsheets on business and decision - making. A number of experimental studies and field audits [5, 9, 15, 16, 17, 18, 19, 20] have shown the serious impact spreadsheet errors have on business and on decisions made on the basis of resul ts computed by spreadsheets. In contrast to the professional use of the results computed by spreadsheets, the development of these results is less professional. The developers of spreadsheets are mainly end users who are not expected to follow a formal p rocess of software develop - ment although the sheets they produce are of the nature of regular programs and quite often reach the complexity of typical data processing application software 1 . Nevertheless, spreadsheet developers rather uncritically rely on t he initial correctness of their programs. To address this problem, our previous work [3, 4, 13, 24] presented an interval - based testing methodology for spreadsheets. The interval - based testing methodology was pro - posed based on the premise that spreadshe et developers are not software professionals. The approach takes into account inherent characteristics of spreadsheets as well as the conceptual models of spreadsheet programmers. It also incorporates ideas from symbolic testing and interval analysis. Inte rval - based testing focuses on the functionality of spreadsheet formulas instead of the internal structure of a spreadsheet program (i.e., it is not based on a traditional code coverage criterion). With interval testing, cells containing suspicious values are identified. But as with conventional programming, the spot where a wrong value figures is not necessarily the location of the fault in the program. This might be some step in the algorithm that has been executed on the way to the respective output sta tement. Since with spreadsheets, basically all intermediate results of computations are visible on the user interface (exceptions are only computations within a cell and computations in hidden cells), the issue of directing spreadsheet users from the cell containing a wrong result to the cell containing the wrong formula becomes specifically important. Based on the interval - based testing methodology, this paper presents a technique for tracing faults in a spreadsheet program. Cells whose value is outside a computed interval (see section 2) are referred to as cells containing a symptom of fault. The issue is, given a formula cell with a symptom of fault which depends directly and/or indirectly on other cells which have symptoms of faults, how to identify th e most influential faulty cell. If we can identify the most influential faulty cell, then correcting that cell may correct many of the cells showing incorrect values, which are dependent on it thereby simplifying the debugging process. The fault tracing ap proach uses information from the interval - based testing system about the verification status of the cells, dataflow infor mation, and priority values of cells. 1 For this reason, we refer to the system of interlinked data and formula cells of a spreadsheet also as “spreadsheet program” This paper is organized as follows: Section 2 briefly describes the interval - based testing met hodology. Section 3 discusses the general problem of fault tracing in relation to conventional software debugging techniques and spreadsheet debugging. Section 4 discusses the fault tracing strategy supported by the tool developed to support interval - based spreadsheet analysis. While tracing for faults, there is a need for minimizing the search region. This requires the identification of those faulty cells, which have a higher likelihood of containing the most influential faulty cell(s). This is presented i n Section 4.1. An example and a fault tracing algorithm are described in this same section. Finally, the main points of the fault tracing approach are summarized in Section 5. 2. INTERVAL - BASED TESTING Generally, the main task in testing a program is to be able to detect the existence of symptoms of faults in the program. By running the program with test cases and comparing the result with the expected outcome described in the specification or generated by a test oracle, the existence of a fault can be d etected. However, in spread - sheet programming most spreadsheet programmers do not have the expertise to design and execute effective test cases. In the absence of a specification and automated test oracle, the user plays the role of a test oracle and provi des the expected outcome during testing. The root of spreadsheet programming lies in the definition of formulas. In spreadsheets, users want to make sure that their spreadsheet formulas are correct with respect to the actual data that they need for their applications instead of arbitrary data chosen for testing purposes (this does not include those who develop templates). Hence, the reasonableness of the computed value is used to judge the validity of the formula. Users usually have a gut feeling of the r ange of reasonable values for each given cell. Interval - based testing is proposed to check the existence of symptoms of faults in formu - las, which are defined for numerical computations. Spreadsheets are mainly used for numerical computations by end user s. Hence, we require from the user a vision of the ranges of possible values of formula computations. Interval - based testing requires the user to specify for a given spreadsheet on a mirror image of the sheet intervals expected for the desired input and formula cells respectively. For numeric input cells, the user specifies the range of reasonable values in the form of intervals, which serve as input domains. For formula cells, the user specifies the expected outcome of the formula again in the form of in tervals. The prototype system developed in the context of [3], an add on to spreadsheet packages like MS - Excel, allows also for selective specification of such expected intervals. It mirrors the user’s sheet by means of an interval - value sheet containing b oundaries within which the spreadsheet user expects the result of a formula to be. Further, an interval - formula sheet where such boundaries are derived based on the formulas of the users actual sheet by means of interval arithmetic applied to the intervals given for input cells is established by the system. The attached intervals will be stored as strings (since the spreadsheet system used with the prototype neither supports interval data types nor allows user defined data types) in a behind - the - scene sprea dsheet using the same cell coordinates as the cells in the ordinary spreadsheet. Figure 1: Architecture of the prototype supporting the interval - based testing methodology In figure 1, Ordinary spreadsheet is the usual spreadsheet defined by the user i n which computation is based on discrete values. Expected spreadsheet is a behind - the scene spreadsheet which contains expected intervals for input and formula cells as given by the user. For a formula defined at the ordinary spreadsheet, the formula is ev aluated based on the interval values stored in the expected spreadsheet and the resulting interval will be stored as an interval string in the respective cell in the bounding spreadsheet. In some cases, a user may not attach input and expected intervals fo r some input and formula cells. In such situations, the discrete values of the cells from the ordinary spreadsheet are used during interval computation as intervals of width zero. The bounding spreadsheet , which is a behind - the - scene spreadsheet, contains computed bounding interval values. A bounding interval is an interval computed by the formulas in the users regular spread - sheet with the operators in formula cells replaced by the respective interval operators. It is used to check the reasonableness of th e expected interval specified by the user. Once the necessary values are available from the three sources, namely, spreadsheet compu - tation, user expectation, and interval computation, the comparator may determine the existence of symptoms of faults. Whene ver there is a discrepancy between spreadsheet com pu tation, user expectation and interval computation, the comparator marks those cells with symptoms of faults and those which seem to be correct in different colors. Finally, among those cells with sympt oms of faults which contribute to a faulty cell, the most influential cell is identified using the fault tracer. A discussion of the fault tracer is the main subject of this paper. 3. FAULT TRACING BACKGROUND Once symptoms of faults are detected, the nex t task is to find the location of the actual faults. A testing system cannot exactly indicate the location of faults; it rather provides a hint or a symptom of a fault. However, a testing system can facilitate the search for the location of faults by provi ding testing information about the possible paths that lead to the likely fault location. A symptom of fault is a signal indicating the existence of a possible fault. A symptom of fault is generated whenever there is a discrepancy between the expected beha vior of a program and its actual behavior. Fault tracing is the process of identifying the location of faults in a program. In the following sections, we discuss the process of debugging conventional software and spreadsheet debugging. 3.1 Debugging conve ntional software Generally, fault tracing in conventional software debugging involves program slicing techniques to minimize the search for the potential faults [1, 2, 8, 11]. The first important information needed in fault tracing is to compute a stati c backward slice. It contains all variables that may affect the variable at which a symptom of fault is detected at a given statement. Since a symptom of fault is generated based on a particular test case, those variables, which are directly and/or indirec tly involved in the current computation will contain the statement producing the faulty variable provided that the fault is not due to missing statements. This requires the computation of dynamic backward slices. In order to reach the potential faulty vari ables, further reduction of the dynamic backward slice should be made using the technique of dicing. Dicing is carried out by removing the sub - slice corresponding to correct variables. However, the use of dicing imposes some preconditions to be satisfied in order for the resulting dice to contain the fault [12]. For example, dicing assumes that only one faulty variable exists in the dynamic backward slice. In addition, it assumes that if a variable is faulty then all variables in the dynamic forward slice of that variable are faulty. This misses situations where faults compensate within the slice. Hence, the general process of fault tracing in conventional software can be described as follows. Static slice → Dynamic slice → Dice → Potential location of f ault 3.2 Spreadsheet debugging The problem in spreadsheet debugging is: given a formula cell with a symptom of fault but not having a fault in the formula, how to identify the faulty cell among those cells on which the given cell directly and/or indirect ly depends. Since this analysis shows only cells with deviations between discrete and interval computations, we can only cautiously speak of symptoms of faults. The deviating cells might well result from propagations. In some cases, a symptom of fault can be generated even though there is no fault in the formula. This happens when the user expectation is specified incorrectly and due to propagation of faulty values. As the conceptual view of a spreadsheet program is based on data dependency relations, spr eadsheets can be considered as dataflow driven. On the other hand the conceptual view of a procedural program is control flow - driven. In spite of this difference, a similar procedure to conventional software debugging can be used for fault tracing in sprea dsheets. Therefore, the notion of a slice is not directly applicable. We have rather a set of cells linked just by dataflow connection. Nevertheless, for the sake of comparison, we refer to this set as slice. However, in our approach we do not impose the r equirement that the dynamic backward slice contains only one faulty cell. There can be several cells in the dynamic backward slice of a faulty cell which are marked as faulty. Cell marking is performed based on the result of the comparison made by the comp arator as described in section 2. The fault tracing procedure uses the backward slice and the cell marks recorded by the interval - based testing system. The fault localization technique, proposed by DeMillo et al. [8], was based on the analysis of the ste ps used by programmers experienced in debugging. Following a similar procedure, the spreadsheet fault tracing process contains the following steps: 1. Determine the cells (directly and/or indirectly) involved in the computation of an incorrect formula (i.e., look backward) 2. Select suspicious cells 3. Form hypotheses about suspicious cells Step 1 requires the computation of ”backward slices” with respect to a faulty cell for which we want to locate the source of the fault. Step 2 requires the identification of th ose cells, which have a likelihood of propagating faults through the data flow. These cells are marked by the comparator during the verification process. The comparator determines the existence of a symptom of a fault for a formula cell by comparing the us ual spreadsheet computation, user expectation, and interval computation (see figure 1). Step 3 requires reasoning about the most influential cell. In other words, this step involves the com pu - tation of the priority values for the suspicious cells and the identification of the one, which has the highest likelihood of contributing to the faulty cells in the dynamic backward slice. If the fault is local, i.e., either the formula or the expected interval of the given cell is specified incorrectly, then the f ault can be fixed by examining the faulty cell itself. If the fault is not local, we are looking for the most influential cell. The assumption in this approach is that if the most influential faulty cell is found, then correcting this cell may correct many of the dependent faulty cells in the data dependency graph, thereby reducing the remaining task of the spreadsheet debugging process. To address this problem, we propose an approach using priority setting based on the number of incorrect precedents and de pendents. To do so, we rely on the dynamic backward slice of a given faulty cell as we are dealing with propagated faults. A similar approach was proposed by Reichwein et al. [21] for debugging Form/3 programs. In this approach, a user marks cells as cor rect and incorrect and based on the all - uses dataflow test adequacy criterion, the degree of testedness of formulas is compu - ted. Cells are given different colors based on their degree of testedness. Fault tracing is performed based on the degree of tested ness of cells and by computing the fault likelihood of cells. Fault likelihood is computed based on the number of correct and incorrect dependents of a cell. During the fault localization process, for the cell under consideration, the cells in the dynamic backward slice will be highlighted in different colors based on their degree of testedness. The further process of fault localization is carried out by performing testing using additional test cases. This approach requires the user to provide different tes t cases to localize the faulty cell. The work proposed by Chen and Chan [6] presented a model for spreadsheet debugging. This work described the cognitive aspects of spreadsheet debugging and provided the essential episodes, which could be applied to the d ebugging process in spreadsheets. Our approach does not require the user to provide different test cases since we cannot assume a sufficient testing discipline. As users are working on actual data that they need for their applications, it is likely that they need to know the correctness of their spreadsheets based on the actual data instead of arbitrary data chosen just for testing purposes. The fault tracing approach presented here requires the computation of priority values based on the verification sta tus of precedent cells. If this is not sufficiently discriminating the priority values are also based on dependent cells. 4. FAULT TRACING STRATEGY This section describes how the fault tracing approach works and presents an example to illustrate the app roach. Finally, a fault tracing algorithm is provided. The fault tracing strategy proposed uses information from different sources to locate the most influential faulty cell. The first information that we need is the dataflow infor - mation. This informati on is already available since it is used by the spreadsheet system during the evaluation of formulas. For example, in Microsoft Excel, this information is used by its built - in auditing tool to show the backward and forward slices of cells of interest one l evel at a time. While traversing the backward slice, we need a mechanism of selecting the cells, which have a likelihood of being the most influential faulty cells. This infor ma tion can be obtained from the testing system as cells are marked with differe nt colors depending on the existence of symptoms of faults. Hence, cell marks are used to guide the search process. During traversing the backward slice, the verification status of cells is used to guide the search to the path where the most influential fa ulty cell may be located 4.1 Search for the most influential faulty cell During the verification process, cells in a spreadsheet are categorized into three groups. These are cells with symptoms of faults, cells without symptoms of faults, and cells , whic h are unchecked. Those cells with symptoms of faults are of interest during the process of identifying the most influential faulty cell(s). The search for the most influential faulty cell(s) of a given cell is based on the number of faulty precedents and t he contribution of the faulty precedents to incorrect dependents. A cell, which has many faulty precedents, is more likely to contain the most influential cell(s) than the one with few faulty precedents. In addition, a faulty cell, which has more incorrect dependents, is more influential than the one with few incorrect dependents. Furthermore, those faulty cells, which are at a higher level of the data dependency graph (i.e., near the input cells) are more influential than those at the lower level of the da ta dependency graph. Therefore, correcting faulty cells at the highest level of the data dependency graph may correct cells showing incorrect values (without being actually faulty), which are dependent on the corrected cell thereby reducing the effort of t he debugging process. 4.2 An example Let us consider the cost calculation spreadsheet shown in figure 2(a). Figure 2(e) depicts the spreadsheet with those cells, which have symptoms of faults highlighted. Those cells, which have symptoms of faults, are s haded red by the interval - based testing system (dark in this paper) and those without symptoms of faults are shaded yellow (light grey in this paper). For a demonstration purpose, intervals were attached only for cells in column D and hence verification is done only for this column. Suppose the user wants to trace the most influential faulty cell for the final result of the computation in cell D9. Actually, the first thing to examine is the faulty cell itself. If the formula and the expected interval atta ched are correct, then the fault is due to referencing a faulty cell. In such cases, we need to trace the source of the fault. In the example given, the task in column D was to compute the personal cost based on the fixed amount given in cell D4 for each location. However, the user made a referencing error. Cell D4 should have been used as an absolute reference in the formula of D5 as it is copied downward. An error of referencing will have an effect on the copies and not on the source. As a result, the c ells D6, D7, and D9 are marked as faulty. The direct precedents of cell D9 are cells D5, D6, and D7 (see figure 3). The direct precedents fall into the two categories: correct (those without symptoms of faults) and faulty. The faulty category, which cont ains cells D6 and D7, is the candidate for further investigation. The next task is to identify which one of D6 and D7 contains the most influential faulty cell. Since they have equal verification status, we check the number of their faulty direct precedent s and dependents. D6 has no faulty precedents but D7 has one faulty precedent, D6. Therefore, the path to D7 should be followed to locate the most influential faulty cell. Next, we check the faulty precedents of D7. It has only one precedent cell, D6, whic h is marked as faulty. Among the precedents of D6, there is no faulty precedent. Therefore, cell D6 is considered to be the most influential faulty cell and as a result, whenever the user requests for the most influential faulty cell for cell D9, the sys tem highlights the cell D6. However, if two or more faulty cells have equal number of faulty direct precedents, then we need to consider also the number of their direct dependents. While using the number of faulty direct dependents, there are two possibil ities to consider. • the number of faulty direct dependents combined with the number of faulty direct precedents • the number of faulty direct dependents when the number of faulty direct precedents are equal (a) Cost computation (b) Formula view (c) Expected spreadsheet (d) Bounding spreadsheet (e) A spreadsheet with symptom of faults Figure 2: Interval - based testing in action Figure 3: Data dependency graph for cell D9 If we use the first choice, we may find the most influential cell without going far in the data dependency graph. This influential cell may not be the most influential for the faulty cell under consideration but it contributes to many other faulty dependent cells. Therefore, correcting this cell may also correct many other cells, whi ch are not in the dynamic backward slice of the cell under consideration. This option identifies the most influential cell in terms of the number of incorrect dependents of a cell. If we use the second choice, then we can reach to the most influential cell with respect to the cell under consideration, which is at a higher level in the data dependency graph. Therefore, correcting this cell may correct many cells in the dynamic back - ward slice of the cell under consideration. Though both options provide the p ossibili - ty of correcting many cells, we prefer to locate the most influential cell using the second option as this identifies the most influential cell for the cells in the dynamic backward slice. In the case where two or more faulty cells have equal numb er of faulty precedents and dependents, one of them will be chosen arbitrarily. 4.3 Fault tracing algorithm The algorithm for identifying the most influential faulty cell is presented in algorithm 1 for propagated faults. Let C e be the erroneous cell we are interested in to identify it’s most influential faulty cell(s). Algorithm 1: Algorithm to identify the most influential faulty cell 1: GE = { C i | C i is a direct precedent of C e and C i has a symptom of fault } . 2: If GE = ∅ , then C e is the most influent ial faulty cell and stop. 3: Compute the faulty direct precedents of elements of GE. GEE i = { C ij | C ij is a direct precedent of C i and C ij has a symptom of fault } . 4: Extract precedents with maximal number of second order precedents GGEE = { C i such that | G EE i | is maximal } . 5: If GGEE is singleton, repeat from step 1 with C e ∈ GGEE . 6: If | GGEE | > 1 compute the faulty direct dependents of C i . GED i = { D ij | D ij depends on C i and D ij has a symptom of fault } . 7: Extract precedents with maximal number of second o rder precedents and maximum number of dependents GED = { C i | C i ∈ GGEE and | GED i | is maximal } 8: If GED is singleton, repeat from steps 1 with C e ∈ GED . 9: If | GED | > 1 take arbitrarily C i ∈ GED and repeat from step 1. Thus, departing from erroneous cell C e , first a section of the backward slice is computed in such a way that at each step, one aims to reduce a branching slice to the most promising singleton (steps 1 to 5). If this attempt terminates in a situation where the algorithm cannot decide which ce ll in a set of faulty candidates is most influential, it changes direction and computes the forward slice from the respective dependents, assu - ming that the root having most dependents is the one the user should look at first. 5. CONCLUSION Debugging in volves the identification of the location of the actual faults and fixing the faults given testing revealed some incorrect values. Since most spreadsheets are not produced by software professionals, this process calls for machine support. In spreadsheets, the identification of cells with symptoms of faults and those without symptoms of faults is carried out by the testing system (i.e., interval - based testing). As spreadsheets are dataflow - driven, faults are propagated in the direction of the dataflow. Ther efore, we need a mechanism of identifying the most influential faulty cell in the data dependency graph against the direction of the data flow, so that correcting it may correct many cells in the data dependency graph thereby simplifying the debugging proc ess. In this paper, we have presented a technique for the identification of the most influential faulty cell(s) for a given faulty cell, which has a propagated fault. Unlike conventional software fault localization techniques, which apply dicing, we do no t limit the number of faulty cells in the dynamic backward slice of the cell under consideration to one. Several cells with symptoms of faults can appear in the analog of a dynamic backward slice of a given faulty cell. For the identification of the most i nfluential faulty cells, the fault tracing strategy uses the dataflow information, which is available from the spreadsheet language and the cell marks obtained from the testing system. Path selection is based on the number of faulty precedent and dependent cells. 6. REFERENCES [1] H. Agrawal, R. A. DeMillo, and E. H. Spafford. Debugging with Dynamic Slicing and Backtracking. Software Practice & Experience , 23(6):589 – 616, June 1993. [2] H. Agrawal, J. R. Horgan, S. London, and W. E. Wong. Fault Localizatio n using Execution Slices and Dataflow Tests. Proceedings of the 6 th International Symposium on Software Reliability Engineering , pages 143 – 151, October 1995 [3] Y. Ayalew. Spreadsheet Testing Using Interval Analysis . PhD thesis, Klagenfurt University, Nove mber 2001. [4] Y. Ayalew, M. Clermont, and R. T. Mittermeir. Detecting Errors in Spreadsheets. Proceedings of EuSpRIG 2000 Symposium Spreadsheet Risks, Audit & Development Methods , pages 51 – 62, July 2000. [5] P. S. Brown and J. D. Gould. An Experimental St udy of People Creating Spread - sheets. ACM Transactions on Office Information Systems , 5(3):258 – 272, July 1987. [6] Y. Chen and H. C. Chan. An Exploratory Study of Spreadsheet Debugging Processes. The 4 th Pacific Asia Conference on Information Systems , page s 143 – 155, 2000. [7] E. H. Chi. A Framework for Information Visualization Spreadsheets . PhD thesis, University of Minnesota, March 1999. [8] R. A. DeMillo, H. Pan, and E. H. Spafford. Critical Slicing for Software Fault Locali - zation. International Symposi um on Software Testing and Analysis , pages 121 – 134, January 1996. [9] M. Clermont, C. Hanin, and R. Mittermeir. A Spreadsheet Auditing Tool Evaluated in an Industrial Context. Proc. Spreadsheet Risks, Audit and Development Methods, vol 3, pages 35 – 46, EU SPRIG, July 2002 . [10] S. E. Hudson. User Interface Specification Using an Enhanced Spreadsheet Model. ACM Transactions on Graphics , pages 209 – 239, 1994. [11] B. Korel and J. Rilling. Application of Dynamic Slicing in Program Debugging. Proceedings of t he 3 rd International Workshop on Automatic Debugging , pages 43 – 57, May 1997. [12] J. R. Lyle and M. Weiser. Automatic program bug location by program slicing. Proceedings of the 2 nd International Conference on Computers and Applications , pages 877 – 883, Jun e 1987. [13] R. Mittermeir, M. Clermont, and Y. Ayalew. User - Centered Approaches for Impro - ving Spreadsheet Quality. Technical Report ISYS - MCA - 2000 - 01, Institute for Infor - matics - Systems, University of Klagenfurt, August 2000. [14] B. A. Nardi and J. R. Mi ller. The Spreadsheet Interface: A Basis for End User Pro - gram ming.Technical Report HPL - 90 - 08, Hewlett - Packard Software Technology Laboratory, March 1990. [15] R. R. Panko. What we Know About Spreadsheet Errors. Journal of End User Computing: Special issu e on Scaling Up End User Development , 10(2):15 – 21, 1998. [16] R. R. Panko. Applying code inspection to spreadsheet testing. Journal of Manage - ment Information Systems , 16(2):159 – 176, 1999. [17] R. R. Panko. Spreadsheet Errors: What We Know.What We Think We Can Do. Proceedings of EuSpRIG Symposium Spreadsheet Risks, Audit & Development Methods , pages 7 – 14, July 2000. [18] R. R. Panko. Two Corpuses of Spreadsheet Errors. Proceedings of the 33 rd Hawaii International Conference on System Sciences , 2000. [19] R. R. Panko and R. P. Halverson. Spreadsheets on Trial: A Survey of Research on Spreadsheet Risks. Proceedings of the 29 th Hawaii International Conference on System Sciences , pages 326 – 335, January 1996. [20] R. R. Panko and R. H. Sprague. Hitting the wall: Er rors in developing and code inspec ting a ’simple’ spreadsheet model. Decision Support Systems , 22(4):337 – 353, April 1998. [21] J. Reichwein, G. Rothermel, and M. Burnett. Slicing Spreadsheets: An Integrated Methodology for Spreadsheet Testing and Debuggin g. Proceedings of the 2 nd Conference on Domain Specific Languages , pages 25 – 38, October 1999. [22] A. G. Yoder and D. L. Cohn. Architectural Issues in Spreadsheet Languages. International Conference on Programming Languages and System Architectures , March 1993. [23] A. G. Yoder and D. L. Cohn. Real Spreadsheets for Real Programmers. Inter na - tional Conference on Computer Languages , pages 20 – 30, May 1994. [24] Y. Ayalew and R. Mittermeir. Interval - based Testing for Spreadsheets, Proceedings of Inter - national Arab Conference on Information Technology , pages 414 – 422, December, 2002.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment