A Privacy Preserving Randomized Gossip Algorithm via Controlled Noise Insertion

In this work we present a randomized gossip algorithm for solving the average consensus problem while at the same time protecting the information about the initial private values stored at the nodes. We give iteration complexity bounds for the method…

Authors: Filip Hanzely, Jakub Konečný, Nicolas Loizou

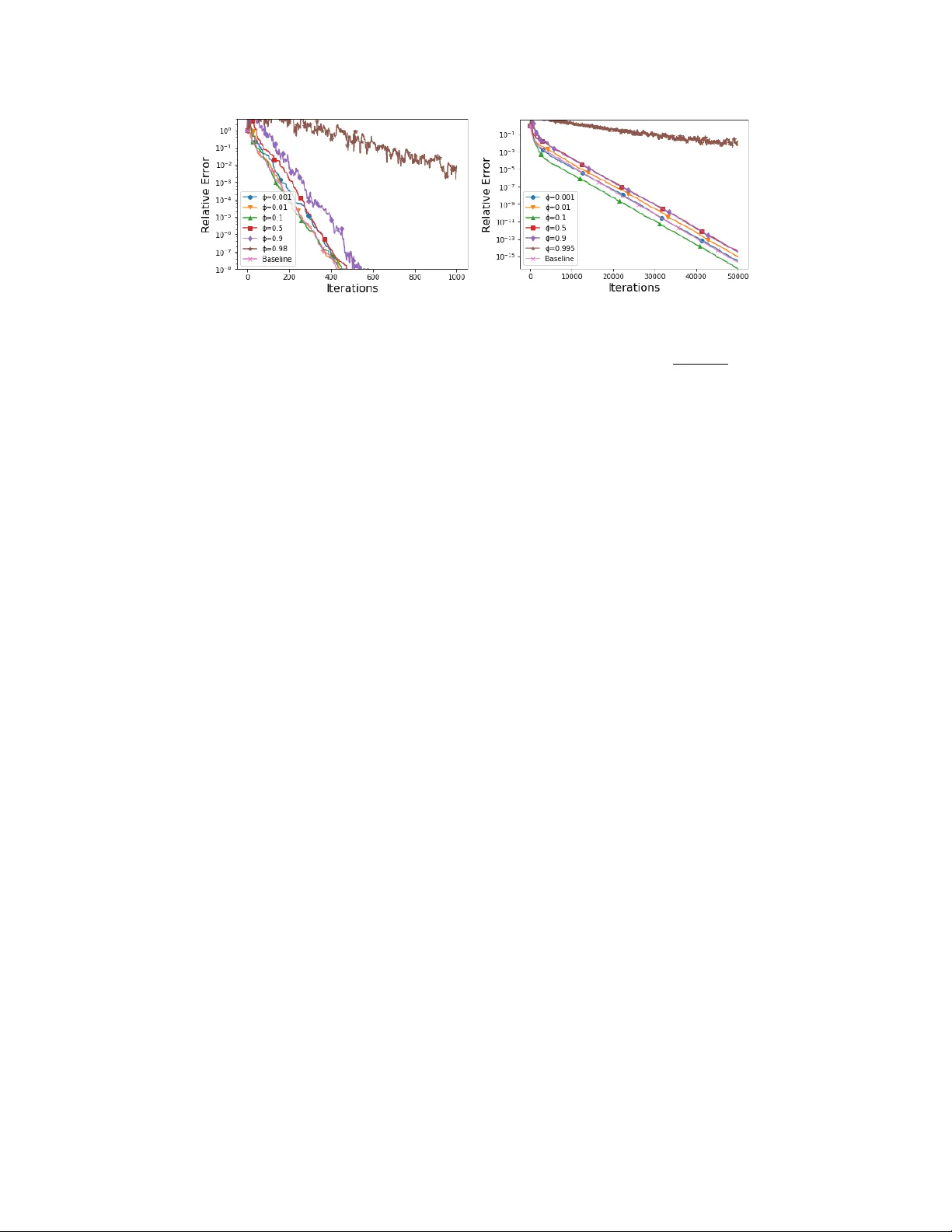

Filip Hanzely (a), Jakub Konečný (b), Nicolas Loizou (c,∗), Peter Richtárik (a,c,d), Dmitry Grishchenko (e)

(a) King Abdullah University of Science and Technology, KSA
(b) Google, USA
(c) University of Edinburgh, UK
(d) Moscow Institute of Physics and Technology, Russia
(e) Université Grenoble Alpes, France

Abstract

In this work² we present a randomized gossip algorithm for solving the average consensus problem while at the same time protecting the information about the initial private values stored at the nodes. We give iteration complexity bounds for the method and perform extensive numerical experiments.

1 Introduction

In this paper we consider the average consensus (AC) problem. Let $\mathcal{G} = (\mathcal{V}, \mathcal{E})$ be an undirected connected network with node set $\mathcal{V} = \{1, 2, \dots, n\}$ and edge set $\mathcal{E}$ such that $|\mathcal{E}| = m$. Each node $i \in \mathcal{V}$ “knows” a private value $c_i \in \mathbb{R}$. The goal of AC is for every node of the network to compute the average of these values, $\bar{c} \overset{\text{def}}{=} \frac{1}{n} \sum_i c_i$, in a distributed fashion. That is, the exchange of information can only occur between connected nodes (neighbours).

The literature on distributed protocols for solving the average consensus problem is vast and has a long history [18, 19, 1, 8]. In this work we focus on one of the most popular classes of methods for solving the average consensus problem, the randomized gossip algorithms, and propose a gossip algorithm that protects the information about the initial values $c_i$ in the case when these may be sensitive. In particular, we develop and analyze a privacy preserving variant of the randomized pairwise gossip algorithm (“randomly pick an edge $(i, j) \in \mathcal{E}$ and then replace the values stored at vertices $i$ and $j$ by their average”) first proposed in [2] for solving the average consensus problem.
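The baseline pairwise gossip rule quoted above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: the function name `pairwise_gossip`, the 5-node ring, the private values, and the iteration count are all assumptions chosen for the example.

```python
import numpy as np

def pairwise_gossip(c, edges, num_iters, seed=0):
    """Standard randomized pairwise gossip (no privacy protection)."""
    rng = np.random.default_rng(seed)
    x = np.asarray(c, dtype=float).copy()
    for _ in range(num_iters):
        # Pick an edge (i, j) uniformly at random ...
        i, j = edges[rng.integers(len(edges))]
        # ... and replace both endpoint values by their average.
        x[i] = x[j] = 0.5 * (x[i] + x[j])
    return x

# Hypothetical example: a ring with 5 nodes and arbitrary private values.
n = 5
ring_edges = [(i, (i + 1) % n) for i in range(n)]
c = np.array([1.0, 4.0, -2.0, 7.0, 0.0])
x = pairwise_gossip(c, ring_edges, num_iters=500)
print(x, c.mean())
```

Each update leaves the sum of the two endpoint values unchanged, so the network average is preserved at every step while the individual values contract towards it.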
While we shall not formalize the notion of privacy preservation in this work, it will be intuitively clear that our methods indeed make it harder for nodes to infer information about the private values of other nodes, which might be useful in practice.

1.1 Related Work on Privacy Preserving Average Consensus

The introduction of notions of privacy within the AC problem is relatively recent in the literature, and the existing works consider two different ideas. In [7], the concept of differential privacy [3] is used to protect the output value $\bar{c}$ computed by all nodes. In that work, an exponentially decaying Laplacian noise is added to the consensus computation. This notion of privacy refers to protection of the final average, and formal guarantees are provided.

A different line of work with a stricter goal is the design of privacy-preserving average consensus protocols that guarantee protection of the initial values $c_i$ of the nodes [16, 14, 15]. In this setting each node should be unable to infer much about the initial value $c_i$ of any other node. In the existing works, this is mainly achieved by the careful addition of noise throughout the iterative procedure, in a way that preserves privacy and at the same time converges to the exact average. We should mention, however, that none of these works addresses a specific notion of privacy (no clear measure of privacy is presented), and it is still not clear how the formal concept of differential privacy [3] can be applied in this setting.

∗ Corresponding author (n.loizou@sms.ed.ac.uk)

² The full-length paper, which includes a number of additional algorithms and results (including proofs of statements and experiments), is available in [6].

32nd Conference on Neural Information Processing Systems (NIPS 2018), Montréal, Canada.
1.2 Main Contributions

In this work, we present the first randomized gossip algorithm for solving the average consensus problem while at the same time protecting the information about the initial values. To the best of our knowledge, this work is the first to combine the asynchronous gossip framework with the privacy concept of protecting the initial values. Note that all the previously mentioned privacy preserving average consensus papers propose protocols that work in the synchronous setting (all nodes update their values simultaneously).

The convergence analysis of the proposed gossip protocol (Algorithm 1) is dual in nature. The dual approach is explained in detail in Section 2. It was first proposed for solving linear systems in [5, 12] and then extended to average consensus problems in [10, 13]. The dual updates immediately correspond to updates of the primal variables, via an affine mapping.

Algorithm 1 is inspired by the works [14, 15], and protects the initial values by inserting noise into the process. Broadly speaking, in each iteration, each of the sampled nodes first adds noise to its current value, and an average is computed afterwards. Convergence is guaranteed due to the correlation of the noise across iterations. Each node remembers the noise it added the last time it was sampled; in the following iteration, the previously added noise is first subtracted, and fresh noise of smaller magnitude is added. Empirically, the protection of initial values is provided by first injecting noise into the system, which propagates across the network but is gradually withdrawn to ensure convergence to the true average.

2 Technical Preliminaries

Primal and Dual Problems

Consider solving the (primal) problem of projecting a given vector $c = x^0 \in \mathbb{R}^n$ onto the solution space of a linear system:

$$\min_{x \in \mathbb{R}^n} \left\{ P(x) \overset{\text{def}}{=} \tfrac{1}{2} \|x - x^0\|^2 \right\} \quad \text{subject to} \quad A x = b, \tag{1}$$

where $A \in \mathbb{R}^{m \times n}$, $b \in \mathbb{R}^m$, $x^0 \in \mathbb{R}^n$.
We assume the problem is feasible, i.e., that the system $A x = b$ is consistent. With the above optimization problem we associate the dual problem

$$\max_{y \in \mathbb{R}^m} D(y) \overset{\text{def}}{=} (b - A x^0)^\top y - \tfrac{1}{2} \|A^\top y\|^2. \tag{2}$$

The dual is an unconstrained concave (but not necessarily strongly concave) quadratic maximization problem. It can be seen that as soon as the system $A x = b$ is feasible, the dual problem is bounded. Moreover, all bounded concave quadratics in $\mathbb{R}^m$ can be written in the form $D(y)$ for some matrix $A$ and vectors $b$ and $x^0$ (up to an additive constant).

With any dual vector $y$ we associate the primal vector via an affine transformation, $x(y) = x^0 + A^\top y$. It can be shown that if $y^*$ is dual optimal, then $x^* = x(y^*)$ is primal optimal. Hence, any dual algorithm producing a sequence of dual variables $y^t \to y^*$ gives rise to a corresponding primal algorithm producing the sequence $x^t \overset{\text{def}}{=} x(y^t) \to x^*$. See [5, 12] for the correspondence between primal and dual methods.

Randomized Gossip Setup: Choosing $A$

In the gossip framework we wish $(A, b)$ to be an average consensus (AC) system.

Definition 1 ([10]). Let $\mathcal{G} = (\mathcal{V}, \mathcal{E})$ be an undirected graph with $|\mathcal{V}| = n$ and $|\mathcal{E}| = m$. Let $A$ be a real matrix with $n$ columns. The linear system $A x = b$ is an “average consensus (AC) system” for graph $\mathcal{G}$ if $A x = b$ iff $x_i = x_j$ for all $(i, j) \in \mathcal{E}$.

In the rest of this paper we focus on a specific AC system: one in which the matrix $A$ is the incidence matrix of the graph $\mathcal{G}$ (see Model 1 in [5]). In particular, we let $A \in \mathbb{R}^{m \times n}$ be the matrix defined as follows. Row $e = (i, j) \in \mathcal{E}$ of $A$ is given by $A_{ei} = 1$, $A_{ej} = -1$ and $A_{el} = 0$ if $l \notin \{i, j\}$. Notice that the system $A x = 0$ encodes the constraints $x_i = x_j$ for all $(i, j) \in \mathcal{E}$, as desired. It is also known that the randomized Kaczmarz method [17, 4, 11] applied to problem (1) is equivalent to the randomized gossip algorithm (see [10, 13, 9] for more details).

3 Private Gossip via Controlled Noise Insertion

In this section, we present the gossip algorithm with controlled noise insertion. As mentioned in the introduction, the approach is similar to the technique proposed in [14, 15]. Those works, however, address only algorithms in the synchronous setting, while our work is the first to use this idea in the asynchronous setting. Unlike the above, we provide finite time convergence guarantees and allow each node to add the noise differently, which yields a stronger result.

In our approach, each node adds noise to the computation independently of all other nodes. However, the noise added is correlated between iterations for each node. We assume that every node owns two parameters: the initial magnitude of the generated noise, $\sigma_i^2$, and the rate of decay of the noise, $\phi_i$. The node inserts noise $w_i^{t_i}$ into the system every time an edge incident to the node is chosen, where the variable $t_i$ records how many times node $i$ has added noise to the system in the past. Thus, if we denote by $t$ the current number of iterations, we have $\sum_{i=1}^n t_i = 2t$.

Algorithm 1: Privacy Preserving Gossip Algorithm via Controlled Noise Insertion

Input: vector of private values $c \in \mathbb{R}^n$; initial variances $\sigma_i^2 \in \mathbb{R}_+$ and variance decrease rates $\phi_i$ such that $0 \le \phi_i < 1$ for all nodes $i$.

Initialize: Set $x^0 = c$; $t_1 = t_2 = \dots = t_n = 0$; $v_1^{-1} = v_2^{-1} = \dots = v_n^{-1} = 0$.

for $t = 0, 1, \dots, k - 1$ do

1. Choose edge $e = (i, j) \in \mathcal{E}$ uniformly at random.
2. Generate $v_i^{t_i} \sim N(0, \sigma_i^2)$ and $v_j^{t_j} \sim N(0, \sigma_j^2)$.
3. Set $w_i^{t_i} = \phi_i^{t_i} v_i^{t_i} - \phi_i^{t_i - 1} v_i^{t_i - 1}$ and $w_j^{t_j} = \phi_j^{t_j} v_j^{t_j} - \phi_j^{t_j - 1} v_j^{t_j - 1}$.
4. Update the primal variable: $x_i^{t+1} = x_j^{t+1} = \dfrac{x_i^t + w_i^{t_i} + x_j^t + w_j^{t_j}}{2}$, and $x_l^{t+1} = x_l^t$ for all $l \ne i, j$.
5. Set $t_i = t_i + 1$ and $t_j = t_j + 1$.

end

return $x^k$
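The steps of Algorithm 1 can be sketched in Python as follows. This is a hedged illustration under stated assumptions, not the authors' implementation: the function name `private_gossip`, the 10-node ring, the private values, and the choices $\sigma_i = 1$, $\phi_i = 0.9$ are all picked for the example. The argument `sigma` holds standard deviations, and the array `residual` is a hypothetical helper that stores each node's last scaled noise term $\phi_i^{t_i - 1} v_i^{t_i - 1}$.

```python
import numpy as np

def private_gossip(c, edges, sigma, phi, num_iters, seed=0):
    """Sketch of Algorithm 1; `sigma` holds the standard deviations sigma_i."""
    rng = np.random.default_rng(seed)
    x = np.asarray(c, dtype=float).copy()
    counts = np.zeros(x.size, dtype=int)  # t_i: how often node i was sampled
    residual = np.zeros(x.size)           # phi_i^(t_i-1) * v_i^(t_i-1); zero since v_i^(-1) = 0
    for _ in range(num_iters):
        i, j = edges[rng.integers(len(edges))]        # step 1
        w = {}
        for node in (i, j):
            v = rng.normal(0.0, sigma[node])          # step 2
            fresh = phi[node] ** counts[node] * v
            w[node] = fresh - residual[node]          # step 3: add fresh, withdraw previous
            residual[node] = fresh
            counts[node] += 1                         # step 5
        x[i] = x[j] = 0.5 * (x[i] + w[i] + x[j] + w[j])  # step 4
    return x, counts, residual

# Hypothetical example: ring with 10 nodes, sigma_i = 1 and phi_i = 0.9 for all i.
n = 10
ring_edges = [(i, (i + 1) % n) for i in range(n)]
c = np.arange(n, dtype=float)
num_iters = 3000
x, counts, residual = private_gossip(
    c, ring_edges, sigma=np.ones(n), phi=np.full(n, 0.9), num_iters=num_iters
)
print(x, c.mean())
```

In this sketch the noise added by a node telescopes, so at any time the sum of the entries of `x` equals the sum of the private values plus the sum of `residual`. Since each residual term is scaled by $\phi_i^{t_i - 1}$, it shrinks as the node keeps being sampled.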
To ensure convergence to the optimal solution, the noise must have a specific structure that guarantees that the mean of the values $x_i$ converges to the initial mean. In particular, each time node $i$ is selected, we subtract the noise that was added the last time and add fresh noise of smaller magnitude:

$$w_i^{t_i} = \phi_i^{t_i} v_i^{t_i} - \phi_i^{t_i - 1} v_i^{t_i - 1},$$

where $0 \le \phi_i < 1$, $v_i^{-1} = 0$, and $v_i^{t_i} \sim N(0, \sigma_i^2)$, for every iteration counter $t_i \ge 0$, is independent of all other randomness in the algorithm. This ensures that all noise added initially is gradually withdrawn from the whole network. After the addition of noise, a standard gossip update is made, which sets the values of the sampled nodes to their average. Hence, we have

$$\lim_{t \to \infty} \mathbb{E}\left[ \left( \bar{c} - \frac{1}{n} \sum_{i=1}^n x_i^t \right)^2 \right] = 0,$$

as desired.

It is not the purpose of this paper to formally define any quantifiable notion of protection of the initial values. However, we note that the protection of the private value $c_i$ is likely to be stronger for bigger $\sigma_i$ and for $\phi_i$ closer to 1. We now provide results of the dual analysis of Algorithm 1.

Theorem 2. Let us define $\rho \overset{\text{def}}{=} 1 - \frac{\alpha(\mathcal{G})}{2m}$ and

$$\psi^t \overset{\text{def}}{=} \frac{1}{\sum_{i=1}^n d_i \sigma_i^2} \sum_{i=1}^n d_i \sigma_i^2 \left( 1 - \frac{d_i}{m} \left( 1 - \phi_i^2 \right) \right)^t,$$

where $\alpha(\mathcal{G})$ stands for the algebraic connectivity of $\mathcal{G}$ and $d_i$ denotes the degree of node $i$. Then for all $k \ge 1$ we have the following bound:

$$\mathbb{E}\left[ D(y^*) - D(y^k) \right] \le \rho^k \left( D(y^*) - D(y^0) \right) + \frac{\sum_{i=1}^n d_i \sigma_i^2}{4m} \sum_{t=1}^k \rho^{k-t} \psi^t.$$

Note that $\psi^t$ is a weighted sum of $t$-th powers of real numbers smaller than one. For large enough $t$, this quantity will depend on the largest of these numbers. This brings us to define $M$ as the set of indices $i$ for which the quantity $1 - \frac{d_i}{m} \left( 1 - \phi_i^2 \right)$ is maximized:

$$M = \arg\max_i \left\{ 1 - \frac{d_i}{m} \left( 1 - \phi_i^2 \right) \right\}.$$

Then for any $i_{\max} \in M$ we have

$$\psi^t \approx \frac{1}{\sum_{i=1}^n d_i \sigma_i^2} \sum_{i \in M} d_i \sigma_i^2 \left( 1 - \frac{d_i}{m} \left( 1 - \phi_i^2 \right) \right)^t = \frac{\sum_{i \in M} d_i \sigma_i^2}{\sum_{i=1}^n d_i \sigma_i^2} \left( 1 - \frac{d_{i_{\max}}}{m} \left( 1 - \phi_{i_{\max}}^2 \right) \right)^t,$$

which means that increasing $\phi_j$ for $j \notin M$ will not substantially influence the convergence rate. Note that as soon as we have

$$\rho > 1 - \frac{d_i}{m} \left( 1 - \phi_i^2 \right) \tag{3}$$

for all $i$, the rate from Theorem 2 will be driven by $\rho^k$ (as $k \to \infty$) and we will have $\mathbb{E}\left[ D(y^*) - D(y^k) \right] = \tilde{O}\left( \rho^k \right)$.

One can think of the above as a threshold: if there is an $i$ such that $\phi_i$ is large enough that inequality (3) does not hold, the convergence rate is driven by $\phi_{i_{\max}}$. Otherwise, the rate is not influenced by the insertion of noise. Thus, in theory, we do not pay anything in terms of performance as long as we do not hit the threshold. One might be interested in choosing $\phi_i$ so that the threshold is attained for all $i$, and thus $M = \{1, \dots, n\}$. This motivates the following result.

Corollary 3. Let us choose $\phi_i \overset{\text{def}}{=} \sqrt{1 - \frac{\gamma}{d_i}}$ for all $i$, where $\gamma \le d_{\min}$. Then

$$\mathbb{E}\left[ D(y^*) - D(y^k) \right] \le \left( 1 - \min\left\{ \frac{\alpha(\mathcal{G})}{2m}, \frac{\gamma}{m} \right\} \right)^k \left( D(y^*) - D(y^0) + \frac{\sum_{i=1}^n d_i \sigma_i^2}{4m} k \right).$$

As a consequence, $\phi_i = \sqrt{1 - \frac{\alpha(\mathcal{G})}{2 d_i}}$ is the largest decrease rate of noise for node $i$ such that the guaranteed convergence rate of the algorithm is not violated.

4 Experiments

In this section we present a preliminary experiment (for more experiments, see the appendix of the full-length paper [6]) to evaluate the performance of Algorithm 1 for solving the average consensus problem. The algorithm has two parameters for each node $i$: the initial variance $\sigma_i^2 \ge 0$ and the rate of decay of the noise, $\phi_i$.

In this experiment we use two popular graph topologies: the cycle graph (ring network) with $n = 10$ nodes, and the random geometric graph with $n = 100$ nodes and radius $r = \sqrt{\log(n)/n}$. In particular, we run Algorithm 1 with $\sigma_i = 1$ for all $i$, and set $\phi_i = \phi$ for all $i$ and some $\phi$.
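For the cycle graph in this setup, the quantities from Theorem 2 and Corollary 3 can be evaluated numerically. The sketch below is an illustration under assumptions, not part of the reported experiments: the helper `algebraic_connectivity` and all variable names are hypothetical, and $\alpha(\mathcal{G})$ is taken to be the second-smallest eigenvalue of the graph Laplacian.

```python
import numpy as np

def algebraic_connectivity(n, edges):
    """Second-smallest eigenvalue of the graph Laplacian (hypothetical helper)."""
    lap = np.zeros((n, n))
    for i, j in edges:
        lap[i, i] += 1.0
        lap[j, j] += 1.0
        lap[i, j] -= 1.0
        lap[j, i] -= 1.0
    return np.linalg.eigvalsh(lap)[1]  # eigvalsh returns eigenvalues in ascending order

# Cycle graph (ring network) with n = 10 nodes, as in the experiment.
n = 10
edges = [(i, (i + 1) % n) for i in range(n)]
m = len(edges)
degrees = np.bincount(np.array(edges).ravel(), minlength=n)  # d_i = 2 on a cycle

alpha = algebraic_connectivity(n, edges)
rho = 1.0 - alpha / (2 * m)                             # rate of noise-free gossip (Theorem 2)
phi_threshold = np.sqrt(1.0 - alpha / (2 * degrees))    # largest phi_i from Corollary 3

def noise_rate(phi):
    """Right-hand side of inequality (3) for a common decay rate phi."""
    return 1.0 - degrees / m * (1.0 - phi ** 2)

print("rho =", rho)
print("phi threshold per node =", phi_threshold)
print("inequality (3) holds for phi = 0.9:", bool(np.all(rho > noise_rate(0.9))))
```

These numbers describe the guaranteed rates only. The value of $\phi$ at which the noise visibly dominates in a particular simulation need not coincide with this threshold.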
We study the effect of varying the value of $\phi$ on the convergence of the algorithm. In Figure 1 we see that for small values of $\phi$, we eventually recover the same rate of linear convergence as the Standard Pairwise Gossip algorithm (Baseline) of [2]. If the value of $\phi$ is sufficiently close to 1, however, the rate is driven by the noise and not by the convergence of the Standard Gossip algorithm. This value is $\phi = 0.98$ for the cycle graph and $\phi = 0.995$ for the random geometric graph in the plots we present.

Figure 1: Convergence of Algorithm 1 on (a) the cycle graph (left) and (b) the random geometric graph (right) for different values of $\phi$. The “Relative Error” on the vertical axis represents $\frac{\|x^t - x^*\|^2}{\|x^0 - x^*\|^2}$.

References

[1] Dimitri P. Bertsekas and John N. Tsitsiklis. Parallel and Distributed Computation: Numerical Methods, volume 23. Prentice Hall, Englewood Cliffs, NJ, 1989.

[2] Stephen Boyd, Arpita Ghosh, Balaji Prabhakar, and Devavrat Shah. Randomized gossip algorithms. IEEE Transactions on Information Theory, 14(SI):2508–2530, 2006.

[3] Cynthia Dwork, Aaron Roth, et al. The algorithmic foundations of differential privacy. Foundations and Trends® in Theoretical Computer Science, 9(3–4):211–407, 2014.

[4] Robert M. Gower and Peter Richtárik. Randomized iterative methods for linear systems. SIAM Journal on Matrix Analysis and Applications, 36(4):1660–1690, 2015.

[5] Robert M. Gower and Peter Richtárik. Stochastic dual ascent for solving linear systems. 2015.

[6] F. Hanzely, J. Konečný, N. Loizou, P. Richtárik, and D. Grishchenko. Privacy preserving randomized gossip algorithms. 2017.

[7] Zhenqi Huang, Sayan Mitra, and Geir Dullerud. Differentially private iterative synchronous consensus. In Proceedings of the 2012 ACM Workshop on Privacy in the Electronic Society, pages 81–90. ACM, 2012.

[8] David Kempe, Alin Dobra, and Johannes Gehrke. Gossip-based computation of aggregate information. In Foundations of Computer Science, 2003. Proceedings. 44th Annual IEEE Symposium on, pages 482–491. IEEE, 2003.

[9] Nicolas Loizou, Michael Rabbat, and Peter Richtárik. Provably accelerated randomized gossip algorithms. arXiv:1810.13084, 2018.

[10] Nicolas Loizou and Peter Richtárik. A new perspective on randomized gossip algorithms. In 4th IEEE Global Conference on Signal and Information Processing (GlobalSIP), 2016.

[11] Nicolas Loizou and Peter Richtárik. Linearly convergent stochastic heavy ball method for minimizing generalization error. NIPS Optimization for Machine Learning Workshop, 2017.

[12] Nicolas Loizou and Peter Richtárik. Momentum and stochastic momentum for stochastic gradient, Newton, proximal point and subspace descent methods. 2017.

[13] Nicolas Loizou and Peter Richtárik. Accelerated gossip via stochastic heavy ball method. arXiv:1809.08657, 2018.

[14] Nicolaos E. Manitara and Christoforos N. Hadjicostis. Privacy-preserving asymptotic average consensus. In Control Conference (ECC), 2013 European, pages 760–765. IEEE, 2013.

[15] Yilin Mo and Richard M. Murray. Privacy preserving average consensus. IEEE Transactions on Automatic Control, 62(2):753–765, 2017.

[16] Erfan Nozari, Pavankumar Tallapragada, and Jorge Cortés. Differentially private average consensus: obstructions, trade-offs, and optimal algorithm design. Automatica, 81:221–231, 2017.

[17] Thomas Strohmer and Roman Vershynin. A randomized Kaczmarz algorithm with exponential convergence. Journal of Fourier Analysis and Applications, 15(2):262–278, 2009.

[18] John N. Tsitsiklis. Problems in decentralized decision making and computation. Technical report, DTIC Document, 1984.

[19] John N. Tsitsiklis, Dimitri Bertsekas, and Michael Athans. Distributed asynchronous deterministic and stochastic gradient optimization algorithms. IEEE Transactions on Automatic Control, 31(9):803–812, 1986.
