Orthogonal Floating Search Algorithms: From The Perspective of Nonlinear System Identification

The present study proposes a new Orthogonal Floating Search framework for structure selection of nonlinear systems by adapting the existing floating search algorithms for feature selection. The proposed framework integrates the concept of orthogonal …

Authors: Faizal Hafiz, Akshya Swain, Eduardo Mendes

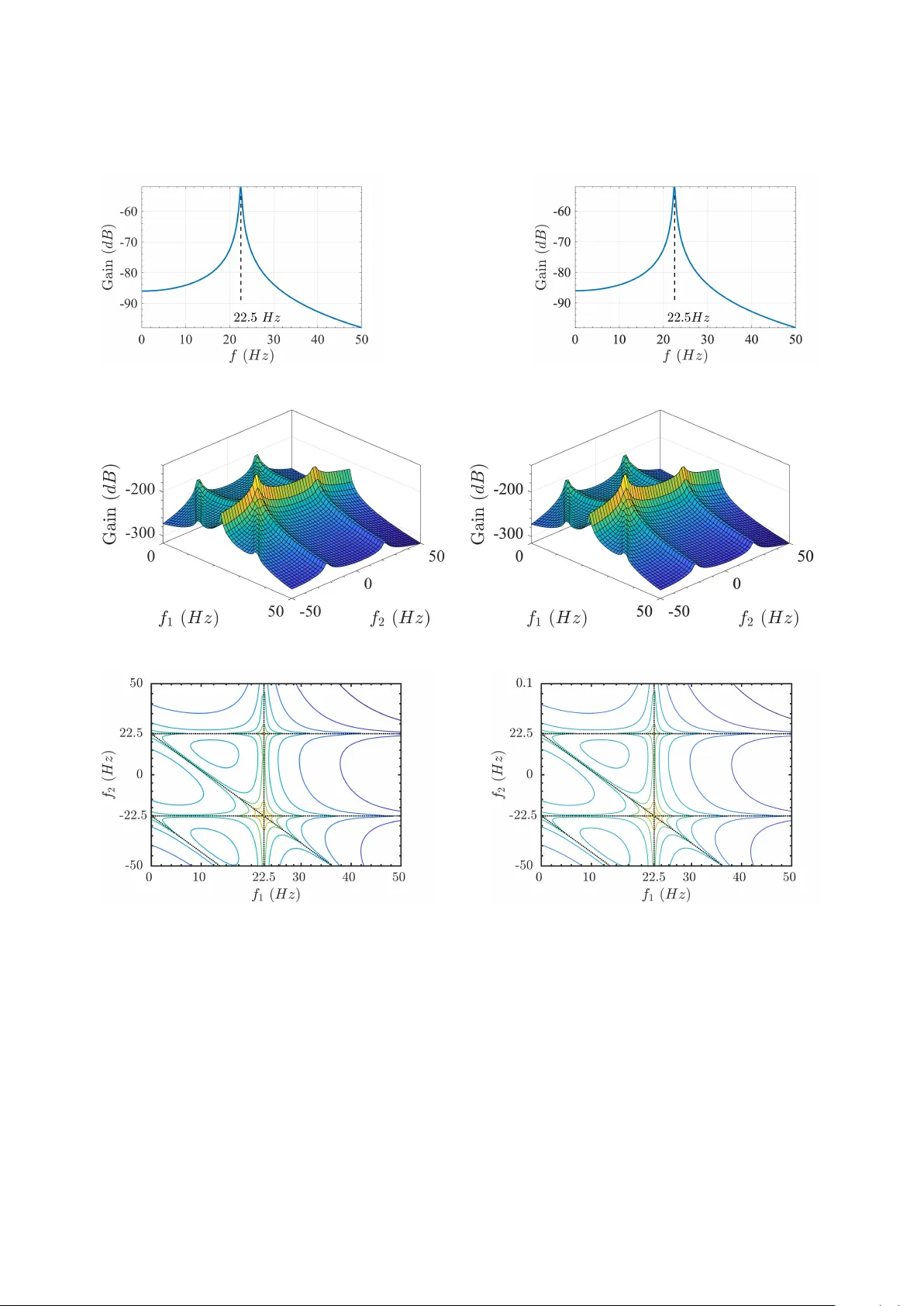

Orthogonal Floating Search Algorithms: From The Perspecti ve of Nonlinear System Identification Faizal Hafiz a, ∗ , Akshya Swain a , Eduardo Mendes b a Department of Electrical & Computer Engineering , The University of Auc kland, Auc kland, New Zealand b Department of Electr onics Engineering, F ederal University of Minas Ger ais, Belo Horizonte, Brazil Abstract The present study proposes a ne w Orthogonal Floating Search framew ork for structure selection of nonlinear systems by adapting the existing floating search algorithms for feature selection. The proposed framework inte- grates the concept of orthogonal space and consequent Error -Reduction-Ratio (ERR) metric with the existing floating search algorithms. On the basis of this framew ork, three well-known feature selection algorithms ha ve been adapted which include the classical Sequential F orward Floating Search (SFFS), Improv ed sequential F orward Floating Search (IFFS) and Oscillating Search (OS). This framework retains the simplicity of classical Orthogonal Forward Regression with ERR (OFR-ERR) and eliminates the nesting e ff ect associated with OFR-ERR. The performance of the proposed framew ork has been demonstrated considering se veral benchmark non-linear systems. The results sho w that most of the existing feature selection methods can easily be tailored to identify the correct system structure of nonlinear systems. K e ywor ds: Forward model selection, Model structure selection, N ARX model, Nonlinear system, System identification 1. Introduction Over the last sev eral decades, researchers from v arious fields of science and engineering have developed several methods to construct mathematical models from measured input-output data ( popularly known as system identifica- tion) [1, 2]. Since most of the practical systems are nonlinear , the first step in the process of fitting the models is the choice of the model amongst v arious av ailable models (e.g., V olterra, W iener , Polynomial / Rational) which can e ff ec- tiv ely capture the dynamics in input-output data. In this study , the focus is on the identification of nonlinear systems represented by polynomial nonlinear auto-regressiv e with exogenous inputs (NARX) models [1]. Note that the iden- tification of system is a two stage process which inv olves 1) Determination of the significant terms and 2) Estimation of the corresponding parameters. While the parameters can be estimated using least-squares based algorithms, the determination of the significant terms is a challenging task and often referred to as ‘structur e selection’ . A close examination of the structure selection problem in nonlinear system identification re veals that this problem has significant similarities with the featur e selection problem encountered in the pattern recognition. In essence, both problems belong to a broader class of combinatorial optimization problem which is referred here as the attribute selection problem. T o understand this further , consider a superset of ‘ n ’ number of ‘attrib utes’ , denoted by: X model = { x 1 , x 2 , . . . x n } , where any attribute (say ‘ x i ’), could represent either a featur e (in pattern recognition ) or a term of ∗ Corresponding author Email addr ess: faizalhafiz@ieee.org (Faizal Hafiz) the NARX model (in system identification ). The goal of the attribute selection is to determine a subset of significant attributes, X ? ⊂ X model , by maximizing a suitable criterion function,‘ J ( · )’, as follows: J ( X ? ) = max X⊂X model J ( X ) (1) An exhausti ve search to solv e such combinatorial problem is often intractable even for a moderate number of attrib utes, ‘ n ’, as it requires the ev aluation of 2 n subsets. Over the years sev eral approaches have been proposed to address the structure selection problem of NARX models, e.g . , see [3 – 8]. Among these, the Orthogonal Forward Regression (OFR) [4, 9, 10] is, arguably , computationally the most e ff ectiv e. Perhaps, for this reason, it has been extensiv ely applied in many applications [1, 11]. The central idea of this approach is to decouple the terms in the orthogonal space so that the contribution of each term to the output variance can indi vidually be determined. From the search perspecti ve, the OFR is a sequential gr eedy approach where the term with the highest performance metric, referred to as Err or-Reduction-Ratio (ERR), is included in each step and it has prov en to be very e ff ective in various applications [3, 11]. Howe ver , it has been shown that under certain conditions the OFR may yield sub-optimal term subsets [12 – 16]. The reason for such suboptimal performance is often attributed to the performance metric, ERR. For example, Mao and Billings [12] have shown that for the given term, the value of ERR is dependent on the orthogonalization path , i.e. , the order in which the terms are orthogonalized. This may lead to the selection of spurious terms, when there is an information overlap among the non-orthogonal terms, especially in the earlier stages of the search [15]. T o alle viate these issues, several approaches hav e been proposed to extend / modify the classical OFR algorithm. These can be categorized mainly into two approaches: 1) T wo-step approach [12, 15, 17] and 2) Improv ed performance metric [13, 14, 16]. In the two-step approach, OFR is applied first to identify the initial term subset which is further refined by a secondary search procedure. For example, in [12], the initial term subset is refined by applying Genetic Algorithm (GA) to identify the optimal orthogonalization path . Howe ver , the explosi ve growth of the search space limits the application of this approach, e.g. , for ‘ n ’ number of terms, the total number of possible orthogonalization paths becomes ‘ n !’. In comparison, if GA (or any suitable algorithm) is applied directly to select term subset the search space reduces from n ! to 2 n [18]. The ‘iterative OFR’ (iOFR) algorithm [15] uses each term in the initial term subset as a pivot to identify new , and possibly better , term subsets in the secondary search. In [17], a backwar d elimination approach is proposed as the secondary search to remove the spurious terms contained in the initial term subset. Most of the two-step approach, e.g. [17], are usually e ff ective pro vided all the system / significant terms are identified in the initial term subset. The focus of the second approach is to replace the performance metric, ‘ERR’ [13, 14, 16]. For this purpose, sev eral performance metrics hav e been proposed based on Simulation Error [13], Mutual Information [14] and Ultra- orthogonal least squares [16]. While these metrics improv e the search performance of OFR in man y cases, they often increase the computational complexity of OFR. It is worth to emphasize that the aforementioned approaches to improv e OFR are based on the pre vailing belief that the sub-optimal performance of OFR is due only to its performance metric, ERR. Howe ver , in this study , it is argued that this may be in part due to the uni-dir ectional sequential search nature of OFR where terms are progressively added into the term subset until pre-specified ERR threshold is reached. This leads to the ‘nesting e ff ect’ , i.e. , once a term is included in the subset, it cannot be removed from the term subset. This imposes a hierarchy of terms, whereas in practice, all significant terms are equally important. Note that the nesting e ff ect is not new and it has been well-known among the feature selection community since the early se venties [19–21]. Over the years, state-of-the-art 2 ‘floating’ feature selection algorithms have specifically been developed to address the nesting e ff ect [22 – 25]. Since there already exist a class of floating feature selection algorithms and gi ven that both structure selection and feature selection share a common search objectiv e, the question arises: Can the featur e selection algorithms be adapted to addr ess the structur e selection pr oblem? The present study aims to address this apropos question. In particular , the proposed Orthogonal Floating Search Framew ork integrates the ke y properties of the classical Sequential Forward Floating Search (SFFS) and its variants such as Improv ed F orward Floating Search (IFFS) and Oscillating Search (OS) with the Error-Reduction-Ratio (ERR) to determine the structure of nonlinear systems. In each step of these algorithms, after adding a ‘significant’ term (dis- cussed in Section 3.1), the selected terms are re-examined to identify and remove the ‘least significant’ term (discussed in Section 3.1). Thus, the spurious terms can e ventually be replaced by the system / significant terms during the search process. The search performance is therefore significantly improv ed without the need for a second search procedure or a complicated performance measure. The e ffi cacy of the proposed approach is demonstrated on se veral benchmark non-linear systems. The worst case scenario ( non-persistent e xcitation ) and the identification of a discrete-time model of a continuous-time system are also considered. The results of this in vestigation convincingly demonstrate that the feature selection algorithms can indeed be tailored for the purpose of structure selection. The rest of the article is or ganized as follo ws: The polynomial N ARX model and the OFR approach are discussed briefly in Section 2. Next, the proposed Orthogonal Floating Search Framework is discussed in detail in Section 3. The frame work of this in vestigation is described in Section 4. Finally , the results of this in vestigation are discussed at length in Section 5. 2. Preliminaries In the following, the polynomial N ARX model and the orthogonal regression approach are briefly discussed. 2.1. The P olynomial N ARX Model The NARX model represents a non-linear system as a function of recursiv e lagged input and output terms as follows: y ( k ) = F n l { y ( k − 1) , . . . , y ( k − n y ) , u ( k − 1) , . . . , u ( k − n u ) } + e ( k ) where y ( k ) and u ( k ) respecti vely represent the output and input at time intervals k , n y and n u are corresponding lags and F n l {· } is some nonlinear function of degree n l . The total number of possible terms or model size ( n ) of the N ARX model is giv en by , n = n l X i = 0 n i , n 0 = 1 and n i = n i − 1 ( n y + n u + i − 1) i , i = 1 , . . . , n l (2) This model is essentially linear-in-parameters and can be e xpressed as: y ( k ) = n X i = 1 θ i x i ( k ) + e ( k ) , k = 1 , 2 , . . . N (3) where, x 1 ( k ) = 1 , and x i ( k ) = p y Y j = 1 y ( k − n y j ) q u Y k = 1 u ( k − n u k ) i = 2 , . . . , n , 3 p y , q u ≥ 0; 1 ≤ p y + q u ≤ n l ; 1 ≤ n y j ≤ n y ;1 ≤ n u k ≤ n u ; n l is the degree of polynomial expansion; ‘ N ’ denotes the total number of data points. 2.2. Orthogonal Re gression In this study , the orthogonalization is used to determine the significance of a particular term, which will be dis- cussed in Section 3.1. It is, therefore, pertinent to briefly discuss the concept of orthogonal regression introduced by Billings et al. [4, 9, 10]. In the orthogonal regression, as the name suggests, the original terms are replaced by the corresponding orthogonal terms. Gi ven that the orthogonal terms are decoupled, the significance of each term can be determined independently in the orthogonal space. This could be achiev ed by a simple Gram-Schmidt orthogonaliza- tion: w 1 = x 1 , w i = x i − i − 1 X m = 1 h w m x i i w T m w m Consequently , the N ARX model (3) can be represented in the orthogonal space as follows: y ( k ) = n X i = 1 w i ( k ) g i + e ( k ) , k = 1 , 2 , . . . N Giv en that, in the orthogonal space, w T i w j = 0 holds for i , j , the significance of the i th term, x i , can be easily be determined using the Error-Reduction-Ratio (ERR) metric as follo ws: E RR ( x i ) = g 2 i w T i w i y T y , where, g i = w T i y w T i w i , i ∈ [1 , n ] (4) 3. Proposed Orthogonal Floating Sear ch Framework This study aims to in vestigate whether the existing feature selection algorithms can be tailored to address the non- linear system identification problem. For this purpose, a new search framew ork is being proposed which integrates the term orthogonalization into ‘ floating ’ feature selection algorithms and therefore referred to as ‘ Orthogonal Floating Sear ch F rame work ’. The term orthogonalization decouples the structure selection and the parameter estimation, which are crucial steps of the nonlinear system identification. It is, therefore, a vital first step to adapt the feature selection algorithms for system identification. Further, the following ‘ floating ’ feature selection algorithms hav e been adapted: Sequential F or- war d Floating Sear ch (SFFS) [22], Impr oved F orwar d Floating Sear ch (IFFS) [25] and Oscillating Sear ch (OS) [24]. The moti vation for the selection of these methods is two-fold: 1) The term orthogonalization can be integrated with relativ e ease into the floating search methods. 2) The step-wise subset building approach of the sequential floating methods ( e.g . , SFFS and IFFS) is similar to that of the Orthogonal Forward Regression (OFR-ERR) [4]. Further , in OS, the depth of the search process can easily be controlled as per the prev ailing requirements, as will be discussed in Section 3.4. The concept of feature significance is central to the floating search methods. In this study , we extend this concept in the context of system identification and integrate the term orthogonalization to determine the significance of a term / term subset. This is discussed in Section 3.1. Further , each search method and associated adaption are discussed in the conte xt of the system identification in Section 3.2, Section 3.3 and Section 3.4. Note that the adapted v ersions of SFFS, IFFS and OS are referred to respectively as Orthogonal Sequential Floating search (OSF), Orthogonal Improv ed sequential Floating search (OIF) and Orthogonal Oscillating Search (O 2 S). 4 3.1. T erm Significance Determination of feature significance is the core of the floating search methods [22 – 25], where in each search step, the most significant feature / features are selected from the pool of a vailable features and then the least significant feature / features are removed, from the selected feature subset. If a similar approach is to be applied to address the structure selection problem, it is essential to determine first the ‘ significance ’ of a term / term subset. For this purpose, in this study , we extend the definitions of feature significance developed by Pudil, Somol and co-workers [22–24] as follows: Let X k = { x 1 , x 2 , . . . x k } be the term subset selected by the search algorithm at particular search step from the superset of N ARX terms, X model = { x 1 , x 2 , . . . x n } . Consequently , the subset of a vailable terms is gi ven by X model \ X k . Let X i o denote i th subset containing ‘ o ’ number of terms. The criterion function corresponding to the term subset X k is denoted by J ( X k ) and is giv en by J ( X k ) = k X i = 1 E RR ( x i ) , ∀ x i ∈ X k (5) where, E RR ( x i ) is giv en by (4). For these subsets, the significance of term / term subset is defined as follo ws: Definition 1. The most significant term with r espect to X k is denoted as ‘ x M S ’ and is defined as: x M S = { x i | J ( X k ∪ x i ) = max i J ( X k ∪ x i ) , x i ∈ X model \ X k } , ∀ i ∈ [1 , n ] (6) Definition 2. The least significant term in X k is denoted as ‘ x LS ’ and is defined as: x LS = { x i | J ( X k \ x i ) = max i J ( X k \ x i ) , x i ∈ X k } , ∀ i ∈ [1 , n ] (7) Definition 3. The most significant subset of ‘o’ number of terms with r espect to X k is denoted as ‘ X M S o ’ and is defined as: X M S o = {X i o | J ( X k ∪ X i o ) = max i J ( X k ∪ X i o ) , X i o ∈ X model \ X k } (8) Definition 4. The least significant subset of ‘o’ number of terms in X k is denoted as ‘ X LS o ’ and is defined as: X LS o = {X i o | J ( X k \ X i o ) = max i J ( X k \ X i o ) , X i o ∈ X k } (9) 3.2. Orthogonal Sequential Floating Sear ch The principle of the generalized floating search was introduced by Pudil et al. in [22] which is essentially a sequential approach with a distinct ability to ‘ backtr ack ’, i.e. , the selected / discar ded term can be discar ded / selected in later stages. It is worth to note that the floating search can proceed either in forward (bottom-up) or backwar d (top-down) direction. In this study , we focus on the forward or bottom-up approach. This is referred to as Orthogonal Sequential Floating (OSF) search. The pseudo code for OSF is shown in Algorithm 1. Note that the OSF does not include ‘ term swapping ’ procedure (Line 21-31, Algorithm 1), in contrast to the improv ed floating search approach (which will be discussed in Section 3.3). 5 Algorithm 1: Orthogonal Floating Structure Selection. Input : Input-output Data, ( u , y ); Number of required terms, ‘ ξ ’ Output: Identified Structure, X 1 Begin with the empty subset: X ← ∅ , k = 0, J ( X i ) = 0 , ∀ i ∈ [1 , ξ ] 2 while k < ξ do */ Sequential Forward Selection 3 Add the most significant term to X , i.e. , ˆ X ← {X ∪ x MS } 4 if J ( ˆ X ) > J ( X k + 1 ) then 5 X ← ˆ X ; J ( X ) ← J ( ˆ X ) 6 else 7 X ← X k + 1 ; J ( X ) ← J ( X k + 1 ) 8 end 9 k ← k + 1 */ Backwards Elimination 10 Set the first e xclusion flag , f 1 = 1 11 while k > 2 do 12 Identify the least significant term, x LS , in X 13 if ( f 1 = 1 ∧ x LS = x MS ) OR J ( X\ x LS ) ≤ J ( X k − 1 ) then 14 Stop Elimination. 15 else 16 Remov e the least significant term, i.e. , 17 X ← X\ x LS ; J ( X ) ← J ( X\ x LS ); k ← k − 1 18 end 19 f 1 = 0 20 end */ Term Swapping : Included only in OIF 21 for i = 1 to k do 22 Remov e the i th term, i.e. , ˆ X i ← X\ x i 23 Add the most significant term to ˆ X i , i.e. , ˆ X i ← { ˆ X i ∪ x MS } 24 end 25 Select the best swapping subset, i.e. , ˆ X = { ˆ X i | J ( ˆ X i ) = max i = 1: k J ( ˆ X i ) } 26 if J ( ˆ X ) > J ( X k ) then 27 X ← ˆ X ; J ( X ) ← J ( ˆ X ) 28 if k > 2 then 29 Go T o Step 11 for the Backward Elimination 30 end 31 end 32 end Note that the OSF does not include the ‘ T erm Swapping ’ procedure sho wn in Line 21-31. The search process in OSF be gins with an empty term subset and is continued till the term subset with the desired cardinality ‘ ξ ’ is obtained. Each search step essentially in volv es two procedures: inclusion of the most significant term followed by the removal of the least significant term / terms through backtr acking . This procedure is discussed briefly in the following: Let X k denote a term subset with k number of terms at a particular search step. First, follo wing Definition 1, the most significant term ( x M S ) with respect to X k , is identified from the pool of av ailable terms. This term is included in the term subset provided it leads to a better criterion function, i.e. , J ( X k ∪ x M S ) > J ( X k + 1 ). This procedure is outlined in Lines 3-9, Algorithm 1. After including a term, the focus is on removing least-significant terms present in the 6 Figure 1: Search Behavior of OSF for the numerical example with ξ = 6. Note that the ‘backtracking’ at Step 6 led to better subsets, e.g. , J ( X + 2 ) > J ( X 2 ), J ( X + 3 ) > J ( X 3 ) and so on. T able 1: Search Steps In volv ed in OSF † Step ξ s t e p Subset † J ( · ) 1 1 X 1 = { x 1 } 0.7454 2 2 X 2 = { x 1 , T 1 } 0.8609 3 3 X 3 = { x 1 , x 3 , T 1 } 0.9496 4 4 X 4 = { x 1 , x 3 , x 4 , T 1 } 0.9636 5 5 X 5 = { x 1 , x 3 , x 4 , x 5 , T 1 } 0.9708 6 2 X + 2 = { x 2 , x 5 } 0.8804 7 3 X + 3 = { x 2 , x 3 , x 5 } 0.9795 8 4 X + 4 = { x 2 , x 3 , x 5 , T 2 } 0.9937 9 5 X + 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } 0.9986 10 6 X 6 = { x 1 , x 2 , x 3 , x 4 , x 5 , T 3 } 0.9986 † System T erms: x 1 → c , x 2 → y ( k − 1), x 3 → u ( k − 2), x 4 → y ( k − 2) 2 , x 5 → u ( k − 1) 2 Spurious T erms: T 1 → y ( k − 1) u ( k − 1) 2 , T 2 → y ( k − 1) y ( k − 2) 2 , T 3 → u ( k − 1) u ( k − 2) 2 selected term subset through adaptive backtracking, as sho wn in Lines 10-20, Algorithm 1. It is worth to emphasize that the backtracking continues to remov e the least significant term until an improvement in the criterion function is obtained, i.e. , J ( X k \ x LS ) > J ( X k − 1 ). T o gain an insight into the search dynamics of the proposed OSF , consider the following system. S 1 : y ( k ) = 0 . 5 + 0 . 5 y ( k − 1) + 0 . 8 u ( k − 2) − 0 . 05 y ( k − 2) 2 + u ( k − 1) 2 + e ( k ) (10) For identification purposes, 1000 input-output data-points are generated by exciting the system S 1 with zero-mean uniform white noise sequence with unit variance, u ∼ W U N (0 , 1) and Gaussian white noise, e ∼ W G N (0 , 0 . 05). The model set of 165 N ARX terms is obtained with the following specifications: [ n u , n y , n l ] = [4 , 4 , 3]. The OSF is applied to identify six significant terms of S 1 , i.e . , ξ = 6. Note that the floating search methods require a priori specification of subset size, ‘ ξ ’. A simple approach to select the appropriate subset size ξ and the associated issues will be discussed in Section 3.5. The search behavior of OSF is explained as follows: In each search step, the term subset, its cardinality and the corresponding criterion function are recorded, which are shown in T able 1 and Fig. 1. For the sake of clarity , the system terms and spurious terms are respecti vely denoted by ‘ x ’ and ‘ T ’. Further, a better subset for the giv en cardinality is annotated by ‘ + ’, e.g . , X + 2 indicates that J ( X + 2 ) > J ( X 2 ). The positi ve e ff ects of backtracking are clearly evident in Fig. 1. The backtracking occurs after the subset X 5 is obtained. Note that the subsets obtained after backtracking ( X + 2 , X + 3 , X + 4 and X + 5 ) yield improved criterion function in comparison to the previous subsets with the similar cardinality ( X 2 , X 3 , X 4 and X 5 ). A closer examination of subsets given in T able 1 reveals that the backtracking could remov e the spurious term ‘ T 1 ’ which is first included at Step 2 and remains in the selected subsets till Step 5. Further, the subset containing all the system terms, X + 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } , is obtained during backtracking at Step 6. This subset is ev entually selected at Step 9 when the forward inclusion procedure failed to yield a better subset, i.e. , the search is restored to pre vious best subset (in this case X + 5 , as J ( X + 4 ∪ x M S ) < J ( X + 5 ); see Line 4-9, Algorithm 1). 7 Figure 2: Search Behavior of OIF for the numerical example with ξ = 5. Note the backtracking after X 4 leading to better subsets, X + 2 , X + 3 and X + 4 . T able 2: Search Steps In volv ed in OIF † Step ξ s t e p Subset J ( · ) 1 1 X 1 = { x 1 } 0.7454 2 2 X 2 = { x 1 , T 1 } 0.8609 3 3 X 3 = { x 1 , x 3 , T 1 } 0.9496 4 4 X 4 = { x 1 , x 3 , x 4 , T 1 } 0.9636 5 2 X + 2 = { x 2 , x 5 } 0.8804 6 3 X + 3 = { x 2 , x 3 , x 5 } 0.9795 7 4 X + 4 = { x 2 , x 3 , x 5 , T 2 } 0.9937 8 5 X 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } 0.9986 † System T erms: x 1 → c , x 2 → y ( k − 1), x 3 → u ( k − 2), x 4 → y ( k − 2) 2 , x 5 → u ( k − 1) 2 Spurious T erms: T 1 → y ( k − 1) u ( k − 1) 2 , T 2 → y ( k − 1) y ( k − 2) 2 3.3. Orthogonal Impr oved Floating Sear ch The next algorithm considered in this study is based on the improved floating search proposed in [25]. This algo- rithm adds a new procedure referred to as ‘ swapping ’ to replace a weak feature, besides retaining both the procedures of the floating search. The impro ved floating search is adapted for the structure selection by including the ERR metric associated with orthogonal least square algorithms of [4]. This is referred to as Orthogonal Improved Floating (OIF) search and discussed briefly in the following. The procedures in volv ed in OIF are shown in Algorithm 1. As discussed earlier , OIF retains the ‘ forward in- clusion ’ (Line 3-9, Algorithm 1) and ‘ bac ktrac king ’ (Line 10-20, Algorithm 1) procedures of the OSF and the same discussions are valid here. In addition, the swapping procedure is introduced after backtrac king as shown in Line 21- 31. The objective of this procedure is to replace a weak / non-significant term which is e xplained as follo ws: Assume that X k = { x 1 , x 2 , . . . x k } is the term subset obtained after bac ktracking . T o replace a non-significant term, sev eral ne w candidate term subsets are generated from X k . The i th candidate term subset (denoted as ˆ X i ) is generated from X k in the following tw o steps: 1. First, the i th term is remov ed from X k , i.e. , ˆ X i ← {X k \ x i } 2. Next, the most significant term with respect to ˆ X i is included, i.e. , ˆ X i ← { ˆ X i ∪ x M S } Follo wing these steps, a total of ‘ k ’ candidate term subsets are obtained from X k and the subset with the highest criterion function, J ( · ), is referred to as ‘ swapping subset ’(denoted by ˆ X ). If the swapping subset yields an improved criterion function, then it replaces the current term subset X k and sent for backtracking, as outlined in Line 26-31, Algorithm 1. Thus, OIF takes a two-pronged approach to remove weak / non-significant terms: the backtrac king removes the weak term / terms and the swapping replaces a weak term. Especially , the swapping procedure encourages the explo- ration of new term subsets which is likely to yield improv ed search performance in comparison to OSF . T o in vestigate this further, the OIF is applied to identify the significant terms of the system S 1 considered in (10) under the similar test conditions. The final subsets obtained in each step and the corresponding criterion function are shown in T able 2. The varia- tions in the criterion function are sho wn in Fig. 2. Though, the ov erall search dynamics of both OSF (Fig. 1) and OIF (Fig. 2) may appear similar in nature, it is interesting to note that the backtracking in OIF occurs one step earlier (Step 8 Algorithm 2: Orthogonal Oscillating Search (O 2 S) for Structure Selection Input : Input-output Data, ( u , y ); Number of required terms, ‘ ξ ’ Output: Identified Structure, X ξ */ Initialize 1 Select the maximum search depth, O ma x 2 Set the search depth, o = 1 and ‘ flags ’, f 1 = 0, f 2 = 0 3 X ξ ← ∅ 4 for i = 1 to ξ do 5 Add the most significant term to X ξ , i.e. , X ξ ← {X ξ ∪ x MS } 6 end 7 while o < O ma x do */ Down Swing 8 Remov e ‘ o ’ number of least significant terms from X ξ , i.e. , X ξ − o ← X ξ \X LS o 9 Add ‘ o ’ number of most significant terms to X ξ − o , i.e. , ˆ X ξ ← {X ξ − o ∪ X MS o } 10 if J ( ˆ X ξ ) > J ( X ξ ) then 11 X ξ ← ˆ X ξ ; J ( X ξ ) ← J ( ˆ X ξ ); f 1 ← 0 */ better subset is found 12 else 13 f 1 ← 1 */ down swing is unsuccessful 14 end 15 if f 1 = 1 ∧ f 2 = 1 then 16 o = o + 1 */ increase search depth 17 end */ Up Swing 18 Add ‘ o ’ number of most significant terms to X ξ , i.e. , X ξ + o ← {X ξ ∪ X MS o } 19 Remov e ‘ o ’ number of least significant terms from X ξ + o , i.e. , ˆ X ξ ← X ξ + o \X LS o 20 if J ( ˆ X ξ ) > J ( X ξ ) then 21 X ξ ← ˆ X ξ ; J ( X ξ ) ← J ( ˆ X ξ ); f 2 ← 0 */ better subset is found 22 else 23 f 2 ← 1 */ up swing is unsuccessful 24 end 25 if f 1 = 1 ∧ f 2 = 1 then 26 o = o + 1 */ increase search depth 27 end 28 end 5, T able 2) in comparison to OSF (Step 6, T able 1). This could be e xplained by the swapping procedure of OIF which enables the inclusion of missing system term ‘ x 2 ’ one step earlier . 3.4. Orthogonal Oscillating Sear ch The third algorithm considered in this study is the oscillating search introduced by Somol and Pudil in [24] which is the natural successor of the floating search principle. It retains one of the key properties of floating search, i.e. , the ability to backtr ack . Unlike the floating search principle, where the focus is on the individual feature, the oscillating search focuses on a subset of features. The basic search engine in this method is the ‘ swing ’ procedure where the subset under consideration is perturbed by successi ve addition / r emoval and r emoval / addition of multiple features. In this study , the oscillating search is also adapted for the structure selection by the introduction of ‘orthogonalization’ 9 T able 3: Search Steps In volv ed in O 2 S with ξ = 5 and O ma x = 2 Step Search Depth ‘ o ’ ξ s t e p Subset † J ( · ) f 1 f 2 Remark 1 - 5 X 5 = { x 1 , x 3 , x 4 , x 5 , T 1 } 0.97079 0 0 Initial Model 2 1 4 X 4 = { x 1 , x 3 , x 4 , T 1 } Down Swing Did Not Improv e 3 5 X 5 = { x 1 , x 3 , x 4 , x 5 , T 1 } 0.97079 0 0 4 1 6 X 6 = { x 1 , x 2 , x 3 , x 4 , x 5 , T 2 } Up Swing Improv ed 5 5 X + 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } 0 . 99856 1 0 6 1 4 X 4 = { x 2 , x 3 , x 4 , x 5 } Down Swing Did Not Improv e 7 5 X 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } 0.99856 1 0 8 1 6 X 6 = { x 1 , x 2 , x 3 , x 4 , x 5 , T 2 } Up Swing Did Not Improv e 9 5 X 5 = { x 1 , x 2 , x 3 , x 4 , x 5 } 0.99856 1 1 10 2 3 X 3 = { x 2 , x 3 , x 5 } Down Swing Did Not Improv e 11 5 X 5 = { x 2 , x 3 , x 5 , T 3 , T 4 } 0.99505 1 1 † System T erms: x 1 → c , x 2 → y ( k − 1), x 3 → u ( k − 2), x 4 → y ( k − 2) 2 , x 5 → u ( k − 1) 2 Spurious T erms: T 1 → y ( k − 1) u ( k − 1) 2 , T 2 → u ( k − 1) u ( k − 2) 2 , T 3 → y ( k − 1) y ( k − 2) 2 , T 4 → y ( k − 2) 3 and referred to as Orthogonal Oscillating Search (O 2 S). The steps in volved in O 2 S are outlined in Algorithm 2 and discussed briefly in the following: Let ‘ ξ ’ denote the required number of terms. The search is initialized with a subset, X ξ , containing ξ number of terms. This initial subset is obtained by successi vely adding the most significant terms (See Definition 1) as outlined Line 4-6, Algorithm 2. Subsequently , this subset is perturbed throughout the search process by the ‘ swing ’ procedures. The rationale behind the swing procedure is that a better subset could be obtained by replacing multiple (say , ‘ o ’) number of weak or non-significant terms present in the current subset with the similar number of rele vant or significant terms. This could be achiev ed by the following two ‘swing’ procedures: 1) Do wn Swing and 2) Up Swing. During the ‘ down swing ’, at first ‘ o ’ number of least significant terms in X ξ are remov ed which yields a subset of ( ξ − o ) terms, X ξ − o . Subsequently , ‘ o ’ number of most significant terms are added to X ξ − o to yield a ne w , and possibly a better subset of ξ terms, ˆ X ξ . This new subset is retained provided the criterion function is improved. The down swing procedure is outlined in Line 8-14, Algorithm 2. The Up-swing procedure, as the name suggests, first adds ‘ o ’ number of most significant terms to X ξ to yield a subset X ξ + o . Next, a ne w subset ˆ X ξ is obtained by removing the o number of least significant terms from X ξ + o . The other aspects are similar to the down swing. This procedure is outlined in Line 18-24, Algorithm 2. It is clear that both swing procedures require identification of the follo wing two subsets with respect to the term subset under consideration: the subset of ‘ o ’ most significant terms (denoted as X M S o ; see Definition 3) and the subset containing ‘ o ’ weak / non-significant terms (denoted as X LS o ; see Definition 4). In this study , X M S o is identified using OSF search procedure described in Section 3.2. The set of weak terms X LS o is identified using the top-down or backwar d sear ch v ariant of OSF . 10 Figure 3: The v ariation in subset cardinality during the O 2 S Search Further , the ‘search depth’ (denoted by ‘ o ’) determines the number of terms which are either to be added or to be remov ed in a particular swing. The search depth is adaptiv e and its value ‘ o ’ is dependent on the search dynamics. The search begins with o = 1 and if two successi ve swings fail to identify a better term subset then it is incremented by 1. The search depth is reset to 1 whenev er swing leads to an improved subset. The search is terminated when ‘ o ’ reaches to a pre-specified maximum search depth, O ma x . Consequently , the depth of search can easily be controlled by varying O ma x . This is one of the distinct and important ability of O 2 S which regulates the search e ff orts as per the prev ailing requirement. T o analyze the search behavior of O 2 S, it is applied to identify system terms of the system S 1 considered in the numerical example (10) with the following specifications: ξ = 5 and O ma x = 2. During this search for system terms, the term subset and the corresponding criterion function obtained in each step are recorded and shown here in T able 3. The change in subset cardinality during the search process is shown in Fig. 3. The search is initialized by forward inclusion of 5 terms, as per Line 4-6, Algorithm 2. This initial subset selects one spurious term, instead of the system term x 2 , as shown in Step 1, T able 3. The search begins by the down swing procedure which does not improve the subset, as seen in Step 3, T able 3. Ho wev er , in the subsequent up-swing, a better term subset, X + 5 , is identified, i.e. , J ( X + 5 ) > J ( X 5 ). Note that at this stage, all the system terms hav e been identified and the spurious term is discarded, as seen in Step 5, T able 3. The subsequent down swing (Step 7) and up swing (Step 9) identifies the same subset with no improvement in the criterion function, J ( · ). Consequently , the search depth is increased by ‘1’, i.e. , o = 2. The search terminates at Step 11 as the down swing could not find a better subset and any further increase in search depth will lead to o > O ma x . Note that the variation in subset cardinality during the aforementioned swing procedures are clearly visible in Fig. 3. Especially , see the drop in subset cardinality at Step 10. The increase in search depth at this step requires the search for the term subset with { ξ − o } = 3 number of terms. 3.5. Selection of Subset Car dinality (Model Or der Selection) The floating search algorithms require the specification of ‘subset cardinality’ or number of terms to be identi- fied. Giv en that this is not known a priori , in this study , the following procedure is followed to estimate the subset cardinality or ‘ model or der ’. For the system under consideration, a search algorithm is applied to identify several term subsets of increasing cardinality in a predefined search interval, denoted by [ ξ min , ξ ma x ]. A set of subsets thus obtained can be used to estimate the model order using an appropriate Information Criterion (IC). The objecti ve here is to locate a ‘ plateau ’ 11 Figure 4: The selection of subset cardinality or ‘model order’. ξ ? denotes the known cardinality of the system. Note that the knee-point for all criteria is obtained at ξ = 5. or ‘ knee-point ’ in the information criteria which would indicate an acceptable compromise for the bias-variance dilemma. The term subsets corresponding to such knee-point or plateau can be selected as the system model. T o further understand this procedure, consider the system S 1 in numerical example (10). F or this system, OIF is applied to identify term subsets of increasing cardinality in the range of [ ξ min , ξ ma x ] = [2 , 20]. Thus, a family identified term subsets, denoted by ‘ Ω ’, is obtained as follows: Ω = {X ξ min , X ξ min + 1 , . . . , X ξ max } (11) Next, for each identified subset, X ξ ∈ Ω , the various information criteria given in Appendix A are determined. Fig. 4 shows the variation in information criteria as cardinality is varied from ξ min to ξ ma x . It is observed that the ‘ knee-point ’ for all the criteria is obtained at ξ = 5. This coincides with the actual cardinality of the system S 1 . In sev eral comparativ e in vestigations [18, 26], the Bayesian Information Criterion (BIC) was found to be com- parativ ely robust among the existing information criteria. Therefore, for the remainder of the study , the cardinality corresponding to the minimum BIC is selected as the model order . Although following this approach the correct model order could be obtained for all test system (as will be shown in Section 5.1), in practice, we recommend the closer inspection of se veral term subsets surrounding the plateau or knee-point; as the existing research indicated that the information criteria tend to ov er-fit [26]. Since the existing research suggest that the dynamics of many nonlinear systems can be captured with 15 or fewer significant terms [10], a pragmatic approach is followed in this study to fix the search interval of the cardinality as follows: [ ξ min , ξ ma x ] = [2 , 20]. This can be considered as a thumb-rule. Howe ver , some exception may exist in certain non-linear systems. A simple procedure to determine the cardinality interv al in such a scenario is discussed in Section 5.2. 4. In vestigation Framework In the following, the frame work of this in vestigation is discussed. The o verall procedure follo wed for the structure selection is shown in Algorithm 3. The test nonlinear system considered in this study are discussed in Section 4.1. 12 Algorithm 3: Orthogonal Floating Structure Selection Input : Input-output Data, ( u , y ) Output: Identified Model, Structure and Coe ffi cients */ Data Pre-processing 1 Generate set of model terms by specifying n u , n y and n l of the N ARX model */ Search for the system structure 2 Select a orthogonal floating search algorithm: OSF , OIF (Algorithm 1) or O 2 S (Algorithm 2) 3 Select the search interval, [ ξ min , ξ ma x ] 4 Ω ← ∅ 5 for eac h ‘k’, k ∈ [ ξ min , ξ ma x ] do 6 Identify subset of ‘ k ’ significant terms, X k 7 Ω ← { Ω ∪ X k } 8 end 9 Select the term-subset ( X alg ) with the minimum BIC, i.e. , X alg = {X i | BI C ( X i ) = arg min BI C ( X i ) , ∀ X i ∈ Ω } Further , the possible search outcomes are discussed in Section 4.2, to ev aluate the term subset found by the search algorithms qualitativ ely . 4.1. T est Non-linear Systems In this study , a total of 6 non-linear system have been selected from the existing in vestigations on structure se- lection [6, 7, 12, 13, 27, 28] and shown here in T able 4. The systems are excited by a white noise sequence having either uniform or Gaussian distribution as sho wn in T able 4. For identification purposes, a total of 1000 input-output data points, ( u , y ), are generated from each system. The structure selection is performed following the principle of cr oss-validation ; where 700 data points are selected for the estimation purpose and the remaining data points are used for v alidation, i.e. , N v = 300. For each system, the N ARX model is generated by setting the input-output lags and the degree of non-linearity to: [ n u , n y , n l ] = [4 , 4 , 3]. This gives the N ARX model set, X model , with a total of 165 terms following (2), i.e . , n = 165. T able 4: T est Non-linear Systems System Known Structure Input ( u ) † Noise ( e ) † S 1 y ( k ) = 0 . 5 + 0 . 5 y ( k − 1) + 0 . 8 u ( k − 2) + u ( k − 1) 2 − 0 . 05 y ( k − 2) 2 + e ( k ) WUN(0 , 1) WGN(0 , 0 . 05) S 2 y ( k ) = 0 . 5 y ( k − 1) + 0 . 3 u ( k − 1) + 0 . 3 u ( k − 1) y ( k − 1) + 0 . 5 u ( k − 1) 2 + e ( k ) WUN(0 , 1) WGN(0 , 0 . 002) S 3 y ( k ) = 0 . 8 y ( k − 1) + 0 . 4 u ( k − 1) + 0 . 4 u ( k − 1) 2 + 0 . 4 u ( k − 1) 3 + e ( k ) WGN(0 , 1) WGN(0 , 0 . 33 2 ) S 4 y ( k ) = 0 . 1586 y ( k − 1) + 0 . 6777 u ( k − 1) + 0 . 3037 y ( k − 2) 2 − 0 . 2566 y ( k − 2) u ( k − 1) 2 − 0 . 0339 u ( k − 3) 3 + e ( k ) WUN(0 , 1) WGN(0 , 0 . 002) S 5 y ( k ) = 0 . 7 y ( k − 1) u ( k − 1) − 0 . 5 y ( k − 2) + 0 . 6 u ( k − 2) 2 − 0 . 7 y ( k − 2) u ( k − 2) 2 + e ( k ) WUN( − 1 , 1) WGN(0 , 0 . 004) S 6 y ( k ) = 0 . 2 y ( k − 1) 3 + 0 . 7 y ( k − 1) u ( k − 1) + 0 . 6 u ( k − 2) 2 − 0 . 7 y ( k − 2) u ( k − 2) 2 − 0 . 5 y ( k − 2) + e ( k ) WUN( − 1 , 1) WGN(0 , 0 . 004) † WUN( a , b ) denotes white uniform noise sequence in the interval [ a , b ]; WGN( µ, σ ) denotes white Gaussian noise sequence with the mean ‘ µ ’ and the variance ‘ σ ’. 13 4.2. Sear ch Outcomes For comparati ve ev aluation purposes, the term subset found by each search algorithm is qualitatively ev aluated. For this purpose, the follo wing term sets are defined with reference to the N ARX model gi ven by (3), • X model : the set containing all terms of the NARX model • X ? : the optimum term subset or the set of system terms, X ? ⊂ X model • X alg : subset of terms identified by the search algorithm, X alg ⊂ X model • X s pur : set of spurious terms which are selected by the search algorithm, b ut are not present in the actual system, i.e. , X s pur = X alg \ X ? • ∅ : the null set On the basis of these definitions, one of the following four search outcome can be e xpected: 1. Identification of the Correct Structur e ( Exact F itting ) : In this scenario the identified model contains all the system terms and does not include any spurious terms, i.e. , X alg = X ? and X s pur = ∅ 2. Over Fitting : The identified model contains all the system terms; however spurious terms are also selected , i.e. , X alg ⊃ X ? and X s pur , ∅ 3. Under F itting-1 : The algorithm fails to identify all the system terms; though it does not include any spurious terms , i.e. , X alg ⊂ X ? and X s pur = ∅ 4. Under F itting-2 : The algorithm fails to identify all the system terms; however spurious terms are selected , i.e. , X alg 2 X ? and X s pur , ∅ Thus, qualitatively , the search is successful when all the system / significant terms are identified, i.e. , when the outcome is either Exact-F itting or Over-F itting . Note that at this stage, the structure identified by the algorithms are unaltered. The inclusion of few spurious terms can be tolerated provided that all significant terms are included ( i.e. Over -Fitting scenario) as the spurious terms can easily be identified and removed through a simple null-hypothesis test on the corresponding coe ffi cients. 5. Results The goal of this study is to inv estigate the suitability of existing feature selection algorithms for the task of non- linear system identification. For this purpose, 3-well known floating search algorithms hav e been adapted in the proposed orthogonal floating search frame work: OSF , OIF and O 2 S, which are discussed in Section 3. The adapted algorithms are initially applied to identify the significant terms of 6 test non-linear systems described in Section 4.1. The search performance of the algorithms is compared on these systems from v arious perspecti ves in Section 5.1. The issues related to selection of cardinality interval [ ξ min , ξ ma x ] are discussed in Section 5.2. Further , it is w orth to note that while the proposed orthogonal floating search can work with any suitable criterion function, in this study , the Error-Reduction-Ratio (ERR) has been selected for this purpose due to its relati ve simplic- ity . Ho wev er , ERR is often criticized for its ‘ local ’ nature and blamed for the unsatisfactory search outcome. This issue is in vestigated via a numerical e xample in Section 5.3. 14 (a) S 1 (b) S 2 (c) S 3 (d) S 4 (e) S 5 (f) S 6 Figure 5: Model Order Selection. ‘ ξ ? ’ denotes the known cardinality of the system. T able 5: Search Outcomes † System ξ ? OSF OIF O 2 S ξ al g Outcome ξ al g Outcome ξ al g Outcome S 1 5 6 Over-Fitting 5 Exact-Fitting 5 Exact-Fitting S 2 4 4 Exact-Fitting 4 Exact-Fitting 4 Exact-Fitting S 3 4 4 Exact-Fitting 4 Exact-Fitting 4 Exact-Fitting S 4 5 5 Exact-Fitting 5 Exact-Fitting 5 Exact-Fitting S 5 4 4 Exact-Fitting 4 Exact-Fitting 4 Exact-Fitting S 6 5 5 Exact-Fitting 5 Exact-Fitting 5 Exact-Fitting † See Section 4.2 for the definitions of search outcomes Finally , the proposed orthogonal floating algorithms are applied to identify a discrete model of a continuous time system. The identified discrete model is v alidated through generalized frequency response functions. This part of the in vestigation is discussed in Section 5.4. 5.1. Comparative Evaluation The objectiv e of this part of the study is to compare the performance of orthogonal floating search algorithms. Follo wing the procedure outlined Algorithm 3, the significant terms of the system shown in T able 4 are identified. It is worth to note that while OSF and OIF do not have any control parameter , O 2 S requires the selection of maximum search depth, O ma x . In this study , a maximum of 25% terms is allo wed to be exchanged, i.e. , O ma x = d 0 . 25 × min { ξ , n − ξ }e . The outcomes of model order selection are shown in Fig 5. Note that the ‘knee-point’ in BIC coincides with 15 (a) S 1 + OS F (b) S 1 + O I F (c) S 1 + O 2 S (d) S 2 + OS F (e) S 2 + O I F (f) S 2 + O 2 S Figure 6: T erm Selection Beha vior-I. ξ ? denotes actual cardinality of the system. The ‘dotted’ vertical line indicate the v alue ξ when all the system terms are identified. the known cardinality (actual number of terms) of the systems. The selected cardinality (number of terms) for each system is shown in T able 5 along with the qualitativ e search outcomes. It is interesting to see that the best possible search outcome, ‘ Exact-F itting ’, is obtained for all combinations of system and search algorithm, except one. For the system S 1 , an ov er-fitted model (with one spurious term) is selected by OSF as seen in Fig. 5a and T able 5. Note that this spurious term can easily be removed via a simple null-hypothesis test. Nev ertheless, it is pertinent to identify the cause of this ‘ov er-fitting’ which could be ascribed either to the information criterion or to the search algorithm. T o in vestigate this further , the term selection behavior of the algorithms is determined. In particular , as the car- dinality , ξ , is increased progressively from ξ min to ξ ma x , the selection frequency of system terms is observed over the subsets identified by the algorithm, as follows: τ i ,ξ = 1 , if x i ∈ X ξ 0 , otherwise , ∀ X ξ ∈ Ω (12) where, ‘ τ i ,ξ ’ encodes whether the i th term, ‘ x i ’, is included in the term subset X ξ identified by the algorithm. Following this procedure, the term selection behavior is determined for all algorithms and sho wn here in Fig. 6, 7 and 8. W e first focus on the ‘ o ver-fitting ’ outcome by OSF on S 1 . The term selection by OSF for S 1 is depicted in Fig. 6a. It is observed that all the system terms are first identified at ξ = 6 which is slightly higher than ξ ? . This explains the ‘ov er-fitting’ outcome. For the same system, OIF (Fig. 6b) and O 2 S (Fig. 6c) could identify all system terms from ξ = ξ ? . This further underlines the e ffi cacy of the ‘ term swapping ’ procedure introduced in OIF . Further , although the BIC could identify correct model order for all the systems in this study , the possibility 16 (a) S 3 + OS F (b) S 3 + O I F (c) S 3 + O 2 S (d) S 4 + OS F (e) S 4 + O I F (f) S 4 + O 2 S Figure 7: T erm Selection Beha vior-II. ξ ? denotes actual cardinality of the system. The ‘dotted’ vertical line indicate the v alue ξ when all the system terms are identified. of ‘over -fitting’ cannot be ruled out. W e therefore focus on the search behavior for ξ > ξ ? . It is easier to follow that all the system terms must be included in the term subsets with cardinality ξ ≥ ξ ? . Thus it is desirable to hav e τ i ,ξ = 1 , ∀ ξ ≥ ξ ? . If this condition is satisfied then the correct system structure can still be obtained from the over - fitted structures through an appropriate secondary refinement procedure, e.g. , null-hypothesis test on the coe ffi cients. The results show that this condition is satisfied for all systems by OIF and O 2 S, as seen in Fig. 6, 7 and 8. OSF could also satisfy this condition for all the systems except S 1 . 5.2. Comments on the Interval Selection The objective of this part of the study is to discuss the issues related to the selection of the cardinality interval. Giv en that the cardinality of the system under consideration is not kno wn a priori , a careful selection of the cardinality interval is crucial. In the pre vious subsections, a pragmatic approach is followed to select the cardinality interv al, i.e. , [ ξ min , ξ ma x ] = [2 , 20]; since in practice, ov er-parameterized models with higher cardinality are usually not desirable. Howe ver , the selection of cardinality interval is system dependent. Therefore, the cardinality interval should be selected judiciously , especially for the higher order systems. In order to gain further insight into this issue, consider 17 (a) S 5 + OS F (b) S 5 + O I F (c) S 5 + O 2 S (d) S 6 + OS F (e) S 6 + O I F (f) S 6 + O 2 S Figure 8: T erm Selection Behavior-III. ξ ? denotes actual cardinality of the system. The ‘dotted’ vertical line indicate the v alue ξ when all the system terms are identified. the following nonlinear system with 23 terms, S 7 : y ( k ) = 0 . 8833 u ( k − 1) + 0 . 0393 u ( k − 2) + 0 . 8546 u ( k − 3) + 0 . 8528 u ( k − 1) 2 (13) + 0 . 7582 u ( k − 1) u ( k − 2) + 0 . 1750 u ( k − 1) u ( k − 3) + 0 . 0864 u ( k − 2) 2 + 0 . 4916 u ( k − 2) u ( k − 3) + 0 . 0711 u ( k − 3) 2 − 0 . 0375 y ( k − 1) − 0 . 0598 y ( k − 2) − 0 . 0370 y ( k − 3) − 0 . 0468 y ( k − 4) − 0 . 0476 y ( k − 1) 2 − 0 . 0781 y ( k − 1) y ( k − 2) − 0 . 0189 y ( k − 1) y ( k − 3) − 0 . 0626 y ( k − 1) y ( k − 4) − 0 . 0221 y ( k − 2) 2 − 0 . 0617 y ( k − 2) y ( k − 3) − 0 . 0378 y ( k − 2) y ( k − 4) − 0 . 0041 y ( k − 3) 2 − 0 . 0543 y ( k − 3) y ( k − 4) − 0 . 0603 y ( k − 4) 2 + e ( k ) For the identification purposes, 1000 input-output data points are gathered with u ∼ W U N (0 , 1) and e ∼ W G N (0 , 0 . 01 2 ). A total of 286 candidate terms are obtained with the following specification for the NARX model: [ n u , n y , n l ] = [5 , 5 , 3]. Initially , the system model is identified follo wing the similar procedure, where the cardinality interval is specified as: [ ξ min , ξ ma x ] = [2 , 20]. The outcome of the cardinality selection for this interval is shown in Fig. 9a which sho ws that the BIC is minimum at ξ = 20. Howe ver , it is not certain whether this is the desired ‘knee-point’. Therefore, the cardinality interval is increased to ξ ma x = 50. The consequent outcome is sho wn in Fig. 9b. This result indicates a continual increase in BIC for ξ ≥ 30 and thereby confirms that the plateau around ξ ∈ [22 , 29] does contain the desired knee-point. As illustrated by this numerical example, the cardinality interval can easily be adjusted by observing the con- 18 (a) ξ ma x = 20 (b) ξ ma x = 50 Figure 9: Determination of Cardinality Interv al sequent ‘ ξ vs. BIC’ relationship. W e recommend the OSF to determine / adjust the cardinality interv al due to its inexpensi ve computational requirements. 5.3. Orthogonal Floating Sear ch Under Slow V arying Input As discussed in Section 3.1, the present inv estigation uses the Error-Reduction-Ratio (ERR), instead of the other performance metrics, such as simulation error, ultra least square criterion. Howe ver , in recent years, the OFR-ERR has been criticized for leading to suboptimal search performance under certain scenarios [6, 7, 13, 15], where it is argued that this is due to the ERR criterion. Howe ver , the results of this inv estigation on se veral benchmark nonlinear systems con vincingly demonstrate that ERR could be an e ff ectiv e metric, pro vided it is paired with an appropriate search strate gy . Thus, it can be ar gued that the suboptimal performance of OFR-ERR may be the consequence of the search strategy and not of the performance metric. Note that OFR-ERR is essentially a unidirectional sequential search approach which leads to the ‘ nesting e ff ect ’, i.e . , once a term is included it cannot be discarded. Thus the search performance may significantly be improved if the nesting e ff ect could be alleviated. The proposed orthogonal search framework e ff ecti vely addresses this issue and therefore it can yield better search performance ev en with a relati vely simple metric such as ERR. T o demonstrate this, we consider the follo wing numerical example, which has been used by various researchers for comparativ e in vestigation [13, 15, 16]: S 8 : w ( k ) = u ( k − 1) + 0 . 5 u ( k − 2) + 0 . 25 u ( k − 1) u ( k − 2) − 0 . 3 u ( k − 1) 3 (14) y ( k ) = w ( k ) + 1 1 − 0 . 8 z − 1 e ( k ) , e ( · ) ∼ W G N (0 . 02) with u ( k ) = 0 . 3 1 − 1 . 6 z − 1 + 0 . 64 z − 2 v ( k ) , v ( · ) ∼ W G N (0 , 1) where, ‘ y ( · )’ is the observ ed value of ‘ w ( · )’. The system is excited with the slow-v arying input u ( · ) which is an AR process with a repeat pole at 0.8. A total of 165 candidate terms are obtained by the following N ARX specifications: [ n u , n y , n l ] = [4 , 4 , 3]. The system is identified by the follo wing three algorithms: OFR-ERR, OIF and O 2 S. The search dynamics of the compared algorithms are recorded and are sho wn in T able 6 (OFR-ERR), T able 7 (OIF) and T able 8 (O 2 S). Note that, initially , all the algorithms selected the autoregressiv e terms ‘ y ( k − 1)’ and ‘ y ( k − 2)’. As seen in T able 6, OFR-ERR could not remove the autoregressiv e terms due to the nesting e ff ect . Furthermore, sev eral other spurious terms have 19 T able 6: OFR-ERR Search Dynamics for S † 8 ξ Subset ‡ J ( · ) 1 { y ( k − 1) } 0.87426 2 { y ( k − 1) , y ( k − 2) } 0.92403 3 { y ( k − 1) , y ( k − 2) , u ( k − 1) } 0.94207 4 { y ( k − 1) , y ( k − 2) , u ( k − 1) , u ( k − 1) 2 } 0.98738 5 { y ( k − 1) , y ( k − 2) , u ( k − 1) , u ( k − 1) 2 , u ( k − 1) 3 } 0.99383 6 { y ( k − 1) , y ( k − 2) , u ( k − 1) , u ( k − 1) 2 , u ( k − 1) 3 , u ( k − 2) 3 } 0.99428 7 { y ( k − 1) , y ( k − 2) , u ( k − 1) , u ( k − 1) 2 , u ( k − 1) 3 , u ( k − 2) 3 , u ( k − 3) 3 } 0.99871 † spurious terms are shown in bold face ‡ The following system terms ha ve not been included: u ( k − 2), u ( k − 1) u ( k − 2) T able 7: OIF Search Dynamics for S † 8 Step ξ s t e p Subset J ( · ) 1 1 { y ( k − 1) } 0.87418 2 2 { y ( k − 1) , y ( k − 2) } 0.92385 3 3 { y ( k − 1) , u ( k − 1) 3 , u ( k − 2) 3 } 0.98635 4 3 { u ( k − 1) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99632 5 4 { u ( k − 1) , u ( k − 2) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99757 † spurious terms are shown in bold face T able 8: O 2 S Search Dynamics for S † 8 Step Search Depth ‘ o ’ ξ s t e p Subset J ( · ) Remarks 1 - 4 { y ( k − 1) , y ( k − 2) , u ( k − 1) 3 , u ( k − 2) 3 } 0.98717 Initial Model 2 1 3 { u ( k − 1) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99632 Down Swing Improv ed 3 4 { u ( k − 1) , u ( k − 2) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99757 4 1 5 { y ( k − 1) , u ( k − 1) , u ( k − 2) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99758 Up Swing Did Not Improv e 5 4 { u ( k − 1) , u ( k − 2) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99757 6 1 3 { u ( k − 1) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99632 Down Swing Did Not Improv e 7 4 { u ( k − 1) , u ( k − 2) , u ( k − 1) u ( k − 2) , u ( k − 1) 3 } 0.99757 † spurious terms are shown in bold face been selected instead of two system terms: ‘ u ( k − 2)’ and ‘ u ( k − 1) u ( k − 2)’. In contrast, OIF could easily remo ve the autoregressi ve terms through swapping procedure (see Step 3 and 4, T able 7). Similarly , the autoregressiv e terms are replaced by the system terms and the correct structure is identified in the first down-swing procedure of O 2 S (see Step 3, T able 8). 20 It is obvious that the floating frame work enabled OIF and O 2 S to identify a better subset for the giv en cardinality . For example, all the system terms are identified by OIF and O 2 S at ξ = 4 with the criterion function J ( · ) = 0 . 99757 (see T able 7-8). In contrast, OFR-ERR identified only one system term at ξ = 4. This is further reflected in the relativ ely lower value of the criterion function, J ( · ) = 0 . 98738 (see T able 6). This example thus clearly demonstrate that ERR could be an e ff ectiv e performance metric when it is paired with a proper search strategy . It is worth to emphasize that, for identification purposes, the input signal to the system should be persistently exciting in nature. The example considered in (14) is the e xception to this fundamental fact, and it represents a worst- case scenario. In practice, such data should not be used for identification purposes. This example has been considered only for comparativ e analysis. 5.4. Discr ete models of Continuous-time Systems The e ff ectiv eness of the proposed framew ork is further demonstrated by fitting a polynomial N ARX model to a continuous-time system. Since a discrete model of a continuous time system is not unique, the identified discrete-time model is validated by comparing Generalized Frequency Response Functions (GFRFs). Note that if the identified model has captured the system dynamics, the corresponding GFRFs should match. For this purpose, the Du ffi ng ’ s Oscillator is considered, which is gi ven by , ¨ y ( t ) + 2 ζ ω n ˙ y ( t ) + ω 2 n y ( t ) + ω 2 n ε y ( t ) 3 − u ( t ) = 0 (15) where, ω n = 45 π , ζ = 0 . 01 and ε = 3. The input-output data are generated by exciting the system with u ( · ) ∼ W U N (0 , 1) and the output is sampled at 500 H z . A total of 1000 data points are generated. The discrete NARX model is fitted using 700 data points, and the remaining 300 data points are used for v alidation. The super-set containing a total of 286 N ARX terms is obtained by the following specifications: [ n u , n y , n l ] = [5 , 5 , 3]. The following model is identified by all the proposed algorithms OIS, OIF and O 2 S: y ( k ) = 1 . 9152 y ( k − 1) − 0 . 99436 y ( k − 2) + 1 . 983 × 10 − 6 u ( k − 1) (16) + 1 . 9792 × 10 − 6 u ( k − 2) − 0 . 34289 y ( k − 1) 3 + 0 . 22813 y ( k − 2) y ( k − 1) 2 − 1 . 079 × 10 − 7 u ( k − 1) y ( k − 1) 2 − 0 . 11847 y ( k − 1) y ( k − 2) 2 The fitting error obtained ov er the validation data points is 3 . 96 × 10 − 6 . The linear and the third order GFRFs of the continuous system are shown in Fig. 10a, 10c and 10e. As indicated by these results, the frequency response of the Du ffi ng’ s oscillator is characterized by the presence of se veral ‘ridges’ and ‘peaks’. The ridges occur when the system is excited by the linear resonant frequency ( i.e . , f 1 , f 2 or f 3 = ± 22 . 5 Hz) or when the follo wing condition is satisfied: f 1 + f 2 + f 3 = ± 22 . 5. These ridges are sho wn by ‘dotted’ lines in the contour plot shown in Fig. 10e. Further , the peaks occur whenev er all frequencies are equal to ± 22 . 5. Note that these observations are in line with the earlier in vestigation [29]. The GFRFs obtained with the discrete identified model are shown in Fig. 10b, 10d and 10f. It is clear that the identified model could capture all the characteristics features of the Du ffi ng’ s oscillator . 6. Discussion & Conclusions A ne w Orthogonal Floating Search Framework has been proposed to identify significant terms of polynomial N ARX models. The proposed framework integrates the classical ERR metric with floating search algorithms which retains the simplicity of OFR while eliminating its nesting e ff ects. Follo wing this framework, three well-known 21 (a) Linear GFRF (continuous) (b) Linear GFRF (discrete) (c) Third Order GFRF (continuous) (d) Third Order GFRF (discrete) (e) Third Order GFRF Contour (continuous) (f) Third Order GFRF Contour (discrete) Figure 10: Linear and Third Order Frequency Response of Du ffi ng’ s oscillator . For the third order GFRF f 3 = f 1 . Note that in the contour plots ridges align at f 1 + f 2 + f 3 = ± 22 . 5. feature selection algorithms, such as SFFS, IFFS and OS, hav e been adapted to solve the structure selection problem. The e ff ecti veness of this approach has been demonstrated considering se veral benchmark nonlinear systems including a system excited by a slowly varying input. The later system is often considered as a worst-case scenario. Further , a discrete-time model is fitted to Du ffi ng’ s oscillator and it is validated by comparing generalized frequency response functions. These results convincingly demonstrate that the floating feature selection algorithms can easily be tailored 22 for the purpose of structure selection in nonlinear system identification. For example, relati vely simple procedures of the floating search, such as bac ktrac king , term swapping and swing , can e ff ecti vely counter the nesting e ff ect and thereby yield significant improvement in the search performance (as shown in the results). The results of this in vestigation support the argument that the suboptimal performance of OFR-ERR is due in major part to its poor search strategy . Appendix A. Information Criteria Let X ξ , ξ ∈ [ ξ min , ξ ma x ] denote a term subset identified the search algorithm. For this subset and the corresponding model- pr edicted output ( ˆ y ), the information criteria are giv en by , • Akaike Information Criterion (AIC): AI C ( X ξ ) = N v ln( E ) + %ξ, with % = 2 (A.1) • Bayesian Information Criterion (BIC): BI C ( X ξ ) = N v ln( E ) + ln( N v ) ξ (A.2) • Final Prediction Error (FPE): F PE ( X ξ ) = N v ln( E ) + N v ln( N v + ξ N v − ξ ) (A.3) • Khundrin’ s Law of Iterated Logarithm Criterion (LILC): L I L C ( X ξ ) = N v ln( E ) + 2 ξ ln ln N v (A.4) where,‘ N v ’ denotes the length of the validation data; E = 1 N v N v P k = 1 [ y k − ˆ y k ] 2 References [1] S. A. Billings, Nonlinear system identification: NARMAX methods in the time, frequency , and spatio-temporal domains, John Wile y & Sons, 2013. [2] L. Ljung, System Identification : Theory for the User , Prentice Hall PTR, Upper Saddle Riv er , NJ, USA, 1999. [3] X. Hong, R. J. Mitchell, S. Chen, C. J. Harris, K. Li, G. W . Irwin, Model selection approaches for non-linear system identification: a revie w , International journal of systems science 39 (10) (2008) 925–946. [4] S. A. Billings, S. Chen, M. J. K orenberg, Identification of MIMO non-linear systems using a forward-regression orthogonal estimator , International Journal of Control 49 (6) (1989) 2157–2189. [5] T . Baldacchino, S. R. Anderson, V . Kadirkamanathan, Structure detection and parameter estimation for NARX models in a unified EM framew ork, Automatica 48 (5) (2012) 857–865. [6] T . Baldacchino, S. R. Anderson, V . Kadirkamanathan, Computational system identification for bayesian NARMAX modelling, Automatica 49 (9) (2013) 2641–2651. [7] A. Falsone, L. Piroddi, M. Prandini, A randomized algorithm for nonlinear model structure selection, Automatica 60 (2015) 227–238. [8] X. T ang, L. Zhang, X. W ang, Sparse augmented lagrangian algorithm for system identification, Neurocomputing. [9] M. Korenber g, S. Billings, Y . Liu, P . McIlroy , Orthogonal parameter estimation algorithm for non-linear stochastic systems, International Journal of Control 48 (1) (1988) 193–210. [10] S. Chen, S. A. Billings, W . Luo, Orthogonal least squares methods and their application to non-linear system identification, International Journal of control 50 (5) (1989) 1873–1896. [11] N. Chiras, C. Evans, D. Rees, Nonlinear gas turbine modeling using NARMAX structures, IEEE Transactions on Instrumentation and Measurement 50 (4) (2001) 893–898. [12] K. Mao, S. Billings, Algorithms for minimal model structure detection in nonlinear dynamic system identification, International journal of control 68 (2) (1997) 311–330. [13] L. Piroddi, W . Spinelli, An identification algorithm for polynomial N ARX models based on simulation error minimization, International Journal of Control 76 (17) (2003) 1767–1781. [14] H. L. W ei, S. A. Billings, Model structure selection using an integrated forward orthogonal search algorithm interfered with squared correla- tion and mutual information, Automatic Control and Systems Engineering, Univ ersity of She ffi eld, 2006. [15] Y . Guo, L. Guo, S. A. Billings, H.-L. W ei, An iterative orthogonal forward regression algorithm, International Journal of Systems Science 46 (5) (2015) 776–789. 23 [16] Y . Guo, L. Guo, S. Billings, H.-L. W ei, Ultra-orthogonal forward regression algorithms for the identification of non-linear dynamic systems, Neurocomputing 173 (2016) 715 – 723. [17] K. Li, J.-X. Peng, E.-W . Bai, A two-stage algorithm for identification of nonlinear dynamic systems, Automatica 42 (7) (2006) 1189 – 1197. [18] F . Hafiz, A. Swain, E. M. Mendes, N. Patel, Structure selection of polynomial NARX models using two dimensional (2D) particle swarms, in: 2018 IEEE Congress on Evolutionary Computation (CEC), 2018, pp. 1–8. [19] M. Michael, W . Lin, Experimental study of information measure and inter-intra class distance ratios on feature selection and orderings, IEEE T ransactions on Systems, Man, and Cybernetics SMC-3 (2) (1973) 172–181. [20] S. D. Stearns, On selecting features for pattern classifiers, in: Proceedings of the 3rd International Joint Conference on Pattern Recognition, 1976, pp. 71–75. [21] J. Kittler , Feature set search algorithms, Pattern recognition and signal processing (1978) 41–60. [22] P . Pudil, J. Novo vi ˇ cov ´ a, J. Kittler , Floating search methods in feature selection, Pattern Recognition Letters 15 (11) (1994) 1119–1125. [23] P . Somol, P . Pudil, J. Novovi ˇ cov ´ a, P . Paclık, Adaptive floating search methods in feature selection, Pattern Recognition Letters 20 (11) (1999) 1157–1163. [24] P . Somol, P . Pudil, Oscillating search algorithms for feature selection, in: Proceedings 15th International Conference on Pattern Recognition. ICPR-2000, V ol. 2, 2000, pp. 406–409 vol.2. [25] S. Nakariyakul, D. P . Casasent, An impro vement on floating search algorithms for feature subset selection, Pattern Recognition 42 (9) (2009) 1932–1940. [26] P . Stoica, Y . Selen, Model-order selection: a revie w of information criterion rules, IEEE Signal Processing Magazine 21 (4) (2004) 36–47. [27] E. M. A. M. Mendes, Identification of nonlinear discrete systems with intelligent structure detection, Ph.D. thesis, Uni versity of She ffi eld, England, UK (1995). [28] M. Bonin, V . Seghezza, L. Piroddi, NARX model selection based on simulation error minimisation and LASSO, IET control theory & applications 4 (7) (2010) 1157–1168. [29] S. A. Billings, J. C. P . Jones, Mapping non-linear integro-di ff erential equations into the frequency domain, International Journal of Control 52 (4) (1990) 863–879. 24

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment