Micro Hand Gesture Recognition System Using Ultrasonic Active Sensing

In this paper, we propose a micro hand gesture recognition system and methods using ultrasonic active sensing. This system uses micro dynamic hand gestures for recognition to achieve human-computer interaction (HCI). The implemented system, called ha…

Authors: Yu Sang, Laixi Shi, Yimin Liu

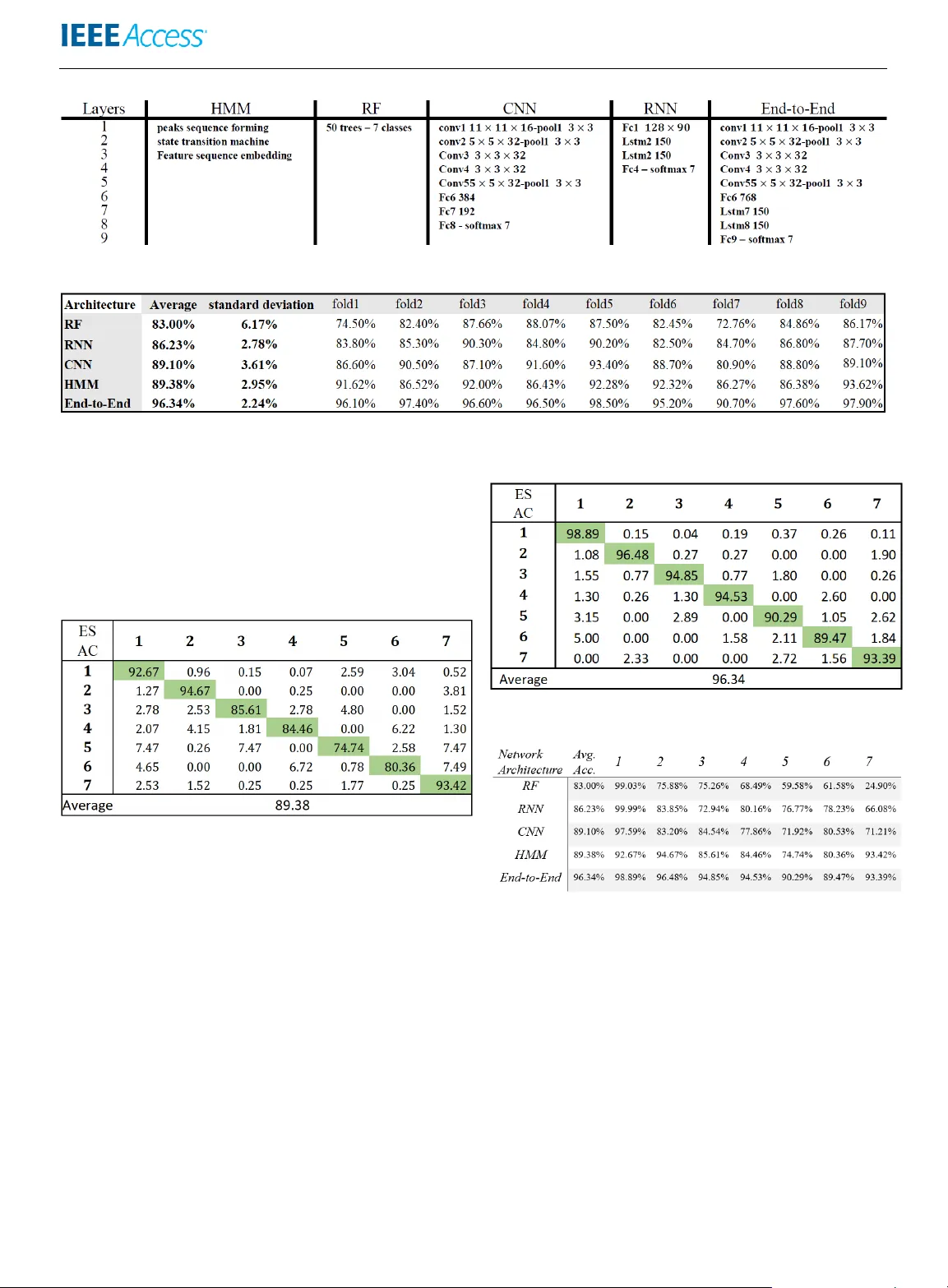

Received June 4, 2018, accepted August 21, 2018, date of publication September 3, 2018, date of current version November, 2024.
Digital Object Identifier 10.1109/ACCESS.2018.2868268

Micro Hand Gesture Recognition System Using Ultrasonic Active Sensing

YU SANG (1), LAIXI SHI (2), AND YIMIN LIU (3) (Member, IEEE)
(1) Department of Electronic Engineering, Tsinghua University, Beijing, 100086 China
(2) Department of Electronic Engineering, Tsinghua University, Beijing, 100086 China
(3) Department of Electronic Engineering, Tsinghua University, Beijing, 100086 China
Corresponding author: Yimin Liu (e-mail: yiminliu@tsinghua.edu.cn).
Revised November 28, 2024. This is an updated arXiv version of the published IEEE Access version.

ABSTRACT In this paper, we propose a micro hand gesture recognition system and methods using ultrasonic active sensing. This system uses micro dynamic hand gestures for recognition to achieve human-computer interaction (HCI). The implemented system, called hand-ultrasonic-gesture (HUG), consists of ultrasonic active sensing, pulsed radar signal processing, and time-sequence pattern recognition by machine learning. We adopt lower-frequency (300 kHz) ultrasonic active sensing to obtain high-resolution range-Doppler image features. Using high-quality sequential range-Doppler features, we propose a state-transition-based hidden Markov model for gesture classification. This method achieves a recognition accuracy of nearly 90% by using symbolized range-Doppler features and significantly reduces the computational complexity and power consumption. Furthermore, to achieve a higher classification accuracy, we utilize an end-to-end neural network model and obtain a recognition accuracy of 96.32%. In addition to offline analysis, a real-time prototype is released to verify our method's potential for application in the real world.
INDEX TERMS Ultrasonic active sensing, range-Doppler, micro hand gesture recognition, hidden Markov model, neural network.

I. INTRODUCTION

Recently, human-computer interaction (HCI) has become increasingly influential in both research and daily life [1]. In the area of HCI, human hand gesture recognition (HGR) can significantly facilitate many applications in different fields, such as driving assistance for automobiles [2], smart houses [3], wearable devices [4], and virtual reality [5]. In application scenarios where haptic controls and touch screens may be physically or mentally limited, such as during driving, hand gestures, as a touchless input modality, are more convenient and attractive.

Among hand gestures, micro hand gestures, as a subtle and natural input modality, have great potential for many applications, such as wearable, portable, and mobile devices [6] [7]. In contrast with wide-range and large-shift actions, such as waving or rolling hands, these micro hand gestures only involve the movements of multiple fingers, such as tapping, clicking, rotating, pressing, and rubbing. They are exactly the ways in which people normally use their hands in the real physical world. Directly using the hand and multiple fingers to control devices is a natural, flexible, and user-friendly input modality that requires less learning effort.

In the hand gesture and micro hand gesture recognition area, various techniques have been applied. Numerous optical methods related to computer vision utilize RGB cameras [8] [9] and depth cameras, such as infrared cameras [10] [11]. Some of these camera-based methods, such as the Microsoft Kinect or Leap Motion, have even been commercialized. These optical approaches that use RGB images or depth information also perform well in subtle finger motion tracking [5] [12].
However, to the best of our knowledge, their reliability in harsh environments, such as in nighttime darkness or under direct sunlight, is an issue owing to the variance of light conditions. It is also not energy efficient for these approaches to achieve continuous real-time hand gesture recognition for HCI, as required for wearable and portable devices [5]. In addition to camera-based sensing, there are other passive sensing methods such as pyroelectric infrared sensing and WiFi signal sensing. These methods have been utilized in HGR and have achieved good results [4] [13].

VOLUME 4, 2016. Yu Sang et al.: Micro Hand Gesture Recognition System Using Ultrasonic Active Sensing

In comparison, active sensing is more robust under different circumstances because probing waveforms can be designed. Technologies of sound [14], magnetic field [15] and radio frequency (RF) [7] active sensing have begun to attract interest. Magnetic sensing achieved a high precision in finger tracking but required fingers to be equipped with sensors [15]. Radar technologies based on sound or RF active sensing can obtain range profile, Doppler and angle of arrival (AOA) features from the received signal. Such features are competitive and suitable for use in identifying the signature of hand gestures [7] [16] [17]. The range resolution is determined by the bandwidth of the RF waves [18]. However, generating RF waves with bandwidths large enough to meet the resolution demand of micro hand gesture recognition is costly. Compared to RF radar, active sensing using sound can meet the high range and velocity resolution demand owing to the low propagation velocity [19] [20] [21]. Furthermore, the hardware of active sensing using sound can be integrated and miniaturized with MMIC or MEMS techniques with low system complexity, thus having great potential to be used in wearable and portable devices [21].
As introduced in [22], ultrasonic active sensors with MEMS technique usually consume less energy than CMOS image sensors.

In this work, we build a system consisting of an active ultrasonic transceiver, range-Doppler feature extraction processing and time-sequence pattern recognition methods to recognize different kinds of micro hand gestures. We named this system HUG (hand-ultrasonic-gesture). The HUG system transmits ultrasonic waves and receives echoes reflected from the palms and fingers. After that, we implement a pulsed Doppler radar signal processing technique to obtain time-sequential range-Doppler features from ultrasonic waves, measuring objects' precise distances and velocities simultaneously through a single transceiving channel. Making use of the high-resolution features, we propose a state-transition-based hidden Markov model (HMM) approach for micro dynamic gesture recognition and achieve a competitive accuracy. For comparison and evaluation, we also implement methods such as random forest, a convolutional neural network (CNN), a long short-term memory (LSTM) neural network, and an end-to-end neural network. A brief introduction of this work was given in [23]. In this paper, we give the details and further performance evaluation of the HUG system.

The main contributions of this work are as follows:

• A micro hand gesture recognition system using ultrasonic active sensing has been implemented. Considering the high-resolution range-Doppler features, we propose a state transition mechanism-based HMM to represent the features, significantly compressing the features and extracting the most intrinsic signatures. This approach achieves a classification accuracy of 89.38% on a dataset of seven micro hand gestures. It also greatly improves the computational and energy efficiency of the recognition part and provides more potential for portable and wearable devices.
• With the higher resolution of the range-Doppler features, an end-to-end neural network model has been implemented, achieving a classification accuracy of 96.34% on the same dataset. Furthermore, we have released a real-time micro hand gesture recognition prototype that demonstrates real-time control of a music player, verifying our system's real-time feasibility.

FIGURE 1: Pipeline Design Block Diagram

The rest of the paper is organized as follows: in Section II, related works performed in recent years are discussed. In Section III, we formally describe our micro hand gesture recognition system HUG, including the system design, signal processing technologies and machine learning methods for recognition. In Section IV, we introduce the experiments designed for our system, including implementation and performance evaluation. We finally conclude our work, followed by future work and acknowledgments.

II. RELATED WORK

Other related work has been performed for micro hand gesture recognition. A project named uTrack utilized magnetic sensing and achieved high finger tracking accuracy but required fingers to be equipped with sensors [15]. Passive infrared (PIR) sensing was exploited for micro hand gestures in the project called Pyro, and applicable results were achieved [4]. By sensing thermal infrared signals radiating from fingers, the researchers implemented signal processing and random forest for thumb-tip gesture recognition. A fine-grained finger gesture recognition system called Mudra [13] used a WiFi signal to achieve mm-level sensitivity for finger motion. Acoustic sensing also achieved great resolution in finger tracking, which showed potential for micro hand gestures [19] [20].

In addition to these works, many radar signal processing technologies combined with machine learning methods or neural network models have been used in hand gesture and micro hand gesture recognition, especially those exploiting the Doppler effect.
Different kinds of radar, including continuous wave radar [16], Doppler radar [24] and FMCW radar [17] [25], have been utilized for HGR and achieved results competitive with those of other methods. A K-band continuous wave (CW) radar has been exploited in HGR; it obtained up to 90% accuracy for 4 wide-range hand gestures [16]. N. Patel et al. developed WiSee, a novel gesture recognition system based on a dual-channel Doppler radar that leveraged wireless signals to enable whole-home sensing and recognition under complex conditions [3]. These works aroused more interest in utilizing radar signal processing in HGR.

FIGURE 2: Platform Design

However, as we can see, the works above mainly focused on relatively wide-range and large-shift human gestures. As most radar signals' central frequencies and bandwidths limit the feature properties, they are not suitable for recognizing micro hand gestures with subtle motions of several fingers, as they usually cannot distinguish between fingers. The limitations in range and velocity resolution are big challenges for micro hand gesture recognition.

Google announced Project Soli in 2015, which, by utilizing 60-GHz radar, aims at controlling smart devices and wearable devices through micro hand gestures [7] [26]. Our project differs from Google Soli in several aspects. We combined the advantage that ultrasonic waves travel at a much slower speed than light with radar signal processing to design our system HUG. Choosing ultrasound waves, we obtain a millimeter-level range resolution and a centimeter-per-second-level velocity resolution, much more precise than those of Soli's millimeter wave radar. Considering the better range-Doppler features, we propose a state transition mechanism to extract and compress features, followed by an HMM for gesture recognition.
This approach achieves competitive results compared to Soli with much more compact features and model sizes for the recognition algorithms. Furthermore, to achieve higher accuracy, we implement the computationally more expensive end-to-end network and achieve a classification accuracy of 96.34% using our more discriminating range-Doppler features.

Last but not least, ultrasonic active sensing, particularly with Doppler effects [27]–[35], represents a significant advancement in identification and recognition tasks, especially those involving motion. The design of our ultrasonic radar system draws considerable inspiration from this technology, leveraging its high range and Doppler resolution, features that are highly desirable for micro hand gesture recognition tasks.

III. METHODOLOGY

A. PLATFORM DESIGN

To obtain high-quality features, we designed an ultrasonic front-end that transmits ultrasonic waves and receives reflected signals. The parameters of the system are designed to meet our resolution requirement.

To clearly differentiate finger positions and motions, millimeter-level range resolution (< 1 cm) and centimeter-per-second-level velocity resolution (< 0.1 m/s) are needed. In addition, the finger velocity is usually no more than 1 m/s, and the stop-and-hop assumption has to be met. High precision in range and velocity is also desirable. Considering the radar waveform design and signal processing criteria, the velocity resolution $v_d$ and range resolution $R_d$ are

$$v_d = \frac{\lambda}{2MT}, \qquad R_d = \frac{c}{2B}, \tag{1}$$

where $c$ is the wave propagation speed, $\lambda$ is the wavelength of the ultrasound, $B$ is the bandwidth, $T$ stands for the pulse repetition interval (PRI), and $M$ is the total number of pulses in a frame. Thus, to meet the range resolution requirement, we chose a bandwidth $B$ of 20 kHz. The bandwidth is usually 5% to 20% of the central frequency, so we chose ultrasonic waves around the frequency of 300 kHz [7] [17].
As the maximum finger velocity is 1 m/s and the stop-and-hop assumption has to be met, we chose a PRI of 600 µs. Finally, we chose the pulse number $M$ as 12 to meet the velocity resolution requirement.

Under such parameter settings, the requirements for differentiating fingers' subtle motions are met, as listed in Table 1. Compared to the 2.14 cm range resolution of Soli's millimeter wave radar, the HUG system is capable of providing much more precise features, which are quite helpful for micro hand gesture recognition.

TABLE 1: Parameter setting and system performance

Fig. 1 illustrates the block diagram of the whole prototype, and the real system is shown in Fig. 2. To extract accurate Doppler features, clock synchronization of the DAC and ADC is required. Leakage canceling is implemented in the digital domain. The modulated pulse signal is produced by the DAC and then amplified by the front-end circuit. The amplified signal drives an MA300D1-1 Murata ultrasonic transceiver to emit ultrasonic waves. When the ultrasonic waves are reflected from fingers and palms, the received waves, together with noise, are amplified by the front-end circuits before being fed into the ADC. After sampling in the ADC, for flexibility and real-time capability, the raw signal is transmitted to a PC over Ethernet for the subsequent signal processing module.

B. RANGE-DOPPLER FEATURE EXTRACTION

In our system, we use the range-Doppler pulsed radar signal processing method to detect the movement of the palm and fingers and to extract features for the later classifiers. Pulse radar measures the target range via the round-trip delay and utilizes the Doppler effect to determine the velocity by emitting pulses at a specific pulse repetition frequency and processing the returned signals.
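As a numerical illustration of Eq. (1) and of the range and velocity measurement principle just stated, the following is a minimal sketch and not the authors' implementation. It evaluates the two resolutions for the reported parameters and then recovers the range and velocity of one simulated, noise-free point scatterer with a matched filter along each pulse and an FFT across pulses. The speed of sound (340 m/s), the chirp duration `TAU`, the FFT length `N_FFT`, the complex baseband chirp, and the test target (5 cm, 0.2 m/s receding) are all assumptions introduced for the example.

```python
import numpy as np

# Parameters reported in the paper; C_SOUND, TAU and N_FFT are assumptions.
C_SOUND = 340.0      # m/s, assumed speed of sound in air
FC = 300e3           # Hz, carrier frequency
B = 20e3             # Hz, bandwidth
T = 600e-6           # s, pulse repetition interval (PRI)
M = 12               # pulses per frame
FS = 400e3           # Hz, fast-time sampling rate
TAU = 100e-6         # s, assumed chirp duration (not given in the text)
N_FFT = 64           # assumed zero-padded slow-time FFT length

wavelength = C_SOUND / FC
range_res = C_SOUND / (2 * B)              # R_d in Eq. (1)
velocity_res = wavelength / (2 * M * T)    # v_d in Eq. (1)


def chirp(t):
    """Complex baseband linear chirp sweeping -B/2..B/2 over [0, TAU]."""
    inside = (t >= 0) & (t <= TAU)
    phase = np.pi * (B / TAU) * t**2 - np.pi * B * t
    return np.where(inside, np.exp(1j * phase), 0.0)


def simulate_frame(target_range, target_velocity):
    """Noise-free echoes of one point scatterer, shape (M, samples per PRI)."""
    t_fast = np.arange(int(round(T * FS))) / FS
    frame = []
    for m in range(M):
        r_m = target_range + target_velocity * m * T   # stop-and-hop range
        delay = 2 * r_m / C_SOUND                      # round-trip delay
        carrier_phase = np.exp(-2j * np.pi * FC * delay)
        frame.append(chirp(t_fast - delay) * carrier_phase)
    return np.array(frame)


def range_doppler(frame):
    """Matched filter along fast time, zero-padded FFT along slow time."""
    ref = chirp(np.arange(int(round(TAU * FS))) / FS)
    compressed = np.array([np.correlate(p, ref, mode="valid") for p in frame])
    return np.fft.fftshift(np.fft.fft(compressed, n=N_FFT, axis=0), axes=0)


def locate_peak(rd_map):
    """Convert the strongest range-Doppler cell to metres and metres per second."""
    dop_idx, rng_idx = np.unravel_index(np.argmax(np.abs(rd_map)), rd_map.shape)
    doppler_hz = np.fft.fftshift(np.fft.fftfreq(N_FFT, d=T))[dop_idx]
    est_range = rng_idx / FS * C_SOUND / 2
    est_velocity = -doppler_hz * wavelength / 2   # receding target gives negative Doppler
    return est_range, est_velocity


est_range, est_velocity = locate_peak(range_doppler(simulate_frame(0.05, 0.2)))
print(f"R_d = {range_res * 1e3:.1f} mm, v_d = {velocity_res * 1e2:.1f} cm/s")
print(f"estimated range = {est_range * 1e3:.1f} mm, velocity = {est_velocity:.2f} m/s")
```

Under the assumed sound speed, Eq. (1) gives roughly 8.5 mm and 7.9 cm/s, which is consistent with the stated requirements of less than 1 cm and less than 0.1 m/s. A real front end would additionally need leakage cancellation and noise handling, which this sketch omits.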
Two important procedures in pulse radar signal processing are fast-time sampling and slow-time sampling. The former refers to sampling within a PRI, which determines the range; the latter refers to sampling across multiple pulses, using the PRI as the sampling period, to determine the Doppler shift for velocity.

To compensate for weak echoes and attenuate the influence of noise, chirp pulses are used as the emitted waveform to improve the SNR while keeping the range resolution. The baseband waveform can be expressed as

$$x(t) = \sum_{m=0}^{M-1} a(t - mT), \tag{2}$$

where

$$a(t) = \cos\left(\pi \frac{B}{\tau} t^{2} - \pi B t\right), \qquad 0 \le t \le \tau. \tag{3}$$

In these expressions, $a(t)$ is a single pulse with time duration $\tau$, and the other parameters, including $B$, $T$ (the PRI) and $M$, are the same as defined above.

The signal processing applied after receiving the waves reflected from a hand is as follows. The received echoes of the ultrasonic waves are processed via the I&Q quadrature demodulator and the low-pass filter to obtain baseband signals.

FIGURE 3: Range-Doppler feature

With fast-time sampling at the frequency $F_s$ of 400 kHz, matched filters are applied to detect the ranges of the palm and fingers. With slow-time sampling, $N$-point FFTs are applied to the samples of different pulses to detect objects' velocities. After two-dimensional sampling, the range-Doppler image features are generated at the frame level. Every frame of a range-Doppler image has a shape of $256 \times 180$, as in Fig. 3, which is the region of interest (ROI) of the range-Doppler features at a specific time $m$. The data of a micro hand gesture form a 3-dimensional (range-Doppler-time) cube, which is the stack of range-Doppler images along the time dimension. Fig. 4 shows an example "button off" gesture, with four range-Doppler images sampled at different times from the data cube.

C. MICRO HAND GESTURE RECOGNITION

A pattern recognition module is cascaded after the feature extraction module to classify the time-sequential range-Doppler signatures. In our HUG system, we first implemented a state-transition-based hidden Markov model, and then four other machine learning methods were realized for performance comparison.

1) Hidden Markov Model

Taking advantage of high-resolution range-Doppler images, we can separate contiguous scattering points. We aim at finding a method to extract and compress features so that they contain more useful information and less noise before feeding them into a classification architecture. Considering the fact that the scattering points of ultrasonic waves correspond to different fingers, the detected peaks in the range-Doppler images can reflect these fingers' motion. For example, the moving trajectory of the scattering peaks shown in Fig. 4 illustrates distinct features of the process of the action "button off". In addition to the precise ranges and velocities of fingers, the different fingers' trajectories and the relations between fingers are exactly the indispensable features we used for recognition.

Therefore, we propose a state-transition-based HMM for gesture classification. This method consists of three cascaded parts: finite-state transition machines, a feature mapping module, and HMM classifiers.

• Finite-state Transition Machine

A state transition mechanism is proposed to summarize each detected finger's state. The state of one detected scattering point is determined by the following procedure. The reflection intensity and extent are used to reject noise. In one range-Doppler image, the scattering point's moving direction and velocity determine whether the point is in a static or dynamic condition. Across the range-Doppler images, the distance between the scattering points of adjacent images can be used to determine whether they should be merged with or separated from other points.
Tracking mechanisms are also used to complement and improve the robustness of the whole state transition processing. The detailed design is shown in Fig. 5.

Through the extraction process, the features of each frame's range-Doppler image are represented as a variable-length vector $p_m$. For a gesture, we compress the data to a sequence of vectors

$$X = \{p_m\}_{m=1}^{M}, \tag{4}$$

where the features of the range-Doppler image in frame $m$ are extracted as a vector $p_m$. The vector of symbols $p_m$ can be described as

$$p_m = L(n_m, v_m, r_m), \tag{5}$$

where $n_m$ is the sequence number of the scattering points, and $v_m$ and $r_m$ are the velocity and range of the scattering points. $L(\cdot)$ is the state transition function.

FIGURE 4: Range-Doppler feature images sampled in the "button off" (see Fig. 8) gesture. The first subfigure shows the index finger and thumb separated at a 3 cm distance. Noise will be removed using time smoothing to increase robustness to the 3 marked "noise" objects in the first subfigure. The second and third subfigures show the index finger moving down with an acceleration while the thumb is nearly motionless with only a tiny upwards velocity. The last subfigure shows the two fingers touching. Note that an object's trajectory will always be a curve in the range-Doppler image. The represented states are labeled at the center of the detected objects.

• Feature Mapping Module

To fit the features into the HMM, we represent the features of each range-Doppler image as an integer $s_m$. $S$ denotes the sequence of these integers, maintaining the time order of the range-Doppler images. The features of each image are extracted to a vector as mentioned above. A mapping function $\sigma(\cdot)$ is used to discretize and map the vector of integers to the summary variable $s_m$.
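The mapping $\sigma(\cdot)$ described above can be pictured as a dictionary lookup from per-frame state vectors to integer symbols. The sketch below is only an illustration under stated assumptions: the paper does not specify how the dictionary is built, so `build_dictionary`, `symbolize`, the reserved `UNKNOWN` symbol, and the toy state vectors are hypothetical, and the state labels merely reuse the names shown in Fig. 5.

```python
from typing import Dict, Iterable, List, Sequence, Tuple

StateVector = Tuple[str, ...]   # per-frame states of the tracked scattering points
UNKNOWN = 0                     # hypothetical symbol reserved for unseen vectors


def build_dictionary(training_gestures: Iterable[Sequence[StateVector]]) -> Dict[StateVector, int]:
    """Assign a positive integer to every distinct state vector seen in training."""
    dictionary: Dict[StateVector, int] = {}
    for gesture in training_gestures:
        for p_m in gesture:
            # setdefault only inserts when the vector has not been seen before
            dictionary.setdefault(tuple(p_m), len(dictionary) + 1)
    return dictionary


def symbolize(gesture: Sequence[StateVector], dictionary: Dict[StateVector, int]) -> List[int]:
    """Apply the mapping frame by frame, preserving the time order."""
    return [dictionary.get(tuple(p_m), UNKNOWN) for p_m in gesture]


# Toy gestures: each frame is a tuple of scattering-point states (made-up data).
train = [
    [("new",), ("1", "new"), ("3", "1"), ("3", "1"), ("8", "1")],
    [("new",), ("1",), ("1",), ("del",)],
]
sigma = build_dictionary(train)
print(symbolize(train[0], sigma))               # [1, 2, 3, 3, 4]
print(symbolize([("1",), ("4", "4")], sigma))   # [5, 0]
```

Because each frame collapses to a single integer, the downstream HMM only needs a multinomial emission table, which is one plausible reason the symbolized features are cheap to process.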
The lengths of the feature vectors are finite, and each element is chosen from a small set of scattering-point states. The variables from all frames form the final feature sequence $S = \{s_m\}_{m=1}^{M}$, defined as

$$s_m = \sigma(p_m), \tag{6}$$

where $\sigma(\cdot)$ is the function that projects the feature vector $p_m$ to the integer $s_m$. The feature of each frame is thus mapped into an integer, which forms this frame's observation for the HMM. Features in such a form avoid much computation compared to other methods.

• HMM for Classification

We use the HMM to extract the patterns of the symbolized states, and Fig. 6 illustrates the workflow. As described in equation (7), the HMM used in this model is composed of a hidden state transition model and a multinomial emission model, where $S = \{s_m\}_{m=1}^{M}$ is the observation sequence, $Z = \{z_m\}_{m=1}^{M}$ is the hidden state sequence ($z_m$ is the hidden state at time $m$), and $A$ is the transition probability matrix. $\theta = \{\pi, A, \phi\}$ is the set of all the parameters of the model, where $\pi$ is the initial hidden state distribution and $\phi$ represents the measurement probability. For each pre-defined micro hand gesture, an HMM is trained through the Baum-Welch algorithm [36]. There are $N$ kinds of micro hand gestures, and $C$ denotes the class of a gesture. For an input gesture, the likelihood under the model of each kind is calculated through the forward algorithm. Then, we compare all the likelihoods from a Bayesian posterior perspective, as described by equation (7):

$$p(S, Z \mid \theta) = p(z_1 \mid \pi) \left[ \prod_{m=2}^{M} p(z_m \mid z_{m-1}, A) \right] \prod_{m=1}^{M} p(s_m \mid z_m, \phi),$$

$$p(C = n \mid S) = \frac{p(S \mid C = n)\, p(C = n)}{\sum_{n'=1}^{N} p(S \mid C = n')\, p(C = n')}. \tag{7}$$

FIGURE 5: State transition diagram. The states represent the types of scattering points, and the arrows denote the methods of transition. The states are as follows. Initialization states consist of two states, 'new' and '0', where 'new' denotes the initial state and '0' represents uncertain states and the state of the first missing scattering point. Missing states include '-1' and '-2', which indicate that scattering points are missing from some frames' range-Doppler feature images. Tracking states include '1', '2', '3', and '4', which describe the tracking and locking of static and dynamic objects. Ending states include '8' and 'del', which denote the end of motion when the dynamic object's speed is less than the threshold or when scattering points are missing for more than 3 frames.

FIGURE 6: Feature extraction and classification workflow.

2) Comparison Methods

For comparison with the state-transition-based HMM, we implemented another four frequently used methods: an end-to-end network, a random forest, a convolutional neural network and a long short-term memory neural network.

Considering that combined networks are widely used and are competitive compared with standalone CNNs or LSTM networks [37], the end-to-end network is realized and obtains much higher accuracies in hand gesture classification. The detailed network is illustrated in Fig. 7. We cascade the automatic feature extraction CNN part with the LSTM dynamic gesture classification part to form the end-to-end network. In the experiment, we train the two parts jointly instead of training them individually. We feed the output of the last convolutional layer of the CNN, as the feature sequence of one frame, into the LSTM to produce the frame-level classification of hand gestures through a softmax layer. To efficiently use the dynamic process of the micro hand gesture, we add a time-weight filter over a window of 30 frames to obtain gesture-level classification. The filter parameters were chosen empirically.
Giving high weights to the nearest frames, the time differential pooling filter was designed as follows:

$$g_{\mathrm{out}} = \sum_{i=1}^{N} w_i\, \sigma[g(i)], \qquad w_i = \frac{2i}{N},$$

where $g_{\mathrm{out}}$ is the gesture prediction, $g(i)$ is the per-frame prediction, and $w_i$ are the filter weights.

FIGURE 7: The end-to-end neural network architecture.

Another method we implemented is random forest. Random forest has proved to be time and energy efficient in image classification tasks [38]. Treating range-Doppler images as regular images, we choose random forest as one approach to implement gesture recognition. A random forest consisting of 50 trees was realized, and its model size is less than 2 MB.

Among deep learning algorithms, convolutional neural networks are one of the most widely used methods for image classification, automatically extracting features through multiple convolutional layers and pooling layers [39]. Treating the range-Doppler image cube as a sequence of regular images, we applied a CNN to our system. The implemented network consists of five convolutional layers and three pooling layers. The concrete architecture is given in Table II. We also implemented the LSTM network, which is a specific kind of recurrent neural network (RNN) suitable for performing time-sequential classification owing to the loops in the network. The network architecture is briefly introduced in Table II.

IV. IMPLEMENTATION AND PERFORMANCE EVALUATION

A. GESTURE SET

There are seven micro hand gestures evaluated in our system. As shown in Fig. 8, we have six gestures (indexed from the 2nd to the 7th), including finger press, button on, button off, motion up, motion down, and screw. Other kinds of gestures, including the case with no finger, are categorized into the 1st kind, i.e., no-finger.
The principles used in our design are as follows: (1) The micro hand gestures chosen only involve subtle finger motions; the motions of large muscle groups in the wrist and arm are not included. (2) All gestures are dynamic gestures, which enables the ultrasonic waves to capture Doppler features of the fingers' motions.

B. DATA ACQUISITION

To ensure adequate variation in gesture execution arising from individual characteristics and other environmental factors, we asked subjects to repeat all the gestures. We invited nine subjects to execute the six designed gestures, giving only sketchy instructions for how to perform these gestures. Each kind of gesture was labeled, and no data were removed from the dataset, to ensure large variance. For each gesture, 50 samples were recorded from each subject, resulting in $9 \times 6 \times 50 = 2700$ sample sequences. In addition to these 6 gestures, we added no-finger as another kind of gesture (labeled as 0). We obtained another 2700 samples for no-finger, and these samples were divided among the subjects' sub-datasets. Finally, a dataset of 5400 samples in total was used in all of our experiments as the raw input. For training and validation, we used standard $k$-fold leave-one-subject-out cross-validation, where $k = 9$ is the number of subjects in our experiments.

FIGURE 8: Examples of micro hand gestures, named finger press, button on, button off, motion up, motion down, and screw. The 1st one is no-finger.

C. EXPERIMENTAL IMPLEMENTATION

The implementation details of all five methods are shown in Table II.

For our particular HMM architecture, as it specializes in dealing with input of variable length, we simply feed feature sequences of different lengths into the models and train seven models, one for each of the seven micro gestures.
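Once one HMM per gesture is available, Eq. (7) amounts to scoring a symbol sequence with every model through the forward algorithm and normalizing with the class priors. The following is a minimal sketch of that scoring step under stated assumptions: Baum-Welch training is not shown, the function names `log_likelihood` and `classify` are hypothetical, and the two toy models and their parameter values are made up for illustration.

```python
import numpy as np


def log_likelihood(symbols, pi, A, phi):
    """Scaled forward algorithm: log p(S | theta) for theta = (pi, A, phi).

    pi  : (K,)   initial hidden-state distribution
    A   : (K, K) transition matrix, A[i, j] = p(z_m = j | z_{m-1} = i)
    phi : (K, V) emission matrix,   phi[k, s] = p(s_m = s | z_m = k)
    """
    alpha = pi * phi[:, symbols[0]]
    log_p = 0.0
    for s in symbols[1:]:
        scale = alpha.sum()
        log_p += np.log(scale)                      # accumulate the scaling factors
        alpha = ((alpha / scale) @ A) * phi[:, s]   # propagate, then weight by emission
    return log_p + np.log(alpha.sum())


def classify(symbols, models, priors):
    """Return the MAP class index and the posterior p(C = n | S) of Eq. (7)."""
    log_post = np.array([log_likelihood(symbols, *theta) for theta in models])
    log_post += np.log(priors)
    post = np.exp(log_post - log_post.max())        # subtract max for numerical stability
    post /= post.sum()
    return int(np.argmax(post)), post


# Toy two-class example: 2 hidden states, 3 observation symbols (all values made up).
A = np.array([[0.9, 0.1], [0.1, 0.9]])
pi = np.array([0.5, 0.5])
model_a = (pi, A, np.array([[0.8, 0.1, 0.1], [0.1, 0.8, 0.1]]))   # mostly symbols 0 and 1
model_b = (pi, A, np.array([[0.1, 0.1, 0.8], [0.1, 0.8, 0.1]]))   # mostly symbols 2 and 1
priors = np.array([0.5, 0.5])

label, posterior = classify([0, 0, 1, 1, 0], [model_a, model_b], priors)
print(label, posterior.round(3))
```

Working in the log domain with per-step scaling keeps long sequences from underflowing, and a non-uniform `priors` vector is where a preference for a frequent class could be expressed.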
After extracting frame-level features using the state transition machine, we embedded these features into the final feature sequence, $S$, according to the produced dictionary. As expected, the training process took less time than those of the neural network methods. In our experiments, only ten iteration loops were used. The classification is based on the maximum a posteriori method, where the prior probability is used to adjust the classification, since no-finger is the usual case.

For the random forest method, we use a forest size of 50 with no maximum depth. We downsampled the time sequence feature cube to a tensor of size $64 \times 45 \times 30$ (height, width, time sequence) and flattened it into a one-dimensional sequence. These one-dimensional feature sequences were then fed into the random forest for classification.

The three deep neural network methods, the end-to-end network, the CNN, and the LSTM, have similar training procedures. For the end-to-end network and the CNN, as the gestures sampled in our dataset are of variable length, mainly from 30 frames to 120 frames, we downsampled the raw data to a fixed size of $256 \times 180 \times 30$ as a three-dimensional input. For the LSTM, to reduce the feature size and obtain more data, we divide each raw sequence into segments with a uniform length of 30 frames and then subsample them to a size of $128 \times 90 \times 30$. The produced samples are then randomly shuffled in the training procedure, while the time order is maintained within each sequence. The alignment of samples makes mini-batch training possible and accelerates the training process. To avoid local optima and dampen oscillation, and also for training efficiency, we use mini-batch adaptive moment estimation (Adam) [40] with an initial learning rate of 0.001 and a batch size of 16 or 32 for all the neural networks.
In the training process of the end-to-end network, the fully connected layer after the convolutional layers in the CNN is connected to the LSTM network directly and trained jointly.

D. COMPUTATION COMPLEXITY AND REAL-TIME PERFORMANCE

All the training and tests were carried out on a platform with an Nvidia Tesla K20 GPU. The five methods described above all achieved real-time micro hand gesture recognition in our system. Among them, the end-to-end architecture has the biggest model size of 150 MB, and the model sizes of the random forest, standalone CNN and RNN are all on the order of megabytes. However, our proposed HMM-based method is just 40 KB, which is much smaller than the others. For real-time performance, considering frame-level prediction, the end-to-end network processed 450 frames per second, whereas Soli's end-to-end network predicts frames at 150 Hz [7]. Furthermore, for gesture-level prediction, our proposed state-transition-based HMM predicts gestures at a much higher rate (250 Hz) than the end-to-end neural network method (15 Hz). On account of its small size and high prediction rate, the state-transition-based HMM has great potential to be embedded into wearable and portable devices.

E. RESULTS AND PERFORMANCE EVALUATION

For comparison of micro hand gesture classification, we select an equal number of relatively micro hand gestures from the 11 hand gestures that Google's Soli used in [7]. Excluding four wide-range hand gestures, there are six relatively micro hand gestures, including pinch index, pinch pinky, finger slide, finger rub, slow swipe, and fast swipe, as well as a static gesture (palm hold), which are relevant to our work [4]. For comparison, we removed the results of the remaining four kinds of wide-range hand gestures and calculated the confusion matrix again.
Because the micro hand gestures are unlikely to be misrecognized as wide-range gestures, we count the corresponding false negatives as true positives when calculating the new confusion matrix. Under this optimistic calculation, the average classification accuracy of the seven micro hand gestures is approximately 88%.

Fig. 9 shows the confusion matrix of the results achieved by our state-transition-based HMM model. The average accuracy is 89%, which is competitive with Soli while balancing accuracy and computation load. Due to the model's small size and high prediction rate, the state-transition-based HMM model has great potential to be used in wearable and embedded systems such as smart watches or virtual reality.

TABLE 2: Network architecture used in our experiments

TABLE 3: Accuracies of various classification methods for 9 subjects and their average, with fold 1 to fold 9 representing the 9 subjects.

FIGURE 9: Confusion matrix of accuracy of the HMM.

Fig. 10 shows the confusion matrix corresponding to the end-to-end network, whose average accuracy is as high as 96.34%. Benefiting from the high resolution of the ultrasonic signal, the end-to-end architecture can achieve highly accurate classification, where the highest accuracy is 98.89% and the lowest is 89.47%.

Table 3 lists the per-subject validation accuracies of all five classification methods under leave-one-subject-out cross-validation. Owing to the personal variance in gesture execution, we train on the samples of 8 subjects and hold out the remaining subject's samples for validation. The experiment can be a good predictor of real-world gesture recognition, as the validation datasets are entirely separated from the training sets.
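The leave-one-subject-out protocol described above can be sketched as follows. This is a minimal illustration, not the authors' code: the helper name `leave_one_subject_out` and the toy dataset of two samples per subject are assumptions made only to show how each fold keeps one subject entirely out of training.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out, train_idx, val_idx) once per distinct subject."""
    for held_out in sorted(set(subject_ids)):
        train_idx = [i for i, s in enumerate(subject_ids) if s != held_out]
        val_idx = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train_idx, val_idx


# Hypothetical toy dataset: 9 subjects with 2 gesture samples each.
subject_ids = [s for s in range(1, 10) for _ in range(2)]

# One fold per subject; each fold trains on 8 subjects and validates on 1.
folds = list(leave_one_subject_out(subject_ids))
```

Splitting by subject rather than by sample is what keeps the validation data free of any recordings from the people seen during training.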
The variance between the subjects shown in Table 3, which is less than 7% for these methods, also indicates that our architecture has good generalization capability.

FIGURE 10: Confusion matrix of accuracy of the end-to-end.

TABLE 4: The accuracy of each gesture of different classification methods. All of them use leave-one-subject-out cross-validation: (1) random forest with per-gesture sequence accuracy, (2) CNN with per-gesture sequence accuracy, (3) RNN with sequence pooling of per-frame prediction, and (4) end-to-end with sequence pooling of per-frame prediction

Table 4 presents the overall classification accuracy of the different architectures. We use sequence-level recognition instead of frame-level classification because our micro hand gestures are all dynamic ones. As we can see, for the 7th gesture, the classification accuracies of the state-transition-based HMM and the end-to-end are much higher than those of the other three methods. The 1st gesture, which is labeled as no-finger, achieves the highest accuracy across all the classification methods. The classification accuracy of the 5th gesture is the worst among the seven gestures for all five methods. The reason is that this gesture is easily misclassified as the 3rd gesture owing to their similar features.

A demonstration of the HUG system is presented in [41], where a prototype was realized to control a music player by micro hand gestures in real time. In this demo, one can see that the HUG system can recognize 7 kinds of gestures and realize the interaction between the user and the music player.

V. CONCLUSION

In this paper, we proposed a system and methods for micro hand gesture recognition by using ultrasonic active sensing. Owing to the high resolution provided by ultrasonic waves, better quality features can be extracted.
The proposed state-transition-based HMM method, which has less computational complexity, achieved a comparable 89.38% classification accuracy. Furthermore, we used an end-to-end method and achieved a classification accuracy of 96.34%. Our system and methods of recognizing micro hand gestures showed great potential in HCI, especially in scenarios where a touchless input modality is more attractive, such as for wearable and portable devices or for driving assistance.

ACKNOWLEDGMENT

We are grateful to all the participants for their efforts in collecting hand gesture data for our experiments. This work is supported by the National Natural Science Foundation of China (Grant No. 61571260 and No. 61801258).

REFERENCES

[1] J. Kjeldskov and C. Graham, “A review of mobile HCI research methods,” in International Conference on Mobile Human-Computer Interaction. Springer, 2003, pp. 317–335.
[2] C. A. Pickering, K. J. Burnham, and M. J. Richardson, “A research study of hand gesture recognition technologies and applications for human vehicle interaction,” in Automotive Electronics, 2007 3rd Institution of Engineering and Technology Conference on. IET, 2007, pp. 1–15.
[3] Q. Pu, S. Gupta, S. Gollakota, and S. Patel, “Whole-home gesture recognition using wireless signals,” in Proceedings of the 19th annual international conference on Mobile computing & networking. ACM, 2013, pp. 27–38.
[4] J. Gong, Y. Zhang, X. Zhou, and X.-D. Yang, “Pyro: Thumb-tip gesture recognition using pyroelectric infrared sensing,” in Proceedings of the 30th Annual ACM Symposium on User Interface Software and Technology. ACM, 2017, pp. 553–563.
[5] uSens, “Fingo,” https://www.usens.com/fingo.
[6] H. Ye, M. Malu, U. Oh, and L. Findlater, “Current and future mobile and wearable device use by people with visual impairments,” in Proceedings of the SIGCHI Conference on Human Factors in Computing Systems. ACM, 2014, pp. 3123–3132.
[7] S. Wang, J. Song, J. Lien, I.
Poupyrev, and O. Hilliges, “Interacting with Soli: Exploring fine-grained dynamic gesture recognition in the radio-frequency spectrum,” in Proceedings of the 29th Annual Symposium on User Interface Software and Technology. ACM, 2016, pp. 851–860.
[8] F.-S. Chen, C.-M. Fu, and C.-L. Huang, “Hand gesture recognition using a real-time tracking method and hidden Markov models,” Image and vision computing, vol. 21, no. 8, pp. 745–758, 2003.
[9] Y. Yao and C.-T. Li, “Hand gesture recognition and spotting in uncontrolled environments based on classifier weighting,” in Image Processing (ICIP), 2015 IEEE International Conference on. IEEE, 2015, pp. 3082–3086.
[10] Z. Ren, J. Meng, and J. Yuan, “Depth camera based hand gesture recognition and its applications in human-computer-interaction,” in Information, Communications and Signal Processing (ICICS) 2011 8th International Conference on. IEEE, 2011, pp. 1–5.
[11] J. Suarez and R. R. Murphy, “Hand gesture recognition with depth images: A review,” in Ro-man, 2012 IEEE. IEEE, 2012, pp. 411–417.
[12] J. Song, G. Sörös, F. Pece, S. R. Fanello, S. Izadi, C. Keskin, and O. Hilliges, “In-air gestures around unmodified mobile devices,” in Proceedings of the 27th annual ACM symposium on User interface software and technology. ACM, 2014, pp. 319–329.
[13] O. Zhang and K. Srinivasan, “Mudra: User-friendly Fine-grained Gesture Recognition using WiFi Signals,” in Proceedings of the 12th International on Conference on emerging Networking EXperiments and Technologies. ACM, 2016, pp. 83–96.
[14] S. Gupta, D. Morris, S. Patel, and D. Tan, “Soundwave: using the Doppler effect to sense gestures,” in Proceedings of the SIGCHI Conference on Human Factors in Computing Systems. ACM, 2012, pp. 1911–1914.
[15] K.-Y. Chen, K. Lyons, S. White, and S. Patel, “uTrack: 3D input using two magnetic sensors,” in Proceedings of the 26th annual ACM symposium on User interface software and technology. ACM, 2013, pp.
237–244.
[16] G. Li, R. Zhang, M. Ritchie, and H. Griffiths, “Sparsity-based dynamic hand gesture recognition using micro-Doppler signatures,” in Radar Conference (RadarConf), 2017 IEEE. IEEE, 2017, pp. 0928–0931.
[17] P. Molchanov, S. Gupta, K. Kim, and K. Pulli, “Short-range FMCW monopulse radar for hand-gesture sensing,” in Radar Conference (RadarCon), 2015 IEEE. IEEE, 2015, pp. 1491–1496.
[18] M. I. Skolnik, “Introduction to radar,” Radar Handbook, vol. 2, 1962.
[19] W. Wang, A. X. Liu, and K. Sun, “Device-free gesture tracking using acoustic signals,” in Proceedings of the 22nd Annual International Conference on Mobile Computing and Networking. ACM, 2016, pp. 82–94.
[20] R. Nandakumar, V. Iyer, D. Tan, and S. Gollakota, “Fingerio: Using active sonar for fine-grained finger tracking,” in Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems. ACM, 2016, pp. 1515–1525.
[21] Snapdragon, “Snapdragon sense ID,” https://www.qualcomm.com/products/features/security/fingerprint-sensors.
[22] R. J. Przybyla, H.-Y. Tang, A. Guedes, S. E. Shelton, D. A. Horsley, and B. E. Boser, “3D ultrasonic rangefinder on a chip,” IEEE Journal of Solid-State Circuits, vol. 50, no. 1, pp. 320–334, 2015.
[23] H. Godrich, “Students’ design project series: Sharing experiences [sp education],” IEEE Signal Processing Magazine, vol. 34, no. 1, pp. 82–88, 2017.
[24] Y. Kim and B. Toomajian, “Hand gesture recognition using micro-Doppler signatures with convolutional neural network,” IEEE Access, vol. 4, pp. 7125–7130, 2016.
[25] Z. Peng, C. Li, J.-M. Muñoz-Ferreras, and R. Gómez-García, “An FMCW radar sensor for human gesture recognition in the presence of multiple targets,” in Microwave Bio Conference (IMBIOC), 2017 First IEEE MTT-S International. IEEE, 2017, pp. 1–3.
[26] J. Lien, N. Gillian, M. E. Karagozler, P. Amihood, C. Schwesig, E. Olson, H. Raja, and I.
Poupyrev, “Soli: Ubiquitous gesture sensing with millimeter wave radar,” ACM Transactions on Graphics (TOG), vol. 35, no. 4, p. 142, 2016.
[27] Z. Zhang, P. O. Pouliquen, A. Waxman, and A. G. Andreou, “Acoustic micro-doppler radar for human gait imaging,” The Journal of the Acoustical Society of America, vol. 121, no. 3, pp. EL110–EL113, 2007.
[28] Z. Zhang and A. G. Andreou, “Human identification experiments using acoustic micro-doppler signatures,” in 2008 Argentine School of Micro-Nanoelectronics, Technology and Applications. IEEE, 2008, pp. 81–86.
[29] G. Garreau, C. M. Andreou, A. G. Andreou, J. Georgiou, S. Dura-Bernal, T. Wennekers, and S. Denham, “Gait-based person and gender recognition using micro-doppler signatures,” in 2011 IEEE Biomedical Circuits and Systems Conference (BioCAS). IEEE, 2011, pp. 444–447.
[30] S. Dura-Bernal, G. Garreau, C. Andreou, A. Andreou, J. Georgiou, T. Wennekers, and S. Denham, “Human action categorization using ultrasound micro-doppler signatures,” in Human Behavior Understanding: Second International Workshop, HBU 2011, Amsterdam, The Netherlands, November 16, 2011. Proceedings 2. Springer, 2011, pp. 18–28.
[31] B. Raj, K. Kalgaonkar, C. Harrison, and P. Dietz, “Ultrasonic doppler sensing in hci,” IEEE Pervasive Computing, vol. 11, no. 2, pp. 24–29, 2012.
[32] S. Dura-Bernal, G. Garreau, J. Georgiou, A. G. Andreou, S. L. Denham, and T. Wennekers, “Multimodal integration of micro-doppler sonar and auditory signals for behavior classification with convolutional networks,” International journal of neural systems, vol. 23, no. 05, p. 1350021, 2013.
[33] J. Craley, T. S. Murray, D. R. Mendat, and A. G. Andreou, “Action recognition using micro-doppler signatures and a recurrent neural network,” in 2017 51st Annual Conference on Information Sciences and Systems (CISS). IEEE, 2017, pp. 1–5.
[34] T. S. Murray, D. R. Mendat, K. A. Sanni, P. O. Pouliquen, and A. G. Andreou, “Bio-inspired human action recognition with a micro-doppler sonar system,” IEEE Access, vol. 6, pp. 28 388–28 403, 2017.
[35] K. Kalgaonkar, R. Hu, and B. Raj, “Ultrasonic doppler sensor for voice activity detection,” IEEE Signal Processing Letters, vol. 14, no. 10, pp. 754–757, 2007.
[36] Ó. Pérez, M. Piccardi, J. García, M. Á. Patricio, and J. M. Molina, “Comparison between genetic algorithms and the baum-welch algorithm in learning HMMs for human activity classification,” in Workshops on Applications of Evolutionary Computation. Springer, 2007, pp. 399–406.
[37] N. T. Vu, H. Adel, P. Gupta, and H. Schütze, “Combining recurrent and convolutional neural networks for relation classification,” arXiv preprint arXiv:1605.07333, 2016.
[38] A. Bosch, A. Zisserman, and X. Munoz, “Image classification using random forests and ferns,” in Computer Vision, 2007. ICCV 2007. IEEE 11th International Conference on. IEEE, 2007, pp. 1–8.
[39] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “Imagenet classification with deep convolutional neural networks,” in Advances in neural information processing systems, 2012, pp. 1097–1105.
[40] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” arXiv preprint arXiv:1412.6980, 2014.
[41] HUG, “Hug music player controlling,” https://www.youtube.com/watch?v=8FgdiIb9WqY.

YU SANG received the B.S. degree in electronic engineering from Tsinghua University, Beijing, China, in 2016. He is currently a master student in the Intelligence Sensing Lab. (ISL), Department of Electronic Engineering, Tsinghua University. His current research interests include signal processing and machine learning.

LAIXI SHI will receive the B.S. degree in electronic engineering from Tsinghua University, Beijing, China, in 2018. She will be a Ph.D.
student in the Department of Electrical and Computer Engineering, Carnegie Mellon University. Her current research interests include signal processing, nonconvex optimization, and related applications.

YIMIN LIU (M’12) received the B.S. and Ph.D. degrees (both with honors) in electronics engineering from Tsinghua University, China, in 2004 and 2009, respectively. From 2004, he was with the Intelligence Sensing Lab. (ISL), Department of Electronic Engineering, Tsinghua University. He is currently an associate professor with Tsinghua, where his field of activity is the study of new concept radar and other microwave sensing technologies. His current research interests include radar theory, statistical signal processing, compressive sensing and their applications in radar, spectrum sensing and intelligent transportation systems.
