Intrinsic Stability: Global Stability of Dynamical Networks and Switched Systems Resilient to any Type of Time-Delays


Authors: David Reber, Benjamin Webb

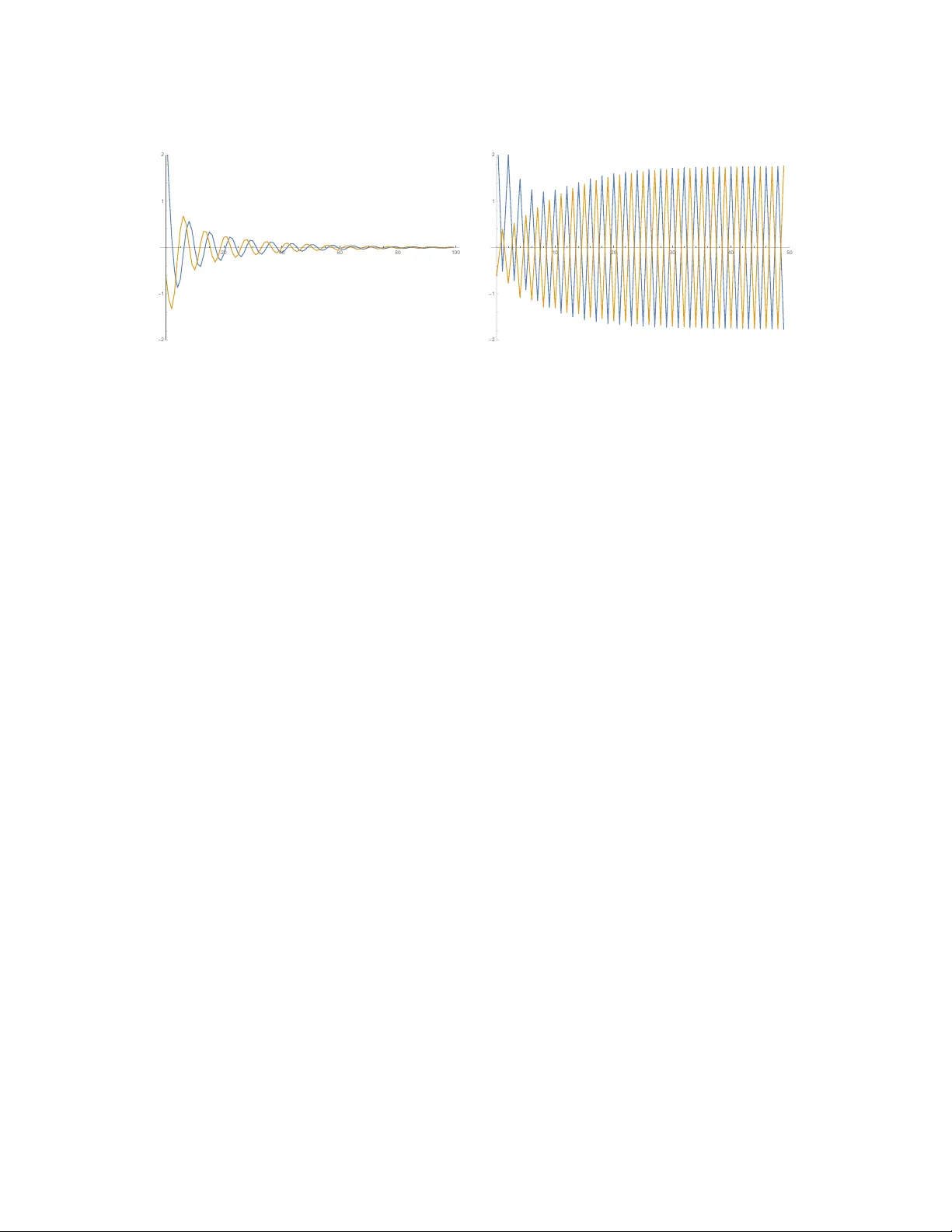

David Reber (a), Benjamin Webb (b)

(a) Department of Mathematics, Brigham Young University, Provo, UT 84602, USA, davidpreber@mathematics.byu.edu

(b) Department of Mathematics, Brigham Young University, Provo, UT 84602, USA, bwebb@mathematics.byu.edu

Preprint submitted to Elsevier, September 23, 2024

Abstract

In real-world networks the interactions between network elements are inherently time-delayed. These time-delays can not only slow the network but can also have a destabilizing effect on the network's dynamics, leading to poor performance. The same is true in computational networks such as those used in machine learning, where time-delays increase the network's memory but can degrade the network's ability to be trained. However, not all networks can be destabilized by time-delays. Previously, it has been shown that if a network or high-dimensional dynamical system is intrinsically stable, which is a stronger form of the standard notion of global stability, then it maintains its stability when constant time-delays are introduced into the system. Here we show that intrinsically stable systems, including intrinsically stable networks and a broad class of switched systems, i.e. systems whose mapping is time-dependent, remain stable in the presence of any type of time-varying time-delays, whether these delays are periodic, stochastic, or otherwise. We apply these results to a number of well-studied systems to demonstrate that the notion of intrinsic stability is both computationally inexpensive, relative to other methods, and can be used to improve on some of the best known stability results. We also show that the asymptotic state of an intrinsically stable switched system is exponentially independent of the system's initial conditions.

Keywords: dynamical networks, time-varying time-delays, neural networks, switched systems

1. Introduction

The study of networks deals with understanding the properties of systems of interacting elements. In the social sciences these elements are typically individuals whose social interactions create networks such as Facebook and Twitter. In the biological sciences networks range from the metabolic networks of single-cell organisms, to the neuronal networks of the brain, to the larger physiological network of the organs within the body. Citation networks and other well-studied networks, such as the World Wide Web, belong to what are referred to as information networks. In the technological sciences examples of networks include the internet, power grids, and transportation networks. (For an overview of these different types of networks see [1].)

These real-world networks are dynamic in that both their topology, which is the network's structure of interactions, and the state of the network are time dependent. Here our focus is on the changing state of the network, which is often referred to as the dynamics on the network. The state of the network is the collection of the states of its elements. The fundamental concept in a dynamical network is that the state of a given element depends on the dynamics of its neighbors, which are the other network elements that directly interact with this element. Here we refer to the emergent behavior of these interacting elements, which is the changing state of these network elements, as the network's dynamics. The network's dynamics can be periodic, as in many biological networks [2]; synchronizing, which is the desired condition for transmitting power over large distances in power grids [3]; or stable or multistable, as in gene-regulatory networks [4].

In real-world networks the interactions between network elements are inherently time-delayed.
This comes from the fact that network elements are spatially separated, that information and other quantities can only be processed and transmitted at finite speeds, and that these quantities can be slowed by network traffic [5]. These time-delays not only slow the network, leading to poor performance, but can also have a destabilizing effect on the network's dynamics, which can lead to network failure [6, 7]. The same is true in computational networks such as those used in machine learning, where time-delays can be used to increase the network's ability to detect long-term temporal dependencies [8], but at the cost of potentially degrading the ability to train the network.

Not all networks can be destabilized by time-delays. In [9] the notion of intrinsic stability was introduced, which is a stronger form of the standard notion of stability, i.e. of a system in which there is a globally attracting fixed point (see [10] for more details). The authors showed that if a network is intrinsically stable, then constant-type time-delays, which are delays that do not vary in time, have no effect on the network's stability. As was shown in [9], an advantage of the intrinsic stability method over other methods such as Lyapunov-type methods, Linear Matrix Inequalities (LMI), and Semi-Definite Programming (SDP) is that determining whether a network is intrinsically stable can be done with respect to the lower-dimensional undelayed network and does not require the construction of special functions or the use of interior-point methods. What is required is finding the spectral radius of the network's Lipschitz matrix, which for large networks can be done efficiently by use of the power method [11].

In many situations, however, the delays that networks or, more generally, high-dimensional systems experience are not constant-type time-delays.
Time-delays can be periodic, such as the daily or annual cycles in population models [12], or even stochastic, as in traffic models [13]. Such time-varying time-delays are more complicated than constant-type time-delays and, as a result, the theory of systems with time-varying time-delays is less developed than the theory of systems with constant time-delays, which in turn is less developed than the theory of systems without delays.

The main goal of this paper is to further develop the theory describing the stability of dynamical systems that experience time-delays. Here we show that intrinsically stable systems, including intrinsically stable networks and a broad class of switched systems, i.e. systems whose mapping is time-dependent, remain stable in the presence of any time-varying time-delays, whether these delays are periodic, stochastic, or otherwise (see Main Results 2 and 3). We apply these results to a number of well-studied systems to demonstrate that the notion of intrinsic stability is both computationally inexpensive, relative to other methods, and can be used to improve on some of the best known results (see for instance Example 4.4, compared to results found in [14, 15, 16, 17, 18]). We also show that the asymptotic state of intrinsically stable switched systems is independent of the system's initial conditions (see Main Result 1). This allows us to show that the globally attracting state of any intrinsically stable network and that of any time-delayed version of the network are identical (see Proposition 1). The main results of this paper are demonstrated using Cohen-Grossberg neural (CGN) networks, which are dynamical networks whose stability is often studied in the presence of constant-type and time-varying time-delays [19, 20].
The results of this paper justify modeling dynamical networks and switched systems without formally including delays in the model, provided it is known that the system is intrinsically stable. The reason is that although delays do change the specific details of the system's dynamics, an intrinsically stable system has the same qualitative dynamics and the same asymptotic state whether or not its delays are included (see Main Results 1, 2, and 3). This matters because determining or correctly anticipating what delays a network will experience can be quite complicated for almost any real-world system. Hence, this theory also has implications for system design, as an intrinsically stable network will not be destabilized by any type of unexpected delays.

As this theory is in many ways different from the standard theory of stable dynamical systems, much effort has gone into, first, making this theory understandable and, second, emphasizing the computational and algorithmic advantages of this method. To this end, examples are given throughout this paper describing each result, its usefulness, and how these results can be implemented efficiently.

This paper is organized as follows. In Section 2 we define a dynamical network and the notion of intrinsic stability. In Section 3 we introduce networks with constant time-delays and show that these delayed systems have the same fixed points as their undelayed versions. In Section 4 we define switched networks, i.e. switched systems, and give our Main Results 1 and 2, which show, respectively, that intrinsically stable switched systems have the same asymptotic state irrespective of initial condition, and that intrinsically stable networks with time-varying time-delays have a globally attracting fixed point.
We then apply this theory to linear systems with distinct delayed and undelayed interactions, which allows us to compare our results to some of the most well-studied time-delayed systems. In Section 5 we extend our results to a more general class of switched networks and similarly compare our results to a number of well-studied switched systems. In Section 6 we introduce some analytical and computational considerations related to determining whether a network is intrinsically stable. Section 7 contains some remarks about future work and applications of this theory. The Appendix contains the proofs of our results.

2. Dynamical Networks

A network is composed of a set of elements, which are the individual units that make up the network, and a collection of interactions between these elements. An interaction between two network elements can be thought of as one element's ability to influence the behavior of the other. More precisely, there is a directed interaction from the $j$th to the $i$th element of a network if the $j$th network element can influence the state of the $i$th network element (where there may be no influence of the $i$th network element on the $j$th). The dynamics of a network can be formalized as follows.

Definition 1. (Dynamical Network) Let $(X_i, d_i)$ be a complete metric space for $1 \le i \le n$. Let $(X, d_{\max})$ be the complete metric space formed by endowing the product space $X = \bigoplus_{i=1}^{n} X_i$ with the metric

$$d_{\max}(x, y) = \max_i d_i(x_i, y_i),$$

where $x, y \in X$ and $x_i, y_i \in X_i$. Let $F : X \to X$ be a continuous map, with $i$th component function $F_i : X \to X_i$ given by $F_i = F_i(x_1, x_2, \ldots, x_n)$ in which $x_j \in X_j$ for $j = 1, 2, \ldots, n$, where it is understood that there may be no actual dependence of $F_i$ on $x_j$. The dynamical system $(F, X)$ generated by iterating the function $F$ on $X$ is called a dynamical network.
If an initial condition $x^0 \in X$ is given, we define the $k$th iterate of $x^0$ as $x^k = F^k(x^0)$, with orbit $\{F^k(x^0)\}_{k=0}^{\infty} = \{x^0, x^1, x^2, \ldots\}$, in which $x^k$ is the state of the network at time $k \ge 0$.

The component function $F_i = F_i(x_1, x_2, \ldots, x_n)$ describes the dynamics of, and interactions with, the $i$th element of the network, where there is a directed interaction from the $j$th to the $i$th element if $F_i$ actually depends on $x_j$. The function $F : X \to X$ describes all interactions of the network $(F, X)$. For the initial condition $x^0 \in X$ the state of the $i$th element at time $k \ge 0$ is $x_i^k = (F^k(x^0))_i \in X_i$, so that $X_i$ is the element's state space. The state space $X = \bigoplus_{i=1}^{n} X_i$ is the collective state space of all network elements.

While the definition of a dynamical network allows the function $F$ to be defined on general products of complete metric spaces, for the sake of intuition and direct applications of the theory we develop in this paper, our examples will focus on dynamical networks in which $X = \mathbb{R}^n$ with the infinity norm $\|x\|_\infty = \max_i |x_i|$. To give a concrete example of a dynamical network and to illustrate results throughout this paper, we will use Cohen-Grossberg neural (CGN) networks.

Example 2.1. (Cohen-Grossberg Neural Networks) For $W \in \mathbb{R}^{n \times n}$, $\sigma : \mathbb{R} \to \mathbb{R}$, and $c_i, \epsilon \in \mathbb{R}$ for $1 \le i \le n$, let $(C, \mathbb{R}^n)$ be the dynamical network with components

$$C_i(x) = (1 - \epsilon)\,x_i + \sum_{j=1}^{n} W_{ij}\,\sigma(x_j) + c_i, \qquad 1 \le i \le n, \tag{1}$$

which is a special case of a Cohen-Grossberg neural network in discrete time [21]. The function $\sigma$ is assumed to be bounded, differentiable, and monotonically increasing, with Lipschitz constant $K$, that is, $|\sigma(x) - \sigma(y)| \le K|x - y|$ for all $x, y \in \mathbb{R}$.

In a Cohen-Grossberg neural network the variable $x_i$ represents the activation of the $i$th neuron. The bounded, monotonically increasing function $\sigma$ describes a neuron's response to its inputs.
The matrix $W$ gives the interaction strengths between each pair of neurons and describes how the neurons are connected within the network. The constants $c_i$ are constant inputs from outside the network.

In a globally stable dynamical network $(F, X)$, the state of the network tends toward an equilibrium irrespective of its initial condition. That is, the network has a globally attracting fixed point $y \in X$ such that for any $x \in X$, $F^k(x) \to y$ as $k \to \infty$. Global stability is observed in a number of important systems, including neural networks [21, 22, 19, 23, 20], epidemic models [24], and models of congestion in computer networks [25]. In such systems the globally attracting equilibrium is typically a state in which the network can carry out a specific task. Whether or not this equilibrium stays stable depends on a number of factors, including external influences but also internal processes such as the network's own growth, both of which can destabilize the network.

To give a sufficient condition under which a network $(F, X)$ is stable, we define a Lipschitz matrix (called a stability matrix in [26]).

Definition 2. (Lipschitz Matrix) For $F : X \to X$ suppose there are finite constants $a_{ij} \ge 0$ such that

$$d_i(F_i(x), F_i(y)) \le \sum_{j=1}^{n} a_{ij}\, d_j(x_j, y_j) \quad \text{for all } x, y \in X.$$

Then we call $A = [a_{ij}] \in \mathbb{R}^{n \times n}$ a Lipschitz matrix of the dynamical network $(F, X)$.

If $A$ is a Lipschitz matrix of a dynamical network, then any matrix $B \succeq A$, where $\succeq$ denotes the element-wise inequality, is also a Lipschitz matrix of the network. However, if the function $F : X \to X$ is piecewise differentiable and each $X_i \subseteq \mathbb{R}$, then the matrix $A \in \mathbb{R}^{n \times n}$ given by

$$a_{ij} = \sup_{x \in X} \left| \frac{\partial F_i}{\partial x_j}(x) \right| \tag{2}$$

is the Lipschitz matrix of minimal spectral radius of $(F, X)$ (see [26]).
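Equation (2) also suggests a quick numerical sanity check. The plain-Python sketch below estimates each $a_{ij} = \sup_x |\partial F_i / \partial x_j(x)|$ by central differences at a finite set of sample points, using the Cohen-Grossberg map of Example 2.1 with $\sigma = \tanh$. The helper names `cgn_map` and `estimate_lipschitz_matrix`, the step size `h`, and the parameter values are illustrative assumptions, not part of the paper. Because only finitely many points are probed, the result is a lower estimate of the supremum in Equation (2); it is a check on an analytic Lipschitz matrix, not a replacement for one.

```python
import math
import random

def cgn_map(x, W, c, eps, sigma=math.tanh):
    """Discrete-time Cohen-Grossberg map of Eq. (1):
    C_i(x) = (1 - eps) * x_i + sum_j W[i][j] * sigma(x_j) + c_i."""
    n = len(x)
    s = [sigma(xj) for xj in x]
    return [(1 - eps) * x[i] + sum(W[i][j] * s[j] for j in range(n)) + c[i]
            for i in range(n)]

def estimate_lipschitz_matrix(F, n, samples, h=1e-6):
    """Lower estimate of a_ij = sup_x |dF_i/dx_j(x)| from Eq. (2):
    the largest central-difference derivative seen over `samples`."""
    A = [[0.0] * n for _ in range(n)]
    for x in samples:
        for j in range(n):
            xp = list(x)
            xp[j] += h          # perturb coordinate j upward
            xm = list(x)
            xm[j] -= h          # and downward
            Fp, Fm = F(xp), F(xm)
            for i in range(n):
                A[i][j] = max(A[i][j], abs(Fp[i] - Fm[i]) / (2 * h))
    return A

# Illustrative parameters (assumed for this sketch)
W = [[0.0, -0.75], [0.75, 0.0]]
c = [-1.0, 1.0]
eps = 0.8
rng = random.Random(0)
samples = [[0.0, 0.0]] + [[rng.uniform(-3, 3), rng.uniform(-3, 3)] for _ in range(200)]
A_hat = estimate_lipschitz_matrix(lambda x: cgn_map(x, W, c, eps), 2, samples)
print(A_hat)
```

For these values the derivative of $\tanh$ attains its maximum of 1 at the origin, so including $x = 0$ among the samples recovers the supremum $\begin{bmatrix} 0.2 & 0.75 \\ 0.75 & 0.2 \end{bmatrix}$ up to finite-difference error; with a poorly chosen sample set the estimate can fall short of it.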
From a computational point of view, if the function $F : X \to X$ is differentiable, a Lipschitz matrix $A = [a_{ij}]$ of $(F, X)$ can be more straightforward to find by use of Equation (2) than from the more general formulation in Definition 2. Using Equation (2), it follows that a Lipschitz matrix $A$ of the Cohen-Grossberg neural network from Example 2.1 is given by

$$a_{ij} = \begin{cases} |1 - \epsilon| + K|W_{ii}| & \text{if } i = j, \\ K|W_{ij}| & \text{otherwise.} \end{cases} \tag{3}$$

It is straightforward to verify that a Lipschitz matrix exists for a dynamical network $(F, X)$ if and only if the mapping $F$ is Lipschitz continuous. The idea is to use the Lipschitz matrix to simplify the stability analysis of nonlinear networks, using the following theorem of [26]. Here $\rho(A) = \max_{\lambda \in \sigma(A)} |\lambda|$ denotes the spectral radius of a matrix $A$, where $\sigma(A)$ is the set of eigenvalues of $A$.

Theorem 1. (Network Stability) Let $A$ be a Lipschitz matrix of a dynamical network $(F, X)$. If $\rho(A) < 1$, then $(F, X)$ is stable.

If we use the Lipschitz matrix $A$ to define the dynamical network $(G, X)$ by $G(x) = Ax$, then $(G, X)$ is stable if and only if $\rho(A) < 1$. Thus, a Lipschitz matrix of a dynamical network $(F, X)$ can be thought of as the worst-case linear approximation to $F$. If this approximation has a globally attracting fixed point, then the original dynamical network $(F, X)$ must also be stable. Note, however, that the condition $\rho(A) < 1$ is sufficient but not necessary for $(F, X)$ to be stable. In fact, this stronger condition implies much more than network stability, so, following the convention introduced in [26], we give it the name intrinsic stability.

Definition 3. (Intrinsic Stability) Let $A \in \mathbb{R}^{n \times n}$ be a Lipschitz matrix of a dynamical network $(F, X)$. If $\rho(A) < 1$, then we say $(F, X)$ is intrinsically stable.
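Equation (3) and Definition 3 translate into a few lines of code. The following plain-Python sketch (the function names are our own, hypothetical choices) builds the Lipschitz matrix of Equation (3) and estimates its spectral radius by power iteration, the method cited in the introduction [11]. One design choice is worth stating: plain power iteration can oscillate for nonnegative matrices that have several eigenvalues of maximal modulus, so the sketch iterates with $A + I$, whose spectral radius for $A \ge 0$ is $\rho(A) + 1$ and is the only eigenvalue of that modulus. The parameter values are those of Examples 3.1 and 3.2 ($\epsilon = 2/5$ and $\epsilon = 4/5$, $\sigma = \tanh$, so $K = 1$).

```python
def cgn_lipschitz_matrix(W, eps, K=1.0):
    """Lipschitz matrix of the Cohen-Grossberg network, Eq. (3):
    a_ii = |1 - eps| + K|W_ii| and a_ij = K|W_ij| for i != j."""
    n = len(W)
    return [[(abs(1 - eps) if i == j else 0.0) + K * abs(W[i][j])
             for j in range(n)] for i in range(n)]

def spectral_radius_nonneg(A, iters=10_000, tol=1e-13):
    """Power-iteration estimate of rho(A) for an entrywise nonnegative A.

    Iterates with B = A + I: for A >= 0, rho(B) = rho(A) + 1 and no other
    eigenvalue of B has that modulus, so the iteration does not oscillate
    (plain power iteration does, e.g. for A = [[0, 1], [1, 0]])."""
    n = len(A)
    x = [1.0] * n                  # positive start vector with max(x) == 1
    lam = 0.0
    for _ in range(iters):
        y = [x[i] + sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        new_lam = max(y)           # current estimate of rho(A + I)
        x = [v / new_lam for v in y]
        if abs(new_lam - lam) < tol:
            break
        lam = new_lam
    return new_lam - 1.0

def is_intrinsically_stable(A):
    """Definition 3 for a Lipschitz matrix A: rho(A) < 1 (numerical estimate)."""
    return spectral_radius_nonneg(A) < 1.0

W = [[0.0, -0.75], [0.75, 0.0]]    # W of Example 3.1
for eps in (0.4, 0.8):             # epsilon of Examples 3.1 and 3.2
    A = cgn_lipschitz_matrix(W, eps)
    print(eps, spectral_radius_nonneg(A), is_intrinsically_stable(A))
```

For $\epsilon = 2/5$ the estimate is $1.35$ (not intrinsically stable) and for $\epsilon = 4/5$ it is $0.95$ (intrinsically stable), the values quoted in Examples 3.1 and 3.2. Since the result is a floating-point estimate, a value extremely close to 1 should be treated as inconclusive.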
The Cohen-Grossberg neural network $(C, \mathbb{R}^n)$ has the Lipschitz matrix $A = |1 - \epsilon|\, I + K|W|$ given by Equation (3), with spectral radius

$$\rho(A) = |1 - \epsilon| + K\rho(|W|),$$

since the eigenvalues of $A$ are those of $K|W|$ shifted by $|1 - \epsilon|$ and, by the Perron-Frobenius theorem, $K\rho(|W|)$ is itself an eigenvalue of the nonnegative matrix $K|W|$. Here, $|W| \in \mathbb{R}^{n \times n}$ is the matrix $W$ in which we take the absolute value of each entry. Thus, $(C, \mathbb{R}^n)$ is intrinsically stable if $|1 - \epsilon| + K\rho(|W|) < 1$.

3. Constant-Time-Delayed Dynamical Networks

As mentioned in the introduction, the dynamics of most real networks are time-delayed. That is, an interaction between two network elements will typically not happen instantaneously but will be delayed due to the physical separation of these elements, their finite processing speeds, or other factors. We formalize this phenomenon by introducing a delay distribution matrix $D = [d_{ij}]$ into a dynamical network $(F, X)$, where each $d_{ij}$ is a nonnegative integer denoting the constant number of discrete time-steps by which the interaction from the $j$th network element to the $i$th network element is delayed.

Figure 1 (panels "Stable Network $(C, X)$" and "Constant Time-Delayed Network $(C_D, X^3)$"): Left: The stable dynamics of the two-neuron Cohen-Grossberg network $(C, X)$ from Example 3.1. Right: The unstable dynamics of the constant time-delayed version $(C_D, X^3)$ of this network, with the delay distribution given by the matrix $D$ in Equation (7).

Definition 4. (Constant Time-Delayed Dynamical Network) Let $(F, X)$ be a dynamical network and $D = [d_{ij}] \in \mathbb{N}^{n \times n}$ a delay distribution matrix with $\max_{i,j} d_{ij} \le L$, a bound on the delay length. Let $X^L$, the extension of $X$ to delay-space, be defined as

$$X^L = \bigoplus_{\ell=0}^{L} \bigoplus_{i=1}^{n} X_{i,\ell}, \quad \text{where } X_{i,\ell} = X_i \text{ for } 1 \le i \le n \text{ and } 0 \le \ell \le L.$$
Componentwise, define $F_D : X^L \to X^L$ by $(F_D)_{i,\ell+1} : X_{i,\ell} \to X_{i,\ell+1}$, given by the identity map

$$(F_D)_{i,\ell+1}(x_{i,\ell}) = x_{i,\ell} \quad \text{for } 0 \le \ell \le L - 1, \tag{4}$$

and $(F_D)_{i,0} : \bigoplus_{j=1}^{n} X_{j,d_{ij}} \to X_{i,0}$, given by

$$(F_D)_{i,0} = F_i(x_{1,d_{i1}}, x_{2,d_{i2}}, \ldots, x_{n,d_{in}}), \tag{5}$$

where $F_i : X \to X_i$ is the $i$th component function of $F$ for $i = 1, 2, \ldots, n$. Then $(F_D, X^L)$ is the delayed version of $F$ corresponding to the fixed-delay distribution $D$ with delay bound $L$.

We order the component spaces of $X^L$ in the following way. If $x \in X^L$ then

$$x = [x_{1,0}, x_{2,0}, \ldots, x_{n,0}, x_{1,1}, x_{2,1}, \ldots, x_{n,L}]^T,$$

where $x_{i,\ell} \in X_{i,\ell}$ for $i = 1, 2, \ldots, n$ and $\ell = 0, 1, \ldots, L$.

The formalization in Definition 4 captures the idea of adding a time-delay of length $d_{ij} \le L$ to an interaction: each $X_i$ is effectively copied $L$ times, and past states of the $i$th element are passed down this chain by the identity component functions $(F_D)_{i,\ell+1}$, $0 \le \ell \le L - 1$, in Equation (4). When a state of the $j$th element has been passed through the chain $d_{ij}$ times, over $d_{ij}$ time-steps, it then influences the $i$th network element, as described by the entry-wise substitution of $x_{j,d_{ij}}$ for $x_j$ in $(F_D)_{i,0}$ in Equation (5).

Example 3.1. Consider a simple two-neuron version of a Cohen-Grossberg neural network $(C, X)$ given by

$$C\left(\begin{bmatrix} x_1 \\ x_2 \end{bmatrix}\right) = \begin{bmatrix} C_1(x_1, x_2) \\ C_2(x_1, x_2) \end{bmatrix} = \begin{bmatrix} (1-\epsilon)x_1 + W_{11}\sigma(x_1) + W_{12}\sigma(x_2) + c_1 \\ (1-\epsilon)x_2 + W_{21}\sigma(x_1) + W_{22}\sigma(x_2) + c_2 \end{bmatrix}, \tag{6}$$

in which $X = \mathbb{R}^2$.
For the delay distribution $D$ given by

$$D = \begin{bmatrix} 1 & 2 \\ 1 & 3 \end{bmatrix}, \tag{7}$$

which has a maximum delay of $L = 3$, the time-delayed network $(C_D, X^3)$ is given by

$$C_D\left(\begin{bmatrix} x_{1,0} \\ x_{2,0} \\ x_{1,1} \\ x_{2,1} \\ x_{1,2} \\ x_{2,2} \\ x_{1,3} \\ x_{2,3} \end{bmatrix}\right) = \begin{bmatrix} C_1(x_{1,1}, x_{2,2}) \\ C_2(x_{1,1}, x_{2,3}) \\ x_{1,0} \\ x_{2,0} \\ x_{1,1} \\ x_{2,1} \\ x_{1,2} \\ x_{2,2} \end{bmatrix} = \begin{bmatrix} (1-\epsilon)x_{1,1} + W_{11}\sigma(x_{1,1}) + W_{12}\sigma(x_{2,2}) + c_1 \\ (1-\epsilon)x_{2,3} + W_{21}\sigma(x_{1,1}) + W_{22}\sigma(x_{2,3}) + c_2 \\ x_{1,0} \\ x_{2,0} \\ x_{1,1} \\ x_{2,1} \\ x_{1,2} \\ x_{2,2} \end{bmatrix},$$

in which $X^3 = \mathbb{R}^8$. The time-delayed network $(C_D, X^3)$ is the same as the original network $(C, X)$ except that the state $x_{1,0}$ passes through one identity mapping before it is input into $C_1$ and into $C_2$, while $x_{2,0}$ passes through two identity mappings before it is input into $C_1$ and three identity mappings before it is input into $C_2$.

A natural question to ask is whether constant time-delays affect the stability of a network. If

$$W = \begin{bmatrix} 0 & -\tfrac{3}{4} \\ \tfrac{3}{4} & 0 \end{bmatrix}, \quad c_1 = c_2 = 0, \quad \sigma(x) = \tanh(x), \quad \text{and} \quad \epsilon = \tfrac{2}{5},$$

then the dynamical network $(C, X)$ given in Equation (6) is stable, as can be seen in Figure 1 (left). However, the time-delayed version $(C_D, X^3)$ of this network is unstable, as shown in the same figure (right). That is, the time-delays given by the delay distribution $D$ have a destabilizing effect on this network. An important fact about this network is that its Lipschitz matrix has spectral radius $\rho(A) = |1 - \epsilon| + K\rho(|W|) = \tfrac{3}{5} + \tfrac{3}{4} = 1.35 > 1$, where $K = 1$ for $\sigma = \tanh$ and $\rho(|W|) = \tfrac{3}{4}$. That is, although $(C, X)$ is stable, it is not intrinsically stable.

In [26], the authors demonstrate that intrinsically stable systems are resilient to the addition of constant time-delays, as stated in the following theorem.

Theorem 2. (Intrinsic Stability and Constant Delays) Let $(F, X)$ be a dynamical network and $D = [d_{ij}]$ a delay-distribution matrix. Let $L$ satisfy $\max_{i,j} d_{ij} \le L$.
Then $(F, X)$ is intrinsically stable if and only if $(F_D, X^L)$ is intrinsically stable.

Beyond maintaining stability, any fixed point of an undelayed network $(F, X)$ is also, after extension to delay-space, a fixed point of any delayed version $(F_D, X^L)$. This is formalized in the following proposition, which is proven in the Appendix. Before stating this proposition, we require the following definition.

Definition 5. (Extension of a Point to Delay-Space) Let $E_L(x) \in X^L$ be equal to $L + 1$ copies of $x \in X$ stacked into a single vector, namely

$$E_L(x) = \begin{bmatrix} x^0 \\ x^1 \\ \vdots \\ x^L \end{bmatrix}, \quad \text{where } x^\ell = x \text{ for } 0 \le \ell \le L.$$

Proposition 1. (Fixed Points of Delayed Networks) Let $x^*$ be a fixed point of a dynamical network $(F, X)$. Then for all delay distributions $D$ with $\max_{i,j} d_{ij} \le L$, $E_L(x^*)$ is a fixed point of $(F_D, X^L)$.

As an immediate consequence of Proposition 1 and Theorem 2, if an undelayed network $(F, X)$ is intrinsically stable with a globally attracting fixed point $x^* \in X$, then the delayed version $(F_D, X^L)$ has the "same" globally attracting fixed point $E_L(x^*)$, in that $x^*$ is the restriction of $y = E_L(x^*)$ to the first $n$ component spaces of $X^L$. Thus, the asymptotic dynamics of an intrinsically stable network and of any version of the network with constant time-delays are essentially identical.

Figure 2 (panels "Intrinsically Stable Network $(C, X)$" and "Constant Time-Delayed Network $(C_D, X^3)$"): Left: The dynamics of the intrinsically stable network $(C, X)$ from Example 3.2. Right: The stable dynamics of the constant time-delayed version $(C_D, X^3)$ of this network, with the delay distribution given by the matrix $D$ in Equation (7). Both systems are attracted to the fixed point $x^* = (-0.386, 1.595)$.

Example 3.2.
Consider again the Cohen-Grossberg neural network $(C, X)$ and delay matrix $D$ given in Example 3.1, where $W$ and $\sigma$ are as before but $\epsilon = \tfrac{4}{5}$, $c_1 = -1$, and $c_2 = 1$. Since $|1 - \epsilon| + K\rho(|W|) = \tfrac{1}{5} + \tfrac{3}{4} = 0.95 < 1$, the network $(C, X)$ is intrinsically stable, with the globally attracting fixed point $x^* = (-0.386, 1.595)$, as seen in Figure 2 (left). Because $(C, X)$ is intrinsically stable, Theorem 2 together with Proposition 1 implies not only that $(C_D, X^3)$ is stable but also that its globally attracting fixed point is $E_3(x^*)$. This is shown in Figure 2 (right).

These results justify the modeling choice of ignoring constant time-delays when analyzing intrinsically stable real-world networks. However, these results do not account for time-dependent delays arising from external or stochastic influences. The main results of this paper, presented in the next section, strengthen the conclusion of Theorem 2 to cover such delays.

4. Time-Varying Time-Delayed Dynamical Networks

Constant time-delays are not the only type of time-delays that can occur in dynamical networks. More importantly, time-delays that vary with time occur in many real-world networks and in such systems are a significant source of instability [5, 6, 7]. Modeling such delays introduces even more complexity into models of dynamical networks that can already be quite complicated, which can hinder the tractability of analyzing such systems. In order to define a network with time-varying time-delays, we first define the more general concept of a switched network.

Definition 6. (Switched Network) Let $M$ be a set of Lipschitz continuous mappings on $X$ such that, for every $F \in M$, $(F, X)$ is a dynamical network. Then we call $(M, X)$ a switched network on $X$.
Given some sequence $\{F^{(k)}\}_{k=1}^{\infty} \subset M$, we say that $(\{F^{(k)}\}_{k=1}^{\infty}, X)$ is an instance of $(M, X)$, with orbits determined at time $k$ by the function

$$F^k(x) = F^{(k)} \circ \cdots \circ F^{(2)} \circ F^{(1)}(x) \quad \text{for } x \in X.$$

For the switched network $(M, X)$ we construct a Lipschitz set $S$ of $n \times n$ matrices as follows: for each $F \in M$, contribute exactly one Lipschitz matrix $A$ of $(F, X)$ to the set $S$.

The switched network $(M, X)$ is an ensemble of dynamical systems formed by taking all possible sequences of mappings $\{F^{(k)}\}_{k=1}^{\infty} \subset M$. For a switched network $(M, X)$, the set $S$ serves an analogous purpose to the Lipschitz matrix $A$ of a dynamical network $(F, X)$, as we soon demonstrate. Before describing this we consider the following example.

Example 4.1. Let $(P, \mathbb{R}^2)$ and $(Q, \mathbb{R}^2)$ be the simple dynamical networks given by

$$P(x) = \begin{bmatrix} \epsilon & 1 \\ 0 & \epsilon \end{bmatrix} x \quad \text{and} \quad Q(x) = \begin{bmatrix} \epsilon & 0 \\ 1 & \epsilon \end{bmatrix} x$$

for small $0 < \epsilon \ll 1$. For $M = \{P, Q\}$ let $\{F^{(k)}\}_{k=1}^{\infty}$ be the sequence that alternates between $P$ and $Q$, i.e. $F^{(k)} = P$ if $k$ is odd and $F^{(k)} = Q$ if $k$ is even. If we let $U = Q \circ P$, then

$$U(x) = \begin{bmatrix} \epsilon^2 & \epsilon \\ \epsilon & 1 + \epsilon^2 \end{bmatrix} x \quad \text{with} \quad \rho(U) = \tfrac{1}{2}\left(1 + 2\epsilon^2 + \sqrt{1 + 4\epsilon^2}\right) > 1.$$

Since $F^{2k}(x) = U \circ \cdots \circ U(x) = U^k x$ for $x \in \mathbb{R}^2$ and $U$ is linear, $\|F^{2k}(x)\| \to \infty$ as $k \to \infty$ for every $x \neq 0$ with a nonzero component along the leading eigenvector of $U$ (in particular, for generic $x$). This is despite the fact that both $(P, \mathbb{R}^2)$ and $(Q, \mathbb{R}^2)$ are intrinsically stable, both having the globally attracting fixed point $0$. The issue is that although the spectral radii of $P$ and $Q$ in this example are arbitrarily small, their joint spectral radius is not.

Definition 7. (Joint Spectral Radius) Given some $z^0 \in \mathbb{R}^n$ and some set of matrices $S \subset \mathbb{R}^{n \times n}$, let $z^k = A_k \cdots A_2 A_1 z^0$ for a sequence $\{A_i\}_{i=1}^{\infty} \subset S$. The joint spectral radius $\rho(S)$ of the set of matrices $S$ is the infimum of all values $\rho \ge 0$ such that for every $z^0 \in \mathbb{R}^n$ there is some constant $C > 0$ for which $\|z^k\| \le C\rho^k$ for every sequence $\{A_i\}_{i=1}^{\infty} \subset S$ and every $k \ge 1$.
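Definition 7 is hard to evaluate directly, but finite products give computable two-sided bounds: for any product length $k$, the largest value of $\rho(\Pi)^{1/k}$ over products $\Pi = A_k \cdots A_1$ of matrices from $S$ is a lower bound on $\rho(S)$, and the largest value of $\|\Pi\|^{1/k}$ in any submultiplicative norm is an upper bound. The plain-Python sketch below (hypothetical helper names; restricted to $2 \times 2$ matrices so that the spectral radius follows exactly from trace and determinant) applies these bounds to Example 4.1 with the illustrative choice $\epsilon = 0.01$. The brute-force enumeration grows like $|S|^k$, so it is only practical for small $k$.

```python
from itertools import product

def matmul(A, B):
    """Product of two square matrices given as nested lists."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def inf_norm(M):
    """Induced infinity norm: the maximum absolute row sum."""
    return max(sum(abs(v) for v in row) for row in M)

def spectral_radius_2x2(M):
    """Exact spectral radius of a real 2x2 matrix from trace and determinant."""
    tr = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    disc = tr * tr - 4 * det
    if disc >= 0:
        r = disc ** 0.5
        return max(abs(tr + r), abs(tr - r)) / 2
    return det ** 0.5  # complex-conjugate pair with |lambda|^2 = det

def jsr_bounds_2x2(mats, k):
    """max rho(Pi)^(1/k) <= JSR <= max ||Pi||_inf^(1/k) over all Pi = A_k ... A_1."""
    lo = hi = 0.0
    for seq in product(mats, repeat=k):
        prod_mat = seq[0]
        for M in seq[1:]:
            prod_mat = matmul(M, prod_mat)  # left-multiply: A_k ... A_2 A_1
        lo = max(lo, spectral_radius_2x2(prod_mat) ** (1.0 / k))
        hi = max(hi, inf_norm(prod_mat) ** (1.0 / k))
    return lo, hi

eps = 0.01                            # illustrative value of epsilon
P = [[eps, 1.0], [0.0, eps]]
Q = [[eps, 0.0], [1.0, eps]]
print(spectral_radius_2x2(P), spectral_radius_2x2(Q))  # both approximately eps
lo, hi = jsr_bounds_2x2([P, Q], 2)
print(lo, hi)
```

Already at $k = 2$ the lower bound is $\sqrt{\rho(U)} \approx 1.0001 > 1$, even though $\rho(P) = \rho(Q) = \epsilon$. Applied with $k = 1$ to the Lipschitz set $S$ of Example 4.2, both bounds equal $0.95$, consistent with the value of $\rho(S)$ reported there.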
It is known that { z k } ∞ k = 1 con verges to the origin for all z 0 ∈ R n if and only if ρ ( S ) < 1 [27]. This allows us to state the following result regarding the asymptotic behavior of a nonlinear switched network ( M , X ) whose Lipschitz set S satisfies ρ ( S ) < 1. Main Result 1. (Independence of Initial Conditions for Switched Networks) Let S be a Lipschitz set of a switched network ( M , X ) satisfying ρ ( S ) < 1 , and let ( { F ( k ) } ∞ k = 1 , X ) be an instance of ( M , X ) . Then for all initial conditions x 0 , y 0 ∈ X , ther e exists some C > 0 such that d ma x ( F k ( x 0 ) , F k ( y 0 )) ≤ C ρ ( S ) k . Additionally , if x ∗ is a shar ed fixed point of ( F , X ) for all F ∈ M , then lim k →∞ F k ( x 0 ) = x ∗ for all initial conditions x 0 ∈ X . 9 Out[995]= 5 10 15 20 25 - 3 - 2 - 1 1 2 3 10 20 30 40 50 60 70 - 0.4 - 0.2 0.2 0.4 Independence to Initial Conditions Dynamics with a Shared Fixed Point Figure 3: Left: The dynamics of the switched network in Example 4.2 is shown for two di ff erent initial conditions x 0 = (2 , 3) (shown in blue and yellow) and y 0 = ( − 2 , − 3) (shown in green and red). As the corresponding joint spectral radius ρ ( S ) of the network is less than 1, the orbits of these initial conditions conv erge to each other . Right: Modifying this switched network so that both F , G ∈ M have the shared fixed point 0 results in a stable switched system with the globally attracting fixed point 0 . Hence, if the joint spectral radius of the Lipschitz set S of M is less than 1, all orbits in a switched network become asymptotically close to one another as time goes to infinity . Even if this limit-orbit is not conv ergent to any fixed point, this result implies an asymptotic independence to initial conditions. An example of this is the follo wing. Example 4.2. Let ( G , X ) and ( H , X ) be the Cohen-Grossber g neural networks given by G " x 1 x 2 # ! 
= (1 − 1 ) x 1 − 3 4 φ ( x 2 ) + c 1 (1 − 1 ) x 2 + 3 4 φ ( x 1 ) + c 2 and H " x 1 x 2 # ! = (1 − 2 ) x 1 + 1 4 φ ( x 2 ) + d 1 (1 − 2 ) x 2 + 1 4 φ ( x 1 ) + d 2 , r espectively , in which σ ( x ) = tanh( x ) , 1 = 4 5 , 2 = 3 10 , c 1 = d 2 = − 1 , and c 2 = d 1 = 1 . Setting M = { G , H } then S is the Lipschitz set of M given by S = 1 5 3 4 3 4 1 5 , 7 10 1 4 1 4 7 10 . It can be shown that the joint spectral r adius ρ ( S ) = . 95 (by use of Pr oposition 2 in Section 5). As this is less than 1, then for any instance ( { F ( k ) } ∞ k = 1 , X ) of M and any initial conditions x 0 and y 0 , we have lim k →∞ d ma x ( F k ( x 0 ) , F k ( y 0 )) = 0 by the Main Result 1. This can be seen in F igure 3 (left) wher e ( { F ( k ) } ∞ k = 1 , X ) is the instance given by { F ( k ) } ∞ k = 1 = { G , G , G , H , H , H , . . . } . If we set c 1 = c 2 = d 1 = d 2 = 0 in both ( G , X ) and ( H , X ) then both systems have the shared fixed point 0 . In this case Main Result 1 indicated that any instance ( { F ( k ) } ∞ k = 1 , X ) of M will be stable with the globally attracting fixed point 0 . This is shown in F igure 3 (right) wher e again { F ( k ) } ∞ k = 1 = { G , G , G , H , H , H , . . . } . Thus, as might be e xpected from the complicated nature of a switched system, the condition ρ ( S ) < 1 alone is not able to match the strong implication of global stability , as ρ ( A ) < 1 does for a dynamical network (see Theorem [2]). Furthermore, it is often notoriously di ffi cult to compute or approximate the joint spectral radius ρ ( S ) of a general set of matrices S [27, 33]. 10 Out[470]= 5 10 15 20 25 - 1 1 2 10 20 30 40 - 1 1 2 Periodically Delayed Network ( C P , X 5 ) Stochastically Delayed Network ( C U , X 10 ) Figure 4: Left: The dynamics of the intrinsically stable two-neuron Cohen-Grossberg network ( C , X ) from Example 3.2 is shown in which the network has periodic time-varying time-delays. 
Right: The dynamics of the same Cohen-Grossberg network with stochastic time-varying time-delays. Both systems are attracted to the fixed point $x^* = (-0.386, 1.595)$, similar to the behavior shown in Figure 2.

Somewhat surprisingly, these issues are resolved when the switched system arises from a network experiencing time-varying time-delays. In this case, computing the joint spectral radius reduces to computing the spectral radius of a Lipschitz matrix of the original undelayed dynamical network $(F, X)$, which even for large systems can be done efficiently using the power method [11]. This provides a general and computationally efficient method for verifying asymptotic stability despite time-varying time-delays.

Main Result 2. (Intrinsic Stability and Time-Varying Time-Delayed Networks) Suppose $(F, X)$ is intrinsically stable with $\rho(A) < 1$, where $A \in \mathbb{R}^{n\times n}$ is a Lipschitz matrix of $F$ and $x^*$ is the network's globally attracting fixed point. Let $L > 0$, let $M_d = \{F_D \mid D \in \mathbb{N}^{n\times n} \text{ with } \max_{ij} d_{ij} \le L\}$, and let $S_d$ be the Lipschitz set of $M_d$. Then $E_L(x^*)$ is a globally attracting fixed point of every instance $(\{F_{D(k)}\}_{k=1}^{\infty}, X_L)$ of $(M_d, X_L)$. Furthermore, $\rho(S_d) = \rho(A_L) < 1$, where
$$A_L = \begin{bmatrix} 0_{n\times nL} & A \\ I_{nL} & 0_{nL\times n} \end{bmatrix}.$$

Hence, any intrinsically stable dynamical network $(F, X)$ retains convergence to the same equilibrium even when it experiences time-varying time-delays. This extends Theorem 2.3 of [26] to the much larger and more complicated class of switching-delay networks. Furthermore, note that intrinsic stability is a delay-independent result, which makes no assumption regarding the rate of growth of the time delays. In Main Result 2 we call $\rho(S_d)$ the convergence rate of the system, since it provides the exponential bound on the rate at which all orbits converge to the fixed point $E_L(x^*)$.
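Main Result 2 reduces the stability test to the single matrix $A_L$. The following is a minimal Python/NumPy sketch, assuming a small dense Lipschitz matrix: it builds the delayed Lipschitz matrix $A_D$ of Lemma 1 (Appendix C), obtains $A_L$ as the special case in which every interaction is delayed by exactly $L$ steps, and checks numerically that no admissible delay distribution $D$ yields a larger spectral radius. It also checks the identity $\rho(A_L) = \rho(A)^{1/(L+1)}$, which holds because the eigenvalues of $A_L$ are exactly the $(L+1)$th roots of the eigenvalues of $A$. The function names are hypothetical, and the test matrix is the first Lipschitz matrix of Example 4.2, borrowed only as a convenient matrix with $\rho(A) = 0.95 < 1$.

```python
import itertools

import numpy as np


def spectral_radius(M):
    """Largest eigenvalue modulus; dense eigvals is adequate for small matrices."""
    return float(np.max(np.abs(np.linalg.eigvals(M))))


def delayed_lipschitz(A, D, L):
    """Lipschitz matrix A_D of Lemma 1 for a delay distribution D with entries in 0..L.

    The first block row holds A_0, ..., A_L, where (A_l)_ij = a_ij if d_ij == l and
    0 otherwise; the identity blocks below shift the stored history by one step.
    """
    A = np.asarray(A, dtype=float)
    D = np.asarray(D)
    n = A.shape[0]
    AD = np.zeros((n * (L + 1), n * (L + 1)))
    for l in range(L + 1):
        AD[:n, l * n:(l + 1) * n] = np.where(D == l, A, 0.0)
    AD[n:, :n * L] = np.eye(n * L)
    return AD


def A_L(A, L):
    """A_L of Main Result 2, i.e. [[0, A], [I_nL, 0]]: the case D = L * ones."""
    n = np.asarray(A).shape[0]
    return delayed_lipschitz(A, np.full((n, n), L), L)


# Usage: Lipschitz matrix of G from Example 4.2, with rho(A) = 0.95.
A = np.array([[0.2, 0.75], [0.75, 0.2]])
L = 2
rho_L = spectral_radius(A_L(A, L))
# Brute-force worst case over all 3^4 delay distributions with entries in {0, 1, 2}.
worst = max(
    spectral_radius(delayed_lipschitz(A, np.array(d).reshape(2, 2), L))
    for d in itertools.product(range(L + 1), repeat=4)
)
print(rho_L, spectral_radius(A) ** (1 / (L + 1)), worst)
```

Enumerating every $D$ is only feasible for tiny $n$ and $L$; the point of Main Result 2 is that this enumeration is unnecessary, because the single matrix $A_L$ already attains the maximum.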
It is worth emphasizing that, as a consequence of this result, to determine the asymptotic behavior of a switched network $(M, X_L)$ with $M = \{F_D \mid D \in \mathbb{N}^{n\times n} \text{ with } \max_{ij} d_{ij} \le L\}$, in which the presence and magnitude of time delays is not exactly known, it suffices to study the dynamics of the much simpler undelayed system $(F, X)$.

Example 4.3. (Periodic and Stochastic Time-Varying Delays) Consider the intrinsically stable Cohen-Grossberg neural network $(C, X)$ from Example 3.2, with the exception that we let $\{D(k)\}_{k=1}^{\infty}$ be the sequence of delay distributions given by
$$D(k) = \begin{bmatrix} k \bmod 5 & k \bmod 6 \\ k \bmod 6 & k \bmod 5 \end{bmatrix}. \tag{8}$$
These time-varying delays are periodic with period 30 and $L = 5$. The effect of these delays on the network's dynamics can be seen in Figure 4 (left), where we let $(C_P, X_5)$ denote the network $(C, X)$ with time-varying time-delays given by (8). Note that although the trajectories are altered by these delays, they still converge to the same fixed point $x^* = (-0.386, 1.595)$ as in the undelayed and constant time-delayed networks (cf. Figure 2), as guaranteed by Main Result 2. If instead we let
$$D(k) \sim U[0, 10] \in \mathbb{N}^{2\times 2} \tag{9}$$
be the random matrix in which each entry is sampled uniformly from the integers $\{0, 1, \dots, 10\}$, the resulting switched network is still stable, as guaranteed by Main Result 2. The network's trajectories still converge to the point $x^* = (-0.386, 1.595)$, as shown in Figure 4 (right), where we let $(C_U, X_{10})$ denote the network $(C, X)$ with time-varying time-delays given by (9).

4.1. Application: Linear Systems with Distinct Delayed and Undelayed Interactions

A number of papers have published delay-dependent results regarding time-varying time-delayed systems whose delayed interactions are separated from the undelayed interactions (see, for instance, [14, 15, 16, 17, 18]).
By delay-dependent we mean that the criterion that determines whether a network is stable depends on the length of the delays the network experiences, as opposed to the delay-independent results of Section 4. We demonstrate how to analyze these systems by use of Main Result 2, and compare our results to those in the literature. Consider a linear system of the form
$$x_{k+1} = A x_k + B x_{k-\tau(k)}, \quad \text{where } 1 \le \tau(k) \le L \text{ for } k \ge 0, \tag{10}$$
where $A, B \in \mathbb{R}^{n\times n}$ are constant matrices, and $\tau(k)$ is a positive integer representing the magnitude of the time-varying time-delay, bounded by some $L > 0$. Here $A$ and $B$ represent the distinct weights of the undelayed and delayed interactions, respectively. The minimally delayed version of system (10) is given by
$$x_{k+1} = A x_k + B x_{k-1}, \tag{11}$$
which we can express in terms of a single transition matrix $\tilde{A}$ as
$$\tilde{x}_{k+1} = \tilde{A}\tilde{x}_k, \quad \text{where } \tilde{A} = \begin{bmatrix} A & B \\ I_{n\times n} & 0_{n\times n} \end{bmatrix} \text{ and } \tilde{x}_k = \begin{bmatrix} x_k \\ x_{k-1} \end{bmatrix}. \tag{12}$$
As in [28], we say that system (12) is the lifted representation of system (11). Now observe that we can represent system (10) in the notation of Main Result 2 as the system $(F, \mathbb{R}^{2n})$, where $F(\tilde{x}_k) = \tilde{A}\tilde{x}_k$. Since $F$ is linear, a Lipschitz matrix of $F$ is $|\tilde{A}| \in \mathbb{R}^{2n\times 2n}$, the entrywise absolute value of $\tilde{A}$ (which equals $\tilde{A}$ when $\tilde{A}$ is nonnegative), and the zero vector $\mathbf{0} \in \mathbb{R}^{2n}$ is a fixed point. Then system (10) is the switched system instance $(\{F_{D(k)}\}_{k=1}^{\infty}, X_L)$ obtained from the sequence of delay distributions
$$D(k) = \tau(k)\begin{bmatrix} 0_{n\times n} & 1_{n\times n} \\ 0_{n\times n} & 0_{n\times n} \end{bmatrix},$$
where $1_{n\times n}$ is the $n\times n$ matrix of ones, ensuring that delays only occur in the delayed interactions modeled by $B$, with magnitude $\tau(k) \le L$. It follows immediately from Main Result 2 that system (10) is stable for arbitrarily large delay bounds $L$ when $(F, \mathbb{R}^{2n})$ is intrinsically stable, that is, when $\rho(|\tilde{A}|) < 1$.

Example 4.4. (Intrinsically Stable) Consider system (10) with
$$A = \begin{bmatrix} 0.6 & 0 \\ 0.35 & 0.7 \end{bmatrix}, \quad B = \begin{bmatrix} 0.1 & 0 \\ 0.2 & 0.1 \end{bmatrix}.$$
The transition matrix of the lifted representation is
$$\tilde{A} = \begin{bmatrix} 0.6 & 0 & 0.1 & 0 \\ 0.35 & 0.7 & 0.2 & 0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix},$$
which satisfies $\rho(\tilde{A}) \approx 0.822 < 1$; since $\tilde{A}$ is nonnegative, it is its own Lipschitz matrix. Hence this system is intrinsically stable, and so is in fact stable for an arbitrarily large delay bound $L > 0$. In the following table, we compare this delay-independent result with the delay-dependent results of [14, 15, 16, 17, 18]:

| Method | Max Upper Bound $L$ |
|---|---|
| Theorem 3.1 of [15] | 10 |
| Theorems 1 and 2 of [16] | 13 |
| Theorem 3.2 of [17] | 12 |
| Theorem 1 of [18] | 15 |
| Theorem 2 of [14] | $10 \cdot 10^{21}$ |
| Main Result 2 of this paper | $\infty$ |

It is worth noting that the methods of [14, 15, 16, 17, 18] employ techniques involving linear matrix inequalities and Lyapunov functionals. These methods are avoided with a straightforward application of Main Result 2, which only requires computing the spectral radius of a single $4\times 4$ matrix. As elaborated in Section 6, checking intrinsic stability is extremely computationally efficient. Furthermore, because intrinsic stability is a delay-independent result, it becomes immediately clear that an intrinsically stable network is stable for arbitrarily large delay bounds $L$.

Beyond improving the results described in Example 4.4, in a similar manner we can also improve the results of Example 2 of [29]. In doing so we replicate the delay-independent result of Example 6.1 of [30], in which the system is found to be stable for arbitrarily large $L$. However, we do so in a much more computationally efficient manner, without having to solve a series of linear matrix inequalities (see [29]).

There are systems of the form (10) that are not intrinsically stable, in which case delay-dependent results provide greater insight.

Example 4.5. (Not Intrinsically Stable) Consider system (10) with
$$A = \begin{bmatrix} 0.8 & 0 \\ 0.05 & 0.9 \end{bmatrix}, \quad B = \begin{bmatrix} -0.1 & 0 \\ -0.2 & -0.1 \end{bmatrix}.$$
The transition matrix of the lifted representation is
$$\tilde{A} = \begin{bmatrix} 0.8 & 0 & -0.1 & 0 \\ 0.05 & 0.9 & -0.2 & -0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix},$$
whose Lipschitz matrix $|\tilde{A}|$ satisfies $\rho(|\tilde{A}|) = 1$.
Hence this system is not intrinsically stable, even though [14] showed that it is stable for all $0 \le L \le 9.61 \times 10^8$.

Even though some systems that are not intrinsically stable turn out to be stable, at least for certain types of delays, the fact that intrinsic stability can be verified with relatively little effort may be reason enough to check it. In fact, a spectral radius slightly above or equal to 1 may indicate that a system's stability is resilient to time delays, as in Example 4.5. However, this is still an open question. For further analysis of the computational complexity of checking intrinsic stability, see Section 6.

5. Row-Independence Closure of Switched Networks

Using Main Result 1 and Main Result 2, we can extend our analysis of systems with time-varying time-delays to a more general class of switched networks.

Definition 8. (Row-Independence Closure) Let $(M, X)$ be a switched network with Lipschitz set $S$. We define $RI(S)$, the row-independence closure of $S$, by
$$RI(S) = \{A^* \mid a^*_i = a^{(i)}_i \text{ for some } A^{(1)}, \dots, A^{(n)} \in S\},$$
where $a^{(i)}_i$ denotes the $i$th row of the matrix $A^{(i)}$.

The idea is that row-independence indicates that there is no conditional relationship between the rows of the matrices in $RI(S)$: each row may be taken from any matrix in $S$, independently of the other rows. For example, if $S = \{A, B\} \subset \mathbb{R}^{2\times 2}$, then $RI(S)$ consists of $A$, $B$, the matrix whose first row is that of $A$ and whose second row is that of $B$, and the matrix formed the other way around. Note that $S \subseteq RI(S)$. The row-independence closure provides a computationally efficient sufficient condition for satisfying the hypothesis of Main Result 1. The following proposition follows directly from Definition 8 and the results of [27] (restated as Theorem 5 in the Appendix).

Proposition 2. (Row-Independence Closure, Intrinsic Stability, and Switched Networks) Let $(M, X)$ be a switched network with Lipschitz set $S$. Then
$$\rho(S) \le \rho(RI(S)) = \max_{A \in RI(S)} \rho(A).$$
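Proposition 2 can be checked mechanically for small, finite Lipschitz sets. The following Python/NumPy sketch enumerates $RI(S)$ by choosing the $i$th row independently from the $i$th rows of the matrices in $S$ (Definition 8) and returns the bound $\max_{A \in RI(S)} \rho(A)$. It assumes $S$ is a finite list of nonnegative matrices, as Theorem 5 requires. The enumeration is brute force, growing like the product of the numbers of distinct rows, so it is meant only for small examples such as Example 4.2; the function names are hypothetical.

```python
import itertools

import numpy as np


def row_independence_closure(S):
    """RI(S) from Definition 8: row i may come from any matrix in S, independently."""
    S = [np.asarray(M, dtype=float) for M in S]
    n = S[0].shape[0]
    candidate_rows = []
    for i in range(n):
        rows_i = []
        for M in S:
            # Keep each distinct i-th row once so the closure has no duplicates.
            if not any(np.array_equal(M[i], r) for r in rows_i):
                rows_i.append(M[i])
        candidate_rows.append(rows_i)
    # One matrix per combination of row choices.
    return [np.vstack(choice) for choice in itertools.product(*candidate_rows)]


def spectral_radius(M):
    return float(np.max(np.abs(np.linalg.eigvals(M))))


def proposition2_bound(S):
    """Upper bound rho(S) <= max over RI(S) of rho(A), valid for nonnegative S."""
    return max(spectral_radius(M) for M in row_independence_closure(S))


# Lipschitz set S of Example 4.2.
S = [np.array([[0.2, 0.75], [0.75, 0.2]]),
     np.array([[0.7, 0.25], [0.25, 0.7]])]
closure = row_independence_closure(S)
print(len(closure), proposition2_bound(S))
```

Since every matrix of $S$ also lies in $RI(S)$ and each has spectral radius $0.95$, the upper bound coincides with the lower bound $\max_{A \in S}\rho(A) \le \rho(S)$, which confirms $\rho(S) = 0.95$ in Example 4.2.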
This allows for the following extension of Main Result 2, which provides a sufficient condition ensuring that when time-varying time-delays are applied to an already switched stable system, the new resulting switched system retains stability.

Main Result 3. (Intrinsic Stability and Row-Independent Switched Networks) Let $(M, X)$ be a switched network with Lipschitz set $S$. Assume $x^*$ is a shared fixed point of $(F, X)$ for all $F \in M$ and $\rho(A) < 1$ for all $A \in RI(S)$. Let $L > 0$, let $M_d = \{F_D \mid F \in M, D \in \mathbb{N}^{n\times n} \text{ with } \max_{ij} d_{ij} \le L\}$, and let $S_d$ be the Lipschitz set of $M_d$. Then $E_L(x^*)$ is a globally attracting fixed point of every instance $(\{F^{(k)}_{D(k)}\}_{k=1}^{\infty}, X_L)$ of $(M_d, X_L)$. Furthermore,
$$\rho(S_d) \le \max_{A \in RI(S)} \rho(A_L) < 1,$$
where, given some $A \in \mathbb{R}^{n\times n}$, $A_L$ is defined as
$$A_L = \begin{bmatrix} 0_{n\times nL} & A \\ I_{nL} & 0_{nL\times n} \end{bmatrix}.$$

Thus, a switched network with a shared fixed point that also satisfies $\rho(A) < 1$ for all $A \in RI(S)$ retains convergence to the same equilibrium, even when it experiences time-varying time-delays. This extends Main Result 2 further, to the even more complicated class of switched networks with time-varying time-delays.

5.1. Application: Switched Linear Systems with Distinct Delayed and Undelayed Interactions

We now extend our analysis of Section 4.1 to the case where system (10) is also a switched system:
$$x_{k+1} = A_{\sigma(k)} x_k + B_{\sigma(k)} x_{k-\tau(k)}, \quad \text{where } 1 \le \tau(k) \le L, \tag{13}$$
where, as before, each $A_{\sigma(k)}, B_{\sigma(k)} \in \mathbb{R}^{n\times n}$ is a constant matrix indexed by $\sigma(k)$, and $\tau(k)$ is a positive integer representing the magnitude of the time-varying time-delay, bounded by some $L > 0$. The difference between system (13) and system (10) is that in system (13) there are multiple possibilities for the transition weights of the undelayed and delayed interactions, given by $A_{\sigma(k)}$ and $B_{\sigma(k)}$, respectively.
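To see Main Result 3 at work on system (13), the Python/NumPy sketch below forms the lifted Lipschitz matrices $|\tilde{A}_\sigma| = \begin{bmatrix} |A_\sigma| & |B_\sigma| \\ I_n & 0_n \end{bmatrix}$ and then simulates (13) with the switching index $\sigma(k)$ and the delay $\tau(k) \in \{1, \dots, L\}$ drawn at random at every step. The random switching and delay rule, the bound $L = 50$, the step count, the seed, and the function names are illustrative assumptions rather than part of the original analysis. The test matrices are those of Example 5.1 in this section. Applied to a single pair $(A, B)$, the same helper also gives $\rho \approx 0.822$ for Example 4.4 and $\rho = 1$ for Example 4.5.

```python
import numpy as np


def lifted_lipschitz(A, B):
    """Lipschitz matrix [[|A|, |B|], [I, 0]] of one lifted linear map (cf. (15))."""
    n = A.shape[0]
    top = np.hstack([np.abs(A), np.abs(B)])
    bottom = np.hstack([np.eye(n), np.zeros((n, n))])
    return np.vstack([top, bottom])


def spectral_radius(M):
    return float(np.max(np.abs(np.linalg.eigvals(M))))


def simulate(As, Bs, L, steps, rng):
    """Iterate x_{k+1} = A_s x_k + B_s x_{k-tau}, drawing s and 1 <= tau <= L each step."""
    n = As[0].shape[0]
    history = [rng.uniform(-1.0, 1.0, n) for _ in range(L + 1)]  # x_{-L}, ..., x_0
    for _ in range(steps):
        s = rng.integers(len(As))        # switching index sigma(k)
        tau = rng.integers(1, L + 1)     # delay tau(k) in {1, ..., L}
        x_next = As[s] @ history[-1] + Bs[s] @ history[-1 - tau]
        history.append(x_next)
        history.pop(0)                   # keep only the last L + 1 states
    return history[-1]


# Example 5.1: A_1 = A_2, A_3 = A_4, B_1 = B_3, B_2 = B_4.
A12 = np.array([[0.0, 0.3], [-0.2, 0.1]])
A34 = np.array([[0.0, 0.3], [-0.2, -0.1]])
B13 = np.array([[0.0, 0.1], [0.0, 0.2]])
B24 = np.array([[0.0, 0.1], [0.0, 0.0]])
As = [A12, A12, A34, A34]
Bs = [B13, B24, B13, B24]

radii = [spectral_radius(lifted_lipschitz(A, B)) for A, B in zip(As, Bs)]
x_final = simulate(As, Bs, L=50, steps=5000, rng=np.random.default_rng(0))
print(np.round(radii, 2), np.max(np.abs(x_final)))
```

For these matrices every row of $[\,|A_\sigma|\ |B_\sigma|\,]$ sums to at most 0.5, so the trajectory contracts toward the shared fixed point $\mathbf{0}$ whatever the switching and delays; a single random run illustrates, but does not prove, the guarantee of Main Result 3.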
The minimally delayed version of system (13) is given by
$$x_{k+1} = A_{\sigma(k)} x_k + B_{\sigma(k)} x_{k-1}, \tag{14}$$
so the lifted version of system (14) is
$$\tilde{x}_{k+1} = \tilde{A}_{\sigma(k)}\tilde{x}_k, \quad \text{where } \tilde{A}_{\sigma(k)} = \begin{bmatrix} A_{\sigma(k)} & B_{\sigma(k)} \\ I_{n\times n} & 0_{n\times n} \end{bmatrix} \text{ and } \tilde{x}_k = \begin{bmatrix} x_k \\ x_{k-1} \end{bmatrix}. \tag{15}$$
Now observe that we may represent system (13) in the notation of Main Result 3 as the system $(M_0, \mathbb{R}^{2n})$, where $M_0 = \{F \mid F(\tilde{x}_k) = \tilde{A}_{\sigma(k)}\tilde{x}_k\}$. Since each $F$ is linear, a Lipschitz matrix of $F$ is $|\tilde{A}_{\sigma(k)}|$, and the zero vector $\mathbf{0} \in \mathbb{R}^{2n}$ is a shared fixed point. Then system (13) is the switched system instance $(\{F^{(k)}_{D(k)}\}_{k=1}^{\infty}, X_L)$ obtained from the sequence of transition matrices $\tilde{A}_{\sigma(k)}$ and the sequence of delay distributions
$$D(k) = \tau(k)\begin{bmatrix} 0_{n\times n} & 1_{n\times n} \\ 0_{n\times n} & 0_{n\times n} \end{bmatrix}$$
once the sequences $\sigma(k)$ and $\tau(k)$ are determined. This ensures that delays only occur in the delayed interactions modeled by $B_{\sigma(k)}$, with magnitude $\tau(k)$. It follows immediately from Main Result 3 that system (13) is stable for arbitrarily large delay bounds $L$ when $(M_0, \mathbb{R}^{2n})$ satisfies the relatively simple condition $\rho(A) < 1$ for all $A \in RI(S)$, where $S$ is the Lipschitz set of $M_0$. We apply our analysis to two examples from [31] to demonstrate the effectiveness of Main Result 3.

Example 5.1. (Row-Independent Switched Network) Consider system (13) with
$$A_1 = A_2 = \begin{bmatrix} 0 & 0.3 \\ -0.2 & 0.1 \end{bmatrix}, \quad A_3 = A_4 = \begin{bmatrix} 0 & 0.3 \\ -0.2 & -0.1 \end{bmatrix}, \quad B_1 = B_3 = \begin{bmatrix} 0 & 0.1 \\ 0 & 0.2 \end{bmatrix}, \quad B_2 = B_4 = \begin{bmatrix} 0 & 0.1 \\ 0 & 0 \end{bmatrix}.$$
In Example 1 of [31], this system was shown to be exponentially stable for a delay bound of $L = 13$. The set $M_0$ of lifted transition matrices consists of the following four matrices:
$$\tilde{A}_1 = \begin{bmatrix} 0 & 0.3 & 0 & 0.1 \\ -0.2 & 0.1 & 0 & 0.2 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix}, \quad \tilde{A}_2 = \begin{bmatrix} 0 & 0.3 & 0 & 0.1 \\ -0.2 & 0.1 & 0 & 0 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix},$$
$$\tilde{A}_3 = \begin{bmatrix} 0 & 0.3 & 0 & 0.1 \\ -0.2 & -0.1 & 0 & 0.2 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix}, \quad \tilde{A}_4 = \begin{bmatrix} 0 & 0.3 & 0 & 0.1 \\ -0.2 & -0.1 & 0 & 0 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix}.$$
Note that here $M_0 = RI(M_0)$. Furthermore, the corresponding Lipschitz matrices satisfy $\rho(|\tilde{A}_1|) = \rho(|\tilde{A}_3|) \approx 0.59 < 1$ and $\rho(|\tilde{A}_2|) = \rho(|\tilde{A}_4|) \approx 0.39 < 1$. Hence, this switched system is intrinsically stable, and so is in fact stable for any bound $L < \infty$, no matter which sequences of $A_{\sigma(k)}$ and $B_{\sigma(k)}$ are chosen. It is worth mentioning that this conclusion was reached without having to iteratively solve a system of linear matrix inequalities as in [31].

In Example 5.1, the set $M_0$ trivially satisfied $M_0 = RI(M_0)$. In our next example, we consider the more nuanced case where $M_0$ is a proper subset of $RI(M_0)$.

Example 5.2. (Row-Independence Closure of a Switched Network) Consider the following modified version of system (13), where we hold $A$ and $B$ constant in time but add a switching control term $c_{\sigma(k)} u_k$:
$$x_{k+1} = A x_k + B x_{k-\tau(k)} + c_{\sigma(k)} u_k, \quad \text{where } 1 \le \tau(k) \le L \text{ and } u_k = q^T x_k. \tag{16}$$
This system has the minimally delayed lifted representation
$$\tilde{x}_{k+1} = \tilde{A}_{\sigma(k)}\tilde{x}_k, \quad \text{where } \tilde{A}_{\sigma(k)} = \begin{bmatrix} A + c_{\sigma(k)} q^T & B \\ I_{n\times n} & 0_{n\times n} \end{bmatrix} \text{ and } \tilde{x}_k = \begin{bmatrix} x_k \\ x_{k-1} \end{bmatrix}. \tag{17}$$
In Example 2 of [31], system (16) was shown to be stable with an upper delay bound $L = 2$ for
$$A = \begin{bmatrix} 0.7 & 0 \\ 0.05 & 0.8 \end{bmatrix}, \quad B = \begin{bmatrix} -0.1 & 0 \\ -0.3 & -0.1 \end{bmatrix}, \quad q = \begin{bmatrix} 0.1510 \\ -0.2176 \end{bmatrix}$$
and
$$c_1 = \begin{bmatrix} 0 \\ 0.01 \end{bmatrix}, \quad c_2 = \begin{bmatrix} 0.01 \\ 0 \end{bmatrix}, \quad c_3 = \begin{bmatrix} 0.01 \\ 0.01 \end{bmatrix}.$$
In this case we have three matrices in $M_0$:
$$\tilde{A}_1 = \begin{bmatrix} 0.7 & 0 & -0.1 & 0 \\ 0.050151 & 0.7997824 & -0.3 & -0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix}, \quad \tilde{A}_2 = \begin{bmatrix} 0.700151 & -0.0002176 & -0.1 & 0 \\ 0.05 & 0.8 & -0.3 & -0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix},$$
$$\tilde{A}_3 = \begin{bmatrix} 0.700151 & -0.0002176 & -0.1 & 0 \\ 0.050151 & 0.7997824 & -0.3 & -0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix}.$$
However, $RI(M_0)$ contains $\tilde{A}_1$, $\tilde{A}_2$, $\tilde{A}_3$ as well as the fourth matrix
$$\tilde{A}_4 = \begin{bmatrix} 0.7 & 0 & -0.1 & 0 \\ 0.05 & 0.8 & -0.3 & -0.1 \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \end{bmatrix},$$
corresponding to $c_4 = [0, 0]^T$. Here $\rho(|\tilde{A}_1|) \approx 0.9097 < 1$, $\rho(|\tilde{A}_2|) \approx 0.9106 < 1$, $\rho(|\tilde{A}_3|) \approx 0.9104 < 1$, and $\rho(|\tilde{A}_4|) \approx 0.9099 < 1$. Thus this switched network is intrinsically stable. Therefore, using Main Result 3 we have been able to efficiently show that this system is stable for arbitrarily large delay bounds $L$.

It is worth emphasizing that while each of the examples in this section considers linear switched networks, the analysis applies directly to nonlinear switched networks as well, once the Lipschitz set of the various nonlinear mappings in $M_0$ is known.

6. Analytical and Computational Considerations

We now summarize how these results can be used to analyze dynamical systems with a network structure, that is, a dynamical network with either constant time-delays $(F_D, X_L)$ or time-varying time-delays with instances given by $(\{F_{D(k)}\}, X_L)$, using the following steps:

Step 1: Consider the simpler undelayed version of the network $(F, X)$, where all interactions occur instantaneously. For a system given by Equation (10), consider the minimally delayed version of the system given by Equation (11).

Step 2: Find a Lipschitz matrix $A$ of the network by Equation (2) if the mapping $F$ is piecewise differentiable and $X = \mathbb{R}^n$; otherwise, directly determine the Lipschitz constants $A = [a_{ij}]$ by use of Definition 2. Recall that there are infinitely many Lipschitz matrices $A$ for a given dynamical network. Ideally we would like to find one that minimizes the spectral radius $\rho(A)$, as this improves our estimate of the convergence rate of the system to its unique equilibrium if $\rho(A) < 1$. However, in certain cases it may simplify the analysis considerably to merely find a bound for each entry of the Lipschitz matrix, specifically a bound that shows $\rho(A) < 1$ for some Lipschitz matrix $A$.

Step 3: The spectral radius $\rho(A)$ can be computed efficiently in $O(mn)$ time by use of the power method, where $m$ is the number of nonzero entries in $A$ [11].
As soon as a single Lipschitz matrix $A$ of $(F, X)$ satisfies $\rho(A) < 1$, even if this $A$ does not have minimal spectral radius, the results of Theorem 2 and Main Result 2 apply. These results guarantee that all delayed versions of $(F, X)$ are stable, even if the delays vary in time, so long as the magnitude of these delays is eventually bounded by some $L < \infty$. Moreover, the convergence rate of the delayed system is given by $\rho(A_L)$, where $A_L$ is defined in Main Result 2. Since $A_L$ is sparse with $m + nL$ nonzero entries, $\rho(A_L)$ can also be computed in $O(mnL + n^2L^2)$ time using the power method.

7. Conclusion

The method described in this paper for determining whether a network is stable or can be destabilized by time-delays has a number of advantages over other methods. First, one need not consider the delayed system itself but rather the simpler undelayed, and therefore lower-dimensional, version of the system. Second, the methods described here, at least for networks, require only the computation and spectral analysis of a single matrix rather than the use of Lyapunov-type methods, linear matrix inequalities, or semidefinite programming. Hence, very large systems can be analyzed using this method, provided that their Lipschitz matrix can be computed efficiently.

Moreover, if a system (process) can be shown (designed) to be intrinsically stable, there is no need to formally include delays in the system (model). The reason is that delays do not change the qualitative dynamics of the system, and the system will reach the same asymptotic state whether or not its delays are included. As has been shown, this is not the case for general dynamical networks.

One question that remains open is whether, for a system that is not intrinsically stable, there always exists a time-delayed version of the system that is unstable.
Another is that certain systems may be only locally intrinsically stable, meaning that delaying certain network interactions may have no effect on the network's stability while delaying other interactions may change the system's dynamics. Determining, for a given network that is not intrinsically stable, which interactions are which would be important for identifying the parts of the network that are susceptible to this specific type of attack.

Lastly, as mentioned in the introduction, networks are dynamic in two distinct ways. The first is the one considered in this paper, which is the changing state of the network's elements. The second is the evolving structure or topology of the network. As time-varying time-delays affect the network's structure of interactions, these delays also affect the underlying topology of the network. An important implication of this paper is that certain topological changes to a network, e.g. those induced by time-delays, can in general have a destabilizing effect on the network's dynamics. However, if the network's dynamics are intrinsically stable, this class of topological transformations does not affect the network's asymptotic state. It is unknown whether there are other types of intrinsic dynamics, i.e. other stronger forms of dynamics, that are resilient to changes in the network's structure.

8. Appendix

Here we give the proofs of the results found in this paper. We begin by proving the result of Section 3.

8.1. Appendix A

A proof of Proposition 1 is the following.

Proof. Let $x^* = [x^*_1, x^*_2, \dots, x^*_n]^T$ be a fixed point of a dynamical network $(F, X)$. Then by definition $F_i(x^*_1, x^*_2, \dots, x^*_n) = x^*_i$ for all $1 \le i \le n$. Let $D$ be a delay distribution with $\max_{ij} d_{ij} \le L$ for some finite $L > 0$. With the usual ordering of the component spaces of $x \in X_L$, we have
$$F_D(E_L(x^*)) = \begin{bmatrix} (F_D)_{1,0}(x^*_1, \dots, x^*_n) \\ \vdots \\ (F_D)_{n,0}(x^*_1, \dots, x^*_n) \\ (F_D)_{1,1}(x^*_1) \\ \vdots \\ (F_D)_{n,L}(x^*_n) \end{bmatrix} = \begin{bmatrix} F_1(x^*_1, \dots, x^*_n) \\ \vdots \\ F_n(x^*_1, \dots, x^*_n) \\ x^*_1 \\ \vdots \\ x^*_n \end{bmatrix} = \begin{bmatrix} x^*_1 \\ \vdots \\ x^*_n \\ x^*_1 \\ \vdots \\ x^*_n \end{bmatrix} = E_L(x^*).$$
Hence, the extended fixed point $E_L(x^*)$ is a fixed point of $(F_D, X_L)$.

8.2. Appendix B

Next we give a proof of Main Result 1, one of the two main results found in Section 4.

Proof. Let $x, y \in X$ and $k > 0$ be arbitrary. Note that for any $F \in M$ with corresponding $A \in S$ we have, by definition of $A$ being a Lipschitz matrix of $F$, that
$$\begin{bmatrix} d_1(F_1(x), F_1(y)) \\ \vdots \\ d_n(F_n(x), F_n(y)) \end{bmatrix} \preceq A \begin{bmatrix} d_1(x_1, y_1) \\ \vdots \\ d_n(x_n, y_n) \end{bmatrix},$$
where $\preceq$ denotes an element-wise inequality. Since each Lipschitz matrix is nonnegative, multiplying both sides of such an inequality by a Lipschitz matrix preserves it. Thus, for the specific instance $(\{F^{(k)}\}_{k=1}^{\infty}, X)$ given in the hypothesis, we have inductively
$$\begin{bmatrix} d_1(F^k_1(x), F^k_1(y)) \\ \vdots \\ d_n(F^k_n(x), F^k_n(y)) \end{bmatrix} = \begin{bmatrix} d_1(F^{(k)}_1 \circ F^{k-1}(x), F^{(k)}_1 \circ F^{k-1}(y)) \\ \vdots \\ d_n(F^{(k)}_n \circ F^{k-1}(x), F^{(k)}_n \circ F^{k-1}(y)) \end{bmatrix} \preceq A^{(k)} \begin{bmatrix} d_1(F^{k-1}_1(x), F^{k-1}_1(y)) \\ \vdots \\ d_n(F^{k-1}_n(x), F^{k-1}_n(y)) \end{bmatrix} \preceq A^{(k)}A^{(k-1)}\cdots A^{(1)} \begin{bmatrix} d_1(x_1, y_1) \\ \vdots \\ d_n(x_n, y_n) \end{bmatrix}.$$
By the definition of the joint spectral radius, there exists some positive constant $C$ (possibly dependent on $x$ and $y$) such that
$$d_{\max}(F^k(x), F^k(y)) = \left\| \begin{bmatrix} d_1(F^k_1(x), F^k_1(y)) \\ \vdots \\ d_n(F^k_n(x), F^k_n(y)) \end{bmatrix} \right\|_\infty \le \left\| A^{(k)}A^{(k-1)}\cdots A^{(1)} \begin{bmatrix} d_1(x_1, y_1) \\ \vdots \\ d_n(x_n, y_n) \end{bmatrix} \right\|_\infty \le C\rho(S)^k,$$
where $\|x\|_\infty = \max_i |x_i|$. Now, assume $x^*$ is a shared fixed point of $(F, X)$ for all $F \in M$. Then $F^1(x^*) = F^{(1)}(x^*) = x^*$, and if it is assumed that $F^{k-1}(x^*) = x^*$, then it follows immediately that $F^k(x^*) = F^{(k)} \circ F^{k-1}(x^*) = F^{(k)}(x^*) = x^*$.
Hence, by induction, $x^*$ is a fixed point of $(\{F^{(k)}\}_{k=1}^{\infty}, X)$. Thus
$$d_{\max}(F^k(x_0), x^*) = d_{\max}(F^k(x_0), F^k(x^*)) \le C\rho(S)^k,$$
so $\lim_{k\to\infty} F^k(x_0) = x^*$ for all initial conditions $x_0 \in X$.

8.3. Appendix C

Next we prove Main Result 3, dividing the proof of this result into several lemmata. First, we make explicit the effect of time delays on the Lipschitz matrix of a dynamical network.

Lemma 1. (Structure of the Lipschitz Matrix of a Delayed Network) Let $(F, X)$ be a dynamical network with Lipschitz matrix $A = [a_{ij}] \in \mathbb{R}^{n\times n}$, and let $D = [d_{ij}] \in \mathbb{N}^{n\times n}$ be a delay distribution matrix with $\max_{i,j} d_{ij} \le L$. Let $A_D$ be defined in terms of $A$ as
$$A_D = \begin{bmatrix} A_0 & A_1 & \cdots & A_{L-1} & A_L \\ I_n & 0 & \cdots & 0 & 0 \\ 0 & I_n & \cdots & 0 & 0 \\ \vdots & \vdots & \ddots & \vdots & \vdots \\ 0 & 0 & \cdots & I_n & 0 \end{bmatrix} \in \mathbb{R}^{n(L+1)\times n(L+1)},$$
where each $A_\ell \in \mathbb{R}^{n\times n}$ is defined element-wise as $A_\ell = \big[a_{ij}\mathbb{1}_{d_{ij}=\ell}\big]$ for $0 \le \ell \le L$, with the indicator function
$$\mathbb{1}_{d_{ij}=\ell} = \begin{cases} 1 & \text{if } d_{ij} = \ell, \\ 0 & \text{otherwise.} \end{cases}$$
Then $A_D$ is a Lipschitz matrix of $(F_D, X_L)$.

Proof. Recall that for $x \in X_L$, we order the components $x_{i,\ell}$ of $x$ as
$$x = [x_{1,0}, x_{2,0}, \dots, x_{n,0}, x_{1,1}, x_{2,1}, \dots, x_{n,L}]^T,$$
where $x_{i,\ell} \in X_{i,\ell}$ for $i = 1, 2, \dots, n$ and $\ell = 0, 1, \dots, L$. Let $x, y \in X_L$ be given. Then
$$d_{i,0}\big((F_D)_{i,0}(x), (F_D)_{i,0}(y)\big) = d_i\big(F_i(x_{1,d_{i1}}, \dots, x_{n,d_{in}}), F_i(y_{1,d_{i1}}, \dots, y_{n,d_{in}})\big) \le \sum_{j=1}^{n} a_{ij}\, d_j(x_{j,d_{ij}}, y_{j,d_{ij}}) = \sum_{\ell=0}^{L}\sum_{j=1}^{n} a_{ij}\mathbb{1}_{d_{ij}=\ell}\, d_{j,\ell}(x_{j,\ell}, y_{j,\ell}),$$
which matches the first $n$ rows of $A_D$. For $\ell \ge 1$,
$$d_{i,\ell}\big((F_D)_{i,\ell}(x), (F_D)_{i,\ell}(y)\big) = d_{i,\ell-1}(x_{i,\ell-1}, y_{i,\ell-1}),$$
which yields the identity matrices $I_n$ in $A_D$.

The following theorem follows as a direct corollary of Lemma 3.3 of [10] and is needed in our proof of Main Result 3.

Theorem 3.
Let $(F, X)$ be a dynamical network with Lipschitz matrix $A$. Then for any delay distribution $D$, the constant time-delayed dynamical network $(F_D, X_D)$ has the Lipschitz matrix $A_D$ with
(i) $\rho(A) \le \rho(A_D) < 1$ if $\rho(A) < 1$;
(ii) $\rho(A_D) = 1$ if $\rho(A) = 1$; and
(iii) $\rho(A) \ge \rho(A_D) > 1$ if $\rho(A) > 1$.

We thus have the following immediate corollary of Theorem 3 part (i):

Theorem 4. Suppose $D$ and $\hat{D}$ are delay distribution matrices such that $D \preceq \hat{D}$, i.e. the entries of $D$ are less than or equal to the corresponding entries of $\hat{D}$. If $(F, X)$, $(F_D, X_D)$, and $(F_{\hat{D}}, X_{\hat{D}})$ have the corresponding Lipschitz matrices $A$, $A_D$, and $A_{\hat{D}}$, respectively, with $\rho(A) < 1$, then $\rho(A) \le \rho(A_D) \le \rho(A_{\hat{D}}) < 1$.

In other words, if $\rho(A) < 1$, the spectral radius of the network is monotonic with respect to the addition of delays.

We now require the following results regarding the joint spectral radius. First we need the following definition and theorem regarding sets of matrices with independent row uncertainties, which originally appear as Equation (3.1) and Theorem 2 in [27], respectively.

Definition 9. (Independent Row Uncertainties) We say that a set of matrices $S \subset \mathbb{R}^{n\times n}$ has independent row uncertainty if $S$ can be expressed as
$$S = \{(a_1, a_2, \dots, a_n)^T \mid a_i \in Q_i,\ 1 \le i \le n\},$$
where the sets $Q_i \subset \mathbb{R}^n$, $1 \le i \le n$, are closed and bounded.

Theorem 5. (Joint Spectral Radius of Nonnegative Matrices with Independent Row Uncertainty) Let $S$ be a set of nonnegative matrices with independent row uncertainty. Then
$$\rho(S) = \max_{A \in S} \rho(A).$$

Furthermore, we will use the equivalence of Definition 7 of the joint spectral radius with the following representation from [32].

Theorem 6.
(Alternate Form of the Joint Spectral Radius) Given a set of matrices $S \subset \mathbb{R}^{n\times n}$, the joint spectral radius $\rho(S)$ is given by
$$\rho(S) = \limsup_{k\to\infty} \max\{\|A\|^{1/k} : A \text{ is a product of length } k \text{ of matrices in } S\}.$$

It follows immediately from Theorem 6 that if $S_1 \subset S_2$, then $\rho(S_1) \le \rho(S_2)$. This allows us to give the following proof of Proposition 2.

Proof. Let $(M, X)$ be a switched network with Lipschitz set $S$. By Theorem 6 we have $\rho(S) \le \rho(RI(S))$, since $S \subset RI(S)$. For $1 \le i \le n$, let $Q_i = \{a_i \mid A \in S\}$ be the set of $i$th rows of all $A \in S$. Then $RI(S)$ may be expressed as
$$RI(S) = \{(a_1, a_2, \dots, a_n)^T \mid a_i \in Q_i,\ 1 \le i \le n\},$$
implying that $RI(S)$ has independent row uncertainty. Since the rows of Lipschitz matrices are nonnegative, the matrices in $RI(S)$ are nonnegative, and thus
$$\rho(S) \le \rho(RI(S)) = \max_{A \in RI(S)} \rho(A)$$
by Theorem 5, as desired.

Lemma 2. (Equality of $A_D$'s) Let $L > 0$ and $1 \le i \le n$ be given. Let the matrices $A^{(1)}, A^{(2)}$ satisfy $a^{(1)}_i = a^{(2)}_i$, and let the matrices $D^{(1)}, D^{(2)}$ satisfy $d^{(1)}_i = d^{(2)}_i$. Then $(A^{(1)}_{D^{(1)}})_i = (A^{(2)}_{D^{(2)}})_i$.

Proof. It suffices to show that $(A^{(1)}_\ell)_i = (A^{(2)}_\ell)_i$, where, given some $A$ and $D$, $A_\ell$ is defined as in Lemma 1. Let $0 \le \ell \le L$ be arbitrary. Then by Lemma 1,
$$(A^{(1)}_\ell)_{ij} = a^{(1)}_{ij}\mathbb{1}_{d^{(1)}_{ij}=\ell} = a^{(2)}_{ij}\mathbb{1}_{d^{(2)}_{ij}=\ell} = (A^{(2)}_\ell)_{ij}$$
for $1 \le j \le n$. Thus $(A^{(1)}_\ell)_i = (A^{(2)}_\ell)_i$, so by Lemma 1, $(A^{(1)}_{D^{(1)}})_i = (A^{(2)}_{D^{(2)}})_i$.

Lemma 3. (Equality of Sets) Let $(M_0, X)$ be a switched network with Lipschitz set $S$. Let $L > 0$ and $\mathcal{D} = \{D \in \mathbb{N}^{n\times n} \mid \max_{ij} d_{ij} \le L\}$. Then
$$RI(\{A_D \mid A \in S, D \in \mathcal{D}\}) = \{A_D \mid A \in RI(S), D \in \mathcal{D}\}.$$

Proof. Let $(A_D)^* \in RI(\{A_D \mid A \in S, D \in \mathcal{D}\})$. Then there exist $(A_D)^{(1)}, \dots, (A_D)^{(n)} \in \{A_D \mid A \in S, D \in \mathcal{D}\}$ such that $(A_D)^*_i = ((A_D)^{(i)})_i$. Furthermore, there exist $A^{(1)}, \dots, A^{(n)} \in S$ and $D^{(1)}, \dots, D^{(n)} \in \mathcal{D}$ such that $(A_D)^{(i)} = A^{(i)}_{D^{(i)}}$.
Let $A^*$ be constructed as $(A^*)_i = a^{(i)}_i$, and let $D^*$ be constructed as $(D^*)_i = d^{(i)}_i$. Then $A^* \in RI(S)$, and since each $D^{(i)}$ satisfies $d_{ij} \le L$, we have $D^* \in \mathcal{D}$. Thus,
$$(A_D)^*_i = ((A_D)^{(i)})_i = (A^{(i)}_{D^{(i)}})_i = (A^*_{D^*})_i \quad \text{for } 1 \le i \le n,$$
where the last equality follows from Lemma 2. Therefore, $(A_D)^* = A^*_{D^*} \in \{A_D \mid A \in RI(S), D \in \mathcal{D}\}$, so
$$RI(\{A_D \mid A \in S, D \in \mathcal{D}\}) \subset \{A_D \mid A \in RI(S), D \in \mathcal{D}\}.$$
Now let $A_D \in \{A_D \mid A \in RI(S), D \in \mathcal{D}\}$. Then there exist $A^{(1)}, \dots, A^{(n)} \in S$ and $D \in \mathcal{D}$ such that $(A_D)_i = ((A^{(i)})_D)_i$. Let $(A_D)^{(i)} = (A^{(i)})_D$. Then $(A_D)^{(i)} \in \{A_D \mid A \in S, D \in \mathcal{D}\}$, so $A_D \in RI(\{A_D \mid A \in S, D \in \mathcal{D}\})$. Hence,
$$\{A_D \mid A \in RI(S), D \in \mathcal{D}\} \subset RI(\{A_D \mid A \in S, D \in \mathcal{D}\}),$$
completing the proof.

We now give the proof of Main Result 3 found in Section 5. Following this, we show that Main Result 2 is a corollary of this result.

Proof. Let $(M_0, X)$ be a switched network with Lipschitz set $S$. Assume $x^*$ is a shared fixed point of $(F, X)$ for all $F \in M_0$ and $\rho(A) < 1$ for all $A \in RI(S)$. Let $L > 0$, $\mathcal{D} = \{D \in \mathbb{N}^{n\times n} \mid \max_{ij} d_{ij} \le L\}$, $M_d = \{F_D \mid F \in M_0, D \in \mathcal{D}\}$, and let $S_d$ be the Lipschitz set of $M_d$. We will show $\rho(A_D) < 1$ for all $A_D \in RI(S_d)$ and invoke Proposition 2. By Lemma 3, we have $RI(S_d) = \{A_D \mid A \in RI(S), D \in \mathcal{D}\}$. Then
$$\max_{A_D \in RI(S_d)} \rho(A_D) = \max_{A \in RI(S)} \max_{D \in \mathcal{D}} \rho(A_D) < 1$$
by the hypothesis and Theorem 3. Now, given some $A \in RI(S)$, by Lemma 1 and repeated application of Theorem 4 we have that $\max_{D \in \mathcal{D}} \rho(A_D) = \rho(A_L)$, where
$$A_L = \begin{bmatrix} 0_{n\times nL} & A \\ I_{nL} & 0_{nL\times n} \end{bmatrix}.$$
Thus by Proposition 2,
$$\rho(S_d) \le \rho(RI(S_d)) = \max_{A_D \in RI(S_d)} \rho(A_D) = \max_{A \in RI(S)} \rho(A_L) < 1.$$
Since $x^*$ is a shared fixed point of $(F, X)$ for all $F \in M_0$, by Proposition 1 $E_L(x^*)$ is a shared fixed point of $(F_D, X_L)$ for all $F_D \in M_d$.
Thus by Main Result 1, $E_L(x^*)$ is a globally attracting fixed point of every instance $(\{F^{(k)}_{D(k)}\}_{k=1}^{\infty}, X_L)$ of $(M_d, X_L)$.

Lastly, we give a proof of Main Result 2.

Proof. Let $M_0$ be the singleton set consisting of $F$. Then the Lipschitz set $S$ of $M_0$ consists only of the matrix $A$, and so trivially satisfies $S = RI(S)$. Thus the hypothesis of Main Result 3 is trivially satisfied, and the result follows.

9. References

[1] Newman, M.E.J. Networks: An Introduction. Oxford University Press, Oxford (2010).
[2] an der Heiden, U. Delays in physiological systems. J. Mathematical Biology 8: 345 (1979). https://doi.org/10.1007/BF00275831
[3] Nishikawa, Takashi, Ferenc Molnar, and Adilson E. Motter. "Stability Landscape of Power-Grid Synchronization." IFAC-PapersOnLine 48, no. 18 (2015): 1-6. https://www-sciencedirect-com.erl.lib.byu.edu/science/article/pii/S2405896315022594
[4] Hasty, Jeff, David McMillen, Farren Isaacs, and James J. Collins. "Computational studies of gene regulatory networks: in numero molecular biology." Nature Reviews Genetics 2, pp. 268-279 (2001). https://doi.org/10.1038/35066056
[5] Sipahi, R., S. Niculescu, Chaouki T. Abdallah, W. Michiels, and Keqin Gu. Stability and Stabilization of Systems with Time Delay. IEEE Control Systems, Feb. 2011, 38.
[6] H. Logemann and S. Townley, "The effect of small delays in the feedback loop on the stability of neutral systems," Syst. Contr. Lett., vol. 27, pp. 267-274, 1996.
[7] W. Michiels, K. Engelborghs, D. Roose, and D. Dochain, "Sensitivity to infinitesimal delays in neutral equations," SIAM J. Contr. Optim., vol. 40, pp. 1134-1158, 2002.
[8] V. Peddinti, D. Povey, and S. Khudanpur, "A time delay neural network architecture for efficient modeling of long temporal contexts," INTERSPEECH, 2015.
[9] Webb, Benjamin and Leonid Bunimovich, "Restrictions and Stability of Time-Delayed Dynamical Networks," Nonlinearity 26(8), DOI: 10.1088/0951-7715/26/8/2131, 2012.
[10] Webb, Benjamin and Leonid Bunimovich. Isospectral Transformations: A New Approach to Analyzing Multidimensional Systems and Networks. New York, NY: Springer, 2014.
[11] Trefethen, L.N., Bau, D., 1998. Numerical Linear Algebra. Philadelphia: Series in Applied Mathematics, Vol. 11. SIAM.
[12] Zhao XQ. (2017) A Population Model with Periodic Delay. In: Dynamical Systems in Population Biology. CMS Books in Mathematics (Ouvrages de mathematiques de la SMC). Springer, Cham.
[13] Annabell Berger, Andreas Gebhardt, Matthias Muller-Hannemann, Martin Ostrowski. Stochastic Delay Prediction in Large Train Networks. In: 11th Workshop on Algorithmic Approaches for Transportation Modelling, Optimization, and Systems, pp. 100-111. Schloss Dagstuhl–Leibniz-Zentrum fuer Informatik, 2011, http://drops.dagstuhl.de/opus/volltexte/2011/3270
[14] Stojanovic, Sreten B., Dragutin L. J. Debeljkovic, and Nebojsa Dimitrijevic. Stability of Discrete-Time Systems with Time-Varying Delay: Delay Decomposition Approach. International Journal of Computers Communications and Control 7, no. 4 (Sep 16, 2014): 776.
[15] E.K. Boukas, Discrete-time systems with time-varying time delay: stability and stabilizability, Mathematical Problems in Engineering, Article ID 42489:1-10, 2006.
[16] X.G. Liu, R.R. Martin, M. Wu and M.L. Tang, Delay-dependent robust stabilization of discrete-time systems with time-varying delay, IEEE Proc.: Control Theory and Applications, 153(6): 689-702, 2006.
[17] V. Leite and M. Miranda, Robust Stabilization of Discrete-Time Systems with Time-Varying Delay: An LMI Approach, Mathematical Problems in Engineering, 2008: Article ID 876509, 15 pages, 2008.
[18] K.F. Chen and I-K Fong, Stability of discrete-time uncertain systems with a time-varying state delay, Proc. IMechE, Part I: J. Systems and Control Engineering, 222: 493-500, 2008.
[19] Chen, S., Zhao, W., Xu, Y. New criteria for globally exponential stability of delayed Cohen-Grossberg neural network. Math. Comput. Simul. 79, 1527-1543 (2009).
[20] Tao, L., Ting, W., Shumin, F. Stability analysis on discrete-time Cohen-Grossberg neural networks with bounded distributed delay. In: Proceedings of the 30th Chinese Control Conference, Yantai, 22-24 July (2011).
[21] Cohen, M., Grossberg, S. Absolute stability and global pattern formation and parallel memory storage by competitive neural networks. IEEE Trans. Syst. Man Cybern. SMC-13, 815-821 (1983).
[22] Cao, J. Global asymptotic stability of delayed bi-directional associative memory neural networks. Appl. Math. Comput. 142(2-3), 333-339 (2003).
[23] Cheng, C.-Y., Lin, K.-H., Shih, C.-W. Multistability in recurrent neural networks. SIAM J. Appl. Math. 66(4), 1301-1320 (2006).
[24] Wang, L., Dai, G.-Z. Global stability of virus spreading in complex heterogeneous networks. SIAM J. Appl. Math. 68(5), 1495-1502 (2008).
[25] Alpcan, T., Basar, T. A globally stable adaptive congestion control scheme for internet-style networks with delay. IEEE/ACM Trans. Netw. 13, 6 (2005).
[26] Webb, Benjamin and Leonid Bunimovich. Intrinsic Stability, Time Delays and Transformations of Dynamical Networks. Advances in Dynamics, Patterns, Cognition. Springer International Publishing, 2017.
[27] Vincent D Blondel and Yurii Nesterov. Polynomial-Time Computation of the Joint Spectral Radius for some Sets of Nonnegative Matrices. SIAM Journal on Matrix Analysis and Applications 31, no. 3 (Sep 1, 2009): 865-876.
[28] Zhang, Xian-Ming and Qing-Long Han. Abel Lemma-Based Finite-Sum Inequality and its Application to Stability Analysis for Linear Discrete Time-Delay Systems. Automatica 57, (Jul, 2015): 199-202, https://www.sciencedirect.com/science/article/pii/S000510981500179X.
[29] Li, Xu, Rui Wang, and Xudong Zhao. Stability of Discrete-Time Systems with Time-Varying Delay Based on Switching Technique. Journal of the Franklin Institute 355, no. 13 (Sep, 2018): 6026-6044, https://www.sciencedirect.com/science/article/pii/S0016003218303910.
[30] Fridman, Emilia. Introduction to Time-Delay Systems: Analysis and Control, Section 6. Systems and Control: Foundations and Applications. 2014th ed. Cham: Birkhauser Boston, 2014.
[31] Zhang, Wen-An and Yu, Li. Stability analysis for discrete-time switched time-delay systems. Automatica 45, (May, 2009): 2265-2271.
[32] G.-C. Rota and W. G. Strang. A note on the joint spectral radius. Indag. Math., 22 (1960), pp. 379-381.
[33] Tsitsiklis, J.N. and Blondel, V.D. "The Lyapunov exponent and joint spectral radius of pairs of matrices are hard-when not impossible-to compute and to approximate." Math. Control Signal Systems (1997) 10: 31. https://doi.org/10.1007/BF01219774
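The spectral-radius comparison at the heart of the proof of Main Result 3 can be checked numerically for small examples. The following is a minimal NumPy sketch, not the authors' code, assuming a finite Lipschitz set $S$ of nonnegative matrices. It enumerates $RI(S)$ by brute force (row $i$ taken from any matrix in $S$), builds the block matrix $A_L$ defined in that proof, and compares the two maxima. The matrices in `S`, the delay bound `L`, and the helper names `spectral_radius`, `restricted_intertwinings`, and `delayed_block_matrix` are hypothetical illustrations. The sketch does not construct the individual $A_D$ or reproduce Lemma 1 and Theorem 3.

```python
import itertools

import numpy as np


def spectral_radius(M: np.ndarray) -> float:
    """Largest modulus among the eigenvalues of M."""
    return float(np.max(np.abs(np.linalg.eigvals(M))))


def restricted_intertwinings(S):
    """Yield every matrix whose i-th row is the i-th row of some matrix in S.

    Brute-force enumeration of RI(S): it produces len(S)**n matrices,
    so it is only practical for small illustrative examples.
    """
    n = S[0].shape[0]
    for choice in itertools.product(range(len(S)), repeat=n):
        yield np.array([S[c][i] for i, c in enumerate(choice)])


def delayed_block_matrix(A: np.ndarray, L: int) -> np.ndarray:
    """Return A_L = [[0_{n x nL}, A], [I_{nL x nL}, 0_{nL x n}]].

    A_L is the one-step matrix of x_{k+1} = A x_{k-L} acting on the
    stacked state (x_k, x_{k-1}, ..., x_{k-L}).
    """
    n = A.shape[0]
    size = n * (L + 1)
    A_L = np.zeros((size, size))
    A_L[:n, n * L:] = A              # top-right block: A
    A_L[n:, :n * L] = np.eye(n * L)  # bottom-left block: shift the history down
    return A_L


# Two hypothetical nonnegative matrices standing in for a Lipschitz set S.
S = [np.array([[0.5, 0.2], [0.1, 0.3]]),
     np.array([[0.1, 0.6], [0.4, 0.2]])]
L = 3  # hypothetical maximum delay

rho_RI = max(spectral_radius(A) for A in restricted_intertwinings(S))
rho_RI_delayed = max(spectral_radius(delayed_block_matrix(A, L))
                     for A in restricted_intertwinings(S))

print(f"max rho(A) over RI(S):   {rho_RI:.4f}")
print(f"max rho(A_L) over RI(S): {rho_RI_delayed:.4f}")
```

Every eigenvalue $\lambda$ of $A_L$ satisfies $\lambda^{L+1} = \mu$ for some eigenvalue $\mu$ of $A$, so $\rho(A_L) = \rho(A)^{1/(L+1)}$ and the two maxima lie below one together. Because the enumeration of $RI(S)$ grows as $|S|^n$, this check is meant only as an illustration; it is not a practical test for large networks.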
