Bidirectional skip-frame prediction for video anomaly detection with intra-domain disparity-driven attention

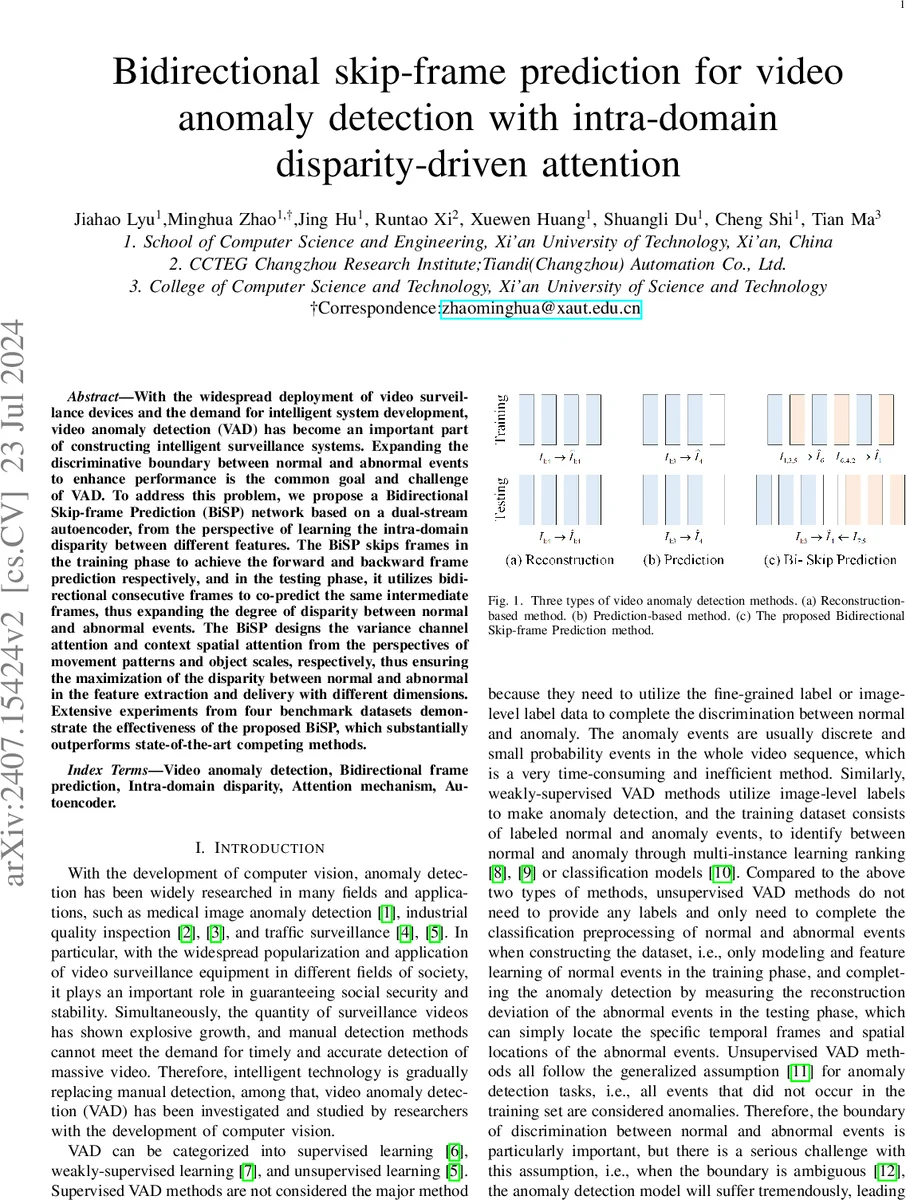

With the widespread deployment of video surveillance devices and the demand for intelligent system development, video anomaly detection (VAD) has become an important part of constructing intelligent surveillance systems. Expanding the discriminative boundary between normal and abnormal events to enhance performance is the common goal and challenge of VAD. To address this problem, we propose a Bidirectional Skip-frame Prediction (BiSP) network based on a dual-stream autoencoder, from the perspective of learning the intra-domain disparity between different features. The BiSP skips frames in the training phase to achieve the forward and backward frame prediction respectively, and in the testing phase, it utilizes bidirectional consecutive frames to co-predict the same intermediate frames, thus expanding the degree of disparity between normal and abnormal events. The BiSP designs the variance channel attention and context spatial attention from the perspectives of movement patterns and object scales, respectively, thus ensuring the maximization of the disparity between normal and abnormal in the feature extraction and delivery with different dimensions. Extensive experiments from four benchmark datasets demonstrate the effectiveness of the proposed BiSP, which substantially outperforms state-of-the-art competing methods.

💡 Research Summary

**

The paper tackles the persistent challenge in video anomaly detection (VAD) of insufficient separation between normal and abnormal events within the same domain. To enlarge this intra‑domain disparity, the authors propose a Bidirectional Skip‑frame Prediction (BiSP) framework built on a dual‑stream autoencoder (AE) architecture.

Core Idea – Skip‑frame Strategy

During training, the video sequence is split into two interleaved streams: forward skip frames (e.g., I₁, I₃, I₅) and backward skip frames (e.g., I₆, I₄, I₂). Each stream feeds an independent AE (identical in structure but with separate parameters) that predicts a future frame at the opposite end of the sequence (forward AE predicts I₆, backward AE predicts I₁). This forces the networks to learn long‑range temporal dynamics rather than merely short‑term continuity.

In the testing phase, the model receives consecutive frames from both ends of a clip and jointly predicts the same intermediate frame (e.g., I₃). For normal footage the two predictions are almost identical, yielding a low L₂ error; for anomalous footage the predictions diverge sharply, producing a large error. By deliberately using different input strategies in training and testing, BiSP amplifies the error gap between normal and abnormal events, thereby expanding the discriminative boundary.

Attention Mechanisms

To further boost the model’s ability to distinguish subtle anomalies, two complementary attention modules are introduced:

-

Variance Channel Attention (VarCA) – operates on the channel dimension. It computes the statistical variance of each feature map; channels with higher variance (indicating stronger motion changes) receive larger weights, while static channels are suppressed. This parallel‑structured module is inserted into both encoder and decoder, emphasizing motion‑related cues.

-

Context Spatial Attention (ConSA) – works on the spatial dimension. It first aggregates global context via average pooling, then generates a spatial attention map that modulates feature activations according to object scale and location. Implemented in a serial fashion within the decoder, ConSA enriches the representation of objects of varying sizes, helping the network detect small or distant anomalies.

Network Architecture & Losses

Each AE comprises an encoder that mixes 2‑D convolutions (for spatial abstraction) with occasional 3‑D convolutions (to capture short‑term temporal cues), followed by a decoder that reconstructs the predicted frame. The overall loss combines:

- Forward prediction loss L_fp = ‖Ĩ_f – I_f‖₂²

- Backward prediction loss L_bp = ‖Ĩ_b – I_b‖₂²

- Consistency loss that penalizes the discrepancy between the two predictions of the same intermediate frame during testing.

The dual‑stream design ensures that forward and backward temporal patterns are learned independently, while the shared attention modules provide a unified way to highlight discriminative features.

Experimental Validation

BiSP is evaluated on four widely used surveillance datasets: Avenue, ShanghaiTech, UCSD Ped2, and Subway. Using standard metrics (AUC, EER, AP), BiSP consistently outperforms state‑of‑the‑art methods, improving AUC by 3–7 percentage points across datasets. Notably, on the challenging ShanghaiTech dataset, which contains diverse scenes and lighting conditions, BiSP achieves a 7.5% gain, demonstrating the effectiveness of VarCA and ConSA in handling varied motion patterns and object scales.

Computationally, the model relies mainly on 2‑D convolutions, keeping the parameter count modest and enabling real‑time inference (~30 FPS) on a single GPU, a significant advantage over many 3‑D‑heavy approaches.

Limitations & Future Directions

- The skip interval is fixed (typically two frames), which may limit sensitivity to very fast motions. Adaptive or learnable skip intervals could further improve robustness.

- Maintaining two separate AEs and two attention modules increases memory consumption, posing challenges for high‑resolution video streams. Model compression or lightweight Transformer‑based alternatives could alleviate this.

- Some complex anomalies involving simultaneous appearance and illumination changes still cause false positives; integrating multimodal cues (audio, metadata) or employing self‑supervised pre‑training may address these cases.

Conclusion

BiSP introduces a novel combination of bidirectional skip‑frame prediction and dual attention mechanisms to deliberately enlarge the intra‑domain disparity between normal and abnormal events. The approach yields superior detection accuracy while preserving real‑time performance, marking a meaningful step forward in unsupervised video anomaly detection. Future work will explore dynamic skip strategies, memory‑efficient designs, and multimodal extensions to further strengthen the framework.

Comments & Academic Discussion

Loading comments...

Leave a Comment