Adversarial Example Generation using Evolutionary Multi-objective Optimization

This paper proposes Evolutionary Multi-objective Optimization (EMO)-based Adversarial Example (AE) design method that performs under black-box setting. Previous gradient-based methods produce AEs by changing all pixels of a target image, while previo…

Authors: Takahiro Suzuki, Shingo Takeshita, Satoshi Ono

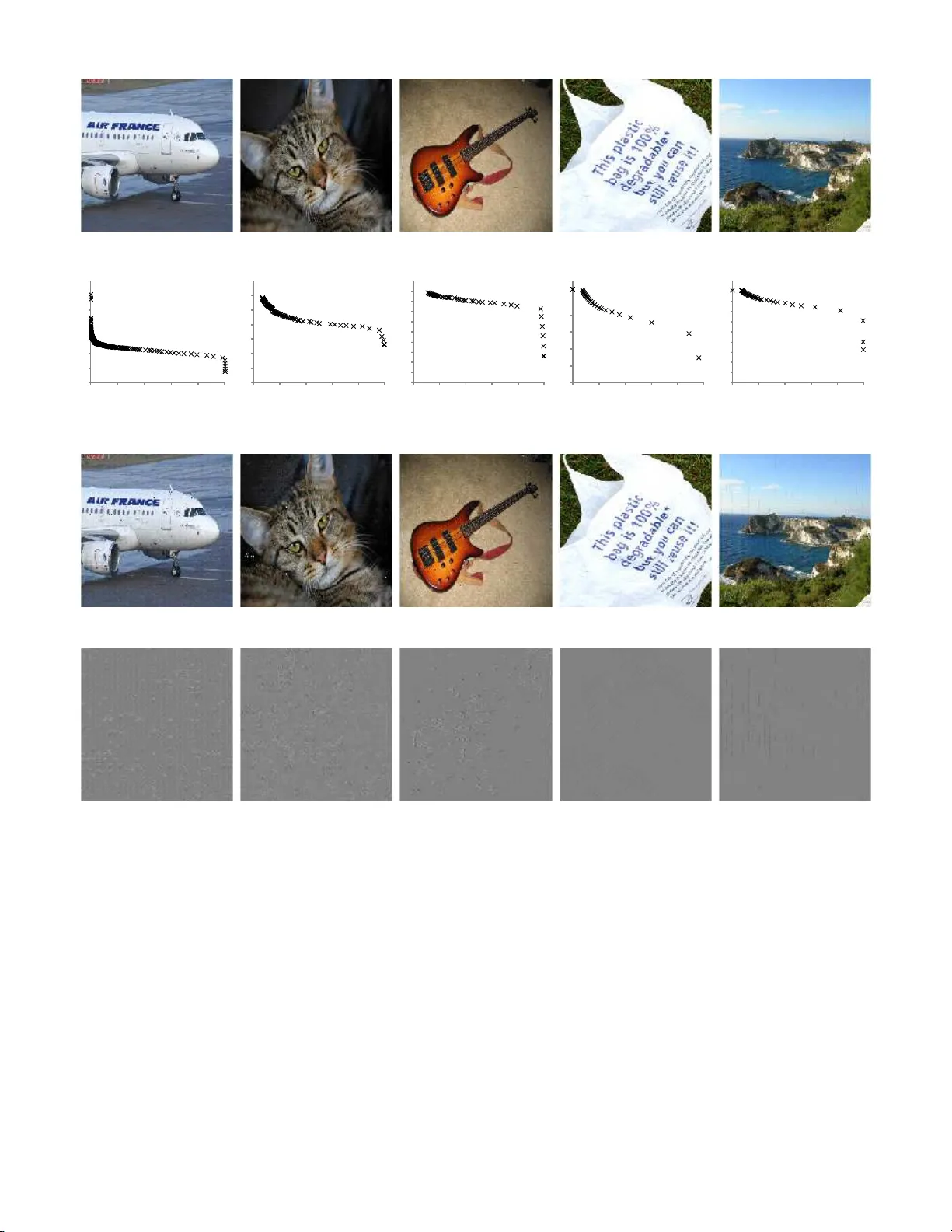

Takahiro Suzuki, Shingo Takeshita, Satoshi Ono
Department of Information Science and Biomedical Engineering, Graduate School of Science and Engineering, Kagoshima University, Kagoshima, Japan
sc115029@ibe.kagoshima-u.ac.jp, sc113035@ibe.kagoshima-u.ac.jp, ono@ibe.kagoshima-u.ac.jp

Abstract: This paper proposes an Evolutionary Multi-objective Optimization (EMO)-based Adversarial Example (AE) design method that operates under a black-box setting. Previous gradient-based methods produce AEs by changing all pixels of a target image, while the previous EC-based method changes a small number of pixels to produce AEs. Thanks to EMO's property of population-based search, the proposed method produces various types of AEs, including ones lying between the AEs generated by the previous two approaches, which helps to understand the characteristics of a target model or to discover unknown attack patterns. Experimental results showed the potential of the proposed method, e.g., it can generate robust AEs and, with the aid of DCT-based perturbation pattern generation, AEs for high-resolution images.

Index Terms: component, formatting, style, styling, insert

I. INTRODUCTION

Over the past several years, deep learning has emerged as a "go-to" technique for classification. In particular, object image recognition performance has been significantly improved due to the rapid progress of Convolutional Neural Networks (CNNs) [1].
On the other hand, recent studies revealed that Neural Network (NN)-based classifiers are susceptible to adversarial examples (AEs) [2]–[12] that an attacker has intentionally designed to cause the model to make a mistake. AEs involve small changes $\rho$ to an original image $I$ and fool the target NN as follows:

$$C(I + \rho) \neq C(I) \tag{1}$$

where $C(\cdot)$ denotes the classification result. Such AEs can be easily generated using inside information of a target NN such as the gradient of the loss function [2].

From a practical standpoint, there are many cases in which the internal information of target models is not available, e.g., commercial or proprietary software and services. Therefore, some studies attempted to attack NNs under a black-box setting where the attacker cannot access the gradient of the classifier [3]–[7].

Under the black-box setting, Evolutionary Computation (EC) is expected to play an important role. In fact, one previous study [7] employed Differential Evolution (DE) [13]. That work, which uses EC directly, changed one or a very small number of pixels because the method must determine both which pixels to perturb and how strongly to perturb them. In contrast, methods under a white-box setting, such as the gradient-based method, are likely to change many pixels of a target image. It is meaningful to comprehensively generate various AEs, including ones lying between the AEs generated by EC and gradient-based methods, from the viewpoint of both creating unknown kinds of AEs and understanding the characteristics of NNs more deeply.

Moreover, generating AEs essentially involves more than one objective function with a trade-off relationship, such as classification accuracy versus perturbation amount.
Most AE design methods combine them into a single objective function by linear combination, and, to the best of our knowledge, no study has attempted to generate AEs without integrating the objective functions, i.e., in a multi-objective optimization (MOO) manner. Therefore, this study proposes an evolutionary multi-objective optimization (EMO) approach for AE generation. Thanks to the population-based search characteristics of EMO, the proposed method can generate AEs under a black-box setting. In addition, taking advantage of the population-based search of EMO, the proposed method generates various AEs such as AEs robust against image transformation. Experimental results on the representative datasets CIFAR-10 and ImageNet-1000 have shown that the proposed method can generate various AEs lying between those of the previous EC-based and gradient-based methods, and an attempt has been made to generate AEs robust against image rotation.

We summarize the contributions of this paper as follows:

• The first attempt to design AEs using EMO: This allows flexible design of objective functions and constraints; non-differentiable, multimodal, and noisy functions can be used.
• Robust AE generation under black-box setting: Previous work on designing robust AEs optimizes the expected classification probability [8], [9]; however, considering only the averaged accuracy might generate AEs that are, in rare cases, still classified into their correct class. Taking advantage of EMO, the proposed method additionally employs the deviation of the accuracy as a second objective function, which makes it possible to generate more robust AEs.
• DCT-based method: To generate AEs for high-resolution images, the proposed method designs perturbation patterns on frequency coefficients obtained by the two-dimensional Discrete Cosine Transform (2D-DCT) [14], which reduces the dimension of the design variable space.

II. RELATED WORK

The most popular approach to generating adversarial examples is to use the gradient of the loss function of a target classifier under a white-box setting [2]. It generates AEs by simply adding a small perturbation to all pixels of a target image according to the gradient of the loss function. Recently, a universal perturbation that is applicable to arbitrary images and can lead NNs to misclassify them was proposed [10]. Interestingly, the perturbation pattern works well not only for the NN used to design the pattern but also for other NNs. However, because it is a universal pattern, once the pattern is known, it can be easily detected.

From a practical viewpoint, AE design methods that work under a black-box setting are desirable; such methods make it possible to analyze the characteristics of consumer or proprietary software and services. In addition, AEs of different types from those generated by gradient-based methods help further analysis of target NN models. Su et al. proposed the one-pixel attack method [7] using Differential Evolution [13], which revealed the fragility of the classifiers. Nina et al. proposed a local search method that approximates the network gradient [5]. The above methods [5], [7] change a small number of pixels of a target image to fool the target classifier, whereas the gradient-based method changes all pixels. These two approaches produce different types of AEs.

Discovering various AEs is useful from the viewpoint of both understanding the characteristics of NNs more deeply and discovering unknown attack patterns. This is our motivation for introducing multi-objective optimization into AE design.

III. THE PROPOSED METHOD

A. Key Idea

1. Formulating an adversarial pattern design problem as multi-objective optimization: The AE design problem essentially consists of more than one cost function that compete with each other, such as accuracy versus visibility. Therefore, it is natural to solve the problem without integrating them into a single objective function, in accordance with multi-objective optimization. The proposed method does not require any parameters to integrate the functions, and allows non-differentiable and/or non-convex objective functions to be considered. For instance, introducing two separate functions for the number of perturbed pixels ($l_0$ norm) and the strength of the perturbation ($l_1$ norm) clarifies the trade-off relationship between them. Decision makers can choose the most balanced AE from the Pareto optimal solutions while considering target image properties.

2. Applying an Evolutionary Multi-objective Optimization (EMO) algorithm: The proposed method adopts an EMO algorithm to perform MOO. Compared to the approach that trains substitute models [6], the proposed method does not need to train a substitute model and is applicable to models other than NNs. In addition, thanks to EMO's essential property of population-based search, the proposed method comprehensively produces non-dominated solutions. Although there is no guarantee that the proposed method produces better AEs than previous work, finding various AEs with the proposed method helps to understand the characteristics of a target NN model more deeply or to discover unknown attack patterns. Furthermore, EMO does not require the objective function to be differentiable, smooth, or unimodal, so various types of objective functions and constraints can be used in the proposed method.
For instance, the proposed method can produce AEs that are more robust against image transformation by adding the standard deviation of the classification accuracy to the objective functions in addition to the expected accuracy.

3. Black-box approach: Taking advantage of one of EMO's properties, i.e., population-based search, the proposed method operates under a black-box setting [3], [5], [6], which means that it does not require gradient information of a target model; classification results consisting of the assigned labels and the corresponding confidences are sufficient.¹ Therefore, the proposed method is applicable to proprietary systems and models other than NNs.

4. Using Discrete Cosine Transform (DCT) to perturb images: A naive formulation of the AE design problem enlarges the problem size. Therefore, we propose a DCT-based perturbation generation method to suppress the increase in the number of dimensions. The concurrent work [11] also proposed a DCT-based perturbation pattern design method; however, that method optimizes a single objective function.

B. Formulation

1) Design Variables: In the proposed method, there are two ways to determine how to perturb an input image: the direct method and the DCT-based method.

• Direct method: In the direct method, the pixel intensities of input image $I$ are perturbed directly based on a solution candidate $x$. Thus, $x$ comprises variables $x^{(Dir)}_{u,v,c}$ as follows:

$$x = \left\{ x^{(Dir)}_{u,v,c} \right\}_{(u,v,c) \in I} \tag{2}$$

where $(u, v)$ denotes the position of an $N_w \times N_w$ pixel block in $I$, and $c$ denotes the color component. The resolution of $I$ is $W_I \times H_I$ pixels, and $I$ is decomposed into $\lceil W_I / N_w \rceil \times \lceil H_I / N_w \rceil$ blocks.

• DCT-based method: When generating adversarial examples for high-resolution images, the direct method requires many variables and the problem becomes huge.
Therefore, this study proposes an alternative method using the two-dimensional Discrete Cosine Transform (2D-DCT), which is called the DCT-based method. The DCT-based method involves two types of variables as follows:

$$x = \chi \cup x^{(DCT)} \tag{3}$$

$$\chi = \left\{ \chi^{(PS)}_{u,v} \right\}_{(u,v) \in I} \tag{4}$$

$$x^{(DCT)} = \left\{ x^{(DCT)}_{r} \right\}_{1 \le r \le N_{AP}} \tag{5}$$

$$x^{(DCT)}_{r} = \left\{ x^{(DCT)}_{p,q,r} \right\}_{1 \le p \le N_{DCT},\; 1 \le q \le N_{DCT}} \tag{6}$$

where $x^{(DCT)}_{p,q,r}$ represents the alteration pattern of the 2D-DCT coefficient of subband $(p, q)$. To adaptively perturb input image $I$ according to image block features, the DCT-based method prepares $N_{AP}$ alteration patterns, and the suffix $r$ represents the pattern index. $\chi^{(PS)}_{u,v}$ determines which of the generated 2D-DCT coefficient alteration patterns is applied to image block $(u, v)$ in input image $I$, i.e., $\chi^{(PS)}_{u,v} \in \{0, 1, \ldots, N_{AP}\}$. If $\chi^{(PS)}_{u,v} > 0$, the corresponding alteration pattern is applied to block $(u, v)$; otherwise, the frequency coefficients of the block do not change.

¹ Utilizing the high degree of freedom of the proposed method in the design of the objective functions, even the confidence is unnecessary.

2) Objective Functions: In this paper, the following three scenarios are considered to demonstrate the advantage of the proposed EMO-based approach.

• Accuracy versus perturbation amount scenario: This is the fundamental scenario of multi-objective adversarial example generation, including the following two objective functions:

$$\begin{aligned} \text{minimize} \quad & f_1 = P(C(I + \rho) = C(I)) \\ \text{minimize} \quad & f_2 = \|\rho\|_e \end{aligned} \tag{7}$$

The first objective function $f_1$ indicates the probability that a target classifier classifies a perturbed image $I + \rho$ into the correct class $C(I)$, where $C(\cdot)$ denotes a classification result. The second objective function indicates the amount of the perturbation $\rho$, which can basically be calculated by the $l_e$ norm of $\rho$.
This scenario clarifies the trade-off relationship between the classification accuracy and the perturbation amount while generating various perturbation patterns.

• $l_0$ versus $l_1$ norms scenario: The gradient-based method generates AEs by adding a small perturbation to all pixels of a target image, and the previous EC-based work [7] generates AEs by perturbing one or a relatively small number of pixels. In contrast, the proposed method can comprehensively generate various AEs with different numbers of perturbed pixels, lying between the AEs generated by gradient-based and EC-based methods. To this end, the number of perturbed pixels is employed as one of the objective functions. The following are example objective functions:

$$\begin{aligned} \text{minimize} \quad & f_1(x) = \|\rho\|_0 \\ \text{minimize} \quad & f_2(x) = \|\rho\|_1 \\ \text{subject to} \quad & P(C(I + \rho) = C(I)) < T_{acc} \end{aligned} \tag{8}$$

where $\|\rho\|_0$ denotes the number of pixels whose values are not zero in $\rho$, and $T_{acc}$ is a threshold.

• Robust AE generation scenario: Robust optimization is one of the types of optimization that take advantage of the characteristics of evolutionary computation [15], [16]. Previous work was based on a white-box setting [8], [9], [12] and minimized only the averaged (or expected) classification accuracy [8], [9]; however, this might produce AEs that are correctly classified under certain conditions because such rare cases cannot be represented by the averaged value. Adding the deviation to the objective functions prevents such exceptional failures of misclassification, resulting in AEs that are more robust against image transformation.

$$\begin{aligned} \text{minimize} \quad & f_1(x) = E\big(P(C(\tau_i(I + \rho)) = C(I))\big) \\ \text{minimize} \quad & f_2(x) = \sigma\big(P(C(\tau_i(I + \rho)) = C(I))\big) \\ \text{minimize} \quad & f_3(x) = \|\rho\|_e \end{aligned} \tag{9}$$

where $E(\cdot)$ and $\sigma(\cdot)$ are the expected value and standard deviation of the classification accuracy, and $\tau_i(\cdot)$ denotes an image transformation.
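The scenarios above differ mainly in which quantities are handed to the optimizer. The following minimal Python sketch computes the objective values of Eqs. (8) and (9) from a flattened perturbation and a list of correct-class probabilities, and then filters non-dominated objective vectors. The function names, the use of the population standard deviation for $\sigma(\cdot)$, and the choice of the $l_1$ norm as the perturbation size in Eq. (9) are assumptions made for illustration; the classifier itself is not modeled and its outputs are supplied by the caller.

```python
from statistics import mean, pstdev


def l0_norm(rho):
    """Number of non-zero entries in the perturbation (f1 in Eq. (8))."""
    return sum(1 for v in rho if v != 0)


def l1_norm(rho):
    """Sum of absolute perturbation values (f2 in Eq. (8))."""
    return sum(abs(v) for v in rho)


def robust_objectives(probs_correct, rho):
    """Objective vector in the spirit of Eq. (9).

    probs_correct holds P(C(tau_i(I + rho)) = C(I)) for each transformation
    tau_i, obtained by querying the black-box classifier (caller's job).
    Assumption: population standard deviation and l1 norm.
    """
    return (mean(probs_correct), pstdev(probs_correct), l1_norm(rho))


def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives minimized)."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def non_dominated(points):
    """Keep the objective vectors that no other vector dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


if __name__ == "__main__":
    rho = [0, 3, 0, -2]
    print(l0_norm(rho), l1_norm(rho))  # 2 5
    # (3, 3) is dominated by (2, 2); the other three are mutually non-dominated.
    print(non_dominated([(1, 5), (2, 2), (3, 3), (5, 1)]))
```

Because the objectives are plain functions of $\rho$ and of classifier outputs, none of them needs to be differentiable, which is the property the EMO formulation relies on.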
Note that these three scenarios have different purposes from each other but share the need for multi-objective optimization.

C. Process Flow

The proposed algorithm can adopt any evolutionary multi-objective optimization algorithm such as NSGA-II [17] and MOEA/D [18]. Here we explain the process flow of the proposed method taking MOEA/D as an example.

MOEA/D converts the approximation problem of the true Pareto front into a set of single-objective optimization problems. Here, the original multi-objective optimization problem is described as follows:

$$\begin{aligned} \text{minimize} \quad & f(x) = \big(f_1(x), \ldots, f_{N_f}(x)\big) \\ \text{subject to} \quad & x \in \mathcal{F} \end{aligned} \tag{10}$$

There are several models to convert the above problem into scalar optimization problems; for instance, in the Tchebycheff approach, the above problem can be decomposed into the following problems:

$$\begin{aligned} \text{minimize} \quad & g(x \mid \lambda^j, z^*) = \max_{1 \le i \le N_f} \left\{ \lambda^j_i \left| f_i(x) - z^*_i \right| \right\} \\ \text{subject to} \quad & x \in \mathcal{F} \end{aligned} \tag{11}$$

where $\lambda^j = (\lambda^j_1, \ldots, \lambda^j_{N_f})$ are weight vectors with $\lambda^j_i \ge 0$ and $\sum_{i=1}^{N_f} \lambda^j_i = 1$, and $z^*$ is a reference point calculated as follows:

$$z^*_i = \min \{ f_i(x) \mid x \in \mathcal{F} \} \tag{12}$$

By preparing $N_D$ weight vectors and optimizing $N_D$ scalar objective functions, MOEA/D finds various non-dominated solutions in a single optimization run.

The detailed algorithm of the proposed method based on MOEA/D is as follows:

[Step 1] Initialization
[Step 1-1] Determine neighborhood relations for each weight vector $\lambda^i$. By calculating the Euclidean distance between weight vectors, $N_n$ neighboring weight vectors $\{\lambda^k\}$ ($k \in B(i) = \{i_1, \ldots, i_{N_n}\}$) are selected.
[Step 1-2] Generate an initial population. The initial solution candidates $x^1, \ldots, x^{N_p - 1}$ are generated by sampling uniformly at random from $\mathcal{F}$.
The solution whose variable values are all set to 0, which corresponds to no perturbation and would survive on the edge of the Pareto front throughout the optimization, is also added to the initial population.
[Step 1-3] Determine the reference point. The reference point is calculated by Eq. (12).
[Step 2] Selection. The $N_f$ best individuals are selected for the $N_f$ objective functions respectively, and then, by applying tournament selection, the indexes of the subproblems $\mathcal{I}$ are selected ($|\mathcal{I}| = \frac{N}{5} - N_f$).
[Step 3] Population update. The following Steps 3-1 through 3-6 are conducted for each $i \in \mathcal{I}$.
[Step 3-1] Selection of mating and update range. With probability $\delta$, the update range $P$ is limited to $B(i)$; otherwise, $P = \{1, \ldots, N_D\}$.
[Step 3-2] Crossover. Randomly select two indices $r_2$ and $r_3$ from $P$, set $r_1 = i$, and generate a solution $\bar{y}$ whose element $\bar{y}_k$ is calculated by the following equation:

$$\bar{y}_k = \begin{cases} x^{r_1}_k + F \left( x^{r_2}_k - x^{r_3}_k \right) & \text{with probability } CR \\ x^{r_1}_k & \text{with probability } 1 - CR \end{cases} \tag{13}$$

The above equation is an operator proposed in DE, and $CR$ and $F$ are control parameters.
[Step 3-3] Mutation. With probability $p_m$, a polynomial mutation operator [19] is applied to $\bar{y}$ to form a new candidate $y$, i.e., the mutated value $y_k$ is calculated as follows:

$$y_k = \bar{y}_k + \bar{\delta} \Delta_{max} \tag{14}$$

where $\Delta_{max}$ represents the maximum permissible perturbation of the parent value $\bar{y}_k$ and $\bar{\delta}$ is calculated as follows:

$$\bar{\delta} = \begin{cases} (2u)^{\frac{1}{n+1}} - 1 & \text{if } u < 0.5 \\ 1 - [2(1 - u)]^{\frac{1}{n+1}} & \text{otherwise} \end{cases} \tag{15}$$

where $u$ is a random number in $[0, 1]$.
[Step 3-4] Evaluation. Evaluate $y$ by generating a perturbation pattern $\rho$. In the direct method, the intensity $\rho_{a,b}$ at position $(a, b)$ of perturbation pattern $\rho$ is directly determined by the variables, i.e.,

$$\rho_{a,b} = x^{(Dir)}_{u,v} \tag{16}$$

where $1 \le a \le W_I$, $1 \le b \le H_I$, $u = \lfloor a / N_w \rfloor$, and $v = \lfloor b / N_w \rfloor$.
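The operators introduced up to this point translate fairly directly into code. The following Python sketch covers the Tchebycheff scalarization of Eq. (11), the DE recombination of Eq. (13), the polynomial mutation of Eqs. (14) and (15), and the direct-method block expansion of Eq. (16). It is a minimal sketch under stated assumptions, not the authors' implementation: all function names are hypothetical, indices are 0-based, a single channel is used, blocks are taken to be $N_w \times N_w$ pixels as in Eq. (2), and mutation is applied per variable with probability $p_m$, which the text does not specify.

```python
import math
import random


def tchebycheff(f, lam, z_star):
    """Eq. (11): g(x | lambda, z*) = max_i lambda_i * |f_i(x) - z*_i|."""
    return max(l * abs(fi - zi) for fi, l, zi in zip(f, lam, z_star))


def de_crossover(x1, x2, x3, F, CR, rng):
    """Eq. (13): per-variable DE recombination around parent x1."""
    return [a + F * (b - c) if rng.random() < CR else a
            for a, b, c in zip(x1, x2, x3)]


def poly_delta(u, n):
    """Eq. (15): polynomial-mutation step for u in [0, 1] and index n."""
    if u < 0.5:
        return (2.0 * u) ** (1.0 / (n + 1)) - 1.0
    return 1.0 - (2.0 * (1.0 - u)) ** (1.0 / (n + 1))


def mutate(y_bar, p_m, delta_max, n, rng):
    """Eq. (14), applied to each variable with probability p_m (assumption)."""
    return [v + poly_delta(rng.random(), n) * delta_max
            if rng.random() < p_m else v
            for v in y_bar]


def num_direct_variables(width, height, n_w, channels):
    """Number of block variables: ceil(W/N_w) * ceil(H/N_w) per channel."""
    return math.ceil(width / n_w) * math.ceil(height / n_w) * channels


def expand_direct(x_blocks, width, height, n_w):
    """Eq. (16), 0-based: rho[b][a] = x_blocks[b // n_w][a // n_w]."""
    rows, cols = math.ceil(height / n_w), math.ceil(width / n_w)
    if len(x_blocks) != rows or any(len(r) != cols for r in x_blocks):
        raise ValueError("x_blocks must have ceil(H/N_w) rows and ceil(W/N_w) columns")
    return [[x_blocks[b // n_w][a // n_w] for a in range(width)]
            for b in range(height)]


if __name__ == "__main__":
    rng = random.Random(0)
    child = de_crossover([1, 2], [3, 5], [1, 1], F=0.5, CR=1.0, rng=rng)
    print(child)  # [2.0, 4.0]
    print(expand_direct([[1, 2], [3, 4]], width=4, height=3, n_w=2))
```

With a 32 × 32 image, three color channels, and $N_w = 1$, `num_direct_variables` gives 3,072, and with a 224 × 224 single-channel image and $N_w = 3$ it gives 5,625, matching the variable counts reported for the direct method in the experiments.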
In the DCT-based method, DCT is applied to input image $I$ and the coefficients of the basis functions $\bar{X}_{p,q}$ are obtained. Then, the values of $x^{(DCT)}_{p,q,r}$ in $x$ are added to the coefficients $\bar{X}_{p,q}$ as follows:

$$X_{p,q} = \bar{X}_{p,q} + x^{(DCT)}_{p,q,r} \tag{17}$$

where $r = \chi^{(PS)}_{u,v}$ and $(u, v) \in I$. Finally, the inverse DCT is applied to $X_{p,q}$ to form a perturbed image $I + \rho$.

After generating the perturbed image $I + \rho$, the target classifier is applied to it to obtain its recognition result $C(I + \rho)$ with a confidence score, which is used to calculate objective functions or constraints. Other objective functions and constraints are calculated based on $\rho$ or $I + \rho$.
[Step 3-5] Update of reference point. For each $j = 1, \ldots, N_f$, if $z_j > f_j(y)$, then replace the value of $z_j$ with $f_j(y)$.
[Step 3-6] Update of solutions. Perform the following procedure to update the population.
(1) Set $c = 0$.
(2) If $c = n_r$ or $P$ is empty, then finish this procedure. Otherwise, pick an index $k$ from $P$ at random.
(3) If any of the following conditions is satisfied, then replace $x^k$ with $y$ and set $c = c + 1$.

$$y \notin \mathcal{F} \wedge x^k \notin \mathcal{F} \wedge vio(y) < vio(x^k) \tag{18}$$

$$y \in \mathcal{F} \wedge x^k \notin \mathcal{F} \tag{19}$$

$$y \in \mathcal{F} \wedge x^k \in \mathcal{F} \wedge g(y \mid \lambda^k, z) \le g(x^k \mid \lambda^k, z) \tag{20}$$

where $vio(\cdot)$ denotes the amount of constraint violation.
(4) Remove $k$ from $P$ and go back to (2).
[Step 4] Stop condition. After $N_g$ generations have been iterated, the algorithm stops the optimization. Otherwise, go back to Step 2.

IV. EVALUATION

A. Experimental Setup

Four experiments were conducted to demonstrate the effectiveness of formulating the AE generation problem as multi-objective optimization. Experiment 1 examines whether the proposed method generates various AEs under the $l_0$ versus $l_1$ norms scenario, i.e., the first objective function is the number of perturbed pixels and the second one is $\|\rho\|_1$, both of which should be minimized.
Experiment 2 demonstrates whether the proposed multi-objective black-box optimization approach can generate adversarial examples robust against image rotation. Experiment 3 compares the two proposed methods, the direct method and the DCT-based method, on a higher-resolution image. Experiment 4 demonstrates some examples of adversarial attacks on ImageNet-1000 data.

In all the experiments, MOEA/D was used. To convert the multi-objective optimization problem into a set of scalar optimization problems, the Tchebycheff approach was adopted. The neighborhood size $N_n$ was set to 10, $\delta = 0.8$, and $n_r = 1$. In experiments 1 and 2, we prepared canonical CNN models that involve

• two sets of convolution layers with ReLU activation function, pooling, and dropout layers,
• a fully connected layer with ReLU activation function followed by a dropout layer, and
• an output layer consisting of a fully connected layer with softmax activation function.

The above network was trained with Adam [20] using 45,000 labeled images in CIFAR-10. The batch size and the number of epochs were set to 128 and 10, respectively. In experiments 3 and 4, VGG16 [21], which is a widely used CNN-based classifier, was adopted. We used the pretrained VGG16 model implemented in the Keras framework.

Fig. 1. Input image $I_1$ used in experiments 1 and 2.

Fig. 2. Results of experiment 1: obtained non-dominated solutions in the $l_0$ versus $l_1$ norms scenario (axes: $f_1$ and $f_2$).

Fig. 3. Results of experiment 1: generated adversarial examples in the $l_0$ versus $l_1$ norms scenario. (a) Generated adversarial examples; (b) perturbation patterns. Panels: i) $f_1 = 18$, ii) $f_1 = 100$, iii) $f_1 = 327$.

B. Experiment 1: $l_0$ versus $l_1$ norms of the perturbation pattern

In this first experiment, the proposed method was applied to design adversarial examples for image $I_1$ shown in Fig. 1 under the $l_0$ versus $l_1$ norms scenario. That is, the first objective function was the number of perturbed pixels and the second objective function was the strength of the pixel intensity changes in the perturbation pattern $\rho$. A constraint that $P(C(I_1 + \rho) = C(I_1))$ should be less than 0.2 was also considered. The proposed method used the direct method with $N_w = 1$. Because the input image size was 32 × 32 with 3 color channels, the total number of design variables was 3,072. The population size and the generation limit were set to 500 and 1,000, respectively.

In this experiment, the initial population was generated by dividing individuals into eight groups and imposing upper limits on the number of pixels to be changed and on the pixel perturbation ranges. Different upper limits were set for each group, 0.5%, 5%, 20%, 35%, 50%, 65%, 80%, and 95%, respectively, while the pixel perturbation ranges were limited to ±200, ±200, ±100, ±50, ±33, ±25, ±20, and ±16, respectively. The first two groups were also required to alter pixel values by at least ±150 and ±100, respectively.

Fig. 2 shows the obtained non-dominated solutions, which demonstrates that the proposed method could generate various adversarial examples, including ones in which 15 to over 400 pixels were changed and which lie between the AEs generated by the previous EC-based and gradient-based methods. Fig. 3(a) shows some examples of the AEs obtained by the proposed method. All three images shown in Fig. 3(a) were classified as 'deer' with confidences of 50.3%, 45.9%, and 41.8%, respectively, whereas $I_1$ was originally classified as 'frog' with a confidence of 99.28%. Fig. 3(b) shows the perturbation patterns, in which gray pixels indicate pixels that were not modified, brighter pixels represent pixels whose intensity was increased, and darker pixels represent pixels that were changed in the opposite direction. Different perturbation patterns can be seen in Fig. 3(b), though similar distributions were observed between ii) and iii).

C. Experiment 2: generating robust AEs against image transformation

In this experiment, taking advantage of multi-objective optimization, we attempted to design adversarial examples robust against image transformation. Simple image rotation was considered as the image transformation in this experiment because rotation has a greater influence than translation. Here, for the purpose of enhancing the robustness against image rotation, three objective functions were minimized: the expected value and the standard deviation of the recognition accuracy of the transformed images ($f_1(\cdot)$ and $f_2(\cdot)$), and the $l_1$ norm of the perturbation pattern $\rho$ ($f_3(\cdot)$). Two constraints were also imposed: the recognition accuracy of the target image was less than 10% without rotation, and the expected accuracy was less than 50%. The maximum rotation angle was set to ±60 degrees. The population size and the generation limit were set to 500 and 2,000, respectively. Other experimental conditions were the same as in experiment 1.

Fig. 4 shows the obtained non-dominated solutions in the final generation. We picked one non-dominated solution from them; Fig. 5 and Fig. 6 show its perturbation pattern and its robustness against rotation, respectively. The recognized class labels while changing the rotation angle are shown in Table I. These results indicate that the generated AE successfully deceives the classifier both with and without rotation.

TABLE I
RESULTS OF EXPERIMENT 2: CLASSIFICATION RESULTS AND CONFIDENCE OF THE GENERATED EXAMPLE ROBUST AGAINST ROTATION.

| Rotation angle | Clean image $I$ | Perturbed image $I + \rho$ |
|---|---|---|
| -60 deg | Frog: 26.8% (Cat: 51.6%) | Frog: 1.0% (Cat: 65.1%) |
| -45 deg | Frog: 20.9% (Cat: 69.0%) | Frog: 1.2% (Cat: 69.3%) |
| -30 deg | Frog: 98.9% | Frog: 0.9% (Truck: 96.3%) |
| -15 deg | Frog: 90.6% | Frog: 1.8% (Bird: 65.6%) |
| 0 deg | Frog: 99.3% | Frog: 3.6% (Deer: 77.0%) |
| 15 deg | Frog: 99.5% | Frog: 5.9% (Truck: 44.0%) |
| 30 deg | Frog: 94.6% | Frog: 2.7% (Truck: 64.5%) |
| 45 deg | Frog: 77.0% | Frog: 1.1% (Cat: 60.6%) |
| 60 deg | Frog: 70.5% | Frog: 3.2% (Cat: 47.6%) |

Fig. 4. Results of experiment 2: obtained non-dominated solutions for generating an adversarial example robust against rotation.

Fig. 5. Results of experiment 2: generated adversarial example robust against rotation. (a) Perturbation pattern $\rho$; (b) perturbed image $I_1 + \rho$.

Fig. 6. Results of experiment 2: robustness of the generated example against rotation (confidence score of 'frog' versus rotation angle from -60 to 60 degrees, for the original image $I$ and the generated AE).

D. Experiment 3: effectiveness of the DCT-based method

In order to verify the effectiveness of the DCT-based method on higher-resolution images, the direct and DCT-based methods were compared on generating an AE for an image in ImageNet-1000 under the accuracy versus perturbation amount scenario. The first objective function is the classification accuracy with respect to the original class. In this experiment, we consider more general classes than the original label assigned in ImageNet-1000; e.g., when generating AEs for image $I_2$ shown in Fig. 7(a), which has the correct label 'tabby', the labels 'Egyptian cat', 'lynx', 'Persian cat', 'Siamese cat', and 'tiger cat' were also considered correct labels. The second objective function is the Root Mean Square Error (RMSE) between the original and perturbed images.² A constraint, $P(C(I + \rho)) \le 0.4$, was also considered to enhance search exploitation. In experiment 3 and subsequent experiments, we use the pretrained VGG16 as the target classifier.

TABLE II
RESULTS OF EXPERIMENT 3: CLASSIFICATION RESULTS AND CONFIDENCE OF THE GENERATED EXAMPLE FOR VGG16.

| Rank | Clean image $I$ | Direct method | DCT-based method ($N_{AP} = 1$) | DCT-based method ($N_{AP} = 5$) | DCT-based method ($N_{AP} = 10$) |
|---|---|---|---|---|---|
| 1st | Tabby: 60.8% | Envelope: 13.6% | Jigsaw puzzle: 76.4% | Purse: 20.8% | Coyote: 35.2% |
| 2nd | Tiger cat: 30.4% | Jigsaw puzzle: 10.0% | Tabby: 4.6% | Wood rabbit: 13.2% | Wallaby: 16.0% |
| 3rd | Egyptian cat: 7.4% | Carton: 9.7% | Tiger cat: 2.8% | Jigsaw puzzle: 7.0% | Wombat: 13.1% |
| 4th | Doormat: 0.4% | Wallet: 9.7% | Screen: 1.2% | Window screen: 6.9% | Hare: 4.5% |
| 5th | Radiator: 0.2% | Doormat: 9.2% | Prayer rug: 1.1% | Mitten: 6.9% | German shepherd: 4.5% |

TABLE III
CLASS LABELS REGARDED AS CORRECT ONES IN EXPERIMENT 4.

| Image | Original label | Labels regarded as correct |
|---|---|---|
| $I_3$ | Airliner | Plane, Airship, Wing, Warplane, Space shuttle |
| $I_4$ | Tiger cat | Tabby, Egyptian cat, Lynx, Persian cat, Siamese cat |
| $I_5$ | Electric guitar | Acoustic guitar, Violin, Banjo, Cello |
| $I_6$ | Plastic bag | Mailbag, Sleeping bag |
| $I_7$ | Promontory | Seashore, Lakeside, Cliff, Cliff dwelling, Valley, Breakwater |
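The DCT-based perturbation of Eq. (17) and the RMSE objective used in this experiment can be illustrated on a single image block. The sketch below is pure Python and assumes an orthonormal DCT-II on a square block; the paper does not state the normalization, the block size, or the pixel scaling, so `dct_matrix`, `perturb_block`, `rmse`, and the example values are hypothetical and serve only as an illustration.

```python
import math


def dct_matrix(n):
    """Orthonormal DCT-II basis matrix C (n x n), so that X = C B C^T."""
    return [[math.sqrt((1.0 if p == 0 else 2.0) / n)
             * math.cos(math.pi * (2 * a + 1) * p / (2 * n))
             for a in range(n)]
            for p in range(n)]


def matmul(A, B):
    """Plain matrix product of two lists of lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]


def transpose(A):
    return [list(row) for row in zip(*A)]


def dct2(block):
    """2D-DCT of a square block."""
    C = dct_matrix(len(block))
    return matmul(matmul(C, block), transpose(C))


def idct2(coeffs):
    """Inverse 2D-DCT (C is orthogonal, so its inverse is its transpose)."""
    C = dct_matrix(len(coeffs))
    return matmul(matmul(transpose(C), coeffs), C)


def perturb_block(block, pattern):
    """Eq. (17): add an alteration pattern to the DCT coefficients, then invert."""
    X = dct2(block)
    X = [[X[p][q] + pattern[p][q] for q in range(len(X))] for p in range(len(X))]
    return idct2(X)


def rmse(a, b):
    """Root mean square error between two equally sized blocks."""
    diffs = [(x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)]
    return math.sqrt(sum(diffs) / len(diffs))


if __name__ == "__main__":
    block = [[10, 20, 30, 40], [50, 60, 70, 80],
             [15, 25, 35, 45], [55, 65, 75, 85]]
    pattern = [[0.0] * 4 for _ in range(4)]
    pattern[0][0] = 4.0  # alter only the DC coefficient
    print(rmse(block, perturb_block(block, pattern)))
```

With this normalization, adding $d$ to the DC coefficient of an $n \times n$ block shifts every pixel by $d / n$, so the example yields an RMSE of about 1.0. In floating-point arithmetic the round trip with an all-zero pattern reproduces the block up to rounding error.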
In the case of the direct method, the total number of design variables was 5,625 because we changed the input image resolution to 224 × 224, set $N_w = 3$, and added the perturbation to the brightness component of $I$. The DCT-based method requires fewer variables than the direct method, i.e., 848, 1,104, and 1,424 dimensions for $N_{AP}$ = 1, 5, and 10, respectively.

Fig. 7 shows the representative AEs generated by the two methods, and Table II shows the recognition results and confidence scores of the generated AEs. Both methods could generate AEs that make the classifier misclassify. In addition, the DCT-based method generated AEs with less conspicuous patterns when $N_{AP} = 10$.

² The reason why we did not simply use the $l_2$ norm of $\rho$ was to evaluate the effect of DCT. In the case of the DCT-based method, the image quality slightly deteriorated via DCT and inverse DCT even if the frequency coefficients were not changed.

Fig. 7. Results of experiment 3: generated adversarial examples by direct and DCT-based methods. (a) Original (clean) image $I_2$; (b) perturbed image $I_2 + \rho$ by the direct method; (c) perturbed image $I_2 + \rho$ by the DCT-based method ($N_{AP} = 1$); (d) perturbed image $I_2 + \rho$ by the DCT-based method ($N_{AP} = 5$); (e) perturbed image $I_2 + \rho$ by the DCT-based method ($N_{AP} = 10$).

E. Experiment 4: Other examples by DCT-based methods

In the final experiment, we attempted to generate AEs using the proposed DCT-based method for other images of ImageNet-1000 under the accuracy versus perturbation amount scenario. In this experiment, we added a solution candidate $x_0$ whose variables were all set to 0 to the initial population. Other experimental conditions were the same as those in experiment 3. Fig. 8(a) shows the target original images, whose resolution was changed to 224 × 224.
As in experiment 3, class labels similar to the original ones were regarded as correct, as shown in Table III. Fig. 8(b) shows the distributions of the obtained non-dominated solutions. Adding $x_0$ allowed the proposed method to clarify the trade-off relationship between the accuracy and the perturbation amount. Note that the RMSE of $x_0$ was not zero because of the effect of the DCT and inverse DCT process. Fig. 8(c) and (d) show examples of the designed perturbation patterns and the generated AEs, respectively. Different types of perturbation patterns can be seen: AEs for $I_4$ and $I_5$ include small numbers of bright pixels, whereas AEs for $I_3$, $I_6$, and $I_7$ include striped and thin striped patterns. This demonstrates that the proposed method could adaptively generate AEs according to the properties of the target clean image.

Table IV shows the recognized classes and corresponding confidence scores. Here we focus on the results for each image. Because $I_3$ is an image of the front part of an airplane, which involves little texture, there are very few classes that can induce misrecognition, resulting in erroneous recognition as the label 'aircraft carrier'. The other images, $I_4$ through $I_7$, contained objects with high-frequency components and characteristic colors compared to $I_3$ and $I_6$, and their AEs caused the classifier to misclassify them into various classes. Interestingly, $I_5$ and $I_7$ were erroneously recognized as various animals, whereas $I_6$ was misclassified mainly as artificial things.

TABLE IV
RESULTS OF EXPERIMENT 4: CLASSIFICATION RESULTS AND THEIR CONFIDENCE SCORES OF ORIGINAL CLEAN AND PERTURBED IMAGES.

(a) $I_3$

| Rank | $C(I_3)$ | $C(I_3 + \rho)$ |
|---|---|---|
| 1st | Airliner: 99.7% | aircraft carrier: 94.9% |
| 2nd | Wing: 2.6% | airliner: 3.0% |
| 3rd | Warplane: 0.0% | warplane: 1.4% |
| 4th | Space shuttle: 0.0% | wing: 0.2% |
| 5th | Airship: 0.0% | airship: 0.1% |

(b) $I_4$

| Rank | $C(I_4)$ | $C(I_4 + \rho)$ |
|---|---|---|
| 1st | tiger cat: 81.9% | Leopard: 31.3% |
| 2nd | tabby: 15.8% | jaguar: 10.5% |
| 3rd | Egyptian cat: 2.0% | lion: 9.3% |
| 4th | Lynx: 0.2% | snow leopard: 9.2% |
| 5th | Lens cap: 0.0% | cheetah: 9.1% |

(c) $I_5$

| Rank | $C(I_5)$ | $C(I_5 + \rho)$ |
|---|---|---|
| 1st | electric guitar: 96.7% | Eft: 19.7% |
| 2nd | acoustic guitar: 2.7% | Banded gecko: 11.3% |
| 3rd | Violin: 0.3% | European fire salamander: 10.2% |
| 4th | Banjo: 0.1% | Common newt: 10.3% |
| 5th | cello: 0.0% | alligator lizard: 9.7% |

(d) $I_6$

| Rank | $C(I_6)$ | $C(I_6 + \rho)$ |
|---|---|---|
| 1st | Plastic bag: 96.2% | sock: 22.5% |
| 2nd | brassiere: 1.0% | brassiere: 8.6% |
| 3rd | Toilet tissue: 0.2% | pillow: 7.8% |
| 4th | diaper: 0.2% | diaper: 7.8% |
| 5th | sulphur-crested cockatoo: 0.2% | handkerchief: 7.8% |

(e) $I_7$

| Rank | $C(I_7)$ | $C(I_7 + \rho)$ |
|---|---|---|
| 1st | Promontory: 96.6% | alp: 17.8% |
| 2nd | seashore: 1.7% | Irish wolfhound: 8.8% |
| 3rd | cliff: 1.4% | marmot: 7.5% |
| 4th | bacon: 0.2% | timber wolf: 7.4% |
| 5th | lakeside: 0.0% | bighorn: 7.4% |

Fig. 8. Results of experiment 4: input images, obtained non-dominated solutions, designed perturbation patterns, and generated adversarial example images. Each panel covers $I_3$ through $I_7$. (a) Input clean images. (b) Obtained non-dominated solutions (axes $f_1$ and $f_2$). (c) Designed perturbation pattern examples. (d) Generated adversarial example images.

F. Discussion

Although some of the above experiments involve high-dimensional problems whose number of design variables exceeds 1,000, the proposed method could successfully generate AEs under the black-box condition, i.e., without gradient information or other internal information of the target classifiers except the final recognition result (a class label and its confidence score). The above results revealed the potential of EMO for AE design, though there is no guarantee that the obtained solutions were global optima. It is possible that an AE design problem involves a highly multimodal fitness landscape including many promising quasi-optimal solutions, which EMO is appropriate for finding.

V. CONCLUSIONS

This paper proposes an evolutionary multi-objective optimization approach to design adversarial examples that cannot be correctly recognized by machine learning models. The proposed method is a black-box method that does not require internal information of the target models, and it produces various AEs by simultaneously optimizing multiple objective functions that have a trade-off relationship. Experimental results showed the potential of the proposed EMO-based approach; e.g., the proposed method could produce various AEs that have different properties from those generated by the previous EC- and gradient-based methods, as well as AEs robust against image rotation. This paper also demonstrated that the DCT-based method could generate AEs for higher-resolution images.
On the other hand, the proposed method has much room for improvement from the viewpoint of comprehensively generating more diverse solutions. Introducing schemes to promote search exploration and to reduce the problem dimension, and hybridization with local search, are important future work. The flexibility of EMO in designing objective functions would allow new techniques for designing AEs to emerge.

REFERENCES

[1] A. Krizhevsky, I. Sutskever, and G. E. Hinton, "Imagenet classification with deep convolutional neural networks," in Advances in neural information processing systems, 2012, pp. 1097–1105.
[2] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, "Generative adversarial nets," in Advances in neural information processing systems, 2014, pp. 2672–2680.
[3] P.-Y. Chen, H. Zhang, Y. Sharma, J. Yi, and C.-J. Hsieh, "Zoo: Zeroth order optimization based black-box attacks to deep neural networks without training substitute models," in AISec@CCS, 2017.
[4] A. Ilyas, L. Engstrom, A. Athalye, and J. Lin, "Black-box adversarial attacks with limited queries and information," arXiv preprint arXiv:1804.08598, 2018.
[5] N. Narodytska and S. P. Kasiviswanathan, "Simple black-box adversarial attacks on deep neural networks," in CVPR Workshops, vol. 2, 2017.
[6] N. Papernot, P. McDaniel, I. Goodfellow, S. Jha, Z. B. Celik, and A. Swami, "Practical black-box attacks against machine learning," in Proceedings of the 2017 ACM on Asia Conference on Computer and Communications Security, 2017, pp. 506–519.
[7] J. Su, D. V. Vargas, and K. Sakurai, "One pixel attack for fooling deep neural networks," CoRR, vol. abs/1710.08864, 2017. [Online]. Available: http://arxiv.org/abs/1710.08864
[8] A. Athalye, N. Carlini, and D. Wagner, "Obfuscated gradients give a false sense of security: Circumventing defenses to adversarial examples," arXiv preprint arXiv:1802.00420, 2018.
[9] D. Song, K. Eykholt, I. Evtimov, E. Fernandes, B. Li, A. Rahmati, F. Tramer, A. Prakash, and T. Kohno, "Physical adversarial examples for object detectors," in 12th USENIX Workshop on Offensive Technologies (WOOT 18), 2018.
[10] S.-M. Moosavi-Dezfooli, A. Fawzi, O. Fawzi, and P. Frossard, "Universal adversarial perturbations," in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017, pp. 86–94.
[11] C. Guo, J. R. Gardner, Y. You, A. G. Wilson, and K. Q. Weinberger, "Simple black-box adversarial attacks," 2019. [Online]. Available: https://openreview.net/forum?id=rJeZS3RcYm
[12] R. Shin and D. Song, "Jpeg-resistant adversarial images," in NIPS 2017 Workshop on Machine Learning and Computer Security, 2017.
[13] R. Storn and K. Price, "Differential evolution - a simple and efficient heuristic for global optimization over continuous spaces," J. of Global Optimization, vol. 11, pp. 341–359, 1997. [Online]. Available: http://dl.acm.org/citation.cfm?id=596061.596146
[14] K. R. Rao and P. Yip, Discrete Cosine Transform: Algorithms, Advantages, Applications. San Diego, CA, USA: Academic Press Professional, Inc., 1990.
[15] K. Shimoyama, A. Oyama, and K. Fujii, "Multi-objective six sigma approach applied to robust airfoil design for mars airplane," in 48th AIAA/ASME/ASCE/AHS/ASC Structures, Structural Dynamics, and Materials Conference, 2007, p. 1966.
[16] S. Ono and S. Nakayama, "Multi-objective particle swarm optimization for robust optimization and its hybridization with gradient search," in Evolutionary Computation, 2009. CEC'09. IEEE Congress on. IEEE, 2009, pp. 1629–1636.
[17] K. Deb, A. Pratap, S. Agarwal, and T. Meyarivan, "A fast and elitist multiobjective genetic algorithm: Nsga-ii," Evolutionary Computation, IEEE Transactions on, vol. 6, no. 2, pp. 182–197, 2002.
[18] Q. Zhang and H. Li, "MOEA/D: A Multiobjective Evolutionary Algorithm Based on Decomposition," IEEE Transactions on Evolutionary Computation, vol. 11, no. 6, pp. 712–731, December 2007.
[19] K. Deb and M. Goyal, "A combined genetic adaptive search (geneas) for engineering design," Computer Science and Informatics, vol. 26, pp. 30–45, 1996.
[20] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.
[21] K. Simonyan and A. Zisserman, "Very deep convolutional networks for large-scale image recognition," arXiv preprint arXiv:1409.1556, 2014.
