The Impact of Complex and Informed Adversarial Behavior in Graphical Coordination Games

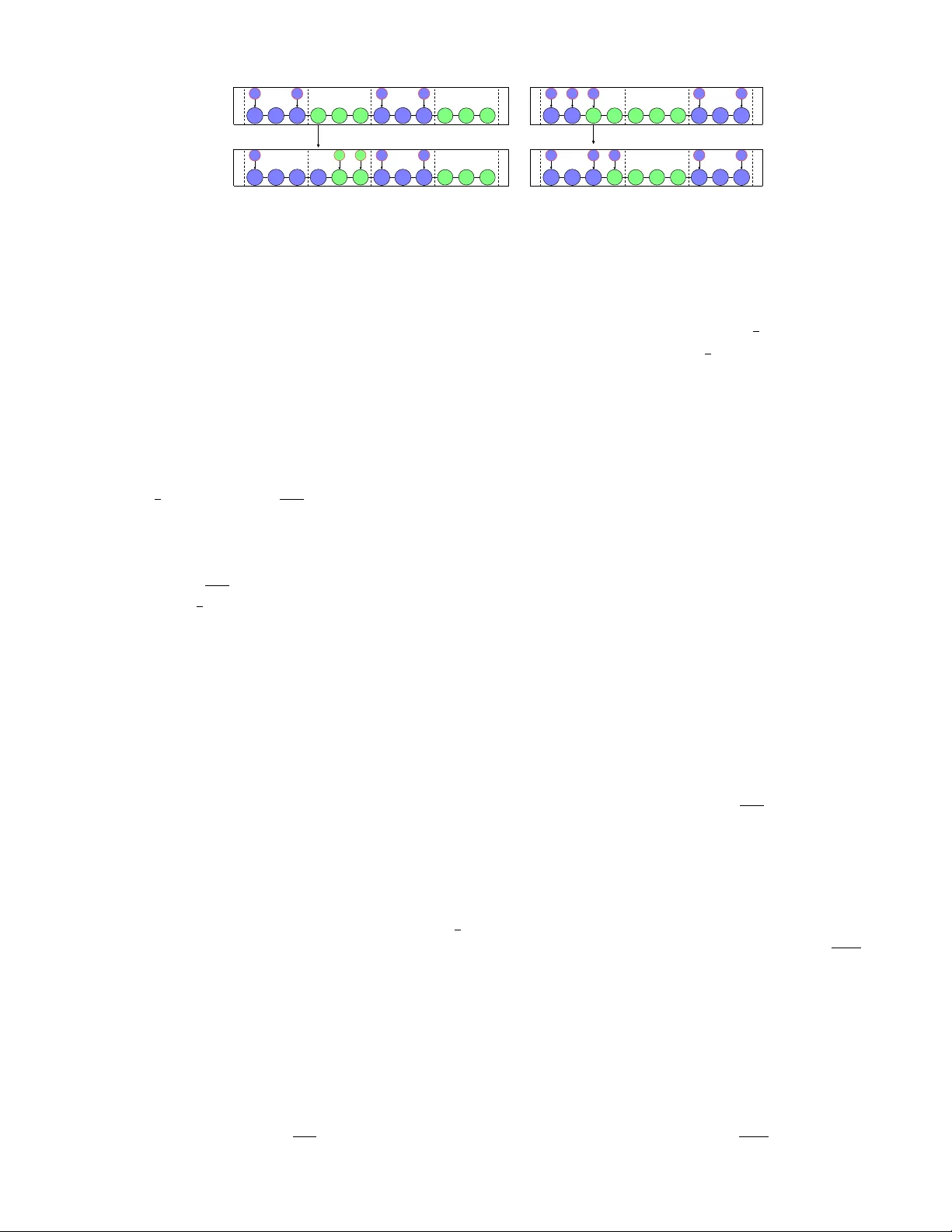

How does system-level information impact the ability of an adversary to degrade performance in a networked control system? How does the complexity of an adversary's strategy affect its ability to degrade performance? This paper focuses on these quest…

Authors: Keith Paarporn, Brian Canty, Philip N. Brown