Bioequivalence Design with Sampling Distribution Segments

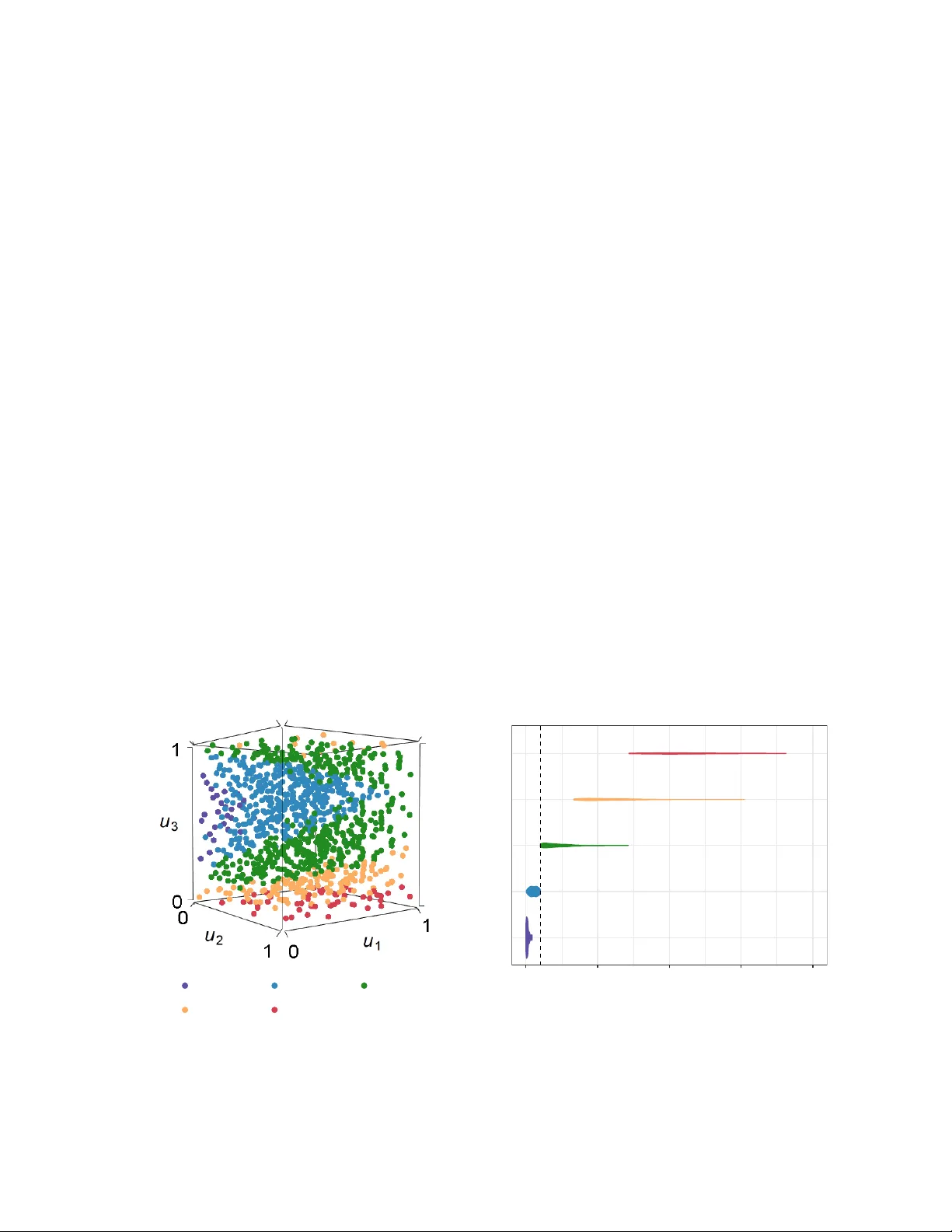

In bioequivalence design, power analyses dictate how much data must be collected to detect the absence of clinically important effects. Power is computed as a tail probability in the sampling distribution of the pertinent test statistics. When these …
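To make the "tail probability" view of power concrete, here is a minimal sketch for a deliberately simplified setting that is not taken from the paper: a two-group comparison of means with a known common standard deviation, tested with two one-sided z-tests (TOST). The function names `tost_power_known_sigma` and `smallest_n`, along with the parameters `margin`, `delta`, and `sigma`, are illustrative assumptions. Under these assumptions, equivalence is declared when the estimated difference falls in an interval strictly inside the equivalence margins, so power is the probability mass of the sampling distribution over that interval.

```python
from math import sqrt
from statistics import NormalDist


def tost_power_known_sigma(n_per_group, delta, sigma, margin, alpha=0.05):
    """Power of a two-group TOST z-test (known sigma; a simplifying assumption).

    delta  : true difference in means (hypothetical design value)
    sigma  : known common standard deviation
    margin : symmetric equivalence margin, so equivalence means |delta| < margin
    """
    # Standard error of the difference in sample means with equal group sizes.
    se = sigma * sqrt(2.0 / n_per_group)
    z_crit = NormalDist().inv_cdf(1.0 - alpha)

    # Both one-sided nulls are rejected when the estimate lies in (lower, upper).
    lower = -margin + z_crit * se
    upper = margin - z_crit * se
    if upper <= lower:
        return 0.0  # the rejection region is empty at this sample size

    # Sampling distribution of the estimated difference: Normal(delta, se^2).
    sampling_dist = NormalDist(mu=delta, sigma=se)
    return sampling_dist.cdf(upper) - sampling_dist.cdf(lower)


def smallest_n(delta, sigma, margin, target=0.8, alpha=0.05, n_max=10_000):
    """Linear search for the smallest per-group n reaching the target power."""
    for n in range(2, n_max + 1):
        if tost_power_known_sigma(n, delta, sigma, margin, alpha) >= target:
            return n
    return None  # target not reachable within n_max


if __name__ == "__main__":
    for n in (10, 50, 100):
        print(n, round(tost_power_known_sigma(n, 0.0, 1.0, 0.5), 3))
    print("smallest n:", smallest_n(0.0, 1.0, 0.5))
```

With unknown variance, the test statistics follow t-type distributions and the probability no longer has this simple closed form, which is the kind of case where power is typically estimated by simulation instead.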

Authors: Luke Hagar, Nathaniel T. Stevens