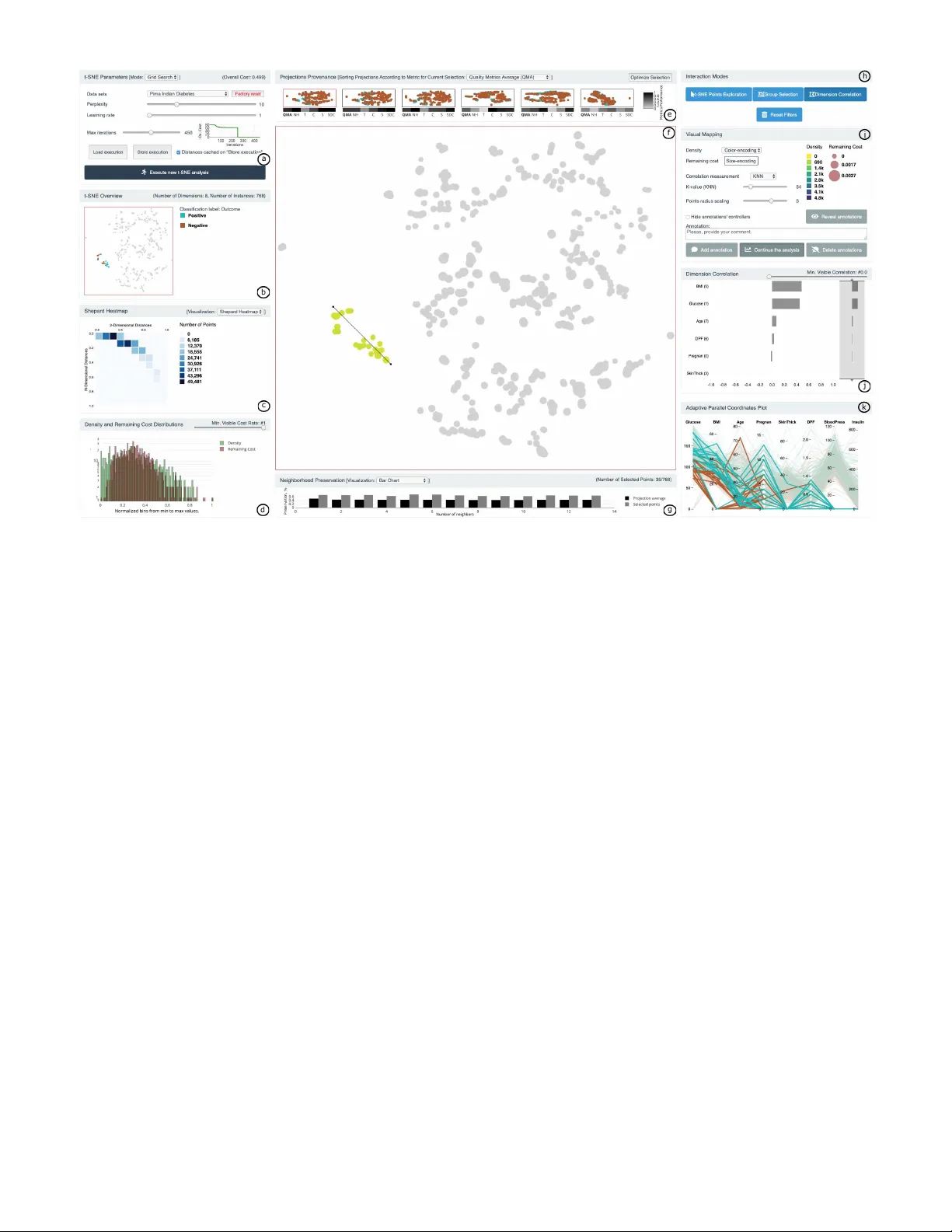

t-viSNE: Interactive Assessment and Interpretation of t-SNE Projections

t-Distributed Stochastic Neighbor Embedding (t-SNE) has proven to be a popular approach for visualizing multidimensional data, with successful applications in a wide range of domains. Despite their usefulness, t-SNE projections can be hard t…

Authors: Angelos Chatzimparmpas, Rafael M. Martins, Andreas Kerren