Neural Memory Networks for Seizure Type Classification

Classification of seizure type is a key step in the clinical process for evaluating an individual who presents with seizures. It determines the course of clinical diagnosis and treatment, and its impact stretches beyond the clinical domain to epileps…

Authors: David Ahmedt-Aristizabal, Tharindu Fern, o

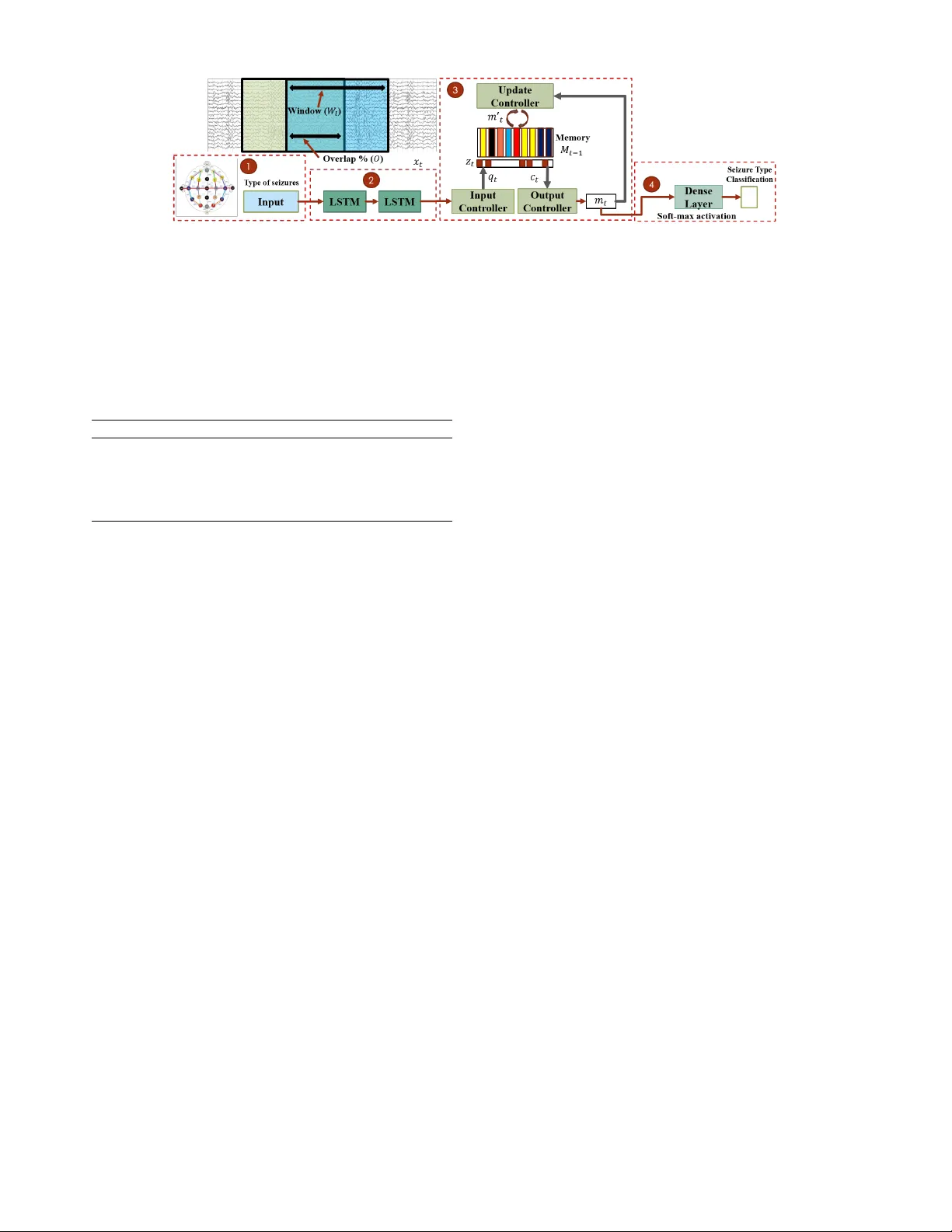

David Ahmedt-Aristizabal 1,2, Tharindu Fernando 2, Simon Denman 2, Lars Petersson 1, Matthew J. Aburn 3, Clinton Fookes 2

Abstract: Classification of seizure type is a key step in the clinical process for evaluating an individual who presents with seizures. It determines the course of clinical diagnosis and treatment, and its impact stretches beyond the clinical domain to epilepsy research and the development of novel therapies. Automated identification of seizure type may facilitate understanding of the disease, and seizure detection and prediction have been the focus of recent research that has sought to exploit the benefits of machine learning and deep learning architectures. Nevertheless, there is not yet a definitive solution for automating the classification of seizure type, a task that must currently be performed by an expert epileptologist. Inspired by recent advances in neural memory networks (NMNs), we introduce a novel approach for the classification of seizure type using electrophysiological data. We first explore the performance of traditional deep learning techniques which use convolutional and recurrent neural networks, and enhance these architectures by using external memory modules with trainable neural plasticity. We show that our model achieves a state-of-the-art weighted F1 score of 0.945 for seizure type classification on the TUH EEG Seizure Corpus with the IBM TUSZ preprocessed data. This work highlights the potential of neural memory networks to support the field of epilepsy research, along with biomedical research and signal analysis more broadly.

I. INTRODUCTION

Epilepsy is one of the most prevalent neurological conditions, and people with epilepsy have recurrent seizures. Separating individual seizures into different types helps guide antiepileptic therapies [1]. Classification of seizures serves many purposes.
It is informative of the potential triggers for a patient's seizures and of the risks of comorbidities, including intellectual disability, learning difficulties, mortality risk such as sudden unexpected death in epilepsy, and psychiatric features such as autism spectrum disorder [1].

1 CSIRO, Data61, Canberra, Australia. 2 Image and Video Research Laboratory, SAIVT, Queensland University of Technology, Brisbane, Australia. 3 QIMR Berghofer Medical Research Institute, Brisbane, Australia.

Together with observation of clinical signs, electroencephalography (EEG) plays a major role in seizure type evaluation, and automating this process can support clinical evaluation. Recent advances in artificial intelligence and deep learning have demonstrated high success in other healthcare applications using brain signals [2]. However, the application of these architectures within neuroscience, and specifically to the processing of EEG recordings for epilepsy research, has been limited to date [3], [4]. Current deep learning approaches have mostly focused on the goals of seizure detection [5], [6] and seizure onset prediction [7], and deep convolutional neural networks (CNN) and recurrent neural networks (RNN) have been the most common architectures proposed to capture patterns during seizures [8], [9], [10]. Nevertheless, the automated capability to discriminate among seizure types (e.g. focal or generalized seizures) is a challenging and largely underdeveloped field due to both a lack of datasets and the highly complex nature of the task. A significant public data resource, the TUH EEG Corpus [11], has recently become available for epilepsy research, creating a unique opportunity to evaluate deep learning techniques.
To date, a limited set of methods have been applied to this challenging corpus in full for the task of seizure classification [12], [13], [14], although some researchers have used a small number of data samples from selected seizure types as input for their models [15], [16], [17].

Motivated by the tremendous success of neural memory networks in precisely storing and retrieving relevant information [18], [19], [20], we propose a novel approach based on long-term memory modules to identify and exploit relationships across the entire EEG data set for seizure events. We capture the variability, both intrasubject (seizures of the same patient) and intersubject (seizures across patients), for each epilepsy type in this long-term relationship. One of the main limitations of using traditional recurrent neural networks such as Long Short-Term Memory (LSTM) [21] or Gated Recurrent Unit (GRU) [22] layers with seizure recordings is that they focus more on the recent history and previous memories are lost after updates [23], i.e. they consider dependencies only within a given input sequence. To address this limitation, we need to extract and store events over time, which is possible with an external memory bank. In this scenario, a framework with an external memory should also learn when to store an event, as well as when to recall it for future use [20]. With the help of the external memory, the network no longer needs to squeeze all useful past information into the state variable (the cell state that saves information from the past) of the LSTM or GRU.

We also adopt the concept of synaptic plasticity, which emulates the biological process of the same name, to enable efficient lifelong learning and to enhance the attention-based knowledge retrieval mechanisms used in memory networks [24]. The plastic neural memory exploits both static and dynamic connection weights in the memory read, write and output generation procedures (i.e.
a connection between any two neurons has both a fixed component and a plastic component) [25].

In this research, we perform cross-patient seizure type classification, with the application of supporting the analysis of scalp EEG seizure recordings where epileptologists are not available. We first explore the feasibility of adapting deep learning algorithms that have shown promising results for seizure detection to the specific task of seizure type classification. Then, we introduce our framework based on memory networks and trainable neural plasticity [24], which is a mechanism for knowledge discovery, i.e. a dynamic strategy to read and write relevant information to capture temporal relationships. We expand on the work introduced for anomaly detection [25] to demonstrate the potential of our architecture for the complex task of multi-class classification of seizures, and compare the results with previously published baseline methods.

Our technical contributions are summarized as follows:
1) This study presents baseline results that compare several traditional deep learning algorithms proposed for seizure detection, optimised and evaluated for the task of classifying seizure types.
2) We propose a robust approach based on neural memory networks which outperforms state-of-the-art methods for seizure type classification on the TUH EEG Seizure Corpus [11].
3) We introduce the first application of memory modules, which are capable of mapping long-term relationships, to the field of epilepsy research and demonstrate how they can provide a clear separation between classes using the extracted memory embeddings.

II. MATERIALS AND METHODS

In this paper, we propose a neural memory network (NMN), which facilitates trainable neural plasticity for robust classification of seizure types.
We compare and explore the difference between traditional deep learning techniques such as recurrent convolutional neural networks (RCNN) and our proposed framework using an external memory module.

Traditional deep learning techniques exploit short spatio-temporal relationships to model sequential data. Memory modules, on the other hand, act as a large knowledge store, and instead of making decisions based on the current observation (input data sample), map long-term relationships across all seizure recordings. A typical memory module [26] consists of a memory stack for information storage, a read controller to query the knowledge stored in the memory, an update controller to update the memory with new knowledge, and an output controller which controls what results are passed out from the memory. An abstract view of these components and their interaction with the specific application of seizure classification is given in Fig. 1. We compare the proposed approach with baseline algorithms for seizure detection [27], [28], [29], [30], [6], [31], [9], [10] and classification [12], [13], [14], and train all methods using supervised learning for direct comparison.

A. Seizure Dataset

We use the world's largest publicly available database of seizure recordings, the Temple University Hospital EEG (TUH EEG) [11] database. We focus on the subset, the TUH EEG Seizure Corpus (TUSZ, v1.4.0), which was developed to motivate research on seizure detection. Recordings were sampled at 250 Hz and contain the standard channels of a 10-20 configuration. The seizure corpus contains 2012 seizures with different lengths and eight types of seizure. Some seizures of the same patient are categorised with different seizure types. Seizure recordings were annotated based on the following manifestations: electrographic, electroclinical, and clinical.
For seizure type classification experiments, we exclude only myoclonic seizures because of the small number of seizures recorded (three seizure events). The seven types of seizure selected for analysis are Focal Non-Specific Seizure (FNSZ), Generalized Non-Specific Seizure (GNSZ), Simple Partial Seizure (SPSZ), Complex Partial Seizure (CPSZ), Absence Seizure (ABSZ), Tonic Seizure (TNSZ), and Tonic Clonic Seizure (TCSZ). The data for one seizure event consists of only the interval that contained a seizure based on the labeling reported in [11]. One class is defined as the combination of all seizure recordings across sessions and patients for the same seizure type.

Although we consider here seven classes of seizure labeled in the corpus based on neurologists' reports as described in [11], we note that these are not clinically disjoint classes. Clinically, SPSZ and CPSZ are more specific subclasses of FNSZ, while ABSZ, TNSZ, and TCSZ are more specific subclasses of GNSZ. Thus, in cases where there was insufficient evidence to classify the type of seizure more finely, the corpus categorises the seizure event as the more general class of FNSZ or GNSZ, depending on how and where it began in the brain.

We adopt the preprocessed version of TUSZ known as the IBM TUSZ pre-processed data (v1.0.0, method #1) [12]. This work used the temporal central parasagittal montage (TCP) [32] of 20 selected channel pairs as the input. In this preprocessing method, the authors applied the fast Fourier transform (FFT) to each fixed-length window $W_l$ ($W_l = 1$ second and $f_{max} = 24$ frequency bands) with $O$ seconds overlap ($O = 0.75\,W_l$) across all EEG recording channels, as illustrated in Fig. 1. The transformed data of all channels in one time window constitutes one data sample. Thus, the task here is to perform classification based on 1 second of EEG data. The number of data samples per seizure type corresponds to the total number of windows over all seizures across all patients.
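As a rough illustration of this windowing scheme, the sketch below splits a multichannel recording into 1-second windows with 75% overlap and keeps 24 frequency bins per channel. It is not the IBM TUSZ preprocessing code: the function name `fft_windows`, the use of the FFT magnitude, and the choice of the first 24 non-DC bins (1 to 24 Hz at 250 Hz sampling) are assumptions made for illustration only.

```python
import numpy as np

def fft_windows(eeg, fs=250, win_sec=1.0, overlap=0.75, n_bands=24):
    """Hypothetical helper: turn (n_channels, n_samples) EEG into
    (n_windows, n_channels, n_bands) spectral data samples."""
    win = int(win_sec * fs)                    # samples per window (250)
    hop = max(1, int(win * (1.0 - overlap)))   # 62 samples, about 0.25 s
    n_channels, n_samples = eeg.shape
    if n_samples < win:
        return np.empty((0, n_channels, n_bands))
    windows = []
    for start in range(0, n_samples - win + 1, hop):
        segment = eeg[:, start:start + win]
        # Magnitude spectrum per channel; with a 1 s window the bins are 1 Hz apart.
        spectrum = np.abs(np.fft.rfft(segment, axis=1))
        # Assumption: drop the DC bin and keep the next n_bands bins.
        windows.append(spectrum[:, 1:n_bands + 1])
    return np.stack(windows)

# Synthetic 4-second recording with 20 channels; channel 0 carries a 10 Hz sine.
fs = 250
t = np.arange(4 * fs) / fs
eeg = np.zeros((20, t.size))
eeg[0] = np.sin(2 * np.pi * 10 * t)
samples = fft_windows(eeg, fs=fs)
print(samples.shape)  # (13, 20, 24)
```

Each row of the result has the layout [#channels, #frequency bands] described in the text, so stacking the windows of all seizures of one type gives the per-class data samples.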
The input shape used to train and test the model to classify seizure types is defined by [#data samples, #channels, #frequency bands]. We adopt this input data to compare the performance of our framework with baseline results using traditional machine learning and deep learning techniques. Table I summarises the total number of seizures, patients and data samples available for each seizure type.

Fig. 1. Overview of the framework proposed for classifying seizure types using sequential and neural memory networks. 1. We use the TUH EEG Seizure Corpus, which contains scalp EEG data from seizure recordings, and a pre-processing strategy based on the fast Fourier transform. 2. We map each data sample with 2 stacked LSTMs as input to the memory model. 3. External memory model: The state of the memory at time instant $t-1$ is $M_{t-1}$. The input controller receives the encoded hidden states $x_t$ and determines what facts within the input data to use to query the memory ($q_t$). An attention score vector $z_t$ is used to quantify the similarity between the content stored in each slot of $M_{t-1}$ and the query vector $q_t$ to generate the input to the output controller. The output controller regulates what results from the memory stack ($c_t$) are passed out to the memory module for the current state ($m_t$). The update controller updates the memory state based on the output of the memory module and propagates it to the next time step. These controllers utilise a combination of fixed weights and plastic components. 4. The output of the memory model is fed to a dense layer with a softmax activation to predict each seizure type.

TABLE I: TOTAL COUNT FOR SEIZURES AND PATIENTS PER SEIZURE TYPE.

| Seizure Type | Seizures | Patients | Data samples |
|---|---|---|---|
| 1. Focal Non-Specific Seizure (FNSZ) | 992 | 108 | 292,725 |
| 2. Generalized Non-Specific Seizure (GNSZ) | 415 | 44 | 137,033 |
| 3. Simple Partial Seizure (SPSZ) | 44 | 2 | 6,028 |
| 4. Complex Partial Seizure (CPSZ) | 342 | 34 | 132,200 |
| 5. Absence Seizure (ABSZ) | 99 | 12 | 3,087 |
| 6. Tonic Seizure (TNSZ) | 67 | 2 | 4,888 |
| 7. Tonic Clonic Seizure (TCSZ) | 50 | 11 | 22,524 |

B. Traditional deep learning methods and baseline models

Deep learning has revolutionised many medical applications, and with the increasing availability of EEG datasets, these algorithms have been applied to quantify information regarding seizures [33], [3]. We aim to adapt well-known classical deep learning structures from related domains and evaluate them for the specific task of seizure type classification. While the objective of seizure detection is to classify the input data into two classes (a seizure class and a non-seizure class), seizure type classification aims to identify different types of epileptic seizures, i.e. it is a multi-class classification task. As such, methods initially proposed for seizure detection can be applied to the task of seizure type classification.

As it is not possible to consider all existing methods for seizure detection and classification in our study, we adopt only the most significant approaches based on their overall precision, the compatibility of their input data (e.g. pre-processing, image-based EEG) with the IBM TUSZ pre-processed data [12], and the level of documentation provided by the original authors to ensure full and correct reproducibility of each model.

1) Techniques adapted from the seizure detection domain: The following methods are adapted to the task of seizure classification and are evaluated in this paper:
• Stacked auto-encoders (SAE): SAE are an unsupervised learning technique composed of multiple sparse autoencoders [34]. An auto-encoder consists of two parts, an encoder and a decoder. The encoder is used to map the input data to a hidden representation, and the decoder is used to reconstruct the input data from the hidden representation.
SAE-based approaches have been used in [27], [35].
• Convolutional neural networks (CNNs): A CNN consists of multiple stacked layers of different types: convolutional layers, nonlinear layers, and pooling layers, followed by fully connected layers. Compared with traditional feed-forward neural networks, CNNs exploit spatial locality by enforcing local connectivity and parameter sharing [36]. The purpose of pooling is to achieve invariance to small local distortions and reduce the dimensionality of the feature space [36]. The differences between the architectures of the various proposed CNNs are due to the number of layers included in the framework and the layer parameters. Some methods fine-tune a well-known architecture such as VGG or ResNet, while others design their own deep or shallow network. Methods including [37], [38], [28], [39], [29], [30], [40] are examples of this approach.
• Recurrent neural networks (RNNs): RNNs introduce the notion of time into a deep learning model by including recurrent edges that span adjacent time steps [41]. RNNs are termed recurrent as they perform the same task for every element of a sequence, with the output being dependent on the previous computations. LSTMs [21] were proposed to provide more flexibility to RNNs by employing an external memory, termed the cell state, to deal with the vanishing gradient problem. Three logic gates are also introduced to adjust this external memory and internal memory. GRUs [22] are a variant of LSTMs which combine the forget and input gates, making the model simpler. RNNs are used by papers including [6], [31].
• Hybrid networks: Hybrid or cascaded networks such as recurrent convolutional neural networks (RCNN) are used to better exploit variable-length sequential data [42], to extract spatio-temporal features and to classify through an end-to-end deep learning model [43]. In this scenario, RCNN denotes a number of convolution layers followed by stacked recurrent units (LSTMs or GRUs).
Such methods have been proposed in [9], [10], [32].

Given the dynamic nature of EEG data, RCNNs appear to be a reasonable choice for modeling the temporal evolution of brain activity. Therefore, we have designed a shallow RCNN based on architectures used for seizure detection with video recordings as an input [33], [44]. We aim to demonstrate that shallow architectures are capable of reaching similar results to more complex traditional deep learning models. Through extensive experiments, we found that the best-performing network architecture consists of 1) CNN: two convolutional layers (32 kernels of size 3 × 3) stacked together, followed by one max-pooling layer (size 2 × 2) and a fully-connected layer (512 nodes); 2) LSTM: two LSTM layers, each with 128 cells, followed by a densely connected layer with a softmax activation.

2) Baseline methods for seizure type classification: The following methods are compared directly with the proposed approach:
• Traditional machine learning techniques: K-Nearest Neighbors, SGD, XGBoost, and AdaBoost classifiers were proposed in [12].
• Traditional CNNs: a residual network, ResNet50, was retrained to perform classification in [12]. Three pre-trained models, AlexNet, VGG16 and VGG19, were used in [14] to solve the classification problem. However, an additional class of non-seizure events was included in this publication.
• SeizureNet [13]: the authors proposed two sub-networks, a deep convolutional network (multiple bottleneck convolutions interconnected through dense connections) and a classification network.

C. Neural memory networks and neural plasticity

The design of the network architecture for the task of seizure type classification from EEG recordings is displayed in Fig. 1.
This approach aims to update the external memory model (a memory stack for information storage) with new information from each data sample, and as such the memory learns to store distinctive characteristics of each seizure type across patients. First, for modelling short-term relationships within the data sample, we use LSTMs. To extract the relevant attributes through long-term dependencies (across seizures and patients), we employ the proposed neural memory architecture. The seizure classification output is generated using a dense layer with softmax classification.

The neural memory architecture is composed of a memory stack $M$, with $l$ memory slots each with an embedding size $k$ ($M \in \mathbb{R}^{l \times k}$), and its respective input, output and update controllers. Each of these controllers is composed of an LSTM cell following [18], [20]. The input controller receives the encoded hidden state from the stacked LSTMs, $x_t$, at time instant $t$ and generates a vector, $q_t$, to retrieve the salient information from the stored knowledge in the memory. We generate an attention score vector $z_t$ to quantify the similarity between $q_t$ and the content of each slot of $M_{t-1}$. Then, the output controller can retrieve the memory output, $m_t$, for the current state. We pass this resultant embedding through an update controller to generate an updated vector $m'_t$, which is used to update the memory and propagate it to the next time step. We update the content of each memory slot based on the informativeness reflected in the score vector [25]. We define the input, output and update operations such that,

$$q_t = f^{LSTM}_{input}(x_t), \tag{1}$$

$$z_t = \mathrm{softmax}\left(q_t^{T} M_{t-1}\right), \tag{2}$$

$$m_t = z_t M_{t-1}, \tag{3}$$

$$m'_t = f^{LSTM}_{update}(m_t), \tag{4}$$

$$M_t = M_{t-1}\left(I - (z_t \otimes e_k)^{T}\right) + (m'_t \otimes e_l)(z_t \otimes e_k)^{T}, \tag{5}$$

where $I$ is a matrix of ones, $e_l$ and $e_k$ are vectors of ones, and $\otimes$ denotes the outer vector product, which duplicates its left vector $l$ or $k$ times to form a matrix.
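The read and update operations in Eqs. (1)-(5) can be sketched in NumPy as below. This is a simplified illustration under stated assumptions, not the authors' implementation: the LSTM controllers are replaced by hypothetical single-layer tanh maps (`W_in`, `W_up`), the memory is stored with one slot per row (shape $l \times k$), and the products in Eq. (5) are read as element-wise, so that each slot is overwritten in proportion to its attention score.

```python
import numpy as np

def softmax(v):
    e = np.exp(v - np.max(v))   # subtract the max for numerical stability
    return e / e.sum()

def memory_step(M_prev, x_t, W_in, W_up):
    """One read/update step on a memory of shape (l, k).
    W_in and W_up are stand-ins for the LSTM controllers (assumption)."""
    l, k = M_prev.shape
    q_t = np.tanh(W_in @ x_t)            # Eq. (1): query vector, shape (k,)
    z_t = softmax(M_prev @ q_t)          # Eq. (2): attention over the l slots
    m_t = z_t @ M_prev                   # Eq. (3): attention-weighted read, shape (k,)
    m_new = np.tanh(W_up @ m_t)          # Eq. (4): vector written back to memory
    Z = np.outer(z_t, np.ones(k))        # z_t duplicated k times, shape (l, k)
    W = np.outer(np.ones(l), m_new)      # m'_t duplicated l times, shape (l, k)
    M_t = M_prev * (1.0 - Z) + W * Z     # Eq. (5), element-wise reading (assumption)
    return m_t, M_t, z_t

# Toy dimensions taken from the reported hyper-parameters (l = 25, k = 80).
rng = np.random.default_rng(0)
l, k = 25, 80
M = rng.normal(scale=0.1, size=(l, k))
W_in = rng.normal(scale=0.1, size=(k, k))
W_up = rng.normal(scale=0.1, size=(k, k))
x = rng.normal(size=k)
m_t, M_next, z_t = memory_step(M, x, W_in, W_up)
```

Under this reading, a slot with attention score close to 1 is almost fully replaced by the new vector, while slots with scores near 0 are left nearly unchanged.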
Ideally, we expect that the memory output $m_t$ should capture salient information from both the input and the stored history that can be used to estimate each type of seizure.

Inspired by the success of [24] in demonstrating how neural plasticity can be optimized by gradient descent in recurrent networks, we adopt neural plasticity to enhance the memory access mechanisms in the memory model.

To inject plasticity into the memory components, we adopt the formulation of the Hebbian rule for its flexibility and simplicity ("neurons that fire together, wire together") [24]. We define a fixed component (a traditional connection weight $w$) and a plastic component for each pair of neurons $i$ and $j$, where the plastic component is stored in a Hebbian trace, Hebb, which evolves over time based on the inputs and outputs. The Hebbian trace is simply an average of the product of pre- and post-synaptic activity. Thus, the network equations for the output $x_t$ of neuron $j$ are:

$$x^{j}_{t} = \tanh\Big(\sum_{i \in inputs}\big[w_{i,j}\,x^{i}_{t-1} + \alpha_{i,j}\,\mathrm{Hebb}^{i,j}_{t}\,x^{i}_{t-1}\big]\Big), \tag{6}$$

$$\mathrm{Hebb}^{i,j}_{t+1} = \mathrm{Hebb}^{i,j}_{t} + \eta\,x^{j}_{t}\big(x^{i}_{t-1} - x^{j}_{t}\,\mathrm{Hebb}^{i,j}_{t}\big). \tag{7}$$

Here $\alpha$ controls the contribution from the fixed and plastic terms of a particular weight connection, and $\eta$ is the learning rate of the plastic components. Thus, we replace the components of the controllers to produce a plastic neural memory such that,

$$q_t = \tanh\Big(\sum_{\forall i,j \in k}\big[\dot{w}_{i,j}\,x^{i}_{t-1} + \dot{\alpha}_{i,j}\,\dot{\mathrm{Hebb}}^{i,j}_{t}\,x^{i}_{t-1}\big]\Big), \tag{8}$$

$$c_t = z_t M_{t-1}, \tag{9}$$

$$m_t = \tanh\Big(\sum_{\forall i,j \in k}\big[\hat{w}_{i,j}\,x^{i}_{t-1} + \hat{\alpha}_{i,j}\,\widehat{\mathrm{Hebb}}^{i,j}_{t}\,c^{i}_{t-1}\big]\Big), \tag{10}$$

$$m'_t = \tanh\Big(\sum_{\forall i,j \in k}\big[\tilde{w}_{i,j}\,x^{i}_{t-1} + \tilde{\alpha}_{i,j}\,\widetilde{\mathrm{Hebb}}^{i,j}_{t}\,m^{i}_{t-1}\big]\Big). \tag{11}$$

Further technical information on neural memory networks and plasticity can be found in [18], [19], [20], [25].

III. EVALUATION

A. Experimental setup

All models were assessed through a 5-fold cross validation (CV) strategy to ensure that the data for hyperparameter tuning and the data to test the algorithm were disjoint. For each fold, the data samples of each seizure type are randomly split into 60% for training, 20% for validation and 20% for test. We used a weighted F1 score to measure performance, as this is a multiclass classification task with a highly uneven class distribution. The weighted F1 score is calculated as follows,

$$\text{Weighted } F_1 = \sum_{i=1}^{7} \frac{2 \times \text{precision}_i \times \text{recall}_i}{\text{precision}_i + \text{recall}_i} \times w_i, \tag{12}$$

where $w_i$ is the weight of the $i$-th class, which depends on the number of positive examples in that class.

For each traditional deep learning model adapted for the task of seizure type classification, we follow the specifications provided by each author to train the architecture by optimizing the categorical cross-entropy loss. Our proposed shallow RCNN model was also trained by optimizing the categorical cross-entropy loss. We used the Adam optimizer [45] with a learning rate of $10^{-3}$ and decay rates for the first and second moments of 0.9 and 0.999, respectively. For regularization, we employed dropout with a probability of 50% in the fully connected layer. The batch size was set to 32. We trained the model over 150 epochs using the default initialization parameters from Keras [46].

For the proposed plastic NMN model, we also adopt the Adam optimizer and categorical cross-entropy loss, and train for 50 epochs. The hyper-parameters $k = 80$ (hidden state dimension), $l = 25$ (memory length), and $\eta = 0.5$ (learning rate of plasticity) were evaluated experimentally, and were chosen as they provide the best accuracy on the validation set.

B. Classification of seizure type

Table II summarizes the results of seizure type classification on the TUH EEG Seizure Corpus with the IBM TUSZ pre-processed data using our proposed framework, along with the baseline methods and the adapted methodologies from the seizure detection domain. The proposed external memory model, with read and write mechanisms augmented by plasticity, achieves the best classification results.

Fig. 2 shows the normalized confusion matrices of the seven types of seizure for the proposed Plastic NMN method. From these, we can identify that the most difficult seizure types to discriminate are those which have a small number of seizure recordings available for training and testing (i.e. SPSZ, ABSZ, TNSZ and TCSZ).

To qualitatively illustrate the significance of the salient information and what the model has learned in terms of the model activations, we randomly sample 500 inputs from the test set, apply PCA [47] and plot the top two components in 2D. The embeddings are extracted from the last LSTM layer in the RCNN model and from the external memory in the plastic NMN. Fig. 3 and Fig. 4 depict the resultant plots, where each seizure type is indicated based on the ground truth class identity. We observe clearer separation between the seven types of seizure using the memory embeddings than with the features learnt by our proposed RCNN model or SeizureNet [13]. This demonstrates that the resultant sparse vectors are sufficient to discriminate between classes with simple classifiers.
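The projection step used for this qualitative comparison can be sketched as follows. This is a minimal illustration, assuming the embeddings are available as a NumPy array of shape [#samples, #features]; `pca_2d` is a hypothetical helper, and the random array (500 samples, 80 features, borrowing the reported embedding size $k = 80$) only stands in for real LSTM or memory embeddings.

```python
import numpy as np

def pca_2d(embeddings):
    """Project embeddings onto their top two principal components."""
    X = embeddings - embeddings.mean(axis=0, keepdims=True)   # centre each feature
    # Rows of Vt are the principal directions, ordered by decreasing singular value.
    _, _, Vt = np.linalg.svd(X, full_matrices=False)
    return X @ Vt[:2].T

# Placeholder data: 500 sampled test inputs with 80-dimensional embeddings.
rng = np.random.default_rng(0)
emb = rng.normal(size=(500, 80))
proj = pca_2d(emb)   # shape (500, 2); one 2D point per sampled input
```

Plotting `proj[:, 0]` against `proj[:, 1]`, coloured by the ground truth class, gives the kind of 2D view shown in Fig. 3 and Fig. 4.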
TABLE II: CROSS-VALIDATION PERFORMANCE OF CLASSIFYING SEIZURE TYPE

| Group | Method | Weighted-F1 score |
|---|---|---|
| Baseline methods | Adaboost [12] | 0.509 |
| Baseline methods | SGD [12] | 0.649 |
| Baseline methods | XGBoost [12] | 0.782 |
| Baseline methods | KNN [12] | 0.884 |
| Baseline methods | CNN (ResNet50) [12] | 0.723 |
| Baseline methods | CNN (AlexNet) [14] | 0.802 |
| Baseline methods | SeizureNet [13] | 0.900 |
| Baseline from adapted methods | SAE (based on [27]) | 0.675 |
| Baseline from adapted methods | CNN (based on [28]) | 0.716 |
| Baseline from adapted methods | CNN (based on [29]) | 0.826 |
| Baseline from adapted methods | CNN (based on [30]) | 0.901 |
| Baseline from adapted methods | LSTM (based on [6]) | 0.692 |
| Baseline from adapted methods | LSTM (based on [31]) | 0.701 |
| Baseline from adapted methods | CNN-LSTM (based on [9]) | 0.795 |
| Baseline from adapted methods | CNN-LSTM (based on [10]) | 0.831 |
| Baseline from adapted methods | CNN-LSTM (this work) | 0.824 |
| Proposed framework | Plastic NMN (this work) | 0.945 |

Fig. 2. Normalized confusion matrices for seizure type classification on the TUH EEG Seizure Corpus for the proposed Plastic NMN model.

C. Discussion

Several studies have demonstrated that machine learning models, specifically deep learning networks, can successfully detect and/or predict the onset of seizures from scalp and intracranial EEG. Although such models may be useful in identifying biomarkers of an existing epileptic condition, they are rarely of use for discriminating between different types of seizures. In this paper, we have evaluated traditional deep learning methods proposed in the epilepsy domain for cross-patient seizure type classification, and we have improved on existing reported results by presenting a neural memory network based framework.

We note that RCNNs have reached better performance than models based on CNNs or RNNs alone for the task of seizure type classification, which is similar to their relative performance reported for the seizure detection task. Given the inherent temporal structure of EEGs, we expected that recurrent networks would be more widely employed than models that do not consider time dependencies. However, almost half of the models proposed in the epilepsy domain have used CNNs.
This observation supports recent discussions regarding the effectiveness of CNNs for processing time series data [48]. Another finding of our study of baseline models is that the proposed shallow RCNN performed as well as deep CNN models. This supports other research that has preferred shallow networks for analysing EEG data. Schirrmeister et al. [49] focused on this aspect, comparing the performance of architectures with different depths and structures. The authors showed that shallower fully convolutional models outperformed their deeper counterparts. However, we note that hyperparameter tuning of baseline models may be key to using deeper architectures with physiological recordings.

The potential of recurrent neural networks to handle sequence information was evident in the experimental results. However, it is essential to consider historic behaviour over the full length of seizures, and to map long-term dependencies between seizures, to generate more precise classification. The process of capturing seizure behaviour is highly complex because of the increased heterogeneity of participants and the temporal evolution during epileptic seizures. Analyzing dynamic changes during a seizure is a major aspect of epilepsy patient assessment. Even though an RNN model has the ability to capture temporal information, it considers only the relationships within the current sequence due to its internal memory structure, making accurate long-term prediction intractable. RNNs such as LSTMs or GRUs exhibit one common limitation related to their storage capacity, because their internal state is modified, heavily or slightly, at each computation step.
By incorporating a neural memory network, we are able to increase the model's storage capacity without having to increase the size of the model, as demonstrated by [18], who compared neural memory networks, which map long-term dependencies among the stored facts, with LSTMs, which map dependencies within the input sequence. The memory network proposed in this paper is capable of capturing both short-term (within each data sample) and long-term (across the entire collection of data samples) relationships to predict a seizure type (i.e. working memory and long-term memory, respectively). Therefore, our proposed system eliminates the deficiencies of current baseline models in epilepsy classification, which only consider within-sequence relationships.

An additional benefit of the implemented memory network is that we have introduced the concept of synaptic plasticity through the read and write operations of the learnable controllers. We apply local plasticity rules (Hebbian trace) to update feed-forward synaptic weights following feedback projection. The plastic nature of the memory access mechanisms in the neural memory model allows our system to provide a varying level of attention to the stored information, i.e. the plastic network acts as a content-addressable memory.

To allow comparison with baseline methods [12], [13], [14], we defined the classification task here similarly: to separate seven classes of seizure labeled in the Corpus. As noted above, these classes are not actually clinically disjoint, but form a hierarchy. This semantic structure is not exploited by the present method. Where, for example, in Fig. 4 more specific seizure classes such as ABSZ are readily separated from the more general class GNSZ, this may indicate overfitting due to the small number of distinct patients for some seizure classes in the Corpus. This shows the value of continuing to expand the seizure corpus with more patients for future work.

Fig. 3. 2D illustration of extracted embeddings from the CNN-LSTM model for 500 randomly selected samples from the test set.

Fig. 4. 2D illustration of extracted memory embeddings from the Plastic NMN for 500 randomly selected samples from the test set.

IV. CONCLUSIONS

This paper presents a deep learning based framework which consists of a neural memory network with neural plasticity for EEG-based seizure type classification. A brief overview of commonly used deep learning approaches in the epilepsy domain is also presented. The proposed approach is capable of modelling long-term relationships, which enables the model to learn rich and highly discriminative features for seizure type classification. With increasing computational capabilities and the collection of larger datasets, clinicians and researchers will increasingly benefit from the significant progress already made in the application of deep learning to epilepsy. An accurate classification of seizures, along with neuroimaging and behavioural analysis, is one step towards more accurate prognosis. In future, we plan to investigate the introduction of the memory component to map relationships directly from raw intracranial EEG recordings without a preprocessing phase, i.e. without extracting information contained in the frequency transform of the time-series EEG.

REFERENCES

[1] R. S. Fisher, J. H. Cross, J. A. French, N. Higurashi, E. Hirsch, F. E. Jansen, L. Lagae, S. L. Moshé, J. Peltola, E. Roulet Perez et al., “Operational classification of seizure types by the International League Against Epilepsy: Position paper of the ILAE Commission for Classification and Terminology,” Epilepsia, vol. 58, no. 4, pp. 522–530, 2017.
[2] O. Faust, Y. Hagiwara, T. J. Hong, O. S. Lih, and U. R. Acharya, “Deep learning for healthcare applications based on physiological signals: A review,” Computer methods and programs in biomedicine, vol. 161, pp. 1–13, 2018.
[3] D. Ahmedt-Aristizabal, C. Fookes, S. Dionisio, K. Nguyen, J. P. S. Cunha, and S. Sridharan, “Automated analysis of seizure semiology and brain electrical activity in presurgery evaluation of epilepsy: A focused survey,” Epilepsia, vol. 58, no. 11, pp. 1817–1831, 2017.
[4] A. Craik, Y. He, and J. L. P. Contreras-Vidal, “Deep learning for electroencephalogram (EEG) classification tasks: A review,” Journal of neural engineering, 2019.
[5] M. Zabihi, S. Kiranyaz, V. Jantti, T. Lipping, and M. Gabbouj, “Patient-specific seizure detection using nonlinear dynamics and nullclines,” IEEE journal of biomedical and health informatics, 2019.
[6] D. Ahmedt-Aristizabal, C. Fookes, K. Nguyen, and S. Sridharan, “Deep classification of epileptic signals,” in EMBC, 2018, pp. 332–335.
[7] L. Kuhlmann, K. Lehnertz, M. P. Richardson, B. Schelter, and H. P. Zaveri, “Seizure prediction - ready for a new era,” Nature Reviews Neurology, p. 1, 2018.
[8] Y. Zhang, Y. Guo, P. Yang, W. Chen, and B. Lo, “Epilepsy seizure prediction on EEG using common spatial pattern and convolutional neural network,” IEEE Journal of Biomedical and Health Informatics, 2019.
[9] P. Thodoroff, J. Pineau, and A. Lim, “Learning robust features using deep learning for automatic seizure detection,” in MLHC, 2016, pp. 178–190.
[10] M. Golmohammadi, S. Ziyabari, V. Shah, E. Von Weltin, C. Campbell, I. Obeid, and J. Picone, “Gated recurrent networks for seizure detection,” in SPMB, 2017, pp. 1–5.
[11] V. Shah, E. Von Weltin, S. Lopez de Diego, J. R. McHugh, L. Veloso, M. Golmohammadi, I. Obeid, and J. Picone, “The Temple University Hospital seizure detection corpus,” Frontiers in Neuroinformatics, vol. 12, p. 83, 2018.
[12] S. Roy, U. Asif, J. Tang, and S. Harrer, “Machine learning for seizure type classification: Setting the benchmark,” arXiv preprint arXiv:1902.01012, 2019.
[13] U. Asif, S. Roy, J. Tang, and S. Harrer, “SeizureNet: A deep convolutional neural network for accurate seizure type classification and seizure detection,” arXiv preprint arXiv:1903.03232, 2019.
[14] N. Sriraam, Y. Temel, S. V. Rao, P. L. Kubben et al., “A convolutional neural network based framework for classification of seizure types,” in EMBC, 2019, pp. 2547–2550.
[15] I. R. D. Saputro, N. D. Maryati, S. R. Solihati, I. Wijayanto, S. Hadiyoso, and R. Patmasari, “Seizure type classification on EEG signal using support vector machine,” in Journal of Physics: Conference Series, vol. 1201, no. 1, 2019, p. 012065.
[16] I. R. D. Saputro, R. Patmasari, and S. Hadiyoso, “Tonic clonic seizure classification based on EEG signal using artificial neural network method,” in SOFTT, no. 2, 2018.
[17] X. Song, L. Aguilar, A. Herb, and S.-c. Yoon, “Dynamic modeling and classification of epileptic EEG data,” in NER, 2019, pp. 49–52.
[18] T. Munkhdalai and H. Yu, “Neural semantic encoders,” in Proceedings of the conference. Association for Computational Linguistics. Meeting, vol. 1. NIH Public Access, 2017, p. 397.
[19] T. Fernando, S. Denman, S. Sridharan, and C. Fookes, “Task specific visual saliency prediction with memory augmented conditional generative adversarial networks,” in WACV, 2018, pp. 1539–1548.
[20] T. Fernando, S. Denman, A. McFadyen, S. Sridharan, and C. Fookes, “Tree memory networks for modelling long-term temporal dependencies,” Neurocomputing, vol. 304, pp. 64–81, 2018.
[21] K. Greff, R. K. Srivastava, J. Koutník, B. R. Steunebrink, and J. Schmidhuber, “LSTM: A search space odyssey,” IEEE transactions on neural networks and learning systems, vol. 28, no. 10, pp. 2222–2232, 2016.
[22] K. Cho, B. Van Merriënboer, C. Gulcehre, D. Bahdanau, F. Bougares, H. Schwenk, and Y. Bengio, “Learning phrase representations using RNN encoder-decoder for statistical machine translation,” arXiv preprint arXiv:1406.1078, 2014.
[23] Q. Chen, X. Zhu, Z. Ling, S. Wei, and H. Jiang, “Enhancing and combining sequential and tree LSTM for natural language inference,” arXiv preprint arXiv:1609.06038, 2016.
[24] T. Miconi, K. Stanley, and J. Clune, “Differentiable plasticity: training plastic neural networks with backpropagation,” in ICML, 2018, pp. 3556–3565.
[25] T. Fernando, S. Denman, D. Ahmedt-Aristizabal, S. Sridharan, K. Laurens, P. Johnston, and C. Fookes, “Neural memory plasticity for anomaly detection,” arXiv preprint arXiv:1910.05448, 2019.
[26] C. Xiong, S. Merity, and R. Socher, “Dynamic memory networks for visual and textual question answering,” in ICML, 2016, pp. 2397–2406.
[27] Q. Lin, S.-q. Ye, X.-m. Huang, S.-y. Li, M.-z. Zhang, Y. Xue, and W.-S. Chen, “Classification of epileptic EEG signals with stacked sparse autoencoder based on deep learning,” in ICIC, 2016, pp. 802–810.
[28] U. R. Acharya, S. L. Oh, Y. Hagiwara, J. H. Tan, and H. Adeli, “Deep convolutional neural network for the automated detection and diagnosis of seizure using EEG signals,” Computers in biology and medicine, vol. 100, pp. 270–278, 2018.
[29] A. O'Shea, G. Lightbody, G. Boylan, and A. Temko, “Investigating the impact of CNN depth on neonatal seizure detection performance,” in EMBC, 2018, pp. 5862–5865.
[30] Y. Hao, H. M. Khoo, N. von Ellenrieder, N. Zazubovits, and J. Gotman, “DeepIED: An epileptic discharge detector for EEG-fMRI based on deep learning,” NeuroImage: Clinical, vol. 17, pp. 962–975, 2018.
[31] K. M. Tsiouris, V. C. Pezoulas, M. Zervakis, S. Konitsiotis, D. D. Koutsouris, and D. I. Fotiadis, “A long short-term memory deep learning network for the prediction of epileptic seizures using EEG signals,” Computers in biology and medicine, vol. 99, pp. 24–37, 2018.
[32] V. Shah, M. Golmohammadi, S. Ziyabari, E. Von Weltin, I. Obeid, and J. Picone, “Optimizing channel selection for seizure detection,” in SPMB, 2017, pp. 1–5.
[33] D. Ahmedt-Aristizabal, S. Denman, K. Nguyen, S. Sridharan, S. Dionisio, and C. Fookes, “Understanding patients' behavior: Vision-based analysis of seizure disorders,” IEEE journal of biomedical and health informatics, 2019.
[34] H. Larochelle, Y. Bengio, J. Louradour, and P. Lamblin, “Exploring strategies for training deep neural networks,” Journal of machine learning research, vol. 10, no. Jan, pp. 1–40, 2009.
[35] M. Golmohammadi, A. H. Harati Nejad Torbati, S. Lopez de Diego, I. Obeid, and J. Picone, “Automatic analysis of EEGs using big data and hybrid deep learning architectures,” Frontiers in human neuroscience, vol. 13, p. 76, 2019.
[36] Y. LeCun, Y. Bengio, and G. Hinton, “Deep learning,” Nature, vol. 521, no. 7553, pp. 436–444, 2015.
[37] A. Page, C. Shea, and T. Mohsenin, “Wearable seizure detection using convolutional neural networks with transfer learning,” in ISCAS, 2016, pp. 1086–1089.
[38] A. Antoniades, L. Spyrou, C. C. Took, and S. Sanei, “Deep learning for epileptic intracranial EEG data,” in MLSP, 2016, pp. 1–6.
[39] I. Ullah, M. Hussain, H. Aboalsamh et al., “An automated system for epilepsy detection using EEG brain signals based on deep learning approach,” Expert Systems with Applications, vol. 107, pp. 61–71, 2018.
[40] Z. Wei, J. Zou, J. Zhang, and J. Xu, “Automatic epileptic EEG detection using convolutional neural network with improvements in time-domain,” Biomedical Signal Processing and Control, vol. 53, p. 101551, 2019.
[41] Z. C. Lipton, J. Berkowitz, and C. Elkan, “A critical review of recurrent neural networks for sequence learning,” arXiv preprint arXiv:1506.00019, 2015.
[42] P. Bashivan, I. Rish, M. Yeasin, and N. Codella, “Learning representations from EEG with deep recurrent-convolutional neural networks,” arXiv preprint arXiv:1511.06448, 2015.
[43] J. Donahue, L. Anne Hendricks, S. Guadarrama, M. Rohrbach, S. Venugopalan, K. Saenko, and T. Darrell, “Long-term recurrent convolutional networks for visual recognition and description,” in CVPR, 2015, pp. 2625–2634.
[44] D. Ahmedt-Aristizabal, C. Fookes, S. Denman, K. Nguyen, S. Sridharan, and S. Dionisio, “Aberrant epileptic seizure identification: A computer vision perspective,” Seizure, vol. 65, pp. 65–71, 2019.
[45] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” arXiv preprint, 2014.
[46] F. Chollet et al., “Keras,” 2017.
[47] I. Jolliffe, Principal component analysis. Springer, 2011.
[48] S. Bai, J. Z. Kolter, and V. Koltun, “An empirical evaluation of generic convolutional and recurrent networks for sequence modeling,” arXiv preprint arXiv:1803.01271, 2018.
[49] R. T. Schirrmeister, J. T. Springenberg, L. D. J. Fiederer, M. Glasstetter, K. Eggensperger, M. Tangermann, F. Hutter, W. Burgard, and T. Ball, “Deep learning with convolutional neural networks for EEG decoding and visualization,” Human brain mapping, vol. 38, no. 11, pp. 5391–5420, 2017.
