ScoreDec: A Phase-preserving High-Fidelity Audio Codec with A Generalized Score-based Diffusion Post-filter

Although recent mainstream waveform-domain end-to-end (E2E) neural audio codecs achieve impressive coded audio quality with a very low bitrate, the quality gap between the coded and natural audio is still significant. A generative adversarial network…

Authors: Yi-Chiao Wu, Dejan Marković, Steven Krenn, Israel D. Gebru, Alexander Richard

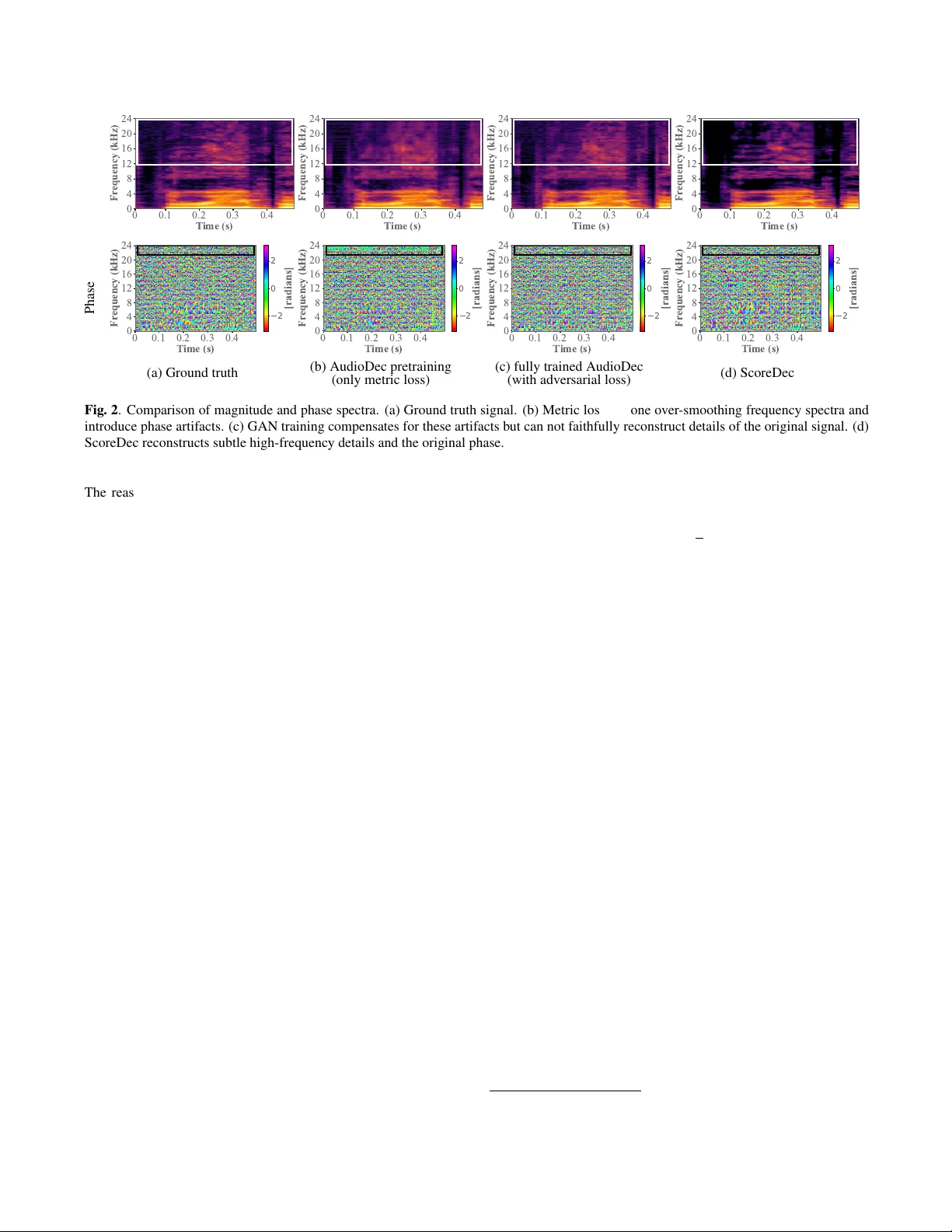

SCOREDEC: A PHASE-PRESERVING HIGH-FIDELITY AUDIO CODEC WITH A GENERALIZED SCORE-BASED DIFFUSION POST-FILTER

Yi-Chiao Wu, Dejan Marković, Steven Krenn, Israel D. Gebru, Alexander Richard

Codec Avatars Lab, Pittsburgh PA, USA

ABSTRACT

Although recent mainstream waveform-domain end-to-end (E2E) neural audio codecs achieve impressive coded audio quality with a very low bitrate, the quality gap between the coded and natural audio is still significant. Generative adversarial network (GAN) training is usually required for these E2E neural codecs because of the difficulty of direct phase modeling. However, such adversarial learning hinders these codecs from preserving the original phase information. To achieve human-level naturalness with a reasonable bitrate, preserve the original phase, and get rid of the tricky and opaque GAN training, we develop a score-based diffusion post-filter (SPF) in the complex spectral domain and combine our previous AudioDec with the SPF to propose ScoreDec, which can be trained using only spectral and score-matching losses. Both the objective and subjective experimental results show that ScoreDec with a 24 kbps bitrate encodes and decodes full-band 48 kHz speech with human-level naturalness and well-preserved phase information.

Index Terms: audio codec, phase-preserving codec, codec post-filter, score-based generative model, human-level naturalness

1. INTRODUCTION

Audio signals have a very high temporal resolution. For instance, the standard sample rate of CD stereo 16-bit audio is 44.1 kHz, requiring a 1,411 kbps bitrate for transmission and 172 KB for storing one second of audio. Audio codecs take advantage of the redundancies caused by the quasi-periodic nature of audio to produce low-bitrate and compact codes for efficient audio storage and transmission, and a decoder is needed to convert these codes back into the waveform. The development of audio codecs dates back decades.
In the early days, due to limited transmission bandwidth, most codec designs focused on low-bitrate approaches, trading off audio quality for compact codes. However, with the ever-increasing use of voice and videophone applications, as well as the distribution of audio-visual data for internet video and broadcast applications, several audio codecs and standards have been proposed with an emphasis on better audio quality. Lossless audio codecs [1, 2] with compression ratios as low as 2× and lossy audio codecs [3–5] with a compression ratio of up to 10× have been proposed based on carefully engineered design choices and handcrafted signal processing components. Although these lossy audio codecs meet the compression requirements of most current applications, their ad hoc designs and limited modeling capacity still result in a significant quality gap between natural and reconstructed audio signals.

To avoid ad hoc designs and take advantage of the powerful modeling capacity of neural networks (NNs), end-to-end (E2E) neural audio codecs [6–11] in the waveform domain have recently been intensively investigated. Although these neural codecs achieve impressive coded audio quality in very low bitrate conditions (e.g., 3–8 kbps), there are three main problems: saturation in quality, tractability, and phase preservation. Most neural codecs control the bitrate by adopting different numbers of codebooks [12] while keeping the same temporal resolution of the codes because of the fixed network architecture. As a result, these neural codecs tend to underperform the digital-signal-processing (DSP)-based codecs and quickly reach a quality saturation point when the bitrate is gradually increased to the operation bitrate of the DSP-based codecs (e.g., 24 kbps for Mono Opus [3]), which is a reasonable bitrate for most current systems.
Additionally, the neural codecs usually rely on generative adversarial network (GAN) [13] training to achieve high-fidelity audio reconstruction. However, because of the indirect objective function of fooling the discriminators and the lack of explicit phase modeling, the generators tend to generate a plausible phase instead of the original phase. Since the sound directivity and spatiality reconstructions highly depend on the multi-channel phase information, the broken phase relationships result in significant modeling errors in binaural audio and ambient sound field coding.

Based on the success of score-based diffusion generative models (SGMs) [14, 15], many score-based speech generative [16, 17] and enhancement [18–20] models have been proposed. Notably, the score-based generative model for speech enhancement (SGMSE) [18, 19] achieves very impressive performance in restoring the original phase by explicitly tackling complex spectral restoration. To take advantage of the precise phase modeling of SGMSE, we develop a score-based diffusion post-filter (SPF) in the complex spectral domain for the E2E AudioDec codec [11]. The proposed ScoreDec attains high-fidelity speech reconstruction, preserves the original phase information, and gets rid of the tricky GAN training. According to the objective and subjective experimental results, the reconstructed coded speech achieves human-level naturalness with a significantly lower waveform difference from the input natural speech. The effectiveness of the proposed SPF for the DSP-based Opus codec [3] is also evaluated to demonstrate its generality.

The main contributions of this paper are as follows: (1) We propose a score-based diffusion post-filter that significantly improves the quality of both neural [11] and DSP-based [3] audio codecs; (2) The whole system can be trained with only metric losses in an interpretable manner, and the tricky adversarial training is not required; (3) ScoreDec preserves phase information well, resulting in highly accurate reconstruction of the original waveform.

2. BACKGROUND

In this section, the neural audio codec and score-based generative model backbones of the proposed ScoreDec are briefly introduced.

Fig. 1. Comparison between symmetric AudioDec and ScoreDec. [Figure: block diagrams. Panels: AE Pre-training (training w/ only metric loss(es)); Symmetric AudioDec (training w/ metric and adversarial losses, with MSDs + MPDs); ScoreDec (SPF, training w/ score-matching loss). Blocks: Encoder, Projector, Quantizer, code, Decoder. Legend: Training, Fixed.]

2.1. AudioDec

As shown in Fig. 1, AudioDec [11] is a classical neural codec composed of an encoder, quantizer, and decoder, which are trained in an E2E manner in the waveform domain. The main differences between AudioDec and other E2E neural codecs are the adopted efficient training paradigm and the modularized architecture. Specifically, the essential GAN training is the most computation-consuming process, but the GAN training mostly affects the decoder in fine-tuning the waveform details. To improve the training efficiency, AudioDec adopts a two-stage training. In the first stage, the whole autoencoder is trained using only metric losses such as a mel loss to make training fast.
In the second stage, only the decoder and the multi-scale and multi-period discriminators (MSDs [21] and MPDs [22]) are trained while the encoder is fixed. The modularized architecture also enables exchanging the lightweight AudioDec decoder with a powerful vocoder such as HiFi-GAN [22] with only decoder-side fine-tuning. In this paper, symmetric AudioDec denotes the model with a symmetric encoder-decoder architecture while AudioDec denotes the encoder-vocoder version as proposed in the original paper [11].

2.2. Score-based Generative Model for Speech Enhancement

The SGMSE [18, 19] includes a forward process that transfers the unknown clean speech distribution into a simple normal distribution and a reverse process that generates the estimated clean speech by sampling from the tractable distribution. Specifically, given a clean speech $\mathbf{x}_0$ as the initial state, the corresponding noisy speech $\mathbf{y}$, a diffusion time step $t \in [0, T]$, and a standard Wiener process $\mathbf{w}$, the stochastic forward process of the SGMSE is defined by an Ornstein-Uhlenbeck variance exploding (OUVE) stochastic differential equation (SDE) [23] as

$$\mathrm{d}\mathbf{x}_t = \underbrace{\gamma(\mathbf{y} - \mathbf{x}_t)}_{:= f(\mathbf{x}_t, \mathbf{y})}\,\mathrm{d}t + \underbrace{\left[\sigma_{\min}\left(\frac{\sigma_{\max}}{\sigma_{\min}}\right)^{t}\sqrt{2\log\left(\frac{\sigma_{\max}}{\sigma_{\min}}\right)}\right]}_{:= g(t)}\,\mathrm{d}\mathbf{w}, \tag{1}$$

where $f(\mathbf{x}_t, \mathbf{y})$ is the drift function, $g(t)$ is the diffusion coefficient, and $\gamma$ and $(\sigma_{\min}, \sigma_{\max})$ are constant hyperparameters that respectively control the stiffness and the amount of Gaussian noise injected at each time step. Furthermore, the corresponding reverse SDE [15, 24] is formulated as

$$\mathrm{d}\mathbf{x}_t = \left[-f(\mathbf{x}_t, \mathbf{y}) + g(t)^2 \nabla_{\mathbf{x}_t} \log p_t(\mathbf{x}_t)\right]\mathrm{d}t + g(t)\,\mathrm{d}\bar{\mathbf{w}}. \tag{2}$$

The gradient of the log-density of $\mathbf{x}_t$, $\nabla_{\mathbf{x}_t} \log p_t(\mathbf{x}_t)$, is called the score function, and $\bar{\mathbf{w}}$ is the time-reversed Wiener process. Because Eq. 1 describes a Gaussian process, the distribution of the state $\mathbf{x}_t$ is a normal distribution whose mean has a closed-form solution as

$$\boldsymbol{\mu}(\mathbf{x}_0, \mathbf{y}, t) = e^{-\gamma t}\,\mathbf{x}_0 + (1 - e^{-\gamma t})\,\mathbf{y}, \tag{3}$$

and the variance also has a closed-form solution as

$$\sigma(t)^2 = \frac{\sigma_{\min}^2\left(\left(\frac{\sigma_{\max}}{\sigma_{\min}}\right)^{2t} - e^{-2\gamma t}\right)\log\left(\frac{\sigma_{\max}}{\sigma_{\min}}\right)}{\gamma + \log\left(\frac{\sigma_{\max}}{\sigma_{\min}}\right)}. \tag{4}$$

As a result, the corresponding score function can be formulated as

$$\nabla_{\mathbf{x}_t} \log p_t(\mathbf{x}_t \mid \mathbf{x}_0, \mathbf{y}) = -\frac{\mathbf{x}_t - \boldsymbol{\mu}(\mathbf{x}_0, \mathbf{y}, t)}{\sigma(t)^2}. \tag{5}$$

Although an arbitrary $\mathbf{x}_t$ is available from the given clean-noisy pair $(\mathbf{x}_0, \mathbf{y})$ in the training stage, the clean speech $\mathbf{x}_0$ is unknown in the inference stage. To estimate the clean speech from the noisy speech using the reverse process, SGMSE adopts a neural network as the score estimator during inference. Specifically, given a sampled Gaussian noise $\mathbf{z}$, the state $\mathbf{x}_t$ can be computed by

$$\mathbf{x}_t = \boldsymbol{\mu}(\mathbf{x}_0, \mathbf{y}, t) + \sigma(t)\,\mathbf{z}. \tag{6}$$

By substituting Eq. 6 into Eq. 5, the score estimator $s_\theta$ can be trained by the score-matching [25] objective function

$$\arg\min_{\theta}\; \mathbb{E}_{\mathbf{x}_t \mid (\mathbf{x}_0, \mathbf{y}),\, \mathbf{y},\, \mathbf{z},\, t}\left[\left\lVert s_\theta(\mathbf{x}_t, \mathbf{y}, t) + \frac{\mathbf{z}}{\sigma(t)}\right\rVert_2^2\right]. \tag{7}$$

The central difference between SGMSE and other conditional SGMs [16, 17, 20] is the direct incorporation of the speech generative task into the forward and reverse processes.

3. METHOD

In this section, we first try to answer three related questions: Why is explicit phase modeling difficult? Why is GAN training essential for the current E2E neural codecs? And why is GAN training not preferred? Subsequently, we define the problem tackled in this paper and introduce the proposed method.

3.1. Problems of GAN-based E2E Neural Codec

The difficulties of explicit phase modeling are mainly rooted in phase being a white-noise-like signal with a principal value interval $(-\pi, \pi]$.
The lack of significant patterns makes phase modeling difficult, and the phase prediction error in angular space has to be formulated in a complicated form to account for phase wrapping [26],

$$\min\{\,|\hat{p} - p|,\; 2\pi - |\hat{p} - p|\,\}. \tag{8}$$

As a result, most current neural codecs adopt only magnitude spectral losses [11] or losses that tackle the phase implicitly, such as multi-resolution spectral [9] and waveform losses [10], and let the GAN training teach the model to learn the magnitude and phase consistency. Since these losses focus mostly on the signal envelope, a model trained with only these losses suffers from over-smoothing and high-frequency aliasing problems. As Fig. 2-(b) illustrates, the AudioDec trained with only the mel spectral loss misses subtle details and generates some undesired details such as high-frequency carrier waves, resulting in buzzy sounds. This answers our second question: GAN training is required since these defects can be easily detected by the discriminators, ensuring that the model still generates high-fidelity speech with a reasonable phase (see Fig. 2-(c)).

Fig. 2. Comparison of magnitude and phase spectra. (a) Ground truth signal. (b) Metric losses alone over-smooth the frequency spectra and introduce phase artifacts. (c) GAN training compensates for these artifacts but cannot faithfully reconstruct details of the original signal. (d) ScoreDec reconstructs subtle high-frequency details and the original phase. [Figure: magnitude and phase spectrograms in four panels: (a) Ground truth, (b) AudioDec pretraining (only metric loss), (c) fully trained AudioDec (with adversarial loss), (d) ScoreDec.]

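The wrapped phase error of Eq. 8 can be illustrated with a few lines of NumPy. This is a minimal sketch rather than the loss implementation used in the experiments, and the function name `wrapped_phase_error` is hypothetical.

```python
import numpy as np


def wrapped_phase_error(p_hat, p):
    """Eq. 8: min(|p_hat - p|, 2*pi - |p_hat - p|) for phases in (-pi, pi]."""
    diff = np.abs(np.asarray(p_hat, dtype=float) - np.asarray(p, dtype=float))
    return np.minimum(diff, 2.0 * np.pi - diff)


# Two phases on either side of the wrap point are close on the circle,
# although their plain absolute difference is almost 2*pi.
near_wrap = wrapped_phase_error(np.pi - 0.1, -np.pi + 0.1)
plain_diff = abs((np.pi - 0.1) - (-np.pi + 0.1))

# Away from the wrap point the two measures coincide.
no_wrap = wrapped_phase_error(0.3, 0.1)
```

The example shows why a plain L1 distance on phase values is misleading near ±π and why the error needs the piecewise form of Eq. 8.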
The reason such training is not preferable (question three) is that the fuzzy training objective of the model (i.e., maximizing the probability that the discriminators classify the generated speech as natural speech) gives no guarantee of preserving the original phase. Moreover, the obscurity of the tricky GAN training hinders the model's capacity to faithfully reconstruct the input speech, particularly the subtle frequency details and the original phase.

3.2. Architecture Overview

The comparison of the original and predicted spectra in Fig. 2 shows that the generated speech of AudioDec without the GAN training ($\mathrm{AD}_{\mathrm{stage1}}$) has an over-smoothed magnitude spectrum corrupted by high-frequency noise and a distorted phase spectrum, which is similar to a classical speech enhancement problem. Therefore, adopting SGMSE as a post-filter to enhance both the real and imaginary spectra of the $\mathrm{AD}_{\mathrm{stage1}}$ coded speech, which have clear patterns, is a reasonable solution to avoid the tricky GAN training and challenging direct phase modeling while restoring the original phase.

The proposed ScoreDec is composed of the symmetric neural audio codec AudioDec [11] and a score-based diffusion post-filter (SPF), which are separately trained using the mel loss and the score-matching loss, respectively. The symmetric AudioDec encodes speech into low-bitrate discrete codes and decodes the preliminary speech for the following SPF processing to generate the final speech. Specifically, a symmetric AudioDec is first trained using only the mel loss (i.e., the 1st stage of AudioDec) as shown in Fig. 1. Then, given the paired input waveform $\mathbf{x}_w$ as the clean speech and the $\mathrm{AD}_{\mathrm{stage1}}$ coded waveform $\hat{\mathbf{x}}_w$ as the noisy speech, the proposed SPF can be trained using the score-matching objective from Eq. 7.

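As an illustration of this training objective, the sketch below draws a perturbed state with Eqs. 3, 4, and 6 and evaluates the loss of Eq. 7 for one example. It is a minimal NumPy sketch under stated assumptions, not the authors' implementation: the function names are hypothetical, random arrays stand in for the clean and coded spectra (with real and imaginary parts as two channels), and the closed-form score of Eq. 5 stands in for the neural score estimator.

```python
import numpy as np

# OUVE SDE hyperparameters as reported in Sec. 4.1 of this paper.
SIGMA_MIN, SIGMA_MAX, GAMMA = 0.05, 0.5, 1.5


def kernel_mean(x0, y, t):
    """Eq. 3: mean of x_t, moving from x0 (t = 0) towards y."""
    w = np.exp(-GAMMA * t)
    return w * x0 + (1.0 - w) * y


def kernel_std(t):
    """Square root of Eq. 4: standard deviation of x_t."""
    log_ratio = np.log(SIGMA_MAX / SIGMA_MIN)
    growth = (SIGMA_MAX / SIGMA_MIN) ** (2.0 * t) - np.exp(-2.0 * GAMMA * t)
    variance = SIGMA_MIN**2 * growth * log_ratio / (GAMMA + log_ratio)
    return np.sqrt(variance)


def score_matching_loss(score_fn, x0, y, t, z):
    """Eq. 7 for a single example: || s(x_t, y, t) + z / sigma(t) ||^2."""
    sigma = kernel_std(t)
    x_t = kernel_mean(x0, y, t) + sigma * z  # Eq. 6
    residual = score_fn(x_t, y, t) + z / sigma
    return float(np.sum(residual**2))


rng = np.random.default_rng(0)
x0 = rng.standard_normal((2, 256, 8))         # stand-in clean spectrum
y = x0 + 0.1 * rng.standard_normal(x0.shape)  # stand-in coded spectrum
t = rng.uniform(0.03, 1.0)                    # time-step range from Sec. 4.1
z = rng.standard_normal(x0.shape)


def oracle_score(x_t, y_cond, t_cond):
    """Closed-form score of Eq. 5; only computable when x0 is known."""
    return -(x_t - kernel_mean(x0, y_cond, t_cond)) / kernel_std(t_cond) ** 2


oracle_loss = score_matching_loss(oracle_score, x0, y, t, z)
zero_loss = score_matching_loss(lambda x_t, y_c, t_c: np.zeros_like(x_t), x0, y, t, z)
```

The oracle score drives the loss to numerical zero, which is the behavior the trained estimator $s_\theta$ is meant to approximate without access to $\mathbf{x}_0$; an uninformative estimator that always returns zero leaves a large residual.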
To train the SPF in the complex spectral domain, the waveform signals $\mathbf{x}_w$ and $\hat{\mathbf{x}}_w$ are first transformed into complex spectra $\mathbf{x}_c$ and $\hat{\mathbf{x}}_c$ using a short-time Fourier transform (STFT). Following the data representation in [18, 19] to avoid the model being dominated by only the high-energy components, an amplitude modulation

$$\mathbf{x}_a = \beta\,|\mathbf{x}_c|^{\alpha}\, e^{i\angle(\mathbf{x}_c)} \tag{9}$$

is applied to both $\mathbf{x}_c$ and $\hat{\mathbf{x}}_c$, where $\angle(\cdot)$ denotes the angle of a complex number, $\alpha \in (0, 1]$ is an amplitude companding constant, and $\beta$ is the scaling constant that normalizes the final amplitudes roughly within $[0, 1]$. The corresponding demodulation

$$\mathbf{x}_c = \beta^{-1}\,|\mathbf{x}_a|^{\frac{1}{\alpha}}\, e^{i\angle(\mathbf{x}_a)} \tag{10}$$

is applied to the enhanced complex spectra before the inverse STFT.

4. EXPERIMENTS

4.1. Experimental Setting

This paper focuses on speech modeling because speech makes up the majority of human communication. Specifically, the codecs were evaluated on the full-band 48 kHz VCTK [27]-derived Valentini [28] dataset consisting of 84 gender-balanced English speakers for training and two speakers (female p257 and male p232) for testing. Each speaker has around 400 utterances, and the utterance lengths vary from 1 to 16 s.

The symmetric AudioDec (symAD) and AudioDec [11] codecs were adopted as the baselines. The proposed ScoreDec and symAD share the pre-trained autoencoder (AE) as shown in Fig. 1, while the symAD decoder was further trained with the GAN training. ScoreDec, in contrast, uses the score-based post-filter (SPF). The vocoder-based AudioDec codec, which replaces the symAD decoder with a powerful HiFi-GAN vocoder, was also included for fair comparisons. In addition to the mel loss, the wrapped angle loss from Eq. 8 ($L_{\mathrm{angle}}$), the multi-resolution mel loss ($L_{\mathrm{mm}}$) [29], and the L1 waveform loss ($L_{\mathrm{wav}}$) were applied to the symAD and AudioDec codecs to show the difficulties of phase modeling.
The model architectures and hyperparameters of the pre-trained AE and HiFi-GAN follow [11]¹, but the compression rate and the number of the 10-bit codebooks were increased to 320 and 16 to achieve a bitrate of 24 kbps. The AE was trained with only the metric losses for the first 500k iterations, and the decoder/vocoder was further trained with the discriminators for another 500k iterations.

Opus [3] at 24 kbps, which retains mainly the audio information below 20 kHz, was also adopted to show the effectiveness of the proposed SPF for different codecs. The SPFs of ScoreDec and Opus followed the SGMSE² architecture. The complex spectra were extracted using a 510 FFT size and a 320 hop size. The real and imaginary spectra were treated as two separate channels in the models. The stochastic parameters were set to $\sigma_{\min} = 0.05$, $\sigma_{\max} = 0.5$, and $\gamma = 1.5$. The diffusion time step was within $[0.03, 1]$. The modulation parameters were set to $\alpha = 0.5$ and $\beta = 0.15$. The batch size was 8 and the batch length was 256 frames. Both models were trained for 161 epochs with a learning rate of $10^{-4}$.

¹ https://github.com/facebookresearch/AudioDec
² https://github.com/sp-uhh/sgmse

Table 1. Objective evaluations of 48 kHz codecs w/ 24 kbps

| System | Wav (×10⁻³) ↓ | SI-SDR (dB) ↑ | STOI ↑ | PESQ ↑ |
|---|---|---|---|---|
| symAD | 8.5 | -17.38 | 0.91 | 3.12 |
| symAD w/ $L_{\mathrm{angle}}$ | 2.6 | 0.70 | 0.90 | 2.60 |
| symAD w/ $L_{\mathrm{mm}}$ | 2.9 | 0.29 | 0.91 | 2.51 |
| symAD w/ $L_{\mathrm{wav}}$ | 1.3 | 5.00 | 0.91 | 2.57 |
| AudioDec | 3.1 | -0.50 | 0.91 | 2.67 |
| AudioDec w/ $L_{\mathrm{angle}}$ | 2.4 | 1.34 | 0.90 | 2.58 |
| AudioDec w/ $L_{\mathrm{mm}}$ | 2.6 | 0.76 | 0.92 | 2.53 |
| AudioDec w/ $L_{\mathrm{wav}}$ | 1.2 | 5.19 | 0.92 | 2.60 |
| ScoreDec (ours) | 0.7 | 8.17 | 0.97 | 3.68 |
| Opus | 10.0 | -20.62 | 0.89 | 4.21 |
| Opus SPF (ours) | 0.2 | 16.20 | 0.98 | 4.29 |

Fig. 3. Waveform comparison of the existing neural audio codec AudioDec to ScoreDec (top) and of Opus with and without our proposed score-based diffusion post-filter (bottom). ScoreDec and Opus SPF preserve the original phase well.

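The bitrate and amplitude-modulation settings reported in this section can be sanity-checked with a short sketch. It is illustrative only and rests on two assumptions that the text does not state explicitly: the compression rate of 320 is read as the temporal downsampling factor of the codec, and the demodulation divides by $\beta$ before applying the $1/\alpha$ power so that it inverts Eq. 9 exactly. The function names are hypothetical, and a random complex array stands in for an STFT.

```python
import numpy as np


def bitrate_bps(sample_rate, compression_rate, n_codebooks, bits_per_code):
    """Bits per second if every code frame carries one index per codebook."""
    frames_per_second = sample_rate / compression_rate
    return frames_per_second * n_codebooks * bits_per_code


def compand(x_c, alpha=0.5, beta=0.15):
    """Eq. 9: compress magnitudes and rescale them, keeping the phase."""
    return beta * np.abs(x_c) ** alpha * np.exp(1j * np.angle(x_c))


def expand(x_a, alpha=0.5, beta=0.15):
    """Inverse of compand (assumed form of Eq. 10): undo scale, then power."""
    return (np.abs(x_a) / beta) ** (1.0 / alpha) * np.exp(1j * np.angle(x_a))


# 48 kHz input, compression rate 320, sixteen 10-bit codebooks.
codec_bitrate = bitrate_bps(48_000, 320, 16, 10)

# A 510-point FFT gives 510 // 2 + 1 one-sided frequency bins.
n_bins = 510 // 2 + 1

rng = np.random.default_rng(0)
spec = rng.standard_normal((n_bins, 4)) + 1j * rng.standard_normal((n_bins, 4))
round_trip = expand(compand(spec))
```

Under these assumptions the codec produces 150 code frames per second with 160 bits each, which matches the stated 24 kbps, and the modulation round trip recovers the input spectrum up to floating-point error.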
The predictor-corrector (PC) sampler [15] with one corrector step was adopted for inference. The step size of the annealed Langevin dynamics in the corrector was 0.5. We ran the sequential inference of the score-based model for 30 steps. More details can be found in [19].

4.2. Objective Evaluation

To evaluate the phase-preserving capability via the waveform similarity, we report the waveform mean-square-error (Wav) and the scale-invariant source-to-distortion ratio (SI-SDR) [30] in dB. To evaluate the speech intelligibility and quality, wide-band STOI [31] and PESQ [32] were applied to the speech downsampled to 16 kHz. The results are the averages over all testing utterances. As shown in Table 1, the proposed ScoreDec and Opus SPF respectively outperform their base codecs in all measurements, especially the waveform similarity. The much higher SI-SDR and greatly reduced Wav loss ($10^{-3} \rightarrow 10^{-4}$) demonstrate the significant phase-preserving improvement of the proposed models while achieving even higher speech intelligibility and quality. On the other hand, the markedly worse waveform similarity of the models adopting the explicit or implicit phase modeling losses also shows the difficulties of direct phase modeling. That is, although these losses can improve the waveform similarity, the waveform similarity gap is still significant, and the speech quality also slightly degrades.

To demonstrate the outstanding phase preservation in ScoreDec, we plot a waveform example in Fig. 3. The results indicate that although the symAD-generated waveform has a similar magnitude spectrum to the natural one as shown in Fig. 2, the phase is very different from the natural one. Although AudioDec achieves a better waveform similarity, the phase differences are still significant. Even the DSP-based Opus codec has the same problem. However, the ScoreDec- and Opus SPF-generated waveforms align well with the natural waveform, showing that the original phase is well preserved.

On the other hand, we also evaluated the inference speed using an NVIDIA A100 80GB SXM GPU. As expected, ScoreDec with a real-time factor (RTF) of 1.707 is significantly slower than symAD with a 0.019 RTF and AudioDec with a 0.022 RTF. The non-causal architecture and iterative inference hinder ScoreDec from streaming applications, and we leave fast inference as important future work.

4.3. Subjective Evaluation

Mean opinion score (MOS) tests were conducted to evaluate the perceptual quality. We randomly selected 15 utterances of each test speaker to form the testing set including natural and codec-generated speech. Fifteen participants took the tests in a quiet environment with headphones. The participants are either native speakers or audio researchers. The score range is 1 (very unnatural) to 5 (very natural), and the average score with a 95% confidence interval of each system is shown in Table 2.

Table 2. Mean Opinion Scores of 48 kHz codecs w/ 24 kbps

| Natural | symAD | AudioDec | ScoreDec | Opus | Opus SPF |
|---|---|---|---|---|---|
| 4.14 ± .08 | 3.86 ± .09 | 3.79 ± .10 | 4.16 ± .08 | 3.88 ± .09 | 4.16 ± .08 |

The results show that an E2E neural codec such as symmetric AudioDec achieves only similar speech quality to the DSP-based Opus codec when adopting the normal Opus operation bitrate, 24 kbps. Even when the model capacity is increased by using a powerful HiFi-GAN in AudioDec, the quality improvements of the generated speech are saturated. However, the proposed SPF can further improve the speech quality to human-level naturalness. Moreover, SPF generalizes well to both neural- and DSP-based codecs, since both ScoreDec and Opus SPF achieve human-level naturalness. More details can be found on our demo page³.

³ https://bigpon.github.io/ScoreDec_demo/

5. CONCLUSION

In this paper, we demonstrate that score-based diffusion post-filtering improves existing neural- and DSP-based audio codecs to human-level naturalness in speech modeling, and that adversarial training is not required since high-fidelity results can be achieved exclusively using metric and score-matching losses. The proposed ScoreDec preserves phase information well and is therefore suitable not only for mono audio codecs but also for multi-channel codecs. For future work, the effectiveness of ScoreDec for modeling general spatial audio signals beyond speech should be evaluated. A streamable ScoreDec with a causal architecture and fast inference is also an important topic to extend the ScoreDec capability beyond the limited non-streaming applications.

6. REFERENCES

[1] T. Liebchen and Y. A. Reznik, “MPEG-4 ALS: An emerging standard for lossless audio coding,” in Proc. DCC, 2004, pp. 439–448.
[2] J. Coalson, Free Lossless Audio Codec, Accessed: 2000.
[3] J.-M. Valin, G. Maxwell, T. B. Terriberry, and K. Vos, “High-quality, low-delay music coding in the Opus codec,” in AESC 135, 2013.
[4] B. Bessette et al., “The adaptive multirate wideband speech codec (AMR-WB),” IEEE TSAP, vol. 10, no. 8, pp. 620–636, 2002.
[5] M. Dietz et al., “Overview of the EVS codec architecture,” in Proc. ICASSP, 2015, pp. 5698–5702.
[6] S. Kankanahalli, “End-to-end optimized speech coding with deep neural networks,” in Proc. ICASSP, 2018, pp. 2521–2525.
[7] C. Gârbacea et al., “Low bit-rate speech coding with VQ-VAE and a WaveNet decoder,” in Proc. ICASSP, 2019, pp. 735–739.
[8] K. Zhen, J. Sung, M. S. Lee, S. Beack, and M. Kim, “Cascaded cross-module residual learning towards lightweight end-to-end speech coding,” in Proc. Interspeech, 2019, pp. 3396–3400.
[9] N. Zeghidour, A. Luebs, A. Omran, J. Skoglund, and M. Tagliasacchi, “SoundStream: An end-to-end neural audio codec,” IEEE/ACM TASLP, vol. 30, pp. 495–507, 2021.
[10] A. Défossez, J. Copet, G. Synnaeve, and Y. Adi, “High fidelity neural audio compression,” arXiv preprint, 2022.
[11] Y.-C. Wu, I. D. Gebru, D. Marković, and A. Richard, “AudioDec: An open-source streaming high-fidelity neural audio codec,” in Proc. ICASSP, 2023.
[12] A. Vasuki and P. T. Vanathi, “A review of vector quantization techniques,” IEEE Potentials, vol. 25, no. 4, pp. 39–47, 2006.
[13] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, “Generative adversarial nets,” in Proc. NeurIPS, 2014, pp. 2672–2680.
[14] J. Ho, A. Jain, and P. Abbeel, “Denoising diffusion probabilistic models,” in Proc. NeurIPS, 2020.
[15] Y. Song, J. Sohl-Dickstein, D. P. Kingma, A. Kumar, S. Ermon, and B. Poole, “Score-based generative modeling through stochastic differential equations,” in Proc. ICLR, 2021.
[16] Z. Kong, W. Ping, J. Huang, K. Zhao, and B. Catanzaro, “DiffWave: A versatile diffusion model for audio synthesis,” in Proc. ICLR, 2021.
[17] N. Chen, Y. Zhang, H. Zen, R. J. Weiss, M. Norouzi, and W. Chan, “WaveGrad: Estimating gradients for waveform generation,” in Proc. ICLR, 2021.
[18] S. Welker, J. Richter, and T. Gerkmann, “Speech enhancement with score-based generative models in the complex STFT domain,” in Proc. Interspeech, 2022, pp. 2928–2932.
[19] J. Richter, S. Welker, J.-M. Lemercier, B. Lay, and T. Gerkmann, “Speech enhancement and dereverberation with diffusion-based generative models,” IEEE/ACM TASLP, vol. 31, pp. 2351–2364, 2023.
[20] Y.-J. Lu, Z.-Q. Wang, S. Watanabe, A. Richard, C. Yu, and Y. Tsao, “Conditional diffusion probabilistic model for speech enhancement,” in Proc. ICASSP, 2022, pp. 7402–7406.
[21] K. Kumar et al., “MelGAN: Generative adversarial networks for conditional waveform synthesis,” in Proc. NeurIPS, 2019.
[22] J. Kong, J. Kim, and J. Bae, “HiFi-GAN: Generative adversarial networks for efficient and high fidelity speech synthesis,” in Proc. NeurIPS, 2020.
[23] G. E. Uhlenbeck and L. S. Ornstein, “On the theory of the Brownian motion,” Physical Review, vol. 36, no. 5, pp. 823, 1930.
[24] B. D. Anderson, “Reverse-time diffusion equation models,” Stochastic Processes and their Applications, vol. 12, no. 3, pp. 313–326, 1982.
[25] A. Hyvärinen and P. Dayan, “Estimation of non-normalized statistical models by score matching,” Journal of Machine Learning Research, vol. 6, no. 4, 2005.
[26] Y. Ai and Z.-H. Ling, “Neural speech phase prediction based on parallel estimation architecture and anti-wrapping losses,” in Proc. ICASSP, 2023.
[27] C. Veaux, J. Yamagishi, and K. MacDonald, “CSTR VCTK corpus: English multi-speaker corpus for CSTR voice cloning toolkit,” University of Edinburgh. CSTR, 2017.
[28] C. Valentini-Botinhao, “Noisy speech database for training speech enhancement algorithms and TTS models,” University of Edinburgh. CSTR, 2017.
[29] R. Yamamoto, E. Song, and J.-M. Kim, “Parallel WaveGAN: A fast waveform generation model based on generative adversarial networks with multi-resolution spectrogram,” in Proc. ICASSP, 2020, pp. 6199–6203.
[30] J. L. Roux, S. Wisdom, H. Erdogan, and J. R. Hershey, “SDR–half-baked or well done?,” in Proc. ICASSP, 2019, pp. 626–630.
[31] C. H. Taal, R. C. Hendriks, R. Heusdens, and J. Jensen, “An algorithm for intelligibility prediction of time–frequency weighted noisy speech,” IEEE/ACM TASLP, vol. 19, no. 7, pp. 2125–2136, 2011.
[32] A. Rix, J. Beerends, M. Hollier, and A. Hekstra, “Perceptual evaluation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs,” in Proc. ICASSP, 2001, vol. 2, pp. 749–752.
