Look, Listen and Recognise: Character-Aware Audio-Visual Subtitling

The goal of this paper is automatic character-aware subtitle generation. Given a video and a minimal amount of metadata, we propose an audio-visual method that generates a full transcript of the dialogue, with precise speech timestamps, and the chara…

Authors: Bruno Korbar, Jaesung Huh, Andrew Zisserman

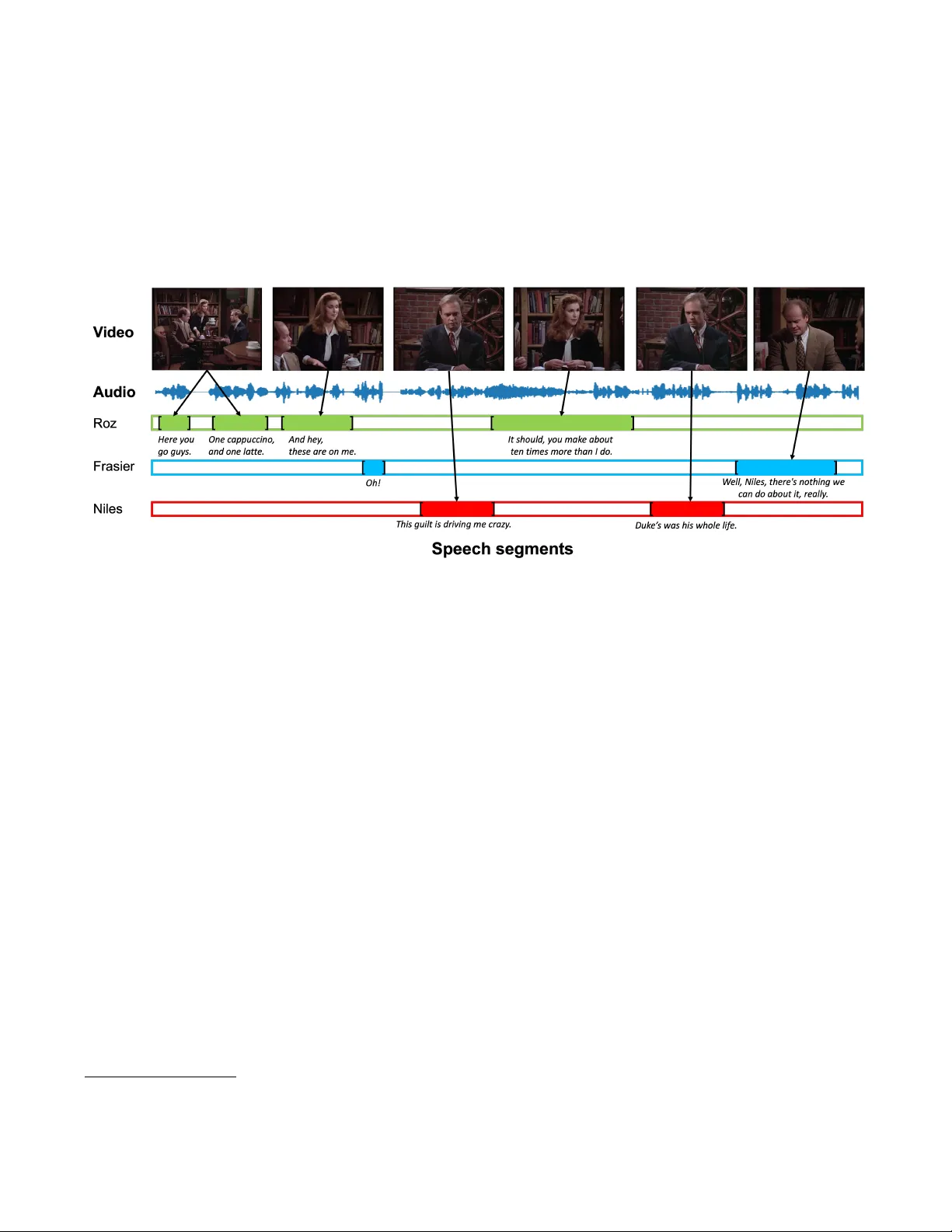

LOOK, LISTEN AND RECOGNISE: CHARA CTER-A W ARE A UDIO-VISU AL SUBTITLING Bruno K orbar ∗ J aesung Huh ∗ Andr ew Zisserman V isual Geometry Group, Department of Engineering Science, Uni versity of Oxford, UK Fig. 1 : Character-a ware audio-visual subtitling. The generated data covers what is said, when it said, and by whom it is said. ABSTRA CT The goal of this paper is automatic character -aware subtitle genera- tion. Giv en a video and a minimal amount of metadata, we propose an audio-visual method that generates a full transcript of the dia- logue, with precise speech timestamps, and the character speaking identified. The key idea is to first use audio-visual cues to select a set of high-precision audio exemplars for each character, and then use these ex emplars to classify all speech se gments by speaker iden- tity . Notably , the method does not require face detection or track- ing. W e e valuate the method o ver a v ariety of TV sitcoms, including Seinfeld, Fraiser and Scrubs. W e en vision this system being use- ful for the automatic generation of subtitles to improv e the accessi- bility of the v ast amount of videos av ailable on modern streaming services. Project page : https://www.robots.ox.ac.uk/ ˜ vgg/research/look- listen- recognise/ Index T erms — character-aw are subtitling, audio-visual speaker diarisation, speech recognition, video understanding 1. INTRODUCTION W ith the rise of streaming platforms that allo w watching videos “on- demand”, more video content is made av ailable to the general public and researchers than ever in history . With more than 80% of users of one such platform relying on subtitles [1], automatic subtitle gen- eration and captioning has become an important research topic in the community [2, 3]. Unfortunately , many subtitles, whether au- tomatically generated or not, do not comply with the standards for Subtitles for Deaf and Hard-of-hearing (SDH): namely , they do not ∗ These authors contributed equally to this w ork. This research w as funded by EPSRC Programme Grant V isualAI EP / T028572 / 1, and a Royal Society Research Professorship. include information about speaker identification, nor do they contain sound effects and music. In this paper, we take the next step towards automatic generation of SDH – we aim to make the subtitles character-aware. Character- aware subtitles would also be of great benefit to researchers. They would allo w for the automatic generation of lar ge-scale video datasets, which could fuel the next generation of visual-language models capable of learning higher-le vel semantics from the paired data. There has been a plethora of works using audio-visual networks for speech recognition [4, 5], speaker diarisation [6, 7, 8] or character recognition [9, 10, 11, 12] which are subtasks of our main goal. Howe ver , these works require additional processing for detecting and tracking faces. W e present a simpler method that does not re- quire face detection or tracking and uses only of f-the-shelf deep neu- ral network models and the cast list for each episode. W e make the following four contributions: (i) we propose a new task, character-aware audio-visual subtitling, which aims to generate the what , when and by whom for subtitles, with minimal required metadata. (ii) we dev elop an automatic pipeline for this task that does not require face detection or tracking (Section 2); (iii) we curate an evaluation dataset that includes subtitles labelled with characters individually for three different sitcom series: Fraiser , Scrubs and Seinfeld (Section 3); and (iv) we assess the method on the ev aluation dataset and report the performance (Section 4). 1.1. Related work Labelling people in videos. is a well studied topic in computer vi- sion [10, 11, 12]. Often, the av ailability of various lev els of prior information is required such as scripts [10], clean images for actor- lev el supervision [12], or ground truth subtitles with correct times- tamps [13, 14]. [15] relaxes the need for cleaned data and makes VAD de tec tio n and ASR Audio - visual speaker detection Elaine Visual char acter classification × Stage 1: Build ing audio exempla rs Stage 2: Assigni ng identities to speech segm ents Nearest centroid classificat ion Jerry Elaine George exemplars centroids query Jerry Elaine unknown George Fig. 2 : Overvie w of our method. W e first build a database of au- dio exemplars for each character by filtering speech segments until only a high precision set remains (left). Each speech segment is then assigned to a character by comparing its voice embedding to the ex- emplar embeddings (right). their method scalable by gathering a large amount of data via au- tomated image search to obtain the corroborative evidence they use for supervision. Like [15], our model retriev es the necessary infor- mation via search engines, howe ver , it does not pre-process video frames, sav e for the transformations required by a neural network. A udio-only speaker diarisation. Speaker diarisation is the task of identifying “who spoke when” from a given audio file with human speech. There are two branches of works in this area: (i) using ex- isting V oice Acti vity Detection (V AD) and a speaker model together with clustering [16, 17, 18] and (ii) using an end-to-end model which goes from the V AD to assigning speakers [19, 20]. Both of them suffer when the number of speakers is large such as in TV shows or dramas. Furthermore, the current state-of-the-art speaker recog- nition models assume that the input is long ( > 2 sec), while most of the speeches in TV shows are relativ ely short including exclama- tions, which leads to the degradation of speaker clustering perfor- mance. In this paper , we include the active speaker detection model and person-identification model, which are strong in short videos, to identify the character . A udio-visual speaker diarisation. In the last fe w years, efforts were made to improv e the performance of diarisation by borrowing the power of face recognition models or lipsync models, which are closely related to human speech [6, 8, 21]. [6] utilises audio-visual activ e speak er detection model and speech enhancement models, but mostly in celebrity interviews or news segment where the length of speeches are generally short. [8] introduces an Audio-V isual Rela- tion Network (A VR-Net) that leverages the cross-modal correlation to recognise the speaker’ s identity . Our approach is different from these works in two ways: (i) we do not use any face detection or tracking; and (ii) we introduce character-a ware audio-visual subti- tling that b uilds the character bank within each video and figures out not only the speaker clusters but the speakers’ identity for each utterances and the speech content. Datasets. The Bazinga! dataset [22] also provides subtitles labelled with characters for a large number of TV series. Howe ver , it is an audio only dataset, and consequently is not directly suitable for ap- plying the audio-visual approach we dev elop. 2. METHOD This section explains our approach to creating subtitles for the video and attributing speakers to each speech segment. Our method con- sists of two distinct stages. First, we detect speech segments from the video, recognise the spoken words, and process the data to create a database of what we refer to as speec h exemplars – sample video clips where a speaker is clearly audible, visible and identifiable. In the second stage, the speech ex emplars for each character are used to assign the identities to all speech segments. In order to label the characters we require the following meta- data for each episode: (i) the names of the characters in the show; and (ii) for each character 1–10 sample images of the actor and their names that we can use as visual examples. This metadata can be obtained automatically from online database of movies or TV se- ries [23]. 2.1. Stage 1: building audio exemplars The goal of stage 1 is to create a database of character v oices. W e take multiple episodes of a TV series, and obtain a set of speech segments for each character . In order to do this, we first split videos into speech segments, and transcribe them. For each segment we determine if only one speaker is visible and is speaking – a crucial step because it allows us to be confident that the speech segment corresponds to the face in the frame. W e collect a set of speech segments for each character that we can confidently recognise from their face, and then filter the samples in each set to remove potential label noise using v oice embeddings. W e end up with a set of speech segments for each character that are recognised with high precision, and refer to these as speech exem- plars . The building of these e xemplars is illustrated in Figure 2, and we giv e details of each sub-step below . 1. V AD detection and A utomatic Speech Recognition (ASR). In this stage, we take an entire video and split it into segments where speech is detected and recognised. W e first detect the voice re gions across the entire dataset and determine the spoken content of each segment. W e do this with a language-guided V AD model. W e apply the WhisperX [3] model on the audio stream of our dataset which detects the speech regions with w ord-lev el timestamps. W e concate- nate the generated words to obtain the entire transcription per video, then use a sentence tokenizer to separate them by sentences. Assum- ing each sentence is spok en by a single speak er , we use the start and end times of the sentences as our unit of speech segments. W e also find that most TV sho ws contain laughter tracks (audience laughter) which are voice regions b ut are not of interest to this work. Thus, we run a pretrained laughter detector [24] for each of the re- maining v oice segments and remove the ones from the candidates of ex emplars if laughter is detected. After this step, we know precisely when characters in the show are speaking and what the y are saying. W e don’t yet know who is saying what. 2. A udio-visual speaker detection. The goal of this stage is to take speech segments from the previous stage and select only those with a single visible speaker . This will produce a subset of speech se gments where we can recognise the speaker . T o achieve this, we localise the speaker with an audio-visual synchronisation model [25] which produces a spatial location of the audible objects and has been shown to detect speakers well. In practice, it generates an audio-guided heatmap ov er each video frame. W e average the heatmaps over the length of each speech se gment to a void unnecessary noise and detect peaks in the heatmap through a combination of maximum filtering and non-maximum suppression. Example heatmap outputs can be seen in Figure 2. When a single peak is visible throughout the video clip, we can assume that only one speaker is present. If there are no detected peaks, or there are multiple ones, we discard that speech segment from the candidates of e xemplars. 3. V isual character classification. In this step a character name is assigned to each of the single-speaker speech se gments from the pre- vious step where possible. This leav es us with a further reduced set of speech segments, each having a character name associated with it. Character classification is the only step in our annotation process that external data is used. Specifically , the 1–10 sample images of each actor are used to form a visual embedding of that character . Our classification model [26] compares a visual embedding of the frames of a speech segment to a combination of actor visual embedding and actor name (details are given belo w). W e select the best match or discard the clips which cannot be classified with a high degree of confidence. Note, (i) the comparison is at the frame le vel, no face detector or cropping is required for this visual recognition; (ii) we compute visual embeddings for all characters in a gi ven season, but only consider ones present in that episode at inference time. 4. A udio filtering. Finally , we group the labelled speech segments from the previous stage by character, and for each character we filter their voice samples to remove potential noise from the groupings as follows: we compute voice embeddings for each sample, and con- sider that a sample is positiv e for a giv en character if its 5 nearest neighbours are labelled as the same character . Note that for char- acters where the number of samples n is smaller than 5, we keep all the samples in our database. This gives us the final ex emplar set for a giv en TV series and hopefully leaves us knowing what each character sounds like. 2.2. Stage 2: Assigning characters to speech segments The aim of this stage is to assign a character name to each of the de- tected audio segments that we are confident of, re gardless of whether a speaker is visible or not. On a high-level, we achie ve this by com- paring the distance between each speech segment and the audio ex- emplars for each character . W e do not assign an identity if the mini- mum distance is abov e a certain threshold. Specifically , for each character we compute the mean of ex emplar embeddings and use it as a centroid representation for that character . T o classify speech segments, we embed them with the same model used to generate the ex emplar embeddings, and measure distances to class centroids. The segment is assigned to the speaker corre- sponding to the nearest centroid. Howe ver , if the minimum distance between the segment embedding and each centroid is bigger than a threshold d , then that segment is classified as “unkno wn”. This cov- ers uncertainty and also the cases where we don’t ha ve e xemplars. 2.3. Implementation details W e detect speech and perform ASR with an off-the-shelf Whis- perX [3] model, and the sentences are tokenized with NL TK [27] tokenizer . W e use the laughter detector by [24] with a detection threshold of 0.8. All voice embeddings are encoded with ECAP A- TDNN [28], which is pretrained with V oxCeleb [29]. For discovery of speaking faces, we use a pretrained L WTNet [25]. For each gen- erated heatmap we detect 4 peaks, and consider each a positiv e if it’ s larger than τ det = 0 . 7 . For actor face classification, we use the CLIP- P AD model [26] pretrained on VGGF ace and VGGFace2 [30]. Ac- tor text-image embeddings are formed as "An image of Name Surname" where is an average representation of query images computed using a face-embedding network, as in [26]. T o classify the actors in the scene, we measure the cosine similarity between the visual embedding of the frames and the text-image embedding and choose the ones with highest similarity score where the score is over threshold τ rec = 0 . 85 as positiv es. All hyper- parameters are determined via grid search on the three validation episodes, and kept fixed otherwise. T able 1 : Evaluation dataset statistics. # episode : number of episodes, duration : total duration of the dataset, #IDs : total number of characters, speech % : percentage of video time that is speech and # spks : min / mean / max of number of speakers per video. Dataset # episode duration # IDs speech % # spks Seinfeld 6 2h 09m 36 60.6 6 / 9.2 / 12 Frasier 6 2h 11m 29 59.5 6 / 9.2 / 12 Scrubs 6 2h 02m 48 67.9 13 / 15.7 / 18 3. EV ALU A TION DA T ASET In this section, we describe a semi-automatic annotation pipeline used to generate the ground truth character names, timestamps and subtitles for speech segments. The goal is to annotate the identities for all subtitles with accurate time intervals in the video. 3.1. Annotation procedure The dataset collection process consists of two stages: (i) automatic initial annotations by aligning a transcript with timed subtitles; and (ii) human annotators revie wing and further refining these annota- tions. Note that our dataset differs from other speaker diarisation datasets since we are also interested in the identity of each speaker and speech transcriptions. Aligning transcripts and timestamps. T o associate character names with corresponding temporal timestamps, we le verage two readily accessible source of textual video annotation: original tran- scripts and subtitles with word-lev el timestamps. T ranscripts are obtained from multiple online sources [31, 32, 33]. They include spoken lines and information about who is speaking. Howe ver , they do not provide any timing information beyond the order in which the lines are spoken. W e use WhisperX [3] to obtain the timed subtitles. W e find this suitable since its transcription and timestamps are highly accurate, whereas the timestamps in subtitles from other online sources often do not align with the actual speech in the video. T o align the original transcripts and timed subtitles, we employ the approach from [34]. W e use Dynamic T ime W arping (DTW) to obtain the word-lev el alignment between the transcript and timed subtitles to associate the speaker with each of these words. Please refer to the original paper for the detailed process. Manual correction. The output of the automatic pipeline is prone to sev eral errors such as (i) a mismatch between the text of the tran- script and WhisperX’ s transcription results; and (ii) mispredicted timestamps. W e correct any errors in timestamps and character names manually using the VIA V ideo Annotator [35]. 3.2. Dataset statistics Three TV series datasets are used to e valuate our method. W e anno- tate the first six episodes of Season 2 of Frasier , Season 2 of Scrubs and Season 3 of Seinfeld . W e utilise the sixth episode in each season as our validation set, while the remaining episodes serve as our test set. The detailed statistics are shown in T able 1. 4. RESUL TS This section provides a detailed analysis of Stage 1 and 2, followed by the ov erall result on our test set. 4.1. Detailed analysis of Stage 1 and 2 Perf ormance evaluation of Stage 1. W e e valuate the yield and clas- sification accuracy of the speech exemplars on the five episodes of Seinfeld in our test set. In T able 2, it can be seen that 19.3% of voice activity segments can be considered as exemplars. W e also T able 2 : Exemplar yield after steps in Stage 1 (on Seinfeld). Step # of exemplars % of total V AD detection 2107 100.0 Audio-visual speaker detection 1271 60.3 V isual character classification 806 38.3 Audio filtering 407 19.3 T able 3 : Exemplar recognition performance for named characters in Stage 1 in Seinfeld. ‘others’ is a group of 21 characters, all named correctly . Char . name # exemplars # correct Acc (%) T otal 407 406 99.8 Jerry 273 272 99.6 Elaine 30 30 100 Kramer 12 12 100 George 14 14 100 others 78 78 100 Fig. 3 : Stage 2 Precision-POCS Curves for the test set of the three TV series, obtained by varying the threshold d (for classification as “unknown”). The left figure shows the performance using all de- tected speech segments. The right figure shows the performance only for the long se gments ( > 2 sec). W e also show the oracle points (‘x’ in each graph) for each TV series. The oracle point is where all segments for which there are character e xemplars are correctly clas- sified, and other segments are classified as “unkno wn”. ev aluate the performance quantitativ ely by manually inspecting the ex emplars. The results, shown in T able 3, demonstrate that the ac- curacy of Stage 1 is almost perfect, being 100% correct for most characters. There are 11 characters for which we have no ex emplars in the 5 episodes of Seinfeld. They cover only 1.8% of speech seg- ments – most of them speak less than fiv e sentences in the episodes. Perf ormance ev aluation of Stage 2. W e demonstrate the trade-of f between the Proportion of Classified Segments (POCS) and ov er- all precision by varying the threshold d used in the nearest centroid voice classification to assign speech segments as “unknown”. T rue positiv es are the segments that overlap with the ground truth seg- ments and the character is correctly identified. Figure 3 sho ws the result. It can be seen that precision decreases as we classify more segments. Also, long segments show higher precision in all three TV series at any giv en POCS, which shows that the speaker model produces better representations for longer segments. 4.2. Overall performance on the test set Perf ormance measures. In addition to the traditional diarisation metric of Diarisation Error Rate (DER), we report the ov erall char- acter recognition accurac y as well as the av erage of the per-character T able 4 : Performance on the test set. W e report the Diarisation Error Rate both with and without consideration of the overlapping regions, DER(O) and DER respecti vely . Acc denotes a character recognition accuracy for the segments that overlap with the groundtruth. Ppc and Rpc are the av erage per-character precision and recall, respecti vely . Showname DER ↓ DER(O) ↓ Acc ↑ Ppc ↑ Rpc ↑ Seinfeld 29.6 29.7 81.2 0.922 0.841 Frasier 23.8 24.3 83.1 0.933 0.888 Scrubs 32.6 36.4 76.1 0.883 0.853 T able 5 : W ord Error rate (WER) (%) on each dataset. Model V ersion Seinfeld Frasier Scrubs W av2V ec2.0 [36] ASR BASE 960H 45.0 36.9 36.3 Whisper [2] medium.en 13.2 13.5 10.6 WhisperX [3] medium.en 11.8 11.2 9.2 00:17:15,931 ~ 00:17:17,034 Jerry : Oh, please. I lo ve her. 00:17:18,235 ~ 00:17:19,616 George : I’ve just met her, but I’m very impressed. 00:17:20,640 ~ 00:17:23,531 Ralph : I don’t understand. I’ve never had a problem with these notes before. 00:17:23,832 ~ 00:17:24,332 Jerry : What’ s the next move? … … Fig. 4 : Qualitativ e example. Our method produces the speech seg- ments with timestamps, and assigns the character who spoke it. precision and recall metrics for the characters of each show . W e use a 0.25-second collar to calculate DER. Accuracy is calculated for the segments that o verlap with one of the ground truth segments. The results are gi ven in T able 4. W e can see that the model performs best on Frasier and worst on Scrubs in all metrics. This is due to the dif ference in size of the casts in each dataset. Scrubs has more characters than Frasier (48 > 29) for a similar total duration (see T able 1). Thus, Scrubs provides more potential assignments for each segment, making identification more challenging. W e also report the diarisation performance with and without the ov erlapping speech in T able 4. The dif ference in DER for these two categories is small in Seinfeld and Frasier , meaning that there is not much ov erlapping speech within these two shows. Speech transcription performance. Our method uses the Whis- perX ASR model which also produces the speech transcription re- sults. W e compare the performance with the state-of-the-art models in T able 5. W ord Error Rate (WER) is computed after applying the Whisper text normaliser to both ground truth and predictions which can be found in the original paper [2]. W e see that WhisperX outper - forms both W av2vec2.0 and Whisper . This is because the V AD Cut & Mer ge preprocessing reduces the hallucination of Whisper , which is also mentioned in the original paper [3]. Qualitative example. W e show a qualitative example of our results in Figure 4. As can be seen, our method assigns the character for each speech segment, as well as timestamps and the transcription. 5. CONCLUSIONS In this work, we show promising first steps towards a model for character-a ware subtitling, which we hope will be beneficial for im- proving accessibility , and facilitating further research in video un- derstanding. Our method is not perfect, ho wev er . Our recognition efforts fail on short segments such as exclamations and also do not deal with overlapping speech – though the latter does not appear to be a serious limitation in practice. Furthermore, to generate the true SDH subtitles, we would need to classify and categorise e very sound, not just speech – something our model is not yet capable of. 6. REFERENCES [1] “Netflix player control tests, ” https://about.netflix. com/en/news/player- control- tests , 2023. [2] Alec Radford, Jong W ook Kim, T ao Xu, Greg Brockman, Christine McLeav ey , and Ilya Sutskev er , “Robust speech recognition via large-scale weak supervision, ” Pr oc. ICML , 2022. [3] Max Bain, Jaesung Huh, T engda Han, and Andre w Zisserman, “Whisperx: Time-accurate speech transcription of long-form audio, ” Pr oc. Inter speech , 2023. [4] Triantafyllos Afouras, Joon Son Chung, Andrew Senior, Oriol V inyals, and Andrew Zisserman, “Deep audio-visual speech recognition, ” IEEE P AMI , 2019. [5] Bowen Shi, W ei-Ning Hsu, and Abdelrahman Mohamed, “Ro- bust self-supervised audio-visual speech recognition, ” Pr oc. Interspeech , 2022. [6] Joon Son Chung, Jaesung Huh, Arsha Nagrani, Triantafyl- los Afouras, and Andrew Zisserman, “Spot the con versation: speaker diarisation in the wild, ” in Pr oc. Interspeech , 2020. [7] Y ifan Ding, Y ong Xu, Shi-Xiong Zhang, Y ahuan Cong, and Liqiang W ang, “Self-supervised learning for audio-visual speaker diarization, ” in Pr oc. ICASSP , 2020. [8] Eric Zhongcong Xu, Zeyang Song, Satoshi Tsutsui, Chao Feng, Mang Y e, and Mike Zheng Shou, “ A va-a vd: Audio- visual speaker diarization in the wild, ” 2022, MM ’22. [9] Rahul Sharma and Shrikanth Narayanan, “ Audio visual char- acter profiles for detecting background characters in entertain- ment media, ” arXiv preprint , 2022. [10] Mark Everingham, Josef Sivic, and Andre w Zisserman, “T ak- ing the bite out of automatic naming of characters in TV video, ” Image and V ision Computing , vol. 27, no. 5, 2009. [11] Monica-Laura Haurilet, Makarand T apaswi, Ziad Al-Halah, and Rainer Stiefelhagen, “Naming tv characters by watching and analyzing dialogs, ” in Pr oc. W ACV . IEEE, 2016, pp. 1–9. [12] Arsha Nagrani and Andrew Zisserman, “From benedict cum- berbatch to sherlock holmes: Character identification in tv se- ries without a script, ” in Pr oc. BMVC , 2017. [13] Bogdan Mocanu, Ruxandra T apu, and Titus Zaharia, “En- hancing the accessibility of hearing impaired to video con- tent through fully automatic dynamic captioning, ” in 2019 E- Health and Bioengineering Confer ence (EHB) , 2019. [14] W ataru Akahori, T atsunori Hirai, and Shigeo Morishima, “Dy- namic subtitle placement considering the re gion of interest and speaker location, ” in International Confer ence on Computer V ision Theory and Applications . SciT ePress, 2017. [15] Andrew Bro wn, Ernesto Coto, and Andre w Zisserman, “ Auto- mated video labelling: Identifying faces by corroborativ e evi- dence, ” in International Confer ence on Multimedia Informa- tion Processing and Retrieval , 2021. [16] Quan W ang, Carlton Do wney , Li W an, Philip Andrew Mans- field, and Ignacio Lopz Moreno, “Speak er diarization with lstm, ” in Pr oc. ICASSP , 2018. [17] Aonan Zhang, Quan W ang, Zhenyao Zhu, John Paisle y , and Chong W ang, “Fully supervised speaker diarization, ” in Pr oc. ICASSP , 2019. [18] Y oungki Kwon, Hee Soo Heo, Jaesung Huh, Bong-Jin Lee, and Joon Son Chung, “Look who’ s not talking, ” in 2021 IEEE Spoken Language T echnology W orkshop (SLT) , 2021. [19] Y usuke Fujita, Naoyuki Kanda, Shota Horiguchi, Kenji Naga- matsu, and Shinji W atanabe, “End-to-end neural speaker di- arization with permutation-free objectives, ” Pr oc. Interspeech , 2019. [20] Shota Horiguchi, Y usuke Fujita, Shinji W atanabe, Y awen Xue, and Kenji Nagamatsu, “End-to-end speaker diarization for an unknown number of speakers with encoder-decoder based at- tractors, ” Pr oc. Interspeech , 2020. [21] Joon Son Chung, Bong-Jin Lee, and Icksang Han, “Who said that?: Audio-visual speaker diarisation of real-world meet- ings, ” Pr oc. Interspeech , 2019. [22] Paul Lerner, Juliette Bergo ¨ end, Camille Guinaudeau, Herv ´ e Bredin, Benjamin Maurice, Sharleyne Lefevre, Martin Bouteiller , Aman Berhe, L ´ eo Galmant, Ruiqing Y in, et al., “Bazinga! a dataset for multi-party dialogues structuring, ” in LREC , 2022. [23] “International movie database, ” https://www.imdb.com . [24] Jon Gillick, W esley Deng, Kimiko Ryokai, and David Bam- man, “Robust laughter detection in noisy en vironments., ” in Pr oc. Interspeech , 2021. [25] Triantafyllos Afouras, Andrew Owens, Joon Son Chung, and Andrew Zisserman, “Self-supervised learning of audio-visual objects from video, ” in Pr oc. ECCV , 2020. [26] Bruno K orbar and Andrew Zisserman, “Personalised clip or: how to find your v acation videos, ” in Proc. BMVC , 2022. [27] Steven Bird, “Nltk: the natural language toolkit, ” in Pr o- ceedings of the COLING/ACL 2006 Interactive Pr esentation Sessions , 2006, pp. 69–72. [28] Brecht Desplanques, Jenthe Thienpondt, and Kris Demuynck, “Ecapa-tdnn: Emphasized channel attention, propagation and aggregation in tdnn based speaker verification, ” Pr oc. Inter- speech , 2020. [29] Arsha Nagrani, Joon Son Chung, W eidi Xie, and Andrew Zis- serman, “V oxceleb: Large-scale speaker verification in the wild, ” Computer Speech and Language , 2019. [30] Omkar M. Parkhi, Andrea V edaldi, and Andrew Zisserman, “Deep face recognition, ” in Pr oc. BMVC , 2015. [31] “The Frasier Archives, ” https://www.kacl780.net/ . [32] “Seinfeld scripts dot com, ” https://www. seinfeldscripts.com/seinfeld- scripts.html . [33] “Scrubs fandom, ” https://scrubs.fandom.com/ wiki/Category:Transcripts . [34] Mark Ev eringham, Josef Si vic, and Andre w Zisserman, “Hello! my name is... buf fy”–automatic naming of characters in tv video., ” in BMVC , 2006, vol. 2, p. 6. [35] Abhishek Dutta and Andrew Zisserman, “The VIA annota- tion software for images, audio and video, ” in Proceedings of the 27th ACM International Conference on Multimedia , New Y ork, NY , USA, 2019, MM ’19, A CM. [36] Alexei Baevski, Y uhao Zhou, Abdelrahman Mohamed, and Michael Auli, “wav2v ec 2.0: A framework for self-supervised learning of speech representations, ” NeurIPS , vol. 33, pp. 12449–12460, 2020.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment