TimbreTron: A WaveNet(CycleGAN(CQT(Audio))) Pipeline for Musical Timbre Transfer

In this work, we address the problem of musical timbre transfer, where the goal is to manipulate the timbre of a sound sample from one instrument to match another instrument while preserving other musical content, such as pitch, rhythm, and loudness.…

Authors: Sicong Huang, Qiyang Li, Cem Anil

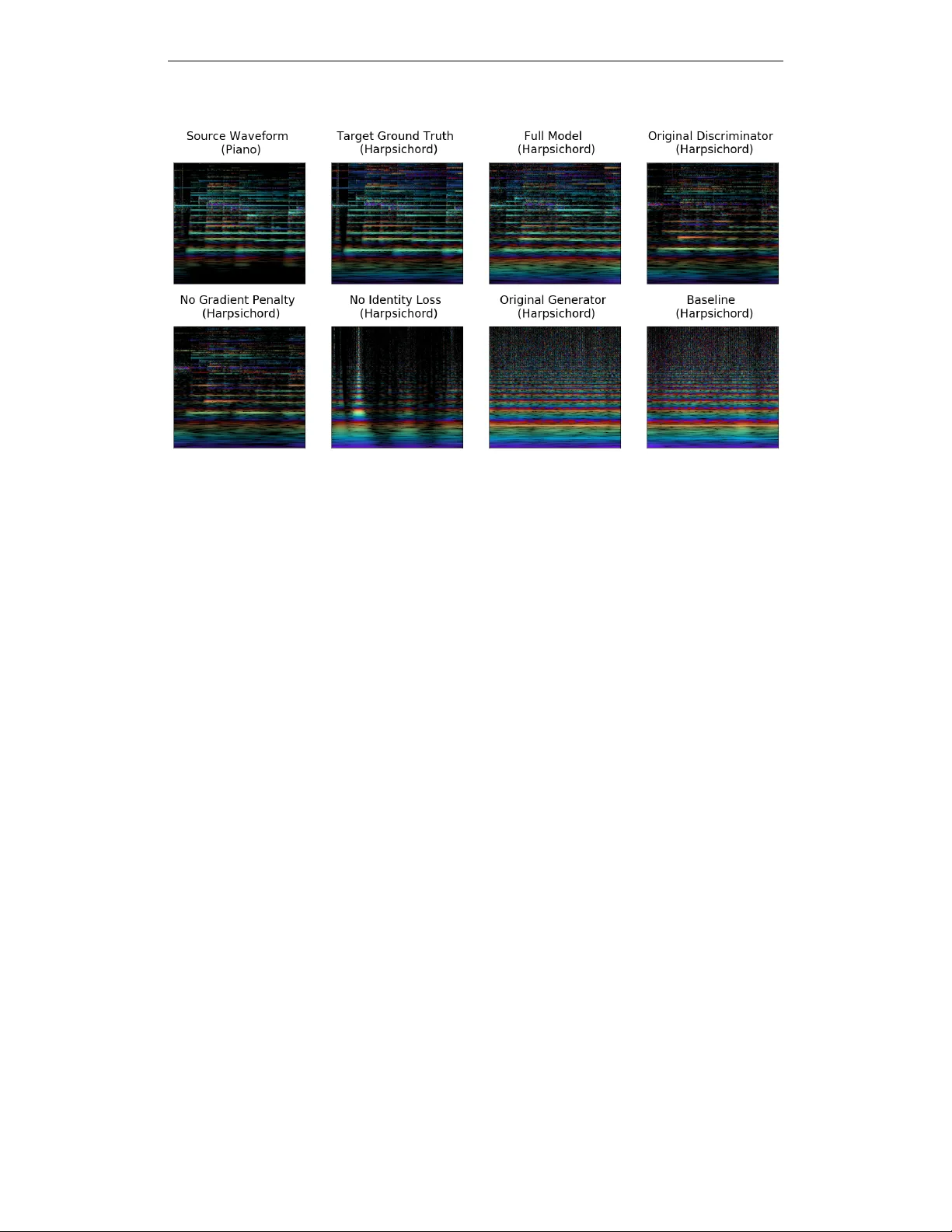

Published as a conference paper at ICLR 2019

Sicong Huang (1,2), Qiyang Li (1,2), Cem Anil (1,2), Xuchan Bao (1,2), Sageev Oore (2,3), Roger B. Grosse (1,2)
(1) University of Toronto, (2) Vector Institute, (3) Dalhousie University

ABSTRACT

In this work, we address the problem of musical timbre transfer, where the goal is to manipulate the timbre of a sound sample from one instrument to match another instrument while preserving other musical content, such as pitch, rhythm, and loudness. In principle, one could apply image-based style transfer techniques to a time-frequency representation of an audio signal, but this depends on having a representation that allows independent manipulation of timbre as well as high-quality waveform generation. We introduce TimbreTron, a method for musical timbre transfer which applies “image” domain style transfer to a time-frequency representation of the audio signal, and then produces a high-quality waveform using a conditional WaveNet synthesizer. We show that the Constant Q Transform (CQT) representation is particularly well-suited to convolutional architectures due to its approximate pitch equivariance. Based on human perceptual evaluations, we confirmed that TimbreTron recognizably transferred the timbre while otherwise preserving the musical content, for both monophonic and polyphonic samples. We made an accompanying demo video¹, which we strongly encourage you to watch before reading the paper.

1 INTRODUCTION

Timbre is a perceptual characteristic that distinguishes one musical instrument from another playing the same note with the same intensity and duration. Modeling timbre is very hard, and it has been referred to as “the psychoacoustician’s multidimensional waste-basket category for everything that cannot be labeled pitch or loudness”².
The timbre of a single note at a single pitch has a nonlinear dependence on the volume, time, and even the particular way the instrument is played by the performer. While there is a substantial body of research in timbre modelling and synthesis (Chowning (1973); Risset and Wessel (1999); Smith (2010; 2011)), state-of-the-art musical sound libraries used by orchestral composers for analog instruments (e.g., the Vienna Symphonic Library (GmbH, 2018)) are still obtained by extremely careful audio sampling of real instrument recordings. Being able to model and manipulate timbre electronically is important for musicians who wish to experiment with different sounds, or compose for multiple instruments. (Appendix A discusses the components of music in more detail.)

In this paper, we consider the problem of high-quality timbre transfer between audio clips obtained with different instruments. More specifically, the goal is to transform the timbre of a musical recording to match a set of reference recordings while preserving other musical content, such as pitch and loudness. We take inspiration from recent successes in style transfer for images using neural networks (Gatys et al., 2015; Johnson et al., 2016; Ulyanov et al., 2016; Chu et al., 2017). An appealing strategy would be to directly apply image-based style transfer techniques to time-frequency representations of audio, such as short-time Fourier transform (STFT) spectrograms. However, the generated spectrogram must then be converted into a waveform, and this presents a fundamental obstacle: accurate reconstruction requires phase information, which is difficult to predict (Engel et al., 2017), and existing techniques for inferring phase (e.g., Griffin and Lim (1984)) can produce characteristic artifacts which are undesirable for high-quality audio generation (Shen et al., 2017).
¹ Link to the demo video: www.cs.toronto.edu/~huang/TimbreTron/index.html
² McAdams and Bregman (1979), pg. 34

Recent years have seen rapid progress on audio generation methods that directly generate high-quality waveforms, such as WaveNet (van den Oord et al., 2016), SampleRNN (Mehri et al., 2016), and Tacotron2 (Shen et al., 2017). WaveNet’s ability to condition on abstract audio representations is particularly relevant, since it enables one to perform manipulations in high-level auditory representations from which reconstruction would previously have been impractical. Tacotron2 performs high-level processing on time-frequency representations of speech, and then uses WaveNet to output high-quality audio conditioned on the generated mel spectrogram. We adapt this general strategy to the music domain.

We propose TimbreTron, a pipeline that performs CQT-based timbre transfer with high-quality waveform output. It is trained only on unrelated samples of two instruments. For our time-frequency representation, we choose the constant Q transform (CQT), a perceptually motivated representation of music (Brown, 1991). We show that this representation is particularly well-suited to musical timbre transfer and other manipulations for two reasons. The first is its pitch equivariance. The second is that it simultaneously achieves high frequency resolution at low frequencies and high temporal resolution at high frequencies, a property that the STFT lacks.

TimbreTron performs timbre transfer in three steps, shown in Figure 1. First, it computes the CQT spectrogram and treats its log-magnitude values as an image (discarding phase information). Second, it performs timbre transfer in the log-CQT domain using a CycleGAN (Zhu et al., 2017). Finally, it converts the generated log-CQT to a waveform using a conditional WaveNet synthesizer (which must implicitly infer the missing phase information).
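The data flow of these three steps can be sketched as plain function composition, mirroring the title WaveNet(CycleGAN(CQT(Audio))). This is a minimal sketch under stated assumptions, not the authors' implementation:

- `cqt`, `cyclegan_generator` and `wavenet_vocoder` are hypothetical callables standing in for the trained components.
- The small `eps` inside the logarithm is an assumed guard against log(0).

What the sketch does show concretely is that phase is discarded after the first step. Two inputs that differ only in phase therefore produce identical outputs.

```python
import numpy as np


def log_magnitude(cqt_complex, eps=1e-6):
    """Keep log|C| as a real-valued 'image' and discard the phase of C."""
    return np.log(np.abs(cqt_complex) + eps)


def timbretron(audio, cqt, cyclegan_generator, wavenet_vocoder):
    """Compose the three stages: WaveNet(CycleGAN(log|CQT(audio)|)).

    `cqt`, `cyclegan_generator` and `wavenet_vocoder` are hypothetical
    stand-ins for the real trained components.
    """
    image = log_magnitude(cqt(audio))        # step 1: waveform -> log-CQT image, phase dropped
    transferred = cyclegan_generator(image)  # step 2: source timbre -> target timbre
    return wavenet_vocoder(transferred)      # step 3: log-CQT -> waveform (phase must be inferred)


# Toy usage: identity stand-ins, and two "CQTs" with equal magnitudes but different phases.
identity = lambda z: z
a = timbretron(np.array([1.0, -2.0j]), identity, identity, identity)
b = timbretron(np.array([-1.0, 2.0]), identity, identity, identity)
```

Because only magnitudes survive step 1, `a` and `b` are equal. Recovering a plausible phase is left entirely to the final synthesis stage.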
Empirically, TimbreTron can successfully perform musical timbre transfer on some instrument pairs. The generated audio samples have realistic timbre that matches the target timbre while otherwise expressing the same musical content (e.g., rhythm, loudness, pitch). We empirically verified that the CQT representation is a crucial component of TimbreTron: it consistently yields qualitatively better timbre transfer than its STFT counterpart.

Figure 1: The TimbreTron pipeline that performs timbre transfer from Violin to Flute: raw violin waveform → CQT → violin CQT → CycleGAN → generated flute CQT → conditional WaveNet → generated flute waveform.

2 BACKGROUND

2.1 TIME-FREQUENCY ANALYSIS

Time-frequency analysis refers to techniques that measure how a signal’s frequency-domain representation changes over time.

Short-Time Fourier Transform (STFT). The STFT is one of the most commonly applied techniques for this purpose. The discrete STFT can be compactly expressed as follows:

$$\mathrm{STFT}\{x[n]\}(m, \omega_k) = \sum_{n=-\infty}^{\infty} x[n]\, w[n - m]\, e^{-j \omega_k n}$$

The above formula computes the STFT of an input time-domain signal $x[n]$ at time step $m$ and frequency $\omega_k$. Here $w$ is a zero-centered window function (such as the Hann window), which masks out the values that are far from $m$. Hence, the equation above can be interpreted as the discrete Fourier transform of the masked signal $x[n]\, w[n - m]$. An example spectrogram is shown in Figure 2.

Constant Q Transform (CQT). The CQT (Brown, 1991) is another time-frequency analysis technique, in which the frequency values are geometrically spaced according to the following pattern (Blankertz): $\omega_k = 2^{k/b}\,\omega_0$. Here, $k \in \{1, 2, 3, \ldots, k_{\max}\}$ and $b$ is a constant that determines the geometric separation between the different frequency bands.
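The geometric spacing just defined can be checked numerically. The sketch below makes a few simplifying assumptions:

- It works in Hz rather than angular frequency; the factor $2\pi$ cancels in every ratio.
- It uses an illustrative $\omega_0$ of 32.70 Hz (roughly C1) and $b = 12$ bins per octave. These are assumptions, not TimbreTron's settings.
- It takes the bandwidth to be the gap to the next bin, $\Delta_k = \omega_{k+1} - \omega_k$, as the text defines next.

Under these assumptions it confirms that the ratio $\omega_k / \Delta_k$ is the same for every bin.

```python
import numpy as np


def cqt_center_frequencies(f0, b, k_max):
    """Geometrically spaced centre frequencies f_k = 2**(k / b) * f0 for k = 1..k_max."""
    k = np.arange(1, k_max + 1)
    return f0 * 2.0 ** (k / b)


def cqt_q_factor(b):
    """Q = f_k / (f_{k+1} - f_k) = 1 / (2**(1/b) - 1); it does not depend on k."""
    return 1.0 / (2.0 ** (1.0 / b) - 1.0)


b = 12                                              # illustrative: one bin per semitone
f = cqt_center_frequencies(f0=32.70, b=b, k_max=48) # four octaves above ~C1
bandwidths = np.diff(f)                             # Delta_k grows in proportion to f_k
q_per_bin = f[:-1] / bandwidths                     # same value for every bin
print(cqt_q_factor(b))                              # ≈ 16.82 for b = 12
```

For contrast, consider an STFT at 16 kHz with a 1024-point FFT (illustrative values). It has a fixed bin spacing of 15.625 Hz at every frequency. That is far wider than the roughly 2 Hz gap between the lowest CQT bins above, which is why low pitches are harder to resolve with the STFT.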
To make the filters for different frequencies adjacent to each other, the bandwidth of the $k$th filter is chosen as:

$$\Delta_k = \omega_{k+1} - \omega_k = \omega_k \left(2^{1/b} - 1\right).$$

This results in a constant ratio of frequency to resolution (also known as the “quality (Q) factor”):

$$Q = \frac{\omega_k}{\Delta_k} = \left(2^{1/b} - 1\right)^{-1}.$$

Huzaifah (2017) showed that the CQT consistently outperformed traditional representations such as Mel-frequency cepstral coefficients (MFCCs) in environmental sound classification tasks using CNNs.

Rainbowgram. Engel et al. (2017) introduced the rainbowgram, a visualization of the CQT which uses color to encode time derivatives of phase. This highlights subtle timbral features which are invisible in a magnitude CQT. Examples of CQTs and rainbowgrams are shown in Figure 2.

Figure 2: The STFT of a piano clip (left), the CQT of the same piano clip (second left), the rainbowgram of the same piano clip (second right) and the rainbowgram of a flute clip which has the same pitch as the first piano clip (right). Note that the harmonics of different pitches are approximate translations of each other in the CQT representation.

2.2 WAVEFORM RECONSTRUCTION FROM SPECTROGRAMS

Synthesis (waveform reconstruction) from the aforementioned time-frequency analysis techniques can be performed when both magnitude and phase information are available (Allen and Rabiner, 1977; Holighaus et al., 2013). In the absence of phase information, one of the common methods of synthetically generating phase from STFT magnitude is the Griffin-Lim algorithm (Griffin and Lim, 1984). This algorithm starts from a random guess of the phase values. It then iteratively refines them by performing STFT and inverse STFT operations until convergence, keeping the magnitude values fixed throughout the process.
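Below is a minimal Griffin-Lim sketch following the description above. It assumes SciPy's `scipy.signal.stft` and `scipy.signal.istft` with their default Hann window and 50% overlap. The segment length (512), the iteration count (50) and the relative-error diagnostic are illustrative choices, not values from the paper.

```python
import numpy as np
from scipy.signal import stft, istft


def _fit_length(x, n):
    """Crop or zero-pad a 1-D signal to exactly n samples."""
    return x[:n] if len(x) >= n else np.pad(x, (0, n - len(x)))


def griffin_lim(magnitude, n_samples, nperseg=512, n_iter=50, seed=0):
    """Estimate a waveform whose STFT magnitude approximates `magnitude`.

    Starts from random phase, then alternates inverse STFT and STFT,
    re-imposing the target magnitude at every step.
    """
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(magnitude.shape))  # random initial phase
    x = np.zeros(n_samples)
    errors = []
    for _ in range(n_iter):
        _, x = istft(magnitude * phase, nperseg=nperseg)  # target magnitude + current phase
        x = _fit_length(x, n_samples)
        _, _, spec = stft(x, nperseg=nperseg)             # re-analyse the new signal
        errors.append(np.linalg.norm(np.abs(spec) - magnitude) / np.linalg.norm(magnitude))
        phase = np.exp(1j * np.angle(spec))                # keep only the updated phase
    return x, errors


# Usage: reconstruct a two-partial tone from its magnitude spectrogram alone.
fs = 16_000
t = np.arange(fs) / fs
signal = np.sin(2 * np.pi * 440 * t) + 0.5 * np.sin(2 * np.pi * 880 * t)
_, _, target = stft(signal, nperseg=512)
recon, errs = griffin_lim(np.abs(target), len(signal))
```

The `errors` list tracks how far the magnitude of the current estimate is from the target. It is expected to be non-increasing over iterations, but it need not reach zero.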
The Griffin-Lim algorithm was developed to minimize the mean squared error between the target spectrogram and the predicted spectrogram. It is shown to reduce this objective at each iteration, but it has no optimality guarantees due to the non-convexity of the optimization problem (Griffin and Lim, 1984; Sturmel and Daudet). Although recent developments in the field have enabled performing the inverse operation of the CQT (Velasco et al., 2011; Fitzgerald et al., 2006), these techniques still require both phase and magnitude information.

2.3 WAVENET

WaveNet, proposed by van den Oord et al. (2016), is an autoregressive generative model for generating high-quality raw audio waveforms. The model consists of stacks of dilated causal convolution layers with residual and skip connections. WaveNet can easily be modified to perform conditional waveform generation. For example, it can be trained as a vocoder that synthesizes natural, high-quality human speech in TTS systems from low-level acoustic features such as phonemes, fundamental frequency, and spectrograms (Arik et al., 2017; Shen et al., 2017). One limitation of WaveNet is that waveform generation can be expensive, which is undesirable for training procedures that require autoregressive generation (e.g., GAN training, scheduled sampling).

2.4 GAN AND CYCLEGAN

Generative Adversarial Networks (GANs) are a class of implicit generative models introduced by Goodfellow et al. (2014). A GAN consists of a discriminator and a generator, which are trained adversarially via a two-player min-max game: the discriminator attempts to distinguish real data from samples, and the generator attempts to fool the discriminator.
The objective is:

$$G^*, D^* = \arg\min_G \max_D \; \mathbb{E}_{x \sim \mathcal{X}}\left[\log D(x)\right] + \mathbb{E}_{z \sim \mathcal{Z}}\left[\log\left(1 - D(G(z))\right)\right], \tag{1}$$

where $D$ is the discriminator, $G$ is the generator, $z$ is the latent code vector sampled from a Gaussian distribution $\mathcal{Z}$, and $x$ is sampled from the data distribution $\mathcal{X}$. GANs constituted a significant advance over previous generative models in terms of the quality of the generated samples.

CycleGAN (Zhu et al., 2017) is an architecture for unsupervised domain transfer: learning a mapping between two domains without any paired data. (Similar architectures were proposed independently by Yi et al. (2017); Liu et al. (2017); Kim et al. (2017).) The CycleGAN learns two generator mappings, $F: \mathcal{X} \to \mathcal{Y}$ and $G: \mathcal{Y} \to \mathcal{X}$, and two discriminators, $D_X: \mathcal{X} \to [0, 1]$ and $D_Y: \mathcal{Y} \to [0, 1]$. The loss function of CycleGAN consists of both adversarial losses (Eqn. 1), combined with a cycle consistency constraint which forces it to preserve the structure of the input:

$$\mathcal{L}_{cyc}(F, G, \mathcal{X}, \mathcal{Y}) = \mathbb{E}_{x \sim \mathcal{X}}\left[\lVert G(F(x)) - x \rVert_1\right] + \mathbb{E}_{y \sim \mathcal{Y}}\left[\lVert F(G(y)) - y \rVert_1\right] \tag{2}$$

3 MUSIC PROCESSING WITH CONSTANT-Q-TRANSFORM REPRESENTATION

This section focuses on the first and last steps of the TimbreTron pipeline: the steps that transform raw waveforms to and from time-frequency representations. We explain our reasoning for choosing the CQT representation. We then introduce our conditional WaveNet synthesizer, which converts a (possibly generated) CQT into a high-quality audio waveform.

3.1 CQT FOR MUSIC REPRESENTATION

The CQT representation (Brown, 1991) has desirable characteristics that make it especially suitable for processing musical audio signals. It uses a logarithmic representation of frequency, where the frequencies are generally chosen to exactly cover all the pitches present in the twelve-tone, well-tempered scale.
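The sketch below makes the link between the twelve-tone scale and CQT bins concrete. It maps equal-tempered pitches (A4 = 440 Hz) to fractional bin indices on a log-frequency axis. Two settings are assumptions for illustration:

- A bin resolution of 48 bins per octave, i.e. 4 bins per semitone.
- A lowest frequency of about 32.70 Hz (C1).

The values TimbreTron actually uses are not stated in this section. The sketch also previews the pitch-equivariance argument below: for ideal integer harmonics, raising a note by n semitones moves every harmonic by the same n·b/12 bins.

```python
import numpy as np

BINS_PER_OCTAVE = 48  # assumption: 4 bins per semitone
F_MIN = 32.70         # assumption: lowest CQT bin at roughly C1, in Hz


def midi_to_hz(midi_note):
    """Twelve-tone equal temperament with A4 (MIDI note 69) = 440 Hz."""
    return 440.0 * 2.0 ** ((np.asarray(midi_note) - 69) / 12.0)


def hz_to_cqt_bin(freq_hz):
    """Fractional CQT bin index of a frequency on the log2-frequency axis."""
    return BINS_PER_OCTAVE * np.log2(np.asarray(freq_hz) / F_MIN)


harmonics = np.arange(1, 9)                           # ideal integer harmonics h = 1..8
bins_c4 = hz_to_cqt_bin(harmonics * midi_to_hz(60))   # C4
bins_e4 = hz_to_cqt_bin(harmonics * midi_to_hz(64))   # E4, four semitones higher
shift = bins_e4 - bins_c4                             # identical for every harmonic
# Every harmonic moves by 4 * 48 / 12 = 16 bins: a pure vertical translation.
```

The shift is exactly constant only because the harmonics here are perfect integer multiples. Real instruments deviate from this, for the reasons listed below.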
Unlike the STFT, the CQT has higher frequency resolution towards lower frequencies and higher time resolution towards higher frequencies. The former gives better pitch resolution for lower-register instruments (such as cello or trombone). The latter is advantageous for recovering the fine timing of rhythms. Since individual notes contain information across many frequencies (due to their pattern of overtones), this combination of resolutions ought to allow simultaneous recovery of pitch and timing information for any particular note. (While this information is preserved in the signal, waveform recovery is a difficult problem in practice; this is discussed in Section 3.2.)

Another key feature of the CQT representation in the context of TimbreTron is (approximate) pitch equivariance. Thanks to the geometric spacing of frequencies, a pitch shift corresponds (approximately) to a vertical translation of the “spectral signature” (unique pattern of harmonics) of a musical instrument. This means that the convolution operation is approximately equivariant under pitch translation, which allows convolutional architectures to share structure between different pitches. A demonstration of this can be seen in Figure 3. The harmonics of a musical instrument are approximately integer multiples of the fundamental frequency. Scaling the fundamental frequency (and hence the pitch) therefore shifts all of the harmonics by the same constant amount on a log scale. We also want to emphasize some of the reasons why the equivariance is only approximate:

• Imperfect multiples: In real audio samples from instruments, the harmonics are only approximately integer multiples of the fundamental frequency, due to the material properties of the instruments producing the sound.
• Dependence of spectral signature on pitch and beyond: For each pitch, each instrument has a slightly different spectral signature, meaning that a simple translation along the frequency axis cannot completely account for the changes in the frequency spectrum. Furthermore, even at a given pitch, the spectral signature can change depending on how the note is played.

We used a 16 ms frame hop (256 time steps at 16 kHz). More details can be found in Appendix B.

Figure 3: The rainbowgram of a C major scale played by piano.

3.2 WAVEFORM RECONSTRUCTION FROM CQT REPRESENTATION USING CONDITIONAL WAVENET

Empirical studies have shown that it is difficult to directly predict phase in time-frequency representations (Engel et al., 2017). We therefore discard the phase information and perform the image-based processing directly on a log-amplitude CQT representation. As a result, in order to recover a waveform consistent with the generated CQT, we need to infer the missing phase information, which is a difficult problem (Velasco et al., 2011). To convert log-magnitude CQT spectrograms back to waveforms, we use a 40-layer conditional WaveNet with a dilation rate of $2^{k \bmod 10}$ for the $k$th layer. The model is trained using pairs of a CQT and a waveform. This requires only a collection of unlabeled waveforms, since the CQT can be computed from the waveform.³ See Appendix C.4 for the details of the WaveNet architecture. WaveNet-reconstructed audio samples can be found here.⁴

Beam Search. Because the conditional WaveNet generates stochastically from its predictive distribution, it sometimes produces low-probability outputs, such as hallucinated notes. Also, because it has difficulty modeling the local loudness, the loudness often drifts significantly over the timescale of seconds.
These issues could potentially be addressed by improving the WaveNet architecture or training method. We instead take the perspective that the WaveNet’s role is to produce a waveform which matches the target CQT. The above artifacts are macro-scale errors which happen only stochastically, so the WaveNet has a significant probability of producing high-quality output over a short segment (e.g., hundreds of milliseconds). Therefore, we perform a beam search over the WaveNet’s generations in order to better match the target CQT. See Appendix C.5 for more details about our beam search procedure.

Reverse Generation. In early experiments, we observed that percussive attacks (onset characteristics of an instrument in which it reaches a large amplitude quickly) are sometimes hard to model during forward generation, resulting in multiple attacks or missing attacks. We believe this problem has two causes. First, it is difficult to determine the onset of a note from a CQT spectrogram, in which information is blurred in frequency. Second, it is difficult to predict the precise pitch at the note onset because of the broad frequency spectrum at that moment. We found that the problems of missing and doubled attacks could be mostly solved by having the WaveNet generate the waveform samples in reverse order, from end to beginning.

4 TIMBRE TRANSFER WITH CYCLEGAN ON CQT REPRESENTATION

In this section, we describe the middle step of our TimbreTron pipeline, which performs timbre transfer on log-amplitude CQT representations of the waveforms. As training data, we have collections of unrelated recordings of different musical instruments. Hence, our timbre transfer problem on log-amplitude CQT “images” is an instance of unsupervised “image-to-image” translation. To achieve this, we applied the CycleGAN architecture, but adapted it in several ways to make it more effective for time-frequency representations of audio.

³ We up-sample the CQT spectrograms to the rate of the audio using nearest-neighbour interpolation before conditioning the WaveNet on them. The audio samples are quantized using 8-bit mu-law, and the output of the WaveNet is a softmax layer over the 256 quantized values. At test time, we run the conditional WaveNet autoregressively with zero as the initial sample value.
⁴ Link to WaveNet-reconstructed samples: www.cs.toronto.edu/~huang/TimbreTron/others.html#wavenet

Removing Checkerboard Artifacts. The convnet-resnet-deconvnet based generators from the original CycleGAN led to significant checkerboard artifacts in the generated CQT, which correspond to severe noise in the generated waveform. To alleviate this problem, we replaced the deconvolution operation with nearest-neighbor interpolation followed by a regular convolution, as recommended by Odena et al. (2016).

Full-Spectrogram Discriminator. The original CycleGAN’s transformations are local in nature, so Zhu et al. (2017) found it advantageous for the discriminator to process only a local patch of the image. However, when generating spectrograms, it is crucial that different partials of the same pitch be consistent with each other, and a discriminator which is local in frequency cannot enforce this. Therefore, we gave the discriminator the full spectrogram as input.

Gradient Penalty (GP). Replacing the patch discriminator with the full-spectrogram one led to unstable training dynamics because the discriminator was too powerful.
To compensate for this, we added the gradient penalty (GP) (Gulrajani et al., 2017) to enforce a soft Lipschitz constraint:

$$\mathcal{L}_{GP}(G, D, \mathcal{Z}, \hat{\mathcal{X}}) = \alpha \cdot \mathbb{E}_{\hat{x} \sim \hat{\mathcal{X}}}\left[\left(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\right)^2\right] \tag{3}$$

Here $\hat{\mathcal{X}}$ consists of samples taken along lines between the true data distribution $\mathcal{X}$ and the generator’s data distribution $\mathcal{X}_g = \{F(z) \mid z \sim \mathcal{Z}\}$. Each sample is a convex combination of a real data point and a generated data point. Fedus et al. (2018) showed empirically that the GP can stabilize GAN training. Furthermore, Gomez et al. (2018) showed that the GP can also stabilize and improve CycleGAN training with word embeddings. We observed the same benefits in our experiments.

Identity Loss. In addition to the adversarial loss and the reconstruction loss that we applied to the generators, we also added an identity loss. Zhu et al. (2017) proposed this loss to preserve color composition in the original CycleGAN. Empirically, we found that the identity loss helps the generators preserve musical content, which yields better audio quality. The loss penalizes each generator for altering an input that already belongs to its output domain:

$$\mathcal{L}_{identity}(F, G, \mathcal{X}, \mathcal{Y}) = \mathbb{E}_{y \sim \mathcal{Y}}\left[\lVert F(y) - y \rVert_1\right] + \mathbb{E}_{x \sim \mathcal{X}}\left[\lVert G(x) - x \rVert_1\right] \tag{4}$$

Our weighting of the identity loss followed a linear decay schedule (details in Appendix C.3). In this way, at the start of training, the generator is encouraged to learn a mapping that preserves pitch; as training progresses, the enforcement is reduced, allowing the generator to learn more expressive mappings. See Appendices C.2, C.3, and C.6 for more details of our CycleGAN architecture and our training and generation methods.

5 RELATED WORK

There is a long history of using clever representations of images or audio signals in order to perform manipulations which are not straightforward on the raw signals. In a seminal work, Tenenbaum and Freeman (1999) used a multilinear representation to separate the style and content of images.
Ulyanov and Lebedev (2016) and Verma and Smith (2018) then applied the optimization technique proposed by Gatys et al. (2015) to the audio domain by applying image-based architectures to spectrogram representations of the signals. Grinstein et al. (2017) took a similar approach, but used hand-crafted features to extract statistics from the spectrograms. However, a recent review by Dai et al. (2018) pointed out that the disentanglement of timbre and performance control information remains unsolved.

Zhu et al. (2017) introduced the CycleGAN approach to learn an “unsupervised image-to-image mapping” between two unpaired datasets, using two generator networks and two discriminator networks with generative adversarial training. Given the success of the CycleGAN in image-domain style transfer, Kaneko and Kameoka (2017) applied the same architecture to translate between human voices in the Mel-cepstral coefficient (MCEP) domain, and Brunner et al. (2018) applied it to musical style transfer with MIDI representations.

The aforementioned audio style transfer approaches have one limitation in common. Their reconstruction quality is bounded either by existing non-parametric algorithms for audio reconstruction or by existing MIDI synthesizers. Examples of the former are the Griffin-Lim algorithm for STFT-domain reconstruction (Griffin and Lim, 1984) and the WORLD vocoder for MCEP-domain reconstruction of speech signals (Morise et al., 2016).

Another strategy is to operate directly on waveforms. van den Oord et al. (2016) demonstrated high-quality audio generation using WaveNet. Following on this, Engel et al. (2017) proposed a WaveNet-style autoencoder model operating on raw waveforms that was capable of creating new, realistic timbres by interpolating between already existing ones. Donahue et al. (2018) proposed a method to synthesize waveforms directly using GANs, with improved quality over naive generative models such as SampleRNN (Mehri et al., 2016) and WaveNet. Mor et al. (2018) used an encoder-decoder approach for the timbre transfer problem. They trained a universal encoder to learn a shared representation of raw waveforms of various instruments, as well as instrument-specific decoders to reconstruct waveforms from the shared representation. In parallel work, Bitton et al. (2018) approached the many-to-many timbre transfer problem with their MoVE model, which is based on UNIT (Liu et al., 2017) but uses Maximum Mean Discrepancy (MMD) as its objective. Their approach has the advantage of training a single model for many transfer directions. Our TimbreTron model instead has the advantage of a GAN-based training objective, which (in the image domain) typically yields outputs of higher perceptual quality than VAEs.

6 EXPERIMENTS

We conducted two sets of experiments: 1) pitch-shifting and tempo-changing experiments to further validate our choice of the CQT representation; 2) tests of our full TimbreTron pipeline (along with ablation experiments to validate our architectural choices). See Appendix C for the details of our experimental setup. For this section, please listen to the audio samples provided on our website⁵ as you read along.

6.1 DATASETS

Training TimbreTron requires collections of unrelated recordings of the source and target instruments. We built our own MIDI and real-world datasets of classical music for training TimbreTron. Within each type of dataset, we gathered unrelated recordings of Piano, Flute, Violin and Harpsichord, and then divided the entire dataset into a training set and a test set. We ensured that the training and test sets were entirely disjoint in terms of musical content by splitting the datasets by musical piece.
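Splitting by piece is a grouped split: every recording of a given piece, whatever the instrument, goes to the same side. Below is a minimal sketch of such a split, together with the 4-second chunking described next. At the 16 kHz rate mentioned in Section 3.1, 4 s is 64,000 samples. Several details are hypothetical, since the paper does not say how pieces were assigned:

- the `(piece_id, waveform)` record format;
- the 20% test fraction;
- the random shuffling;
- dropping the final partial chunk.

```python
import numpy as np

SAMPLE_RATE = 16_000  # 16 kHz, as stated in Section 3.1
CHUNK_SECONDS = 4     # basic training unit for the CycleGAN and WaveNet


def split_by_piece(recordings, test_fraction=0.2, seed=0):
    """Assign whole pieces to train or test so that no piece appears in both.

    recordings: list of (piece_id, waveform) pairs; several recordings
    (e.g. of different instruments) may share the same piece_id.
    """
    pieces = sorted({piece for piece, _ in recordings})
    rng = np.random.default_rng(seed)
    rng.shuffle(pieces)                                   # random order of pieces, in place
    n_test = max(1, int(round(test_fraction * len(pieces))))
    test_pieces = set(pieces[:n_test])
    train = [r for r in recordings if r[0] not in test_pieces]
    test = [r for r in recordings if r[0] in test_pieces]
    return train, test


def to_chunks(waveform, chunk_seconds=CHUNK_SECONDS, sr=SAMPLE_RATE):
    """Cut a 1-D waveform into non-overlapping fixed-length chunks, dropping the remainder."""
    n = chunk_seconds * sr
    n_chunks = len(waveform) // n
    return waveform[: n_chunks * n].reshape(n_chunks, n)
```

Splitting before chunking matters here. If chunks were assigned at random instead, different 4-second pieces of the same recording could land in both sets, leaking musical content into the test set.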
The training dataset was divided into 4-second chunks, which were the basic units processed by our CycleGAN and WaveNet. Links to source audio and more details about our dataset are given in Appendix C.1.

6.2 DISENTANGLING PITCH AND TEMPO USING CQT REPRESENTATION

Before presenting our timbre transfer results, we first consider the simpler task of disentangling pitch and tempo. Recall that the two properties are entangled in the time-domain representation. For example, subsampling the waveform simultaneously increases the tempo and raises the pitch. Changing the two independently requires more sophisticated analysis of the signal. In the context of our TimbreTron pipeline, the CQT’s pitch equivariance means that pitch shifting can be (approximately) performed simply by translating the CQT representation along the log-frequency axis. (Since the STFT uses linearly sampled frequencies, it does not lend itself easily to this type of simple transformation.) Audio time stretching can be done with either the CQT or the STFT representation, combined with the WaveNet synthesizer, by changing the number of waveform samples generated per CQT window. Regardless of the number of samples generated, the WaveNet synthesizer is able to produce the correct pitch based on the local frequency content. (See section 6.2 of the OneDrive folder.) In conclusion, our method was able to vary the pitch and tempo independently while otherwise preserving the timbre and musical structure.

⁵ Link to the final samples: https://www.cs.toronto.edu/~huang/TimbreTron/samples_page.html

6.3 TIMBRE TRANSFER EXPERIMENTS

Most of our timbre transfer experiments are conducted on real-world music recordings. We also use synthetic MIDI audio data in our ablation studies, because with MIDI it is possible to produce paired datasets for evaluation purposes.
In this section, we present our experimental findings on the full TimbreTron pipeline using real-world data, verify our reasoning about the CQT, and show the generalization capability of TimbreTron.

Comparing CQT and STFT Representations. One of the key design choices in TimbreTron was whether to use an STFT or a CQT representation. If the STFT representation is used, there is an additional choice of whether to reconstruct using the Griffin-Lim algorithm or the conditional WaveNet synthesizer. We found that the STFT-based pipeline had two problems:

1) It sometimes failed to correctly transfer low pitches, likely due to the STFT’s poor frequency resolution at low frequencies.
2) It sometimes produced a random permutation of pitches.

For example, we ran TimbreTron on a Bach piano sample played by a professional musician. The STFT TimbreTron transposed parts of the longer excerpt by different amounts, and seemed to fail to transpose a few notes in particular by the same amount as the others. As the audio samples here⁶ show, those problems were completely solved by the CQT TimbreTron (likely due to the CQT’s pitch equivariance and higher frequency resolution at low frequencies). Both artifacts occurred with both the WaveNet and the Griffin-Lim reconstruction methods (see Table 4). This suggests that the artifacts most likely originate in the CycleGAN stage of the pipeline. (Please listen to the corresponding samples in section 6.3 of the OneDrive folder.) This empirically demonstrates the effectiveness of the CQT representation compared with the STFT.

Generalizing from MIDI to Real-World Audio. To further explore the generalization capability of TimbreTron, we also tried a domain adaptation experiment. We took a CycleGAN trained on MIDI data and tested it on the real-world test dataset. We then synthesized audio with a WaveNet trained on the real-world training data.
As the corresponding audio examples⁷ show, the generated audio is of very good quality: pitch is preserved and timbre is transferred. The ability to generalize from MIDI to real-world audio is interesting because it opens up the possibility of training on paired examples.

⁶ Link to audio samples for the STFT vs. CQT comparison: https://www.cs.toronto.edu/~huang/TimbreTron/others.html#cqt_stft

⁷ Link to audio samples for generalization: www.cs.toronto.edu/~huang/TimbreTron/others.html#generalization

6.4 Evaluation with Amazon Mechanical Turk (AMT)

We conducted a human study to investigate whether TimbreTron could transfer the timbre of a reference collection of signals while otherwise preserving the musical content. We also evaluated the effectiveness of the CQT representation by comparing TimbreTron against a variant in which the CQT is replaced by the STFT. All results are shown in Tables 2, 3 and 4 and are discussed in detail in this section. The questions asked in the AMT study are listed in Table 1.

Does TimbreTron transfer timbre? To be effective, the system must transform a given audio input so that the output is (1) recognizable as the same (or an appropriately similar) basic musical piece, and (2) recognizable as the target instrument. We address both criteria with two types of comparison-based experiments: instrument similarity and musical piece similarity (see Table 1). Table 2 shows results for the instrument similarity comparison, and Table 3 shows results for the musical piece similarity comparison. Respondents were also asked to give their subjective judgment of the instrument used in the provided samples. The original questionnaire can be found here⁸.

⁸ Link to the AMT questionnaire for “Does TimbreTron transfer timbre?”: https://www.cs.toronto.edu/~huang/TimbreTron/AMT_Does_TimbreTron_transfer_Timbre.html

Listen to two audio clips: (Embedded link for clip A and clip B). The clip A and B may be similar in some ways, and different in others. Rate their similarities with the following criteria:

- (i) Instrument similarity:
  - (a) Instrument is very similar (e.g. A and B were generated with two different pianos)
  - (b) Instrument is similar (A and B are in the same family: both wind instrument, or both string instrument, etc)
  - (c) Instrument is different
  - (d) I don't know
- (ii) Musical piece similarity:
  - (a) Musical pieces are nearly identical (e.g. A and B are two different performances of the same piece: perhaps a few notes are different, perhaps timing is slightly different)
  - (b) Musical pieces are very similar (e.g. A and B are different versions of the same piece, e.g. two different arrangements)
  - (c) Musical pieces are related (e.g. A and B are two different, but related, pieces)
  - (d) Entirely different (unrelated) musical piece
  - (e) I don't know
- (iii) What instrument did clip A primarily sound like to you?
- (iv) What instrument did clip B primarily sound like to you?

Table 1: The exact question format used in the AMT studies. For parts (i) and (ii), participants were asked to choose one answer among the options. For parts (iii) and (iv), a text box was provided for participants to type in their answers.

| Total samples | Audio sample pair | Very similar | Similar | Different | Do not know |
|---|---|---|---|---|---|
| 120 | Target instrument & TimbreTron generation | 49.2% | 35.8% | 15.0% | 0.0% |
| 60 | Original instrument & TimbreTron generation | 26.7% | 18.3% | 55.0% | 0.0% |

Table 2: AMT results on pairwise instrument comparisons between our proposed TimbreTron (without beam search), the ground-truth original instrument, and the ground-truth target instrument. This corresponds to question type (i) in Table 1.

(1) Preserving the musical piece. A different instrument playing the same notes may not always sound subjectively like the same “piece”. In musical contexts, when a piece is adapted from one instrument to another, the notes themselves are often changed; this is generally referred to as a new “arrangement” of an existing piece. Thus, even when we had a recording available in the target domain, its exact notes or timings were not always identical to those in the original recording from which we transferred. Overall, when we did have such a target-domain recording of a real instrument, we found that for the pair (real target instrument, TimbreTron-generated target instrument), about 88% of responses considered the musical pieces to be nearly identical or very similar, while 10.8% considered them related and 1.7% considered them entirely different (details in Table 3). Thus, the musical piece generally appears to be preserved.

(2) Transferring the timbre. Evaluating this is challenging: if the transfer is not perfect (which it is not), then judging the similarity of not-quite-identical instruments is fraught with perceptual challenges. With this in mind, we included a range of pairwise comparisons and gave a Likert scale with various anchors. Overall, we found that for the pair (ground-truth target audio, TimbreTron-generated audio), roughly 85% of responses considered the instrument generating the audio to be very similar (e.g., still piano, but a different piano) or similar (e.g., another string instrument). More details are given in Table 2.

We also asked participants, with an open-ended question, to identify the instrument they heard in some of the audio excerpts. Generally, participants either identified the correct instrument or confused it with a very similar-sounding one. For example, one participant described a generated harpsichord as a banjo, which is in fact very close to the harpsichord in terms of timbre.
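The aggregated percentages quoted in (1) and (2) are sums of per-category entries in Tables 3 and 2. The short Python check below is illustrative and not part of the original study: it recomputes those sums from the target-instrument rows. Treating the “Total samples” column as a count of individual responses is an assumption, used only to show that the rounded percentages are consistent with whole-number counts.

```python
"""Recompute the aggregated AMT figures quoted in (1) and (2) from Tables 2 and 3."""

# Percent of responses per answer category, copied from the tables
# (row: ground-truth target-instrument recording vs. TimbreTron generation).
TABLE2_TARGET = {"very similar": 49.2, "similar": 35.8, "different": 15.0, "do not know": 0.0}
TABLE3_TARGET = {
    "nearly identical": 41.7,
    "very similar": 45.8,
    "related": 10.8,
    "entirely different": 1.7,
    "do not know": 0.0,
}
TOTAL = 120  # "Total samples" for these rows; assumed here to count individual responses


def combined_share(row, categories):
    """Sum the percentages of the selected answer categories."""
    return round(sum(row[c] for c in categories), 1)


def implied_counts(row, total):
    """Approximate whole-number counts implied by rounded percentages (an assumption)."""
    return {c: round(p * total / 100) for c, p in row.items()}


piece_preserved = combined_share(TABLE3_TARGET, ["nearly identical", "very similar"])
timbre_close = combined_share(TABLE2_TARGET, ["very similar", "similar"])
print("musical piece nearly identical or very similar (%):", piece_preserved)
print("instrument very similar or similar (%):", timbre_close)
print("implied counts for Table 3:", implied_counts(TABLE3_TARGET, TOTAL))
```

The musical-piece figure evaluates to 87.5%, which the text rounds to about 88%. The implied counts sum to the stated total for both tables.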
Participants also made similarly reasonable confusions when identifying ground-truth instruments (e.g., one participant described a real harpsichord as a sitar). Based on the perceptual evaluations above, we conclude that TimbreTron is able to transfer timbre recognizably while preserving the musical content.

| Total samples | Audio sample pair | Nearly identical | Very similar | Related | Entirely different | Do not know |
|---|---|---|---|---|---|---|
| 120 | Target instrument & TimbreTron generation | 41.7% | 45.8% | 10.8% | 1.7% | 0.0% |
| 60 | Original instrument & TimbreTron generation | 33.3% | 30.0% | 20.0% | 16.7% | 0.0% |

Table 3: AMT results on pairwise musical piece comparisons between our proposed TimbreTron (without beam search), the ground-truth original instrument, and the ground-truth target instrument. This corresponds to question type (ii) in Table 1.

| Total samples | Audio sample | CQT | Same | STFT |
|---|---|---|---|---|
| 240 | STFT + WaveNet counterpart | 46.7% | 25.8% | 27.5% |
| 180 | STFT + Griffin-Lim counterpart | 56.7% | 27.5% | 15.8% |

Table 4: AMT results on timbre quality comparisons between our proposed TimbreTron, TimbreTron with STFT and WaveNet, and TimbreTron with STFT and Griffin-Lim. Participants were asked: which one of the following two samples sounds more like the instrument provided in the target instrument sample? The CQT, Same, and STFT columns give the share of responses favoring the CQT TimbreTron sample, judging both samples the same, or favoring the STFT counterpart.

Comparing CQT vs. STFT. To empirically test whether our proposed TimbreTron with the CQT representation is better than its STFT-WaveNet or STFT-Griffin-Lim counterparts, we conducted a human study using AMT. The original questionnaire can be found here⁹. In the questionnaire, we asked Turkers to listen to three audio clips: an original recording of the target instrument (the “instrument example”), the TimbreTron-generated audio for that instrument, and its STFT counterpart. We then asked them: “In your opinion, which one of A and B sounds more like the instrument provided in ‘instrument example’?”, where A and B are the two generated samples (presented in random order). Naturally, sounding closer to the “instrument example” indicates better timbre quality.

We conducted two groups of experiments. In the first group, the STFT counterpart uses a WaveNet and a CycleGAN trained on the STFT representation; the results are in the first row of Table 4: the CQT TimbreTron was preferred in 46.7% of responses, versus 27.5% for the STFT counterpart. In the second group, we took the same CycleGAN trained on the STFT but generated the waveform with the Griffin-Lim algorithm instead. The results are in the second row: the preference for the CQT TimbreTron was even stronger (56.7% versus 15.8%). In conclusion, compared with the Griffin-Lim baseline, training a WaveNet on the STFT improved timbre quality only marginally, whereas samples generated by TimbreTron trained on the CQT were consistently judged to have better timbre quality.

6.5 Ablation Study for TimbreTron

To better understand and justify each modification we made to the original CycleGAN, we conducted an ablation study in which we removed one modification at a time for the MIDI CQT experiment. (We used MIDI data for the ablation because the dataset has paired samples, which provide a convenient ground truth for evaluating transfer quality.) Figure 4 illustrates the contribution of each modification to the success of TimbreTron.

7 Conclusion

We presented TimbreTron, a pipeline for performing high-quality timbre transfer on musical waveforms using CQT-domain style transfer. We perform the timbre transfer in the time-frequency domain and then synthesize the output waveform using a WaveNet, which circumvents the difficulty of phase recovery from an amplitude CQT. The CQT is particularly well suited to convolutional architectures due to its approximate pitch equivariance.
The entire pipeline can be trained on unrelated real-world music segments, and, intriguingly, the MIDI-trained CycleGAN generalized to real-world musical signals. Based on an AMT study, we confirmed that TimbreTron recognizably transferred the timbre while otherwise preserving the musical content, for both monophonic and polyphonic samples. We believe this work constitutes a proof of concept for CQT-domain manipulation of musical signals with high-quality waveform outputs.

⁹ Link to the AMT questionnaire for “Comparing CQT vs. STFT”: https://www.cs.toronto.edu/~huang/TimbreTron/AMT_Comparing_CQT_vs_STFT.html

Figure 4: Rainbowgrams of the 4-second audio samples for the ablation study on the MIDI test dataset. The source ground truth and the target ground truth come from a paired sample in the dataset. All other audio samples are timbre transfer results from the source ground truth using different versions (full and ablated) of TimbreTron. “Full Model” corresponds to the output of our final TimbreTron, which is perceptually closest to the target ground truth and has the best audio quality. “Original discriminator” and “Original generator” correspond to the TimbreTron pipeline with the discriminator or the generator replaced by the one from the original CycleGAN. “No gradient penalty”, “No identity loss”, and “No data augmentation” refer to the full model without the corresponding modification. “Baseline” is the original CycleGAN (Zhu et al., 2017).

Acknowledgments

We thank Doug Eck, Jesse Engel, and Phillip Isola for helpful discussions.

References

Jont B Allen and Lawrence R Rabiner. A unified approach to short-time Fourier analysis and synthesis. Proceedings of the IEEE, 65(11):1558–1564, 1977.

Sercan O Arik, Mike Chrzanowski, Adam Coates, Gregory Diamos, Andrew Gibiansky, Yongguo Kang, Xian Li, John Miller, Jonathan Raiman, Shubho Sengupta, et al. Deep voice: Real-time neural text-to-speech. arXiv preprint, 2017.

Adrien Bitton, Philippe Esling, and Axel Chemla-Romeu-Santos. Modulated variational auto-encoders for many-to-many musical timbre transfer. arXiv preprint, 2018.

Benjamin Blankertz. The constant Q transform. URL http://doc.ml.tu-berlin.de/bbci/material/publications/Bla_constQ.pdf.

Judith C Brown. Calculation of a constant Q spectral transform. The Journal of the Acoustical Society of America, 89(1):425–434, 1991.

Gino Brunner, Yuyi Wang, Roger Wattenhofer, and Sumu Zhao. Symbolic music genre transfer with CycleGAN. arXiv preprint, 2018.

J. M. Chowning. The synthesis of complex audio spectra by means of frequency modulation. Journal of the Audio Engineering Society, 21(7):526–534, 1973.

Casey Chu, Andrey Zhmoginov, and Mark Sandler. CycleGAN, a master of steganography. CoRR, abs/1712.02950, 2017.

Shuqi Dai, Zheng Zhang, and Gus Xia. Music style transfer issues: A position paper. CoRR, abs/1803.06841, 2018.

Chris Donahue, Julian McAuley, and Miller Puckette. Synthesizing audio with generative adversarial networks. CoRR, abs/1802.04208, 2018.

Jesse Engel, Cinjon Resnick, Adam Roberts, Sander Dieleman, Douglas Eck, Karen Simonyan, and Mohammad Norouzi. Neural audio synthesis of musical notes with WaveNet autoencoders. CoRR, abs/1704.01279, 2017.

William Fedus, Mihaela Rosca, Balaji Lakshminarayanan, Andrew M Dai, Shakir Mohamed, and Ian Goodfellow. Many paths to equilibrium: GANs do not need to decrease a divergence at every step. 2018.

Derry Fitzgerald, Matt Cranitch, and Marcin T Cychowski. Towards an inverse constant Q transform. In Conference papers, page 12, 2006.

Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge. A neural algorithm of artistic style. CoRR, abs/1508.06576, 2015.

Vienna Symphonic Library GmbH, 2018. URL https://www.vsl.co.at/en/Products.

Aidan N Gomez, Sicong Huang, Ivan Zhang, Bryan M Li, Muhammad Osama, and Lukasz Kaiser. Unsupervised cipher cracking using discrete GANs. arXiv preprint, 2018.

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial nets. In Advances in Neural Information Processing Systems, pages 2672–2680, 2014.

Daniel Griffin and Jae Lim. Signal estimation from modified short-time Fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing, 32(2):236–243, 1984.

Eric Grinstein, Ngoc Q. K. Duong, Alexey Ozerov, and Patrick Pérez. Audio style transfer. CoRR, abs/1710.11385, 2017.

Ishaan Gulrajani, Faruk Ahmed, Martin Arjovsky, Vincent Dumoulin, and Aaron Courville. Improved training of Wasserstein GANs. arXiv preprint, 2017.

Jeffrey Hass. Chapter one: An acoustics primer, 2018. URL http://www.indiana.edu/~emusic/etext/acoustics/chapter1_loudness.shtml.

Judy Hoffman, Eric Tzeng, Taesung Park, Jun-Yan Zhu, Phillip Isola, Kate Saenko, Alexei A Efros, and Trevor Darrell. CyCADA: Cycle-consistent adversarial domain adaptation. arXiv preprint arXiv:1711.03213, 2017.

Nicki Holighaus, Monika Dörfler, Gino Angelo Velasco, and Thomas Grill. A framework for invertible, real-time constant-Q transforms. IEEE Transactions on Audio, Speech, and Language Processing, 21(4):775–785, 2013.

Ehsan Hosseini-Asl, Yingbo Zhou, Caiming Xiong, and Richard Socher. Augmented cyclic adversarial learning for low resource domain adaptation. 2018a.

Ehsan Hosseini-Asl, Yingbo Zhou, Caiming Xiong, and Richard Socher. A multi-discriminator CycleGAN for unsupervised non-parallel speech domain adaptation. arXiv preprint, 2018b.

Muhammad Huzaifah. Comparison of time-frequency representations for environmental sound classification using convolutional neural networks. arXiv preprint, 2017.

Justin Johnson, Alexandre Alahi, and Li Fei-Fei. Perceptual losses for real-time style transfer and super-resolution. In European Conference on Computer Vision, pages 694–711. Springer, 2016.

Takuhiro Kaneko and Hirokazu Kameoka. Parallel-data-free voice conversion using cycle-consistent adversarial networks. arXiv preprint arXiv:1711.11293, 2017.

Taeksoo Kim, Moonsu Cha, Hyunsoo Kim, Jungkwon Lee, and Jiwon Kim. Learning to discover cross-domain relations with generative adversarial networks. arXiv preprint arXiv:1703.05192, 2017.

Diederik P Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

librosa. librosa. https://librosa.github.io.

Ming-Yu Liu, Thomas Breuel, and Jan Kautz. Unsupervised image-to-image translation networks. arXiv preprint arXiv:1703.00848, 2017.

Stephen McAdams and Albert Bregman. Hearing musical streams. Computer Music Journal, 3(4):26–43+60, December 1979.

Soroush Mehri, Kundan Kumar, Ishaan Gulrajani, Rithesh Kumar, Shubham Jain, Jose Sotelo, Aaron C. Courville, and Yoshua Bengio. SampleRNN: An unconditional end-to-end neural audio generation model. CoRR, abs/1612.07837, 2016.

Noam Mor, Lior Wolf, Adam Polyak, and Yaniv Taigman. A universal music translation network. arXiv preprint arXiv:1805.07848, 2018.

Masanori Morise, Fumiya Yokomori, and Kenji Ozawa. WORLD: a vocoder-based high-quality speech synthesis system for real-time applications. IEICE Transactions on Information and Systems, 99(7):1877–1884, 2016.

Augustus Odena, Vincent Dumoulin, and Chris Olah. Deconvolution and checkerboard artifacts. Distill, 2016. doi: 10.23915/distill.00003. URL http://distill.pub/2016/deconv-checkerboard.

Jean-Claude Risset and David Wessel. Exploration of timbre by analysis and synthesis. In Diana Deutsch, editor, The Psychology of Music, pages 113–169. Elsevier, 2nd edition, 1999.

Juan G Roederer. The physics and psychophysics of music: an introduction. Springer Science & Business Media, 2008.

Jonathan Shen, Ruoming Pang, Ron J Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, et al. Natural TTS synthesis by conditioning WaveNet on mel spectrogram predictions. arXiv preprint, 2017.

Julius O. Smith III. Physical Audio Signal Processing: for Virtual Musical Instruments and Audio Effects. W3K Publishing, 2010.

Julius O. Smith III. Spectral Audio Signal Processing. W3K Publishing, 2011.

Nicolas Sturmel and Laurent Daudet. Signal reconstruction from STFT magnitude: A state of the art.

Joshua B Tenenbaum and William T Freeman. Separating style and content with bilinear models. 1999.

Dmitry Ulyanov and Vadim Lebedev. Audio texture synthesis and style transfer. 2016. URL https://dmitryulyanov.github.io/audio-texture-synthesis-and-style-transfer/.

Dmitry Ulyanov, Vadim Lebedev, Andrea Vedaldi, and Victor S. Lempitsky. Texture networks: Feed-forward synthesis of textures and stylized images. CoRR, abs/1603.03417, 2016. URL http://arxiv.org/abs/1603.03417.

Aäron van den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew W. Senior, and Koray Kavukcuoglu. WaveNet: A generative model for raw audio. CoRR, abs/1609.03499, 2016.

Gino Angelo Velasco, Nicki Holighaus, Monika Dörfler, and Thomas Grill. Constructing an invertible constant-Q transform with non-stationary Gabor frames. Proceedings of DAFX11, Paris, pages 93–99, 2011.

Prateek Verma and Julius O Smith. Neural style transfer for audio spectograms. arXiv preprint arXiv:1801.01589, 2018.

Zili Yi, Hao Zhang, Ping Tan Gong, et al.
DualGAN: Unsupervised dual learning for image-to-image translation. arXiv preprint, 2017.

Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A. Efros. Unpaired image-to-image translation using cycle-consistent adversarial networks. CoRR, abs/1703.10593, 2017. URL http://arxiv.org/abs/1703.10593.

A Components of a Musical Tone

In this section, we briefly describe the main components of a musical tone: pitch, loudness, and timbre (Roederer, 2008).

Pitch is described subjectively as the “height” of a musical tone, and is closely tied to the fundamental mode of oscillation of the instrument producing the tone. This oscillation mode is often called the fundamental frequency, and it can often be observed as the lowest band in spectrogram visualizations (Figure 3).

Loudness is linked to the perception of sound pressure, and is often subjectively described as the “intensity” of the tone. It roughly correlates with the amplitude of the waveform of the perceived tone, and depends weakly on pitch (Hass, 2018).

Timbre is the perceptual quality of a musical tone that enables us to distinguish between different instruments and sound sources with the same pitch and loudness (Roederer, 2008). The physical characteristics that define the timbre of a tone are its energy spectrum (the magnitude of the corresponding spectrogram) and its envelope. Since sounds generated by physical instruments mostly rely on oscillations of physical material, the energy spectra of instruments consist of bands that correspond (approximately) to integer multiples of the fundamental frequency. These multiples are called harmonics, or overtones, and can be observed in Figure 3. The timbre of an instrument is tightly related to the relative strengths of its harmonics. The spectral signature of an instrument not only depends on the pitch of the tone played, but also changes over time.
To see this clearly, consider a single piano note of 500 milliseconds played in reverse: the resulting sound will not be recognizable as a piano, although it will have the same spectral energy. The envelope of a tone describes how the instantaneous amplitude changes over time. It is mainly affected by the instrument's attack time (the transient “noise” created by the instrument when it is first played), decay/sustain (how the amplitude decreases over time, or can be sustained by the player of the instrument), and release (the very end of the tone, after the player “releases” the note). All these factors add to the complexity and richness of an instrument's sound, while also making it difficult to model explicitly.

B Spectrogram Processing Details

Waveform to CQT Spectrogram. Using the constant-Q transform described in Section 2.1, the CQT spectrogram can easily be computed from time-domain waveforms. In this work, we use a 16 ms frame hop (256 time steps at 16 kHz), $\omega_0 = 32.70$ Hz (the frequency of C1¹⁰), $b = 48$, and $k_{\max} = 336$ for the CQT. Standard implementations of the CQT (e.g., librosa (librosa)) also allow scaling the Q values by a constant $\gamma > 0$ for finer control over time resolution; choosing $\gamma \in (0, 1)$ increases the time resolution. In our experiments, we choose $\gamma = 0.8$. After the transformation, we take the log magnitude of the CQT spectrogram as the spectrogram representation.

¹⁰ C1 refers to the “C1” key, corresponding to the lowest “C” on the piano keyboard.

Waveform to STFT Spectrogram. All STFT spectrograms are generated using an STFT with $k_{\max} = 337$. The window function is a Hann window with a window length of 672. A 16 ms frame hop (256 time steps at 16 kHz) is also used.
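The resolution and pitch-equivariance arguments from the CQT vs. STFT comparison in Section 6.3 can be checked directly against these parameters. The NumPy sketch below is illustrative and is not the authors' code. It computes fractional bin indices from the textbook definitions: geometric CQT bins $f_k = \omega_0 \cdot 2^{k/b}$ and linear STFT bins $f_k = k \cdot \mathrm{sr}/N$. The window-length formula follows Brown (1991), with Q scaled by $\gamma$; this is an assumption. Libraries such as librosa, whose `filter_scale` argument plays the role of $\gamma$, compute filter lengths somewhat differently.

```python
import numpy as np

# Front-end parameters quoted in Appendix B.
SR = 16_000      # sampling rate (Hz)
HOP = 256        # frame hop in samples
F_MIN = 32.70    # omega_0: frequency of C1 (Hz)
B = 48           # CQT bins per octave
K_MAX = 336      # number of CQT bins (7 octaves)
GAMMA = 0.8      # scaling factor applied to Q
N_FFT = 672      # Hann window length; a real FFT yields N_FFT // 2 + 1 = 337 bins


def cqt_bin_index(f):
    """Fractional CQT bin index of frequency f, from f_k = F_MIN * 2**(k / B)."""
    return B * np.log2(np.asarray(f, dtype=float) / F_MIN)


def stft_bin_index(f):
    """Fractional STFT bin index of frequency f, from f_k = k * SR / N_FFT."""
    return np.asarray(f, dtype=float) * N_FFT / SR


def cqt_window_lengths():
    """Textbook window lengths N_k = Q * SR / f_k (Brown, 1991), with Q scaled by GAMMA."""
    q = GAMMA / (2.0 ** (1.0 / B) - 1.0)
    freqs = F_MIN * 2.0 ** (np.arange(K_MAX) / B)
    return q * SR / freqs


# First eight harmonics of C2 (one octave above C1), and the same tone one semitone higher.
harmonics = 2 * F_MIN * np.arange(1, 9)
transposed = harmonics * 2.0 ** (1.0 / 12.0)

cqt_shift = cqt_bin_index(transposed) - cqt_bin_index(harmonics)
stft_shift = stft_bin_index(transposed) - stft_bin_index(harmonics)

print("Frame hop (ms):", 1000 * HOP / SR)
print("STFT bin spacing (Hz):", round(SR / N_FFT, 2))
print("CQT shift per harmonic (bins):", np.round(cqt_shift, 3))
print("STFT shift per harmonic (bins):", np.round(stft_shift, 3))
print("CQT window at lowest/highest bin (samples):", np.round(cqt_window_lengths()[[0, -1]]))
```

Transposing by one semitone moves every harmonic by exactly $b/12 = 4$ CQT bins. This is a pure translation, which convolutional filters can share across pitches. The corresponding STFT shift instead grows with the harmonic number, by about 0.16 bins per harmonic. With an STFT bin spacing of about 23.8 Hz, C2 and C♯2 (about 3.9 Hz apart) fall into the same STFT bin.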
As with the CQT spectrogram, we take the log magnitude of the STFT as the spectrogram representation.

C Detailed Experimental Settings

C.1 Datasets

MIDI Dataset. Our MIDI dataset consists of two parts: MIDI-BACH¹¹ and MIDI-Chopin¹². The MIDI-BACH dataset is synthesized from a collection of Bach MIDI files with a total duration of around 10 hours¹³. Each dataset contains 6 instruments: acoustic grand, violin, electric guitar, flute, and harpsichord. We generated the audio with the same melody but different timbres, which makes it possible to obtain paired data during evaluation.

¹¹ From website www.jsbach.net/midi/

¹² From website www.piano-midi.de/chopin.htm

¹³ For all the synthesized audio, we use the TiMidity++ synthesizer.

Real World Dataset. Our Real World Dataset consists of data collected from YouTube videos of people performing solo on different instruments, including piano, harpsichord, violin, and flute. Each instrument has around 3 to 10 hours of recordings. Below is the complete list of YouTube links from which we collected our Real World Dataset. Note that we also randomly held out some segments for the validation set.

- Piano
  - https://www.youtube.com/watch?v=cOrKeFUZSJ0
  - https://www.youtube.com/watch?v=GujB0ahKFrY
  - https://www.youtube.com/watch?v=0sDleZkIK-w&t=629s
- Harpsichord
  - https://www.youtube.com/watch?v=oeY4a4C-Xuk&t=1555s
  - https://www.youtube.com/watch?v=Seu9ju7g9u8
- Violin
  - https://www.youtube.com/watch?v=wtbIT8ALNEA&t=21s
  - https://www.youtube.com/watch?v=XkZvyA69wCo
- Flute
  - https://www.youtube.com/watch?v=6GwfuWhOOdY
  - https://www.youtube.com/watch?v=s6CUi8Gthzc
  - https://www.youtube.com/watch?v=uE9SjAqPGsc&t=1001s

C.2 Domain-Specific Global Normalization

As shown in Figure 5 in Appendix C.7, the distribution of spectrogram pixel magnitudes is roughly centered at −2. This is not good for learning, because the tanh activation function works better when the activation is in the range $[-1, 1]$. Thus, we globally normalized the spectrogram data to lie mostly in the range $[-1, 1]$ for each instrument domain. To achieve this domain-specific global normalization in the input pipeline, we scaled and shifted the spectrograms based on the mean and standard deviation of each instrument domain. We then reversed this operation on the output of the CycleGAN, to minimize possible distribution shift before feeding the output to the WaveNet for generation.

C.3 CycleGAN Training Details

Because we made several architectural changes to the CycleGAN, we retuned the hyperparameters. The weighting of our cycle consistency loss is 10, and the weighting of the identity loss is 5. In the original CycleGAN, the weighting of the identity loss is constant throughout training. In our experiments, it stays constant for the first 100,000 steps and then starts to decay linearly to 0. We set the weighting of the gradient penalty to 10, as suggested by Gulrajani et al. (2017). Our learning rate is exponentially warmed up to 0.0001 over 2,500 steps and then stays constant; at step 100,000 it starts to decay linearly to zero. Training runs for a total of 1.5 million steps with the Adam optimizer (Kingma and Ba, 2014), with $\beta_1 = 0$, $\beta_2 = 0.9$, and a batch size of 1.

C.4 Conditional WaveNet Training

For the conditional WaveNet, we used a kernel size of 3 for all the dilated convolution layers and for the initial causal convolution. The residual connections and the skip connections all have a width of 256 in every residual block. The initial causal convolution maps from a channel size of 1 to 256. The dilated convolutions map from a channel size of 256 to 512 before going through the gated activation unit.
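The channel sizes above can be illustrated with a single residual block. The NumPy sketch below is a hypothetical reconstruction, not the authors' code. Splitting the 512 dilated-convolution channels into equal filter and gate halves follows the gated activation of the original WaveNet (van den Oord et al., 2016). Several details are assumptions for illustration only: the 1×1 conditioning projection, the nearest-neighbour upsampling of CQT frames to the audio rate, the dilation value, and the random weights.

```python
import numpy as np

RES_CH = 256     # residual and skip width (Appendix C.4)
GATE_CH = 512    # dilated-conv output channels, split into filter and gate halves
KERNEL = 3       # kernel size of the initial causal conv and the dilated convs
CQT_BINS = 336   # conditioning channels: one per CQT bin (k_max in Appendix B)
HOP = 256        # audio samples per spectrogram frame


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def causal_dilated_conv(x, w, dilation):
    """Causal 1-D convolution. x: (C_in, T); w: (KERNEL, C_out, C_in).

    y[:, t] = sum_j w[j] @ x[:, t - j * dilation], with zeros before t = 0,
    so the output at time t never depends on inputs after t.
    """
    k, c_out, _ = w.shape
    pad = (k - 1) * dilation
    xp = np.pad(x, ((0, 0), (pad, 0)))
    t = x.shape[1]
    y = np.zeros((c_out, t))
    for j in range(k):
        start = pad - j * dilation
        y += w[j] @ xp[:, start:start + t]
    return y


def gated_residual_block(x, p, dilation, cond):
    """One residual block: dilated conv, gated activation, then residual and skip outputs."""
    z = causal_dilated_conv(x, p["w_dil"], dilation)  # (512, T)
    z = z + p["w_cond"] @ cond                        # 1x1 projection of local conditioning (assumed)
    filt, gate = np.split(z, 2, axis=0)               # two halves of 256 channels each
    h = np.tanh(filt) * sigmoid(gate)                 # gated activation unit
    return x + p["w_res"] @ h, p["w_skip"] @ h        # (residual output, skip output)


rng = np.random.default_rng(0)
scale = 0.02  # hypothetical init scale; real weights would be learned
params = {
    "w_in": rng.normal(0, scale, (KERNEL, RES_CH, 1)),         # initial causal conv: 1 -> 256
    "w_dil": rng.normal(0, scale, (KERNEL, GATE_CH, RES_CH)),  # dilated conv: 256 -> 512
    "w_cond": rng.normal(0, scale, (GATE_CH, CQT_BINS)),
    "w_res": rng.normal(0, scale, (RES_CH, GATE_CH // 2)),
    "w_skip": rng.normal(0, scale, (RES_CH, GATE_CH // 2)),
}

T = 2 * HOP
wave = rng.uniform(-1.0, 1.0, (1, T))
frames = rng.normal(size=(CQT_BINS, T // HOP))
cond = np.repeat(frames, HOP, axis=1)  # nearest-neighbour upsampling to the audio rate (assumed)

x = causal_dilated_conv(wave, params["w_in"], dilation=1)
res, skip = gated_residual_block(x, params, dilation=4, cond=cond)
print(x.shape, res.shape, skip.shape)  # (256, 512) (256, 512) (256, 512)
```

Stacking such blocks with increasing dilations and summing their skip outputs gives the usual WaveNet trunk. The dilation schedule used in TimbreTron is not stated in this appendix.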
The conditional WaveNet is trained with a learning rate of 0.0001 using the Adam optimizer (Kingma and Ba, 2014), a batch size of 4, and a sample length of 8196 (≈ 0.5 s for audio with a 16,000 Hz sampling rate). To improve generation quality, we maintain an exponential moving average of the network weights with a decay factor of 0.999. The averaged weights are then used to perform the autoregressive generation. To make the model more robust, we augmented the training dataset by randomly rescaling each original waveform relative to its peak value, using a factor drawn from uniform(0.1, 1.0). In addition, we added a constant shift to the spectrogram before feeding it into the WaveNet as the local conditioning signal; this shift of +2 was chosen to achieve a mean of approximately zero.

C.5 Beam Search

During autoregressive generation, we perform a modified beam search. Its global objective is to minimize the discrepancy between the target CQT spectrogram and the CQT spectrogram of the synthesized audio waveform. Our beam search alternates between two steps:

1) run the autoregressive WaveNet on each existing candidate waveform for $n$ steps ($n = 2048$) to extend the candidate waveforms, and
2) prune the waveforms that have a large squared error between their CQT spectrogram and the target CQT spectrogram (the beam search heuristic).

We maintain a constant number of candidates (beam width = 8) by replicating the remaining candidate waveforms after each pruning step. To keep the local beam search heuristic approximately aligned with the global objective, we take $n$ extra prediction steps forward. We then use these extra $n$ samples, together with the candidate waveforms, to obtain a better prediction of the spectrogram for each candidate. Given the target spectrogram $C_{\text{target}}$, the algorithm is as follows:

1. $k \leftarrow 0$
2. Perform $2n$ autoregressive synthesis steps with the WaveNet on $\{x_1, \dots, x_k\}$ with $m$ parallel probes ($m$ is the beam width) to produce $m$ subsequent waveforms: $\{x^{(1)}_{k+1}, \dots, x^{(1)}_{k+2n}\}, \{x^{(2)}_{k+1}, \dots, x^{(2)}_{k+2n}\}, \dots, \{x^{(m)}_{k+1}, \dots, x^{(m)}_{k+2n}\}$.
3. Compute the CQT spectrogram $C_i$ of $\{x^{(i)}_{k+1}, \dots, x^{(i)}_{k+2n}\}$ for each $i \in \{1, 2, \dots, m\}$, and find the waveform $\{x^{(i')}_{k+1}, \dots, x^{(i')}_{k+2n}\}$ whose spectrogram $C_{i'}$ has the lowest squared difference from the target CQT spectrogram $C_{\text{target}}$.
4. Update the waveform: $x_j = x^{(i')}_j, \ \forall j \in \{k+1, k+2, \dots, k+n\}$.
5. $k \leftarrow k + n$, and return to step 2 until the whole waveform has been synthesized.

C.6 One-Shot Generation of Longer Segments

In our earlier attempts, we generated 4-second segments and then merged them back together. However, this resulted in volume inconsistencies between the 4-second generations. We suspect the CycleGAN learned a random volume permutation, because once volume augmentation was enabled at training time, there was essentially no explicit gradient signal against it from the discriminator. To resolve this issue, we removed the size constraint in our generator at test time, so that it can generate from inputs of arbitrary length. At test time, the dataset no longer consists of 4-second chunks. Instead, we preserved the original length of each musical piece, except when a piece was too long; in that case we cut it down to 2 minutes due to GPU memory constraints. During test-time generation, the entire piece is fed into the CycleGAN generator in one shot.

C.7 Spectrogram Raw Pixel Intensity Histogram

Figure 5: Spectrogram raw pixel intensity histogram.

Figure 5 shows that the distribution of spectrogram values is roughly centered at −2. As discussed in Section 3.1, we globally normalized our input data based on the distribution of spectrograms for each instrument domain.
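The domain-specific global normalization motivated by Figure 5 and described in Appendix C.2 can be sketched as follows. This is a hypothetical illustration. The class name, the `n_std` parameter (how many standard deviations map to ±1), and the synthetic Gaussian spectrograms standing in for real data are assumptions, not values from the paper. Only three elements come from the text: the per-domain mean/standard-deviation scaling, its reversal on the CycleGAN output, and the constant +2 shift applied before WaveNet conditioning (Appendix C.4).

```python
import numpy as np


class DomainNormalizer:
    """Per-domain global normalization of log-magnitude spectrograms (hypothetical sketch).

    Each instrument domain gets its own mean and standard deviation. `n_std`
    (how many standard deviations map to +/-1) is an assumed knob; the paper
    only says the data end up mostly within [-1, 1].
    """

    def __init__(self, n_std=3.0):
        self.n_std = n_std
        self.stats = {}

    def fit(self, domain, spectrograms):
        values = np.concatenate([s.ravel() for s in spectrograms])
        self.stats[domain] = (float(values.mean()), float(values.std()))

    def normalize(self, domain, spec):
        mean, std = self.stats[domain]
        return (spec - mean) / (self.n_std * std)

    def denormalize(self, domain, spec):
        mean, std = self.stats[domain]
        return spec * (self.n_std * std) + mean


rng = np.random.default_rng(0)
# Synthetic stand-ins for log-magnitude CQT spectrograms (336 bins x 250 frames),
# centred near -2 as in Figure 5. Real statistics would come from the training data.
piano = [rng.normal(-2.0, 1.0, size=(336, 250)) for _ in range(4)]
violin = [rng.normal(-2.1, 0.8, size=(336, 250)) for _ in range(4)]

norm = DomainNormalizer()
norm.fit("piano", piano)
norm.fit("violin", violin)

x = norm.normalize("piano", piano[0])        # CycleGAN input, mostly within [-1, 1]
y = x                                        # stand-in for the generator's output in the violin domain
violin_spec = norm.denormalize("violin", y)  # undo normalization with the target domain's statistics
wavenet_cond = violin_spec + 2.0             # constant +2 shift before WaveNet conditioning

print("fraction of input within [-1, 1]:", round(float(np.mean(np.abs(x) <= 1.0)), 4))
print("mean of WaveNet conditioning:", round(float(wavenet_cond.mean()), 3))
```

Denormalizing with the target domain's statistics is one reading of “reversed this operation on the output of the CycleGAN”. The text does not spell out which domain's statistics are used.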
