Fast and energy-efficient neuromorphic deep learning with first-spike times

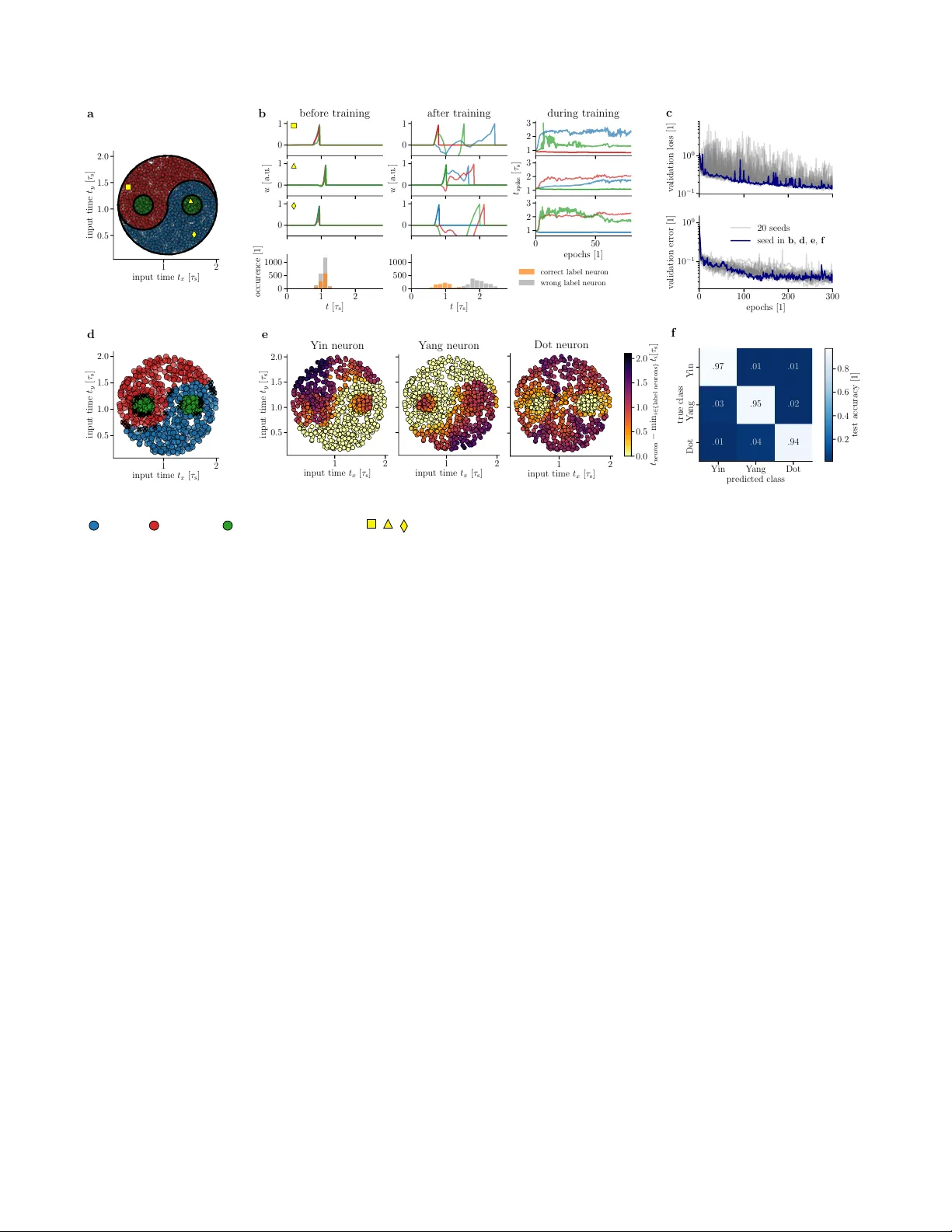

For a biological agent operating under environmental pressure, energy consumption and reaction times are of critical importance. Similarly, engineered systems are optimized for short time-to-solution and low energy-to-solution characteristics. At the…

Authors: Julian G"oltz, Laura Kriener, Andreas Baumbach