MAMAF-Net: Motion-Aware and Multi-Attention Fusion Network for Stroke Diagnosis


Authors: Aysen Degerli, Pekka Jakala, Juha Pajula

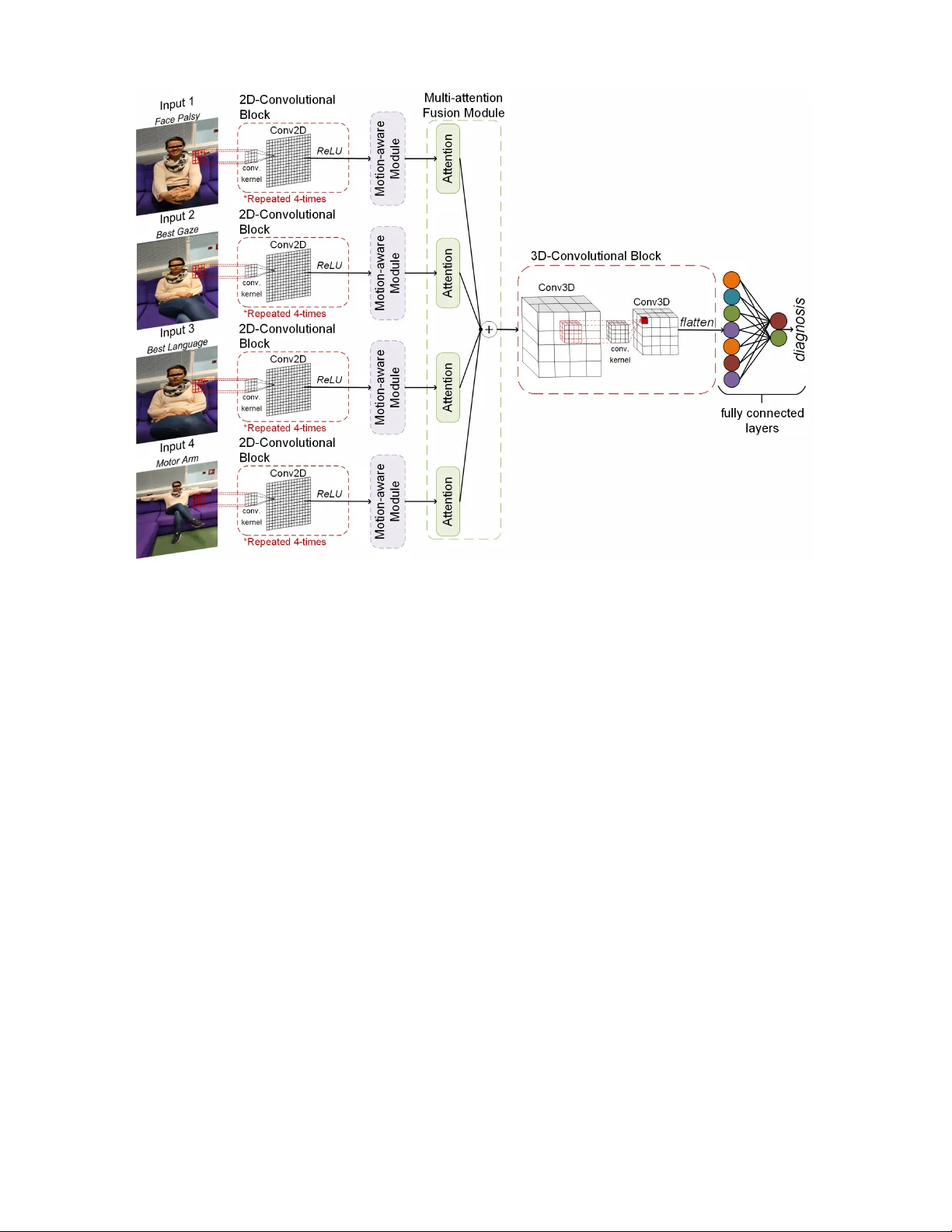

JOURNAL OF LATEX

Aysen Degerli, Pekka Jäkälä, Juha Pajula, Milla Immonen, Miguel Bordallo López

Abstract: Stroke is a major cause of mortality and disability worldwide, and one in four people are at risk of having a stroke in their lifetime. Pre-hospital stroke assessment plays a vital role in identifying stroke patients accurately to accelerate further examination and treatment in hospitals. The National Institutes of Health Stroke Scale (NIHSS), the Cincinnati Pre-hospital Stroke Scale (CPSS), and Face Arm Speed Time (F.A.S.T.) are globally known tests for stroke assessment. However, the validity of these tests is questionable in the absence of neurologists, and access to healthcare may be limited. Therefore, in this study, we propose a motion-aware and multi-attention fusion network (MAMAF-Net) that can detect stroke from multimodal examination videos. Unlike other studies on stroke detection from video analysis, our study is the first to propose an end-to-end solution based on multiple video recordings of each subject, with a dataset encapsulating stroke, transient ischemic attack (TIA), and healthy controls. The proposed MAMAF-Net consists of motion-aware modules to sense the mobility of patients, attention modules to fuse the multi-input video data, and 3D convolutional layers to perform diagnosis from the attention-based extracted features. Experimental results over the collected Stroke-data dataset show that the proposed MAMAF-Net detects stroke with 93.62% sensitivity and a 95.33% AUC score.

Index Terms: Stroke, Transient Ischemic Attack, NIHSS, Deep Learning, Self-attention.

I. INTRODUCTION

Stroke is a cerebrovascular disease that the World Health Organization (WHO) recognizes as the second leading cause of mortality worldwide. According to Tsao et al.
[1], stroke kills one person every 3.5 minutes on average. Additionally, the World Stroke Organization revealed that the number of stroke survivors has almost doubled over the years, with an increasing number of younger patients having a stroke [2]. Hence, stroke is no longer only a disease of the elderly, and its global cost is rising sharply. The severity of stroke ranges from recovery to severe disability or even death [3]. Consequently, its early and accurate diagnosis is vital for preventing mortality and disability.

Cerebrovascular disease encompasses conditions of the blood vessels supplying the brain [4]. Accordingly, stroke types are categorized as cerebrovascular accident (CVA) and transient ischemic attack (TIA). A cerebrovascular accident can be either an ischemic or a hemorrhagic stroke. Ischemic stroke is a common type of CVA that occurs due to a blockage of the blood supply to the brain, whereas hemorrhagic stroke is a more severe condition of CVA caused by bleeding into the brain due to the rupture of a blood vessel [5]. According to WHO, stroke causes symptoms lasting 24 hours or longer (or leading to death). On the other hand, TIA is a mini-stroke whose symptoms last less than 24 hours [6]. The literature reveals that TIA heralds an impending ischemic stroke [7]. Therefore, an accurate diagnosis of TIA is crucial for the early detection of ischemic stroke.

A. Degerli and J. Pajula are with the VTT Technical Research Centre of Finland, Tampere, Finland (e-mail: name.surname@vtt.fi). P. Jäkälä is with the Kuopio University Hospital, Kuopio, Finland (e-mail: pekka.jakala@pshyvinvointialue.fi). M. Immonen is with the Lapland University of Applied Sciences, Rovaniemi, Finland (e-mail: milla.immonen@lapinamk.fi). M. B. López is with the University of Oulu, Oulu, Finland (e-mail: miguel.bordallo@oulu.fi). This study was supported by the Stroke-data project under Business Finland Grant 3617/31/2019.
The presence of a stroke can be recognized by imaging techniques, electroencephalography (EEG), and assessment tests based on physical examination. Imaging techniques have great significance in stroke prognosis: abnormalities caused by a stroke in the brain and in the retinal arterioles can be revealed in Computed Tomography (CT) scans or Magnetic Resonance Imaging (MRI) [8] and in retinal fundus images [9], respectively. Moreover, changes in EEG patterns after a stroke reveal brain ischemia almost immediately [10]. Even though imaging tools and EEG recordings are sensitive tools for accurate stroke assessment, these techniques are costly and time-consuming [11]. Therefore, stroke assessment based on physical examination is promising for faster and cheaper diagnosis with pre-hospital or acute stroke assessment tests such as the National Institutes of Health Stroke Scale (NIHSS), Face Arm Speed Time (F.A.S.T.), and the Cincinnati Pre-hospital Stroke Scale (CPSS). These tests assess stroke from the presence of unilateral facial droop, arm drift, and speech disorders. However, stroke is too heterogeneous for a straightforward definition. In fact, Kasner et al. [12] and Meyer et al. [13] emphasize that NIHSS charts are merely approximate representations and are prone to several issues, such as time consumption, unreliability, and bias, primarily due to miscommunication that can occur during the testing process. Hence, technology is needed to prevent subjective assessment [14].

Technological developments have enabled machine learning (ML) to reach outstanding performance in the healthcare domain, with models achieving accurate, fast, and low-cost detection of various diseases and medical emergency conditions. Accordingly, ML is promising for the standardization of stroke diagnosis. However, in the literature, only the studies [15] and [16] have tried to emulate the F.A.S.T.
and CPSS tests for automatic stroke detection by deep learning (DL) using examination videos. Additionally, the datasets for stroke detection are rather limited, and the existing ones include only stroke patients and healthy controls, which makes them unreliable for detecting patients having TIA. Furthermore, previous studies perform stroke analysis over detected faces or facial and body landmarks, which makes their models prone to the errors of the face and body detectors. To address these limitations, in this study, we propose a novel motion-aware and multi-attention fusion network (MAMAF-Net) for stroke diagnosis using the multiple examination videos recorded from each subject, as illustrated in Fig. 1. We evaluated MAMAF-Net over the collected Stroke-data dataset, which includes examination video recordings of 84 stroke patients, 10 TIA patients, and 54 healthy controls. The key steps of the proposed MAMAF-Net are as follows:

• MAMAF-Net consists of multi-input channels, each inserted into a motion-aware module for extracting features that represent the mobility capacity of subjects.
• The outputs of the motion-aware modules are fused via attention-based fusion to support the dominant contributions of the multi-input channels.
• The multi-attention fusion module is attached to 3D convolutional layers to perform stroke diagnosis.

The rest of the paper is organized as follows. Section II reviews prior works. The proposed methodology is explained in Section III, and the details of data collection are given in Section IV. Experimental results are reported in Section V, and technical and clinical implications, limitations, and future work are discussed in Section VI. Lastly, Section VII concludes the study.

II. RELATED WORK

Recent improvements in machine learning and deep learning algorithms have led to state-of-the-art performance in many computer vision tasks, including image classification and segmentation, object detection, and emotion recognition. In healthcare, these algorithmic improvements are reflected in computer-aided diagnosis, where ML models support the diagnosis of various diseases and medical emergency conditions with outstanding performance. In the literature, computer-aided stroke diagnosis with deep learning-based approaches has been widely studied over CT scans, MRIs, fundus images, and EEG signals. Convolutional Neural Networks (CNNs) have been adapted to classify stroke in many studies using imaging techniques [17]. Sheth et al. [18] reported a 90% area under the curve (AUC) score for stroke detection over CT images with their proposed CNN model. A recent study [19] utilized transfer learning from state-of-the-art deep learning models on CT scans and achieved 98% AUC in stroke detection. Moreover, many studies [20]–[23] have tried similar approaches, using CNNs and conventional machine learning algorithms with features extracted from pre-trained networks to detect stroke-related anomalies in MRIs. In fundus imaging, a similar path was followed in several studies [24]–[26], where the authors compared the stroke detection performance of hand-crafted features and CNN models using transfer learning. Lastly, in EEG analysis, several studies [27]–[29] reported that machine learning models achieved high performance in stroke diagnosis. Although the aforementioned machine learning algorithms using biosignals and biomedical images are promising for computer-aided stroke diagnosis, acquiring such bio-data is costly and time-consuming.
Hence, stroke assessment tests are the first preference for diagnosis in emergency conditions, where stroke symptoms such as facial droop, arm drift, and speech disorders can be recognized and scored using the CPSS [30] and NIHSS [31] tests, respectively. However, when neurologists are scarce and access to healthcare is limited, these tests cannot be used to recognize and score stroke effectively in emergency situations. Therefore, there is a need for an automated test for the standardization of stroke diagnosis that follows a procedure similar to the certified stroke assessment tests. Nevertheless, only the studies [15] and [16] have attempted automatic recognition of stroke over examination videos using deep learning models. Yu et al. [15] tried to detect stroke with two-stream networks that take as inputs the frame sequences and the audio of examination videos recorded while patients perform a set of vocal tasks. Even though the two-stream approach is promising, body movements, which are an important indicator for stroke diagnosis, are missing from the analysis. On the other hand, Lee et al. [16] included comprehensive video data for the analysis of body movements in addition to facial expressions. However, the existing studies perform evaluations over limited categories, with data only from stroke patients and healthy controls and none from TIA patients, and they depend on face and body detection algorithms, which bring several issues for clinical practice and use.

III. METHODOLOGY

In this section, we first describe the problem, which is stroke diagnosis using neurological examination videos. Then, we introduce the proposed deep learning model, MAMAF-Net, which performs end-to-end automatic stroke detection.

A. Problem Definition

In this study, we propose a novel model to detect any stroke-related signs in patients using neurological examination videos.
The problem is formulated as a binary classification task, where the positive class is formed by stroke and TIA patients, and the negative class includes only healthy controls. The examination videos are recorded by a nurse during the NIHSS assessment at the Stroke Unit of the hospital. Four video recordings are selected for the analysis, corresponding to the facial palsy, best gaze, best language, and motor arm items of the NIHSS. Let us denote a video recording as $X \in \mathbb{R}^{N \times w \times h \times c}$, where $N$ is the sequence length, $w$ is the width, $h$ is the height, and $c$ is the number of color channels of the video frames. The dataset, including the video recordings $I = \{X_1, X_2, X_3, X_4\}$ and their corresponding ground-truth labels $y \in \{0, 1\}$, is denoted as $\mathcal{D} = \{I_s, y_s\}_{s=1}^{S}$, where $S$ is the total number of samples and $s$ indexes a sample in the dataset. The proposed MAMAF-Net maps the input $I$ to the predicted class $\hat{y}$:

$$\hat{y} \leftarrow \Theta_{\mu,\alpha,\kappa}(I, y),$$

where the network $\Theta$ consists of motion-aware modules $\mu$, the multi-attention fusion module $\alpha$, and convolutional blocks $\kappa$, as illustrated in Fig. 1.

Fig. 1: The proposed MAMAF-Net architecture is illustrated.

B. MAMAF-Net: Motion-aware and Multi-attention Fusion Network

The key components of the proposed MAMAF-Net are described in this section. The proposed network consists of multi-input channels, each attached to a 2D-convolutional block followed by a motion-aware module. The branches are then connected through the multi-attention fusion module and the 3D-convolutional blocks, which reduce the dimension prior to the fully connected layers, as depicted in Fig. 1.

2D-Convolutional Block. The network $\Theta$ consists of four identical 2D-convolutional blocks, where each block is composed of 2D convolutional layers and Rectified Linear Unit (ReLU) activation functions.
A single 2D-convolutional block, $\kappa \in \{b_l, \omega_l\}_{l=1}^{L}$, consists of $L = 4$ convolutional layers, each followed by a ReLU activation function, where $b_l$ and $\omega_l$ are the bias term and the weights of the $l$-th convolutional layer. Each convolutional layer uses a kernel size of $k = (3 \times 3)$, a stride of $\delta = (2 \times 2)$, and zero padding, with $f = \{64, 32, 16, 8\}$ filters in order. With four stride-2 layers, each 224 × 224 frame is therefore reduced to a 14 × 14 × 8 feature map.

Motion-aware Module. The output of each 2D-convolutional block is attached to a motion-aware module, where features $F \leftarrow \mu_\Theta(\cdot)$ representing the mobility are extracted, as illustrated in Fig. 2. Our module is inspired by [32], which proposed a motion-aware attention (M2A) mechanism to take advantage of the inherent motion in video recordings; we modified this mechanism to adapt it to our task. In our motion-aware module, a 2D convolutional layer with $f = 8$ filters and a kernel size of $(3 \times 3)$ is connected to the 2D-convolutional block. The output of this convolutional layer is denoted as $\Phi_t \in \mathbb{R}^{N \times 14 \times 14 \times 8}$, where $t$ denotes the current time frame. Then, $\Phi_t$ is shifted with a rolling operation to form $\Phi_{t+1}$: each frame is replaced by its successor, and the first frame of $\Phi_t$ is copied to the last frame of $\Phi_{t+1}$. The motion information is extracted by computing the difference $(\Phi_t - \Phi_{t+1})$, which is then passed to a scaled dot-product attention layer to emphasize the relevant motion patterns. To further enhance the motion patterns, $\Phi_t$ is added to the output of the attention layer. Then, a 2D convolutional layer with $f = 8$ filters and a kernel size of $(3 \times 3)$, followed by a ReLU activation function, is applied. Lastly, the feature representation $F \in \mathbb{R}^{N \times 14 \times 14 \times 8}$ is formed by the element-wise multiplication of the input of the motion-aware module and the output of the last convolutional layer.

Multi-attention Fusion Module.
The multi-input channels $X \in \mathbb{R}^{N \times 224 \times 224 \times 3}$ are mapped to the motion features $F \in \mathbb{R}^{N \times 14 \times 14 \times 8}$, which are the inputs of the multi-attention fusion module $\alpha$, composed of attention layers. Our network uses the scaled dot-product attention layer proposed in [33]. The attention function maps a query $Q$ and a set of key–value pairs $(K, V)$ to an output, which is a weighted sum of the values. The weights on the values are computed as follows:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V, \qquad (1)$$

where $d_k$ is the dimension of the queries and keys, used as the scaling factor. Lastly, the outputs of the attention layers, $A = \{A_1, A_2, A_3, A_4\}$ with each $A_i \in \mathbb{R}^{N \times 14 \times 14 \times 8}$, are added to fuse the features extracted from the multi-input channels corresponding to $\{X_1, X_2, X_3, X_4\}$.

Fig. 2: A branch of the network, where the motion-aware module is depicted in detail.

3D-Convolutional Block. The last component of the proposed network, attached to the output $A$ of the multi-attention fusion module, is composed of 3D convolutional layers that reduce the temporal and spatial dimensions simultaneously. The block consists of two 3D convolutional layers with a kernel size of $k = (3 \times 3 \times 3)$, $f = 3$ filters, and strides of $(5 \times 2 \times 2)$ and $(5 \times 1 \times 1)$, respectively, each followed by a ReLU activation function. Its output $\Psi \in \mathbb{R}^{\frac{N}{25} \times 7 \times 7 \times 3}$ is vectorized and attached to fully connected layers, where the output layer consists of two neurons with the softmax activation function for the diagnosis.

For comparison with the proposed MAMAF-Net, the state-of-the-art networks ResNet50 [34] and DenseNet-121 [35], with weights initialized from ImageNet by transfer learning, are used as baseline methods.
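The motion-aware module and the attention-based fusion described above can be illustrated with a minimal NumPy sketch. It is not the authors' TensorFlow implementation: the two 3 × 3 convolutional layers are replaced by hypothetical 1 × 1 projections (`w_in`, `w_out`) with random weights, and the attention layers are assumed to be self-attention (Q = K = V) over the 14 × 14 spatial positions of each frame, since the text does not state how the 4D feature maps are tokenized. The rolling shift, frame difference, residual addition, ReLU gating, and summation of A_1, ..., A_4 follow the description.

```python
import numpy as np


def scaled_dot_product_attention(q, k, v):
    """Eq. (1): softmax(Q K^T / sqrt(d_k)) V, batched over leading axes."""
    d_k = q.shape[-1]
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(d_k)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerically stable softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


def frame_self_attention(x):
    """Self-attention on an (N, H, W, C) tensor, using the H*W positions of each
    frame as tokens (ASSUMED token layout; Q = K = V is also an assumption)."""
    n, h, w, c = x.shape
    tokens = x.reshape(n, h * w, c)
    return scaled_dot_product_attention(tokens, tokens, tokens).reshape(n, h, w, c)


def motion_aware_module(x, w_in, w_out):
    """Motion-aware module for one branch; x is the (N, 14, 14, 8) 2D-block output.
    w_in / w_out are HYPOTHETICAL 1x1 projections standing in for the 3x3 conv layers."""
    phi_t = x @ w_in                              # stand-in for the first conv layer -> Phi_t
    phi_next = np.roll(phi_t, shift=-1, axis=0)   # Phi_(t+1): successor frames, first frame wraps to the end
    h = frame_self_attention(phi_t - phi_next)    # attention over the frame difference
    h = h + phi_t                                 # residual add of Phi_t
    h = np.maximum(h @ w_out, 0.0)                # stand-in for the second conv layer + ReLU
    return x * h                                  # element-wise product with the module input -> F


rng = np.random.default_rng(0)
N = 25
features = []
for _ in range(4):  # facial palsy, best gaze, best language, motor arm branches
    x = rng.standard_normal((N, 14, 14, 8))
    w_in, w_out = rng.standard_normal((8, 8)), rng.standard_normal((8, 8))
    features.append(motion_aware_module(x, w_in, w_out))

# Multi-attention fusion: one attention layer per branch, outputs A_1..A_4 summed.
fused = np.sum([frame_self_attention(f) for f in features], axis=0)
print(fused.shape)  # (25, 14, 14, 8)
```

For example, for a clip whose frames are all ones, the frame difference vanishes, the attention output is zero, and with identity stand-in weights the module returns the clip unchanged, which is a quick sanity check on the gating logic.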
Accordingly, we extract deep features from each frame of the input video sequences using these state-of-the-art networks, and the extracted deep features $D \in \mathbb{R}^{N \times 1000}$ are fused by an addition layer. Then, the fused feature data $D$ is flattened and attached to fully connected layers, where the output layer consists of two neurons with the softmax activation function for the diagnosis. In addition, we embed the multi-attention fusion module into the state-of-the-art models to form the MAF-ResNet50 and MAF-DenseNet-121 models, in order to investigate the contribution of the proposed module to the diagnosis.

IV. COHORT

The clinical dataset for this study was collected at Kuopio University Hospital and Oulu University Hospital between 2021 and 2022 under the IRB-approved Stroke-data project. The data was recorded at the Stroke Units of the hospitals during stroke assessments of patients with the NIHSS protocol using a dedicated mobile application. Each patient's performance in the NIHSS protocol was evaluated by study nurses who had received NIHSS protocol certification. Stroke and TIA were diagnosed by neurologists during the patient's hospital stay based on clinical evidence and other sensing and imaging modalities, i.e., EEG and MRI. The cohort consists of 148 participants, comprising stroke and TIA patients as well as healthy controls. Table I shows the characteristics of the cohort; in total, 71 females and 77 males are included in the study. The participants are mostly retired, and the average age ranges from 64 to 74 years across the stroke and TIA patients and the healthy controls.

Fig. 3: The data collection process using the Stroke-data smartphone application.

TABLE I: The characteristics of the study cohort, where n indicates the number of subjects. Education level and work status are given as numbers of subjects.

| | Control Female (n = 32) | Control Male (n = 22) | Stroke Female (n = 35) | Stroke Male (n = 49) | TIA Female (n = 4) | TIA Male (n = 6) |
|---|---|---|---|---|---|---|
| Age (years), mean | 65 | 66 | 65 | 68 | 64 | 74 |
| Age (years), maximum | 78 | 83 | 83 | 87 | 79 | 85 |
| Age (years), minimum | 29 | 36 | 31 | 44 | 46 | 57 |
| Weight (kg), mean | 71.63 | 80.82 | 77.69 | 82.56 | 73 | 84.67 |
| Weight (kg), maximum | 130 | 95 | 148 | 140 | 75 | 95 |
| Weight (kg), minimum | 41 | 65 | 42 | 56 | 72 | 70 |
| Height (cm), mean | 162.66 | 176.91 | 163.20 | 176.63 | 165.23 | 175.25 |
| Height (cm), maximum | 178 | 182 | 176 | 190 | 168.9 | 190 |
| Height (cm), minimum | 150 | 172 | 150 | 165 | 162 | 168 |
| Education: primary education | 12 | 10 | 12 | 17 | 2 | 3 |
| Education: upper secondary education | 12 | 7 | 18 | 20 | – | 3 |
| Education: bachelor's or equivalent | 8 | 3 | 3 | 5 | 1 | – |
| Education: master's/doctoral or equivalent | – | 2 | 2 | 7 | 1 | – |
| Work status: employee | 7 | 5 | 10 | 10 | 2 | 1 |
| Work status: entrepreneur | 1 | 2 | 3 | 7 | – | – |
| Work status: retired | 23 | 15 | 21 | 32 | 2 | 5 |
| Work status: student | 1 | – | – | – | – | – |
| Work status: unable to work | – | – | 1 | – | – | – |

For the collection of the video data, we developed a smartphone application called the Stroke-data application, which is used to record videos of the patients during the NIHSS protocol, as shown in Fig. 3. The recordings are made with a tripod stand and a circular light source, where the smartphone running the Stroke-data application is placed in front of the patient and each NIHSS task is recorded separately. The collection procedure takes approximately 10 minutes, and only the research nurse uses the application for video recording. In the measurement protocol, the smartphone is placed so that only the patient under examination is visible to the camera. In the initial phase, the target is specifically the face, so the smartphone is placed as close to the patient as possible. In the last phase, the patient's arms must be completely visible on the screen while they move; hence, the smartphone has to be moved further away from the patient. Then, the task starts, and the research nurse receives step-by-step instructions from the application.
The NIHSS steps performed for this study are as follows: i) facial palsy: the patient is asked to smile, show teeth, and raise the eyebrows in succession a few times; ii) best gaze: the patient is asked to follow an object left and right with the eyes while keeping the head still, to check the strength of the extraocular muscles; iii) best language: the patient is asked to read aloud sentences presented on paper; and iv) motor arm: the patient is asked to lift the left arm, the right arm, and both arms consecutively to the sides at a 90° angle with eyes closed. The study nurses recorded the NIHSS scores for each task during the data recording session according to the NIHSS protocol.

V. RESULTS

In this section, we present the experimental setup and report the results over the Stroke-data dataset.

A. Evaluation Approach

The experimental evaluations are performed in a stratified 5-fold cross-validation (CV) scheme with an 80% training to 20% test split. In the training phase, 20% of the training set is held out as the validation set in each fold. The training, test, and validation sets are separated patient-wise. The standard performance metrics are computed over a cumulative confusion matrix as follows: sensitivity is the rate of correctly identified stroke samples in the positive class; specificity is the rate of correctly detected control group samples among the negative class samples; precision is the rate of correctly identified stroke patients among the samples predicted as the positive class; F1-Score is the harmonic mean of sensitivity and precision; and accuracy is the rate of correctly detected samples in the dataset. Additionally, the Receiver Operating Characteristic (ROC) curve is computed, from which the area under the curve (AUC) score is calculated.
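The evaluation protocol and the metric definitions can be sketched in NumPy as follows. The round-robin fold assignment, the rounding of the 20% validation share, and the random seeds are our assumptions, and one entry per subject is assumed so that a subject-wise split is also patient-wise. `metrics_from_confusion` applies the definitions above to a cumulative confusion matrix, and `auc_rank` computes the AUC in its rank (Mann–Whitney) form, which equals the area under the empirical ROC curve. Feeding in the N = 75 counts from the confusion matrices reported in the results (88 of 94 positives and 43 of 54 controls for MAMAF-Net; 84 and 40 for DenseNet-121) reproduces the sensitivity, specificity, precision, F1-Score, and accuracy in the N = 75 rows of Table II.

```python
import numpy as np


def stratified_folds(labels, n_splits=5, seed=0):
    """Assign each subject to a fold, dealing every class round-robin so folds stay stratified."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    fold_of = np.empty(len(labels), dtype=int)
    for cls in np.unique(labels):
        members = rng.permutation(np.flatnonzero(labels == cls))
        fold_of[members] = np.arange(len(members)) % n_splits
    return fold_of


def split_fold(fold_of, labels, k, val_frac=0.2, seed=0):
    """Test = fold k; a stratified val_frac of the remaining subjects becomes the validation set."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    test = np.flatnonzero(fold_of == k)
    train = np.flatnonzero(fold_of != k)
    val = []
    for cls in np.unique(labels[train]):
        members = rng.permutation(train[labels[train] == cls])
        val.extend(members[: round(val_frac * len(members))])
    val = np.sort(np.array(val, dtype=int))
    return np.setdiff1d(train, val), val, test


def metrics_from_confusion(tp, fn, tn, fp):
    """Metrics in percent from a cumulative confusion matrix (positive = stroke + TIA)."""
    sens, spec, prec = tp / (tp + fn), tn / (tn + fp), tp / (tp + fp)
    values = {
        "sensitivity": sens,
        "specificity": spec,
        "precision": prec,
        "f1": 2 * prec * sens / (prec + sens),
        "accuracy": (tp + tn) / (tp + fn + tn + fp),
    }
    return {name: round(100 * v, 2) for name, v in values.items()}


def auc_rank(pos_scores, neg_scores):
    """ROC AUC as P(positive score > negative score), counting ties as 1/2."""
    wins = sum((p > n) + 0.5 * (p == n) for p in pos_scores for n in neg_scores)
    return wins / (len(pos_scores) * len(neg_scores))


labels = np.array([1] * 94 + [0] * 54)  # one entry per subject, so the split is patient-wise
fold_of = stratified_folds(labels)
for k in range(5):
    fit, val, test = split_fold(fold_of, labels, k, seed=k)
    print(k, len(fit), len(val), len(test))

# Cumulative counts from the N = 75 confusion matrices (94 positives, 54 controls).
print(metrics_from_confusion(tp=88, fn=6, tn=43, fp=11))   # MAMAF-Net
print(metrics_from_confusion(tp=84, fn=10, tn=40, fp=14))  # DenseNet-121
```

In practice, a library routine such as scikit-learn's `StratifiedKFold` (or `StratifiedGroupKFold`, when a subject contributes several samples) would replace the hand-written split.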
TABLE II: Average detection results (%) of MAMAF-Net and state-of-the-art deep models with respect to the sequence length N, evaluated over the 5-fold CV scheme, where the highest scores are highlighted in bold.

| Sequence Length | Method | Sensitivity | Specificity | Precision | F1-Score | Accuracy | AUC |
|---|---|---|---|---|---|---|---|
| N = 25 | MAMAF-Net | 87.23 | 83.33 | 90.11 | 88.65 | 85.81 | 92.93 |
| | MAF-ResNet50 | 85.11 | 68.52 | 82.47 | 83.77 | 79.05 | 86.70 |
| | MAF-DenseNet-121 | 92.55 | 70.37 | 84.47 | 88.32 | 84.46 | 89.99 |
| | ResNet50 | 84.04 | 68.52 | 82.29 | 83.16 | 78.38 | 85.95 |
| | DenseNet-121 | 91.49 | 74.07 | 86.00 | 88.66 | 85.14 | 92.65 |
| N = 50 | MAMAF-Net | 84.04 | **88.89** | **92.94** | 88.27 | 85.81 | 93.60 |
| | MAF-ResNet50 | 81.91 | 68.52 | 81.91 | 81.91 | 77.03 | 85.36 |
| | MAF-DenseNet-121 | 86.17 | 77.78 | 87.10 | 86.63 | 83.11 | 93.12 |
| | ResNet50 | 81.91 | 64.81 | 80.21 | 81.05 | 75.68 | 82.76 |
| | DenseNet-121 | 86.17 | 75.93 | 86.17 | 86.17 | 82.43 | 91.86 |
| N = 75 | MAMAF-Net | **93.62** | 79.63 | 88.89 | **91.19** | **88.51** | **95.33** |
| | MAF-ResNet50 | 81.91 | 66.67 | 81.05 | 81.48 | 76.35 | 83.41 |
| | MAF-DenseNet-121 | 88.30 | 72.22 | 84.69 | 86.46 | 82.43 | 91.41 |
| | ResNet50 | 81.91 | 68.52 | 81.91 | 81.91 | 77.03 | 85.50 |
| | DenseNet-121 | 89.36 | 74.07 | 85.71 | 87.50 | 83.78 | 90.03 |

The Stroke-data dataset is used for the evaluations; it includes a total of 148 subjects, with ground truths of 54 healthy controls and 94 stroke and TIA patients. The video recordings in the dataset are resized to 224 × 224 pixels to obtain input dimensions compatible with many state-of-the-art deep networks. The video sequence length N is set to each of {25, 50, 75} in the experiments by taking N equally spaced frames from each video recording.
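A short sketch, under stated assumptions, of how the sequence length N propagates through the network: `sample_frame_indices` takes N equally spaced frames (including the first and last frame, which is our assumption), and `mamaf_shapes` traces the 224 × 224 frames through the four stride-2 layers of a 2D-convolutional block and the two strided 3D layers. Zero ("same") padding is stated for the 2D layers; for the 3D layers it is inferred from the reported output size Ψ ∈ R^(N/25 × 7 × 7 × 3). The 300-frame clip length in the usage line is hypothetical.

```python
import math

import numpy as np


def sample_frame_indices(num_frames, n):
    """Indices of n equally spaced frames, including the first and last frame."""
    return np.linspace(0, num_frames - 1, num=n).round().astype(int)


def conv_out(size, stride):
    """Output length of a strided convolution with zero ('same') padding."""
    return math.ceil(size / stride)


def mamaf_shapes(n, side=224):
    """Trace tensor shapes through one branch and the 3D-convolutional block."""
    for _ in range(4):                            # four stride-2 layers: 224 -> 112 -> 56 -> 28 -> 14
        side = conv_out(side, 2)
    motion = (n, side, side, 8)                   # shape of F and of each A_i
    depth, spatial = n, side
    for stride_t, stride_s in [(5, 2), (5, 1)]:   # 3D conv strides (5x2x2) and (5x1x1)
        depth, spatial = conv_out(depth, stride_t), conv_out(spatial, stride_s)
    psi = (depth, spatial, spatial, 3)            # Psi, 3 filters
    return motion, psi, math.prod(psi)


print(sample_frame_indices(300, 25))  # 300 is a hypothetical clip length
for n in (25, 50, 75):
    motion, psi, flat = mamaf_shapes(n)
    print(n, motion, psi, flat)
```

For N = 25, 50, and 75 the sketch gives Ψ shapes of 1 × 7 × 7 × 3, 2 × 7 × 7 × 3, and 3 × 7 × 7 × 3, i.e., 147, 294, and 441 values entering the fully connected layers, consistent with the N/25 temporal factor in the text.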
Additionally, we investigated the impact of data augmentation in the training phase to overcome the class imbalance: the video sequences $X \in \mathbb{R}^{25 \times 224 \times 224 \times 3}$ are augmented up to 100 samples per class by randomly rotating the sequences by 90°, 180°, or 270°, randomly flipping them vertically and horizontally, and combining these operations.

The networks are implemented with the TensorFlow library in Python and trained on an NVIDIA® A100 (Ampere) GPU with 40 GB of memory. The proposed MAMAF-Net is trained for 300 epochs with a learning rate of $10^{-5}$ using the categorical cross-entropy loss and the Adam optimizer with its default parameter settings. The model weights with the minimum loss over the validation set are used for testing. The same configuration applies to the baseline models, except that a learning rate of $10^{-3}$ is used for better convergence.

B. Experimental Results

In this section, the experimental results on the test (unseen) set are given for the MAMAF-Net model. Table II presents the average detection results of the proposed MAMAF-Net and the state-of-the-art models computed over the 5 folds for increasing video sequence lengths. For each sequence length, the proposed MAMAF-Net obtains the highest AUC score. Among the baseline models (ResNet50 and DenseNet-121), the highest AUC score is achieved by DenseNet-121, with 92.65% for the sequence length N = 25, which is 0.28 percentage points below the 92.93% AUC of the proposed MAMAF-Net at the same length. Additionally, the proposed MAMAF-Net achieves a sensitivity of 93.62% and an F1-Score of 91.19% for the largest sequence length.
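The augmentation used to balance the classes in training (random 90°, 180°, and 270° rotations, vertical and horizontal flips, and their combinations, up to 100 clips per class) can be sketched in NumPy as follows. Drawing the source clip and the transform uniformly at random is our assumption, since the text does not say how they were sampled, and the clip count and frame size in the usage lines are placeholders.

```python
import itertools

import numpy as np

# Every rotation/flip combination except the identity: (k_rot, flip_vertical, flip_horizontal).
OPS = [op for op in itertools.product(range(4), (False, True), (False, True)) if any(op)]


def augment_clip(clip, k_rot, flip_v, flip_h):
    """Apply the same rotation (k_rot * 90 degrees) and flips to every frame of an (N, H, W, C) clip."""
    out = np.rot90(clip, k=k_rot, axes=(1, 2))
    if flip_v:
        out = np.flip(out, axis=1)  # upside down
    if flip_h:
        out = np.flip(out, axis=2)  # mirror left-right
    return out


def augment_class(clips, target=100, seed=0):
    """Top up one class to `target` clips with randomly transformed copies of its clips."""
    rng = np.random.default_rng(seed)
    out = list(clips)
    while len(out) < target:
        clip = clips[rng.integers(len(clips))]
        k_rot, flip_v, flip_h = OPS[rng.integers(len(OPS))]
        out.append(augment_clip(clip, k_rot, flip_v, flip_h))
    return out


rng = np.random.default_rng(1)
controls = [rng.random((25, 32, 32, 3)) for _ in range(40)]  # illustrative class; small frames keep the demo light
balanced = augment_class(controls, target=100)
print(len(balanced), balanced[-1].shape)  # 100 (25, 32, 32, 3)
```

`np.rot90` and `np.flip` return views, so a training pipeline would copy the arrays or generate them on the fly. Some combinations coincide; for example, a 180° rotation followed by both flips reproduces the original clip.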
Further analysis reveals that increasing the sequence length improves the AUC score of the proposed model, as depicted in Fig. 5. The confusion matrices of the MAMAF-Net and DenseNet-121 models for the sequence length N = 75 are given in Fig. 4, where their sensitivity levels are 93.62% and 89.36%, respectively. The confusion matrices reveal that the proposed model correctly detects 88 of the 94 stroke and TIA patients and 43 of the 54 healthy controls, whereas the state-of-the-art DenseNet-121 model correctly detects 84 patients and 40 healthy controls. Moreover, the multi-attention fusion generally improves the sensitivity of the state-of-the-art models, especially for the smallest sequence length N = 25.

Fig. 4: Confusion matrices of the MAMAF-Net (a) and DenseNet-121 (b) models with sequence length N = 75.

Fig. 5: The ROC curves, where the AUC analysis is computed for each model with video sequence length N = 25 (left), and the impact of the sequence length on the proposed MAMAF-Net model is plotted (right).

The ROC curves of the models for the sequence length N = 25 are given in Fig. 5, where each model achieves an AUC score above 85% for stroke diagnosis. We emphasize the results for N = 25 in Fig. 5 since this setting generally achieves results comparable to the leading sequence length N = 75 with less computational complexity, which matters especially when the proposed approach is applied on devices with limited memory and battery.

We further investigate the impact of data augmentation applied in the training phase on the performance of the models. Table III shows the average detection results of the proposed MAMAF-Net and the state-of-the-art deep models computed over 5 folds.
The analysis is performed for the smallest sequence length, N = 25. Data augmentation improves the performance of the proposed MAMAF-Net model by 2.13 percentage points in sensitivity and 2.30 percentage points in AUC score compared to the model trained without data augmentation. The loss curves obtained during model training are given in Fig. 7, which reveals that data augmentation helps the model converge better. Lastly, the performance of each model is given in Fig. 6, which shows the average, highest, and lowest levels of the sensitivity, accuracy, and AUC metrics calculated over the 5 folds. Fig. 6 reveals that data augmentation generally stabilizes training by reducing the standard deviation of performance across folds. Additionally, MAMAF-Net achieves the highest performance for the sequence length N = 75, with low standard deviations of 0.0159, 0.0515, and 0.0356 for the AUC, sensitivity, and accuracy metrics, respectively.

VI. DISCUSSION

In this study, we propose MAMAF-Net for the standardization of pre-hospital stroke assessment tests conducted at the emergency departments of hospitals. For this purpose, we compiled a dataset called Stroke-data that consists of 148 subjects, each with video recordings of the NIHSS steps, where the facial palsy, best gaze, best language, and motor arm steps are used as the multi-input channels of the MAMAF-Net deep model. In the proposed network, we use the motion-aware module to extract features representing the motion patterns across a video sequence, and the multi-attention fusion module to fuse the extracted features while supporting the dominant contributions through scaled dot-product attention layers. In the last block of the proposed network, 3D convolutional layers learn patterns across dimensions while reducing the size of the tensor.
TABLE III: Average detection results (%) of MAMAF-Net and state-of-the-art deep models with data augmentation in the training phase, evaluated over the 5-fold CV scheme, where the sequence length is N = 25 and the highest scores are highlighted in bold.

| Method | Sensitivity | Specificity | Precision | F1-Score | Accuracy | AUC |
|---|---|---|---|---|---|---|
| MAMAF-Net | 89.36 | **87.04** | **92.31** | **90.81** | **88.51** | **95.23** |
| MAF-ResNet50 | 86.17 | 72.22 | 84.38 | 85.26 | 81.08 | 84.57 |
| MAF-DenseNet-121 | **92.55** | 72.22 | 85.29 | 88.78 | 85.14 | 91.55 |
| ResNet50 | 81.91 | 74.07 | 84.62 | 83.24 | 79.05 | 84.54 |
| DenseNet-121 | **92.55** | 75.93 | 87.00 | 89.69 | 86.49 | 92.65 |

Fig. 6: The performance of each model with respect to the sensitivity, accuracy, and AUC metrics, for the network configurations with (a) sequence length N = 25 with data augmentation and (b) sequence length N = 75 without data augmentation.

Technical implications. Unlike previous studies, our compiled Stroke-data dataset is unique in including TIA patients in addition to stroke patients, and in containing data from two distinct hospitals. In this way, Stroke-data helps the proposed machine learning model achieve better and more reliable generalization for stroke diagnosis. The computational complexity of the proposed model grows as the sequence length increases, and so does the stroke diagnosis performance. However, the experimental results show that data augmentation can mitigate this trade-off: with augmentation, a shorter video sequence achieves an AUC score similar to that of a longer one (95.23% for N = 25 with augmentation versus 95.33% for N = 75 without). Additionally, our study proposes, for the first time, an end-to-end solution for stroke diagnosis using several neurological examination videos recorded from patients during the NIHSS test.
Hence, we show that machine learning algorithms are promising tools for the standardization of stroke assessment tests.

Clinical implications. In clinical practice, the proposed model can be used in a smartphone application as an assistive tool supporting pre-hospital stroke assessment, where the application would enable general emergency staff to perform the NIHSS with minimal human bias and without additional training. Moreover, the application saves the video recordings in an encrypted and protected partition of the smartphone, which complies with data privacy regulations.

Fig. 7: The training curves of the proposed MAMAF-Net with respect to training and validation loss, where the model is trained (a) without data augmentation and (b) with data augmentation over 300 epochs.

Limitations and Future Work. In computer-aided diagnostics, demographic representativeness is essential to ensure that machine learning models are equitable and unbiased toward specific populations. Hence, it would be very beneficial to test our proposed model on open-access datasets with data collected from diverse regions worldwide and varied demographics. Unfortunately, at this stage, there are no publicly available video datasets for stroke detection. Additionally, due to the limited number of TIA patients in the dataset, the proposed model is trained for binary classification, where the positive class is formed by stroke and TIA patients together. In future work, we plan to increase the number of TIA patients in the dataset so that the proposed model can be trained to discriminate stroke, TIA, and healthy controls as a multi-class problem. The ultimate goal is to regress the NIHSS values both globally (as a total score) and partially (as a sum of the partial scores) using a dataset in which the NIHSS protocol is fully completed.

VII. CONCLUSIONS

This study contributes to the standardization of pre-hospital stroke assessment tests with the proposed machine learning-based approach. The current state of the art in automatic stroke detection from examination videos is rather limited. Hence, we propose a motion-aware and multi-attention fusion network, namely MAMAF-Net, to overcome the existing issues in stroke diagnosis. In healthcare, achieving a reliable diagnosis with high sensitivity is crucial for patient treatment. Hence, in addition to stroke patients, we include TIA patients in the training and evaluation of MAMAF-Net to avoid missing any possible future ischemic strokes. Moreover, our proposed network does not rely on face detection or facial and body landmark detection algorithms, and is therefore not affected by the errors that such algorithms may introduce. Experimental results show that the proposed MAMAF-Net achieves the highest AUC score, 95.33%, among the compared state-of-the-art deep classifiers. Consequently, the proposed MAMAF-Net can be deployed in a smartphone application, where stroke assessment can be performed in the absence of neurologists in emergency situations. Moreover, the proposed approach can be applied to a variety of healthcare problems, such as diagnosing epileptic seizures and Parkinson's disease from video recordings.

ACKNOWLEDGMENTS

The authors would like to thank the study nurses Riitta Laitala, Saara Haatanen, Matti Pasanen, and Tanja Kumpulainen, research assistant Jari Paunonen, and senior scientist Timo Urhemaa for their contributions to data collection.

REFERENCES

[1] C. W. Tsao, A. W. Aday, Z. I. Almarzooq, A. Alonso, A. Z. Beaton, M. S. Bittencourt, A. K. Boehme, A. E. Buxton, A. P. Carson, Y. Commodore-Mensah et al., “Heart disease and stroke statistics—2022 update: a report from the American Heart Association,” Circulation, vol. 145, no. 8, pp. e153–e639, 2022.

[2] V. L. Feigin, M. Brainin, B. Norrving, S. Martins, R. L. Sacco, W. Hacke, M. Fisher, J. Pandian, and P. Lindsay, “World Stroke Organization (WSO): global stroke fact sheet 2022,” Int. J. Stroke, vol. 17, no. 1, pp. 18–29, 2022.

[3] T. M. Gill, E. A. Gahbauer, L. Leo-Summers, and T. E. Murphy, “Recovery from severe disability that develops progressively versus catastrophically: incidence, risk factors, and intervening events,” J. Am. Geriatr. Soc., vol. 68, no. 9, pp. 2067–2073, 2020.

[4] S. E. Andrade, L. R. Harrold, J. Tjia, S. L. Cutrona, J. S. Saczynski, K. S. Dodd, R. J. Goldberg, and J. H. Gurwitz, “A systematic review of validated methods for identifying cerebrovascular accident or transient ischemic attack using administrative data,” Pharmacoepidemiol. Drug Saf., vol. 21, pp. 100–128, 2012.

[5] A. Unnithan and P. Mehta, “Hemorrhagic stroke [Updated 2022 Feb 5],” StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing, 2022.

[6] World Health Organization et al., “WHO STEPS stroke manual: the WHO STEPwise approach to stroke surveillance,” 2005.

[7] P. Amarenco, “Transient ischemic attack,” N. Engl. J. Med., vol. 382, no. 20, pp. 1933–1941, 2020.

[8] D. Birenbaum, L. W. Bancroft, and G. J. Felsberg, “Imaging in acute stroke,” West. J. Emerg. Med., vol. 12, no. 1, p. 67, 2011.

[9] R. Jeena, A. Sukeshkumar, and K. Mahadevan, “Retina as a biomarker of stroke,” in Comp. Aid. Interv. Diag. Clin. Med. Img., 2019, pp. 219–226.

[10] F. Erani, N. Zolotova, B. Vanderschelden, N. Khoshab, H. Sarian, L. Nazarzai, J. Wu, B. Chakravarthy, W. Hoonpongsimanont, W. Yu, B. Shahbaba, R. Srinivasan, and S. C. Cramer, “Electroencephalography might improve diagnosis of acute stroke and large vessel occlusion,” Stroke, vol. 51, no. 11, pp. 3361–3365, 2020.

[11] M. Kaur, S. R. Sakhare, K. Wanjale, and F. Akter, “Early stroke prediction methods for prevention of strokes,” Behav. Neurol., vol. 2022, 2022.

[12] S. E. Kasner, J. A. Chalela, J. M. Luciano, B. L. Cucchiara, E. C. Raps, M. L. McGarvey, M. B. Conroy, and A. R. Localio, “Reliability and validity of estimating the NIH stroke scale score from medical records,” Stroke, vol. 30, no. 8, pp. 1534–1537, 1999.

[13] B. C. Meyer and P. D. Lyden, “The modified National Institutes of Health Stroke Scale: its time has come,” Int. J. Stroke, vol. 4, no. 4, pp. 267–273, 2009.

[14] C. Warlow, “Epidemiology of stroke,” Lancet, vol. 352, pp. S1–S4, 1998.

[15] M. Yu, T. Cai, X. Huang, K. Wong, J. Volpi, J. Z. Wang, and S. T. Wong, “Toward rapid stroke diagnosis with multimodal deep learning,” in Med. Image Comput. Comput. Assist. Interv. (MICCAI), 2020, pp. 616–626.

[16] T. Lee, E.-T. Jeon, J.-M. Jung, and M. Lee, “Deep-learning-based stroke screening using skeleton data from neurological examination videos,” J. Pers. Med., vol. 12, no. 10, p. 1691, 2022.

[17] G. Zhu, H. Chen, B. Jiang, F. Chen, Y. Xie, and M. Wintermark, “Application of deep learning to ischemic and hemorrhagic stroke computed tomography and magnetic resonance imaging,” Semin. Ultrasound CT MR, vol. 43, no. 2, pp. 147–152, 2022.

[18] S. A. Sheth, V. Lopez-Rivera, A. Barman, J. C. Grotta, A. J. Yoo, S. Lee, M. E. Inam, S. I. Savitz, and L. Giancardo, “Machine learning–enabled automated determination of acute ischemic core from computed tomography angiography,” Stroke, vol. 50, no. 11, pp. 3093–3100, 2019.

[19] Y.-T. Chen, Y.-L. Chen, Y.-Y. Chen, Y.-T. Huang, H.-F. Wong, J.-L. Yan, and J.-J. Wang, “Deep learning-based brain computed tomography image classification with hyperparameter optimization through transfer learning for stroke,” Diagnostics, vol. 12, no. 4, 2022.

[20] S. Zhang, S. Xu, L. Tan, H. Wang, and J. Meng, “Stroke lesion detection and analysis in MRI images based on deep learning,” J. Healthc. Eng., vol. 2021, pp. 1–9, 2021.

[21] Y. Yu, S. Christensen, J. Ouyang, F. Scalzo, D. S. Liebeskind, M. G. Lansberg, G. W. Albers, and G. Zaharchuk, “Predicting hypoperfusion lesion and target mismatch in stroke from diffusion-weighted MRI using deep learning,” Radiology, p. 220882, 2022.

[22] B. R. Gaidhani, R. R. Rajamenakshi, and S. Sonavane, “Brain stroke detection using convolutional neural network and deep learning models,” in 2019 2nd Int. Conf. Intell. Commun. Comput. Techniques (ICCT), 2019, pp. 242–249.

[23] Z. G. Al-Mekhlafi, E. M. Senan, T. H. Rassem, B. A. Mohammed, N. M. Makbol, A. A. Alanazi, T. S. Almurayziq, and F. A. Ghaleb, “Deep learning and machine learning for early detection of stroke and haemorrhage,” Comput. Mater. Contin., vol. 72, no. 1, pp. 775–796, 2022.

[24] R. Jeena, G. Shiny, A. Sukesh Kumar, and K. Mahadevan, “A comparative analysis of stroke diagnosis from retinal images using hand-crafted features and CNN,” J. Intell. Fuzzy Syst., vol. 41, no. 5, pp. 5327–5335, 2021.

[25] S. Pachade, I. Coronado, R. Abdelkhaleq, J. Yan, S. Salazar-Marioni, A. Jagolino, C. Green, M. Bahrainian, R. Channa, S. A. Sheth, and L. Giancardo, “Detection of stroke with retinal microvascular density and self-supervised learning using OCT-A and fundus imaging,” J. Clin. Med., vol. 11, no. 24, 2022.

[26] R. Jeena, A. Sukesh Kumar, and K. Mahadevan, “Stroke diagnosis from retinal fundus images using multi texture analysis,” J. Intell. Fuzzy Syst., vol. 36, no. 3, pp. 2025–2032, 2019.

[27] Y.-A. Choi, S.-J. Park, J.-A. Jun, C.-S. Pyo, K.-H. Cho, H.-S. Lee, and J.-H. Yu, “Deep learning-based stroke disease prediction system using real-time bio signals,” Sensors, vol. 21, no. 13, 2021.

[28] S. Kumar and A. Sengupta, “EEG classification for stroke detection using deep learning networks,” in 2022 2nd Int. Conf. Emerg. Front. Elec. Electron. Technol. (ICEFEET), 2022, pp. 1–6.

[29] A. F. Nurfirdausi, S. K. Wijaya, P. Prajitno, and N. Ibrahim, “Classification of acute ischemic stroke EEG signal using entropy-based features, wavelet decomposition, and machine learning algorithms,” AIP Conf. Proc., vol. 2537, no. 1, p. 050003, 2022.

[30] G. Tarkanyi, P. Csecsei, I. Szegedi, E. Feher, A. Annus, T. Molnar, and L. Szapary, “Detailed severity assessment of Cincinnati Prehospital Stroke Scale to detect large vessel occlusion in acute ischemic stroke,” BMC Emerg. Med., vol. 20, pp. 1–6, 2020.

[31] P. Lyden, “Using the National Institutes of Health Stroke Scale,” Stroke, vol. 48, no. 2, pp. 513–519, 2017.

[32] B. Gebotys, A. Wong, and D. A. Clausi, “M2A: Motion aware attention for accurate video action recognition,” in 2022 19th Conference on Robots and Vision (CRV). IEEE, 2022, pp. 83–89.

[33] A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin, “Attention is all you need,” Adv. Neural Inf. Process. Syst., vol. 30, 2017.

[34] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), 2016, pp. 770–778.

[35] G. Huang, Z. Liu, L. Van Der Maaten, and K. Q. Weinberger, “Densely connected convolutional networks,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR), 2017, pp. 2261–2269.
