Backward Feature Correction: How Deep Learning Performs Deep (Hierarchical) Learning

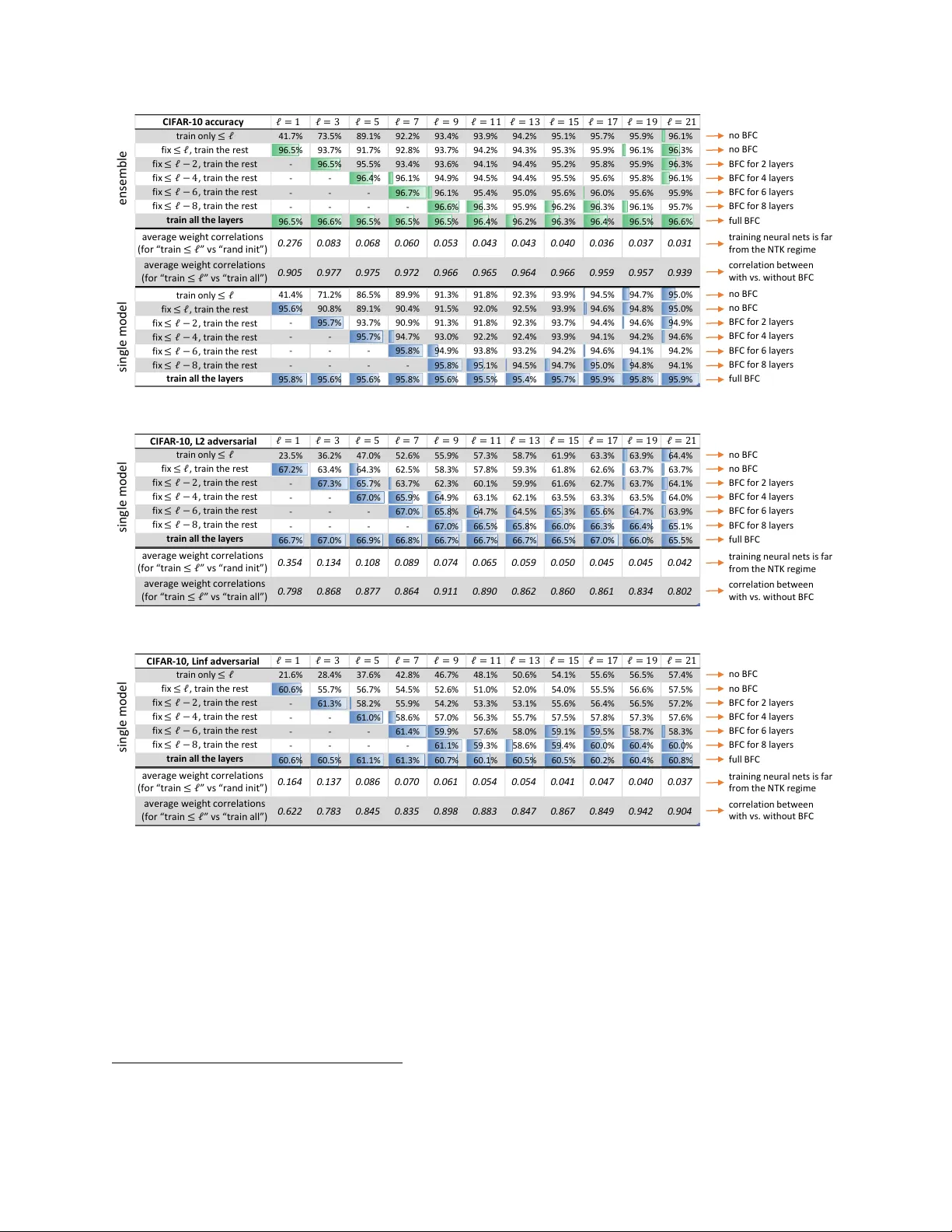

Deep learning is also known as hierarchical learning, where the learner _learns_ to represent a complicated target function by decomposing it into a sequence of simpler functions to reduce sample and time complexity. This paper formally analyzes how …

Authors: Zeyuan Allen-Zhu, Yuanzhi Li