High-dimensional sparse vine copula regression with application to genomic prediction

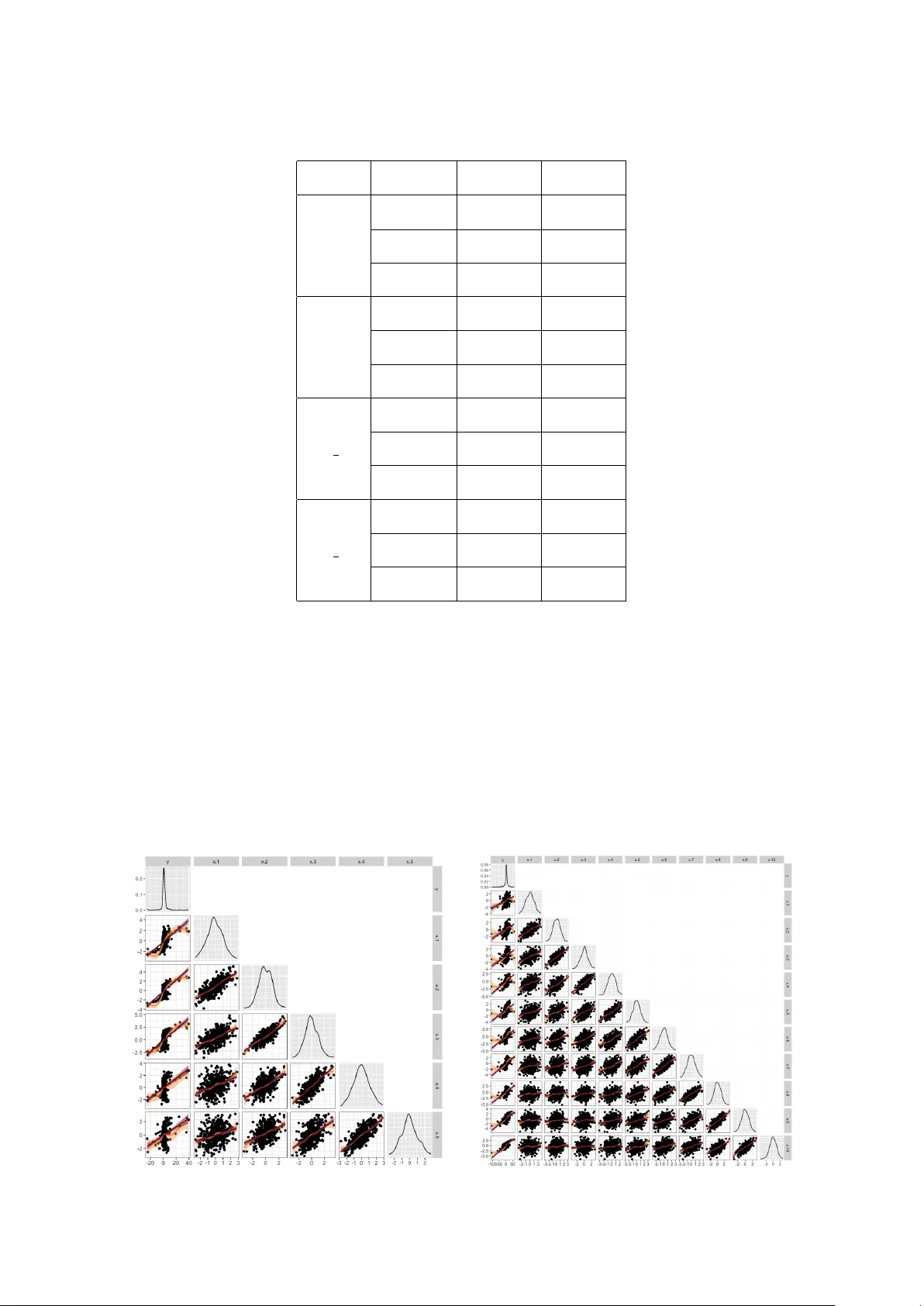

High-dimensional data sets are common in genome-enabled prediction. Such data sets contain nonlinear relationships with complex dependence structures. In such settings, vine copula-based (quantile) regression is an important tool. Howeve…

Authors: Özge Sahin, Claudia Czado