Sentiment Analysis on Speaker Specific Speech Data

Sentiment analysis has evolved over past few decades, most of the work in it revolved around textual sentiment analysis with text mining techniques. But audio sentiment analysis is still in a nascent stage in the research community. In this proposed …

Authors: : John Smith, Jane Doe, Michael Johnson

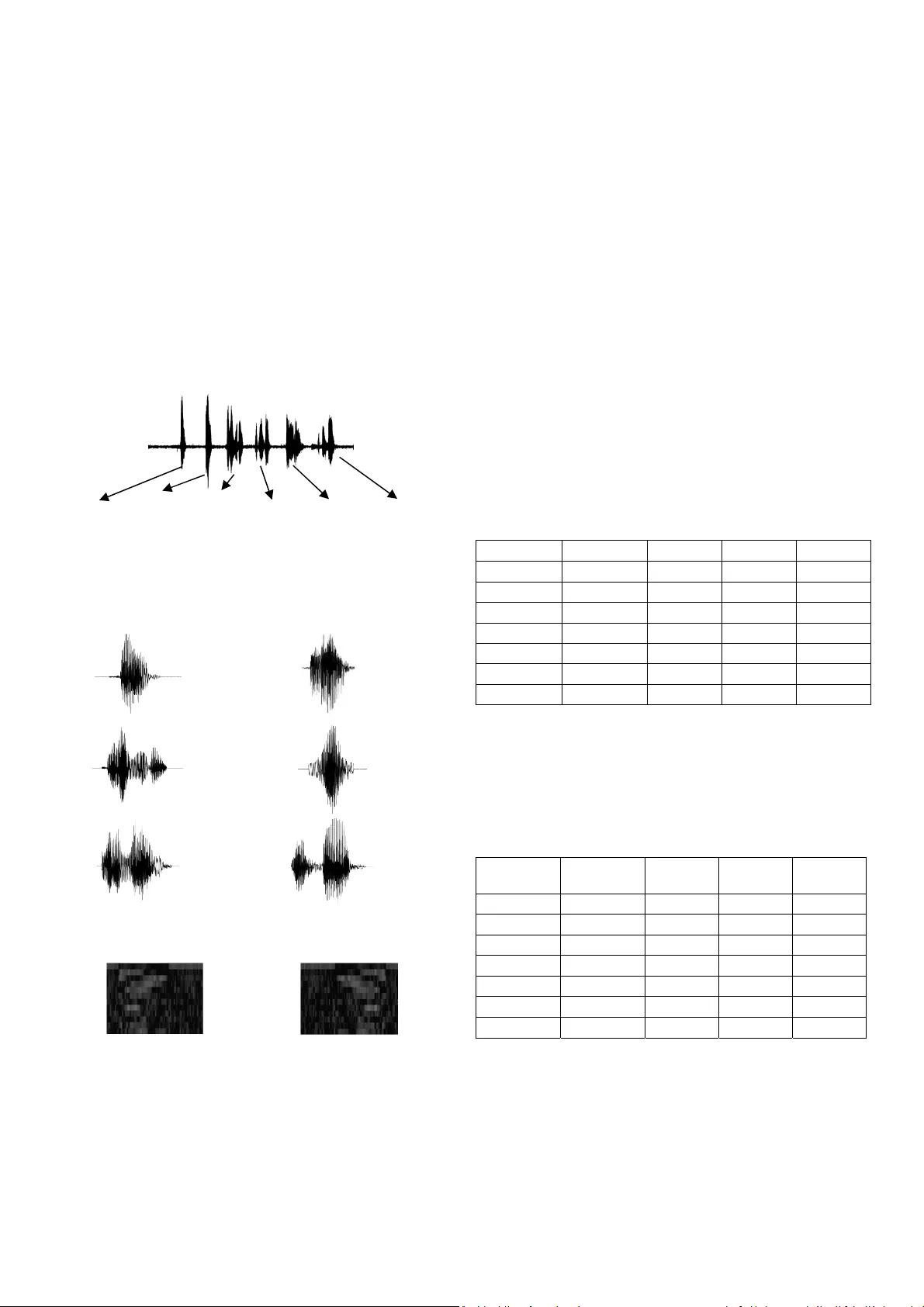

2017 Internati onal Conferen ce on Intelli gent Computing and Control ( I2C2) Sentiment Analysis on S peaker Specific Speech Data Maghilnan S, Rajesh Kum ar M, Senior I EEE, Mem ber School of Electron ic Engineering VIT University Tamil Nadu, Indi a maghi lnan.s2013@vi t.ac.in, mrajeshk umar@vit.ac.in Abstract — Senti ment anal ysi s has evolve d over past f ew deca des, most of the w or k in it revol ved ar oun d tex tual senti ment analy sis wi th tex t mining tec hni ques . B ut audi o se nti ment a naly si s is still in a nascent stage in the resear ch community. In this propose d resea rch, w e perform se nti ment a naly sis on speaker discri m in ated speech transcri pts to detect the emotions of the individ ual speake rs involv ed in the conver sation. We a nalyzed diff ere nt tec h nique s t o per fo r m spea ker disc ri minat io n a nd sentiment analysis to find efficient a lgorithms to perform this task. Index Ter ms — S en timen t An alys is, S peak er R eco gnit ion, Speech Recog nition, M FCC, DTW. I. I NTRODUCTION Sentimen t Analy sis is th e st udy of people’ s em otion o r attitude towar ds a event, convers ation on to pics o r in g eneral . Sentimen t analys is is used in various a pplicati ons, here w e use it to com prehend the mindset of h uman s based on thei r conversations w ith each other . For a m achine to understan d the mi ndset/ mood of th e hum ans thro ugh a conversat ion, it ne eds to know w ho are in terac ting in the convers ation an d w hat is spok en, so we im plem ent a speaker and s peech rec ognition sy stem f irst an d pe rform sen tim ent analy sis on the da ta ext ract ed from pr ior p rocesse s. Underst anding the mood of humans ca n be very useful in many instances . For exam ple, compute rs that posses s the abil ity to perceive a nd respond to human non-lexi cal communic ation such as em otions. In su ch a cas e, afte r d etecting h um ans’ emotion s, the m achin e coul d cust omize the set tings ac cordi ng his/her ne eds and pre feren ces. The res earch comm u nity h as w orked on tran sfo rmin g audi o materia ls such as songs, debates, news, political arguments, to text. And the co mmunity al so worked on audio ana lysis investi gation [1,2,3 ] to study custo mer service p hone conver satio ns and ot her conve rsat ions whic h i nvolved more than one spe aker. Since t here is more t han o ne speaker invol ved in the conv ersati on it becom es clumsy to do analy sis on the audio reco rdi ngs, so in this paper we propo se a system whic h w ould be aware of the spe aker ident ity and perf orm au di o analy sis fo r indiv idual speak ers an d re port th eir em otion. The ap proach f oll owed in the pa per inv estig ates th e challen ges’ and m ethods to pe rform audi o s entimen t analy sis on audi o recor dings us ing speech recogn ition and spe aker recogn ition . We use s peech recog nition tools to t ransc ribe the audio recor dings a nd a proposed spe aker discri mination method based o n certain hypo thesis to ide ntify the speake rs invol ved in a convers ati on. Furth er, sen tim ent analy sis is perform ed on the speaker s pecific spe ech data w hich enables the m achine to unders tand w hat th e hu man s w ere talkin g abou t an d h ow th ey feel. Sect ion-II discusse s the theory behi nd Speaker, Spe ech Recognit ion and S entim ent An alys is is discu ssed . Sect ion- III contain s ex planati on about th e pro posed s ys tem. Secti on -IV contain s de tails about the expe rimen tal setu p an d Secti on- V presents resul t obt ained an d detail ed analy sis. The work is concl uded in Sectio n-VI. II. R ELA T ED W ORK AND B ACKGROUND A. Sentiment Ana lysis: Sentim ent Analy sis, shortly referr ed as SA , wh ich identif ies the sen timen t expres sed in a te xt then analy ses it to f ind w hethe r document e xpresse s positive or negat ive senti ment. Majo rity of work on sentim ent an alysis has focu sed on meth ods such as Naiv e Bayesia n, decisio n tree, support ve ctor machi ne, maximu m entrop y [1,2,3] . In the wor k done by Mostafa et al [4] the senten ces in each d ocumen t are labell ed as s ubje ctive an d objectiv e (disca rd the ob jectiv e part) an d then clas sical machin e learnin g tech niques are a ppli ed f or the su bjectiv e pa rts. S o t hat the polar ity classifier ignore s the irrelevant or misleadi ng terms. Since coll ecting and la belling the data is tim e consum ing at t he sentence lev el, th is a pproach is not easy to tes t. To pe rform sentim ent analy sis, we h ave used the f ollow ing m ethods – Naiv e Bayes, Linear Support Ve ctor Ma chines, VADER [6]. And a compa rison is made t o fin d the efficien t a lgorithm for our pur pose . Text ( Parag raph / Sent ence ) Feature Extr actio n Da t ab ase Textual Classi fier s Po s itiv e Ne utr al Ne ga t ive Fig . 1 . Fr amewor k of G ener ic Sentim ent A nalysis S ys tem 2017 Internati onal Conferen ce on Intelli gent Computing and Control ( I2C2) Pr e - Pr o c e s s i ng Fe a t u r e E x tr a c ti o n Co m p a r i s o n O u t put ( Sp e a k e r I d ) Pr e - Pr o c e s s i ng Sp e e c h Mo d e l O u t put ( Sp e e c h Tr an s c r i b e s ) Pa r s i n g Ou t p u t a s te xt u a l di a l og u e Se nti ment Mo d e l In pu t (Sp e e c h Si g n a l ) SPE A K ER DI SCR IM INAT ION : SPEECH RECOGNIT ION: Positi v e Ne utra l Ne ga ti v e Fig . 2 . Propos ed St ru cture f or th e Sent imen t Analysi s Syst em B. Speech Recognition: Speech recognition is the a bility g iven t o a m achine or progra m to identify words and phrases in language spoke n by hum ans and c onvert them to a m achine-rea dabl e form at, w hich can be fur ther used for pro cessing. In thi s paper, we have used speec h reco gnitio n tool s such a s Sphi nx4 [5 ], B ing Sp eech, Google S peech Rec ognition. A com parison is made an d the best suite f or the pro posed model is ch osen. C. Speaker Recognition: Ident ifying a human based on the vari ations and unique charact eristi cs in th e v oice is refer red t o speake r rec ognition . I t has acq uired a lo t of at tention fro m the rese arc h communit y for almost eight decades [7]. Speech as si gnal contain s sev eral feature s which can extra ct linguistic, emotional , speaker spe cific information [8], speak er recogniti on harnesses the speak er spec ific fe ature s from the speech si gnal. In this paper , Mel F requen cy Cepst rum Coeffi cient ( MFCC) is used for designing a sp eaker discriminant syste m. The MFCC’s for spe ech sam ples f rom various speak ers a re extr act ed and com p ared w ith each other to fin d the si milari ties b etween the spe ech sam p les. 1) F ea tur e Extr act ion : The e xtractio n of unique speaker d iscri minant feature is import ant to ach ieve a bet ter a ccuracy rate. The accur acy of this phase is impo rtant t o the n ext phase , becau se it acts as the in put for the ne xt pha se. MFCC — Humans p ercei ve audio i n a nonlinear sc ale, MFCC tri es to re plica te the h um an ear as a m athematical m odel . The ac tual a coust ic fre quen cies a re m apped to Mel frequ enci es which ty p ically rang e betw een 300Hz to 5KHz. The Mel sca le is linea r below 1 KHz an d log arithmic above 1KHz. MFC C Constants sign ifies the ene rgy associate d w ith each Mel bin , which is uni que t o every s peaker . This uniquenes s en ables u s to identi fy speakers base d on their v oice [9]. 2) Fe ature M atching: Dynami c T ime Wrap pin g(DT W) — St an Salvado r et al [7] describe s DTW algorit hm as Dynamic P rogramming techni ques. This alg orithm measures th e sim ilarity betw een tw o time s eries w hich varies in spe ed or tim e. This tech niqu e is al so used t o find the optim al align men t between the tim es series if one time ser ies may be “ warped” no n-linear ly by stretching or shrinking it along its time axis . This warping between tw o tim e series ca n then be used to find corre sponding re gions betwee n the tw o time series or to det ermin e the similari ty betw een the two tim e series . T he princi ple of DTW is to c ompare t w o dyn amic patt erns an d m easure its sim ilarity by calculatin g a min imum dist ance betw een them . Once th e tim e series is w r apped, vari ous dist ance/s imilari ty compu tation meth ods s uch as Euc lide an dis tance , Ca nberr a Distanc e, C orrelat ion c an be used . A compa rison betw een these methods is shown in results se ction. III. P ROPOSED S YSTEM In thi s pape r, w e pr opos e a model f or sen tim ent analy sis th at utilizes fe atures extract ed from the speech signal to detect the emoti ons o f the speaker s involv ed in the conver sati on. The process invo lves four steps: 1) Pre-processing which inc ludes VAD, 2) Speech Reco gnition System, 3) Speaker Re cognit ion System, 4 ) Sentim ent Analy sis System . The input sign al is pass ed to the V oice Activi ty Detec tion Syst em, which identifi es and segreg ates the v o ices f rom th e signal. T he voices are stored as chunks in the databa se, the chunks are the n passed to speech reco gnitio n and speaker discr iminatio n system for recogniz ing the content and speaker Id. Spea ker reco gnition syste m tags the chunks with t he spea ker ids, it should be noted t hat the system works in an unsuper vised fashio n, i.e. it would find weat her the c hunks are fro m same speake r or d iffere nt and tag it as ‘Spea ker 1’ and ‘Spea ker 2’. The spee ch recogni tion system transc ribes the chunks to text . The system further matches the S peake r Id w ith the transcrib ed text. I t is stor ed as d ialo gue in the datab ase. T he text o utput from the spee ch rec ogniti on sy stem specific t o indivi dua l speak er serves as potential fe ature to e stimate s entim ent emphasiz ed by 2017 Internati onal Conferen ce on Intelli gent Computing and Control ( I2C2) the indi vidual spea ker. The entire pro cess is depicte d pictori ally in Figure 2. IV. E XPERIMENTA L S ETU P A. Dataset Our da tase t co mprise s of 21 aud io fil es rec orded in a co ntroll ed enviro nment [10] . Three di fferent scrip ts are used as conversat ion betw een two peoples . Seven speak ers are tot ally involved in these rec ordings, 4 males and 3 fem ales. The conver satio ns are prelab elled depend ing upon the sce nario. The audio is sa mpled at 16KHz and reco rded as m ono t racks for an averag e of 10 seconds. A dataset sample is shown in Figure 3 Sample 1 Hi Hello How was your day? It was good How was yours ? It was bad Fig . 3 . Sample Waveform Chunk 1 Chunk2 Chunk 3 Chunk 4 Chunk 5 Chunk 6 Fig . 4 . After segmenta tion with VAD Fig . 5 . MFCC feature of Chunk1 and C hunk2 B. Experiment s and Evaluat ion Me trics: The pr opose d sy stem uses speech, s peaker re cogni tion and sentimen t analys is. We have presente d a deta iled ana lysis f or the experi ments performed with vario us tools and a lgorithms. The tool s used for speech reco gnition are Sp hinx4, Bing Spee ch API, Googl e Speech API. And perfor mance metr ic used w as WWR. For s peake r recog ni tion, w e used MFC C as featu re an d D TW with vario us distance co mputatio n methods such as Eucl idean, Corr elatio n, Ca nberra for featur e matc hing. And reco gnitio n rate is used as the performan ce m etric. For sentim ent an alysis, standard sentiment a nalysis data sets viz. twitter datase t, product review dataset [6] are u sed to com mu te the ac curacy of the system. V. R ESU LTS A. Results for Automatic Speech Recognition Engine : Fir st, the au dio file s from the da tas et are co nverted to te xt files thro ugh di ffere nt speec h reco gnition to ols. Tabl e 1 shows the W RR obtained for various scripts which were spoke n by diffe rent spea kers. M1 re fers to Male spe aker 1, simila rly F1 refers to Female speaker 1. Th e WRR is giv en as percenta ge value s. TA BL E I. WRR for Sphi nx4: Speaker 1 Speaker2 Script1 Script 2 Scrip t3 M1 M2 46.67 23.0 8 62.5 0 M2 M3 33.33 30.7 7 18.7 5 M3 M1 26.67 23.0 8 25.0 0 M2 M4 53.33 30.7 7 56.2 5 F1 F2 46.67 38.4 6 31.2 5 F2 F3 26.67 30.7 7 37.5 0 F3 F1 33.33 38.4 6 25.0 0 Table 2 , ta bul ates the WR R obta in ed by using Go ogl e Speech A P I to transc ribe the s p eech s ignals . Th e sam e datas et is used i .e. the s ame scripts an d the s ame pe rsons are us ed to compare the results . This is done to validate to to ols in equal basis . TA BL E II . WRR for Google Sp eech AP I: Speake r 1 Speake r 2 Script 1 Scr ipt2 Scrip t3 M1 M2 93.33 84.6 2 81.2 5 M2 M3 86.67 92.3 1 75.0 0 M3 M1 86.67 84.6 2 43.7 5 M2 M4 80.00 76.9 2 68.7 5 F1 F2 86.67 84.6 2 81.2 5 F2 F3 93.33 84.6 2 37.5 0 F3 F1 80.00 92.3 1 75.0 0 Similarly , Table 3, has WRR obtained for the sam e dataset but by using Bing Speech API. 2017 Internati onal Conferen ce on Intelli gent Computing and Control ( I2C2) TABLE III. WRR f or Bi ng Spe ech: Speake r 1 Speake r 2 Script 1 Scr ipt2 Scrip t3 M1 M2 100.00 92.3 1 87.50 M2 M3 93.33 84.6 2 87.5 0 M3 M1 86.67 92.3 1 93.7 5 M2 M4 86.67 84.6 2 81.2 5 F1 F2 80.00 84.6 2 93.7 5 F2 F3 93.33 92.3 1 87.5 0 F3 F1 86.67 76.9 2 93.7 5 The ave rage of the WRR obtai ned fr om the previ ous tab le is given in Tab le 4. TA BL E IV . Averag e: Speech Engine Average for Script 1 Average for Script 2 Average for Script 3 Average Sphinx4 38.10 30.7 7 36.6 1 35.1 6 Google Speech API 86.67 85.7 1 66.0 7 79.4 8 Bing Speech API 89.52 86.8 1 89.2 9 88.5 4 B. Results f or Speaker Dis crimination Syst em: The ac curacy of th e speak er identif ication w ith respect ive t o the n um ber of fe atu res is illust rate d in Fi gure 5. The num ber of featur es are varie d fr om 1 to 26. Dy nam ic Tim e Wrappin g is used as the f eatur e map ping t echni que in our r esear ch. Va rio us distan ce com mu tation m ethods such as Eu clidean , Canberra are with DTW and c ompare d. The ac curacy vs number of featu res graph i s shown in Graph3. It is noted that the system is highly accurat e w hen w e used 12 – 14 f eatur es, h ence w e took 13 featur es to proc ess in the s yst em . Fig . 6 . Accuracy vs Number of Featu res C. Results for Sentiment Analysis System: Table 5, sh ow s the accu racy of a diff erent alg orithm s used for sentim ent ana lysis such as Naive Bayes, L inear SV M, VADER. TA BL E V . Accur acy S entime nt Method Twitter Data set Movie Re view Naive Baye s 84 72.8 Linear SVM 88 86.4 VADER 95.2 96 VI. C ONCLUS ION AND F UTU RE W ORK This wor k presents a genera lized mode l that takes an audio which c ontai ns a co nversat ion be tween two peop le as inp ut and studies the content an d speak ers’ identity by aut omatical ly conver ting the audio into text and b y per forming sp eaker reco gnition. In this resea rch, we ha ve proposed a simple system to do the above-m entioned task. The system works well with the artifi ciall y generated datase t, w e ar e working on col lecting a larger d ataset an d incr easing th e scala bility of the sy stem. Though the system is accurate in comprehending the sentiment of th e sp eakers in convers ati onal di alog ue, i t suf fe rs som e fla ws, right n ow the sy stem can han dle a conve rsati o n be tween tw o speakers and in the c onversati on only one s peaker sh o uld talk at a given time, it cannot unders tand if two people talk simultaneo usly. Our fut ure work would addres s these issues and improve th e accu racy an d scal ability of the sy stem. R EFERENCES [1] Pang, B., & Lee , L. (2004, July ). A sentim ental educa tion: Sentime nt analysis using subjectivity summarization based o n minimu m cuts. In Proceedi ngs of the 4 2nd ann ual meeting o n Assoc iation for Computa tional Ling uistics ( p. 271). A ssocia tion for Com putational L inguistic s. [2] Pang, B., & Lee , L. (200 5, June ). Se eing st ars: Ex ploiting class relationships f or sentim ent categorization w ith respect to rating scales. In P ro ceedings of th e 43rd a nnual meeting on assoc iation for c omputationa l linguistic s (pp. 1 15-12 4). As sociation f or Computa tional Ling uistic s. [3] Pang, B ., L ee, L ., & Vait hy anathan, S. (20 02, July ). Thumbs up?: sentiment cl assificati on u sing mach ine l earnin g techniqu es. In Proceed ings o f the ACL-02 con ference on E mpirical met hods in natural la nguag e proce ssing- Volum e 10 (pp. 79-8 6). A ssocia tion for Com putational L inguistic s. [4] Shaikh, M., Prendinge r, H., & Mitsur u, I. (2007) . A ssess ing sentim ent of text by sema ntic depe ndency and con textua l vale nce analysis. Aff ective Computing a n d Intell igent Intera ction, 191- 202. [5] Walke r, W., Lame re, P., Kwok, P., Ra j, B., Si ngh, R., G ouvea, E., ... & Woelf el, J. ( 2004) . Sph inx-4: A flexible open source framework for speech reco gnition. [6] Hutto, C. J. , & G ilbert, E. (201 4, May ). Vader : A pa rsimonious rule-based model for sent iment analysis o f social medi a text. In 0 20 40 60 80 100 1 4 7 10 13 16 19 22 25 ACCURACY ( %) NUMBER OF FEATURES Canberra Correlati on Euclid ean 2017 Internati onal Conferen ce on Intelli gent Computing and Control ( I2C2) Eighth I nternati onal A AAI Confere nce on W eblogs a nd Socia l Media. [7] Salvador , S., & Chan, P. (200 4). FastDTW: Toward accurate dynamic ti me warpin g in linear t ime and space. 3 rd Wkshp. on Mining T emporal and Sequent ial Data, A CM KDD '04. Seattle , Was hington (A ugust 22- -25 , 2004). [8] Herbig, T ., Gerl, F., & Mink er, W . (2010, July ). Fast ada ptation of speech an d speaker ch aracteristics for en hanced speech recognition i n adverse intelligent e nvironme nts. In Intellige nt Environm ents ( IE), 2 010 Six th In ternationa l Conf erence on ( pp. 100-105). IEEE. [9] Kinnune n, T ., & Li , H. (201 0). An ov erv iew of text -independe nt speaker recogn itio n: From featu res to supervect ors. S peech comm unication, 5 2(1), 1 2-40. Ezzat, S. , El Gayar, N., & Ghanem, M. (201 2). Sentiment anal ysis of ca ll centre audio c onve rsations using text c lassi fication. I nt. J . Comput. I nf. Sy st. Ind. Manag . Appl, 4(1) , 619-6 27.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment