Semi-Supervised Speech Emotion Recognition with Ladder Networks

Speech emotion recognition (SER) systems find applications in various fields such as healthcare, education, and security and defense. A major drawback of these systems is their lack of generalization across different conditions. This problem can be s…

Authors: Srinivas Parthasarathy, Carlos Busso

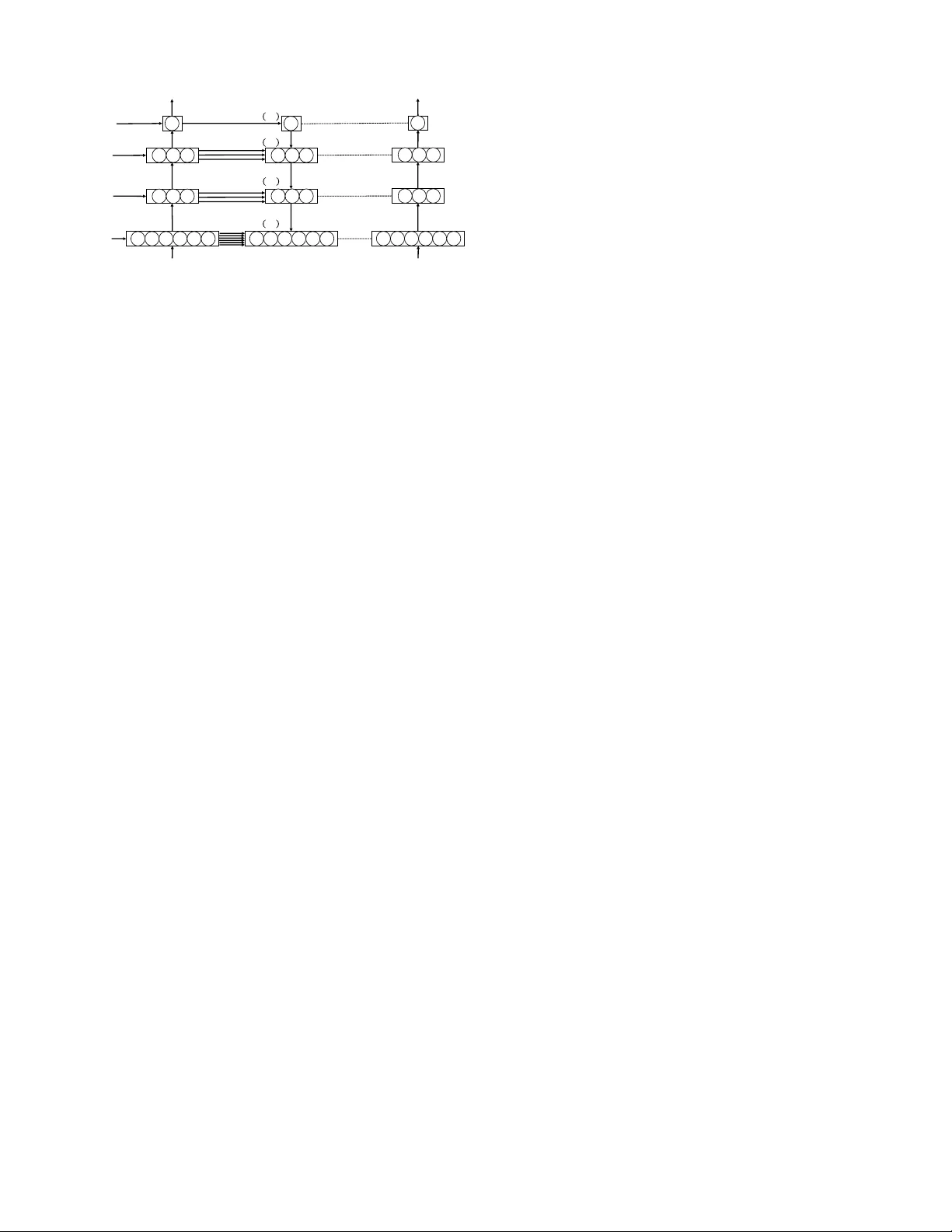

IEEE/ACM TRANSACTIONS ON AUDIO, SPEECH, AND LANGUAGE PROCESSING, VOL. XX, NO. X, MAY 2019

Srinivas Parthasarathy, Student Member, IEEE, Carlos Busso, Senior Member, IEEE

Abstract: Speech emotion recognition (SER) systems find applications in various fields such as healthcare, education, and security and defense. A major drawback of these systems is their lack of generalization across different conditions. For example, systems that show superior performance on certain databases show poor performance when tested on other corpora. This problem can be solved by training models on large amounts of labeled data from the target domain, which is expensive and time-consuming. Another approach is to increase the generalization of the models. An effective way to achieve this goal is by regularizing the models through multitask learning (MTL), where auxiliary tasks are learned along with the primary task. These methods often require the use of labeled data which is computationally expensive to collect for emotion recognition (gender, speaker identity, age or other emotional descriptors). This study proposes the use of ladder networks for emotion recognition, which utilize an unsupervised auxiliary task. The primary task is a regression problem to predict emotional attributes. The auxiliary task is the reconstruction of intermediate feature representations using a denoising autoencoder. This auxiliary task does not require labels, so it is possible to train the framework in a semi-supervised fashion with abundant unlabeled data from the target domain. This study shows that the proposed approach creates a powerful framework for SER, achieving better performance than fully supervised single-task learning (STL) and MTL baselines. The approach is implemented with several acoustic features, showing that ladder networks generalize significantly better in cross-corpus settings.
Compared to the STL baselines, the proposed approach achieves relative gains in concordance correlation coefficient (CCC) between 3.0% and 3.5% for within corpus evaluations, and between 16.1% and 74.1% for cross corpus evaluations, highlighting the power of the architecture.

Index Terms: Semi-supervised emotion recognition, ladder networks, speech emotion recognition.

I. INTRODUCTION

Recognizing emotions is a key feature needed to build socially aware systems. Therefore, it is an important part of human computer interaction (HCI). Emotion recognition can play an important role in various fields such as healthcare (mood profiles) [1], education (tutoring) [2] and security and defense (surveillance) [3]. Speech emotion recognition (SER) has enormous potential given the ubiquity of speech-based devices. However, it is important that SER models generalize well across different conditions and settings, showing robust performance.

S. Parthasarathy was with the Erik Jonsson School of Engineering & Computer Science, The University of Texas at Dallas, TX 75080 USA (e-mail: sxp120931@utdallas.edu). C. Busso is with the Erik Jonsson School of Engineering & Computer Science, The University of Texas at Dallas, TX 75080 USA (e-mail: busso@utdallas.edu). Manuscript received May 7, 2019; revised xxxx xx, xxxx.

Conventionally, emotion recognition systems are trained with supervised learning solutions. The generalization of the models is often emphasized by training on a variety of samples with diverse labels [4]. The state-of-the-art models for standard computer vision tasks utilize thousands of labeled samples. Similarly, automatic speech recognition (ASR) systems are trained on several hundred hours of data with transcriptions. Generally, labels for emotion recognition tasks are collected with perceptual evaluations from multiple evaluators. The raters annotate samples by listening to or watching the stimulus.
This evaluation procedure is cognitively intense and expensive. Therefore, standard benchmark datasets for SER have a limited number of sentences with emotional labels, often collected from a limited number of evaluators. This limitation severely affects the generalization of the systems.

An alternative approach to increase the generalization of the models is by building robust models. An effective approach to achieve this goal is with multitask learning (MTL) [5], where relevant auxiliary tasks are simultaneously solved along with the primary task. By solving relevant auxiliary tasks, the models are regularized by finding more general high-level feature representations that are still discriminative for the primary task. Multitask learning has been successfully used for emotion recognition tasks [6]–[9]. While these MTL methods have achieved promising results, most of the proposed solutions have focused on MTL problems that utilize supervised auxiliary tasks. Examples include gender recognition [9], [10], speaker information [9], other emotional attributes [7], [8] and secondary emotions [6]. This approach requires the use of meta labels, which further limits the training of the models. In many scenarios, it is possible to collect large amounts of data without labels from the target domain. It is important to build models that can effectively utilize unsupervised auxiliary tasks to regularize the model, leveraging these unlabeled recordings.

This study explores this idea with ladder networks, building upon our previous work [11]. The ladder network architecture is a framework that combines supervised tasks with unsupervised auxiliary tasks. These auxiliary tasks correspond to the reconstruction of feature representations at various layers in a deep neural network (DNN). In essence, the ladder network is a denoising autoencoder trained along with a supervised classifier or regressor that utilizes the encoded representation.
A distinctive feature of the framework is the set of skip connections between the corresponding encoder and decoder layers, which reduce the load on the encoder layers to carry the information needed for decoding. With this approach, the higher layers of the encoder learn discriminative representations for the supervised task. Furthermore, the reconstruction of the feature representations is completely unsupervised. This aspect of the model enables us to use the framework in a semi-supervised fashion, leveraging large amounts of data from the target domain without emotional labels.

This study provides a comprehensive analysis of auxiliary tasks for speech emotion recognition on the MSP-Podcast corpus [12]. The study focuses on regression problems, where the primary task is to predict the arousal, valence and dominance scores. The proposed implementation uses high-level descriptors (HLDs), computed at the speaking turn-level, as feature inputs. The evaluation compares the performance of the proposed ladder network framework for emotion recognition against single-task learning (STL) and MTL baselines. The proposed framework is tested in a fully supervised setting as well as in a semi-supervised setting using unlabeled data (around 10 times the amount of labeled data in the corpus). The experimental results show the benefits of the framework, obtaining state-of-the-art performance on this speech emotional corpus. This study also examines the performance of the proposed architectures for cross-corpus experiments, where the models are trained on the MSP-Podcast corpus and tested on other popular databases for SER tasks (USC-IEMOCAP and MSP-IMPROV corpora). The proposed architectures achieve significant improvements in the cross-corpus experiments, validating the regularizing power of the proposed framework, leading to models that generalize better to unseen conditions.
The gains in performance are particularly clear when the approach is implemented in a semi-supervised fashion, adapting the models using unlabeled data from the target domain. Finally, this study replicates the proposed architecture for two frame-level feature inputs: (a) dynamic low-level descriptors (LLDs), and (b) Mel-frequency band (MFB) energies. While the HLDs are sentence-level feature representations, the LLDs and MFBs are frame-level feature representations. The models with ladder networks achieve significant improvements over the baselines in most cases.

The rest of the paper is organized as follows. Section II reviews studies on research areas that are relevant to this work. Section III presents the proposed architecture that exploits unsupervised auxiliary tasks to regularize the network. Section IV gives details on the experimental setup, including the databases and features used in this study. Section V presents the exhaustive experimental evaluations, showing the benefits of the proposed architecture. Finally, Section VI provides the concluding remarks, discussing potential areas of improvement.

II. BACKGROUND

This study uses the emotional attributes arousal, valence and dominance to describe emotions. SER systems for these problems are often built to recognize individual emotional attributes. Most frameworks are trained in a supervised fashion with labeled data. Given the limited size of most speech emotional databases, these supervised emotion recognition frameworks are commonly trained with a few hours of labeled data. Using unlabeled data is an interesting method to increase the generalization of the models to a new domain.

A. Semi-Supervised Learning

Previous studies for semi-supervised learning have considered the inductive learning technique, where a classifier is first trained on the labeled samples. The trained classifier is then used on the unlabeled set to obtain predictions.
The training set is then augmented with samples having highly confident predictions. The classifier is retrained with this augmented training set. This process is iterated a fixed number of times, after which the performance often saturates. Zhang et al. [13] used this inductive learning procedure for SER to leverage unlabeled data. They enhanced their supervised learning approach with this method, obtaining better predictions on labeled data [14], [15]. Cohen et al. [16], [17] proposed a similar strategy for facial expressions using probabilistic Bayesian classifiers.

Another approach for semi-supervised learning is the co-training or multi-view learning procedure [18]. In this method, the classifiers are trained on distinct feature partitions (views). The different classifiers are used for predictions on the unlabeled data, augmenting the training set with samples that are consistently recognized by the classifiers. Mahdhaoui and Chetouani [19] proposed multi-view training for SER using different sets of acoustic features. Similarly, Zhang et al. [20] utilized co-training along with active learning, where they only annotated emotional labels for samples where the predictions were made with low confidence by the multi-view classifiers. Liu et al. [21] proposed multi-view learning for SER, where they used temporal and statistical acoustic features. Studies have also considered multi-view learning by incorporating multiple modalities [10], [14], [15].

This study is more closely related to the recent advances in deep learning that combine supervised and unsupervised learning. Similar to our work, Deng et al. [22] proposed combining an autoencoder and a classifier for SER. Their framework is based on a discriminative Restricted Boltzmann Machine (RBM), which considers unlabeled samples as an extra garbage class in the classification problem. Huang et al.
[23] proposed learning affect sensitive features using a semi-supervised implementation of a convolutional neural network (CNN) for SER. In their study, general features are learned using an unsupervised CNN architecture, and then these features are fine-tuned for affect recognition. Similarly, Mao et al. [24] trained a CNN to learn salient features for SER. The CNNs were first trained on unlabeled samples using a sparse autoencoder and a reconstruction penalization. The invariant features were then used as inputs for learning affect sensitive feature representations. Our work follows these studies, further extending semi-supervised SER. Our study differs from previous studies by effectively training an autoencoder and a regressor together, such that the auxiliary task of reconstructing the input feature vector and intermediate feature representations helps the primary supervised learning task. Jointly training the autoencoder (auxiliary task) and the regression problem (primary task) is an important contribution leading to more discriminative SER models.

B. Auxiliary Tasks and Multitask Learning

There are multiple studies that have analyzed the regularizing benefits of auxiliary tasks for SER. Xia and Liu [8] combined the learning of emotional categories and emotional attributes. The primary task was the classification of emotional categories. The secondary task was either classification or regression of emotional attributes. Parthasarathy and Busso [7] proposed to jointly predict arousal, valence and dominance scores using an MTL framework, where recognizing one of the attributes was the primary task and recognizing the other two attributes were the secondary tasks. The MTL framework learned the inherent correlation between the various emotional attributes, leading to improvements over STL.
Similarly, Chang and Scherer [25] used arousal prediction as an auxiliary task for a valence classifier. Chen et al. [26] showed similar improvements in performance for the prediction of time-continuous emotional attributes. Their system jointly predicted arousal and valence scores, obtaining the best performance for the affect sub-task in the audio/visual emotion challenge (AVEC) in 2017 [27]. Le et al. [28] also used an MTL framework for time-continuous attribute recognition. Their framework trained classifiers by discretizing attribute scores into discrete classes using the k-means algorithm with $k \in \{4, 6, 8, 10\}$. The different classifiers were then learned together as multiple auxiliary tasks using an MTL framework (e.g., learning together a four-class problem and a six-class problem). Similarly, Lotfian and Busso [6] showed improvements for categorical emotion recognition by using an MTL framework for learning the dominant emotion (primary task) and secondary emotions also conveyed in the sentence (auxiliary task).

Previous studies have also considered using other auxiliary tasks to improve SER. Kim et al. [29] used gender and naturalness recognition as auxiliary tasks for emotion recognition. The naturalness task consisted of a binary classifier that determines whether the sentences were natural or acted recordings across different databases. Tao and Liu [9] used gender recognition and speaker identification as auxiliary tasks for classifying emotional categories on the USC-IEMOCAP corpus. Similarly, Zhao et al. [15] transferred age and gender attributes as auxiliary tasks to predict emotion attributes. Abdelwahab and Busso [30] used an auxiliary task for cross-corpus SER. The auxiliary task learned a common representation between the source and target domains using a domain adversarial neural network (DANN).

C. Ladder Networks

The idea of ladder networks was first proposed by Valpola [31].
This work showed the benefits of using lateral shortcut connections to aid deep unsupervised learning. Rasmus et al. [32], [33] further extended this idea to support supervised learning. Classification and regression tasks were added to the unsupervised reconstruction of inputs through a denoising autoencoder. Finally, Pezeshki et al. [34] studied the various components that affected the ladder network, noting that lateral connections between encoder and decoder and the addition of noise at every layer of the network greatly contributed to the improved performance of this framework.

The use of ladder networks for SER is appealing since the auxiliary task is unsupervised, so we can use data from the target domain without labels. This feature of the proposed approach is a key distinction between our work and most MTL studies, which use supervised auxiliary tasks. The closest study to our paper is the work of Huang et al. [35], which was simultaneously developed with our preliminary study [11]. They also proposed ladder networks for SER tasks. A key distinction between that study and our paper is that Huang et al. [35] only used the ladder network to learn feature representations, where the final classifier was a separate support vector machine (SVM). This two-step process is equivalent to training an autoencoder followed by a classifier. Instead, our proposed formulation jointly optimizes the unsupervised reconstruction and the supervised regression task in a single step. The proposed ladder network provides the final predictions for the emotional attribute without any additional regressor, which (1) creates better feature representations that are discriminative for the target task, and (2) allows our formulation to be trained as an end-to-end system (Sec. V-C).

III. PROPOSED METHODOLOGY

A. Motivation

As stated in Section II, data with emotional labels are limited.
Furthermore, data from the source domain (train set) is not guaranteed to have the same distribution as the target domain (test set). Therefore, most supervised frameworks trained on one corpus do not generalize well when tested across different tasks and corpora. Hence, there is a fundamental need to regularize architectures such that they generalize across different tasks. This study aims to increase the generalization of SER models with (1) unsupervised auxiliary tasks and (2) unlabeled data from the target domain.

Our motivations are based on solving unsupervised auxiliary tasks, which aid the primary emotion recognition task. First, we want to fully utilize available labeled data, which is expensive to annotate. To this end, we build an MTL framework (Section III-C) where we jointly learn the dependencies between multiple emotional attributes. The MTL framework regularizes our architecture, but it still demands the utilization of expensive data labeled with emotional information. While labeling audio data for emotion is expensive, unlabeled data is more easily available. The amount of unlabeled data is often greater than the amount of labeled data. The unlabeled data from the target domain can be used to reduce the gap between the source and target domains. We propose to use ladder networks (Section III-B) to effectively leverage unlabeled data. Collectively, the MTL approach combined with the ladder network creates a semi-supervised architecture that effectively generalizes to new domains. This study shows that the powerful representations created by our model can be used across emotional corpora to achieve state-of-the-art SER performance.

B. Ladder Network for Speech Emotion Recognition

Ladder networks, at their core, combine an unsupervised auxiliary task with a supervised classifier or regressor. Using an autoencoder for supervised tasks is not new. Traditionally, the autoencoder is trained separately from the supervised task, where its goal is to learn feature representations that are useful for reconstructing the input. However, the information needed to reconstruct the input does not necessarily create a discriminative representation for the classification or regression task. Therefore, it is important to combine the training of the autoencoder with the supervised task, which is a key feature of the ladder networks.

[Figure 1: block diagram with three columns labeled Noisy Encoder, Decoder and Clean Encoder.]
Fig. 1. Ladder network architecture using auxiliary tasks for emotion attribute prediction. The network has a noisy encoder, a decoder and a clean encoder used for inference. The ladder connections connect the noisy encoder with the decoder.

Figure 1 illustrates a conceptual ladder network. A noisy version of the encoder is created by adding noise at every layer of the encoder. The goal of the autoencoder is to reconstruct the feature representations at the input and intermediate layers. The core concept of the autoencoder in the ladder network involves skip connections between corresponding encoder and decoder layers. Effectively, these skip connections provide a shortcut between the decoder and encoder, bypassing higher layers of the encoder. Therefore, the top layers of the encoder can learn representations better suited towards the primary discriminative task. This is a fundamental difference with simple autoencoders. Note that the ladder network combines the supervised task with an unsupervised auxiliary task. Therefore, the true benefit of the architecture is when it is used in a semi-supervised fashion.
The rest of this section explains in detail the encoder and decoder of the ladder network.

Encoder: The encoder consists of a multilayer perceptron (MLP). A zero-mean Gaussian noise with variance $\sigma^2$ is added to each layer of the MLP ($\mathcal{N}(0, \sigma^2)$ in Fig. 1). The decoder is constructed to denoise the noisy latent representations $\tilde{z}$ at every layer. Therefore, a clean copy of the encoder path is built to get the targets $z$ for reconstruction (clean encoder in Fig. 1). Since the architecture reconstructs intermediate layers, $\hat{z}$, a trivial solution to minimize the cost is $\hat{z} = z = \text{constant}$. To avoid this trivial solution, intermediate layers are normalized using batch normalization. Batch normalization is performed on all layers except the input layer. The scaling and bias values are learned as trainable parameters and applied before the activation. Besides encoding the representations for reconstruction, the final layer of the encoder, $\tilde{z}^{(L)}$, is used for training the supervised regression task, which in our case is the prediction of emotional attributes. The noisy representation $\tilde{z}$ further regularizes the network. The clean representations $z$ are used during inference.

Decoder: Similar to the encoder, the decoder of the ladder network is an MLP (decoder in Fig. 1). The layers of the decoder network mirror the layers of the encoder. The decoder is constructed to denoise the noisy representations of the encoder. The denoising process combines top-down information from the decoder ($\hat{z}^{(l+1)}$) with lateral information from the corresponding encoder layer ($\tilde{z}^{(l)}$). With the lateral connections, the network passes the information needed for denoising the latent representations, bypassing the top layers of the encoder, which can, instead, provide abstract discriminative information for the supervised regression task.
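The encoder path described above can be illustrated with a small sketch. This is a minimal, pure-Python illustration written for this text, not the authors' implementation: the function names (`batch_norm`, `encoder_layer`), the exact order of the scale and bias, and the toy dimensions are assumptions. Calling the layer with `noise_std=0.0` gives the clean path (targets $z$), while a positive `noise_std` gives the noisy path ($\tilde{z}$).

```python
import math
import random


def batch_norm(rows):
    """Normalize every unit to zero mean and unit variance over the mini-batch."""
    n, dims = len(rows), len(rows[0])
    out = [[0.0] * dims for _ in range(n)]
    for j in range(dims):
        col = [row[j] for row in rows]
        mean = sum(col) / n
        var = sum((v - mean) ** 2 for v in col) / n
        for i in range(n):
            out[i][j] = (col[i] - mean) / math.sqrt(var + 1e-8)
    return out


def encoder_layer(batch, weights, gamma, beta, noise_std=0.0, rng=None):
    """Return (z, h) for one encoder layer (hypothetical helper).

    z: batch-normalized latent, with Gaussian noise added when noise_std > 0.
    h: ReLU activation after the trainable scale (gamma) and bias (beta).
    """
    rng = rng or random.Random(0)
    n_out = len(weights[0])
    # Linear projection of each input vector.
    pre = [[sum(x[k] * weights[k][j] for k in range(len(x))) for j in range(n_out)]
           for x in batch]
    z = batch_norm(pre)
    if noise_std > 0.0:
        # Noisy path: zero-mean Gaussian noise with standard deviation noise_std.
        z = [[v + rng.gauss(0.0, noise_std) for v in row] for row in z]
    h = [[max(0.0, gamma[j] * v + beta[j]) for j, v in enumerate(row)] for row in z]
    return z, h
```

In a full ladder network, the same weights would be shared by the clean and noisy passes, and the clean latents would serve only as reconstruction targets for the decoder.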
With these lateral connections, an unsupervised auxiliary cost is added without sacrificing the performance of the architecture for the supervised task. Different denoising functions, $g(\cdot)$, can model different probability distributions of the latent variables [32], [34]. Previous studies have shown that a single layer MLP combining top decoder layers and lateral encoder layers works the best for most tasks. Our preliminary experiments concluded that the same observation also holds for SER tasks. The denoising function $g(\cdot)$ takes as input $u$, $\tilde{z}$ and $u \odot \tilde{z}$ (layer indices are dropped for clarity), where $u$ is a batch normalized projection of the decoder layer above, $\hat{z}^{(l+1)}$, $\tilde{z}$ is the corresponding noisy representation, and $u \odot \tilde{z}$ is an element-wise product between the decoder and encoder elements. The element-wise multiplication assumes that the latent variables are conditionally independent and modulates the encoder representation with the previous decoder layer ($\hat{z}^{(l+1)}$). The overall loss for the ladder network is given by

$$C_{Ladder} = C_c + \sum_{l} \lambda_l C_d^{(l)} \qquad (1)$$

where $C_d^{(l)}$ is the reconstruction loss at layer $l$ and $\lambda_l$ is a hyper-parameter that weighs the reconstruction loss at that layer. The supervised loss for predicting the emotional attributes, $C_c$, is added when labeled samples are available. Section IV-D gives the implementation and experimental setup used to train the regressor using the proposed ladder network framework.

C. Multitask Learning for Emotion Recognition

While the ladder network architecture makes efficient use of unlabeled samples to regularize the models, the generalization of the models can also be improved by better utilizing labeled samples. For the prediction of emotional attributes, one appealing method is to jointly learn multiple emotional attributes. This procedure can be effectively done through MTL with shared and attribute-dependent layers [7].
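As a brief aside on the ladder cost of Section III-B, the sketch below shows one way the denoising step and Eq. (1) could be written. It is a simplified illustration under stated assumptions: `denoise` uses a per-unit linear combination of $u$, $\tilde{z}$ and $u \odot \tilde{z}$, which is only one possible reading of the "single layer MLP" combinator, and all names and parameters are hypothetical. The reconstruction loss is the mean squared error, and the supervised cost is added only when labels are available.

```python
def denoise(u, z_noisy, w_u, w_z, w_uz, bias):
    """Per-unit combination of top-down (u) and lateral (z_noisy) information.

    Assumed form: z_hat_i = w_u*u_i + w_z*z_i + w_uz*(u_i * z_i) + b_i.
    """
    return [w_u[i] * u[i] + w_z[i] * z_noisy[i] + w_uz[i] * u[i] * z_noisy[i] + bias[i]
            for i in range(len(u))]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def ladder_cost(z_clean_layers, z_hat_layers, lambdas, supervised_cost=None):
    """Eq. (1): supervised cost (if labels exist) plus weighted reconstruction costs."""
    recon = sum(lam * mse(z_hat, z)
                for lam, z_hat, z in zip(lambdas, z_hat_layers, z_clean_layers))
    if supervised_cost is None:  # unlabeled mini-batch: reconstruction only
        return recon
    return supervised_cost + recon
```

For an unlabeled mini-batch, `ladder_cost` reduces to the reconstruction term, which is what allows the same network to be trained on recordings without emotional labels.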
Figure 2 illustrates an MTL network with shared hidden layers that jointly predicts arousal, valence and dominance scores. The overall loss for the MTL architecture is given by

$$C_{MTL} = \alpha C_{aro} + \beta C_{val} + (1 - \alpha - \beta) C_{dom}, \qquad (2)$$

where $C_{aro}$, $C_{val}$ and $C_{dom}$ are individual losses for the prediction of arousal, valence and dominance, respectively. The arousal and valence losses are weighted by the hyper-parameters $\alpha$ and $\beta$, respectively, and the dominance loss by $1 - \alpha - \beta$, with $\alpha, \beta \in [0, 1]$ and $\alpha + \beta \le 1$. Particular solutions of this formulation are the STL frameworks for arousal ($\alpha = 1$, $\beta = 0$), valence ($\alpha = 0$, $\beta = 1$) and dominance ($\alpha = 0$, $\beta = 0$).

[Figure 2: network diagram with shared hidden layers and separate outputs for arousal, valence and dominance.]
Fig. 2. Multitask learning (MTL) architecture to jointly predict arousal, valence and dominance [7]. The ladder network architecture can be implemented with MTL, combining supervised and unsupervised auxiliary tasks.

An interesting extension of the proposed ladder network formulation for SER is combining the unsupervised and supervised auxiliary losses. We achieve this goal by replacing $C_c$ in Equation 1 with $C_{MTL}$ from Equation 2:

$$C_{Lad+MTL} = \alpha C_{aro} + \beta C_{val} + (1 - \alpha - \beta) C_{dom} + \sum_{l} \lambda_l C_d^{(l)} \qquad (3)$$

In Section V, we evaluate the implementation of the ladder network with STL and MTL.

IV. EXPERIMENTAL SETUP

A. Datasets

This study uses multiple datasets for the different experiments in Section V. The primary corpus is the MSP-Podcast (Version 1.2) [36], used for all the within corpus experiments (Sec. V-A). The MSP-Podcast contains speech collected from online downloadable audio shows, covering various topics such as politics, sports, entertainment, and motivation talks. Therefore, the recordings contain naturalistic speech spanning the emotional spectrum observed during natural conversations. We use a diarization toolkit which identifies segments from distinct speakers.
The podcast conversations are sequentially analyzed by automatic algorithms to remove music, silence portions and noisy recordings. We also remove segments with overlapped speech. The selected segments contain a single speaker with duration between 2.75s and 11s. To balance the emotional content of the corpus, we retrieve samples that we believe are emotional following the idea proposed in Mariooryad et al. [37]. Overall, the corpus contains 50 hours of speech (29,440 speaking turns), which were annotated with emotional labels using Amazon Mechanical Turk. The perceptual evaluation used a modified version of the crowdsource-based protocol presented in Burmania et al. [38] to track in real-time the performance of the annotators. The data was annotated for both categorical emotions as well as emotional attributes. This study focuses on the emotional attributes. Each speaking turn was annotated on a scale from one to seven by at least five raters for arousal (1 - very calm, 7 - very active), valence (1 - very negative, 7 - very positive) and dominance (1 - very weak, 7 - very strong). We manually identified speaking turns belonging to 346 speakers in the MSP-Podcast database. The test set contains data from 50 speakers (7,341 speaking turns). The development set contains data from 20 speakers (3,753 speaking turns). The training set has the remaining labeled speaking turns (18,346 segments). This data partition aims to create speaker independent sets for the train, development and testing sets. Besides the labeled data, the MSP-Podcast also contains more than 300 hours of unlabeled data (175,196 segments), corresponding to the pool of clean segments identified from the podcasts, which have not been annotated. This study uses these segments to train the ladder networks (Sec. III-B) in a semi-supervised fashion. Section V-A presents the results of the experiments conducted on the MSP-Podcast corpus.
Besides the MSP-Podcast corpus, we use two other databases for cross corpora evaluations (Sec. V-B). The first database is the USC-IEMOCAP corpus [39], which contains interactions between pairs of actors improvising scenarios. The database contains 10,527 speaking turns from 10 actors appearing in five dyadic sessions. The speech segments were annotated for arousal, valence and dominance by two raters on a five-point Likert scale. More information about this corpus is provided in Busso et al. [40]. We also use the MSP-IMPROV corpus [41], which contains interactions between pairs of actors improvising scenarios. In addition to the improvised scenarios, the dataset also contains the interactions between the actors during the breaks, resulting in more naturalistic data. The MSP-IMPROV corpus was annotated with emotional labels using Amazon Mechanical Turk using the approach proposed by Burmania et al. [38]. Each sentence was annotated for arousal, valence and dominance by five or more raters using a five-point Likert scale. More information about this corpus is provided in Busso et al. [41].

B. Acoustic Features

This study predominantly uses the acoustic features introduced for the paralinguistic challenge at Interspeech 2013 [42]. These features, which are referred to as the ComParE feature set, are extracted in a two-step procedure. First, LLDs are extracted over 20 millisecond frames (100 fps). These LLDs include loudness, mel-frequency cepstral coefficients (MFCCs), fundamental frequency (F0), spectral flux, spectral slope, jitter and shimmer. Second, segment-level features are calculated over the LLDs, leading to a fixed dimensional feature vector. These statistics are referred to as high-level descriptors (HLDs) and include various functionals such as the arithmetic and geometric means, standard deviations, peak to peak distances and rise and fall times. The ComParE feature set contains 130 LLDs (65 LLDs + 65 delta) and 6,373 HLDs.
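The two-step extraction can be illustrated with a toy sketch. This is not the ComParE extractor: the function `functionals` is hypothetical and computes only three statistics (mean, standard deviation, peak-to-peak distance) per LLD track. It shows how frame-level sequences of any length are mapped to a vector of fixed dimension.

```python
import math


def functionals(lld_frames):
    """Map a variable-length list of frames (each a list of LLD values)
    to a fixed-length vector: [mean, std, peak-to-peak] per LLD."""
    n_lld = len(lld_frames[0])
    feats = []
    for j in range(n_lld):
        track = [frame[j] for frame in lld_frames]  # one LLD over time
        mean = sum(track) / len(track)
        std = math.sqrt(sum((v - mean) ** 2 for v in track) / len(track))
        feats.extend([mean, std, max(track) - min(track)])
    return feats


# Two segments of different duration yield vectors of the same size.
short_segment = [[1.0, 10.0], [3.0, 10.0]]
long_segment = [[1.0, 9.0], [2.0, 8.0], [3.0, 7.0], [4.0, 6.0], [5.0, 5.0]]
short_vector = functionals(short_segment)
long_vector = functionals(long_segment)
```

With two LLDs and three functionals, both vectors have six entries regardless of the number of frames in the segment.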
Most databases are annotated at the segment-level with a single annotation capturing the emotional content of the entire segment. Since speech segments have variable lengths, most emotion recognition algorithms have to deal with variable length inputs. The HLDs alleviate this problem by creating a fixed dimension input regardless of the length of the sequence. Previous studies have shown the benefits of HLDs for SER tasks [7], [43].

C. System Description

This study uses two baselines and different implementations of the proposed approach to analyze the performance of the ladder network architecture. All regression systems are trained on the train set, optimizing their performance on the development set. The best system per condition in the development set is then evaluated on the test set, where we report the results.

The first baseline uses the STL framework, which is the conventional method for the regression of emotional attributes. The STL framework considers only one emotional attribute at a time, creating separate models for arousal, valence and dominance. This approach is referred to as STL. The second baseline uses the MTL framework proposed by Parthasarathy and Busso [7] (Section III-C). This system jointly predicts all three emotional attributes, but it only uses supervised auxiliary tasks without the ladder network. It is expected that the MTL systems should provide a stronger baseline compared to the STL systems, since they use supervised auxiliary tasks. This approach is referred to as MTL.

The ladder network architecture, denoted with Lad, is studied using four implementations grouped into two settings. The first setting only uses the labeled portion of the corpus. The ladder network is implemented as a supervised problem.
We denote this setting by adding the term L to the name of the system. The second setting uses the entire corpus containing the labeled and unlabeled portions of the corpus. The ladder network is implemented as a semi-supervised problem. For training with the unlabeled set, we alternate between a mini-batch of unlabeled samples and a mini-batch of labeled samples. We denote this setting by adding the term UL to the name of the system. For both settings, we implement the ladder network with either STL (Eq. 1) or MTL (Eq. 3). We denote the corresponding implementation by adding the term STL or MTL to the name of the system. For example, the ladder network trained with labeled and unlabeled data using STL is denoted as Lad + UL + STL. We expect that combining MTL with the ladder network should result in improved performance as we use both supervised and unsupervised auxiliary tasks to aid our primary task of predicting emotional attributes.

D. System Architecture

The baselines and the proposed ladder network models are implemented with feed-forward dense networks using sentence-level features as inputs. The dense networks contain two hidden layers with 256 nodes in each layer. The activation of the neurons in each layer corresponds to the rectified linear unit (ReLU). The input to the dense network is a 6,373D feature vector containing the HLDs for a speaking turn (Sec. IV-B). The output is the predicted value of the emotional attribute. The features and labels are normalized using z-normalization, with the mean and standard deviation calculated over the train set. The models are trained with a learning rate of 5e−5 for 100 epochs. The model with the best performance on the development set across epochs is evaluated on the test set. For the architectures of the STL and MTL baselines, we include a dropout of p = 0.5 between the input and the first hidden layer, and between the first and second hidden layers.
This setting provides the best regularization on the development set. The hyper-parameters α and β of the MTL methods are optimized separately for each emotional attribute using the development set. Therefore, we have three systems, one for each attribute, with different combinations of α and β. For the ladder network, we only use dropout between the input layer and the first hidden layer, following our previous work [11]. The dropout is set to p = 0.1. Since the ladder network is also regularized by unsupervised auxiliary tasks, reducing the influence of dropout led to better performance on the development set. For the noisy encoder (Fig. 1), we add Gaussian noise with variance σ² = 0.3 to the encoder. The hyper-parameter for the reconstruction loss is set to λ_l = 1 (Eqs. 1 and 3). A preliminary search on the development set showed no significant difference between λ_l = 1, λ_l = 0.1 and λ_l = 10. We do not optimize the value of λ_l to reduce the computational resources needed to train the system, acknowledging that better results may be possible by conducting an exhaustive search for this parameter over the development set. The mean squared error (MSE) function is used to measure the reconstruction loss.

All our models are trained and evaluated using the concordance correlation coefficient (CCC). Maximizing the CCC increases the Pearson's correlation between the true and predicted values, while reducing the difference between their means. Previous studies have shown the benefits of training with CCC as the objective function over the MSE [11], [44], [45]. All neural networks in this study are trained using the NADAM optimizer [46].

V. EXPERIMENTAL RESULTS

A. Within Corpus Results

The experimental evaluation in this section analyzes the power of the proposed ladder network systems for within-corpus experiments in the MSP-Podcast corpus.
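Since every result in this section is reported as a CCC, the following minimal sketch shows how the metric can be computed with its standard definition, 2·cov(x, y) / (var(x) + var(y) + (mean(x) − mean(y))²). The function name `ccc` and the toy values are illustrative assumptions, not the authors' implementation.

```python
def ccc(y_true, y_pred):
    """Concordance correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    var_t = sum((t - mean_t) ** 2 for t in y_true) / n
    var_p = sum((p - mean_p) ** 2 for p in y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p)
              for t, p in zip(y_true, y_pred)) / n
    # The squared mean difference penalizes predictions that are biased,
    # even when they are perfectly correlated with the labels.
    return 2.0 * cov / (var_t + var_p + (mean_t - mean_p) ** 2)


labels = [1.0, 2.0, 3.0]
shifted = [2.0, 3.0, 4.0]    # Pearson correlation is 1, but the mean is off by 1

print(ccc(labels, labels))   # identical sequences
print(ccc(labels, shifted))  # lower than 1 because of the offset in the means
```

The second call illustrates why the CCC is stricter than the Pearson's correlation: a constant offset leaves the correlation unchanged but lowers the CCC.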
The systems are trained and tested on the MSP-Podcast corpus using the ComParE feature set (Section IV-B). We analyze the performance in terms of CCC for arousal, valence and dominance. In this section, we report and compare the performance of our models on the development and test sets to evaluate the generalization of our approach (Table I). The results on the development set correspond to the best performance, per model, across epochs obtained on this set. We compare the CCC scores of the proposed models against the baselines, asserting whether the differences in performance are statistically significant using the Fisher Z-transformation test (one-tailed z-test, p-value < 0.05).

TABLE I
Within-corpus evaluation on the MSP-Podcast corpus. The results correspond to the CCC values achieved by different implementations of the ladder network architecture on the development and test sets. (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline.)

| Task | Development Arousal | Development Valence | Development Dominance | Test Arousal | Test Valence | Test Dominance |
|---|---|---|---|---|---|---|
| STL | 0.773 | 0.491 | 0.713 | 0.743 | 0.312 | 0.670 |
| MTL | 0.782 | 0.509 | 0.726 | 0.745 | 0.293 | 0.671 |
| Lad + L + STL | 0.793 •∗ | 0.489 | 0.732 • | 0.765 •∗ | 0.303 | 0.678 |
| Lad + L + MTL | 0.795 •∗ | 0.497 | 0.736 • | 0.763 •∗ | 0.293 | 0.690 •∗ |
| Lad + UL + STL | 0.792 •∗ | 0.489 | 0.733 • | 0.770 •∗ | 0.301 | 0.700 •∗ |
| Lad + UL + MTL | 0.792 •∗ | 0.489 | 0.733 • | 0.770 •∗ | 0.301 | 0.700 •∗ |
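The significance test named above can be sketched as follows. This is a minimal sketch under explicit assumptions: it treats the two scores as independent correlation coefficients and applies the textbook Fisher z-transformation, which may differ from the exact procedure used in this study. The function name `fisher_z_one_tailed` is hypothetical, and the sample size of 5,000 is a placeholder, not the size of the actual test set.

```python
import math


def fisher_z_one_tailed(r_model, r_base, n_model, n_base):
    """One-tailed z-test for r_model > r_base after the Fisher transformation.

    Returns the z statistic and the p-value P(Z > z) under the null hypothesis.
    """
    z_model = math.atanh(r_model)   # Fisher transformation of each coefficient
    z_base = math.atanh(r_base)
    std_err = math.sqrt(1.0 / (n_model - 3) + 1.0 / (n_base - 3))
    z = (z_model - z_base) / std_err
    p_value = 0.5 * math.erfc(z / math.sqrt(2.0))  # upper tail of N(0, 1)
    return z, p_value


# Test-set arousal scores from Table I; n = 5000 is a hypothetical sample size.
HYPOTHETICAL_N = 5000
z, p = fisher_z_one_tailed(0.770, 0.743, HYPOTHETICAL_N, HYPOTHETICAL_N)
print(f"z = {z:.2f}, significant at 0.05: {p < 0.05}")
```

Because the standard error shrinks with the number of samples, the same difference in CCC can be significant on a large test set and not significant on a small one.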
On the development set, Table I shows that the best performing systems for ladder network architectures are significantly better than the STL baseline for arousal and dominance. For these emotional attributes, the best performance is achieved by the ladder network implemented with MTL with only labeled data. The results on the test set are very consistent with the trends observed in the development set, demonstrating the generalization of the models (Table I). For arousal, the results of the ladder network frameworks are statistically significantly better than the results achieved by both baseline methods. For dominance, the ladder network architectures trained with labeled and unlabeled data lead to statistically significant improvements over both baseline frameworks. The frameworks trained with unlabeled data give the best performance for both arousal and dominance. Under this setting, the ladder network exploits the abundant unlabeled data and generalizes better to unseen data. Table I shows that for within-corpus evaluations, the baseline methods achieve better results for valence. We will show in Section V-B that this is not the case for cross-corpus evaluations, where our proposed ladder network architectures achieve better performance than the baseline methods for all the emotional attributes.

We calculate the average performance between the development and test evaluations for each of the methods, to visualize the general trends in the results. Figure 3 shows the results, where statistically significant improvements over the baseline methods are denoted with symbols on top of the bars. Overall, we achieve relative gains of 3.0% for arousal, and 3.5% for dominance using the proposed architectures over the STL method.

Fig. 3. Within-corpus evaluation on the MSP-Podcast corpus using sentence-level features (HLDs). The panels report the mean CCC values obtained in the development and test sets for (a) arousal, (b) valence and (c) dominance (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline).

B. Cross Corpus Results

This study also explores the generalization of the proposed ladder network with cross-corpus experiments. Specifically, we train the models on the MSP-Podcast corpus, maximizing performance on its development set. The models are then tested on either the USC-IEMOCAP corpus or the MSP-IMPROV corpus. We compare the results with within-corpus evaluations using the STL framework, where the models are trained and tested with data from the same corpus (Within-corpus (WC) Baseline in Table II). For the within-corpus evaluation, the USC-IEMOCAP and MSP-IMPROV corpora are divided into speaker-independent partitions. The results are reported across all the test partitions. For consistency, the results for the ladder networks are also estimated for each partition, reporting the average across folds.

TABLE II
Cross-corpus evaluation where the models are trained on the MSP-Podcast corpus and tested on either the USC-IEMOCAP or the MSP-IMPROV corpora. The table reports the average CCC values across folds and the standard deviation. WC Baseline corresponds to the within-corpus baseline. (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline.)

IEMOCAP

| Task | Arousal | Valence | Dominance |
|---|---|---|---|
| STL | 0.560 ± 0.122 | 0.135 ± 0.070 | 0.378 ± 0.103 |
| MTL | 0.584 ± 0.078 | 0.144 ± 0.067 | 0.370 ± 0.097 |
| Lad + L + STL | 0.590 ± 0.074 •∗ | 0.154 ± 0.052 • | 0.391 ± 0.107 •∗ |
| Lad + L + MTL | 0.589 ± 0.065 • | 0.141 ± 0.056 | 0.408 ± 0.103 •∗ |
| Lad + UL + STL | 0.603 ± 0.043 •∗ | 0.092 ± 0.071 | 0.476 ± 0.076 •∗ |
| Lad + UL + MTL | 0.623 ± 0.036 •∗ | 0.235 ± 0.056 •∗ | 0.441 ± 0.086 •∗ |
| WC Baseline | 0.661 ± 0.051 | 0.487 ± 0.044 | 0.512 ± 0.055 |

MSP-IMPROV

| Task | Arousal | Valence | Dominance |
|---|---|---|---|
| STL | 0.471 ± 0.112 | 0.235 ± 0.078 | 0.440 ± 0.134 |
| MTL | 0.442 ± 0.116 | 0.231 ± 0.082 | 0.449 ± 0.128 |
| Lad + L + STL | 0.490 ± 0.108 ∗ | 0.287 ± 0.075 •∗ | 0.436 ± 0.130 |
| Lad + L + MTL | 0.480 ± 0.107 ∗ | 0.293 ± 0.073 •∗ | 0.464 ± 0.123 •∗ |
| Lad + UL + STL | 0.547 ± 0.094 •∗ | 0.349 ± 0.087 •∗ | 0.463 ± 0.096 •∗ |
| Lad + UL + MTL | 0.547 ± 0.094 •∗ | 0.328 ± 0.091 •∗ | 0.463 ± 0.096 •∗ |
| WC Baseline | 0.599 ± 0.112 | 0.408 ± 0.090 | 0.471 ± 0.098 |

We train the ladder network architectures introduced in Section IV-C using labeled and unlabeled data. For the labeled setting, we use samples only from the MSP-Podcast corpus. For the unlabeled setting (UL in Table II), we assume we have access to the samples from the target corpus. We include the target corpus for the unsupervised reconstruction using the autoencoder. Notice that we use only the speech recordings, without the emotional labels. Since the emotional attributes in the MSP-Podcast and the target corpora are annotated on different scales, we transform the attribute scores of the MSP-Podcast corpus to match the scales of the target corpora using an affine transformation.
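The affine transformation of the labels can be sketched as below. The exact mapping used in this study is not specified in this section, so the sketch assumes a simple endpoint-matching map; the function name `affine_rescale` and the source range of 1 to 7 are assumptions made for illustration, while the target range of 1 to 5 follows the five-point scales described for the target corpora.

```python
def affine_rescale(score, src_min, src_max, dst_min, dst_max):
    """Map a score from [src_min, src_max] to [dst_min, dst_max] linearly."""
    scale = (dst_max - dst_min) / (src_max - src_min)
    return dst_min + scale * (score - src_min)


# Assumed ranges: source labels in [1, 7], target labels in [1, 5].
SRC_RANGE = (1.0, 7.0)   # hypothetical source scale
DST_RANGE = (1.0, 5.0)   # five-point target scale

source_scores = [1.0, 4.0, 7.0]
target_scores = [affine_rescale(s, *SRC_RANGE, *DST_RANGE) for s in source_scores]
print(target_scores)  # endpoints and midpoint of the source scale after mapping
```

An affine map preserves the ordering and the relative spacing of the scores, so it changes the scale of the regression targets without altering the Pearson's correlation between labels and predictions.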
We report the mean and standard deviation over all the test partitions. We compare the CCC values obtained by the ladder networks with the results from the baselines, testing their significance with the one-tailed, matched-pair t-test, asserting significance at p-value < 0.05. Table II describes the results for the cross-corpus experiments. Figures 4 (USC-IEMOCAP) and 5 (MSP-IMPROV) illustrate the mean performance across test partitions.

First, we discuss the results for the USC-IEMOCAP database. Under the fully labeled setting, the ladder network systems achieve significant improvements over the STL baseline for arousal and dominance. Additionally, the systems significantly improve the performance for dominance compared to the MTL baseline. For valence, we achieve significant gains over the STL baseline with the Lad + L + STL model. With unlabeled data from the USC-IEMOCAP corpus (UL setting), we obtain significant gains over the baselines. In particular, the Lad + UL + MTL system performs significantly better than both baselines for all three emotional attributes. We observe relative gains up to 11.3% for arousal, 74.1% for valence, and 25.9% for dominance over the STL baseline (Figure 4). The CCC values for these systems are closer to the results obtained by the within-corpus baseline. The significant gains reported in this section show the potential of the ladder network architecture, especially when unlabeled data from the target corpus is available.
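The relative gains quoted above follow from the mean CCC values in Table II. The short sketch below reproduces the arithmetic, taking for each attribute the best ladder network result in the UL setting on USC-IEMOCAP; the helper name `relative_gain` is an illustrative assumption.

```python
def relative_gain(model_ccc, baseline_ccc):
    """Relative improvement of a model over a baseline, in percent."""
    return 100.0 * (model_ccc - baseline_ccc) / baseline_ccc


# Mean CCC values from Table II (USC-IEMOCAP).
stl_baseline = {"arousal": 0.560, "valence": 0.135, "dominance": 0.378}
best_with_ul = {"arousal": 0.623,    # Lad + UL + MTL
                "valence": 0.235,    # Lad + UL + MTL
                "dominance": 0.476}  # Lad + UL + STL

for attribute, baseline in stl_baseline.items():
    gain = relative_gain(best_with_ul[attribute], baseline)
    print(f"{attribute}: {gain:.2f}% relative gain over STL")
```

The large relative gain for valence reflects the low STL baseline (0.135) rather than a high absolute CCC, which is why the absolute values in Table II should be read alongside the percentages.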
We observe similar results in the evaluation on the MSP-IMPROV database, where most of the architectures using ladder networks achieve significant improvements in the CCC values over the STL and MTL baselines. For valence, the proposed architectures perform significantly better than both baselines. For arousal and dominance, the use of unlabeled data leads to statistically significant improvements over the STL and MTL baselines. Figure 5 shows that the inclusion of unlabeled data from the target corpus greatly improves the performance of the ladder network architectures, achieving CCC scores that are closer to the within-corpus baseline. Under this setting, the proposed systems are significantly better than the baselines for all three emotional attributes. Overall, the Lad + UL + MTL architecture achieves relative gains of 16.1% for arousal, 40% for valence, and 5.5% for dominance over the STL baseline. These results demonstrate the real benefits of the ladder network architecture, which generalizes better in cross-corpus SER problems.

Fig. 4. Cross-corpus evaluation, when the models are tested on the USC-IEMOCAP corpus. The panels report the average CCC values across folds for (a) arousal, (b) valence and (c) dominance (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline).

Fig. 5. Cross-corpus evaluation, when the models are tested on the MSP-IMPROV corpus. The panels report the average CCC values across folds for (a) arousal, (b) valence and (c) dominance (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline).

C. Results with Frame-Level Features

Conventionally, SER problems are formulated using sentence-level features over short speech segments. Previous studies often rely on statistics estimated over LLDs, where popular examples include the feature sets proposed for the paralinguistic challenges at Interspeech [42], [47]. An alternative approach is to directly use a sequence of features extracted at the frame level over short segments (e.g., 40 ms). We refer to these features as frame-level features.

With the advancements of DNNs, many recent techniques for SER have focused on frame-level dynamic features. A popular option among these frameworks is the use of CNNs to learn high-level feature representations from the frame-level representations. Cummins et al. [48] borrowed successful CNN architectures from the computer vision domain by treating speech spectrograms as images. Mao et al. [24] performed SER in a two-step approach using a CNN architecture on frame-level features. The first step learned features from unlabeled data using a sparse autoencoder. These features were then used for the recognition task. Trigeorgis et al. [44] proposed a CNN architecture to perform end-to-end SER that took raw speech waveforms as inputs. They showed high correlation between the learned features and hand-crafted features which have been used in previous works (e.g., loudness, mean fundamental frequency). Neumann and Vu [49] proposed an attention-based convolutional neural network for emotion recognition. An attention layer was applied to the final feature representation from the CNN, performing a weighted pooling of the features from different time frames. Yang and Hirschberg [50] predicted arousal and valence using CNNs trained on spectrogram inputs. Finally, Aldeneh and Provost [51] proposed to train 1-D CNNs on mel-filter bank (MFB) energies to capture regional saliency for emotion recognition. Following these previous works, our study also examines the effect of our system using CNNs on frame-level features, demonstrating that the proposed ladder network architecture can also be implemented with these features.

Most previous frameworks use either frame-level features (e.g., MFB) or audio waveforms to learn discriminative features for the task at hand. Such methods enable end-to-end learning, where the features and the classification or regression tasks are jointly learned during training. Following this formulation, this study explores the use of the proposed ladder networks with frame-level features. We consider two alternative frame-level features: (1) the LLDs of the ComParE feature set (65D vector; see Sec. IV-B), and (2) MFB energies. Similar to previous studies, we use n = 40 bands for the MFB [51]. These models are compared with systems trained with HLDs. Figure 6 shows the proposed CNN-based architecture for frame-based features. The input to the CNN is a 65D × T matrix (ComParE LLD) or a 40D × T matrix (MFB), where T is the time dimension.
The CNN architecture consists of four convolutional layers followed by two fully connected (FC) layers and a linear output layer. The convolutional layers perform 1D convolutions along the time axis with the frame-level features as the inputs for the first convolutional layer. We use a 1D max pooling layer after every convolutional layer to sequentially reduce the dimension of the time axis. We flatten the outputs from the final convolutional layer before passing them to the FC layers. While the convolutional layers can deal with variable length sequences, the subsequent FC layers require a fixed length input. Therefore, we fix T at 1000, which corresponds to 10 seconds of speech (100 fps). We use this value, since most speech segments in the different datasets used in this study are shorter than 10 seconds. Segments longer than 10 seconds are truncated. Segments shorter than 10 seconds are padded with zeros.

Fig. 6. CNN architecture to predict emotional attributes with frame-level features (LLDs or MFB). The architecture contains four blocks, each with a 1D convolutional layer followed by a 1D max pooling layer. After flattening the output of the last block, the network includes two fully connected layers and the output prediction layer (ks: kernel size, ps: pool size and fc: fully connected). Layer sizes: input 1000 × 65 (LLD) or 1000 × 40 (MFB); Conv1 (ks = 5) 1000 × 128; maxpool (ps = 4) 250 × 128; Conv2 (ks = 5) 250 × 128; maxpool (ps = 4) 63 × 128; Conv3 (ks = 5) 63 × 256; maxpool (ps = 4) 16 × 256; Conv4 (ks = 5) 16 × 256; maxpool (ps = 4) 4 × 256; flatten 1024; fc1 256; fc2 256; output 1.

The analysis in this section includes only within-corpus experiments on the MSP-Podcast corpus. All the parameters for the CNN architecture are optimized on the development set of the MSP-Podcast corpus. Training ladder networks with frame-level features is computationally expensive. To ease this process, we impose two constraints on the ladder networks trained with frame-level features. First, the reconstruction costs are implemented only on the two fully connected layers after the flattening layer (i.e., layers fc1 and fc2 in Fig. 6). This network is similar to the τ network suggested by Valpola et al. [31]. Second, we do not use the entire unlabeled portion of the corpus in every epoch. Instead, we use the same number of unlabeled and labeled samples for every epoch, randomly selecting 29,440 unlabeled samples in every epoch. The STL and MTL baselines are also implemented with CNNs.

TABLE III
Evaluation of ladder network with frame-level features. The results correspond to within-corpus evaluations using the MSP-Podcast corpus. The table reports CCC for different architectures using CNNs trained with either LLDs or MFB. (• indicates that one model is significantly better than the STL baseline; ∗ indicates that one model is significantly better than the MTL baseline.)

| Task | LLD-CNN Arousal | LLD-CNN Valence | LLD-CNN Dominance | MFB-CNN Arousal | MFB-CNN Valence | MFB-CNN Dominance |
|---|---|---|---|---|---|---|
| STL | 0.756 | 0.244 | 0.682 | 0.733 | 0.204 | 0.659 |
| MTL | 0.759 | 0.223 | 0.684 | 0.738 | 0.254 • | 0.659 |
| Lad+STL+L | 0.768 • | 0.274 •∗ | 0.687 | 0.744 | 0.200 | 0.659 |
| Lad+MTL+L | 0.769 • | 0.274 •∗ | 0.681 | 0.741 | 0.200 | 0.659 |
| Lad+STL+UL | 0.769 • | 0.279 •∗ | 0.687 | 0.743 | 0.232 • | 0.655 |
| Lad+MTL+UL | 0.771 •∗ | 0.269 ∗ | 0.685 | 0.740 | 0.184 | 0.656 |

Table III shows the results for the different systems using the CNN-based architecture trained with either LLDs or MFB features. Similar to Section V-A, we evaluate the differences in CCC values using the Fisher Z-transformation (one-tailed z-test, p-value < 0.05).
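As a sanity check on the layer sizes listed in Fig. 6, the sketch below fixes a segment to T = 1000 frames by truncation or zero padding and then tracks the time dimension through the four pooling stages. Two details are inferred from the figure rather than stated in the text, and should be read as assumptions: the convolutions preserve the time length (1000 × 65 becomes 1000 × 128), and the pooling rounds up (250 frames become 63). The helper names are hypothetical.

```python
import math


def pad_or_truncate(frames, target_len, feat_dim):
    """Truncate long segments and zero-pad short ones to target_len frames."""
    clipped = frames[:target_len]
    missing = target_len - len(clipped)
    return clipped + [[0.0] * feat_dim for _ in range(missing)]


def time_steps_after_pooling(num_frames, pool_size=4, num_blocks=4):
    """Time dimension after each conv + max pooling block (ceil rounding)."""
    sizes = []
    for _ in range(num_blocks):
        num_frames = math.ceil(num_frames / pool_size)
        sizes.append(num_frames)
    return sizes


T = 1000                                # 10 seconds at 100 fps
segment = [[0.5] * 65 for _ in range(730)]   # toy 7.3 second LLD segment
fixed = pad_or_truncate(segment, T, 65)

sizes = time_steps_after_pooling(T)
flattened = sizes[-1] * 256             # 256 channels in the last block
print(len(fixed), sizes, flattened)     # prints: 1000 [250, 63, 16, 4] 1024
```

The flattened size of 1024 matches the input to fc1 in Fig. 6, which is consistent with the two assumptions above.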
When the CNNs are trained with LLDs, we observe that the ladder networks provide significant gains over the baselines for arousal (STL) and valence (STL, MTL). For valence, the proposed architectures provide relative gains up to 14.3% on the test set. For dominance, the models achieve similar performance to the baselines, where the differences are not statistically significant. When the CNNs are trained with MFB, the ladder networks perform similarly to the baselines. We observe statistically significant improvements over the STL baseline only for valence using the Lad+STL+UL network. We expect that a better result can be achieved if the reconstruction loss is implemented to also include the convolutional layers.

Finally, we also compare the overall results of the models trained with sentence-level features (HLD) and frame-level features (LLD-CNN, MFB-CNN). Figure 7 shows the average CCC results across architectures, including the baselines. For arousal and dominance, we observe similar performance for systems trained with either the HLDs (sentence-level features) or the LLD-CNN (frame-level features). In contrast, the system trained with sentence-level features achieves better results for valence. The results are consistently lower when using MFB. MFB features only provide spectral information, while the LLDs and HLDs also provide prosodic and voice quality information, which are important cues for SER problems.

Fig. 7. Evaluation of ladder network with frame-level features. Mean CCC values across different architectures for sentence-level features (HLD) and frame-level features (LLDs and MFB) for (a) arousal, (b) valence and (c) dominance. Frame-level features are implemented with CNN using the architecture described in Figure 6.

VI. CONCLUSIONS

This study proposed the use of ladder networks in speech emotion recognition. The approach combines the unsupervised auxiliary task of reconstructing intermediate feature representations with the primary task of predicting emotional attributes. The unsupervised nature of the auxiliary task eases the pressure on the expensive emotion labeling process by leveraging unlabeled data from the target domain. The unsupervised auxiliary task reconstructs the input and the intermediate feature representations through a denoising autoencoder. The ladder networks contain skip connections between the noisy encoder and the decoder, allowing the higher layers of the encoder to learn discriminative representations. Different implementations of the proposed system were evaluated in within-corpus and cross-corpus evaluations. In the within-corpus evaluations, we analyzed the benefits of the proposed architectures over competitive STL and MTL baselines, showing significant improvements for arousal and dominance. In the cross-corpus evaluations, the models were trained on the MSP-Podcast corpus and evaluated on the USC-IEMOCAP and MSP-IMPROV corpora. The results indicated significant gains when using the proposed models, underscoring the generalization power of the ladder networks. The improvements were particularly high when using unlabeled data from the target domain, exploiting all the benefits of the proposed architecture. Finally, the study analyzed the performance of the proposed architecture for different feature inputs. We showed that we can achieve similar performance with a CNN-based implementation trained on frame-level features.
Based on the cross-corpus results, our future work will explore the use of the representations learned by the ladder networks as inputs for emotion recognition tasks in general. W e will also explore the ladder network architecture for emotion recognition from other modalities such as video and image. Previous studies hav e sho wn the dif ficulty of predicting valence from acoustic cues [52], [53]. Our recent study have shown that acoustic cues for v alence are highly speaker dependent, where the networks require higher re gularization [52]. Our future research direction will use these findings to improve the ladder network architectures for predicting valence scores. Finally , we aim to improve the performance of the ladder network architecture with frame-lev el features, paying special attention to valence, extending the scope of our data-driv en speech emotion recognition systems. A C K N O W L E D G M E N T This study was funded by the National Science Foundation (NSF) CAREER grant IIS-1453781. R E F E R E N C E S [1] N. Cummins, S. Scherer , J. Krajewski, S. Schnieder, J. Epps, and T . Quatieri, “ A revie w of depression and suicide risk assessment using speech analysis, ” Speech Communication , vol. 71, pp. 10–49, July 2015. [2] D. Litman and K. Forbes-Riley , “Predicting student emotions in computer-human tutoring dialogues, ” in ACM Association for Compu- tational Linguistics (ACL 2004) , Barcelona, Spain, July 2004, pp. 1–8. [3] C. Clav el, I. V asilescu, L. De villers, G. Richard, and T . Ehrette, “Fear - type emotion recognition for future audio-based surveillance systems, ” Speech Communication , vol. 50, no. 6, pp. 487–503, June 2008. [4] Y . Zhang, Y . Liu, F . W eninger, and B. Schuller, “Multi-task deep neural network with shared hidden layers: Breaking do wn the wall between emotion representations, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP 2017) , New Orleans, LA, USA, March 2017, pp. 4490–4494. [5] R. 
Caruana, “Multitask learning, ” Machine Learning , vol. 28, no. 1, pp. 41–75, July 1997. [6] R. Lotfian and C. Busso, “Predicting categorical emotions by jointly learning primary and secondary emotions through multitask learning, ” in Interspeec h 2018 , Hyderabad, India, September 2018, pp. 951–955. [7] S. Parthasarathy and C. Busso, “Jointly predicting arousal, valence and dominance with multi-task learning, ” in Interspeech 2017 , Stockholm, Sweden, August 2017, pp. 1103–1107. [8] R. Xia and Y . Liu, “ A multi-task learning framework for emotion recognition using 2D continuous space, ” IEEE T ransactions on Affective Computing , v ol. 8, no. 1, pp. 3–14, January-March 2017. [9] F . T ao and G. Liu, “ Advanced LSTM: a study about better time dependency modeling in emotion recognition, ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP 2018) , Calgary , AB, Canada, April 2018, pp. 2906–2910. [10] D. Kim, M. Lee, D. Y . Choi, and B. Song, “Multi-modal emotion recog- nition using semi-supervised learning and multiple neural networks in the wild, ” in A CM International Confer ence on Multimodal Inter action (ICMI 2017) , Glasgow , UK, Nov ember 2017, pp. 529–535. [11] S. Parthasarathy and C. Busso, “Ladder networks for emotion recog- nition: Using unsupervised auxiliary tasks to improve predictions of emotional attrib utes, ” in Interspeec h 2018 , Hyderabad, India, September 2018, pp. 3698–3702. [12] R. Lotfian and C. Busso, “Building naturalistic emotionally balanced speech corpus by retrieving emotional speech from existing podcast recordings, ” IEEE T ransactions on Affective Computing , v ol. T o appear, 2019. [13] Z. Zhang, F . W eninger, M. W ollmer , and B. Schuller, “Unsupervised learning in cross-corpus acoustic emotion recognition, ” in IEEE W ork- shop on Automatic Speech Recognition and Understanding (ASR U 2011) , W aikoloa, HI, USA, December 2011, pp. 523–528. [14] Z. Zhang, F . Ringev al, B. Dong, E. Coutinho, E. 
Marchi, and B. Schuller, “Enhanced semi-supervised learning for multimodal emotion recogni- tion, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP 2016) , Shanghai, China, March 2016, pp. 5185– 5189. [15] Z. Zhang, J. Han, J. Deng, X. Xu, F . Ringev al, and B. Schuller, “Leverag- ing unlabeled data for emotion recognition with enhanced collaborative semi-supervised learning, ” IEEE Access , vol. 6, pp. 22 196–22 209, April 2018. [16] I. Cohen, N. Sebe, F . Cozman, and T . Huang, “Semi-supervised learning for facial expression recognition, ” in ACM SIGMM international work- shop on Multimedia information retrieval (MIR 2003) , Berkeley , CA, USA, Nov ember 2003, pp. 17–22. [17] I. Cohen, N. Sebe, F . G. Gozman, M. C. Cirelo, and T . S. Huang, “Learning Bayesian network classifiers for facial expression recognition both labeled and unlabeled data, ” in IEEE Conference on Computer V ision and P attern Recognition (CVPR 2003) , Madison, WI, USA, June 2003, pp. 1–7. [18] A. Blum and T . Mitchell, “Combining labeled and unlabeled data with co-training, ” in Pr oceedings of the eleventh annual confer ence on Computational learning theory (COLT 1998) , Madison, WI, USA, July 1998, pp. 92–100. [19] A. Mahdhaoui and M. Chetouani, “Emotional speech classification based on multi vie w characterization, ” in International Confer ence on P attern Recognition (ICPR 2010) , Istanbul, T urkey , August 2010, pp. 4488– 4491. [20] Z. Zhang, E. Coutinho, J. Deng, and B. Schuller , “Cooperati ve learning and its application to emotion recognition from speech, ” IEEE/A CM T ransactions on Audio, Speec h, and Language Pr ocessing , vol. 23, no. 1, pp. 115–126, January 2015. [21] J. Liu, C. Chen, J. Bu, M. Y ou, and J. T ao, “Speech emotion recog- nition using an enhanced co-training algorithm, ” in IEEE International Confer ence on Multimedia and Expo (ICME 2007) , Beijing, China, July 2007, pp. 999–1002. [22] J. Deng, X. Xu, Z. Zhang, S. 
Frühholz, and B. Schuller, “Semisupervised autoencoders for speech emotion recognition,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 26, no. 1, pp. 31–43, January 2018.
[23] Z. Huang, M. Dong, Q. Mao, and Y. Zhan, “Speech emotion recognition using CNN,” in ACM international conference on Multimedia (MM 2014), Orlando, FL, USA, November 2014, pp. 801–804.
[24] Q. Mao, M. Dong, Z. Huang, and Y. Zhan, “Learning salient features for speech emotion recognition using convolutional neural networks,” IEEE Transactions on Multimedia, vol. 16, no. 8, pp. 2203–2213, December 2014.
[25] J. Chang and S. Scherer, “Learning representations of emotional speech with deep convolutional generative adversarial networks,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2017), New Orleans, LA, USA, March 2017, pp. 2746–2750.
[26] S. Chen, Q. Jin, J. Zhao, and S. Wang, “Multimodal multi-task learning for dimensional and continuous emotion recognition,” in Annual Workshop on Audio/Visual Emotion Challenge (AVEC 2017), Mountain View, California, USA, October 2017, pp. 19–26.
[27] F. Ringeval, B. Schuller, M. Valstar, J. Gratch, R. Cowie, S. Scherer, S. Mozgai, N. Cummins, M. Schmitt, and M. Pantic, “AVEC 2017: Real-life depression, and affect recognition workshop and challenge,” in Annual Workshop on Audio/Visual Emotion Challenge (AVEC 2017), Mountain View, California, USA, October 2017, pp. 3–9.
[28] D. Le, Z. Aldeneh, and E. Mower Provost, “Discretized continuous speech emotion recognition with multi-task deep recurrent neural network,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 1108–1112.
[29] J. Kim, G. Englebienne, K. Truong, and V.
Evers, “Towards speech emotion recognition “in the Wild” using aggregated corpora and deep multi-task learning,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 1113–1117.
[30] M. Abdelwahab and C. Busso, “Domain adversarial for acoustic emotion recognition,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 26, no. 12, pp. 2423–2435, December 2018.
[31] H. Valpola, “From neural PCA to deep unsupervised learning,” in Advances in Independent Component Analysis and Learning Machines, E. Bingham, S. Kaski, J. Laaksonen, and J. Lampinen, Eds. London, UK: Academic Press, May 2015, pp. 143–171.
[32] A. Rasmusi, M. Berglund, M. Honkala, H. Valpola, and T. Raiko, “Semi-supervised learning with ladder networks,” in Advances in neural information processing systems (NIPS 2015), Montreal, Canada, December 2015, pp. 3546–3554.
[33] A. Rasmus, H. Valpola, and T. Raiko, “Lateral connections in denoising autoencoders support supervised learning,” CoRR, vol. abs/1504.08215, pp. 1–5, April 2015. [Online]. Available: http://arxiv.org/abs/1504.08215
[34] M. Pezeshki, L. Fan, P. Brakel, A. Courville, and Y. Bengio, “Deconstructing the ladder network architecture,” in International Conference on Machine Learning (ICML 2016), New York, NY, USA, June 2016, pp. 2368–2376.
[35] J. Huang, Y. Li, J. Tao, Z. Lian, M. Niu, and J. Yi, “Speech emotion recognition using semi-supervised learning with ladder networks,” in Asian Conference on Affective Computing and Intelligent Interaction (ACII Asia 2018), Beijing, China, May 2018, pp. 1–5.
[36] R. Lotfian and C. Busso, “Formulating emotion perception as a probabilistic model with application to categorical emotion classification,” in International Conference on Affective Computing and Intelligent Interaction (ACII 2017), San Antonio, TX, USA, October 2017, pp. 415–420.
[37] S. Mariooryad, R. Lotfian, and C.
Busso, “Building a naturalistic emotional speech corpus by retrieving expressive behaviors from existing speech corpora,” in Interspeech 2014, Singapore, September 2014, pp. 238–242.
[38] A. Burmania, S. Parthasarathy, and C. Busso, “Increasing the reliability of crowdsourcing evaluations using online quality assessment,” IEEE Transactions on Affective Computing, vol. 7, no. 4, pp. 374–388, October-December 2016.
[39] C. Busso and S. Narayanan, “Recording audio-visual emotional databases from actors: a closer look,” in Second International Workshop on Emotion: Corpora for Research on Emotion and Affect, International conference on Language Resources and Evaluation (LREC 2008), Marrakech, Morocco, May 2008, pp. 17–22.
[40] C. Busso, M. Bulut, C. Lee, A. Kazemzadeh, E. Mower, S. Kim, J. Chang, S. Lee, and S. Narayanan, “IEMOCAP: Interactive emotional dyadic motion capture database,” Journal of Language Resources and Evaluation, vol. 42, no. 4, pp. 335–359, December 2008.
[41] C. Busso, S. Parthasarathy, A. Burmania, M. AbdelWahab, N. Sadoughi, and E. Mower Provost, “MSP-IMPROV: An acted corpus of dyadic interactions to study emotion perception,” IEEE Transactions on Affective Computing, vol. 8, no. 1, pp. 67–80, January-March 2017.
[42] B. Schuller, S. Steidl, A. Batliner, A. Vinciarelli, K. Scherer, F. Ringeval, M. Chetouani, F. Weninger, F. Eyben, E. Marchi, M. Mortillaro, H. Salamin, A. Polychroniou, F. Valente, and S. Kim, “The INTERSPEECH 2013 computational paralinguistics challenge: Social signals, conflict, emotion, autism,” in Interspeech 2013, Lyon, France, August 2013, pp. 148–152.
[43] M. Wollmer, B. Schuller, F. Eyben, and G. Rigoll, “Combining long short-term memory and dynamic Bayesian networks for incremental emotion-sensitive artificial listening,” IEEE Journal of Selected Topics in Signal Processing, vol. 4, no. 5, pp. 867–881, October 2010.
[44] G. Trigeorgis, F. Ringeval, R. Brueckner, E.
Marchi, M. Nicolaou, B. Schuller, and S. Zafeiriou, “Adieu features? end-to-end speech emotion recognition using a deep convolutional recurrent network,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2016), Shanghai, China, March 2016, pp. 5200–5204.
[45] F. Ringeval, F. Eyben, E. Kroupi, A. Yuce, J.-P. Thiran, T. Ebrahimi, D. Lalanne, and B. Schuller, “Prediction of asynchronous dimensional emotion ratings from audiovisual and physiological data,” Pattern Recognition Letters, vol. 66, pp. 22–30, November 2015.
[46] T. Dozat, “Incorporating Nesterov momentum into Adam,” in Workshop track at International Conference on Learning Representations (ICLR 2015), San Juan, Puerto Rico, May 2015, pp. 1–4.
[47] B. Schuller, S. Steidl, and A. Batliner, “The INTERSPEECH 2009 emotion challenge,” in Interspeech 2009 - Eurospeech, Brighton, UK, September 2009, pp. 312–315.
[48] N. Cummins, S. Amiriparian, G. Hagerer, A. Batliner, S. Steidl, and B. Schuller, “An image-based deep spectrum feature representation for the recognition of emotional speech,” in ACM international conference on Multimedia (MM 2017), Mountain View, CA, USA, October 2017, pp. 478–484.
[49] M. Neumann and N. Vu, “Attentive convolutional neural network based speech emotion recognition: A study on the impact of input features, signal length, and acted speech,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 1263–1267.
[50] Z. Yang and J. Hirschberg, “Predicting arousal and valence from waveforms and spectrograms using deep neural networks,” in Interspeech 2018, Hyderabad, India, September 2018, pp. 3092–3096.
[51] Z. Aldeneh and E. Mower Provost, “Using regional saliency for speech emotion recognition,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2017), New Orleans, LA, USA, March 2017, pp. 2741–2745.
[52] K. Sridhar, S. Parthasarathy, and C.
Busso, “Role of regularization in the prediction of valence from speech,” in Interspeech 2018, Hyderabad, India, September 2018, pp. 941–945.
[53] C. Busso and T. Rahman, “Unveiling the acoustic properties that describe the valence dimension,” in Interspeech 2012, Portland, OR, USA, September 2012, pp. 1179–1182.

Srinivas Parthasarathy received his BS degree in Electronics and Communication Engineering from College of Engineering Guindy, Anna University, Chennai, India (2012) and MS (2014) and PhD (2019) degrees in Electrical Engineering from the University of Texas at Dallas - UT Dallas. During the academic year 2011-2012, he attended The Royal Institute of Technology (KTH), Sweden, as an exchange student. He is an applied scientist at Amazon Research. At UT Dallas, he was a member of the Multimodal Signal Processing (MSP) laboratory. He received the Ericsson Graduate Fellowship during 2013-2014. He has been a research intern at Amazon, Microsoft Research and Bosch Research and Training Center. His research interests include affective computing, human machine interaction, machine learning and digital signal processing.

Carlos Busso (S’02-M’09-SM’13) received the BS and MS degrees with high honors in electrical engineering from the University of Chile, Santiago, Chile, in 2000 and 2003, respectively, and the PhD degree in electrical engineering from the University of Southern California (USC), Los Angeles, in 2008. He is an associate professor at the Electrical Engineering Department of The University of Texas at Dallas (UTD). He was selected by the School of Engineering of Chile as the best electrical engineer who graduated in 2003 across Chilean universities. At USC, he received a provost doctoral fellowship from 2003 to 2005 and a fellowship in Digital Scholarship from 2007 to 2008.
At UTD, he leads the Multimodal Signal Processing (MSP) laboratory [http://msp.utdallas.edu]. He is a recipient of an NSF CAREER Award. In 2014, he received the ICMI Ten-Year Technical Impact Award. In 2015, his student received the third prize IEEE ITSS Best Dissertation Award (N. Li). He also received the Hewlett Packard Best Paper Award at the IEEE ICME 2011 (with J. Jain), and the Best Paper Award at the AAAC ACII 2017 (with Yannakakis and Cowie). He is the co-author of the winning paper of the Classifier Sub-Challenge event at the Interspeech 2009 emotion challenge. His research interest is in human-centered multimodal machine intelligence and applications. His current research includes the broad areas of affective computing, multimodal human-machine interfaces, nonverbal behaviors for conversational agents, in-vehicle active safety systems, and machine learning methods for multimodal processing. His work has direct implications in many practical domains, including national security, health care, entertainment, transportation systems, and education. He was the general chair of ACII 2017. He is a member of ISCA, AAAC, and ACM, and a senior member of the IEEE.
