End-to-end Audiovisual Speech Activity Detection with Bimodal Recurrent Neural Models

Speech activity detection (SAD) plays an important role in current speech processing systems, including automatic speech recognition (ASR). SAD is particularly difficult in environments with acoustic noise. A practical solution is to incorporate visu…

Authors: Fei Tao, Carlos Busso

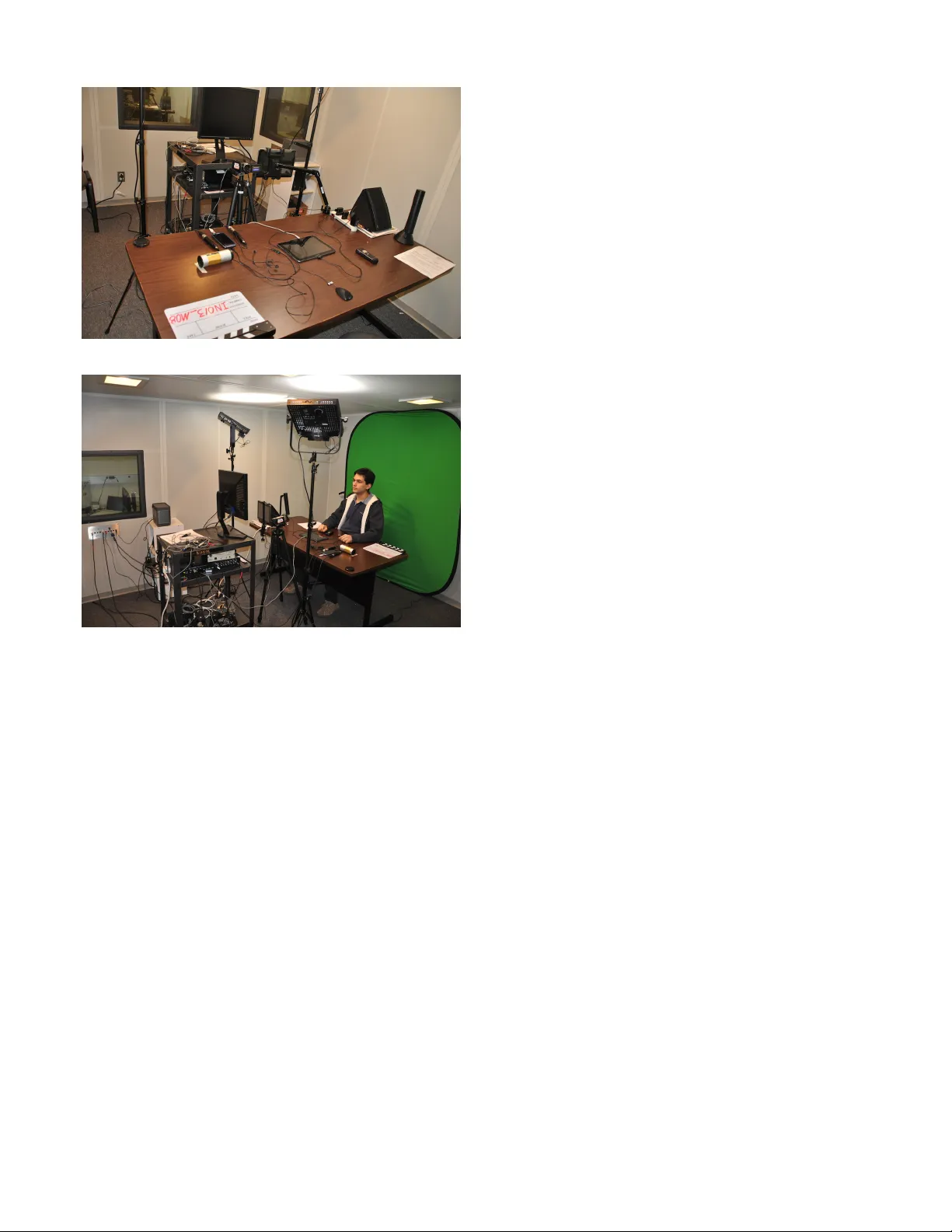

Fei Tao, Student Member, IEEE, Carlos Busso, Senior Member, IEEE

Abstract: Speech activity detection (SAD) plays an important role in current speech processing systems, including automatic speech recognition (ASR). SAD is particularly difficult in environments with acoustic noise. A practical solution is to incorporate visual information, increasing the robustness of the SAD approach. An audiovisual system has the advantage of being robust to different speech modes (e.g., whisper speech) or background noise. Recent advances in audiovisual speech processing using deep learning have opened opportunities to capture in a principled way the temporal relationships between acoustic and visual features. This study explores this idea by proposing a bimodal recurrent neural network (BRNN) framework for SAD. The approach models the temporal dynamics of the sequential audiovisual data, improving the accuracy and robustness of the proposed SAD system. Instead of estimating hand-crafted features, the study investigates an end-to-end training approach, where acoustic and visual features are directly learned from the raw data during training. The experimental evaluation considers a large audiovisual corpus with over 60.8 hours of recordings, collected from 105 speakers. The results demonstrate that the proposed framework leads to absolute improvements of up to 1.2% under practical scenarios over an audio-only SAD baseline implemented with a deep neural network (DNN). The proposed approach achieves a 92.7% F1-score when it is evaluated using the sensors from a portable tablet under a noisy acoustic environment, which is only 1.0% lower than the performance obtained under ideal conditions (e.g., clean speech obtained with a high definition camera and a close-talking microphone).
Index Terms: Audiovisual speech activity detection; end-to-end speech framework; deep learning; recurrent neural network

F. Tao and C. Busso are with the Erik Jonsson School of Engineering & Computer Science, The University of Texas at Dallas, TX 75080 (e-mail: fxt120230@utdallas.edu, busso@utdallas.edu).

I. INTRODUCTION

The success of voice assistant products including Siri, Google Assistant, and Cortana has made the use of speech technology more widespread in our life. These interfaces rely on several speech processing tasks, including speech activity detection (SAD). SAD is a very important pre-processing step, especially for interfaces without a push-to-talk button. The accuracy of a SAD system directly affects the performance of other speech processing technologies including automatic speech recognition (ASR), speaker verification and identification, speech enhancement and speech emotion recognition [1]–[4]. A key challenge for SAD is the environmental noise observed in real-world applications, which can greatly affect the performance of the speech interface, especially if the SAD models are built with energy-based features [5], [6].

An appealing way to increase the robustness of a SAD system against acoustic noise is to include visual features [7], [8], mimicking the multimodal nature of speech perception used during daily human interactions [9], [10]. While this solution is not practical for all applications, the use of portable devices with cameras and the advances in human-robot interaction (HRI) make an audiovisual speech activity detection (AV-SAD) system a suitable solution. Noisy environments lead speakers to adapt their articulatory production to increase their speech intelligibility, a phenomenon known as the Lombard effect. While studies have reported differences in visual features between clean and noisy environments, these variations are not as pronounced as the differences in acoustic features [11].
Therefore, visual features are more robust to acoustic noise. For example, Tao et al. [12] showed that a visual speech activity detection (V-SAD) system can achieve robust performance under clean and noisy conditions using the camera of a portable device.

Conventional approaches to integrate acoustic and visual information in SAD tasks have relied on ad-hoc fusion schemes such as logic operations, feature concatenation or predefined rules [13]–[16]. These approaches oversimplify the relationship between audio and visual modalities, which may lead to rigid models that cannot capture the temporal dynamics between these modalities. Recent advances in deep neural networks (DNNs) have provided new data-driven frameworks to appropriately model sequential data [17], [18]. These models avoid defining predefined rules or making unnecessary assumptions by directly learning relationships and distributions from the data [19]. Recent studies on audiovisual speech processing have demonstrated the potential of deep learning (DL) in this area [8], [20]–[23]. A straightforward extension from conventional approaches is concatenating audiovisual features as the input for a DNN [8], [19]. Another way is to rely on an autoencoder to extract bottleneck audiovisual representations [24]. However, these methods do not directly capture the temporal relationship between acoustic and visual features. Furthermore, the systems still rely on hand-crafted features, which may not lead to optimal systems.

This study proposes an end-to-end framework for AV-SAD that explicitly captures the temporal dynamics between acoustic and visual features. The approach builds upon the framework presented in our preliminary work [25], [26], which relies on recurrent neural networks (RNNs). Our approach, referred to as bimodal recurrent neural network (BRNN), consists of three subsystems.
The first two subsystems independently process the modalities using RNNs, creating an acoustic RNN and a visual RNN. These subsystems are implemented with long short-term memory (LSTM) layers, and their objective is to capture the temporal relationships within each modality that are discriminative for speech activity. These subsystems provide high-level representations for the modalities, which are concatenated and fed as an input vector to a third subsystem. This system, also implemented with LSTMs, predicts the speech/non-speech label for each frame, capturing the temporal information across the modalities.

An important contribution of this study is that the acoustic and visual features are directly learned from the data. Recent advances in DNNs for speech processing tasks have shown the benefits of learning discriminative features as part of the training process, using convolutional neural networks (CNNs) and sequence modeling with RNNs [27], [28]. We can learn an end-to-end system with this approach, which has led to performance improvements over hand-crafted features in many cases [22], [29]. Furthermore, we can capture the characteristics of the raw input data and extract discriminative representations for a target task [30], [31]. These observations motivate us to learn discriminative features from the data. The inputs of the BRNN framework are the raw image around the orofacial area as visual features, and the Mel-filterbank as acoustic features. For the visual input, we use three 2D convolutional layers to extract a high-level representation from the raw image around the mouth area. On top of the convolutional layers, we use LSTM layers to model temporal information. For the acoustic input, we use fully connected (FC) layers that are connected to LSTM layers to model the temporal evolution of the data, similar to the visual part.
The proposed approach is jointly trained, learning discriminative features from the data and creating an effective and robust end-to-end AV-SAD system.

We evaluate our framework on a subset of the CRSS-4English-14 corpus consisting of over 60 hours of recordings from 105 speakers. The corpus includes multiple sensors, which allows us to evaluate the proposed approach under ideal channels (i.e., close-talking microphone, high definition camera) or under more practical channels (i.e., camera and microphone from a portable tablet). The corpus also has noisy sessions where different types of noise were played during the recordings. The various conditions can mimic practical scenarios for speech-based interfaces. We replicate state-of-the-art supervised SAD approaches proposed in previous studies to demonstrate the superior performance of the proposed approach. The experimental evaluation shows that our end-to-end BRNN approach achieves the best performance under all conditions. The proposed approach achieves at least a 0.6% absolute improvement compared to the state-of-the-art A-SAD system. Among the AV-SAD systems, the proposed approach outperforms the best baseline by 1.0% in the most challenging scenario, corresponding to sensors from a portable device under a noisy environment. The result for this condition is 1.2% higher than that of an A-SAD system, showing clear benefits of the proposed audiovisual solution for SAD.

The paper is organized as follows. Section II reviews previous studies on AV-SAD, describing the differences with our approach, and highlighting our contributions. Section III describes the CRSS-4English-14 corpus and the post-processing steps to use the recordings. Section IV introduces our proposed end-to-end BRNN framework. Section V presents the experimental evaluations that demonstrate the benefits of our approach.
The paper concludes with Section VI, which summarizes our study and discusses potential future directions in this area.

II. RELATED WORK

A successful SAD system can have a direct impact on ASR performance by correctly identifying speech segments. While speech-based SAD systems have been extensively investigated, SAD systems based on visual features are still under development. Visual information describing lip motion provides valuable cues to determine the presence or absence of speech. Several studies have relied on visual features to detect speech activity in speech-based interfaces [32], [33]. These methods either rely exclusively on visual features (V-SAD) [12], [34]–[38], or complement acoustic features with visual cues [13], [14], [39]. As this is an emerging research topic, several methods have been proposed. Early studies relied on Gaussian mixture models (GMMs) [34], [40], hidden Markov models (HMMs) [13], [38], or static classifiers such as support vector machines (SVMs) [35]. Recent studies have demonstrated the potential of using deep learning for V-SAD and AV-SAD [24].

The use of deep learning offers better alternatives to fuse audiovisual modalities. Early studies relied on simple fusion schemes, including concatenating audiovisual features [14], combining the individual SAD decisions using basic “AND” or “OR” operations [13], [15], and creating hierarchical decision rules to assess which systems to use [16]. In contrast, DL solutions can incorporate the two modalities in a principled manner. DL techniques can be used to build powerful data-driven frameworks, relying on the input data rather than rigid assumptions or rules [17], [18]. DL-based approaches provide better solutions for the AV-SAD task compared with conventional approaches, increasing the flexibility of the systems. The pioneering work of Ngiam et al. [19] demonstrated that DL can be a powerful tool for audiovisual automatic speech recognition (AV-ASR).
For AV-SAD, however, there are only a few studies using DL approaches. One exception is the approach proposed by Ariav et al. [24]. They used an autoencoder to create an audiovisual bottleneck representation. The acoustic and visual features were concatenated and used as input of the autoencoder. The bottleneck features from the autoencoder were used as input of an RNN, which aimed to detect speech activity. This approach separated the fusion of audiovisual features (autoencoder) from the classifier (RNN), which may lead to a suboptimal system where the bottleneck features are not optimized for the SAD task. To globally optimize the fusion and temporal modeling, Tao and Busso [25], [26] proposed the bimodal recurrent neural network (BRNN) framework for the AV-SAD task. The approach used three RNNs as subnets following the structure in Figure 2. The framework can model the temporal information within and across modalities. The results show that this structure can outperform an RNN taking concatenated audiovisual features.

A. Features for Speech Activity Detection

For acoustic features, studies have relied on Mel frequency cepstral coefficients (MFCCs) [41], spectrum energy [5] and features describing speech periodicity [42]. However, there is no standard set for visual features, and studies have proposed several hand-crafted features. For example, Navarathna et al. [34] and Almajai and Milner [14] used appearance-based features such as 2D discrete cosine transform (DCT) coefficients from the orofacial area. Other studies have relied on geometric features [16], [36], [38], [40]. A common approach is to use active appearance models (AAMs) [38]. Tao and Busso [43] and Neti et al. [44] suggested that appearance-based features have the disadvantage of being more speaker dependent, so using geometric features can provide representations with better generalization. Some studies have combined appearance and geometric features [43].
Instead of using hand-crafted features, an appealing idea is to learn discriminative features from the data using end-to-end systems. The benefit of this approach is that the feature extraction and task modeling are jointly learned from the data, providing flexible and robust solutions. While this approach has been used in other areas, we are not aware of previous studies on end-to-end systems for AV-SAD. We hypothesize that this approach can lead to improvements in performance, since feature representations obtained during the learning process have been shown to be effective on other tasks.

CNNs were originally used to learn features from images, since a CNN can learn spatial, translation-invariant representations from raw pixels [45]. The spatial invariance property of CNNs allows the system to learn robust high-level representations from the input data [46]. Saitoh et al. [47] used a CNN to extract visual representations from concatenated images for a visual automatic speech recognition (V-ASR) task. Petridis et al. [22] presented an end-to-end system for V-ASR. They used raw images and their corresponding delta information as input to recognize words, relying on bidirectional LSTMs (BLSTMs). Amodei et al. [30] and Sercu et al. [48] used CNNs to extract high-level acoustic feature representations from raw acoustic data for audio automatic speech recognition (A-ASR) tasks. In these studies, FC layers were stacked over the CNN, mapping the representation extracted by the CNNs into the classification task space (Soltau et al. [49] showed the benefits of adding FC layers on top of CNN layers). Inspired by these studies, this study adopts a CNN as a feature extractor for visual features to learn high-level representations that are discriminative for AV-SAD tasks.

B.
Temporal Modeling for Speech Activity Detection

Speech is characterized by semi-periodic patterns that are distinct from non-speech sounds such as laughs, lip smacks, and deep breaths. The temporal cues are observed not only in speech features, but also in orofacial features reflecting the articulatory movements needed to produce speech. Therefore, modeling temporal information is very important for SAD.

One approach to model temporal information is to include features that convey dynamic cues. A classic approach is concatenating contiguous frames, creating contextual feature vectors [34], [41]. However, this approach relies on a predefined context window, which constrains the capability of static frameworks such as DNNs. Temporal information can also be added by taking temporal derivatives of the features [14], [36], [40], or by relying on optical flow features [37]. For example, Sodoyer et al. [36] demonstrated that dynamic features extracted by taking derivatives are more effective than the actual values describing the lip configuration. Takeuchi et al. [13] extracted the variance of optical flow as visual features to capture dynamic information.

An alternative, but complementary, approach to model temporal information is to use frameworks that capture recurrent connections. A common approach in speech processing tasks is the use of RNNs, which rely on connections between two contiguous time steps, capturing temporal dependencies in sequential signals [50]–[53]. Ariav et al. [24] used RNNs to capture temporal information in an AV-SAD task. A popular RNN framework is the use of LSTM units, which have been successfully used for the AV-SAD task, showing competitive performance [25], [26]. Our proposed approach builds on the bimodal recurrent neural network (BRNN) framework proposed in our previous studies [25], [26], which is described in Section IV-C.

C.
Contributions of this Study

This study extends the BRNN framework proposed in Tao and Busso [25] by directly learning discriminative audiovisual features, creating an effective end-to-end AV-SAD system. While aspects of the BRNN framework were originally presented in our preliminary work [25], [26], this study provides key contributions. A key difference between this study and our previous work is the features used for the task. While our preliminary studies relied on hand-crafted audiovisual features, our method learns discriminative features directly from the data. To the best of our knowledge, this is the first end-to-end AV-SAD system.

Another key difference with our previous work is the experimental evaluation. The framework is exhaustively evaluated against other AV-SAD methods, obtaining state-of-the-art performance on a large audiovisual database. The study also demonstrates the benefits of using Mel-filterbank features over the speech spectrogram in the presence of acoustic noise.

III. THE CRSS-4ENGLISH-14 AUDIOVISUAL CORPUS

This study uses the CRSS-4English-14 audiovisual corpus, which was collected by the Center for Robust Speech Systems (CRSS) at the University of Texas at Dallas. The corpus was described in detail in Tao and Busso [8], so this section only describes the aspects of the corpus that are relevant to this study. Figure 1 shows the settings used to collect the corpus.

A. Data Collection

The CRSS-4English-14 corpus was collected in a 13 ft × 13 ft American Speech-Language-Hearing Association (ASHA) certified sound booth. Figure 1 shows the recording setting. The audio was collected at 48 kHz with five microphones: a close-talking microphone (Shure Beta 53), a desktop microphone (Shure MX391/S), the bottom and top microphones of a cellphone (Samsung Galaxy SIII), and the microphone of a tablet (Samsung Galaxy Tab 10.1N). This study only uses the close-talking microphone, which was placed close to the subject's mouth, and the microphone from the tablet, which was placed facing the subjects about two meters from them.

Fig. 1. Equipment and recording setting for the collection of the CRSS-4English-14 corpus: (a) equipment; (b) recording setting. This study uses the audio from the close-talking and tablet microphones and the videos from the HD camera and the tablet.

The illumination was controlled with two LED light panels to collect high quality videos. The videos were collected with two cameras: a high definition (HD) camera (Sony HDR-XR100) and a camera from the tablet (Samsung Galaxy Tab 10.1N). This study uses the recordings from both cameras. The HD camera has a resolution of 1440 × 1080 at a frame rate of 29.97 fps. The tablet camera has a resolution of 1280 × 720 at a frame rate of 24 fps. Both cameras were placed facing the subjects about two meters from them, capturing frontal views of the head and shoulders of the subjects. A green screen was placed behind the subjects to create a smooth background. The participants were free to move their head and body during the data collection, without any constraint.

We used a computer screen about three meters from the subjects, presenting slides with the instructions for each task. The corpus includes read speech and spontaneous speech. For the read speech, we included different tasks such as single words (e.g., “yes”), city names (e.g., “Dallas, Texas”), short phrases or commands (e.g., “change probe”), connected digit sequences (e.g., “1,2,3,4”), questions (e.g., “How tall is the Mountain Everest”), and continuous sentences (e.g., “I'd like to see an action movie tonight, any recommendation?”). For the spontaneous speech, we posed questions to the speakers, who were required to respond using spontaneous speech. The sentences for each of the tasks were randomly selected from a pool of options created for the data collection, so the content per speaker is balanced, but not the same.
The data collection started with the clean session, where the speaker completed all the requested tasks (about 45 minutes). The clean session includes read and spontaneous speech. Then, we collected the noisy session for five minutes. We played pre-recorded noise using an audio speaker (Beolit 12), which was located about three meters from the subjects. The noise was recorded in four different locations: restaurant, house, office and shopping mall. Playing noise during the recording rather than adding artificial noise after the recording is a strength of this corpus, as it includes speech production variations associated with the Lombard effect (speakers adapt their speech production in the presence of acoustic noise). For the noisy session, the slides were randomly selected from the ones used in the clean session. However, we only considered slides with read speech, discarding slides used for spontaneous speech.

The corpus contains recordings from 442 subjects with four English accents: American (115), Australian (103), Indian (112) and Hispanic (112). All the subjects were asked to speak in English. This study only uses the subset of the corpus collected from American speakers. During the recordings, we had problems with the data from 10 subjects, so we only use data collected from 105 subjects (55 females and 50 males). The total duration of this set is 60 hours and 48 minutes. The size, variability in tasks, presence of clean and noisy sessions, and use of multiple devices make this corpus unique to evaluate our AV-SAD framework under different conditions.

B. Data Processing

A bell ring was recorded as a marker every time the subjects switched slides. This signal was used to segment the recordings. We manually transcribed the corpus, using forced alignment to identify speech and non-speech labels. For this task, we use the open-source software SAILAlign [54].
In the annotation and transcription of the speech, we annotate non-speech activities such as laughs, lip smacks, and coughs. We carefully remove these segments from frames labeled as ‘speech’. We resample the videos collected with the tablet to match the frame rate of the HD camera (i.e., 29.97 fps). We also resample the audio to 16 kHz for both audio channels (close-talking microphone and tablet).

IV. PROPOSED FRAMEWORK

In this study, we propose an end-to-end AV-SAD system building on the BRNN framework proposed in Tao and Busso [25]. Figure 2 describes the BRNN framework, which has three subnets implemented with RNNs: an audio subnet, a video subnet and an audiovisual subnet. The audio and video subnets separately process each set of features, capturing the temporal dependencies within each modality that are relevant for SAD. The outputs from these two RNNs are concatenated and fed into a third subnet, fusing the information by capturing the temporal dependencies across modalities. This section describes the three subnets.

Fig. 2. Diagram of the BRNN framework, which consists of three subnets implemented with RNNs. The A-RNN processes acoustic information, while the V-RNN processes visual information. The AV-RNN takes the concatenation of the outputs from the A-RNN and V-RNN as input, predicting the task label as output.

A. Audio Recurrent Neural Network (A-RNN)

The audio subnet corresponds to the audio recurrent neural network (A-RNN) and it is described in Figure 3(a). The A-RNN takes the acoustic features as input of a network consisting of static layers and dynamic layers (recurrent layers). The static layers model the input feature space, extracting the essential characteristics to determine speech activity. We rely on two fully connected (FC) layers.
This study uses two maxout layers [55] rather than convolutional layers as static layers to reduce the computational complexity in training the models. Each layer has 512 neurons. The outputs of the FC layers are fed to dynamic layers to model the time dependencies within the modality, as temporal patterns are important in SAD tasks [26]. We use two LSTM layers as dynamic layers. While bidirectional LSTMs have been used for this task [25], we only use unidirectional LSTMs to reduce the latency of the model, as our goal is to implement this approach in practical applications.

The acoustic features used in our system are the Mel-filterbank features, which correspond to the energy in the frequency bands defined by the Mel scale filters. Therefore, it is a raw input feature that retains the main spectral information of the original speech. We use the tool python_speech_features to extract the Mel-filterbank features, using the default setting (25 ms window, 10 ms shifting step, and 26 filters in the Mel-filterbank). In this study, we concatenate 11 contiguous frames as input, which includes 10 previous frames in addition to the current frame. Concatenating previous frames improves the temporal modeling of the framework, while keeping the latency of the system low.

B. Visual Recurrent Neural Network (V-RNN)

The video subnet corresponds to the visual recurrent neural network (V-RNN) and it is also described in Figure 3(a).

Fig. 3. Detailed structure of the BRNN framework. (a) The structure of the proposed framework for one time frame. The A-RNN subnet includes FC and LSTM layers to process acoustic Mel-filterbank features. The V-RNN subnet has a CNN extracting a visual representation from raw pixels and LSTMs to process temporal information. The AV-RNN subnet relies on FC and LSTM layers to process the concatenated output from the A-RNN and V-RNN subnets.
(b) Detailed CNN configuration used to learn visual features.

The V-RNN takes raw images as visual features. It extracts a visual representation relying on convolutional layers. Figure 3(b) describes the details of the architecture. The convolutional layers capture visual patterns, such as edges, corners and texture, from raw pixels based on local convolutions. The visual patterns can depict the mouth appearance and shape associated with speech activity. We stack three convolutional layers with rectified linear units (ReLUs) [56] to capture the visual patterns. Each layer has 64 filters. The kernel size is 5 × 5 and the stride is two (Fig. 3(b)). By using stride, we reduce the size of the feature map, so we do not need to use the pooling operation. The feature representation defined by the CNNs is a 64D feature vector. The implementation is intended to keep a compact network with lower hardware requirements and computation workload, which is ideal for practical applications. On top of the convolutional layers, we rely on two LSTM layers to further process the extracted visual representation, capturing the temporal information along time. Each layer has 64 neurons. Therefore, the V-RNN is able to directly extract both the visual patterns and temporal information from raw images that are relevant for speech articulation.

Fig. 4. Flowchart to extract the ROI around the orofacial area. The solid dots are facial landmarks. The dots with circles are used to estimate the affine transformation for normalization. The ROI is determined after normalization. The final mouth image is resized to 32 × 32 and transformed into gray scale.

Figure 4 shows the flowchart of the visual feature extraction process used in this study. We manually pick a frame of a subject posing a frontal face as the template. We extract 49 facial landmarks from the template and each frame of the videos. The facial landmarks are extracted with the toolkit IntraFace [57].
IntraFace does not output a valid number when it fails to detect the landmarks, which occurred on some frames. We apply linear interpolation to predict these values when fewer than 10% of the frames of a video are missing. Otherwise, we discard the video. We apply an affine transformation to normalize the face by comparing the positions of nine facial points in the template and the current frame. This normalization compensates for the rotation and size of the face. These nine points are selected around the nose area, because they are more stable when people are speaking (points highlighted in Fig. 4 describing the template). After face normalization, we compute the mouth centroid based on the landmarks around the mouth. We downsample the region of interest (ROI) to 32 × 32 and convert it to gray scale to limit the memory and computation workload.

C. Audiovisual Recurrent Neural Network (AV-RNN)

The high-level feature representations provided by the top layers of the A-RNN and V-RNN subnets are concatenated together and fed into the audiovisual recurrent neural network (AV-RNN) subnet (Fig. 3(a)). The proposed framework considers two LSTM layers to process the concatenated input. These LSTM layers aim to capture the temporal information across the modalities. On top of the LSTM layers, we include an FC layer implemented with maxout to further process the audiovisual representation. Each of the LSTM and FC layers is implemented with 512 neurons. The output is then sent to a softmax layer for classification, which determines whether the sample corresponds to a speech or non-speech segment.

The BRNN framework is designed to model the time dependency within a single modality and across the modalities. The convolutional layers allow us to directly extract visual representations from raw images. The framework also directly obtains acoustic representations from Mel-filterbank features.
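The input and feature dimensions quoted in this section can be checked with a few lines of arithmetic. The sketch below is only illustrative: it assumes that the three convolutional layers use no padding (the text does not state the padding), and it pads the first frames of an utterance by repeating the first frame, which is also an assumption. The helper names `conv_output_size` and `stack_context` are hypothetical. Under the no-padding assumption, a 32 × 32 ROI shrinks to a 1 × 1 map after three 5 × 5 convolutions with stride two, which is consistent with the 64D visual representation reported above.

```python
def conv_output_size(size, kernel, stride, padding=0):
    """Spatial size after one convolution, using the usual floor convention."""
    return (size + 2 * padding - kernel) // stride + 1


def stack_context(frames, n_previous=10):
    """Concatenate each frame with its n_previous predecessors.

    Frames before the start of the sequence are replaced by frame 0
    (an assumption; the paper does not describe the boundary handling).
    """
    stacked = []
    for i in range(len(frames)):
        window = []
        for j in range(i - n_previous, i + 1):
            window.extend(frames[max(j, 0)])
        stacked.append(window)
    return stacked


# Visual branch: 32 x 32 gray-scale ROI, three conv layers (5 x 5, stride 2).
size = 32
sizes = [size]
for _ in range(3):
    size = conv_output_size(size, kernel=5, stride=2)  # padding assumed to be 0
    sizes.append(size)
visual_dim = 64 * size * size  # 64 filters in the last layer

# Acoustic branch: 26 Mel-filterbank energies per frame, 11 stacked frames.
frames = [[float(t)] * 26 for t in range(20)]  # dummy features for 20 frames
stacked = stack_context(frames)
acoustic_dim = len(stacked[0])

print(sizes, visual_dim, acoustic_dim)  # [32, 14, 5, 1] 64 286
```

Since only previous frames are stacked, the acoustic input at frame n depends on frames n-10 to n, so the context window adds no look-ahead latency.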
The proposed BRNN is jointly trained, minimizing a common objective function (in this case, the cross-entropy function). This framework provides a powerful end-to-end system for SAD, as demonstrated with the experimental results.

V. EXPERIMENTS AND RESULTS

A. Experiment Settings

We evaluate our proposed approach on the CRSS-4English-14 corpus (Sec. III). We partition the corpus into train (data from 70 subjects), test (data from 25 subjects) and validation (data from 10 subjects) sets. All these sets are gender balanced. We use accuracy, recall rate, precision rate and F1-score as the performance metrics. The positive class to estimate precision and recall rates is speech (i.e., frames with speech activity). We estimate the F1-score with Equation 1, combining precision and recall rates.

$$\text{F1-score} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} \tag{1}$$

We consider the F1-score as the main metric to compare alternative methods. We separately compute the results for each of the 25 subjects in the test set, reporting the average performance across individuals. We perform a one-tailed t-test on the average of the F1-scores to determine if one method is statistically better than the other, asserting significance at p-value = 0.05.

The experiments were conducted on a GPU, using the Nvidia GeForce GTX 1070 graphics card (8 GB of graphics memory). Since all three baseline approaches rely on deep learning techniques, we apply the same training strategy (see Section V-B). We use dropout with p = 0.1 to improve the generalization of the models. We use the ADAM optimizer [58], monitoring the loss on the validation set. We rely on early stopping when the validation loss stops decreasing.

B. Baseline Methods

We aim to compare the proposed approach with state-of-the-art methods proposed for SAD. The study considers three baselines. One approach only relies on acoustic features, and two approaches rely on audiovisual features.
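As a reference for how every system in this comparison is scored, the evaluation protocol of Section V-A (frame-level F1-score with speech as the positive class, computed per subject and then averaged) can be sketched as follows. This is a minimal illustration rather than the authors' evaluation code: the function names and the toy label sequences are hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here.

```python
def precision_recall_f1(reference, predicted, positive=1):
    """Frame-level precision, recall and F1 for the positive (speech) class."""
    pairs = list(zip(reference, predicted))
    tp = sum(1 for r, p in pairs if r == positive and p == positive)
    fp = sum(1 for r, p in pairs if r != positive and p == positive)
    fn = sum(1 for r, p in pairs if r == positive and p != positive)
    # Returning 0.0 for empty denominators is a convention assumed here.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def average_f1(per_subject):
    """Mean of the per-subject F1-scores, as reported across test subjects."""
    scores = [precision_recall_f1(ref, hyp)[2] for ref, hyp in per_subject]
    return sum(scores) / len(scores)


# Toy labels for two hypothetical subjects (1 = speech, 0 = non-speech).
test_set = [
    ([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]),  # 2 hits, 1 false alarm, 1 miss
    ([0, 1, 1, 1], [0, 1, 1, 1]),        # perfect prediction
]
print(round(average_f1(test_set), 4))  # 0.8333
```

Averaging per-subject scores, rather than pooling all frames, gives every speaker the same weight regardless of how much speech the speaker produced.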
Our proposed approach uses unidirectional LSTMs instead of BLSTMs to reduce the latency of the model, which is a key feature for practical applications. To make the comparison fair, we also implement the baselines with unidirectional LSTMs.

1) A-SAD using DNN [41]: The first baseline corresponds to the A-SAD framework proposed by Ryant et al. [41], which we denote “Ryant-2013”. This approach is a state-of-the-art supervised A-SAD system using a DNN. The system has four fully connected layers with 256 maxout neurons per layer. On top of the four layers, the system has a 2-class softmax layer for SAD classification. This approach uses 13D MFCCs. We concatenate 11 feature frames as input to make the system comparable with the proposed approach.

2) AV-SAD system using BRNN [25]: The second baseline is the AV-SAD system proposed by Tao and Busso [25], which we denote “Tao-2017”. This framework is a state-of-the-art AV-SAD system, relying on a BRNN. The network is similar to the approach presented in this paper (Fig. 2). The key difference is that the audiovisual features are hand-crafted.

The acoustic features correspond to the five features proposed by Sadjadi and Hansen [42] for A-SAD: harmonicity, clarity, prediction gain, periodicity and perceptual spectral flux. These features capture key speech properties that are discriminative of speech segments, such as periodicity and slow spectral fluctuations. Harmonicity, also called harmonics-to-noise ratio (HNR), measures the relative value of the maximum autocorrelation peak, which produces high peaks for voiced segments. Clarity is defined as the relative depth of the minimum average magnitude difference function (AMDF) valley in the possible pitch range. This metric also leads to large values in the voiced segments. Prediction gain corresponds to the energy ratio between the original signal and the linear prediction (LP) residual signal.
It will also show higher values for voiced segments. Periodicity is a frequency domain feature based on the harmonic product spectrum (HPS). Perceptual spectral flux captures the quasi-stationary nature of voice activity, as the spectral properties of speech do not change as quickly as those of non-speech segments or noise. The details of these features are explained in Sadjadi and Hansen [42].

The visual features include geometric and optical flow features describing orofacial movements characteristic of speech articulation. We extract 26D visual features from the ROI shown in Figure 4. This vector is created as follows. First, we extract a 7D feature vector from the ROI (three optical flow features and four geometric features). The first two optical flow features are the variances of the optical flow in the vertical and horizontal directions within the ROI. The third optical flow feature corresponds to the summation of the variances in both directions, which provides the overall temporal dynamic of the frame. The four geometric features include the width, height, perimeter and area of the mouth. Based on the 7D feature vector, we compute three statistics over a short-term window: variance, zero crossing rate (ZCR) and speech periodic characteristic (SPC) (details are introduced in Tao et al. [15]). The short-term window is shifted one frame at a time. We set its size equal to nine frames (about 0.3 s) to balance the trade-off between resolution (which requires a short window) and robust estimation (which requires a long window). The three statistics estimated over the 7D vector result in a 21D feature vector. We append the summation of the optical flow variances and the first-order derivatives of the 4D geometric features to the 21D vector, since they can also provide dynamic information. The final visual feature is, therefore, a 26D vector. All the visual features are z-normalized at the utterance level.
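The short-term statistics used by the hand-crafted visual features can be sketched for a single feature trajectory as follows. This is only a sketch under stated assumptions: the window is taken as a trailing window that is truncated at the start of the sequence, the ZCR is computed on the mean-removed window, and the SPC statistic is omitted because its definition is given in Tao et al. [15] rather than here. The function names are hypothetical.

```python
from statistics import pvariance
from typing import List, Sequence

WINDOW = 9  # frames, about 0.3 s according to the text


def _window(x: Sequence[float], t: int, size: int) -> Sequence[float]:
    # Assumption: trailing window ending at frame t, truncated at the sequence start.
    return x[max(0, t - size + 1): t + 1]


def sliding_variance(x: Sequence[float], size: int = WINDOW) -> List[float]:
    """Variance of the trajectory inside each window, shifted one frame at a time."""
    return [pvariance(_window(x, t, size)) for t in range(len(x))]


def sliding_zcr(x: Sequence[float], size: int = WINDOW) -> List[float]:
    """Zero crossing rate of the mean-removed trajectory inside each window."""
    rates = []
    for t in range(len(x)):
        w = _window(x, t, size)
        mean = sum(w) / len(w)
        centered = [v - mean for v in w]
        crossings = sum(1 for a, b in zip(centered, centered[1:]) if a * b < 0)
        rates.append(crossings / max(len(w) - 1, 1))
    return rates


# Dimension bookkeeping from the text: three statistics over the 7D vector (21D),
# plus the summed optical flow variance (1D) and the derivatives of the
# four geometric features (4D).
BASE_DIM = 7
NUM_STATS = 3
FINAL_DIM = BASE_DIM * NUM_STATS + 1 + 4
```

Here `FINAL_DIM` evaluates to 26, matching the 26D visual feature vector described above.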
We concatenate 11 audio feature frames as audio input, and use one visual feature frame as visual input. The subnet processing the audio features has four layers, each of them implemented with 256 neurons. The first two layers are maxout layers and the last two layers are LSTM layers. The subnet processing the video features has four layers, each of them implemented with 64 neurons. The first two layers are maxout layers and the last two layers are LSTM layers. The hidden values from the top layers of the two subnets are concatenated and fed to the third subnet, which has four layers. The first two layers are LSTM layers with 512 neurons. The third layer is a maxout layer with 512 neurons. The last layer is the softmax layer for classification.

3) AV-SAD System using Autoencoder [24]: The third baseline corresponds to the AV-SAD approach proposed by Ariav et al. [24], which relies on an autoencoder (Section II describes this approach). We refer to this method as “Ariav-2018”. 13D MFCCs are used as audio features, and the optical flow over the frame is used as the visual feature. There are two stages in this approach. The first stage is the feature fusion stage, which relies on an autoencoder. We implement this approach by concatenating 11 audio feature frames and one visual feature frame. The concatenated features are used as input for a five-layer autoencoder. The middle layer has 64 neurons, and the other layers have 256 neurons. The hidden values extracted from the middle layer are used as bottleneck features. All the layers use maxout neurons. The second stage uses the bottleneck features as the input of a four-layer RNN. The first two layers are LSTM layers, with 256 neurons per layer. The third layer is a maxout layer with 256 neurons. The last layer is the softmax layer for classification.

C. Experimental Results

While the CRSS-4English-14 corpus has several recording devices, this study only considers two combinations.
The ideal channels use the data collected with the close-talking microphone and the HD camera, which have the best quality. The practical channels consider the video and audio recordings collected with the tablet. We expect that these sensors are good representations of the sensors used in practical speech-based interfaces. In addition, there are two types of environment as described in Section III-A: clean and noisy audio recordings. Altogether, this evaluation considers four testing conditions (ideal channel+clean; ideal channel+noise; practical channel+clean; practical channel+noise). All the models are trained with the ideal channels under clean recordings, so the other conditions create train-test mismatches. We are interested in evaluating the robustness of the approaches under these channel and/or environment mismatches.

TABLE I. Performance of the SAD systems for the ideal channels (close-talking microphone, HD camera). “Env” stands for testing environment (“C” is clean; “N” is noisy). “Modality” stands for modality used by the approach (“A” is A-SAD, “AV” is AV-SAD). “Approach” stands for corresponding framework (“Acc”: accuracy; “Pre”: precision rate; “Rec”: recall rate; “F”: F1-score).

| Env | Modality | Approach | Acc (%) | Pre (%) | Rec (%) | F (%) |
|---|---|---|---|---|---|---|
| C | A | Ryant-2013 | 90.3 | 96.6 | 90.5 | 93.4 |
| C | AV | Tao-2017 | 90.1 | 94.6 | 84.8 | 89.5 |
| C | AV | Ariav-2018 | 93.4 | 95.4 | 91.7 | 93.5 |
| C | AV | Proposed | 93.9 | 95.8 | 92.3 | 94.0 |
| N | A | Ryant-2013 | 94.8 | 96.4 | 93.8 | 95.0 |
| N | AV | Tao-2017 | 93.3 | 93.1 | 94.0 | 93.4 |
| N | AV | Ariav-2018 | 94.4 | 95.4 | 94.1 | 94.7 |
| N | AV | Proposed | 95.3 | 96.2 | 95.2 | 95.7 |

TABLE II. Performance of the SAD systems for the practical channels (microphone and camera from the tablet). “Env” stands for testing environment (“C” is clean; “N” is noisy). “Modality” stands for modality used by the approach (“A” is A-SAD, “AV” is AV-SAD). “Approach” stands for corresponding framework (“Acc”: accuracy; “Pre”: precision rate; “Rec”: recall rate; “F”: F1-score).

| Env | Modality | Approach | Acc (%) | Pre (%) | Rec (%) | F (%) |
|---|---|---|---|---|---|---|
| C | A | Ryant-2013 | 92.7 | 94.3 | 91.6 | 92.9 |
| C | AV | Tao-2017 | 90.0 | 91.9 | 87.3 | 89.4 |
| C | AV | Ariav-2018 | 92.8 | 95.2 | 90.8 | 92.9 |
| C | AV | Proposed | 93.4 | 95.4 | 92.0 | 93.7 |
| N | A | Ryant-2013 | 90.8 | 90.6 | 92.5 | 91.5 |
| N | AV | Tao-2017 | 83.3 | 77.5 | 96.7 | 86.0 |
| N | AV | Ariav-2018 | 91.2 | 92.9 | 90.6 | 91.7 |
| N | AV | Proposed | 92.1 | 92.9 | 92.6 | 92.7 |

The first four rows of Table I show the performance for the ideal channels under clean audio recordings. The proposed approach outperforms the baseline approaches (“Ryant-2013” by 0.6%; “Tao-2017” by 4.5%; “Ariav-2018” by 0.5%). The difference between our framework and “Tao-2017” is statistically significant (p-value < 0.05). “Tao-2017” used hand-crafted features. Our end-to-end BRNN system achieves better performance, which demonstrates the benefits of learning the features from the raw data. The proposed approach performs slightly better than the baselines “Ryant-2013” and “Ariav-2018”, although the differences are not statistically significant.

The last four rows of Table I show the performance under noisy audio recordings. The proposed approach outperforms the baselines (“Ryant-2013” by 0.7%; “Tao-2017” by 2.3%; “Ariav-2018” by 1.0%). The differences are statistically significant (p-value < 0.05) when our approach is compared with “Tao-2017” and “Ariav-2018”. The classification improvement over the “Ariav-2018” system demonstrates that the BRNN framework combines the modalities better than the autoencoder framework, especially in the presence of noise.
The proposed BRNN structure jointly learns how to extract the features and fuse the modalities, improving the temporal modeling of the system.

Table I shows that the system tested with ideal channels has better performance under noisy conditions than under clean conditions. This unintuitive result is due to two reasons. First, the signal-to-noise ratios (SNRs) under noisy and clean conditions are very similar for the ideal channels since the microphone is close to the subject's mouth and far from the audio speaker playing the noise. Figure 5 shows the distribution of the predicted SNR, using the NIST Speech SNR Toolkit [59]. Figure 5(a) shows an important overlap between both conditions. Second, we only have read speech in the noisy section. In addition to read speech, the clean section also has spontaneous speech, which is a more difficult task for SAD.

Fig. 5. Distributions of the SNR predictions for the ideal and practical channels: (a) ideal channels; (b) practical channels. The SNR predictions are estimated with the NIST Speech SNR Toolkit [59] (CRSS-4English-14 corpus). For the noisy audio recordings, the microphone in the tablet was closer to the audio speaker playing the noise, so the microphone of the practical channels is more affected by the noise.

Table II presents the results for the practical channels, which show that our approach also achieves better performance than the baseline methods across conditions. For clean audio recordings, the proposed approach significantly outperforms all the baselines (“Ryant-2013” by 0.8%; “Tao-2017” by 4.3%; “Ariav-2018” by 0.8%). For noisy audio recordings, we observe that the performance drops compared to the results obtained under clean recordings. The microphone of the tablet is closer to the audio speaker playing the noise, so the SNR is lower (Fig. 5(b)).
The proposed approach maintains a 92.7% F1-score, outperforming all the baseline frameworks (“Ryant-2013” by 1.2%; “Tao-2017” by 6.7%; “Ariav-2018” by 1.0%). The differences are statistically significant for all the baselines. This result shows that the proposed end-to-end BRNN framework can extract audiovisual feature representations that are robust against noisy audio recordings.

D. BRNN Implemented with Different Acoustic Features

We also re-implement the proposed approach with alternative acoustic features to demonstrate the benefits of using Mel-filterbank features. The first set of acoustic features considered in this section is the spectrogram without the Mel filters. We extract 320D features using a Tukey filter with uniform bins between 0 and 8 kHz. The second set of acoustic features corresponds to the 5D hand-crafted acoustic features proposed by Sadjadi and Hansen [42], which we describe in Section V-B2. In both cases, we concatenate 10 previous frames to the current frame to create a contextual window, following the approach used for the Mel-filterbank features. For the spectrogram, the A-RNN subnet has four layers, each of them implemented with 4,096 neurons. For the 5D hand-crafted features, the A-RNN subnet has four layers, each of them implemented with 256 neurons. The configuration for the rest of the framework (i.e., the V-RNN and AV-RNN subnets) is consistent with the proposed approach. The evaluation only considers two conditions: ideal channels with clean audio recordings, and practical channels with noisy audio recordings. These two conditions represent the easiest and hardest settings considered in this study, respectively.

TABLE III. Performance of the BRNN framework implemented with different acoustic features. “CH” stands for channel. “Env” stands for testing environment (“C” is clean; “N” is noisy). “Feature” stands for acoustic feature used in the evaluation (“Acc”: accuracy; “Pre”: precision rate; “Rec”: recall rate; “F”: F1-score).

| CH | Env | Feature | Acc (%) | Pre (%) | Rec (%) | F (%) |
|---|---|---|---|---|---|---|
| Ideal | C | Mel-filterbank | 93.8 | 95.8 | 92.3 | 94.0 |
| Ideal | C | Spectrogram | 93.4 | 94.8 | 93.1 | 93.9 |
| Ideal | C | Hand-crafted [42] | 92.2 | 94.0 | 90.4 | 92.2 |
| Practical | N | Mel-filterbank | 92.1 | 92.9 | 92.6 | 92.7 |
| Practical | N | Spectrogram | 76.8 | 71.3 | 97.4 | 82.2 |
| Practical | N | Hand-crafted [42] | 66.9 | 64.3 | 88.1 | 74.3 |

Table III presents the results. For the ideal channels under clean audio recordings, using a feature representation learnt from the Mel-filterbank is slightly better than using a representation learnt from the spectrogram. Both of these feature representations lead to significantly better performance than the system trained with hand-crafted features. Learning flexible feature representations from the raw data leads to better performance than using hand-crafted features, as they are not constrained by pre-defined rules or assumptions. For the practical channels under noisy audio recordings, the model trained with hand-crafted features achieves the worst performance. The feature representation learnt from the Mel-filterbank significantly outperforms the representation learnt from the spectrogram by a large margin (10.5%). The feature representation learnt from the spectrogram is more sensitive to acoustic noise.

The experiments in this section show that learning feature representations from the Mel-filterbank leads to better performance across conditions, showing competitive results under clean and noisy speech.

E. Performance of Unimodal Systems

We also explore the performance of SAD systems trained with unimodal features to highlight the benefits of using audiovisual information. The experimental setup uses the V-RNN and A-RNN modules of the BRNN framework (Fig. 3(a)).
For the audio-based system, we use the pre-trained A-RNN model. The weights of this subnet are not modified. On top of the A-RNN, we implement the same structure used in the BRNN, consisting of two LSTM layers, one FC layer, and a softmax layer (Fig. 3(a)). We train these four layers from scratch, using the same training scheme used to train the BRNN network (i.e., dropout, ADAM, early stopping). The visual-based system is trained using the same strategy, starting with the V-RNN subnet. Similar to Section V-D, the evaluation only considers the ideal channels with clean audio recordings, and practical channels with noisy audio recordings.

TABLE IV. Performance for unimodal SAD systems and the bimodal SAD system. “CH” stands for channel. “Env” stands for testing environment (“C” is clean; “N” is noisy). “Modality” stands for modality used by the approach (“Acc”: accuracy; “Pre”: precision rate; “Rec”: recall rate; “F”: F1-score).

| CH | Env | Modality | Acc (%) | Pre (%) | Rec (%) | F (%) |
|---|---|---|---|---|---|---|
| Ideal | C | Bimodal | 93.8 | 95.8 | 92.3 | 94.0 |
| Ideal | C | Audio | 92.7 | 94.5 | 91.4 | 92.8 |
| Ideal | C | Video | 60.0 | 65.3 | 50.9 | 57.2 |
| Practical | N | Bimodal | 92.1 | 92.9 | 92.6 | 92.7 |
| Practical | N | Audio | 90.3 | 89.2 | 93.9 | 91.5 |
| Practical | N | Video | 65.5 | 69.3 | 68.5 | 68.9 |

Table IV shows the performance for the audio-based and visual-based systems. For comparison, we also include the proposed BRNN approach. For the ideal channels under clean audio recordings, the bimodal system outperforms the unimodal systems, showing the benefits of using audiovisual features. The result from the audio-based system is 35.6% (absolute) better than the result from the video-based system. This result is consistent with findings from previous studies [12], [15], [25]. In spite of the lower performance of the visual-based system, the addition of orofacial features leads to clear improvements in the BRNN system.
For the practical channels under noisy audio recordings, the bimodal system still achieves the best performance, outperforming the unimodal systems with statistically significant differences. The audio-based system achieves better results than the visual-based system. The performance of the visual-based system using noisy audio recordings is higher than the results obtained with clean audio recordings. This result is explained by two reasons: (1) the visual features are not greatly affected by the background acoustic noise, and (2) the data for noisy audio recordings does not contain spontaneous speech, as explained in Section V-C. If we include only read sentences recorded in both noisy and clean recordings, the performance of the visual-based system trained with the ideal channels under clean audio recordings is 69.2%. This result is slightly higher than the value reported in Table IV for the visual-based system under noisy audio recordings.

The comparison between bimodal and unimodal inputs shows the benefit of using bimodal features. It highlights that our proposed BRNN approach can achieve better performance than state-of-the-art unimodal SAD systems.

VI. CONCLUSION AND FUTURE WORK

This study proposed an end-to-end AV-SAD framework where the acoustic and visual features are directly learnt during the training process. The proposed approach relies on LSTM layers to capture temporal dependencies within and across modalities. This objective is achieved with three subnets. The first two subnets separately learn visual and acoustic representations that are discriminative for SAD tasks. The visual subnet uses CNNs to learn features directly from images of the orofacial area. The audio subnet extracts acoustic representations directly from Mel-filterbank features. The outputs of these subnets are concatenated and used as input of a third subnet, which models the temporal dependencies across modalities.
Instead of using BLSTMs, the proposed framework relies on unidirectional LSTMs, reducing the latency and, therefore, increasing the usability of the system in real applications. To the best of our knowledge, this is the first end-to-end AV-SAD system.

We evaluated the proposed approach on a subset of the CRSS-4English-14 corpus (105 speakers), which is a large audiovisual corpus. The proposed approach outperformed alternative state-of-the-art A-SAD and AV-SAD systems. We observed consistent improvements across conditions. The proposed end-to-end BRNN framework maintained good performance in the presence of different noise and channel conditions. The system also achieved better performance than an implementation of the BRNN system using audiovisual hand-crafted features. These results demonstrated the benefits of learning feature representations during the training process. This approach provides an appealing solution for practical applications.

There are several research directions to extend the proposed approach. This study only focused on acoustic noise. In the future, we will evaluate the framework in the presence of visual artifacts (e.g., blurred images, occlusions). Likewise, the proposed approach assumes that the audiovisual modalities are available. We are exploring alternative solutions to address missing information. Finally, we leave as future work learning acoustic representations with CNNs. This direction was not pursued in this study due to the high computational cost required to train the models.

ACKNOWLEDGMENT

This study was funded by the National Science Foundation (NSF) grants IIS-1718944 and IIS-1453781 (CAREER).

REFERENCES

[1] G. Hinton, L. Deng, D. Yu, G. Dahl, A. Mohamed, N. Jaitly, A. Senior, V. Vanhoucke, P. Nguyen, T. Sainath, and B.
Kingsbury, “Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups,” IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 82–97, November 2012.
[2] G. Liu, Q. Qian, Z. Wang, Q. Zhao, T. Wang, H. Li, J. Xue, S. Zhu, R. Jin, and T. Zhao, “The Opensesame NIST 2016 speaker recognition evaluation system,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 2854–2858.
[3] S. Parthasarathy and C. Busso, “Jointly predicting arousal, valence and dominance with multi-task learning,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 1103–1107.
[4] F. Tao, G. Liu, and Q. Zhao, “An ensemble framework of voice-based emotion recognition system for films and TV programs,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2018), Calgary, AB, Canada, April 2018, pp. 6209–6213.
[5] J. Pang, “Spectrum energy based voice activity detection,” in IEEE Annual Computing and Communication Workshop and Conference (CCWC 2017), Las Vegas, NV, USA, January 2017, pp. 1–5.
[6] M. Moattar and M. Homayounpour, “A simple but efficient real-time voice activity detection algorithm,” in European Signal Processing Conference (EUSIPCO 2009), Glasgow, Scotland, August 2009, pp. 2549–2553.
[7] G. Potamianos, E. Marcheret, Y. Mroueh, V. Goel, A. Koumbaroulis, A. Vartholomaios, and S. Thermos, “Audio and visual modality combination in speech processing applications,” in The Handbook of Multimodal-Multisensor Interfaces, volume 1, S. Oviatt, B. Schuller, P. Cohen, D. Sonntag, G. Potamianos, and A. Krüger, Eds. ACM Books, May 2017, vol. 1, pp. 489–543.
[8] F. Tao and C. Busso, “Gating neural network for large vocabulary audiovisual speech recognition,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 26, no. 7, pp. 1286–1298, July 2018.
[9] J. Keil, N. Müller, N. Ihssen, and N.
Weisz, “On the variability of the McGurk effect: audiovisual integration depends on prestimulus brain states,” Cerebral Cortex, vol. 22, no. 1, pp. 221–231, January 2012.
[10] K. Van Engen, Z. Xie, and B. Chandrasekaran, “Audiovisual sentence recognition not predicted by susceptibility to the McGurk effect,” Attention, Perception, & Psychophysics, vol. 79, no. 2, pp. 396–403, February 2017.
[11] T. Tran, S. Mariooryad, and C. Busso, “Audiovisual corpus to analyze whisper speech,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2013), Vancouver, BC, Canada, May 2013, pp. 8101–8105.
[12] F. Tao, J. Hansen, and C. Busso, “An unsupervised visual-only voice activity detection approach using temporal orofacial features,” in Interspeech 2015, Dresden, Germany, September 2015, pp. 2302–2306.
[13] S. Takeuchi, T. Hashiba, S. Tamura, and S. Hayamizu, “Voice activity detection based on fusion of audio and visual information,” in International Conference on Audio-Visual Speech Processing (AVSP 2009), Norwich, United Kingdom, September 2009, pp. 151–154.
[14] I. Almajai and B. Milner, “Using audio-visual features for robust voice activity detection in clean and noisy speech,” in European Signal Processing Conference (EUSIPCO 2008), Lausanne, Switzerland, August 2008, pp. 1–5.
[15] F. Tao, J. L. Hansen, and C. Busso, “Improving boundary estimation in audiovisual speech activity detection using Bayesian information criterion,” in Interspeech 2016, San Francisco, CA, USA, September 2016, pp. 2130–2134.
[16] T. Petsatodis, A. Pnevmatikakis, and C. Boukis, “Voice activity detection using audio-visual information,” in International Conference on Digital Signal Processing (ICDSP 2009), Santorini, Greece, July 2009, pp. 1–5.
[17] G. Hinton, S. Osindero, and Y. Teh, “A fast learning algorithm for deep belief nets,” Neural Computation, vol. 18, no. 7, pp. 1527–1554, July 2006.
[18] Y.
Bengio, “Learning deep architectures for AI,” Foundations and Trends in Machine Learning, vol. 2, no. 1, pp. 1–127, January 2009.
[19] J. Ngiam, A. Khosla, M. Kim, J. Nam, H. Lee, and A. Ng, “Multimodal deep learning,” in International Conference on Machine Learning (ICML 2011), Bellevue, WA, USA, June-July 2011, pp. 689–696.
[20] F. Tao and C. Busso, “Aligning audiovisual features for audiovisual speech recognition,” in IEEE International Conference on Multimedia and Expo (ICME 2018), San Diego, CA, USA, July 2018.
[21] S. Petridis and M. Pantic, “Deep complementary bottleneck features for visual speech recognition,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2016), Shanghai, China, March 2016, pp. 2304–2308.
[22] S. Petridis, Z. Li, and M. Pantic, “End-to-end visual speech recognition with LSTMs,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2017), New Orleans, LA, USA, March 2017, pp. 2592–2596.
[23] J. Chung, A. Senior, O. Vinyals, and A. Zisserman, “Lip reading sentences in the wild,” in IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2017), Honolulu, HI, USA, July 2017, pp. 3444–3453.
[24] I. Ariav, D. Dov, and I. Cohen, “A deep architecture for audio-visual voice activity detection in the presence of transients,” Signal Processing, vol. 142, pp. 69–74, January 2018.
[25] F. Tao and C. Busso, “Bimodal recurrent neural network for audiovisual voice activity detection,” in Interspeech 2017, Stockholm, Sweden, August 2017, pp. 1938–1942.
[26] F. Tao and C. Busso, “Audiovisual speech activity detection with advanced long short-term memory,” in Interspeech 2018, Hyderabad, India, September 2018, pp. 1244–1248.
[27] K. Noda, Y. Yamaguchi, K. Nakadai, H. Okuno, and T. Ogata, “Lipreading using convolutional neural network,” in Interspeech 2014, Singapore, September 2014, pp. 1149–1153.
[28] A. Graves, S. Fernández, F. Gomez, and J.
Schmidhuber, “Connectionist temporal classification: labelling unsegmented sequence data with recurrent neural networks,” in International Conference on Machine Learning (ICML 2006), Pittsburgh, PA, USA, June 2006, pp. 369–376.
[29] Y. Zhang, W. Chan, and N. Jaitly, “Very deep convolutional networks for end-to-end speech recognition,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2017), New Orleans, LA, USA, March 2017, pp. 4845–4849.
[30] D. Amodei, S. Ananthanarayanan, R. Anubhai, J. Bai, E. Battenberg, C. Case, J. Casper, B. Catanzaro, Q. Cheng, G. Chen, J. Chen, J. Chen, Z. Chen, M. Chrzanowski, A. Coates, G. Diamos, K. Ding, N. Du, E. Elsen, J. Engel, W. Fang, L. Fan, C. Fougner, L. Gao, C. Gong, A. Hannun, T. Han, L. Johannes, B. Jiang, C. Ju, B. Jun, P. LeGresley, L. Lin, J. Liu, Y. Liu, W. Li, X. Li, D. Ma, S. Narang, A. Ng, S. Ozair, Y. Peng, R. Prenger, S. Qian, Z. Quan, J. Raiman, V. Rao, S. Satheesh, D. Seetapun, S. Sengupta, K. Srinet, A. Sriram, H. Tang, L. Tang, C. Wang, J. Wang, K. Wang, Y. Wang, Z. Wang, Z. Wang, S. Wu, L. Wei, B. Xiao, W. Xie, Y. Xie, D. Yogatama, B. Yuan, J. Zhan, and Z. Zhu, “Deep speech 2: End-to-end speech recognition in English and Mandarin,” in International Conference on Machine Learning (ICML 2016), New York, NY, USA, June 2016, pp. 173–182.
[31] A. Hannun, C. Case, J. Casper, B. Catanzaro, G. Diamos, E. Elsen, R. Prenger, S. Satheesh, S. Sengupta, A. Coates, and A. Ng, “Deep speech: Scaling up end-to-end speech recognition,” CoRR, vol. abs/1412.5567, December 2014.
[32] B. Rivet, L. Girin, and C. Jutten, “Visual voice activity detection as a help for speech source separation from convolutive mixtures,” Speech Communication, vol. 49, no. 7, pp. 667–677, July-August 2007.
[33] P. De Cuetos, C. Neti, and A.
Senior, “Audio-visual intent-to-speak detection for human-computer interaction,” in International Conference on Acoustics, Speech, and Signal Processing (ICASSP 2000), vol. 4, Istanbul, Turkey, March 2000, pp. 2373–2376.
[34] R. Navarathna, D. Dean, S. Sridharan, C. Fookes, and P. Lucey, “Visual voice activity detection using frontal versus profile views,” in International Conference on Digital Image Computing Techniques and Applications (DICTA 2011), Noosa, Queensland, Australia, December 2011, pp. 134–139.
[35] B. Joosten, E. Postma, and E. Krahmer, “Visual voice activity detection at different speeds,” in International Conference on Auditory-Visual Speech Processing (AVSP 2013), Annecy, France, August-September 2013, pp. 187–190.
[36] D. Sodoyer, B. Rivet, L. Girin, J.-L. Schwartz, and C. Jutten, “An analysis of visual speech information applied to voice activity detection,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2006), vol. 1, Toulouse, France, May 2006, pp. 601–604.
[37] A. Aubrey, Y. Hicks, and J. Chambers, “Visual voice activity detection with optical flow,” IET Image Processing, vol. 4, no. 6, pp. 463–472, December 2009.
[38] A. Aubrey, B. Rivet, Y. Hicks, L. Girin, J. Chambers, and C. Jutten, “Two novel visual voice activity detectors based on appearance models and retinal filtering,” in European Signal Processing Conference (EUSIPCO 2007), Poznań, Poland, September 2007, pp. 2409–2413.
[39] R. Ahmad, S. Raza, and H. Malik, “Unsupervised multimodal VAD using sequential hierarchy,” in IEEE Symposium on Computational Intelligence and Data Mining (CIDM 2013), Singapore, April 2013, pp. 174–177.
[40] P. Liu and Z. Wang, “Voice activity detection using visual information,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2004), vol. 1, Montreal, Quebec, Canada, May 2004, pp. 609–612.
[41] N. Ryant, M. Liberman, and J.
Yuan, “Speech activity detection on Youtube using deep neural networks,” in Interspeech 2013, Lyon, France, August 2013, pp. 728–731.
[42] S. Sadjadi and J. H. L. Hansen, “Unsupervised speech activity detection using voicing measures and perceptual spectral flux,” IEEE Signal Processing Letters, vol. 20, no. 3, pp. 197–200, March 2013.
[43] F. Tao and C. Busso, “Lipreading approach for isolated digits recognition under whisper and neutral speech,” in Interspeech 2014, Singapore, September 2014, pp. 1154–1158.
[44] C. Neti, G. Potamianos, J. Luettin, I. Matthews, H. Glotin, D. Vergyri, J. Sison, A. Mashari, and J. Zhou, “Audio-visual speech recognition,” Workshop 2000 Final Report, Technical Report 764, October 2000.
[45] Y. LeCun and Y. Bengio, “Convolutional networks for images, speech, and time series,” in The Handbook of Brain Theory and Neural Networks, M. Arbib, Ed. The MIT Press, July 1998, pp. 255–258.
[46] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “ImageNet classification with deep convolutional neural networks,” in Advances in Neural Information Processing Systems (NIPS 2012), vol. 25, Lake Tahoe, CA, USA, December 2012, pp. 1097–1105.
[47] T. Saitoh, Z. Zhou, G. Zhao, and M. Pietikäinen, “Concatenated frame image based CNN for visual speech recognition,” in Asian Conference on Computer Vision (ACCV) - Workshops, ser. Lecture Notes in Computer Science, C. Chen, J. Lu, and K. Ma, Eds. Taipei, Taiwan: Springer Berlin Heidelberg, November 2016, vol. 10117, pp. 277–289.
[48] T. Sercu, C. Puhrsch, B. Kingsbury, and Y. LeCun, “Very deep multilingual convolutional neural networks for LVCSR,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2016), Shanghai, China, March 2016, pp. 4955–4959.
[49] H. Soltau, G. Saon, and T.
Sainath, “Joint training of conv olutional and non-con volutional neural networks, ” in International Conference on Acoustics, Speech, and Signal Pr ocessing (ICASSP 2014) , Florence, Italy , May 2014, pp. 5572–5576. [50] R. W illiams and D. Zipser , “ A learning algorithm for continually running fully recurrent neural networks, ” Neural computation , vol. 1, no. 2, pp. 270–280, Summer 1989. [51] T . Mikolov , M. Karafi ´ at, L. Burget, J. ˇ Cernock ` y, and S. Khudanpur, “Recurrent neural network based language model, ” in Interspeech 2010 , Makuhari, Japan, September 2010, pp. 1045–1048. [52] A. Grav es, A. Mohamed, and G. Hinton, “Speech recognition with deep recurrent neural networks, ” in IEEE International Conference on Acoustics, Speec h and Signal Processing (ICASSP 2013) , V ancouver , BC, Canada, May 2013, pp. 6645–6649. [53] D. Bahdanau, J. Choro wski, D. Serdyuk, P . Brakel, and Y . Bengio, “End- to-end attention-based lar ge vocab ulary speech recognition, ” in IEEE International Conference on Acoustics, Speech and Signal Pr ocessing (ICASSP 2016) , Shanghai, China, March 2016, pp. 4945–4949. [54] A. Katsamanis, M. P . Black, P . Georgiou, L. Goldstein, and S. Narayanan, “SailAlign: Robust long speech-text alignment, ” in W orkshop on New T ools and Methods for V ery-Larg e Scale Phonetics Resear ch , Philadelphia, P A, USA, January 2011, pp. 1–4. [55] I. Goodfellow , J. Pouget-Abadie, M. Mirza, B. Xu, D. W arde-Farley , S. Ozair, A. Courville, and Y . Bengio, “Generative adversarial nets, ” in Advances in neur al information pr ocessing systems (NIPS 2014) , v ol. 27, Montreal, Canada, December 2014, pp. 2672–2680. [56] V . Nair and G. Hinton, “Rectified linear units improve restricted Boltz- mann machines, ” in International Confer ence on Machine Learning (ICML 2010) , Haifa, Israel, June 2010, pp. 807–814. [57] X. Xiong and F . D. 
la T orre, “Supervised descent method and its applications to face alignment, ” in IEEE Conference on Computer V ision and P attern Recognition (CVPR 2013) , Portland, OR, USA, June 2013, pp. 532–539. [58] D. Kingma and J. Ba, “ Adam: A method for stochastic optimization, ” in International Confer ence on Learning Repr esentations , San Diego, CA, USA, May 2015, pp. 1–13. [59] V . M. Stanford, “NIST speech SNR tool, ” https://www .nist.gov/information-technology-laboratory/iad/mig/nist- speech-signal-noise-ratio-measurements, December 2005.
