PEA265: Perceptual Assessment of Video Compression Artifacts


Authors: Liqun Lin, Shiqi Yu, Tiesong Zhao, Zhou Wang

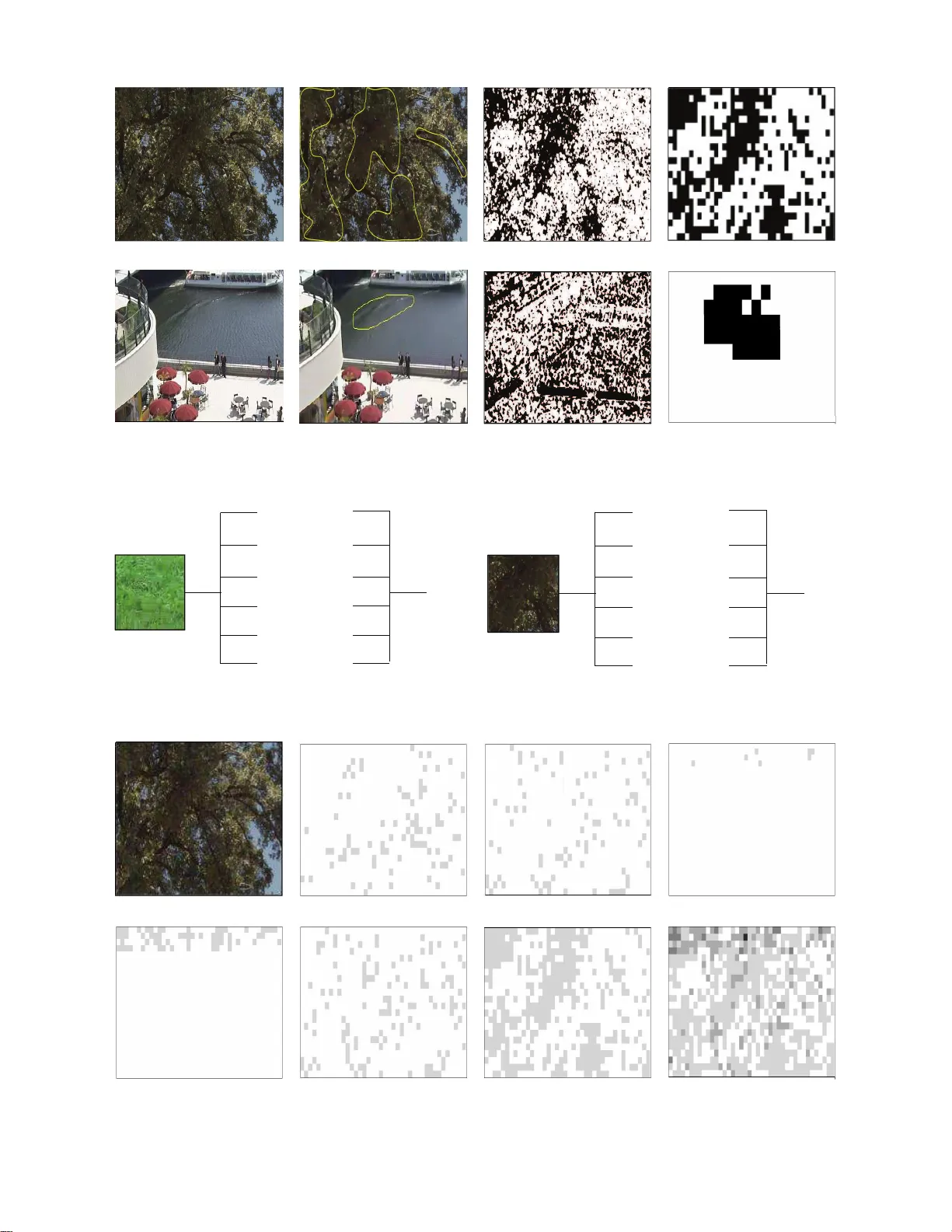

Liqun Lin, Shiqi Yu, Tiesong Zhao, Member, IEEE, and Zhou Wang, Fellow, IEEE

This research is supported by the National Natural Science Foundation of China (Grant 61671152). L. Lin, S. Yu and T. Zhao are with the College of Physics and Information Engineering, Fuzhou University, Fuzhou, Fujian 350116, China. e-mails: {linliqun, n171120077, t.zhao}@fzu.edu.cn. Corresponding author: Tiesong Zhao. Z. Wang is with the Department of Electrical and Computer Engineering, University of Waterloo, Waterloo, ON, Canada. e-mail: zhou.wang@uwaterloo.ca.

Abstract: The most widely used video encoders share a common hybrid coding framework that includes block-based motion estimation/compensation and block-based transform coding. Despite their high coding efficiency, the encoded videos often exhibit visually annoying artifacts, denoted as Perceivable Encoding Artifacts (PEAs), which significantly degrade the visual Quality-of-Experience (QoE) of end users. To monitor and improve visual QoE, it is crucial to develop subjective and objective measures that can identify and quantify various types of PEAs. In this work, we make the first attempt to build a large-scale subject-labelled database composed of H.265/HEVC compressed videos containing various PEAs. The database, namely the PEA265 database, includes 4 types of spatial PEAs (i.e., blurring, blocking, ringing and color bleeding) and 2 types of temporal PEAs (i.e., flickering and floating), each containing at least 60,000 image or video patches with positive and negative labels. To objectively identify these PEAs, we train Convolutional Neural Networks (CNNs) using the PEA265 database. It appears that the state-of-the-art ResNeXt is capable of identifying each type of PEA with high accuracy. Furthermore, we define PEA pattern and PEA intensity measures to quantify the PEA levels of a compressed video sequence. We believe that the PEA265 database and our findings will benefit the future development of video quality assessment methods and perceptually motivated video encoders.

Index Terms: Video coding, blocking, blurring, video compression, distortion, Perceivable Encoding Artifact (PEA), Convolutional Neural Network (CNN).

I. INTRODUCTION

The last decade has witnessed a boom in High Definition (HD)/Ultra HD (UHD) and 3D/360-degree videos due to the rapid development of video capturing, transmission and display technologies. According to the Cisco Visual Networking Index (VNI) [1], video content has taken over 2/3 of the bandwidth of current broadband and mobile networks, and will grow to 80%–90% in the foreseeable future. To meet such a demand, it is necessary both to improve network bandwidth and to maximize video quality under a limited bitrate or bandwidth constraint, where the latter is generally achieved by lossy video coding technologies. The widely used video coding schemes are lossy for two reasons. Firstly, Shannon's theorem sets the limit of lossless coding, which cannot fulfill the practical needs of video compression; secondly, the Human Vision System (HVS) [2] is not uniformly sensitive to visual signals at all frequencies, which allows us to suppress certain frequencies with negligible loss of perceptual quality. State-of-the-art video coding schemes, such as H.264 Advanced Video Coding (H.264/AVC) [3], H.265 High Efficiency Video Coding (H.265/HEVC) [4], Google VP8/VP9 [5], [6], and China's Audio-Video coding Standards (AVS/AVS2) [7], [8], adopt the conventional hybrid video coding structure.
This infrastructure, originating in the 1980s [9], consists of a group of standard procedures including intra-frame prediction and inter-frame motion estimation and compensation, followed by spatial transform, quantization and entropy coding. To facilitate these functions in videos of large sizes, the encoder further divides the frames into slices and coding units. Thereby, when the bitrate is not sufficiently high, the compressed video encompasses various types of information loss within and across blocks, slices and units, resulting in visually unnatural structural impairments or perceptual artifacts [10]. These Perceivable Encoding Artifacts (PEAs) greatly degrade the visual Quality-of-Experience (QoE) of users [11], [12].

The detection and classification of PEAs are challenging tasks. In video encoders, conventional quality metrics such as the Sum of Absolute Differences (SAD) [13], Sum of Squared Errors (SSE) [14], Peak Signal-to-Noise Ratio (PSNR) [15], and the Structural SIMilarity (SSIM) index [15] are weak indicators of PEAs. At the user end, the PEAs are highly visible but not properly measured. Recent developments have ushered in the 4K/8K era, and user-centric video coding and delivery have become ever more important [16]. Meanwhile, the advancement of computing and networking technologies has enabled in-depth investigations of PEA recognition and quantification. In [17], the classification of diversified PEAs has been elaborated. In [18], it is observed that these PEAs have significant impacts on the visual quality of H.264/AVC video. Specifically, 96% of the quality variance could be predicted by the intensities of three common PEAs: blurring, blocking and color bleeding. Until now, blocking and blurring artifacts have been extensively investigated; they are caused by spatially inconsistent and high-frequency signal losses, respectively.
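
To make the point about pixel-wise metrics concrete, here is a toy construction of our own (not taken from the paper or its data). A flat 8 × 16 region covering two hypothetical horizontally adjacent 8 × 8 blocks is distorted in two ways: a uniform +2 offset leaves the region flat, whereas offsets of +2 and −2 on the two blocks create an artificial step at the block boundary, the kind of false discontinuity that the paper calls blocking. Both distortions yield identical SAD, SSE and PSNR, so these metrics alone cannot tell them apart. The block sizes and offsets are illustrative assumptions; SSIM is not computed here.

```python
import math

ROWS, COLS = 8, 16      # two horizontally adjacent 8x8 blocks (illustrative sizes)
BOUNDARY = 8            # column index where the second block starts


def sad(ref, dist):
    """Sum of Absolute Differences."""
    return sum(abs(r - d) for rr, dr in zip(ref, dist) for r, d in zip(rr, dr))


def sse(ref, dist):
    """Sum of Squared Errors."""
    return sum((r - d) ** 2 for rr, dr in zip(ref, dist) for r, d in zip(rr, dr))


def psnr(ref, dist, peak=255):
    """PSNR in dB for 8-bit samples."""
    mse = sse(ref, dist) / (len(ref) * len(ref[0]))
    return math.inf if mse == 0 else 10 * math.log10(peak ** 2 / mse)


ref = [[128] * COLS for _ in range(ROWS)]     # flat mid-grey region

# A: both blocks shifted by +2, so the region stays flat.
uniform = [[v + 2 for v in row] for row in ref]

# B: left block +2, right block -2, so a 4-level step appears at the block boundary.
step = [[v + 2 if c < BOUNDARY else v - 2 for c, v in enumerate(row)] for row in ref]

for name, dist in (("uniform", uniform), ("step", step)):
    print(f"{name:8s} SAD={sad(ref, dist)}  SSE={sse(ref, dist)}  PSNR={psnr(ref, dist):.2f} dB")
```

Both lines print the same three numbers, even though only the second distortion introduces a visible edge where none existed.
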
In many hybrid encoders, de-blocking filters are introduced to prevent severe blocking artifacts, which may, however, introduce strong blurriness [19]. Other typical artifacts, such as ringing [20] and color bleeding [21], may be generated by errors in the high frequencies of luma and chroma signals, respectively. To address these issues, intricate schemes have been developed for PEA removal [22]–[24]. However, due to their high complexities, these algorithms are usually deployed at the post-processing stage rather than within video compression.

Fig. 1. An example of blurring artifact. (a) Reference frame. (b) Compressed frame with blurring artifact.

Meanwhile, temporal PEAs have also attracted significant attention. In [25], a simplified robust statistical model and the Huber statistical model are proposed for temporal artifact reduction. Gong et al. [26] used the hierarchical prediction structure to find plausible reasons for temporal artifacts. A metric for just-noticeable temporal artifacts and an efficient temporal PEA elimination algorithm for video coding were also proposed. In addition, Zeng et al. [17] presented an algorithm for detecting and locating floating artifacts. Despite these efforts, there is still a lack of subjective and objective approaches to systematic PEA recognition and analysis.

Recently, deep learning techniques [27], especially Convolutional Neural Networks (CNNs) [28], have demonstrated their promise in improving video coding performance [29]–[33]. This inspired us to introduce CNNs to the recognition of PEAs in hybrid encoding. In this work, we employ the state-of-the-art video encoder H.265/HEVC to develop a PEA database, namely the PEA265 database, for PEA recognition. The contributions of this work are as follows:

(1) A subject-labelled database of compressed videos with PEAs.
We select 6 typical PEAs based on [17]. We use H.265/HEVC to encode a group of standard sequences and recruit subjects to mark all 6 types of PEAs. Finally, we cut the marked sequences into image/video patches with positive and negative PEA labels. In total, there are 6 typical PEAs, and at least 60,000 positive or negative labels are given for each type of PEA.

(2) An objective PEA recognition approach based on CNNs. For each type of PEA, we construct and compare LeNet [34] and ResNeXt [35] to recognize PEA types. It appears that the state-of-the-art ResNeXt outperforms LeNet in terms of PEA recognition. We are able to achieve an accuracy of at least 80% for all PEA types.

(3) A PEA intensity measure for a compressed video sequence. By summarizing all PEA recognitions, we obtain an overall PEA intensity measure of a compressed video sequence, which helps characterize the subjective annoyance of PEAs in compressed video.

The rest of the paper is organized as follows. In Section II, we discuss diversified PEAs in H.265/HEVC and select 6 types of PEAs to develop our database. In Section III, we elaborate the details of our subjective database, including video sequence preparation, subjective testing and data processing. Section IV presents our deep learning-based PEA recognition and the overall PEA intensity measurement. Finally, Section V concludes the paper.

II. PEA CLASSIFICATION

In this section, we review the PEA classification in [17] and select typical PEAs to develop our subjective database. According to [17], PEAs are classified into spatial and temporal artifacts, where spatial artifacts include blurring, blocking, color bleeding, ringing and the basis pattern effect, and temporal artifacts include floating, jerkiness and flickering.
In this work, we select blurring, blocking, color bleeding and ringing among the spatial artifacts, and floating and flickering among the temporal artifacts, in the development of our database. The basis pattern effect and jerkiness artifacts are excluded because: 1) the basis pattern effect has a visual appearance and origin similar to those of the ringing effect; 2) jerkiness artifacts are caused by image capturing factors such as frame rate rather than by compression. We summarize the characteristics and plausible causes of the 6 typical types of PEAs as follows.

A. Spatial Artifacts

Block-based video coding schemes create various spatial artifacts due to block partitioning and quantization. The spatial artifacts, with different visual appearances, can be identified without temporal reference.

1) Blurring: Aiming at a higher compression ratio, the HEVC encoder quantizes the transformed residuals unequally. When the video signals are reconstructed, high-frequency energy may be severely lost, which may lead to visual blur. Perceptually, blurring usually appears as the loss of spatial detail or of the sharpness of edges or texture regions in an image. An example is shown in the marked rectangular region in Fig. 1(b), which displays the loss of spatial detail on the basketball court.

2) Blocking: The HEVC encoder is block-based, and all compression processes are performed within non-overlapping blocks. This often results in false discontinuities across block boundaries. The visual appearance of blocking may differ depending on the region of visual discontinuity. In Fig. 2(b), a blocking example on the horse's tail is highlighted in the marked rectangular region.

3) Ringing: Ringing is caused by the coarse quantization of high-frequency components.
When the high-frequency component of an oscillating structure has a quantization error, pseudo-structures may appear near strong (high-contrast) edges, manifesting as artificial wave-like or ripple structures, denoted as ringing. A ringing example is given in the marked rectangular region in Fig. 3(b).

4) Color bleeding: Color bleeding is caused by coarse quantization of the chromaticity information. It is related to the presence of strong chroma variations in the compressed images, leading to false color edges, and may result from inconsistent image rendering across the luminance and chromatic channels. A color bleeding example is provided in the marked rectangular region in Fig. 4(b), which exhibits chromatic distortion and additional inconsistent color spreading in the rendering result.

Fig. 2. An example of blocking artifact. (a) Reference frame. (b) Compressed frame with blocking artifact.

Fig. 3. An example of ringing artifact. (a) Reference frame. (b) Compressed frame with ringing artifact.

Fig. 4. An example of color bleeding artifact. (a) Reference frame. (b) Compressed frame with color bleeding artifact.

Fig. 5. An example of flickering artifact. (a) Reference frame. (b) Compressed frame with flickering artifact.

Fig. 6. An example of floating artifact. (a) Reference frame. (b) Compressed frame with floating artifact.
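
The loss of high-frequency energy that Section II-A gives as the origin of blurring and ringing can be reproduced on a single row of samples. The sketch below is our own illustration, not HEVC's integer transform or quantizer: it applies an orthonormal 8-point DCT-II to a sharp edge, zeroes the four highest-frequency coefficients as a crude stand-in for coarse quantization, and reconstructs. The edge size, sample values and number of kept coefficients are assumptions chosen for clarity.

```python
import math

N = 8  # one row of an 8-sample block (illustrative size)


def _scale(k):
    """Orthonormal DCT scaling factor for frequency index k."""
    return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)


def dct2(x):
    """Orthonormal 1-D DCT-II."""
    return [_scale(k) * sum(x[n] * math.cos(math.pi * (n + 0.5) * k / N) for n in range(N))
            for k in range(N)]


def idct2(c):
    """Inverse of dct2 (orthonormal DCT-III)."""
    return [sum(_scale(k) * c[k] * math.cos(math.pi * (n + 0.5) * k / N) for k in range(N))
            for n in range(N)]


edge = [16] * 4 + [235] * 4          # a sharp dark-to-bright edge (8-bit video range)
coeffs = dct2(edge)

KEPT = 4                              # hypothetical: keep only the 4 lowest frequencies
lowpass = [c if k < KEPT else 0.0 for k, c in enumerate(coeffs)]
recon = idct2(lowpass)                # not clipped to [0, 255], to expose the overshoot

print("original:", edge)
print("recon   :", [round(v, 1) for v in recon])
```

The reconstruction has intermediate values around the transition instead of a clean jump (blur), and it undershoots below 16 on the dark side and overshoots above 235 on the bright side, oscillating near the edge (ringing).
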
TABLE I
TESTING SEQUENCES

| No | Class | Sequence (Resolution) | Frames | Frame rate |
|---|---|---|---|---|
| 1 | A | Traffic (2560x1600) | 150 | 30fps |
| 2 | A | PeopleOnStreet (2560x1600) | 150 | 30fps |
| 3 | A | NebutaFestival (2560x1600) | 300 | 60fps |
| 4 | A | SteamLocomotive (2560x1600) | 300 | 60fps |
| 5 | B | Kimono (1920x1080) | 240 | 24fps |
| 6 | B | ParkScene (1920x1080) | 240 | 24fps |
| 7 | B | Cactus (1920x1080) | 500 | 50fps |
| 8 | B | BQTerrace (1920x1080) | 600 | 60fps |
| 9 | B | BasketballDrive (1920x1080) | 500 | 50fps |
| 10 | C | RaceHorses (832x480) | 300 | 30fps |
| 11 | C | BQMall (832x480) | 600 | 60fps |
| 12 | C | PartyScene (832x480) | 500 | 50fps |
| 13 | C | BasketballDrill (832x480) | 500 | 50fps |
| 14 | D | RaceHorses (416x240) | 300 | 30fps |
| 15 | D | BQSquare (416x240) | 600 | 60fps |
| 16 | D | BlowingBubbles (416x240) | 500 | 50fps |
| 17 | D | BasketballPass (416x240) | 500 | 50fps |
| 18 | E | FourPeople (1280x720) | 600 | 60fps |
| 19 | E | Johnny (1280x720) | 600 | 60fps |
| 20 | E | KristenAndSara (1280x720) | 600 | 60fps |
| 21 | F | BasketballDrillText (832x480) | 500 | 50fps |
| 22 | F | SlideEditing (1280x720) | 300 | 30fps |
| 23 | F | SlideShow (1280x720) | 500 | 20fps |

B. Temporal Artifacts

Temporal artifacts manifest as temporal information loss and can be identified during video playback.

1) Flickering: Flickering usually refers to frequent brightness or color changes along the time dimension. There are different kinds of flickering, including mosquito noise, fine-granularity flickering and coarse-granularity flickering. Mosquito noise is a high-frequency distortion and the embodiment of the coding effect in the time domain; it moves together with objects, like mosquitoes flying around. It may be caused by the mismatched prediction error of the ringing effect and motion compensation. The most likely cause of coarse-granularity flickering is luminance variation across Groups of Pictures (GOPs). Fine-granularity flickering may be produced by slow motion and the blocking effect. An example is given in the marked rectangular region in Fig. 5(b).
Frequent luminance changes on the surface of the water produce flickering artifacts.

2) Floating: Floating refers to the appearance of illusory movement in certain areas relative to their surrounding environment. Visually, these regions create a strong illusion, as if they were floating on top of the surrounding background. Most often, this occurs when a scene with a large textured area, such as water or trees, is captured with a slowly moving camera. Floating artifacts may be due to the skip mode in video coding, which simply copies a block from one frame to another without further updating the image details. Fig. 6(b) gives a floating example, in which the marked regions appear to float on top of the leaves.

III. PEA265 DATABASE

The development of the PEA265 database is composed of four steps: preparation of test video sequences, subjective PEA region identification, patch labeling, and formation of the PEA265 database.

A. Testing Video Sequences

The selection of testing sequences follows the Common Test Conditions (CTC) [36]. These standard test sequences, in YUV 4:2:0 format, are summarized in Table I. We employ the HEVC encoder [37] to compress the video sequences with four Quantization Parameter (Qp) values: 22, 27, 32 and 37. Four types of coding structures are covered: all intra, random access, low delay and low delay P. Thus, there are 320 encoded sequences in total. For consistency, the output bit depth is set to 8.

B. Subjective PEA Region Identification

In order to identify all PEAs, we ask subjects (i.e., testees) to label all video sequences. Our testing procedure follows the ITU-R BT.500 [38] recommendation and has two phases. In the pre-training phase, all subjects are told about our testing procedures and trained to identify PEAs.
In the formal-testing phase, all subjects are asked to watch the sequences and circle PEA regions. The test sequences are presented in random order. Breaks are scheduled during formal testing to avoid visual fatigue. Thirty subjects (14 males and 16 females) aged between 20 and 22 participated in the subjective experiment.

C. Patch Labeling

During the subjective test, the PEA regions are circled by subjects (possibly as ellipses) and saved in binary files, from which we derive positive and negative patches in rectangular or cuboid shapes.

1) Spatial artifacts: For spatial artifacts, we label the patches with a sliding window of 32 × 32 or 72 × 72. In a compressed video, if at least half of the pixels within the sliding window belong to the circled region, the patch is labeled as positive; otherwise, it is labeled as negative. Patches from the corresponding frame of the uncompressed video are randomly selected and categorized as negative, whether or not they are co-located with the circled region. The ratio between the numbers of the two types of negative patches is 1:2. The labeling process is illustrated in Fig. 7.

2) Temporal artifacts: Temporal PEAs appear in a group of successive video frames. When a testee pauses video playback and marks a temporal artifact region, 10 frames starting from the current frame are extracted. The video fragment is then further checked by a spatial sliding window of 32 × 32 or 72 × 72: if at least half of the pixels in this window are within the circled region, the corresponding cuboid is labeled as positive; otherwise, it is labeled as negative. As with spatial artifacts, negative temporal patches are also obtained from co-located regions in the uncompressed sequences. This process is illustrated in Fig. 8.

D. Summary of the Database

The PEA265 database covers 6 types of PEAs, including 4 types of spatial PEAs (blurring, blocking, ringing and color bleeding) and 2 types of temporal PEAs (flickering and floating). Each type of PEA contains at least 60,000 image or video patches with positive and negative labels. Patches of three PEA types (ringing, color bleeding and flickering) are of size 32 × 32, and those of the other three (blurring, blocking and floating) are of size 72 × 72. These patches are stored in binary format. The total data size is about 28 Gb. Each PEA patch is indexed by its video name, frame number, and coordinate position.

IV. CNN-BASED PEA RECOGNITION

In this section, we utilize the PEA265 database to train a deep-learning-based PEA recognition model. We also propose two metrics, PEA pattern and PEA intensity, which can be further employed in vision-based video processing and coding.

A. Objective Recognition with CNN

We choose two popular CNN architectures, LeNet [34] and ResNeXt [35], in this study. For each type of PEA, we randomly select 50,000 ground-truth samples from the PEA265 database. These samples are further split into training and testing sets at a ratio of 75:25.

TABLE II
TRAINING/TESTING RECOGNITION ACCURACY

| PEAs | LeNet-5 Training | LeNet-5 Testing | ResNeXt Training | ResNeXt Testing |
|---|---|---|---|---|
| Blurring | 0.6833 | 0.6768 | 0.9352 | 0.8176 |
| Blocking | 0.7154 | 0.7162 | 0.9514 | 0.9281 |
| Ringing | 0.6946 | 0.6917 | 0.8524 | 0.8356 |
| Color bleeding | 0.7172 | 0.7200 | 0.8706 | 0.8494 |
| Flickering | 0.6572 | 0.6496 | 0.8108 | 0.8019 |
| Floating | 0.7096 | 0.7087 | 0.8228 | 0.8051 |

TABLE III
ELAPSED TIME OF TRAINING (m: MINUTES, s: SECONDS)

| CNN | LeNet-5 | ResNeXt |
|---|---|---|
| Elapsed time | 1966m12s | 655m17s |

1) LeNet-5 network: The LeNet architecture is a classic CNN classifier. In our work, we use eight layers (including the input), with the structure given in Fig. 9.
The conv1 layer learns 20 convolution filters of size 5 × 5. We apply a ReLU activation function followed by 2 × 2 max-pooling in both the x and y directions with a stride of 2. The conv2 layer learns 50 convolution filters. Finally, a softmax classifier returns a list of probabilities, and the class label with the largest probability is chosen as the final classification of the network. Here, the input samples are of size 32 × 32 or 72 × 72 and are stored in binary format. In order to obtain a higher accuracy, we augment the training data by rotation, width scaling, height scaling, shear, zoom, horizontal flip and fill mode. After data augmentation, the accuracy improves by about 10%, reaching roughly 70%, as shown in Table II.

2) ResNeXt network: ResNeXt [35] is a variant of ResNet [39] whose building block is shown in Fig. 10. This block is very similar to the Inception module [40]; both follow the split-transform-merge paradigm. Our models are realized in the form of Fig. 10. In each stage, downsampling of conv3, conv4 and conv5 is performed by stride-2 convolutions in the 3 × 3 layer of the first block, as suggested in [39]. Stochastic gradient descent (SGD) is used with a mini-batch size of 256, a momentum of 0.9 and a weight decay of 0.0001. The learning rate is initialized to 0.1 and divided by 10 three times, following the schedule in [39]. The weight initialization of [39] is adopted, Batch Normalization (BN) [41] is applied right after each convolution, and ReLU is applied right after each BN.

By training a recognition model for each type of PEA with LeNet and ResNeXt, we aim to predict whether or not a given type of PEA exists in an image/video patch. Note that we do not use a single multi-class classifier, because PEAs are not mutually exclusive (i.e., different types of PEAs may coexist within one patch).
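
Some quick arithmetic makes the two architecture descriptions more concrete. This sketch is ours and relies on assumptions the text does not state: "valid" (unpadded) stride-1 convolutions, a 5 × 5 kernel for conv2, and no bias or BN parameters. It computes LeNet feature-map sizes for the 32 × 32 and 72 × 72 patch sizes, and counts the weights of one Fig. 10 ResNeXt block (32 paths of 256→4 1 × 1, 4→4 3 × 3, 4→256 1 × 1) against the 256→64→64→256 ResNet bottleneck that [35] uses as its reference point.

```python
"""Back-of-the-envelope arithmetic for the LeNet and ResNeXt descriptions.

Assumptions (not stated in the text): 'valid' stride-1 convolutions,
a 5x5 kernel for conv2, and bias/BN parameters ignored.
"""


def conv_out(size, kernel, stride=1):
    """Spatial side length after a 'valid' convolution or pooling window."""
    return (size - kernel) // stride + 1


def lenet_feature_sizes(inp):
    """Feature-map side lengths after conv1 -> pool1 -> conv2 -> pool2."""
    c1 = conv_out(inp, 5)        # conv1: 20 filters, 5x5
    p1 = conv_out(c1, 2, 2)      # 2x2 max-pooling, stride 2
    c2 = conv_out(p1, 5)         # conv2: 50 filters (5x5 kernel assumed)
    p2 = conv_out(c2, 2, 2)      # 2x2 max-pooling, stride 2
    return c1, p1, c2, p2


def resnext_block_weights(d_in=256, width=4, cardinality=32):
    """Weights of one Fig. 10 block: `cardinality` paths of 1x1 -> 3x3 -> 1x1."""
    per_path = d_in * width + width * width * 3 * 3 + width * d_in
    return cardinality * per_path


def resnet_bottleneck_weights(d_in=256, width=64):
    """Weights of a single-path 256 -> 64 -> 64 -> 256 ResNet bottleneck."""
    return d_in * width + width * width * 3 * 3 + width * d_in


for side in (32, 72):
    print(f"LeNet on {side}x{side}:", lenet_feature_sizes(side))
print("ResNeXt block weights:", resnext_block_weights())
print("ResNet bottleneck weights:", resnet_bottleneck_weights())
```

Under these assumptions, both blocks come to roughly 70k weights: the 1 × 1 layers squeeze each of the 32 paths down to 4 channels, which keeps the multi-branch block at about the cost of a single bottleneck.
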
Based on the above two CNN architectures, we individually train 6 PEA identification models, one per PEA type. Let TP, FP, TN and FN denote the numbers of true positives, false positives, true negatives and false negatives, respectively. The training and testing accuracy is defined as

$$\mathrm{Accuracy} = \frac{1}{2}\left(\frac{TP}{TP+FP} + \frac{TN}{FN+TN}\right).$$

The cross-entropy loss function is adopted for training.

Fig. 7. Positive/negative patch labeling for spatial PEAs. (a) Patch labeling in a compressed video frame. (b) Patch labeling in the corresponding reference video frame.

Fig. 8. Positive/negative patch labeling for temporal PEAs. (a) Patch labeling in compressed video frames. (b) Patch labeling in the corresponding reference video frames.

Fig. 9. The LeNet-5 structure (input 72 × 72; conv1, pool1, conv2, pool2, C5 layer with 120 units, F6 layer with 84 units, output).

Fig. 10. A block of ResNeXt with cardinality = 32: 32 parallel paths, each of the form (256, 1×1, 4) → (4, 3×3, 4) → (4, 1×1, 256), mapping a 256-d input to a 256-d output.

Table II lists the classification performance on our PEA datasets. In every individual experiment, the recognition performance of ResNeXt is significantly better than that of LeNet. For example, in Table II, the proposed blocking PEA recognition model yields a testing accuracy of 92.81%, about 21 percentage points higher than that of LeNet (71.62%). Similar results are observed for the other PEA recognition models. Compared with LeNet, ResNeXt has more layers and can learn more complex, high-dimensional image features. ResNeXt is constructed by repeating a building block that aggregates a set of transformations with the same topology, so only a few hyper-parameters need to be set in this homogeneous, multi-branch architecture. Meanwhile, its bottleneck layers reduce the number of features and thus the operational complexity of each layer. Therefore, the computational complexity is greatly reduced, while both the speed and the accuracy of the algorithm improve. The training time of LeNet and ResNeXt is summarized in Table III. ResNeXt trains much faster than LeNet because of its bottleneck layers. The training process requires a large number of iterations and is relatively time-consuming.

B. Comparison with Other Benchmarks

To better illustrate the advantages of the proposed recognition, we compare it with the floating PEA detection method in [17], in which low-level coding features were extracted to estimate the spatial distribution of floating. Fig. 11(a) and (e) are two original frames, and Fig. 11(b) and (f) are their compressed frames, coded by HEVC with Qp = 42, where the visual floating regions are marked manually. Fig. 11(c) is the floating map generated by [17], where black regions indicate floating artifacts, and Fig. 11(d) is the result of the proposed PEA recognition model. In this case, both methods perform reasonably well in floating detection. However, the algorithm in [17] requires content-dependent parameter adjustment and does not generalize consistently. For example, its result in Fig. 11(g) fails to detect the actual floating region. Comparing Fig. 11(g) with Fig. 11(h), the proposed floating PEA recognition algorithm performs clearly better. The floating detection accuracy is given in Table IV. In addition, we randomly select 3000 test images; the resulting comparison is listed in the last column of Table IV. The proposed floating PEA recognition model is on par with [17] for Fig. 11(b) and clearly outperforms it for Fig. 11(f) and for the 3000 randomly selected images.

TABLE IV
PERFORMANCE COMPARISON OF FLOATING PEA RECOGNITION ALGORITHMS

| Algorithms | Fig. 11(b) | Fig. 11(f) | Image3000 |
|---|---|---|---|
| Ref. [17] | 96.1% | 54.92% | 65.17% |
| Proposed | 95.85% | 88.23% | 85.46% |

C. The Overall PEA Intensity

By combining the 6 PEA recognition models, we obtain two hybrid PEA metrics: a local PEA metric, namely PEA pattern, and a holistic PEA metric, namely PEA intensity. A PEA pattern is represented as a 6-bin value, in which each bin holds a binary value representing the existence of blurring, blocking, ringing, color bleeding, flickering and floating artifacts, respectively. A bin is set to 1 if its corresponding PEA exists, and to 0 otherwise. To show the PEA pattern intuitively, we present two examples in Fig. 12. In Fig. 12(a), blurring, blocking and ringing artifacts exist in the patch, so its PEA pattern is 111000; in Fig. 12(b), a floating artifact exists in the patch, so its PEA pattern is 000001. This pattern serves as the feature vector of a video patch in terms of PEAs and can thus be further utilized in vision-based video processing.

In addition, we summarize the distributions of all types of PEAs in Fig. 13. It is observed that, within a video frame, the distributions of different PEAs differ from each other, and not all types of PEAs are observed simultaneously. Therefore, only a combination of PEAs, as in Fig. 13(h), shows the overall impact of PEAs on visual quality. To capture this overall impact, we introduce a new metric, PEA intensity, defined as the proportion of positive bins (i.e., bins with value 1) in the PEA pattern of a patch. The PEA patterns 111000 and 000111 have the same PEA intensity because both contain 3 positive bins.
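
The PEA pattern and PEA intensity reduce to a few lines of code. In this sketch, which is ours rather than the authors' implementation, the six per-type detectors are represented only by their boolean outputs (a hypothetical `{pea_type: bool}` dictionary; detector internals are not shown). The pattern follows the bin order given in the text, patch intensity is the proportion of 1-bins, and a sequence's intensity is the mean over its non-overlapping patches, as the text defines for whole sequences.

```python
"""Sketch of the PEA pattern and PEA intensity measures.

Assumption: each per-type detector is a hypothetical black box whose
True/False output for a patch is passed in as a dictionary.
"""
from statistics import mean

# Bin order as defined in the text.
PEA_TYPES = ("blurring", "blocking", "ringing", "color_bleeding", "flickering", "floating")


def pea_pattern(detections):
    """Map {pea_type: bool} to a 6-character pattern string such as '111000'."""
    return "".join("1" if detections.get(t, False) else "0" for t in PEA_TYPES)


def pea_intensity(pattern):
    """Proportion of positive bins in a PEA pattern."""
    return pattern.count("1") / len(pattern)


def sequence_intensity(patterns):
    """Average patch intensity over all non-overlapping patches of a sequence."""
    return mean(pea_intensity(p) for p in patterns)


patch_a = pea_pattern({"blurring": True, "blocking": True, "ringing": True})  # Fig. 12(a) case
patch_b = pea_pattern({"floating": True})                                     # Fig. 12(b) case
print(patch_a, pea_intensity(patch_a))
print(patch_b, pea_intensity(patch_b))
print(sequence_intensity([patch_a, patch_b, "000111"]))
```

Keeping the pattern as a string keeps the bin order visible and matches the notation used in the text; a bit mask or boolean array would work equally well.
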
For a video sequence, its PEA intensity is then defined as the average PEA intensity of all non-overlapping patches. In Fig. 14, the overall PEA intensities of all CTC sequences are measured and presented. Several conclusions can be drawn.

Firstly, the overall PEA intensity is, in general, positively correlated with the Qp value: for almost all types of PEAs and videos, the PEA intensity grows with a higher Qp. This fact highlights the importance of quantization and information loss in the generation mechanism of PEAs. As discussed before, spatial artifacts are attributed to the loss of high-frequency and chrominance signals and to inconsistent information loss across block boundaries, while temporal artifacts are possibly produced by inconsistent information loss between frames. Therefore, the fact that Qp influences PEA intensity is compatible with the above interpretations and also provides guidance for detailed explorations of the generation mechanism of PEAs.

Secondly, the PEA intensity is content-dependent, as it varies with video content. For example, the sequences SlideEditing (1280 × 720, No. 22) and SlideShow (1280 × 720, No. 23) have lower PEA intensities in terms of blocking, blurring and floating; on the other hand, more color bleeding, ringing and flickering artifacts are identified in them. The sequence Kimono (1920 × 1080, No. 5) has high intensities for almost all types of PEAs, while the sequence BQSquare (416 × 240, No. 15) has low intensities for almost all PEAs. This implies that video characteristics, including texture and motion, may affect the PEA intensity after compression. It may also provide useful guidance for content-aware video coding optimization.

Thirdly, the frequency of occurrence of PEAs differs by type.
In this database, the intensities of blocking, color bleeding and flickering are notably higher than those of the other PEAs, namely blurring, ringing and floating. Furthermore, different types of PEAs may affect visual quality differently: not all PEAs have the same impact on the human visual system (HVS), and perceived quality may be dominated by a subset of PEA types, as concluded in [18]. How PEA detections should be combined to best evaluate their impact on visual quality is left for future work. To further investigate the differences between spatial and temporal PEAs, we present the averaged PEA intensities of spatial and temporal artifacts in Fig. 15, where the aforementioned conclusions can also be observed.

V. CONCLUSION

We construct PEA265, a first-of-its-kind large-scale subject-labelled database of PEAs produced by H.265/HEVC video compression. The database contains six PEA types, four spatial (blurring, blocking, ringing and color bleeding) and two temporal (flickering and floating), each with at least 60,000 samples carrying positive or negative labels. Using the database, we train CNNs to recognize PEAs, and the results show that the state-of-the-art ResNeXt achieves high accuracy in PEA detection. Moreover, we define a PEA intensity measure to assess the overall severity of PEAs in compressed videos. This work will benefit the future development of video quality assessment algorithms. It can also be used to optimize hybrid video encoders for improved perceptual quality and to design perceptually motivated video encoding schemes.

Fig. 11. An example of floating PEA detection (panels (a) to (h)).

Fig. 12. The PEA pattern of image patches. (a) PEA pattern with spatial PEA(s); (b) PEA pattern with temporal PEA(s). Each pattern lists the 0/1 outputs of the Blocking-, Blurring-, Ringing-, Color Bleeding-, Flickering- and Floating-models.

Fig. 13. The individual and overall PEA distributions of a frame. (a) A compressed frame; (b) blocking artifact; (c) blurring artifact; (d) ringing artifact; (e) color bleeding artifact; (f) flickering artifact; (g) floating artifact; (h) combined artifacts.

Fig. 14. The individual PEA intensity for each type of PEA: (a) blocking, (b) blurring, (c) color bleeding, (d) ringing, (e) flickering and (f) floating intensity. Each panel plots intensity (0 to 1) against sequence index (1 to 23) for QP = 22, 27, 32 and 37.

Fig. 15. The spatial and temporal PEA intensities on average: (a) spatial PEA intensity; (b) temporal PEA intensity. Each panel plots intensity (0 to 1) against sequence index (1 to 23) for QP = 22, 27, 32 and 37.

REFERENCES

[1] Cisco visual networking index: Forecast and methodology, 2016-2021. [Online]. Available: https://www.cisco.com/c/en/us/solutions/collateral/service-provider/visual-networking-index-vni/complete-white-paper-c11-481360.html
[2] J. P. Allebach, “Human vision and image rendering: Is the story over, or is it just beginning,” in Proc. SPIE 3299, Human Vision and Electronic Imaging (HVEI) III, Jan. 1998, pp. 26–27.
[3] H.264: Advanced video coding for generic audiovisual services, ITU-T Rec., Mar. 2005.
[4] G. J. Sullivan, J. R. Ohm, and W. J. Han, “Overview of the high efficiency video coding (HEVC) standard,” IEEE Trans. Circuits Syst. Video Technol., vol. 22, no. 12, pp. 1649–1668, Dec. 2012.
[5] J. Bankoski and P. Wilkins, “Technical overview of VP8, an open source video codec for the web,” in Proc. IEEE International Conference on Multimedia and Expo (ICME), Jul. 2011, pp. 1–6.
[6] D. Mukherjee, J. Han, J. Bankoski, and R. Bultje, “The latest open-source video codec VP9 - An overview and preliminary results,” in Picture Coding Symposium (PCS), Feb. 2013, pp. 390–393.
[7] F. Liang, S. Ma, and W. Feng, “Overview of AVS video standard,” in IEEE International Conference on Multimedia and Expo (ICME), Jun. 2004, pp. 423–426.
[8] Z. He, L. Yu, X. Zheng, S. Ma, and Y. He, “Framework of AVS2-video coding,” in IEEE International Conference on Image Processing (ICIP), Sep. 2014, pp. 1515–1519.
[9] M. Yuen and H. R. Wu, “A survey of hybrid MC/DPCM/DCT video coding distortions,” Signal Process., vol. 70, no. 3, pp. 247–278, Oct. 1998.
[10] A. Leontaris, P. C. Cosman, and A. R. Reibman, “Quality evaluation of motion-compensated edge artifacts in compressed video,” IEEE Trans. Image Process., vol. 16, no. 4, pp. 943–956, Mar. 2007.
[11] A. Eden, “No-reference image quality analysis for compressed video sequences,” IEEE Trans. Broadcast., vol. 54, no. 3, pp. 691–697, Sep. 2008.
[12] K. Zhu, C. Li, V. Asari, and D. Saupe, “No-reference video quality assessment based on artifact measurement and statistical analysis,” IEEE Trans. Circuits Syst. Video Technol., vol. 25, no. 4, pp. 533–546, Apr. 2015.
[13] K. H. Dang, D. Le, and N. T. Dzung, “Efficient determination of disparity map from stereo images with modified sum of absolute differences (SAD) algorithm,” in Proc. International Conference on Advanced Technologies for Communications (ATC), Jan. 2014, pp. 657–660.
[14] H. Kibeya, N. Bahri, M. Ayed, and N. Masmoudi, “SAD and SSE implementation for HEVC encoder on DSP TMS320C6678,” in Proc. International Image Processing, Applications and Systems (IPAS), Mar. 2017, pp. 1–6.
[15] A. Horé and D. Ziou, “Image quality metrics: PSNR vs. SSIM,” in Proc. International Conference on Pattern Recognition (ICPR), Oct. 2010, pp. 2366–2369.
[16] S. Sakaida, Y. Sugito, H. Sakate, and A. Minezawa, “Video coding technology for 8k/4k era,” Inst. Electron. Inf. Commun. Eng., vol. 98, pp. 218–224, Mar. 2015.
[17] K. Zeng, T. S. Zhao, A. Rehman, and Z. Wang, “Characterizing perceptual artifacts in compressed video streams,” IS&T/SPIE Human Vision and Electronic Imaging XIX (invited paper), vol. 9014, no. 10, pp. 2458–2462, Feb. 2014.
[18] J. Xia, Y. Shi, K. Teunissen, and I. Heynderickx, “Perceivable artifacts in compressed video and their relation to video quality,” Signal Process.-Image., vol. 24, no. 7, pp. 548–556, Aug. 2009.
[19] P. List, A. Joch, J. Lainema, G. Bjontegaard, and M. Karczewicz, “Adaptive deblocking filter,” IEEE Trans. Circuits Syst. Video Technol., vol. 13, no. 7, pp. 614–619, Jul. 2013.
[20] S. B. Yoo, K. Choi, and J. B. Ra, “Blind post-processing for ringing and mosquito artifact reduction in coded videos,” IEEE Trans. Circuits Syst. Video Technol., vol. 24, no. 5, pp. 721–732, May 2014.
[21] F. X. Coudoux, M. Gazalet, and P. Corlay, “Reduction of color bleeding for 4:1:1 compressed video,” IEEE Trans. Broadcast., vol. 51, no. 4, pp. 538–542, Dec. 2005.
[22] S. Yang, Y. H. Hu, T. Q. Nguyen, and D. L. Tull, “Maximum-likelihood parameter estimation for image ringing-artifact removal,” IEEE Trans. Circuits Syst. Video Technol., vol. 11, no. 8, pp. 963–973, Sep. 2001.
[23] S. H. Oguz, Y. H. Hu, and T. Q. Nguyen, “Image coding ringing artifact reduction using morphological post-filtering,” in Proc. IEEE Second Workshop on Multimedia Signal Processing (MMSP), Jan. 1999, pp. 628–633.
[24] H. S. Kong, A. Vetro, and H. Sun, “Edge map guided adaptive postfilter for blocking and ringing artifacts removal,” in International Symposium on Circuits and Systems (ISCAS), Sep. 2004, pp. 929–932.
[25] J. X. Yang and H. R. Wu, “Robust filtering technique for reduction of temporal fluctuation in H.264 video sequences,” IEEE Trans. Circuits Syst. Video Technol., vol. 20, no. 3, pp. 458–462, Mar. 2010.
[26] Y. C. Gong, S. Wan, K. Yang, F. Z. Yang, and L. Cui, “An efficient algorithm to eliminate temporal pumping artifact in video coding with hierarchical prediction structure,” J. Vis. Commun. Image R., vol. 25, no. 7, pp. 1528–1542, Oct. 2014.
[27] Y. Lecun, Y. Bengio, and G. Hinton, “Deep learning,” Nature, vol. 521, no. 7553, pp. 436–436, May 2015.
[28] D. C. Ciresan, U. Meier, L. M. Gambardella, and J. Schmidhuber, “Convolutional neural network committees for handwritten character classification,” in Proc. International Conference on Document Analysis and Recognition (ICDAR), Nov. 2011, pp. 1135–1139.
[29] C. Dong, Y. Deng, C. L. Chang, and X. Tang, “Compression artifacts reduction by a deep convolutional network,” in Proc. IEEE International Conference on Computer Vision (ICCV), Feb. 2016, pp. 576–584.
[30] C. Dong, C. L. Chen, K. He, and X. Tang, “Learning a deep convolutional network for image super-resolution,” in Proc. European Conference on Computer Vision (ECCV), Jan. 2014, pp. 184–199.
[31] Y. Y. Dai, D. Liu, and F. Wu, “A convolutional neural network approach for post-processing in HEVC intra coding,” in International Conference on Multimedia Modeling (MMM), Jan. 2017, pp. 28–39.
[32] W. S. Park and M. Kim, “CNN-based in-loop filtering for coding efficiency improvement,” in Proc. Image, Video, and Multidimensional Signal Processing (IVMSP), Aug. 2016, pp. 1–5.
[33] N. Yan, D. Liu, H. Li, and F. Wu, “A convolutional neural network approach for half-pel interpolation in video coding,” in IEEE International Symposium on Circuits and Systems (ISCAS), Mar. 2017, pp. 1–4.
[34] Z. H. Zhao, S. P. Yang, and M. Qiang, “License plate character recognition based on convolutional neural network LeNet-5,” J. Syst. Simul., vol. 22, no. 3, pp. 638–641, Mar. 2010.
[35] S. Xie, R. Girshick, P. Dollar, Z. Tu, and K. He, “Aggregated residual transformations for deep neural networks,” in Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Nov. 2017, pp. 5987–5995.
[36] F. Bossen, “HM 10 common test conditions and software reference configurations,” in Proc. Joint Collaborative Team on Video Coding Meeting (JCT-VC), Jan. 2013, pp. 1–3.
[37] (2016) Fraunhofer Institute for Telecommunications, Heinrich Hertz Institute. High Efficiency Video Coding (HEVC) reference software HM. [Online]. Available: https://hevc.hhi.fraunhofer.de/
[38] Methodology for the subjective assessment of the quality of television pictures, Recommendation ITU-R BT.500-13, Geneva, Switzerland: International Telecommunication Union, 2012.
[39] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Aug. 2016, pp. 770–778.
[40] C. Szegedy, V. Vanhoucke, S. Ioffe, J. Shlens, and Z. Wojna, “Rethinking the inception architecture for computer vision,” in Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Dec. 2016, pp. 2818–2826.
[41] S. Ioffe and C. Szegedy, “Batch normalization: Accelerating deep network training by reducing internal covariate shift,” in Proc. International Conference on Machine Learning (ICML), Mar. 2015, pp. 1–10.
