Time-Domain Audio Source Separation Based on Wave-U-Net Combined with Discrete Wavelet Transform

We propose a time-domain audio source separation method using down-sampling (DS) and up-sampling (US) layers based on a discrete wavelet transform (DWT). The proposed method is based on one of the state-of-the-art deep neural networks, Wave-U-Net, wh…

Authors: *제공된 정보에 저자 명단이 포함되어 있지 않습니다.*

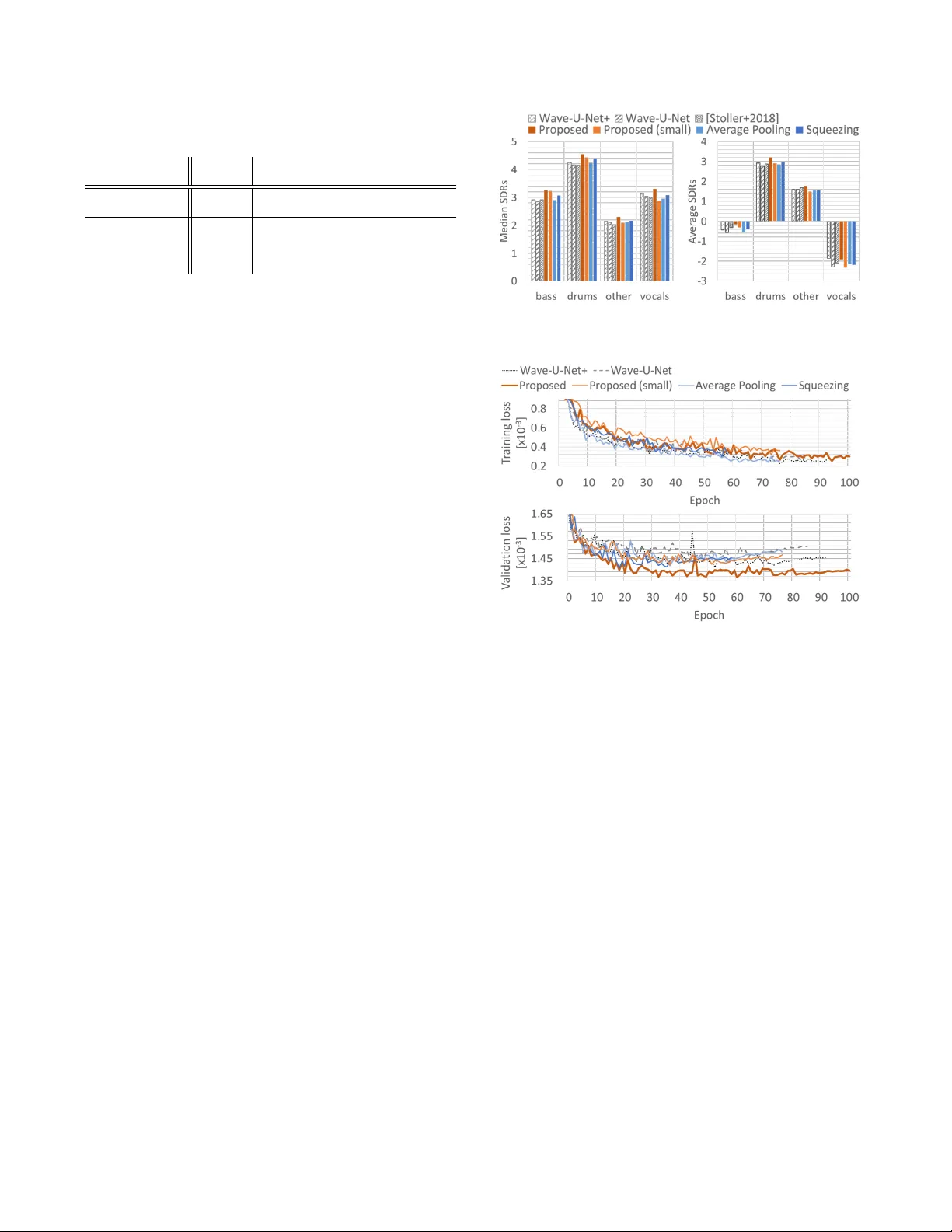

TIME-DOMAIN A UDIO SOURCE SEP ARA TION B ASED ON W A VE-U-NET COMBINED WITH DISCRETE W A VELET TRANSFORM T omohiko Nakamur a, Hir oshi Saruwatari Graduate School of Information Science and T echnology , The Univ ersity of T okyo 7-3-1 Hongo, Bunkyo-ku, T okyo 113-8656, Japan ABSTRA CT W e propose a time-domain audio source separation method using down-sampling (DS) and up-sampling (US) layers based on a dis- crete wa velet transform (D WT). The proposed method is based on one of the state-of-the-art deep neural networks, W ave-U-Net, which successiv ely down-samples and up-samples feature maps. W e find that this architecture resembles that of multiresolution analysis, and rev eal that the DS layers of W ave-U-Net cause aliasing and may dis- card information useful for the separation. Although the effects of these problems may be reduced by training, to achie ve a more reli- able source separation method, we should design DS layers capable of overcoming the problems. W ith this belief, focusing on the fact that the DWT has an anti-aliasing filter and the perfect reconstruc- tion property , we design the proposed layers. Experiments on mu- sic source separation sho w the ef ficacy of the proposed method and the importance of simultaneously considering the anti-aliasing filters and the perfect reconstruction property . Index T erms — Time-Domain Audio Source Separation, W ave- U-Net, Discrete W avelet T ransform, Deep Neural Networks 1. INTR ODUCTION Deep neural netw orks (DNNs) ha ve shown promising results in su- pervised audio source separation [1]. Most DNN-based methods per- form source separation in the magnitude (or power) spectrogram do- main [2–5]. Howe ver , this approach has several drawbacks. First, the approach frequently uses the phase of the observed complex spectrogram as that of each separated spectrogram, but the result- ing spectrogram may be inconsistent, i.e., no corresponding time- domain signal is guaranteed to exist when we use redundant time- frequency transform [6]. Second, although we can use phase re- construction algorithms such as the Griffin–Lim algorithm [7] to ob- tain consistent separated spectrograms, these algorithms increase the computation time. Finally , ignoring the phase in the separation pro- cess may lead to suboptimal solutions. T o ov ercome these draw- backs, an end-to-end approach has been activ ely explored [8–14]. One of state-of-the-art end-to-end DNNs is W ave-U-Net [8], which directly separates a time-domain signal into source signals. W ave-U-Net is based on the U-net architecture [15] and has an encoder–decoder architecture. The encoder successi vely do wn- samples feature maps with down-sampling (DS) blocks to halve the time resolution of the feature maps, and the decoder up-samples the feature maps with up-sampling (US) blocks to double the time resolution of the feature maps. This architecture resembles that of MultiResolution Analysis (MRA) [16] (see Fig. 1 (a)). It repeatedly This work was supported by JSPS KAKENHI Grant Number JP19H01116. decomposes a signal to subband signals with half the time resolution using a discrete wav elet transform (DWT), and the input signal can be perfectly reconstructed from the subband signals with an in verse D WT . Roughly speaking, the DS and US blocks correspond to the D WT and in verse D WT , respectiv ely . This analogy lets us notice the underlying problems of W ave-U-Net. In the DS blocks, DS is implemented as the decimation layer without low-pass filters, which causes aliasing. Owing to this phe- nomenon, for example, shifting an input signal by one sample may change the output of the network markedly and degrade separation performance. One way to reduce the aliasing artifacts is to insert anti-aliasing filters before DS as in [17, 18]. Although this method can be used for our case, another problem remains unsolved. The decimation layer with or without the anti-aliasing filters is apparently not inv ertible and discards part of the feature maps e ven though the discarded components may contain information useful for source separation. Whether the layer can propagate such information to the follo wing layers strongly depends on training. T o achiev e a more reliable source separation method, rather than expecting the model to be trained as we wish, it would be better to design a DS block that can simultaneously ov ercome the abov e problems. W ith this in mind, we propose novel DS and US layers using the D WT and in verse DWT , and develop an extension of W av e-U-Net combined with the proposed layers (see Fig. 1 (b)). Since a DWT can be seen as a filterbank including a low-pass filter and satisfies the perfect reconstruction property , the proposed layers ensure that the DS blocks ha ve anti-aliasing filters and preserv e the entire infor - mation of the feature maps. Note that commonly used DS layers, such as max pooling, average pooling and con volution layers with strides, lacks either anti-aliasing filters or the perfect reconstruction property , and our proposed layers can be useful for many existing DNNs. The contributions of this paper are summarized as follo ws: 1) W e rev eal that the conv entional DS layers lack either anti- aliasing filters or the perfect reconstruction property through the analogy between the architecture in W ave-U-Net and MRA. 2) W e propose DS and US layers using the DWT and in verse D WT and develop an extension of W ave-U-Net by incorporating the layers. 3) Through e xperiments on music source separation, we sho w the efficac y of the proposed model and the importance of simultaneously considering the anti-aliasing filters and the perfect reconstruction property . 2. RELA TED WORKS The aliasing problem has been inv estigated in both audio process- ing [19] and image processing [20]. T o reduce aliasing artifacts, methods of introducing anti-aliasing filters hav e been de veloped for image processing [17, 18, 21]. In [21], a wa velet-based pooling layer (a) Multiresolution analysis and synthesis with L levels. (b) Proposed model with L levels and N sources. Fig. 1 : Schematic illustration of multiresolution analysis and the proposed model. The blue and orange rectangles represent do wn-sampling and up-sampling, respecti vely . h and g ( ˜ h and ˜ g ) denote high-pass and lo w-pass (reconstruction) filters corresponding to the DWT (inv erse D WT). “Concat” denotes the concatenation of two inputs along the channel axis. The dashed lines stand for skipped connections. has been presented. The pooling layer outputs only the second-order wa velet subbands of a feature map; therefore, it lacks the perfect reconstruction property . In contrast, our proposed layers not only include anti-aliasing filters but also satisfy the perfect reconstruction property . T o our kno wledge, this study is the first to address this lack of the perfect reconstruction property in DNNs. As a component of normalizing flow models for image genera- tion, the squeezing operation has been de veloped to halve the spatial resolution of the feature map without changing the number of ele- ments [22]. The time-domain adaptation of this operation simply splits the feature map into the odd-sample and e ven-sample compo- nents and concatenates them along the channel axis, which obviously lacks anti-aliasing filters. Indeed, as shown in section 3.2, the oper- ation is part of the process for our DS layer . This study is the first to rev eal the relationship between the operation and the DWT . 3. PR OPOSED MODEL In this section we describe the motiv ation to derive the proposed layers and dev elop an extension of W av e-U-Net. 3.1. Motivation As described in section 1, we found an analogy between the architec- ture in W ave-U-Net and MRA. This analogy reveals the lack of the anti-aliasing filters and the lack of the perfect reconstruction prop- erty . Although the anti-aliasing problem can be solved by introduc- ing low-pass filters before DS, the other problem remains unsolv ed. The decimation layer with and without the anti-aliasing filters discards part of the inputted feature map. If the layers preceding the decimation layer are trained so that the y pack the components useful for the separation into the decimated feature map, the model works well. If this is not the case, the decoder needs to acquire a way to compensate for the discarded components during the training, which (a) DWT layer . (b) In verse D WT layer . Fig. 2 : Block diagrams of the proposed layers. C − 1 and S − 1 denote the in verse operations of C and S , respecti vely . may lead to overfitting. One may think that, o wing to the U-net ar - chitecture, each US block is connected to the DS block at the same lev el of hierarchy and the decoder can access the discarded compo- nents. Howev er, the conv olution layer contained in the US block is translation-in variant and it is difficult to identify which elements of the feature map coming through the connections are discarded by the decimation layer . Thus, whether the decoder compensates for the discarded components strongly depends on training. In contrast to the decimation layer , the D WT has an anti-aliasing filter and satisfies the perfect reconstruction property , i.e., it can si- multaneously overcome the problems. Focusing on this feature, to achiev e a more reliable source separation method, we design novel DS and US layers, called the D WT and in verse D WT layers, respec- tiv ely , in the follo wing. T able 1 : Detailed architecture of the proposed model Block Layer Input DS block l for l = 1 , · · · , L Conv1D ( C (e) l, f (e) ) DWT layer Intermediate block Conv1D ( C (m) , f (e) ) US block l for l = L, · · · , 1 In verse D WT layer Concat ( DS feature l ) Conv1D ( C (d) l, f (d) ) Concat ( Input ) Conv1D ( C (s) ( N − 1) , 1) 3.2. D WT and Inv erse D WT Layers Let us consider the feature map z = [ z 1 , · · · , z C ] ∈ R T × C , where T and C are the numbers of time points and feature channels, and denote the feature channel index by c . For simplicity , we consider T to be even. The D WT layer first applies the D WT to z c according to the lifting scheme [23], which is a computationally efficient tech- nique for the DWT and in verse D WT compared with the Mallat al- gorithm. The scheme consists of four steps: The first is the time-split step in which each feature channel of z c is split into the odd-sample and even-sample components, z (odd) c ∈ R T / 2 and z (even) c ∈ R T / 2 , respectiv ely . W e denote this split operation by S . The second step is the prediction, in which a prediction of z (odd) c is computed from z (even) c using the prediction operator P , and the prediction is sub- tracted from z (odd) c to obtain the error component e c ∈ R T / 2 . e c = z (odd) c − P z (even) c . (1) This step corresponds to a high-pass filter . Since z (even) c con- tains aliasing artifacts caused by the time-split step, in the third step, called the update step, the smoothed ev en-sample component s c ∈ R T / 2 is computed by applying the update operator U to e c and adding it to z (even) c . s c = z (even) c + U e c . (2) This step corresponds to a lo w-pass filter . The final step is the scaling step, in which s c and e c are scaled by a normalization constant A and its reciprocal, respecti vely , and we obtain ˜ s c = A s c and ˜ e c = e c / A . After applying the above operations to all channels of z , the D WT layer concatenates { ˜ s c } C c =1 and { ˜ e c } C c =1 along the channel axis to form the down-sampled feature map ˜ z ∈ R T / 2 × 2 C . ˜ z = [ ˜ e 1 , · · · , ˜ e C , ˜ s 1 , · · · , ˜ s C ] . (3) W e denote the channel concatenation operation by C . The in verse D WT layer performs the rev erse of the above process. Block dia- grams of the two proposed layers are sho wn in Fig. 2. For odd T , the time-split step is difficult to directly apply , and inserting a padding layer before the step is required so that the num- ber of time points of the padded feature map equals T + 1 . W e experimentally determined the use of the reflection padding. The operators P and U and the normalization constant A are de- termined according to the wav elets. For example, for Haar wa velets, we hav e P = I T , U = 0 . 5 · I T and A = √ 2 where I T is the T identity matrix. Note that if we choose the lazy wav elet [24], which means that there are no lo w-pass or high-pass filters, the D WT layer reduces to the time-domain adaptation of the squeezing operation, i.e., P = 0 T , U = 0 T and A = 1 , where 0 T is the T zero matrix. Compared with the decimation and linear US layers, the DWT and in verse DWT layers with the Haar wa velets require T C / 2 sub- tractions, T C / 2 additions, and T C/ 2 multiplications for the predic- tion, update, and scaling steps, respecti vely . Howev er, these compu- tations are parallelizable at each step and the processing time does not significantly increase at the D WT and in verse DWT layers. 3.3. Incorporation of Proposed Layers into W av e-U-Net In this section, we incorporate the proposed layers into W ave-U-Net. A schematic illustration of the proposed model is shown in Fig. 1 (b). It has an encoder–decoder architecture as in W ave-U-Net 1 . The en- coder (decoder) consists of L DS blocks (US blocks), each of which halves (doubles) the time resolution of the feature maps with the D WT layer (the in verse D WT layer). Let l = 1 , · · · , L , N and C (s) be the le vel index, the number of sources and the number of chan- nels of the input signal, respectively . The l th US block can access the feature map before the D WT layer of the l th DS block. The net- work outputs N − 1 source estimates with C (s) channels, and the N th source estimate is then obtained by subtracting the sum of the N − 1 source estimates from the input signal, which ensures that the sum of the source estimates equals the input signal. The architecture is described in detail in T able 1. Conv1D ( x, y ) denotes a one-dimensional con volution layer with x filters of size y . Concat ( x ) represents the concatenation of the output of the pre- ceding layer and x along the channel axis. T o make the number of time points in the two inputs equal, x is center-cropped before the concatenation. Input and DS feature l respecti vely represent the in- put signal and the feature map before the DWT layer of the l th DS block. All con volution layers are without padding, and the feature maps obtained with the conv olution layers can have an odd number of time points. As described in section 3.2, we insert the reflection padding layer before each DWT layer , and discard the last time el- ements of the feature maps obtained with each in verse D WT layer as in W ave-U-Net. All conv olution layers e xcept for the last are fol- lowed by the leaky ReLU, and the last one is follo wed by the hyper - bolic tangent function to normalize the values of the source estimates within ( − 1 , 1) . Note that if we replace the DWT and in verse D WT layers with the decimation and linear US layers, respecti vely , and remov e the reflection padding layers, the proposed model is reduced to W ave-U-Net. 4. EXPERIMENT AL EV ALU A TION 4.1. Experimental Setup T o ev aluate the proposed model, we conducted experiments using the MUSDB18 dataset [25], which consists of 100 and 50 songs for training and test data, respectiv ely . For all songs, mixture audios and separate recordings of bass, drums, v ocals and other instruments are av ailable. For v alidation, 25 songs were randomly selected from the training data, and all recordings were down-sampled to 22 . 05 kHz in the stereo format. W e implemented all models by extending the open implementation of W ave-U-Net ( https://github.com/f90/ Wave- U- Net ) and used the same experimental settings as [8]. W e compared the proposed model ( Pr oposed ) with the vanilla W ave-U-Net ( W ave-U-Net ), which we re-trained, and its two vari- ants ( A verage P ooling and Squeezing ). The variants were used to separately e valuate the effects of the anti-aliasing filters and the per - fect reconstruction property . As DS and US layers, we used the a v- erage pooling with a kernel size of 2 and a stride of 2 and the linear 1 W e adopted the best architecture reported by the authors; M4 [8]. T able 2 : Characteristics of all models. “perf. rec. prop. ” means the perfect reconstruction property Model #params Has Satisfies anti-aliasing filters perf. rec. prop. W ave-U-Net+ 28 . 31 M No No W ave-U-Net 10 . 26 M No No Proposed 15 . 15 M Y es Y es Proposed (small) 5 . 81 M Y es Y es A verage Pooling 28 . 31 M Y es No Squeezing 15 . 51 M No Y es interpolation layer for A verage P ooling and the squeezing operation and its in verse operation for Squeezing . Although the av erage pooling and decimation layers do not change the channel size of the feature map, the DWT layers and the squeezing operations double that of the feature map. Owing to these features, it is not easy to exactly match the proposed model and W ave-U-Net in terms of the number of parameters. For this reason, we also used a variant of Proposed with a reduced number of parameters ( Pr oposed (small) ) and another variant of W ave-U- Net ( W ave-U-Net+ ) with an increased number of parameters to allow a fair comparison. W e set C (e) = 12 for Pr oposed (small) , C (e) = 24 for Pr oposed , Squeezing and W ave-U-Net and C (e) = 48 for W ave-U-Net+ and A verage P ooling . The characteristics of all models are summarized in T able 2. Following the best architecture of W ave-U-Net reported by the authors [8], we used stereo inputs, i.e., C (s) = 2 , and set L = 12 , C (m) = 312 , C (d) = 24 , f (e) = 15 and f (d) = 5 for all models. For the D WT and inv erse DWT layers, we used Haar wa velets. During training, we randomly cropped audio segments of 147443 samples from the full audio signals and multiplied ran- dom gains within [0 . 7 , 1 . 0] as a data augmentation to form a batch with size 16 . The loss function was the mean squared error function ov er all elements in the batch, and the Adam optimizer with a learn- ing rate of 0 . 0001 and decay rates of β 1 = 0 . 9 and β 2 = 0 . 999 was employed. W e continued to train each model until no improv ements of the validation loss were observed during 20 successiv e epochs. W ith the same stopping criterion, we then fine-tuned the model with the learning rate of 0 . 00001 and the batch size of 32 , and selected the model at the epoch with the lowest v alidation loss. 4.2. Results Fig. 3 shows the median and av erage source-to-distortion ratios (SDRs) for all models, which were calculated using the SiSEC2018 ev aluation procedure [1] and av eraged ov er fi ve trials. Note that [Stoller+2018] denotes the results reported in [8]. Proposed outper- formed W ave-U-Net and [Stoller+2018] for all musical instruments and achiev ed a (slightly) higher performance for bass and drums (vocals and other) compared with W ave-U-Net+ despite the f act that Pr oposed has only half the number of parameters as W ave-U-Net+ . This clearly shows the ef ficacy of the proposed model. Furthermore, ev en with half the number of parameters as W ave-U-Net , Proposed (small) provided higher and competitiv e median and a verage SDRs compared with the con ventional models for all the instruments ex- cept for v ocals. W e can confirm that the proposed layers can better capture the important features and are more suitable for audio source separation than the con ventional DS and US layers used in W ave-U- Net. While we used the Haar wa velet in the e xperiments, the use of other wa velets may further impro ve separation performance, and we will examine, as a future work, which w av elets are suitable. Fig. 3 : Median and average SDRs of all models. The values are av eraged ov er fi ve trials. Fig. 4 : T raining and v alidation losses av eraged per epoch. A verage P ooling and Squeezing did not reach the performance of Pr oposed . W e observed that Squeezing provided lower me- dian signal-to-interference ratios than Proposed and higher median signal-to-artifact ratios than A verage P ooling relatively . This implies that reducing the aliasing artifacts makes it easier to distinguish the target source from other sources and introducing the perfect recon- struction property makes it easier to propagate the entire information of the signal through the network. These results show that both the anti-aliasing filters and the perfect reconstruction property should be simultaneously considered when designing the neural network. As shown in Fig. 4, we found that Pr oposed showed greater training losses but smaller validation losses than the other models at most of the training epochs. This suggests that the proposed lay- ers can reduce ov erfitting, but its further in vestigation is required. 5. CONCLUSION W e presented an extension of W av e-U-Net combined with the D WT and inv erse DWT layers. The motiv ation to dev elop the proposed layers came from our observation that the architecture of W av e-U- Net resembles that of MRA. Through this analogy , we found the two problems of the DS layers in W av e-U-Net: the lack of the anti- aliasing filters and the lack of the perfect reconstruction property . T o simultaneously overcome these problems, we designed the D WT layer . The e xperiments on music source separation sho w the efficacy of the proposed model and the importance of simultaneously consid- ering the aliasing filters and the perfect reconstruction property . 6. REFERENCES [1] F .-R. St ¨ oter , A. Liutkus, and N. Ito, “The 2018 signal separa- tion ev aluation campaign, ” in Pr oc. International Confer ence on Latent V ariable Analysis and Signal Separation , 2018, pp. 293–305. [2] J. R. Hershey , Z. Chen, J. Le Roux, and S. W atanabe, “Deep clustering: Discriminativ e embeddings for segmentation and separation, ” in Pr oc. IEEE International Conference on Acous- tics, Speech and Signal Pr ocessing , 2016, pp. 31–35. [3] A. Jansson, E. J. Humphrey , N. Montecchio, R. M. Bittner , A. Kumar , and T . W eyde, “Singing voice separation with deep U-net con volutional networks, ” in Pr oc. International Society for Music Information Retrieval Conference , 2017. [4] N. T akahashi and Y . Mitsufuji, “Multi-scale multi-band densenets for audio source separation, ” in Pr oc. IEEE W ork- shop on Applications of Signal Pr ocessing to A udio and Acous- tics , 2017, pp. 21–25. [5] N. T akahashi, N. Goswami, and Y . Mitsufuji, “Mmdenselstm: An ef ficient combination of con volutional and recurrent neural networks for audio source separation, ” in Pr oc. International W orkshop on Acoustic Signal Enhancement , 2018, pp. 106– 110. [6] J. Le Roux, N. Ono, and S. Sagayama, “Explicit consis- tency constraints for STFT spectrograms and their application to phase reconstruction., ” in Pr oc. W orkshop on Statistical and P erceptual Audition , 2008, pp. 23–28. [7] D. Grif fin and J. Lim, “Signal estimation from modified short- time Fourier transform, ” IEEE T ransactions on Acoustics, Speech, and Signal Pr ocessing , vol. 32, no. 2, pp. 236–243, 1984. [8] D. Stoller, S. Ewert, and S. Dixon, “W av e-U-Net: A multi- scale neural network for end-to-end audio source separation, ” in Pr oc. International Society for Music Information Retrieval Confer ence , 2018, pp. 334–340. [9] S. V enkataramani, J. Casebeer , and P . Smaragdis, “End-to-end source separation with adaptiv e front-ends, ” in Pr oc. Asilo- mar Conference on Signals, Systems, and Computers , 2018, pp. 684–688. [10] G. W ichern and J. L. Roux, “Phase reconstruction with learned time-frequency representations for single-channel speech sep- aration, ” in Pr oc. International W orkshop on Acoustic Signal Enhancement , 2018, pp. 396–400. [11] O. Slizovskaia, L. Kim, G. Haro, and E. Gomez, “End-to-end sound source separation conditioned on instrument labels, ” in Pr oc. IEEE International Conference on Acoustics, Speech and Signal Pr ocessing , 2019, pp. 306–310. [12] Y . Luo and N. Mesgarani, “Conv-T asNet: Surpassing ideal timefrequency magnitude masking for speech separation, ” IEEE/A CM T ransactions on Audio, Speech, and Language Pr ocessing , vol. 27, no. 8, pp. 1256–1266, 2019. [13] F . Llu ´ ıs, J. Pons, and X. Serra, “End-to-end music source sep- aration: Is it possible in the wav eform domain?, ” in Pr oc. An- nual Conference of the International Speech Communication Association , 2019, pp. 4619–4623. [14] I. Kavalero v , S. W isdom, H. Erdogan, B. Patton, K. W ilson, J. Le Roux, and J. R. Hershey , “Universal sound separation, ” in Pr oc. IEEE W orkshop on Applications of Signal Processing to Audio and Acoustics , 2019. [15] O. Ronneberger , P . Fischer , and T . Brox, “U-net: Conv olu- tional networks for biomedical image segmentation, ” in Pr oc. International Confer ence on Medical Image Computing and Computer-Assisted Intervention , 2015, pp. 234–241. [16] S. G. Mallat, “ A theory for multiresolution signal decomposi- tion: the wavelet representation, ” IEEE T ransactions on P at- tern Analysis and Machine Intelligence , vol. 11, no. 7, pp. 674– 693, 1989. [17] J. Mairal, P . K oniusz, Z. Harchaoui, and C. Schmid, “Conv o- lutional kernel networks, ” in Pr oc. Advances in Neural Infor - mation Pr ocessing Systems , 2014, pp. 2627–2635. [18] R. Zhang, “Making conv olutional networks shift-in variant again, ” in Pr oc. International Confer ence on Machine Learn- ing , 2019. [19] Y . Gong and C. Poellabauer , “Impact of aliasing on deep CNN- based end-to-end acoustic models, ” in Proc. Annual Confer- ence of the International Speech Communication Association , 2018, pp. 2698–2702. [20] M. D. Zeiler and R. Fergus, “V isualizing and understanding con volutional networks, ” in Pr oc. Eur opean Confer ence on Computer V ision , 2014, pp. 818–833. [21] T . W illiams and R. Li, “W avelet pooling for con volutional neu- ral networks, ” in Pr oc. International Conference on Learning Repr esentations , 2018. [22] L. Dinh, J. Sohl-Dickstein, and S. Bengio, “Density estima- tion using Real NVP, ” in Proc. International Conference on Learning Repr esentations , 2017. [23] W . Sweldens, “The lifting scheme: A construction of second generation wav elets, ” SIAM Journal on Mathematical Analy- sis , vol. 29, no. 2, pp. 511–546, 1998. [24] W . Sweldens, “The lifting scheme: A custom-design construc- tion of biorthogonal wa velets, ” Applied and Computational Harmonic Analysis , vol. 3, no. 2, pp. 186–200, 1996. [25] Z. Rafii, A. Liutkus, F .-R. St ¨ oter , S. I. Mimilakis, and R. Bit- tner , “The MUSDB18 corpus for music separation, ” 2017.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment