Adjusting Pleasure-Arousal-Dominance for Continuous Emotional Text-to-speech Synthesizer

Emotion is not limited to discrete categories of happy, sad, angry, fear, disgust, surprise, and so on. Instead, each emotion category is projected into a set of nearly independent dimensions, named pleasure (or valence), arousal, and dominance, know…

Authors: Azam Rabiee, Tae-Ho Kim, Soo-Young Lee

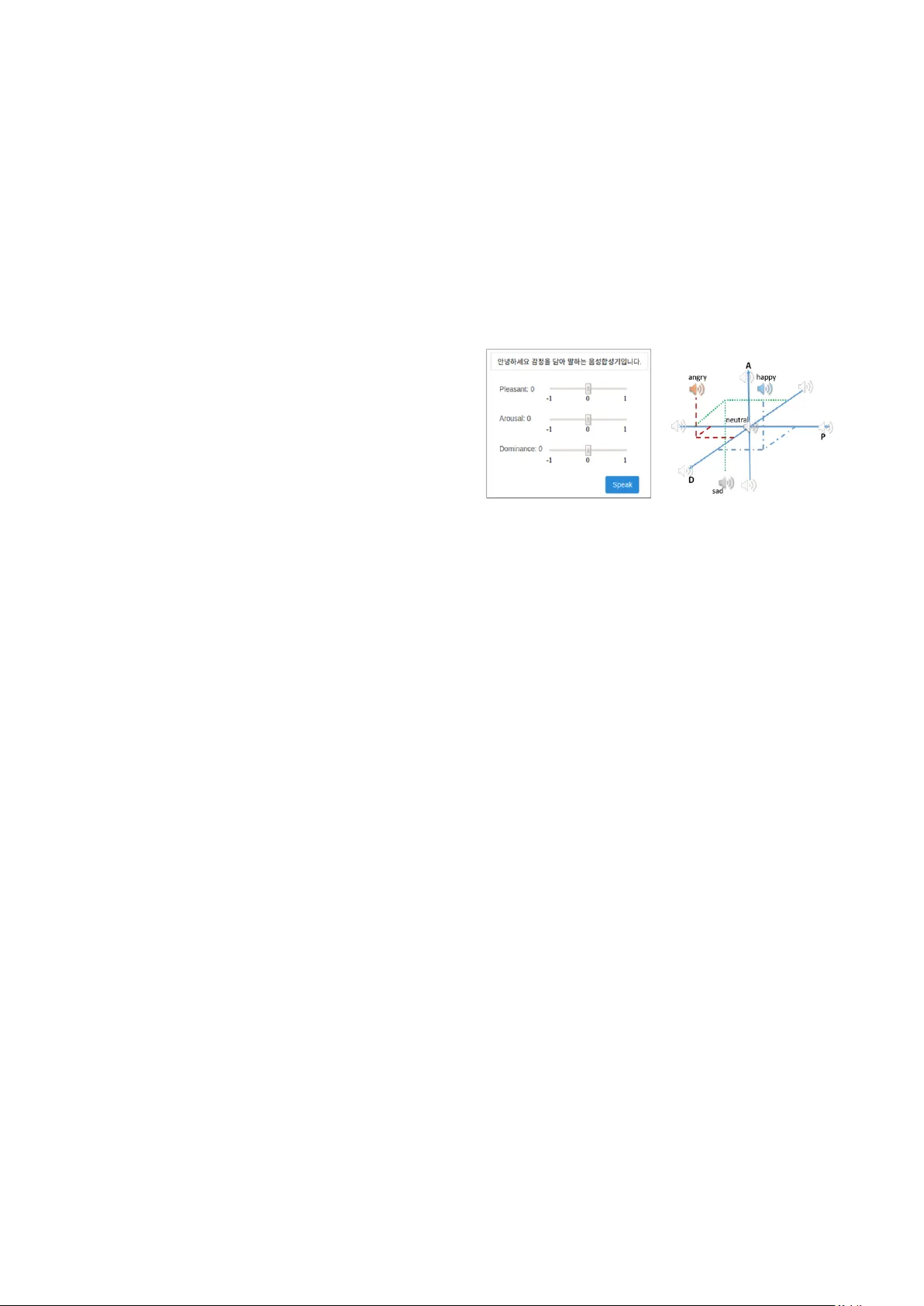

Accepted for Show & Tell demonstrations in In terspeech 2019, Graz Adjusting Pleasur e-Ar ousal-Dominan ce for Cont inuous Emotion al T ext- to - speech Synthes izer Azam Rabiee, Tae-Ho Kim, Soo-Young Lee KAIST Institute for Artificial I nt elligence Korea Advanced Institute of Technology, Daejeon, Korea {azrabiee, ktho22, sy - lee} @ kaist.ac.kr Abstract Emotion is not lim ited to discrete categories of happ y, sad, angry, fear, disgust, surp rise, and so on. Instead, each emotion category is pro jected into a set of nearly independent dimensions, named pleasure (or valence), arou sal, and dominance , kno wn as PAD . The value of each d imension varies fro m -1 to 1, such that the neutral emotion is in the center with all-zero values. Training an e motional continuous text- to -speech (TTS) sy nthesi zer on the i nd ependent dimensions provides the possibility of emotional speech synthesis with unlimited em otion categories. Our end- to -end neural speec h synthesizer is based on the well-known Tacotron. Empirically, we have found the optimum network architecture for injecting the 3D PADs. Moreover, the PAD values are adjusted for the speech synthesis purpose. Index Terms : speec h s ynthesis , text- to -speech, T TS, continuous emotion, controllable speech, emotional speech , PAD 1. Introduction For a natural speech synthesizer, uttering the text in the desired e motion is a fa vor; but emotion is not lim ited to t he well-known categories of hap py, sad, angry, fear, disgust, and surprise. Moreover, it is not easy to find an emotional sp eech dataset with a high number of emotion categories suitable for a continuous emotional text- to -speech (TTS) s ynthesizer. On the other hand, psychological stud ies revealed that the nearl y orthogonal and independent P leasure-Arousal-Dominance (PAD) represents the complete range of the human emotional state [1] (Figure 1) . Researchers applied the PAD to different applications . To the b est of our knowledge, this study presents the first step to approach the continuous 3D emotional TTS in a fully end - to -end neural model. Our model is capable of generating speech in a wide range of emotions . Section 2 explains the details of our synthesizer in addition to som e implementation tricks an d the P AD adjustment . Section 3 discusses th e ob jective evaluations. Finally, conclusion comes at the en d. The demo will de monstrate the ability of u ttering the given text in the wide range of e motions with continuous ly varying independent axes (Figure 1). 2. Continuous emotional TTS Our end- to -end neural speech synthesizer is based on Tacotron [2], with a slight change, explained in S ection 2.1. We propose to use the continuous three dimensional PAD (detailed in Section 2.2) to train th e model for emotional speech synthesis. We refer to the 3D representation as the style in this paper. Figure 1 : demo for the continuous emotion al TTS. Pleasure-Arousal-Dominance (PAD) covers the complete range of the human emotional state. 2.1. Speech synthesizer Our speech synthesizer is a sequence- to -sequence model with an attention mec hanis m . The encoder-attention-decoder, explained in Equations 1 to 4, shows th e p ath to convert the character sequence as input to the M el- sp ectrogram ou tput. Later a post-pro cessing and th e Griffin-Li m algorithm reconstruct the speech wavef or m . Equations 1 an d 4 describe the encod er and d ecoder parts o f the model, in which , , and are the input text, text enco der state, and th e -th time-step decoder ou tput ( a Mel spectrogram frame), respectively. As denoted in equ ations 3 and 4, the decoder output is generated according to the weighted sum o f the encoder state , and the projected style . The attenti on weight is calculated by the location sensitive attention [3], injecting the projected style . When , The model is non-emotional TTS . , (1) , (2) , (3) , (4) 2.2. PAD Adjustment In equations 2 and 4, the p rojected style is a high dimension representation of th e P AD (32-D in our implementation) because empirically we h ave figured out that simply injecting the 3D style do es not convey eno ugh capacity for th e network to d istinct the style correctl y. M oreover, the physiologically- obtained PAD values may var y fo r different environment. To adjust th e PAD and to find the optimum p rojected style , we relied the emotional training on the o nehot style with two dense layers. Hence, we are sure th at the emotional categories are trained distinctly. Then, is obtained as follows, 2 , (5) in w hich, is the 3D sty le representation ; thus is initialized b y th e P ADs adap ted from [1]. Later, we adjusted the P ADs for the TT S purpose in a transfer learnin g trick. First, with frozen and synthesizer parameters, is tuned . Then, and are trained with frozen synthesizer parameters. The final value of is adjusted PAD values for our purpose, which is compatible with [1] in terms o f sign. Without the PAD adjustment, our m od el co uld not d istinct angry, disgust and surprise categories clearly . 2.3. Style injection Empirically, we sou ght out the optimum network architecture for the style in jection in the synthesizer con sidering (1) minimum added parameters to the network for in ducing the style, (2) no style confusion, i. e. synthesizing the speech in the desired style, and (3) preserving the q uality. Thus, a hyper- parameter tuning is p erformed to f ind the optimum st yle representation, injection location and type. We have already explained the style representation and the corresponding training tricks in previou s s ections. Here, w e explain ou r experiments to find the optimum injection location and type. We d id n ot induce th e style in the encoder as it deals with linguistic f eatures. According t o Equations 2 and 4, we injected the sty le in the attention an d the decoder modules. Exploring two places in th e attention module (attention -RNN, and attention context vector), as well as th ree places in the decoder (after d ecoder pre -net, RNN-layer1, and RNN- layer2), empirically we have fo und that th e style injection place is important to avoid the style confusion. However, no style confusion has found for the models with the attention- RNN st yle injection . Furthermore, we considered two t ypes of style injections as follows, Sum , (6) Concatenate , (7) where is the style-injected , and can b e any n on-linear function (ReLU in our implementations). The trainable weight as a dense projection layer is needed for the element-wise sum. However, concatenate-t ype is p referred in terms of no added parameter to the network. 3. Experiments and Results The experi ments were performed on our internal Korean dataset, con taining s even emotion s (th e n eutral and six basic emotions) uttered by a male and female spea ker. Every style category has 3000 sentences, recorded in 1 6kHz sa mpling rate. Unless otherwise stated, w e used the same hyper- parameter settin gs as [2] . We report the objective evaluation here; however, the quality and sty le con fusion can be subjectively evaluated in demo. Our demo page is also available at “ http s://github.com/AzamRa biee/E motional- TTS. ” We ev aluate our results in two cases: teacher-forcing, and free-running. Teacher-forcing means feeding the clean (ground-truth) in Equation 2; whil e in free-running, it is the previou s time-step decoder output . Ta ble 1 compares four models with sum/cat injection type (e quations 6 and 7). However, we examined th e multiply form of the style injection as ; but our experiments faced with the exposure bias problem in free -running. The nu mbers 1, 2, and 4 indicates the n umber of in jections. Fou r models sh are the attention-RNN style injection. Our objective measures are the scale-invariant cosine- based signal- to -d istortion ration (SDR/Mel-SDR), and spectral distortion (SD/M el- SD ) as Equations 8, and 9, in which and are spectrograms of the target and the synthesized speech, respectively. (8) (9) Ta ble 1 repo rts the average results on 100 test set utterances with 95 % confidence interval. For S DR/Mel-SDR , higher value shows more accurate model; whereas for SD /Mel- SD , the lower value means better p erformance. In teacher-forcing, sequences are te mporary matched but in the free-running they are adjusted by dynamic time warping. According to the tab le and b ecause th e minimum change in TTS architecture is favorite, CAT- 4 model is selected for demo. Ta ble 1: Ob jective results of emotional TTS models Model SDR M el -SDR SD M el -SD Teacher forcing SUM-4 15.7 ± 0.15 13.7 ± 0.19 8.2 ± 0.50 6.4 ± 0.41 CAT-1 16.5 ± 0. 13 15.4 ± 0.19 8.1 ± 0.49 6.2 ± 0.39 CAT-2 16.4 ± 0.15 15.3 ± 0.22 8.0 ± 0.53 5.8 ± 0.41 CAT-4 17.7 ± 0.08 16.5 ± 0.14 6.5 ± 0.31 4.2 ± 0.21 Free running SUM-4 11.7 ± 0.16 10.3 ± 0.22 8.0 ± 0.47 7.0 ± 0.55 CAT-1 11.6 ± 0.16 10.4 ± 0.21 8.2 ± 0.43 7.3 ± 0.56 CAT-2 11.6 ± 0.15 10.4 ± 0.21 8.2 ± 0.45 7.3 ± 0.56 CAT-4 14.4 ± 0. 26 14 .3 ± 0. 31 6.8 ± 0.34 6.8 ± 0.57 4. Conclusion We presented a continuous emotional TTS capable of synthesizing sp eech in an unlimited number o f e motions. We have adju sted th e PAD values to better represent emotions in our TTS dataset. Demo will sh ow that t he P AD adjustment helped t o distinct the b asic emoti on categories, while PAD axes kept the pleasure , arousal, and dominance meaning. Furthermore, the experiment to find the optimum network architecture r eveal ed that more style injection s (in cluding th e attention-RNN style injection) lead to better performance. 5. Acknowledgements This work was supp orted by Institute of Inform ation & Communications Technology Planning & Eva luation (IITP) grant funded by th e Korea government (MSIT) [2016- 0- 00562(R0124- 16 -0002), Emotional Intelligence Te chno logy to Infer Human Emotion and Carry on Dialogue Acc ord ingly] 6. References [1] J. A. Russell and A. Mehrabian, “Evidence for a three -factor theory of em otions,” J. Res. Pers. , vol. 1 1, no. 3, pp. 273 – 294, 1977. [2] Y. Wang et al. , “Tacotron: A fully end - to -end te xt- to -speech synthesis model,” in Proc. Interspeech , 2017, pp. 4006 – 4010. [3] J. Chorowski, D. Bahdanau, D. Serdy uk, K . Cho, and Y. Bengio, “Attention - Based Models for Speech Recogni tion,” in Advances in N eural Information Processing Conference (NIPS) 2015 , 20 15, pp. 1 – 9.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment