BRIDGE: Byzantine-resilient Decentralized Gradient Descent

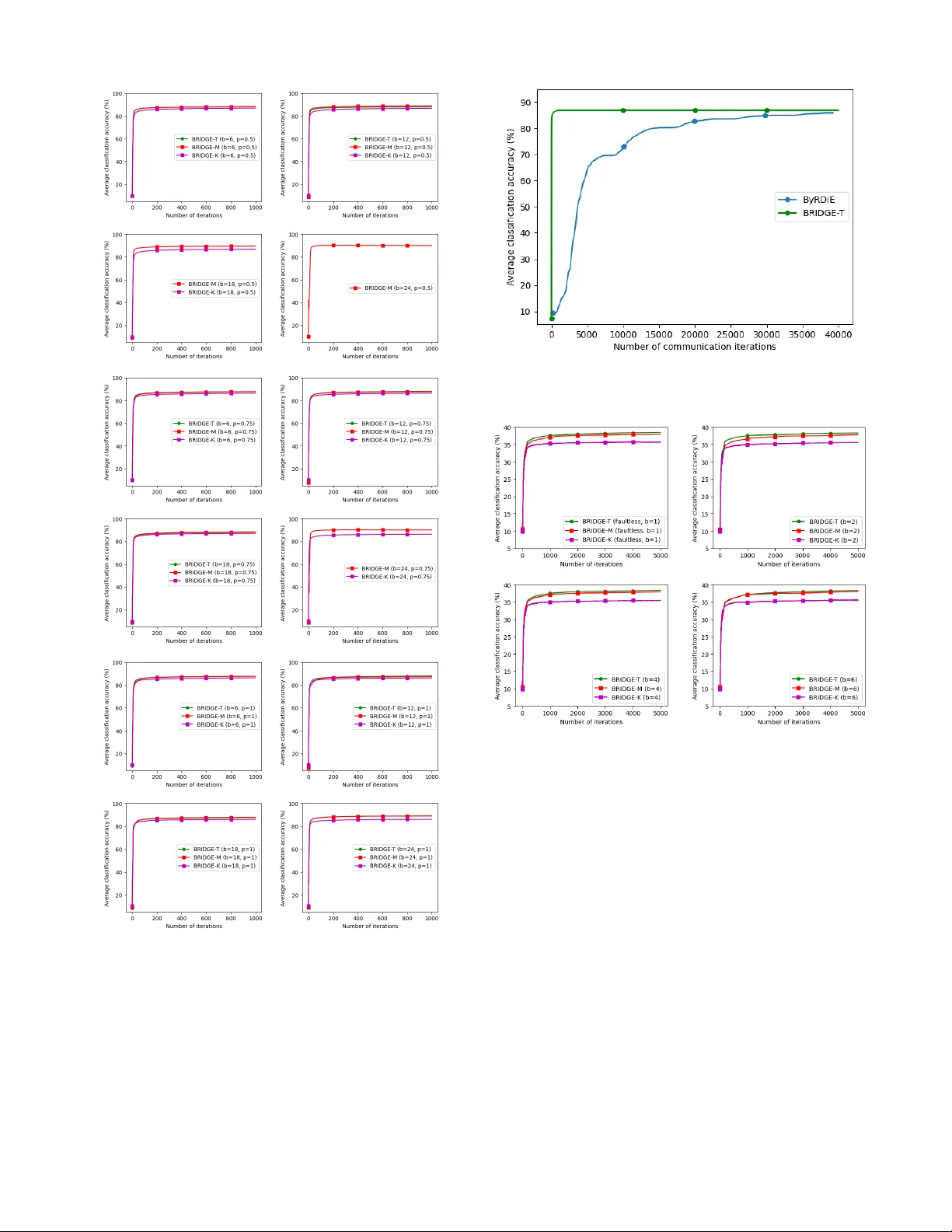

Machine learning has begun to play a central role in many applications. Many of these applications also involve datasets that are distributed across multiple computing devices or machines, due to either design constraints (e.g., multiage…

Authors: Cheng Fang, Zhixiong Yang, Waheed U. Bajwa