BANANAS: Bayesian Optimization with Neural Architectures for Neural Architecture Search

Over the past half-decade, many methods have been considered for neural architecture search (NAS). Bayesian optimization (BO), which has long had success in hyperparameter optimization, has recently emerged as a very promising strategy for NAS when i…

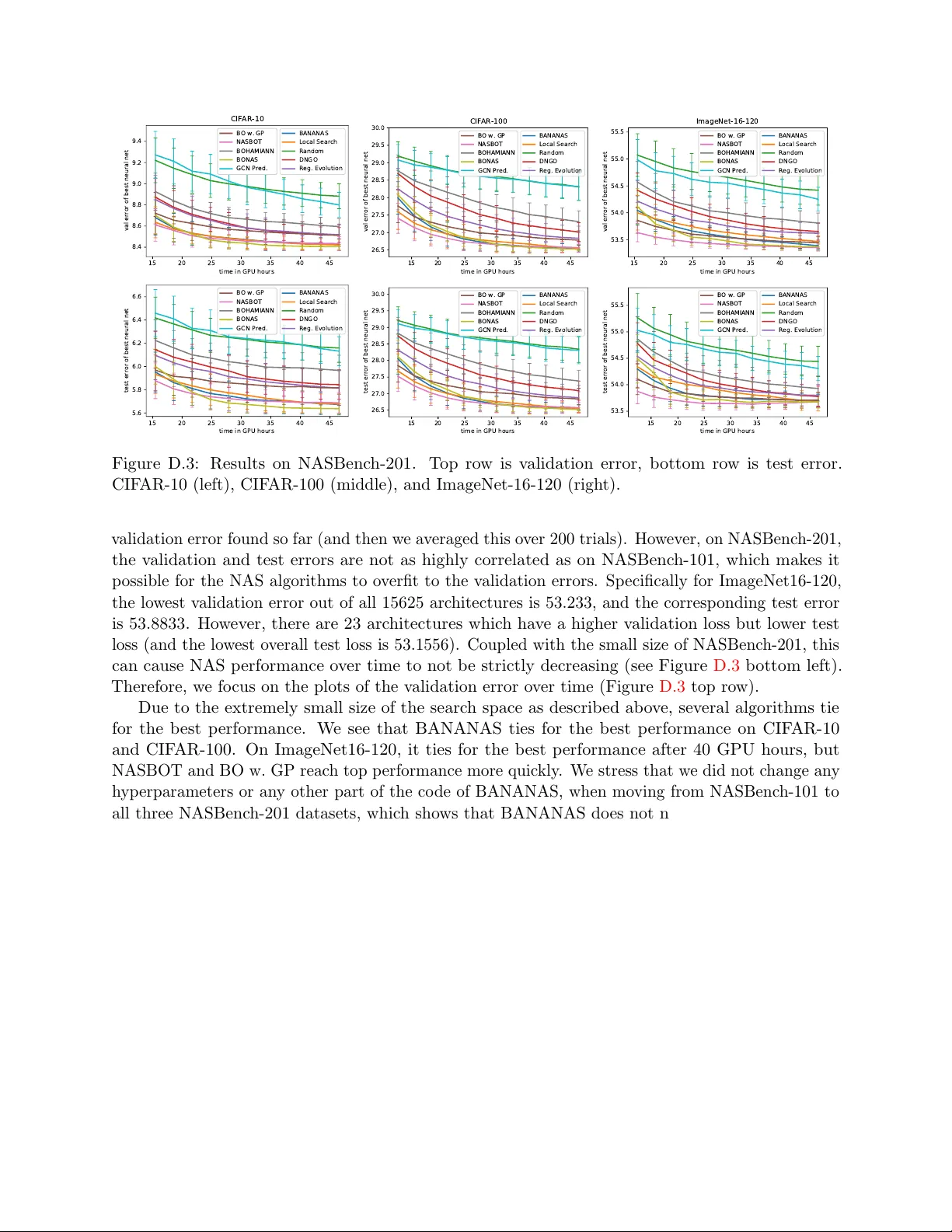

Authors: Colin White, Willie Neiswanger, Yash Savani