Discovering Transforms: A Tutorial on Circulant Matrices, Circular Convolution, and the Discrete Fourier Transform

How could the Fourier and other transforms be naturally discovered if one didn't know how to postulate them? In the case of the Discrete Fourier Transform (DFT), we show how it arises naturally out of analysis of circulant matrices. In particular, th…

Authors: Bassam Bamieh

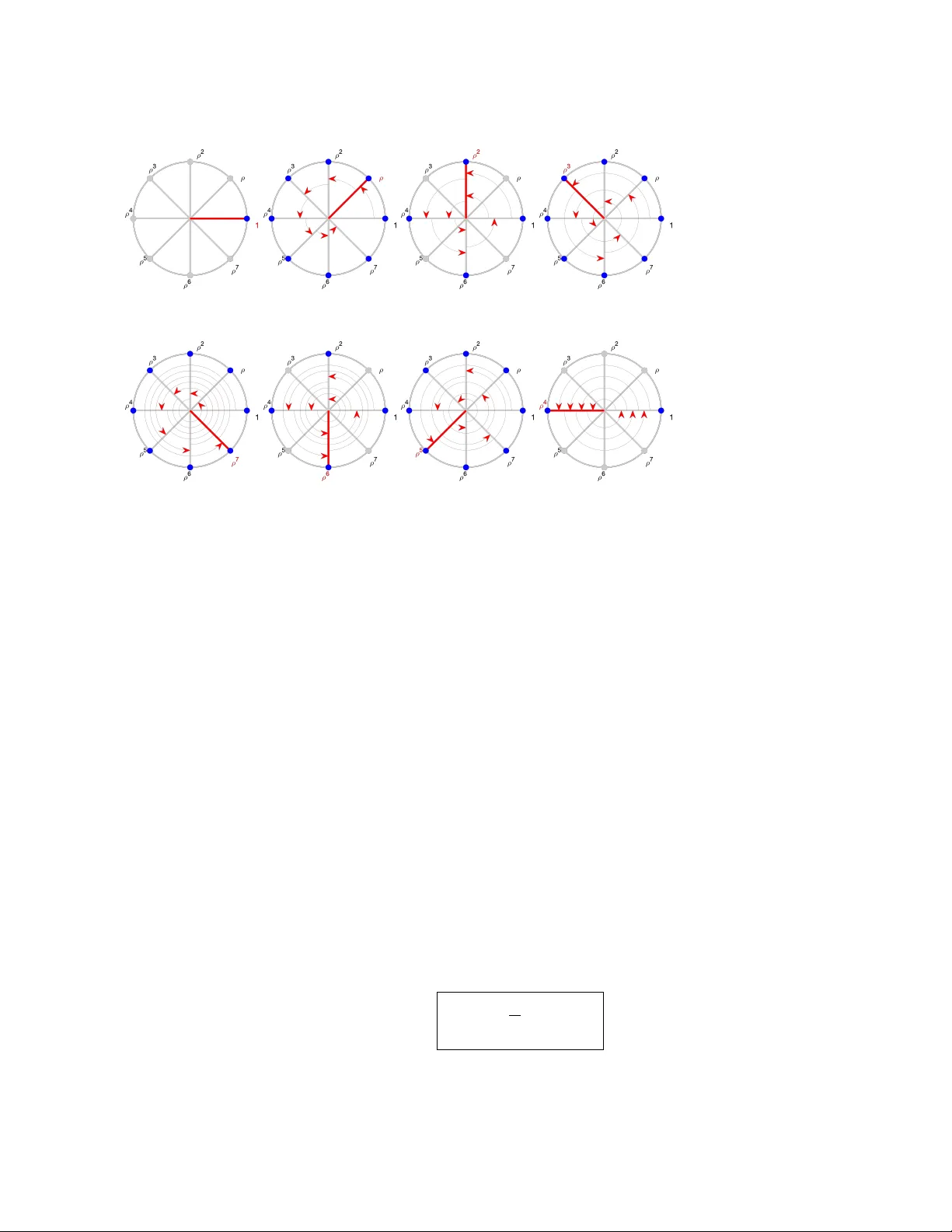

DISCOVERING TRANSFORMS: A TUTORIAL ON CIRCULANT MATRICES, CIRCULAR CONVOLUTION, AND THE DISCRETE FOURIER TRANSFORM

BASSAM BAMIEH∗

Key words. Discrete Fourier Transform, Circulant Matrix, Circular Convolution, Simultaneous Diagonalization of Matrices

AMS subject classifications. 42-01, 15-01, 42A85, 15A18, 15A27

Abstract. How could the Fourier and other transforms be naturally discovered if one didn't know how to postulate them? In the case of the Discrete Fourier Transform (DFT), we show how it arises naturally out of analysis of circulant matrices. In particular, the DFT can be derived as the change of basis that simultaneously diagonalizes all circulant matrices. In this way, the DFT arises naturally from a linear algebra question about a set of matrices. Rather than thinking of the DFT as a signal transform, it is more natural to think of it as a single change of basis that renders an entire set of mutually-commuting matrices into simple, diagonal forms. The DFT can then be "discovered" by solving the eigenvalue/eigenvector problem for a special element in that set. A brief outline is given of how this line of thinking can be generalized to families of linear operators, leading to the discovery of the other common Fourier-type transforms.

1. Introduction. The Fourier transform in all its forms is ubiquitous. Its many useful properties are introduced early on in Mathematics, Science and Engineering curricula [1]. Typically, it is introduced as a transformation on functions or signals, and then its many useful properties are easily derived. Those properties are then shown to be remarkably effective in solving certain differential equations, or in analyzing the action of time-invariant linear dynamical systems, amongst many other uses.
To the student, the effectiveness of the Fourier transform in solving these problems may seem magical at first, before familiarity eventually suppresses that initial sense of wonder. In this tutorial, I'd like to step back to before one is shown the Fourier transform, and ask the following question: How would one naturally discover the Fourier transform rather than have it be postulated?

The above question is interesting for several reasons. First, it is more intellectually satisfying to introduce a new mathematical object from familiar and well-known objects rather than having it postulated "out of thin air". In this tutorial we demonstrate how the DFT arises naturally from the problem of simultaneous diagonalization of all circulant matrices, which share symmetry properties that enable this diagonalization. It should be noted that simultaneous diagonalization of any class of linear operators or matrices is the ultimate way to understand their actions, by reducing the entire class to the simplest form of linear operations (diagonal matrices) simultaneously. The same procedure can be applied to discover the other close relatives of the DFT, namely the Fourier Transform, the z-Transform and Fourier Series. All can be arrived at by simultaneously diagonalizing a respective class of linear operators that obey their respective symmetry rules.

∗ Department of Mechanical Engineering, University of California at Santa Barbara, bamieh@ucsb.edu. This work is partially supported by NSF Awards CMMI-1763064 and ECCS-1932777.

To make the point above, and to have a concrete discussion, in this tutorial we consider primarily the case of circulant matrices. This case is also particularly useful because it yields the DFT, which is the computational workhorse for all Fourier-type analysis. Given an $n$-vector $a := (a_0, \ldots, a_{n-1})$, define the associated matrix $C_a$
whose first column is made up of these numbers, and each subsequent column is obtained by a circular shift of the previous column

$$ C_a := \begin{bmatrix} a_0 & a_{n-1} & a_{n-2} & \cdots & a_1 \\ a_1 & a_0 & a_{n-1} & & a_2 \\ a_2 & a_1 & a_0 & & a_3 \\ \vdots & & & \ddots & \vdots \\ a_{n-1} & a_{n-2} & a_{n-3} & \cdots & a_0 \end{bmatrix}. \tag{1.1} $$

Note that each row is also obtained from the previous row by a circular shift. Thus the entire matrix is completely determined by any one of its rows or columns. Such matrices are called circulant. They are a subclass of Toeplitz matrices, and as mentioned, have very special properties due to their intimate relation to the Discrete Fourier Transform (DFT) and circular convolution.

Given an $n$-vector $a$ as above, its DFT $\hat{a}$ is another $n$-vector defined by

$$ \hat{a}_k := \sum_{l=0}^{n-1} a_l \, e^{-i \frac{2\pi}{n} k l}, \qquad k = 0, 1, \ldots, n-1. \tag{1.2} $$

A remarkable fact is that given a circulant matrix $C_a$, its eigenvalues are easily computed. They are precisely the set of complex numbers $\{\hat{a}_k\}$, i.e. the DFT of the vector $a$ that defines the circulant matrix $C_a$. There are many ways to derive this conclusion and other properties of the DFT. Most treatments start with the definition (1.2) of the DFT, from which many of its seemingly magical properties are easily derived. To restate the goal of this tutorial, the question we ask here is: what if we didn't know the DFT? How can we arrive at it in a natural manner without needing someone to postulate (1.2) for us?

There is a natural way to think about this problem. Given a class of matrices or operators, one asks if there is a transformation, a change of basis, in which their matrix representations all have the same structure such as diagonal, block diagonal, or other special forms. The simplest such scenario is when a class of matrices can be simultaneously diagonalized with the same transformation.
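The definitions (1.1) and (1.2) can be made concrete with a small sketch. It uses only the Python standard library; the helper names `circulant` and `dft` and the sample vector are illustrative choices, not part of the tutorial. The matrix is filled with the index rule "entry $(k, l)$ equals $a_{(k-l) \bmod n}$", which reproduces the layout of (1.1).

```python
import cmath

def circulant(a):
    """Return C_a as a list of rows, with entry (k, l) equal to a[(k - l) mod n]."""
    n = len(a)
    return [[a[(k - l) % n] for l in range(n)] for k in range(n)]

def dft(a):
    """Evaluate the sum in (1.2) directly (O(n^2), for illustration only)."""
    n = len(a)
    return [sum(a[l] * cmath.exp(-2j * cmath.pi * k * l / n) for l in range(n))
            for k in range(n)]

a = [1, 2, 3, 4]          # hypothetical sample vector
C = circulant(a)
a_hat = dft(a)

first_column = [row[0] for row in C]
trace_C = sum(C[k][k] for k in range(len(a)))

for row in C:
    print(row)
print(a_hat)
```

One quick consistency check on the eigenvalue claim: the trace of $C_a$ is $n a_0$, and the entries of `a_hat` sum to the same number, as they must if they are the eigenvalues of $C_a$.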
Since diagonalizing transformations are made up of eigenvectors of a matrix, a set of matrices is simultaneously diagonalizable iff they share a full set of eigenvectors. An equivalent condition is that they each are diagonalizable, and they all mutually commute. Therefore given a mutually commuting set of matrices, by finding their shared eigenvectors, one finds that special transformation that simultaneously diagonalizes all of them. Thus, finding the "right transform" for a particular class of operators amounts to identifying the correct eigenvalue problem, and then calculating the eigenvectors, which then yield the transform.

An alternative but complementary view of the above procedure involves describing the class of operators using some underlying common symmetry. For example, circulant matrices such as (1.1) have a shift invariance property with respect to circular shifts of vectors. This can also be described as having a shift-invariant action on vectors over $\mathbb{Z}_n$ (the integers modulo $n$), which is also equivalent to having a shift-invariant action on periodic functions (with period $n$). In more formal language, circulant matrices represent a class of mutually commuting operators that also commute with the action of the group $\mathbb{Z}_n$. A basic shift operator generates that group, and the eigenvalue problem for that shift operator yields the DFT. This approach has the advantage of being generalizable to more complex symmetries that can be encoded in the action of other, possibly non-commutative, groups. These techniques are part of the theory of group representations. However, we adopt here the approach described in the previous paragraph, which uses familiar Linear Algebra language and avoids the formalism of group representations. Nonetheless, the two approaches are intimately linked.
Perhaps the present approach can be thought of as a "gateway" treatment on a slippery slope to group representations [2, 3] if the reader is so inclined.

This tutorial follows the ideas described earlier. We first (Section 2) investigate the simultaneous diagonalization problem for matrices, which is of interest in itself, and show how it can be done constructively. We then (Section 3) introduce circulant matrices, explore their underlying geometric and symmetry properties, as well as their simple correspondence with circular convolutions. The general procedure for commuting matrices is then used (Section 4) for the particular case of circulant matrices to simultaneously diagonalize them. The traditionally defined DFT emerges naturally out of this procedure, as well as other equivalent transforms. The "big picture" for the DFT is then summarized (Section 5). A much larger context is briefly outlined in Section 6, where the close relatives of the DFT, namely the Fourier transform, the z-transform and Fourier series are discussed. Those can be arrived at naturally by simultaneously "diagonalizing" families of mutually commuting linear operators. In this case, diagonalization has to be interpreted in a more general sense of conversion to so-called multiplication operators. Finally (Subsection 6.2), an example of a non-commutative case is given where not diagonalization, but rather simultaneous block-diagonalization is possible. This serves as a motivation for generalizing classical Fourier analysis to so-called non-commutative Fourier analysis, which is very much the subject of group representations.

2. Simultaneous Diagonalization of Commuting Matrices. The simplest matrices to study and understand are the diagonal matrices. They are basically uncoupled sets of scalar multiplications, essentially the simplest of all possible linear operations.
When a matrix $M$ can be diagonalized with a similarity transformation (i.e. $\Lambda = V^{-1} M V$, where $\Lambda$ is diagonal), then we have a change of basis in which the linear transformation has that simple diagonal matrix representation, and its properties can be easily understood.

Often one has to work with a set of transformations rather than a single one, and usually with sums and products of elements of that set. If we require a different similarity transformation for each member of that set, then sums and products will each require finding their own diagonalizing transformation, which is a lot of work. It is then natural to ask if there exists one basis in which all members of a set of transformations have diagonal forms. This is the simultaneous diagonalization problem. If such a basis exists, then the properties of the entire set, as well as all sums and products (i.e. the algebra generated by that set), can be easily deduced from their diagonal forms.

Definition 2.1. A set $\mathcal{M}$ of matrices is called simultaneously diagonalizable if there exists a single similarity transformation that diagonalizes all matrices in $\mathcal{M}$. In other words, there exists a single non-singular matrix $V$, such that for each $M \in \mathcal{M}$, the matrix $V^{-1} M V = \Lambda$ is diagonal.

It is immediate that all sums, products and inverses (when they exist) of elements of $\mathcal{M}$ will then also be diagonalized by this same similarity transformation. Thus a simultaneously diagonalizing transformation, when it exists, would be an invaluable tool in studying such sets of matrices.

When can a given set of matrices be simultaneously diagonalized? The answer is simple to state. First, they each have to be individually diagonalizable as an obvious necessary condition. Then, we will show that a set of diagonalizable matrices can be simultaneously diagonalized iff they all mutually commute.
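As a small illustration of Definition 2.1, the sketch below takes two hypothetical $2 \times 2$ matrices `A` and `B` that share the eigenvectors $(1, 1)$ and $(1, -1)$, and checks that one matrix `V` diagonalizes both of them together with their sum and product. The matrices and helper names are assumptions made for this example only.

```python
def matmul(X, Y):
    """Product of two square matrices stored as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def matadd(X, Y):
    """Entry-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

# Columns of V are the shared eigenvectors (1, 1) and (1, -1).
V = [[1, 1], [1, -1]]
V_inv = [[0.5, 0.5], [0.5, -0.5]]   # inverse of V, written out by hand

def to_new_basis(M):
    """Similarity transformation V^{-1} M V from Definition 2.1."""
    return matmul(V_inv, matmul(M, V))

A = [[2, 1], [1, 2]]   # hypothetical example matrices
B = [[0, 5], [5, 0]]

diag_A = to_new_basis(A)
diag_B = to_new_basis(B)
diag_sum = to_new_basis(matadd(A, B))
diag_prod = to_new_basis(matmul(A, B))
commute = matmul(A, B) == matmul(B, A)

for name, M in [("A", diag_A), ("B", diag_B), ("A+B", diag_sum), ("AB", diag_prod)]:
    print(name, M)
```

In this example the diagonal entries of the sum and product are the sums and products of the diagonal entries of `diag_A` and `diag_B`, and `A` and `B` commute, in line with the statement that simultaneous diagonalizability goes together with commutativity.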
We will illustrate the argument in some detail since it gives a procedure for constructing the diagonalizing transformation. In the case of circulant matrices, this construction will yield the DFT. We note that the same construction also yields the z-transform, Fourier transform and Fourier series, but with some slight additional technicalities due to working with operators on infinite-dimensional spaces.

Necessity: We can see that commutativity is a necessary condition because all diagonal matrices mutually commute, and if two matrices are simultaneously diagonalizable, they do commute in the new basis, and therefore they must commute in the original basis. More precisely, let $A = V^{-1} \Lambda_a V$ and $B = V^{-1} \Lambda_b V$ be simultaneously diagonalizable with the transformation $V$, then

$$ AB = V^{-1} \Lambda_a V \, V^{-1} \Lambda_b V = V^{-1} \Lambda_a \Lambda_b V = V^{-1} \Lambda_b \Lambda_a V = V^{-1} \Lambda_b V \, V^{-1} \Lambda_a V = BA. \tag{2.1} $$

What about the converse? If two matrices commute, are they simultaneously diagonalizable? The answer is yes if both matrices are diagonalizable individually (this is of course a necessary condition). The argument is simple if one of the matrices has non-repeated eigenvalues. A little more care needs to be taken in the case of repeated eigenvalues since there are many diagonalizing transformations in that case. We will not need the more general version of this argument in this tutorial.

To begin, let's recap how one constructively diagonalizes a given matrix by finding its eigenvectors. If $v_i$ is an eigenvector of an $n \times n$ matrix $A$ with corresponding eigenvalue $\lambda_i$ then we have

$$ A v_i = \lambda_i v_i, \qquad i = 1, \ldots, p, \tag{2.2} $$

where $p$ is the largest number of linearly independent eigenvectors (which can be any number from 1 to $n$). The relations (2.2) can be compactly rewritten using partitioned matrix notation as a single matrix equation

$$ \begin{bmatrix} A v_1 & \cdots & A v_p \end{bmatrix} = \begin{bmatrix} \lambda_1 v_1 & \cdots & \lambda_p v_p \end{bmatrix} $$
$$ \Updownarrow $$
$$ A \begin{bmatrix} v_1 & \cdots & v_p \end{bmatrix} = \begin{bmatrix} v_1 & \cdots & v_p \end{bmatrix} \begin{bmatrix} \lambda_1 & & \\ & \ddots & \\ & & \lambda_p \end{bmatrix} \quad \Longleftrightarrow \quad AV = V\Lambda, \tag{2.3} $$

where $V$ is a matrix whose columns are the eigenvectors of $A$, and $\Lambda$ is the diagonal matrix made up of the corresponding eigenvalues of $A$.

We say that an $n \times n$ matrix has a full set of eigenvectors if it has $n$ linearly independent eigenvectors. In that case, the matrix $V$ in (2.3) is square and nonsingular and $\Lambda = V^{-1} A V$ is the diagonalizing similarity transformation. Of course not all matrices have a full set of eigenvectors. If the Jordan form of a matrix contains any non-trivial Jordan blocks, then it can't be diagonalized, and has strictly fewer than $n$ linearly independent eigenvectors. We can therefore state that a matrix is diagonalizable iff it has a full set of eigenvectors, i.e. diagonalization is equivalent (in a constructive sense) to finding $n$ linearly independent eigenvectors.

The case of simple (non-repeated) eigenvalues. Now consider the problem of simultaneous diagonalization. It is clear from the above discussion that two matrices can be simultaneously diagonalized iff they share a full set of eigenvectors. Consider the converse of the argument (2.1), and assume that $A$ has simple (non-repeated) eigenvalues. This means that

$$ A v_i = \lambda_i v_i, \quad i = 1, \ldots, n, \qquad \text{and} \quad \lambda_i \neq \lambda_j \ \text{if} \ i \neq j. $$

Consider any matrix $B$ that commutes with $A$. Let $B$ act on each of the eigenvectors by $B v_i$ and observe that

$$ A B v_i = B A v_i = B \lambda_i v_i = \lambda_i B v_i. \tag{2.4} $$

Thus $B v_i$, when nonzero, is an eigenvector of $A$ with eigenvalue $\lambda_i$. Since those eigenvalues are distinct, and the corresponding eigenspace is one dimensional, $B v_i$ must be a scalar multiple of $v_i$

$$ B v_i = \gamma_i v_i. $$

Thus $v_i$ is an eigenvector of $B$, but possibly with an eigenvalue $\gamma_i$ different from $\lambda_i$. In other words, the eigenvectors of $B$ are exactly the unique (up to scalar multiples) eigenvectors of $A$. We summarize this next.

Lemma 2.2.
If a matrix $A$ has simple eigenvalues, then $A$ and $B$ are simultaneously diagonalizable iff they commute. In that case, the diagonalizing basis is made up of the eigenvectors of $A$.

This statement gives a constructive procedure for simultaneously diagonalizing a set $\mathcal{M}$ of mutually commuting matrices. If we can find one matrix $A \in \mathcal{M}$ with simple eigenvalues, then find its eigenvectors, those will yield the simultaneously diagonalizing transformation for the entire set. This is the procedure used for circulant matrices in Section 4, where the "shift operator" $S$ or its adjoint $S^*$ plays the role of the matrix with simple eigenvalues. The diagonalizing transformation for $S^*$ yields the standard DFT. We will see that we can also produce other, equivalent versions of the DFT if we use eigenvectors of $S$ instead, or eigenvectors of $S^p$ with $(p, n)$ co-prime.

3. Structural Properties of Circulant Matrices. The structure of circulant matrices is most clearly expressed using modular arithmetic. In some sense, modular arithmetic "encodes" the symmetry properties of circulant matrices. We begin with a geometric view of modular arithmetic by relating it to rotations of roots of unity. We then show the "rotation invariance" of the action of circulant matrices, and finally connect that with circular convolution.

3.1. Modular Arithmetic, $\mathbb{Z}_n$, and Circular Shifts. To understand the symmetry properties of circulant matrices, it is useful to first study and establish some simple properties of the set $\mathbb{Z}_n := \{0, 1, \cdots, n-1\}$ of integers modulo $n$. The arithmetic in $\mathbb{Z}_n$ is modular arithmetic, that is, we say $k$ equals $l$ modulo $n$ if $k - l$ is an integer multiple of $n$. The following notation can be used to describe this formally

$$ k = l \ (\mathrm{mod}\ n) \quad \text{or} \quad k \equiv_n l \quad \Longleftrightarrow \quad \exists\, m \in \mathbb{Z}, \ \text{s.t.} \ k - l = m\, n. $$

Thus for example $n \equiv_n 0$, and $n + 1 \equiv_n 1$ and so on.
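The equivalence $k \equiv_n l$ maps directly onto integer remainders. The sketch below is a minimal illustration; the function name `equiv_mod` and the choice $n = 5$ are made up for this example. It relies on the fact that Python's `%` operator returns a representative in $\{0, \ldots, n-1\}$ even for negative integers, which is convenient for index arithmetic such as $-1 \equiv_n n-1$.

```python
def equiv_mod(k, l, n):
    """True when k - l is an integer multiple of n, i.e. k and l are equal modulo n."""
    return (k - l) % n == 0

n = 5  # hypothetical modulus

# Representatives in {0, ..., n-1} of a few integers, including a negative one.
reps = [k % n for k in (-1, 0, n, n + 1, 2 * n - 1)]

print(equiv_mod(n, 0, n), equiv_mod(n + 1, 1, n), equiv_mod(2, 0, n))
print(reps)
```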
There are two equivalent ways to define (and think about) $\mathbb{Z}_n$, one mathematically formal and the other graphical. The first is to consider the set of all integers $\mathbb{Z}$ and regard any two integers $k$ and $l$ such that $k - l$ is a multiple of $n$ as equivalent, or more precisely as members of the same equivalence class. The infinite set of integers $\mathbb{Z}$ becomes a finite set of equivalence classes with this equivalence relation. This is illustrated in Figure 3.1a, where elements of $\mathbb{Z}_n$ are arranged in "vertical bins" which are the equivalence classes. Each equivalence class can be identified with any of its members. One choice is to identify the first one with the element 0, the second one with 1, and so on up to the $n$'th class identified with the integer $n - 1$.

Fig. 3.1: (a) Definition of $\mathbb{Z}_n$ as the decomposition of the integers $\mathbb{Z}$ into equivalence classes, each indicated as a "vertical bin". Two integers in $\mathbb{Z}$ belong to the same equivalence class (and represent the same element of $\mathbb{Z}_n$) if they differ by an integer multiple of $n$. (b) Another depiction of the decomposition where two integers that are vertically aligned in this figure belong to the same equivalence class. The arithmetic in $\mathbb{Z}_n$ is just angle addition in this diagram. For example, $(-1) + (n+1) \equiv_n 0 \equiv_n n$. (c) Using the set $\{0, 1, \cdots, n-1\}$ as $\mathbb{Z}_n$. A few equivalent members are shown, and the arithmetic of $\mathbb{Z}_n$ is just angle addition here. This can be thought of as the "top view" of (b). (d) The $n$th roots of unity $\rho_m := \exp\left(i \frac{2\pi}{n} m\right)$ lying on the unit circle in the complex plane. Identifying $\rho_m$ with $m \in \mathbb{Z}_n$ shows that complex multiplication on $\{\rho_m\}$ (which corresponds to angle addition) is equivalent to modular addition in $\mathbb{Z}_n$.
Figure 3.1c also shows how elements of $\mathbb{Z}_n$ can be arranged on a discrete circle so that the arithmetic in $\mathbb{Z}_n$ is identified with angle addition. One more useful isomorphism is between $\mathbb{Z}_n$ and the $n$th roots of unity $\rho_m := e^{i \frac{2\pi}{n} m}$, $m = 0, \ldots, n-1$. The complex numbers $\{\rho_m\}$ lie on the unit circle, each at a corresponding angle of $\frac{2\pi}{n} m$ counter-clockwise from the real axis (Figure 3.1d). Complex multiplication on $\{\rho_m\}$ corresponds to addition of their corresponding angles, and the mapping $\rho_m \to m$ is an isomorphism from complex multiplication on $\{\rho_m\}$ to modular arithmetic in $\mathbb{Z}_n$.

Using modular arithmetic, we can write down the definition of a circulant matrix (1.1) by specifying the $kl$'th entry¹ of the matrix $C_a$ as

$$ (C_a)_{kl} := a_{k-l}, \qquad k, l \in \mathbb{Z}_n, \tag{3.1} $$

where we use (mod $n$) arithmetic for computing $k - l$. It is clear that with this definition, the first column of $C_a$ is just the sequence $a_0, a_1, \cdots, a_{n-1}$. The second column is given by the sequence $\{a_{k-1}\}$ and is thus $a_{-1}, a_0, \cdots, a_{n-2}$, which is exactly the sequence $a_{n-1}, a_0, \cdots, a_{n-2}$, i.e. a circular shift of the first column. Similarly each subsequent column is a circular shift of the column preceding it.

¹ Here, and in this entire tutorial, matrix rows and columns are indexed from 0 to $n - 1$ rather than the more traditional 1 through $n$ indexing. This alternative indexing significantly simplifies notation, and corresponds more directly to modular arithmetic.

Finally, it is useful to visualize an $n$-vector $x := (x_0, \ldots, x_{n-1})$ as a set of numbers arranged at equidistant points along a circle, or equivalently as a function on the discrete circle. This is illustrated in Figure 3.2. Note the difference between this figure and Figure 3.1, which depicts the elements of $\mathbb{Z}_n$ and modular arithmetic. Figure 3.2 instead depicts vectors as a set of numbers arranged in a discrete circle, or as functions on $\mathbb{Z}_n$.

Fig. 3.2: A vector $x := (x_0, \ldots, x_{n-1})$ visualized as (a) a set of numbers arranged counter-clockwise on a discrete circle, or equivalently (b) as a function $x : \mathbb{Z}_n \to \mathbb{C}$ on the discrete circle $\mathbb{Z}_n$. (c) An $n$-periodic function on the integers $\mathbb{Z}$ can equivalently be viewed as a function on $\mathbb{Z}_n$ as in (b).

A function on $\mathbb{Z}_n$ can also be thought of as a periodic function (with period $n$) on the set of integers $\mathbb{Z}$ (Figure 3.2c). In this case, periodicity of the function is expressed by the condition

$$ x_{t+n} = x_t \quad \text{for all } t \in \mathbb{Z}. \tag{3.2} $$

It is however more natural to view periodic functions on $\mathbb{Z}$ as just functions on $\mathbb{Z}_n$. In this case, periodicity of the function is simply "encoded" in the modular arithmetic of $\mathbb{Z}_n$, and condition (3.2) does not need to be explicitly stated.

3.2. Symmetry Properties of Circulant Matrices. Amongst all circulant matrices, there is a special one. Let $S$ and its adjoint $S^*$ be the circular shift operators defined by the following action on vectors

$$ S\,(x_0, \cdots, x_{n-2}, x_{n-1}) = (x_{n-1}, x_0, \cdots, x_{n-2}), \qquad S^*(x_0, x_1, \cdots, x_{n-1}) = (x_1, \cdots, x_{n-1}, x_0). $$

$S$ is therefore called the circular right-shift operator while $S^*$ is the circular left-shift operator. It is clear that $S^*$ is the inverse of $S$, and it is easy to show that it is the adjoint of $S$. The latter fact also becomes clear upon examining the matrix representations of $S$ and $S^*$

$$ Sx = \begin{bmatrix} 0 & & & 1 \\ 1 & \ddots & & \\ & \ddots & \ddots & \\ & & 1 & 0 \end{bmatrix} \begin{bmatrix} x_0 \\ x_1 \\ \vdots \\ x_{n-1} \end{bmatrix} = \begin{bmatrix} x_{n-1} \\ x_0 \\ \vdots \\ x_{n-2} \end{bmatrix}, \qquad S^* x = \begin{bmatrix} 0 & 1 & & \\ & \ddots & \ddots & \\ & & \ddots & 1 \\ 1 & & & 0 \end{bmatrix} \begin{bmatrix} x_0 \\ x_1 \\ \vdots \\ x_{n-1} \end{bmatrix} = \begin{bmatrix} x_1 \\ \vdots \\ x_{n-1} \\ x_0 \end{bmatrix}, $$

which shows that $S^*$ is indeed the transpose (and therefore the adjoint) of $S$.
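The two shift operators are easy to sketch in code, both as index operations and as matrices. In the snippet below the helper names and the sample vector are illustrative assumptions; the checks confirm, for one example, that the matrix of $S$ reproduces the right shift, that its transpose acts as the left shift $S^*$, and that $S^*$ undoes $S$.

```python
def shift_right(x):
    """Circular right shift S: (x_0, ..., x_{n-1}) -> (x_{n-1}, x_0, ..., x_{n-2})."""
    n = len(x)
    return [x[(k - 1) % n] for k in range(n)]

def shift_left(x):
    """Circular left shift S*: (x_0, ..., x_{n-1}) -> (x_1, ..., x_{n-1}, x_0)."""
    n = len(x)
    return [x[(k + 1) % n] for k in range(n)]

def shift_matrix(n):
    """Matrix of S: entry (k, l) is 1 when k - l equals 1 modulo n, else 0."""
    return [[1 if (k - l) % n == 1 % n else 0 for l in range(n)] for k in range(n)]

def transpose(M):
    return [list(col) for col in zip(*M)]

def matvec(M, x):
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

x = [10, 20, 30, 40]          # hypothetical sample vector
S = shift_matrix(len(x))
S_star = transpose(S)

print(matvec(S, x))           # right shift of x
print(matvec(S_star, x))      # left shift of x
```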
Note that both matrix representations are circulant matrices since $S = C_{(0,1,0,\ldots,0)}$ and $S^* = C_{(0,\ldots,0,1)}$ in the notation of (1.1). The actions of $S$ and $S^*$ expressed in terms of vector indices are

$$ (Sx)_k := x_{k-1}, \qquad (S^* x)_k := x_{k+1}, \qquad k \in \mathbb{Z}_n, \tag{3.3} $$

where modular arithmetic is used for computing vector indices. For example $(Sx)_0 = x_{0-1} = x_{n-1}$, since $-1 \equiv_n n-1$.

An important property of $S$ is that it commutes with any circulant matrix. One way to see this is to observe that for any matrix $M$, left (right) multiplication by $S$ amounts to row (column) circular permutation. A brief look at the circulant structure in (1.1) shows that a row circular permutation gives the same matrix as a column circular permutation. Therefore, for any circulant matrix $C_a$, we have $S C_a = C_a S$.

Fig. 3.3: Illustration of the circular shift-invariance property of the matrix-vector product $y = Mx$ in a commutative diagram. $Sx$ is the circular shift of a vector, depicted also as a counter-clockwise rotation of the vector components arranged on the discrete circle. A matrix $M$ has the shift invariance property if $SM = MS$. In this diagram, this means that the action of $M$ on the rotated vector $Sx$ is equal to acting on $x$ with $M$ (to produce $y = Mx$) first, and then rotating the resulting vector to yield $Sy$. A matrix $M$ has this shift-invariance property iff it is circulant.

A more detailed argument is as follows. Note that the matrix representation of $S$ implies that its $ij$'th entry is given by $(S)_{ij} = \delta_{i-j-1}$.
Now let $C_a$ be any circulant matrix, and observe that

$$ (S C_a)_{ij} = \sum_l S_{il} (C_a)_{lj} = \sum_l \delta_{i-l-1}\, a_{l-j} = \sum_l \delta_{(i-1)-l}\, a_{l-j} = a_{i-1-j}, $$
$$ (C_a S)_{ij} = \sum_l (C_a)_{il} S_{lj} = \sum_l a_{i-l}\, \delta_{l-j-1} = \sum_l a_{i-l}\, \delta_{l-(j+1)} = a_{i-j-1}, $$

where (3.1) is used for the entries of $C_a$. Thus $S$ commutes with any circulant matrix. The converse is also true (see Exercise A.1 in the Appendix), and we state these conclusions in the next lemma.

Lemma 3.1. A matrix $M$ is circulant iff it commutes with the circular shift operator $S$, i.e. $SM = MS$.

Note a simple corollary that a matrix is circulant iff it commutes with $S^*$ since

$$ SM = MS \quad \Longleftrightarrow \quad S^* S M S^* = S^* M S S^* \quad \Longleftrightarrow \quad M S^* = S^* M, $$

which could be an alternative statement of the Lemma.

The fact that a circulant matrix commutes with $S$ could have been used as a definition of a circulant matrix, with the structure in (1.1) derived as a consequence. Commutation with $S$ also expresses a shift invariance property. If we think of an $n$-vector $x$ as a function on $\mathbb{Z}_n$ (Figure 3.2b), then $SMx = MSx$ means that the action of $M$ on $x$ is shift invariant. Geometrically, $Sx$ is a counter-clockwise rotation of the function $x$ in Figure 3.2b. $S(Mx) = M(Sx)$ means that rotating the result of the action of $M$ on $x$ is the same as rotating $x$ first and then acting with $M$. This property is illustrated graphically in Figure 3.3.

Fig. 3.4: Graphical illustration of circular convolution $y_k = \sum_{l=0}^{n-1} a_{k-l} x_l$ for $k = 0, 1, -1$ respectively. The $a$-vector is arranged in reverse orientation, and then each $y_k$ is calculated from the dot product of $x$ and the reverse-oriented $a$-vector rotated by $k$ steps counter-clockwise.

3.3. Circular Convolution.
We will start with examining the matrix-vector product when the matrix is circulant. By analyzing this product, we will obtain the circular convolution of two vectors. Let $C_a$ be some circulant matrix, and examine the action of such a matrix on any vector $x = (x_0, x_1, \cdots, x_{n-1})$. The matrix-vector multiplication $y = C_a x$ in detail reads

$$ y = \begin{bmatrix} y_0 \\ y_1 \\ \vdots \\ y_{n-1} \end{bmatrix} = \begin{bmatrix} a_0 & a_{n-1} & \cdots & a_1 \\ a_1 & a_0 & & a_2 \\ \vdots & & \ddots & \vdots \\ a_{n-1} & a_{n-2} & \cdots & a_0 \end{bmatrix} \begin{bmatrix} x_0 \\ x_1 \\ \vdots \\ x_{n-1} \end{bmatrix} = C_a x. \tag{3.4} $$

Using $(C_a)_{kl} = a_{k-l}$, this matrix-vector multiplication can be rewritten as

$$ y_k = \sum_{l=0}^{n-1} (C_a)_{kl}\, x_l = \sum_{l=0}^{n-1} a_{k-l}\, x_l. \tag{3.5} $$

This can be viewed as an operation on the two vectors $a$ and $x$ to yield the vector $y$, and allows us to reinterpret the matrix-vector product of a circulant matrix as follows.

Definition 3.2. Given two $n$-vectors $a$ and $x$, their circular convolution $y = a \star x$ is another $n$-vector defined by

$$ y = a \star x \quad \Longleftrightarrow \quad y_k = \sum_{l=0}^{n-1} a_{k-l}\, x_l, \tag{3.6} $$

where the indices in the sum are evaluated modulo $n$.

Comparing (3.5) with (3.6), we see that multiplying a vector by a circulant matrix is equivalent to convolving the vector with the vector defining the circulant matrix

$$ y = C_a x = a \star x. \tag{3.7} $$

The sum in (3.6) defining circular convolution has a nice circular visualization due to modular arithmetic on $\mathbb{Z}_n$. This is illustrated in Figure 3.4. The elements of $x$ are arranged in a discrete circle counter-clockwise, while the elements of $a$ are arranged in a circle clockwise (the reverse orientation is because elements of $x$ are indexed like $x_l$ while those of $a$ are indexed like $a_{\cdot - l}$ in the definition (3.6)). For each $k$, the array $a$ is rotated counter-clockwise by $k$ steps (Figure 3.4 shows cases for three different values of $k$). The number $y_k$ in (3.6) is then obtained by multiplying the $x$ and rotated $a$ arrays element-wise, and then summing. This generates the $n$ numbers $y_0, \ldots, y_{n-1}$.

From the definition, it is easy to show (see Exercise A.3 in the Appendix) that circular convolution is associative and commutative.
• Associativity: for any three $n$-vectors $a$, $b$ and $c$ we have $a \star (b \star c) = (a \star b) \star c$
• Commutativity: for any two $n$-vectors $a$ and $b$ we have $a \star b = b \star a$

The above two facts have several interesting implications. First, since convolution is commutative, the matrix-vector product (3.4) can be written in two equivalent ways

$$ C_a x = a \star x = x \star a = C_x a. $$

Applying this fact in succession to two circulant matrices gives

$$ C_b C_a x = C_b (a \star x) = b \star (a \star x) = (b \star a) \star x = C_{b \star a}\, x. $$

This means that the product of any two circulant matrices $C_b$ and $C_a$ is another circulant matrix $C_{b \star a}$ whose defining vector is $b \star a$, the circular convolution of the defining vectors of $C_b$ and $C_a$ respectively. We summarize this conclusion and an important corollary of it next.

Theorem 3.3.
1. Circular convolution of any two vectors can be written as a matrix-vector product with a circulant matrix: $a \star x = C_a x = C_x a$.
2. The product of any two circulant matrices is another circulant matrix: $C_a C_b = C_{a \star b}$.
3. All circulant matrices mutually commute since for any two $C_a$ and $C_b$: $C_a C_b = C_{a \star b} = C_{b \star a} = C_b C_a$.

The set of all $n$-vectors forms a commutative algebra under the operation of circular convolution. The above shows that the set of $n \times n$ circulant matrices under standard matrix multiplication is also a commutative algebra isomorphic to $n$-vectors with circular convolution.

4. Simultaneous Diagonalization of all Circulant Matrices Yields the DFT. In this section, we will derive the DFT as a byproduct of diagonalizing circulant matrices. Since all circulant matrices mutually commute, we recall Lemma 2.2 and look for a circulant matrix that has simple eigenvalues. The eigenvectors of that matrix will then give the simultaneously diagonalizing transformation.
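The commutation property that this section relies on (Theorem 3.3) can be checked numerically on small examples. The following sketch uses integer vectors so that all comparisons are exact; the helper names and the sample vectors `a`, `b`, `x` are assumptions made for illustration, and a passing check on one example is of course not a proof.

```python
def circulant(a):
    """C_a with entry (k, l) equal to a[(k - l) mod n], as in (3.1)."""
    n = len(a)
    return [[a[(k - l) % n] for l in range(n)] for k in range(n)]

def circ_conv(a, x):
    """Circular convolution (3.6): y_k = sum_l a[(k - l) mod n] * x[l]."""
    n = len(a)
    return [sum(a[(k - l) % n] * x[l] for l in range(n)) for k in range(n)]

def matvec(M, x):
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]

def matmul(X, Y):
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

a = [1, 2, 3, 4]      # hypothetical sample vectors
b = [0, 1, 0, 2]
x = [5, -1, 2, 7]
Ca, Cb = circulant(a), circulant(b)

item1 = matvec(Ca, x) == circ_conv(a, x) == matvec(circulant(x), a)   # a * x = C_a x = C_x a
item2 = matmul(Ca, Cb) == circulant(circ_conv(a, b))                  # C_a C_b = C_{a * b}
item3 = matmul(Ca, Cb) == matmul(Cb, Ca)                              # circulants commute

# Convolving with (0, 1, 0, 0) is a circular right shift, since S = C_(0,1,0,...,0).
shifted = circ_conv(a, [0, 1, 0, 0])

print(item1, item2, item3, shifted)
```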
The shift operator is in some sense the most fundamental circulant matrix, and is therefore a good candidate for an eigenvector/eigenvalue decomposition. The eigenvalue problem for $S$ will turn out to be the simplest one. Note that we have two options: to find eigenvectors of $S$, or alternatively of $S^*$. We begin with $S^*$ since this will end up yielding the classically defined DFT.

4.1. Construction of Eigenvectors/Eigenvalues of $S^*$. Let $w$ be an eigenvector (with eigenvalue $\lambda$) of the shift operator $S^*$. Note that it is also an eigenvector (with eigenvalue $\lambda^l$) of any power $(S^*)^l$ of $S^*$. Applying the definition (3.3) to the relation $S^* w = \lambda w$ will reveal that an eigenvector $w$ has a very special structure

$$ \begin{aligned} S^* w = \lambda w \quad &\Longleftrightarrow \quad w_{k+1} = \lambda\, w_k, && k \in \mathbb{Z}_n, \\ (S^*)^l w = \lambda^l w \quad &\Longleftrightarrow \quad w_{k+l} = \lambda^l\, w_k, && k \in \mathbb{Z}_n, \ l \in \mathbb{Z}, \end{aligned} \tag{4.1} $$

i.e. each entry $w_{k+1}$ of $w$ is equal to the previous entry $w_k$ multiplied by the eigenvalue $\lambda$. These relations can be used to compute all eigenvectors/eigenvalues of $S^*$. First, observe that although (4.1) is valid for all $l \in \mathbb{Z}$, this relation "repeats" for $l \geq n$. In particular, for $l = n$ we have for each index $k$

$$ w_{k+n} = \lambda^n w_k \quad \Longleftrightarrow \quad w_k = \lambda^n w_k \tag{4.2} $$

since $k + n \equiv_n k$. Now since the vector $w \neq 0$, then for at least one index $k$, $w_k \neq 0$, and the last equality implies that $\lambda^n = 1$, i.e. any eigenvalue of $S^*$ must be an $n$th root of unity

$$ \lambda^n = 1 \quad \Longleftrightarrow \quad \lambda = \rho_m := e^{i \frac{2\pi}{n} m}, \qquad m \in \mathbb{Z}_n. $$

Thus we have discovered that the $n$ eigenvalues of $S^*$ are precisely the $n$ distinct $n$th roots of unity $\{\rho_m, \ m = 0, \ldots, n-1\}$. Note that any of the $n$th roots of unity can be expressed as a power of the first $n$th root: $\rho_m = \rho_1^m$ (recall Figure 3.1d).

Now fix $m \in \mathbb{Z}_n$ and compute $w^{(m)}$, the eigenvector corresponding to the eigenvalue $\rho_m$.
Apply the last relation in (4.1), $w_{k+l} = \lambda^l w_k$, and use it to express the entries of the eigenvector $w^{(m)}$ in terms of the first entry ($k = 0$)
$$w^{(m)}_{l+0} = \lambda^l w_0 \quad \Leftrightarrow \quad w^{(m)}_l = \rho_m^l\, w_0 \quad \Leftrightarrow \quad w^{(m)} = w_0 \left( 1,\ \rho_m,\ \rho_m^2,\ \ldots,\ \rho_m^{n-1} \right). \tag{4.3}$$
Note that $w_0$ is a scalar, and since eigenvectors are only unique up to multiplication by a scalar, we can set $w_0 = 1$ for a more compact expression for the eigenvector. In addition, $\rho_m$ in (4.3) could be any of the $n$th roots of unity, and thus that expression applies to all of them, yielding the $n$ eigenvectors. We summarize the previous derivations in the following statement.

Lemma 4.1. The circular left-shift operator $S^*$ on $\mathbb{R}^n$ has $n$ distinct eigenvalues. They are the $n$th roots of unity
$$\rho_m := e^{i \frac{2\pi}{n} m} = \rho_1^m =: \rho^m, \quad m \in \mathbb{Z}_n.$$
The corresponding eigenvectors are
$$w^{(m)} = \left( 1,\ \rho^m,\ \rho^{2m},\ \ldots,\ \rho^{m(n-1)} \right), \quad m = 0, \ldots, n-1. \tag{4.4}$$

Note that the eigenvectors $w^{(m)}$ are indexed with the same index as the eigenvalues $\{\lambda_m = \rho^m\}$. It is useful and instructive to visualize the eigenvalues and their corresponding eigenvectors as specially ordered sets of the roots of unity. Which roots of unity enter into any particular eigenvector, as well as their ordering, is determined by the algebra of rotations of roots of unity. This is illustrated in detail in Figure 4.1.

4.2. Eigenvalues Calculation of a Circulant Matrix Yields the DFT.

Now that we have calculated all the eigenvectors of the shift operator in Lemma 4.1, we can use them to find the eigenvalues of any circulant matrix $C_a$.
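Before using them, the eigenpairs of Lemma 4.1 can be spot-checked numerically. The following is a minimal sketch, assuming the left shift acts as $(S^* w)_k = w_{k+1}$ with indices mod $n$, as in (4.1); the function names `left_shift` and `shift_eigvec` are hypothetical.

```python
import cmath


def left_shift(w):
    """Circular left shift S*: (S* w)_k = w_{k+1}, indices taken mod n."""
    return w[1:] + w[:1]


def shift_eigvec(m, n):
    """w^(m) = (1, rho^m, rho^(2m), ..., rho^(m(n-1))) with rho = exp(i 2 pi / n)."""
    rho_m = cmath.exp(2j * cmath.pi * m / n)
    return [rho_m ** k for k in range(n)]


n = 8
max_err = 0.0
for m in range(n):
    rho_m = cmath.exp(2j * cmath.pi * m / n)
    w = shift_eigvec(m, n)
    # Residual of the eigenvalue relation S* w = rho^m w, entry by entry.
    residual = [sw - rho_m * wk for sw, wk in zip(left_shift(w), w)]
    max_err = max(max_err, max(abs(r) for r in residual))

print(max_err)  # expected to be at floating-point round-off level
```

The residual is not exactly zero only because the roots of unity are computed in floating point.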
[Figure 4.1: eight panels on the unit circle, one per eigenvector, labeled by exponents of $\rho$: $w^{(0)} = (0,0,0,0,0,0,0,0)$, $w^{(1)} = (0,1,2,3,4,5,6,7)$, $w^{(2)} = (0,2,4,6,0,2,4,6)$, $w^{(3)} = (0,3,6,1,4,7,2,5)$, $w^{(4)} = (0,4,0,4,0,4,0,4)$, $w^{(5)} = (0,5,2,7,4,1,6,3)$, $w^{(6)} = (0,6,4,2,0,6,4,2)$, $w^{(7)} = (0,7,6,5,4,3,2,1)$.]

Fig. 4.1: Visualization of the eigenvalues and eigenvectors of the left shift operator $S^*$ for the case $n = 8$. The eigenvalues (red straight lines and red labels), and elements of the corresponding eigenvectors (blue dots) are all points on the unit circle of the complex plane. $\rho$ is the first $n$th root of unity (here $\rho = e^{i\pi/4}$). For each $m \in \mathbb{Z}_n$, $\rho^m$ is an eigenvalue with eigenvector $w^{(m)} = \left(1, \rho^m, \rho^{2m}, \ldots, \rho^{m(n-1)}\right)$, where each element is a rotation of the previous element by $\rho^m$ (curvy red arrows). For compactness of notation, vectors are denoted above by powers of $\rho$, e.g. $w^{(2)} = \left(1, \rho^2, \rho^4, \rho^6, 1, \rho^2, \rho^4, \rho^6\right) = (0, 2, 4, 6, 0, 2, 4, 6)$. Notice the pattern that which powers of $\rho$ appear in $w^{(m)}$ depends on the greatest common divisor (gcd) of $m$ and $n$: the number of distinct powers is $n/\gcd(m, n)$, e.g. in $w^{(4)}$ that number is $8/\gcd(4, 8) = 2$. For $(m, n)$ coprime, all powers of $\rho$ appear in $w^{(m)}$, though with permuted ordering (see $w^{(1)}$, $w^{(3)}$, $w^{(5)}$, $w^{(7)}$).

Recall that since any circulant matrix commutes with $S^*$, and $S^*$ has distinct eigenvalues, then $C_a$ has the same eigenvectors as those (4.4) previously found for $S^*$ (by Lemma 2.2). Thus we have the relation
$$C_a w^{(m)} = \lambda_m w^{(m)} \quad \Leftrightarrow \quad \begin{bmatrix} a_0 & a_{n-1} & \cdots & a_1 \\ a_1 & a_0 & \cdots & a_2 \\ \vdots & \vdots & \ddots & \vdots \\ a_{n-1} & a_{n-2} & \cdots & a_0 \end{bmatrix} \begin{bmatrix} 1 \\ \rho_m \\ \vdots \\ \rho_m^{n-1} \end{bmatrix} = \lambda_m \begin{bmatrix} 1 \\ \rho_m \\ \vdots \\ \rho_m^{n-1} \end{bmatrix}, \tag{4.5}$$
where $\{\lambda_m\}$ are the eigenvalues of $C_a$ (not the eigenvalues of $S^*$ found in the previous section). Each row of the above equation represents essentially the same equation (but multiplied by a power of $\rho_m$).
The first row is the easiest equation to work with
$$\begin{aligned} \lambda_m &= a_0 + a_{n-1}\,\rho_m + \cdots + a_1\,\rho_m^{n-1} = a_0 + a_1\,\rho_m^{-1} + \cdots + a_{n-1}\,\rho_m^{-(n-1)} \\ &= \sum_{l=0}^{n-1} a_l\, \rho_m^{-l} = \sum_{l=0}^{n-1} a_l\, \rho^{-ml} = \sum_{l=0}^{n-1} a_l\, e^{-i \frac{2\pi}{n} ml} =: \hat{a}_m, \end{aligned} \tag{4.6}$$
where the second equality uses $\rho_m^{n} = 1$, so that $\rho_m^{n-l} = \rho_m^{-l}$. This is precisely the classically-defined DFT (1.2) of the vector $a$.

We therefore conclude that any circulant matrix $C_a$ is diagonalizable by the basis (4.4). Its $n$ eigenvalues are given by $(\hat{a}_0, \hat{a}_1, \ldots, \hat{a}_{n-1})$ from (4.6), which is the DFT of the vector $(a_0, a_1, \ldots, a_{n-1})$. In this way, the DFT arises from a formula for computing the eigenvalues of any circulant matrix.

One might ask what the conclusion would have been if the eigenvectors of $S$ had been used instead of those of $S^*$. A repetition of the previous steps, but now for the case of $S$, would yield that the eigenvalues of a circulant matrix $C_a$ are given by
$$\mu_k = \sum_{l=0}^{n-1} a_l\, e^{i \frac{2\pi}{n} kl}, \quad k = 0, 1, \ldots, n-1. \tag{4.7}$$
While the expressions (4.6) and (4.7) may at first appear different, the sets of numbers $\{\lambda_m\}$ and $\{\mu_k\}$ are actually equal. In fact, the expression (4.7) gives the same set of eigenvalues as (4.6) but arranged in a different order, since $\mu_k = \lambda_{-k}$:
$$\lambda_{-k} = \sum_{l=0}^{n-1} a_l\, e^{-i \frac{2\pi}{n} (-k) l} = \sum_{l=0}^{n-1} a_l\, e^{i \frac{2\pi}{n} kl} = \mu_k.$$

Along with the two choices of $S$ and $S^*$, there are also other possibilities. Let $p$ be any number that is coprime with $n$. It is easy to show (Exercise A.2 in Appendix A) that an $n \times n$ matrix is circulant iff it commutes with $S^p$. In addition, the eigenvalues of $S^p$ are distinct (see Figure 4.1). Therefore the eigenvectors of $S^p$ (rather than those of $S$) can be used to simultaneously diagonalize all circulant matrices. This would yield yet another transform distinct from the two transforms (4.6) or (4.7).
However, the set of numbers produced from that transform will still be the same as those computed from the previous two transforms, but arranged in a different ordering.

5. The Big Picture.

Let $C_a$ be a circulant matrix made from a vector $a$ as in (1.1). If we use the eigenvectors (4.4) of $S^*$ as columns of a matrix $W$, the $n$ eigenvalue/eigenvector relationships (4.5), $C_a w^{(m)} = \lambda_m w^{(m)}$, can be written as a single matrix equation as follows
$$C_a \begin{bmatrix} w^{(0)} & \cdots & w^{(n-1)} \end{bmatrix} = \begin{bmatrix} w^{(0)} & \cdots & w^{(n-1)} \end{bmatrix} \begin{bmatrix} \hat{a}_0 & & \\ & \ddots & \\ & & \hat{a}_{n-1} \end{bmatrix} \quad \Longleftrightarrow \quad C_a W = W \operatorname{diag}(\hat{a}), \tag{5.1}$$
where we have used the fact (4.6) that the eigenvalues of $C_a$ are precisely $\{\hat{a}_m\}$, the elements of the DFT of the vector $a$. It is easy to verify that the columns of $W$ are mutually orthogonal$^2$, and thus $W$ is a unitary matrix (up to a rescaling)
$$W^* W = W W^* = nI, \quad \text{or equivalently} \quad W^{-1} = \tfrac{1}{n} W^*.$$

$^2$ This also follows from the fact that the columns of $W$ are the eigenvectors of $S^*$, and since $S^*$ is a normal matrix, it has mutually orthogonal eigenvectors.

Since the matrix $W$ is made up of the eigenvectors of $S^*$, which in turn are made up of various powers of the roots of unity (4.4), it has some special structure which is worth examining
$$W := \begin{bmatrix} w^{(0)} & \cdots & w^{(n-1)} \end{bmatrix} = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ 1 & \rho & \cdots & \rho^{n-1} \\ \vdots & \vdots & & \vdots \\ 1 & \rho^{n-1} & \cdots & \rho^{(n-1)(n-1)} \end{bmatrix}.$$
The matrix $W$ is symmetric; $W^*$ is thus the matrix $W$ with each entry replaced by its complex conjugate. Furthermore, since for each root of unity $\left(\rho^k\right)^* = \rho^{-k}$, we can write
$$W^* = \begin{bmatrix} 1 & 1 & \cdots & 1 \\ 1 & \rho^{-1} & \cdots & \rho^{-(n-1)} \\ \vdots & \vdots & & \vdots \\ 1 & \rho^{-(n-1)} & \cdots & \rho^{-(n-1)(n-1)} \end{bmatrix}.$$
Also observe that multiplying a vector by $W^*$ is exactly taking its DFT. Indeed, the $m$'th entry of $W^* x$ is
$$\hat{x}_m = \begin{bmatrix} 1 & \rho^{-m} & \cdots & \rho^{-m(n-1)} \end{bmatrix} \begin{bmatrix} x_0 \\ \vdots \\ x_{n-1} \end{bmatrix},$$
which is exactly the definition (1.2) of the DFT. Similarly, multiplication by $\tfrac{1}{n} W$ is taking the inverse DFT
$$x_l = \frac{1}{n} \sum_{k=0}^{n-1} \hat{x}_k\, \rho^{kl} = \frac{1}{n} \sum_{k=0}^{n-1} \hat{x}_k\, e^{i \frac{2\pi}{n} kl}.$$
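The properties of $W$ stated above lend themselves to a small numerical check. The sketch below is illustrative only: it assumes the conventions $W_{km} = \rho^{km}$ and $(C_a)_{ij} = a_{i-j}$ (indices mod $n$), uses arbitrary test vectors, and all function names are hypothetical. It measures how far floating-point arithmetic is from $W^* W = nI$, from "$W^* x$ is the DFT of $x$", from "$\tfrac{1}{n} W$ inverts the DFT", and from the matrix identity (5.1).

```python
import cmath


def dft(x):
    """Classical DFT: x_hat_k = sum_l x_l exp(-i 2 pi k l / n)."""
    n = len(x)
    return [sum(x[l] * cmath.exp(-2j * cmath.pi * k * l / n) for l in range(n))
            for k in range(n)]


def eigvec_matrix(n):
    """W[k][m] = rho^(k m), so that column m is the eigenvector w^(m) of (4.4)."""
    return [[cmath.exp(2j * cmath.pi * k * m / n) for m in range(n)]
            for k in range(n)]


def circulant(a):
    """Circulant matrix with entries (C_a)_{ij} = a_{(i-j) mod n}."""
    n = len(a)
    return [[a[(i - j) % n] for j in range(n)] for i in range(n)]


def matmul(A, B):
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def matvec(A, x):
    return [sum(entry * xj for entry, xj in zip(row, x)) for row in A]


n = 6
a = [2, -1, 0, 3, 1, 0]   # arbitrary defining vector of C_a
x = [1, 4, -2, 0, 5, 3]   # arbitrary signal

W = eigvec_matrix(n)
W_star = [[W[j][i].conjugate() for j in range(n)] for i in range(n)]

# W* W = n I (unitary up to rescaling)
gram = matmul(W_star, W)
err_gram = max(abs(gram[i][j] - (n if i == j else 0))
               for i in range(n) for j in range(n))

# W* x is the DFT of x, and (1/n) W inverts it
x_hat = matvec(W_star, x)
err_dft = max(abs(u - v) for u, v in zip(x_hat, dft(x)))
x_back = [v / n for v in matvec(W, x_hat)]
err_inv = max(abs(u - v) for u, v in zip(x_back, x))

# (5.1): C_a W = W diag(a_hat)
a_hat = dft(a)
CW = matmul(circulant(a), W)
WD = [[W[i][m] * a_hat[m] for m in range(n)] for i in range(n)]
err_diag = max(abs(CW[i][m] - WD[i][m]) for i in range(n) for m in range(n))

print(err_gram, err_dft, err_inv, err_diag)  # all expected near round-off
```

In practice one would use a fast Fourier transform routine rather than the $O(n^2)$ sums above; the direct sums are used here only because they mirror the formulas in the text.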
Multiplying both sides of (5.1) from the right by $W^{-1}$ gives the diagonalization of $C_a$, which can be written in several equivalent forms
$$C_a = W \operatorname{diag}(\hat{a})\, W^{-1} = W \operatorname{diag}(\hat{a}) \left( \tfrac{1}{n} W^* \right) = \tfrac{1}{n}\, W \operatorname{diag}(\hat{a})\, W^* = \left( \tfrac{1}{\sqrt{n}} W \right) \operatorname{diag}(\hat{a}) \left( \tfrac{1}{\sqrt{n}} W^* \right). \tag{5.2}$$
The diagonalization (5.2) can be interpreted as follows in terms of the action of a circulant matrix $C_a$ on any vector $x$
$$C_a x = \underbrace{\tfrac{1}{n} W\ \underbrace{\operatorname{diag}(\hat{a})\ \underbrace{W^* x}_{\text{DFT of } x}}_{\text{multiply by } \hat{a} \text{ entrywise}}}_{\text{inverse DFT}}$$
Thus the action of $C_a$ on $x$, or equivalently the circular convolution of $a$ with $x$, can be performed by first taking the DFT of $x$, then multiplying the resulting vector component-wise by $\hat{a}$ (the DFT of the vector $a$ defining the matrix $C_a$), and then taking an inverse DFT. In other words, the diagonalization of a circulant matrix is equivalent to converting circular convolution to component-wise vector multiplication through the DFT. This is illustrated in Figure 5.1.

Note that in the literature there is an alternative form for the DFT and its inverse
$$\hat{x}_k = \frac{1}{\sqrt{n}} \sum_{l=0}^{n-1} x_l\, e^{-i \frac{2\pi}{n} kl}, \qquad x_l = \frac{1}{\sqrt{n}} \sum_{k=0}^{n-1} \hat{x}_k\, e^{i \frac{2\pi}{n} kl},$$
which is sometimes preferred due to its symmetry (and is also truly unitary since with this definition $\|x\|_2 = \|\hat{x}\|_2$). This "unitary" DFT corresponds to the last diagonalization given in (5.2). We do not adopt this unitary DFT definition here since it complicates$^3$ the statement that the eigenvalues of $C_a$ are precisely the entries of $\hat{a}$.

$^3$ If the unitary DFT is adopted, the equivalent statement would be that the eigenvalues of $C_a$ are the entries of $\sqrt{n}\, \hat{a}$.

[Figure 5.1: commutative diagram. Top row: $x \mapsto y$ by "convolve with vector $a$", equivalently "multiply by circulant $C_a$". Bottom row: $\hat{x} \mapsto \hat{y}$ by "multiply by $\operatorname{diag}(\hat{a}_0, \ldots, \hat{a}_{n-1})$". Vertical arrows: $W^*$ (downward) and $\tfrac{1}{n} W$ (upward).]

Fig. 5.1: Illustration of the relationships between circulant matrices, circular convolution and the DFT.
The matrix-vector multiplication $y = C_a x$ with the circulant matrix $C_a$ is equivalent to the circular convolution $y = a \star x$. The DFT is a linear transformation $W^*$ on vectors with inverse $\tfrac{1}{n} W$. It converts multiplication by the circulant matrix $C_a$ into multiplication by the diagonal matrix $\operatorname{diag}(\hat{a}_0, \ldots, \hat{a}_{n-1})$ whose entries are the DFT of the vector $a$ defining the matrix $C_a$.

We summarize the algebraic aspects of the big picture in the following theorem.

Theorem 5.1. The following sets are isomorphic commutative algebras:
(a) The set of $n$-vectors is closed under circular convolutions and is thus an algebra with the operations of addition and convolution.
(b) The set of $n \times n$ circulant matrices is an algebra under the operations of addition and matrix multiplication.
(c) The set of $n$-vectors is an algebra under the operations of addition and component-wise multiplication.
The above isomorphisms are depicted by the following diagram.

[Diagram: (a) $x, y \in \mathbb{R}^n$ with $x \star y$, circular convolution, is linked to (b) $C_x, C_y \in \mathbb{R}^{n \times n}$ with $C_x C_y$, matrix multiplication, by the maps "form $C_x$" and "extract column 1"; (a) is linked to (c) $\hat{x}, \hat{y} \in \mathbb{R}^n$ with $\hat{x}\hat{y}$, component-wise multiplication, by the maps "DFT" and "DFT$^{-1}$".]

6. Further Comments and Generalizations.

We end by briefly sketching two different ways in which the procedures described in this tutorial can be generalized. The first is generalizations to families of mutually commuting infinite matrices and linear operators. These families are characterized by commuting with shifts of functions defined on "time-axes" which can be identified with groups or semi-groups. This yields the familiar Fourier transform, Fourier series, and the z-transform. A second line of generalization is to families of matrices that do not commute. In this case we can no longer demand simultaneous diagonalization, but rather simultaneous block diagonalization whenever possible.
This is the subject of group representations, but we will only touch on the simplest of examples by way of illustration. The discussions in this section are meant to be brief sketches to motivate the interested reader into further exploration of the literature.

6.1. Fourier Transform, Fourier Series, and the z-Transform.

First we recap what these classical transforms are. They are summarized in Table 6.1. In a Signals and Systems course [1], these concepts are usually introduced as transforms on temporal signals, so we will use that language to refer to the independent variable as time, although it can have any other interpretation. As is the theme of this tutorial, the starting point should not be the signal transform, but rather the systems, or operators, that act on them and their respective invariance properties. We now formalize these properties.

| Time Axis | Transform | Frequency Axis (Frequency "Set") |
|---|---|---|
| $t \in \mathbb{R}$ | Fourier Transform: $F(\omega) := \int_{-\infty}^{\infty} f(t)\, e^{-j\omega t}\, dt$ | $j\omega \in j\mathbb{R}$, imaginary axis of $\mathbb{C}$ |
| $t \in \mathbb{Z}$ | z-Transform (bilateral): $F(z) := \sum_{t \in \mathbb{Z}} f(t)\, z^{-t}$ | $z = e^{j\theta} \in \mathbb{T}$, unit circle of $\mathbb{C}$ |
| $t \in \mathbb{T}$ | Fourier Series: $F(k) := \int_{0}^{2\pi} f(t)\, e^{-jkt}\, dt$ | $jk \in j\mathbb{Z}$, integers of imaginary axis of $\mathbb{C}$ |
| $t \in \mathbb{Z}_n$ | Discrete Fourier Transform (DFT): $F(k) := \sum_{t=0}^{n-1} f(t)\, e^{-j \frac{2\pi}{n} kt}$ | $k \in \mathbb{Z}_n$, $n$th roots of unity |

Table 6.1: A list of Fourier-type transforms. The left column lists the time axis over which a signal is defined, the middle column lists the common name and expression for the transform, and the right column lists the frequency axis, or more precisely the "set of frequencies" associated with that transform. The set of frequencies is typically identified with a subset of the complex plane $\mathbb{C}$. Note how all the transforms have the common form of integrating or summing the given signal against a function of the form $e^{-st}$, where the set of values ("frequencies") of $s \in \mathbb{C}$ is different for different transforms.
The time axes are the integers $\mathbb{Z}$ for discrete time and the reals $\mathbb{R}$ for continuous time. Moreover, the discrete circle $\mathbb{Z}_n$ and the continuous circle $\mathbb{T}$ are the time axes for discrete and continuous-time periodic signals respectively.

A common feature of the time axes $\mathbb{Z}$, $\mathbb{R}$, $\mathbb{T}$ and $\mathbb{Z}_n$ is that they all are commutative groups. In fact, they are the basic commutative groups. All other (so-called locally compact) commutative groups are made up of group products of those basic four [4].

Let's denote by $t, \tau, T$ elements of those groups $G = \mathbb{R}$, $\mathbb{Z}$, $\mathbb{T}$, or $\mathbb{Z}_n$. For any function $f : G \to \mathbb{R}$ (or $\mathbb{C}$) defined on such a group, there is a natural "time shift" operation
$$(S_T f)(t) := f(t - T), \tag{6.1}$$
which is the right shift (delay) of $f$ by $T$ time units. All that is needed to make sense of this operation is that for $t, T \in G$, we have $t - T \in G$, and that is guaranteed by the group structure for any of those four time sets.

Now consider a linear operator $A : \mathbb{C}^G \to \mathbb{C}^G$ acting on the vector space $\mathbb{C}^G$ of all scalar-valued functions on $G$, which can be any of the four time sets. We call such an operator time invariant (or shift invariant) if it commutes with all possible time-shift operations (6.1), i.e.
$$\forall\, T \in G, \quad S_T A = A S_T. \tag{6.2}$$
To conform with traditional terminology, we refer to such shift-invariant linear operators as Linear Time-Invariant (LTI) systems. They are normally described as differential or difference equations with a forcing term, or as convolutions of signals, amongst other representations. However, only the shift-invariance property (6.2), and not the details of those representations, is what's important in discovering the appropriate transform that simultaneously diagonalizes such operators.

There are additional technicalities in generalizing the previous techniques to sets of linear operators on infinite-dimensional spaces rather than matrices. The procedure however is very similar.
We identify the class of operators to be analyzed. This involves a shift (time) invariance property, which then implies that they all mutually commute. The "eigenvectors" of the shift operators give the simultaneously diagonalizing transform. The complication here is that eigenvectors may not exist in the classical sense (they do in the case of Fourier series, but not in the other cases). In addition, diagonalization will not necessarily correspond to finding a new basis of the vector space. In both the Fourier and z-transforms, the number of "linearly independent eigenfunctions" is not even countable, so they can't be thought of as forming a basis.

Fortunately, it is easy to circumvent these difficulties by generalizing the concept of diagonal matrices to multiplication operators. For linear operators on infinite-dimensional spaces, these play the same role as the diagonal matrices do on finite-dimensional spaces.

Definition 6.1. Let $\Omega$ be some set, and consider the vector space $\mathbb{C}^\Omega$ of all scalar-valued functions on $\Omega$. Given a particular scalar-valued function $a : \Omega \to \mathbb{C}$, we define the associated multiplication operator $M_a : \mathbb{C}^\Omega \to \mathbb{C}^\Omega$ by
$$\forall\, x \in \Omega, \quad (M_a f)(x) := a(x)\, f(x),$$
i.e. the point-wise multiplication of $f$ by $a$.

If $\Omega = \{1, \ldots, n\}$, then $\mathbb{C}^\Omega = \mathbb{C}^n$, the space of all complex $n$-vectors, and $M_a$ is simply represented by the diagonal matrix whose entries are made up of the entries of the vector $a$. The concept introduced above is however much more general. Note that it is immediate from the definition that all multiplication operators mutually commute, just like all diagonal matrices mutually commute.

Now we generalize the concept of diagonalizing matrices. Diagonalizing an operator, when possible, is done by converting it to a multiplication operator.

Definition 6.2.
A linear operator $A : H \to H$ on a vector space $H$ is said to be diagonalizable if there exists a function space $\mathbb{C}^\Omega$, and an invertible transformation $V : \mathbb{C}^\Omega \to H$ that converts $A$ into a multiplication operator $M_a$
$$V A V^{-1} = M_a,$$
for some function $a : \Omega \to \mathbb{C}$. The function $a$ is referred to as the symbol of the operator $A$, and $V$ is the diagonalizing transformation.

Thus, in contrast to the case of matrices, we may have to move to a different vector space to diagonalize a general linear operator. In some cases, we can still give a diagonalization an interpretation as a basis expansion in the same vector space. It provides a helpful contrast to consider such an example. Let an operator $A : H \to H$ have a countable set of eigenfunctions $\{v_m\}$ that span a Hilbert space $H$. Assume in addition that $A$ is normal, and thus the eigenfunctions are mutually orthonormal. An example of this situation is the case of shift-invariant operators on $\mathbb{T}$, which corresponds to the third entry in Table 6.1 (i.e. Fourier series). In that case we can take $\Omega = \mathbb{Z}$, and thus $\mathbb{C}^{\mathbb{Z}}$ is the space of all complex-valued bilateral sequences. In addition we can add a Hilbert space structure by using $\ell^2$ norms and consider $\ell^2(\mathbb{Z})$ as the space of sequences. The diagonalizing transformation$^4$ is $V : \ell^2(\mathbb{Z}) \to L^2(\mathbb{T})$, described as follows. Let $v_k(t) := e^{jkt}/\sqrt{2\pi}$ be the Fourier series elements. They are an orthonormal basis of $L^2(\mathbb{T})$. Consider any square-integrable function $f \in L^2(\mathbb{T})$ with Fourier series
$$F_k := \langle v_k, f \rangle = \frac{1}{\sqrt{2\pi}} \int_0^{2\pi} e^{-jkt} f(t)\, dt, \qquad f(t) = \sum_{k \in \mathbb{Z}} F_k\, v_k(t) = \frac{1}{\sqrt{2\pi}} \sum_{k \in \mathbb{Z}} F_k\, e^{jkt}.$$
The mapping $V : \ell^2(\mathbb{Z}) \to L^2(\mathbb{T})$ then simply identifies each function $f$ with its bilateral Fourier series coefficients
$$\{\ldots, F_{-1}, F_0, F_1, \ldots\} \quad \leftrightarrow \quad f = \sum_{k \in \mathbb{Z}} F_k\, v_k.$$
Plancherel's theorem guarantees that this mapping is a bijective isometry. Any shift-invariant operator $A$ on $L^2(\mathbb{T})$ (e.g.
those that can be written as circular convolutions) then has a diagonalization as a doubly infinite matrix
$$V A V^{-1} = \begin{bmatrix} \ddots & & & & \\ & \hat{a}_{-1} & & & \\ & & \hat{a}_0 & & \\ & & & \hat{a}_1 & \\ & & & & \ddots \end{bmatrix},$$
where the sequence $\{\hat{a}_k\}$ is made up of the eigenvalues of $A$.

The example (Fourier series) just discussed is a very special case. In general, we have to consider diagonalizing using a multiplication operator on an uncountable domain. Examples of these are the Fourier and z-transforms, where the diagonalizations are multiplication operators on $\mathbb{C}^{\mathbb{R}}$ and $\mathbb{C}^{\mathbb{T}}$ respectively. Note that both sets $\mathbb{R}$ and $\mathbb{T}$ are uncountable, thus an interpretation of the transform as a basis expansion is not possible. Nonetheless, multiplication operators provide the necessary generalization of diagonal matrices.

$^4$ In this case the function space is $\mathbb{C}^{\mathbb{N}}$, the space of semi-infinite sequences. We need to add a Hilbert space structure in order to make sense of convergence of infinite sums, and the choice $\ell^2(\mathbb{N})$ provides additional nice properties such as Parseval's identity. We do not discuss these here.

6.2. Simultaneous Block-Diagonalization and Group Representations.

The simplest example of a non-commutative group of transformations is the so-called symmetric group $S_3$ of all permutations of ordered 3-tuples. Consider the ordered 3-tuple $(0, 1, 2)$ and the following "circular shift" and "swap" operations on it
$$\begin{aligned} I(0,1,2) &:= (0,1,2), & c(0,1,2) &:= (2,0,1), & c^2(0,1,2) &:= (1,2,0), \\ s_{01}(0,1,2) &:= (1,0,2), & s_{12}(0,1,2) &:= (0,2,1), & s_{20}(0,1,2) &:= (2,1,0), \end{aligned} \tag{6.3}$$
where $I$ is the identity (no permutation) operation, and $s_{ij}$ is the operation of swapping the $i$ and $j$ elements. The group operation is the composition of permutations. Note that the first three permutations $I, c, c^2$ form a subgroup isomorphic to $\mathbb{Z}_3$ and thus mutually commute. The swap and shift operations in general do not mutually commute.
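The group structure just described can be explored in a few lines of code. This is a minimal sketch under one assumed encoding: each operation in (6.3) is stored as an index tuple `p` acting on a 3-tuple `t` by `(p t)[i] = t[p[i]]`, and `compose(p, q)` means "apply `q` first, then `p`". The names `ops`, `apply_perm` and `compose` are hypothetical.

```python
from itertools import product

# Index-tuple encoding of the six operations in (6.3):
# applying p to t gives the tuple whose i-th entry is t[p[i]],
# e.g. c = (2, 0, 1) sends (0, 1, 2) to (2, 0, 1).
ops = {
    "I": (0, 1, 2), "c": (2, 0, 1), "c2": (1, 2, 0),
    "s01": (1, 0, 2), "s12": (0, 2, 1), "s20": (2, 1, 0),
}


def apply_perm(p, t):
    """Apply the operation encoded by p to the tuple t."""
    return tuple(t[i] for i in p)


def compose(p, q):
    """Encoding of the composite operation 'apply q first, then p'."""
    return apply_perm(p, q)


elements = list(ops.values())

# Closure: composing any two of the six operations gives one of the six.
closed = all(compose(p, q) in elements for p, q in product(elements, repeat=2))

# The three circular shifts I, c, c^2 commute with each other ...
shifts = [ops["I"], ops["c"], ops["c2"]]
shifts_commute = all(compose(p, q) == compose(q, p)
                     for p, q in product(shifts, repeat=2))

# ... but a shift and a swap need not commute.
shift_swap_commute = compose(ops["c"], ops["s01"]) == compose(ops["s01"], ops["c"])

print(closed, shifts_commute, shift_swap_commute)  # prints: True True False
```

Closure together with the presence of the identity and of inverses (each swap is its own inverse, and `c` and `c2` invert each other) is what makes the six operations a group.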
A little investigation shows that the six elements $\{I, c, c^2, s_{01}, s_{12}, s_{20}\} = S_3$ do indeed form the group of all permutations of a 3-tuple.

A representation of a group is an isomorphism between the group and a set of matrices (or linear operators), with the composition operation between them being standard matrix multiplication. With a slight abuse of notation (where we use the same symbol for the group element and its representer) we have the following representation of $S_3$ as linear operators on $\mathbb{R}^3$ (i.e. as matrices on 3-vectors)
$$\begin{aligned} I &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}, & c &= \begin{bmatrix} 0 & 0 & 1 \\ 1 & 0 & 0 \\ 0 & 1 & 0 \end{bmatrix}, & c^2 &= \begin{bmatrix} 0 & 1 & 0 \\ 0 & 0 & 1 \\ 1 & 0 & 0 \end{bmatrix}, \\ s_{01} &= \begin{bmatrix} 0 & 1 & 0 \\ 1 & 0 & 0 \\ 0 & 0 & 1 \end{bmatrix}, & s_{12} &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 1 \\ 0 & 1 & 0 \end{bmatrix}, & s_{20} &= \begin{bmatrix} 0 & 0 & 1 \\ 0 & 1 & 0 \\ 1 & 0 & 0 \end{bmatrix}. \end{aligned} \tag{6.4}$$
Those matrices acting on a vector $(x_0, x_1, x_2)$ will permute the elements of that vector according to (6.3); for example, $c\,(x_0, x_1, x_2) = (x_2, x_0, x_1)$.

Now we ask the question: can the set of matrices in $S_3$ (identified as (6.4)) be simultaneously diagonalized? Recall that commutativity is a necessary condition for simultaneous diagonalizability, and since this set is not commutative, the answer is no. The failure of commutativity can be seen from the following easily established relation between shifts and swaps
$$c\, s_{kl} = s_{k+1,\,l+1}\, c, \quad k, l \in \mathbb{Z}_3$$
(i.e. the arithmetic for $k + 1$ and $l + 1$ should be done in $\mathbb{Z}_3$). The lack of commutativity precludes simultaneous diagonalizability. However, it is possible to simultaneously block-diagonalize all elements of $S_3$ so they all have the following block-diagonal form
$$\begin{bmatrix} * & 0 & 0 \\ 0 & * & * \\ 0 & * & * \end{bmatrix}. \tag{6.5}$$
In some sense, this is the simplest form one can hope for when analyzing all members of $S_3$ (and the algebra generated by it).

This block diagonalization does indeed reduce the complexity of analyzing a set of $3 \times 3$ matrices to analyzing sets of at most $2 \times 2$ matrices. While this might not seem significant at first, it can be immensely useful in certain cases.
Imagine for example doing symbolic calculations with $3 \times 3$ matrices. This typically yields unwieldy formulas. A reduction to symbolic calculations for $2 \times 2$ matrices can give significant simplifications. Another case is when infinite-dimensional operators can be block diagonalized with finite-dimensional blocks. This is the case when one uses Spherical Harmonics to represent rotationally invariant differential operators. In that case the representation has finite-dimensional blocks, though with increasing size.

Block Diagonalization and Invariant Subspaces. Let's first examine how block diagonalization can be interpreted geometrically. Given an operator $A : V \to V$ on a vector space, we say that a subspace $V_o \subseteq V$ is $A$-invariant if $A V_o \subseteq V_o$ (i.e. for any $v \in V_o$, $Av \in V_o$). Note that the span of any eigenvector of $A$ (the so-called eigenspace) is an invariant subspace of dimension 1. Finding invariant subspaces is equivalent to block triangularization. Let $V_1$ be some complement of $V_o$ (i.e. $V = V_o \oplus V_1$), then with respect to that decomposition, $A$ has the form
$$A = \begin{bmatrix} A_{11} & A_{12} \\ 0 & A_{22} \end{bmatrix}. \tag{6.6}$$
Note that in general, the complement subspace will not be $A$-invariant. If it were, then $A_{12} = 0$ above, and that form of $A$ would be block diagonal. Thus block diagonalization amounts to finding an $A$-invariant subspace $V_o$, as well as a complement $V_1$ of it such that $V_1$ is also $A$-invariant.

Now observe the following facts, which are immediately obvious (at least for matrices) from the form (6.6). If $A$ is invertible, then $V_o$ is also $A^{-1}$-invariant since the inverse of an upper-block-triangular matrix is also upper-block-triangular. If we choose $V_1 = V_o^\perp$, the orthogonal complement of $V_o$, then $V_o$ is $A$-invariant iff $V_o^\perp$ is $A^*$-invariant (this can be seen from (6.6) by observing that $A^*$ is block-lower-triangular). Finally, in the special case that $A$ is unitary (i.e.
$AA^* = A^*A = I$, and therefore $A^{-1} = A^*$), it follows from the previous two observations that the orthogonal complement $V_o^\perp$ of any $A$-invariant subspace $V_o$ is automatically $A$-invariant. Therefore, for unitary matrices, block triangularization is equivalent to block diagonalization, which can be done by finding invariant subspaces and their orthogonal complements.

Block Diagonalization of $S_3$. Now we return to the matrices of $S_3$ (6.4) and show how they can be simultaneously block diagonalized. Note that all the matrices are unitary, and therefore once all the common invariant subspaces are found, they are guaranteed to be mutually orthogonal. The easiest one to find is the span of the vector $(1, 1, 1)$. Note that it is an eigenvector of all members of $S_3$ with eigenvalue 1 (since obviously any permutation of the elements of this vector produces the same vector again). This is an eigenspace of dimension 1. There is not another shared eigenspace of dimension 1 since then we would have simultaneous diagonalizability, and we know that is precluded by the lack of commutativity. We thus have to simply find the 2-dimensional orthogonal complement of the span of $(1, 1, 1)$. There are several choices for its basis. One of them is as follows
$$v_1 := \begin{bmatrix} 1 \\ 1 \\ 1 \end{bmatrix}, \qquad v_2 := \begin{bmatrix} -1 \\ 0 \\ 1 \end{bmatrix}, \qquad v_3 := \begin{bmatrix} 0 \\ -1 \\ 1 \end{bmatrix}.$$
Notice that $v_2$ and $v_3$ are both orthogonal to $v_1$ (though not to each other, since $v_2 \cdot v_3 = 1$), so they span the orthogonal complement of $v_1$. A direct calculation then gives the elements of $S_3$ in this new basis as
$$\begin{aligned} c &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & -1 & -1 \\ 0 & 1 & 0 \end{bmatrix}, & c^2 &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 1 \\ 0 & -1 & -1 \end{bmatrix}, & s_{01} &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 1 \\ 0 & 1 & 0 \end{bmatrix}, \\ s_{12} &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & -1 & -1 \end{bmatrix}, & s_{20} &= \begin{bmatrix} 1 & 0 & 0 \\ 0 & -1 & -1 \\ 0 & 0 & 1 \end{bmatrix}, \end{aligned} \tag{6.7}$$
which are all indeed of the form (6.5).

It is more common in the literature to perform the above analysis in the language of group representations, specifically as decomposing a given representation into its component irreducible representations.
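The simultaneous block diagonalization above can be checked mechanically. The sketch below is an illustration under stated assumptions: each permutation of a 3-vector is encoded as an index tuple `p` acting by `(p x)[i] = x[p[i]]` (so `(2, 0, 1)` is the shift $c$ of (6.3)), exact rational arithmetic is used, and the helper names are hypothetical. It expresses every permutation in the basis $v_1, v_2, v_3$ and tests for the zero pattern (6.5).

```python
from fractions import Fraction
from itertools import permutations

# Basis from the text: v1 = (1, 1, 1), v2 = (-1, 0, 1), v3 = (0, -1, 1).
BASIS = [(1, 1, 1), (-1, 0, 1), (0, -1, 1)]


def permute(p, x):
    """Action of the permutation matrix encoded by p: entry i of the result is x[p[i]]."""
    return tuple(x[i] for i in p)


def coords(y):
    """Coefficients (alpha, a, b) such that y = alpha*v1 + a*v2 + b*v3.

    Since alpha*v1 + a*v2 + b*v3 = (alpha - a, alpha - b, alpha + a + b),
    the entries of y sum to 3*alpha, after which a and b follow directly.
    """
    alpha = Fraction(sum(y), 3)
    return (alpha, alpha - y[0], alpha - y[1])


def in_new_basis(p):
    """3x3 matrix (list of rows) of the permutation p in the basis v1, v2, v3."""
    columns = [coords(permute(p, v)) for v in BASIS]
    return [[columns[j][i] for j in range(3)] for i in range(3)]


def has_block_form(M):
    """True if M has the zero pattern of (6.5): a 1x1 block and a 2x2 block."""
    return M[0][1] == M[0][2] == M[1][0] == M[2][0] == 0


all_block_diagonal = all(has_block_form(in_new_basis(p))
                         for p in permutations(range(3)))
print(all_block_diagonal)  # prints: True
```

Calling `in_new_basis` on a particular index tuple returns the corresponding $3 \times 3$ matrix, which can be compared entry by entry with the matrices listed in the text.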
Block diagonalization is then an observation about the matrix form that the representation takes after that decomposition. For the student proficient in linear algebra, but perhaps not as familiar with group theory, a more natural motivation is to start, as done above, from the block-diagonalization problem as the goal, and then use group representations as a tool to arrive at that goal.

What has been done above can be restated using group representations as follows. A representation of a group $G$ is a group homomorphism $\rho : G \to \mathrm{GL}(V)$ into the group $\mathrm{GL}(V)$ of invertible linear transformations of a vector space $V$. Assume for simplicity that $G$ is finite, $\rho$ is injective, $V$ is finite dimensional, and that all transformations $\rho(G)$ are unitary. The matrices (6.4) of $S_3$ are in fact the images of an injective, unitary homomorphism $\rho : S_3 \to \mathrm{GL}(3)$ into the group of all non-singular transformations of $\mathbb{R}^3$. A representation is said to be irreducible if there are no non-trivial invariant subspaces common to all transformations $\rho(G)$. In other words, the elements of $\rho(G)$ cannot all be simultaneously block diagonalized. As we demonstrated, (6.4) is indeed reducible.

More formally, let $\rho_i : G \to \mathrm{GL}(V_i)$, $i = 1, 2$ be two given representations. Their direct sum $\rho_1 \oplus \rho_2 : G \to \mathrm{GL}(V_1 \oplus V_2)$ is the representation formed by the "block diagonal" operator
$$\begin{bmatrix} \rho_1(g) & 0 \\ 0 & \rho_2(g) \end{bmatrix}, \tag{6.8}$$
with the obvious generalization to more than two representations. If a representation is reducible, then the existence of a common invariant subspace means that it can be written as the direct sum of so-called "subrepresentations" as in (6.8). Thus simultaneous block-diagonalization into the smallest dimension blocks is equivalent to the decomposition of a given representation into the direct sum of irreducible representations.
This is what we have done for the representation (6.4) of $S_3$ by finding the two common invariant subspaces (which contain no proper further subspaces that are invariant) and thus bringing all of them into the block diagonal form (6.7). In general, it is a fact that any representation of a finite group (more generally, of a compact group) can be decomposed as the direct sum of irreducible representations [5, 6].

REFERENCES

[1] A. V. Oppenheim, A. S. Willsky, and S. H. Nawab, "Signals and systems, vol. 2," Prentice-Hall Englewood Cliffs, NJ, vol. 6, no. 7, p. 10, 1983.
[2] R. Plymen, "Noncommutative Fourier analysis," 2010.
[3] M. E. Taylor and J. Carmona, Noncommutative harmonic analysis. American Mathematical Soc., 1986, no. 22.
[4] W. Rudin, Fourier analysis on groups. Courier Dover Publications, 2017.
[5] P. Diaconis, "Group representations in probability and statistics," Lecture Notes-Monograph Series, vol. 11, pp. i–192, 1988.
[6] J.-P. Serre, Linear representations of finite groups. Springer Science & Business Media, 2012, vol. 42.

Appendix A. Exercises.

A.1. Circulant Structure. Show that any matrix $M$ that commutes with the shift operator $S$ must be a circulant matrix, i.e. must have the structure shown in (1.1), or equivalently (3.1).

Answer: Starting from the relation $SM = MS$, and using the definition $(S)_{ij} = \delta_{i-j-1}$, compute
$$\begin{aligned} (SM)_{ij} &= \sum_l S_{il}\, (M)_{lj} = \sum_l \delta_{i-l-1}\, (M)_{lj} = (M)_{i-1,\,j}, \\ (MS)_{ij} &= \sum_l (M)_{il}\, S_{lj} = \sum_l (M)_{il}\, \delta_{l-j-1} = (M)_{i,\,j+1}. \end{aligned}$$
Note that since the indices $i - j - 1$ of the Kronecker delta are to be interpreted using modular arithmetic, then the indices $i - 1$ and $j + 1$ of $M$ above should also be interpreted with modular arithmetic.
The statements
$$(M)_{i-1,\,j} = (M)_{i,\,j+1} \quad \Leftrightarrow \quad (M)_{i-1,\,j-1} = (M)_{i,\,j} \quad \Leftrightarrow \quad (M)_{i,\,j} = (M)_{i+1,\,j+1}$$
then mean that each column is obtained from the previous column by a circular shift of it. Alternatively, the last statement above implies that for any $k$, $(M)_{i,j} = (M)_{i+k,\,j+k}$, i.e. that entries of $M$ are constant along "diagonals". Now take the first column of $M$ as $m_i := (M)_{i,0}$, then
$$(M)_{ij} = (M)_{i-j,\,j-j} = (M)_{i-j,\,0} = m_{i-j}.$$
Thus all entries of $M$ are obtained from the first column by circular shifts as in (3.1).

A.2. Coprime Powers of the Shift. Show that an $n \times n$ matrix $M$ is circulant iff it commutes with $S^p$ where $(p, n)$ are coprime.

Answer: If $M$ is circulant, then it commutes with $S$ and also commutes with any of its powers $S^p$. The other direction is more interesting. The basic underlying fact for this conclusion has to do with modular arithmetic in $\mathbb{Z}_n$. If $(p, n)$ are coprime, then there are integers $a$, $b$ that satisfy the Bezout identity
$$ap + bn = 1,$$
which also implies that $ap$ is equivalent to $1 \bmod n$ since $ap = 1 - bn$, i.e. it is equal to a multiple of $n$ plus 1. Therefore, there exists a power of $S^p$, namely $S^{ap}$, such that
$$S^{ap} = S. \tag{A.1}$$
Thus if $M$ commutes with $S^p$, then it commutes with all of its powers, and in particular with $S^{ap} = S$, i.e. it commutes with $S$, which is the condition for $M$ being circulant.

Equation (A.1) has a nice geometric interpretation. $S^p$ is a rotation of the circle in Figure 3.3 by $p$ steps. If $p$ and $n$ were not coprime, then regardless of how many times the rotation $S^p$ is repeated, there will be some elements of the discrete circle that are not reachable from the 0 element by these rotations (examine also Figure 4.1 for an illustration of this). The condition that $p$ and $n$ are coprime ensures that there is some repetition of the rotation $S^p$, namely $(S^p)^a$, which gives the basic rotation $S$.
Repetitions of $S$ then of course generate all possible rotations on the discrete circle. In other words, $p$ and $n$ being coprime ensures that by repeating the rotation $S^p$, all elements of the discrete circle are eventually reachable from 0.

A.3. Commutativity and Associativity. Show that circular convolution (3.6) is commutative and associative.

Answer: Commutativity: Follows from
$$(a \star b)_k = \sum_l a_l\, b_{k-l} = \sum_j a_{k-j}\, b_j = (b \star a)_k,$$
where we used the substitution $j = k - l$ (and consequently $l = k - j$).

Associativity: First note that $(b \star c)_i = \sum_j b_j\, c_{i-j}$, and compare
$$\begin{aligned} (a \star (b \star c))_k &= \sum_l a_l\, (b \star c)_{k-l} = \sum_l a_l \sum_j b_j\, c_{k-l-j} = \sum_{l,j} a_l\, b_j\, c_{k-l-j}, \\ ((a \star b) \star c)_k &= \sum_j (a \star b)_j\, c_{k-j} = \sum_j \left( \sum_l a_l\, b_{j-l} \right) c_{k-j} = \sum_{l,j} a_l\, b_{j-l}\, c_{k-j}. \end{aligned}$$
Relabeling $j - l =: i$ (and therefore $j = l + i$) in the second sum makes it
$$\sum_{l,i} a_l\, b_i\, c_{k-(l+i)} = \sum_{l,i} a_l\, b_i\, c_{k-l-i},$$
which is exactly the first sum, but with a different labeling of the indices.
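The two identities of Exercise A.3 can also be tested on random data as a complement to the index manipulations above. This is a minimal sketch with a hypothetical helper `circ_conv` and integer entries, so that equality can be tested exactly; the seed and vector length are arbitrary choices.

```python
import random


def circ_conv(a, b):
    """Circular convolution: (a * b)_k = sum_l a_l b_{k-l}, indices taken mod n."""
    n = len(a)
    return [sum(a[l] * b[(k - l) % n] for l in range(n)) for k in range(n)]


random.seed(0)  # fixed seed only to make the run reproducible
n = 7
a, b, c = ([random.randint(-5, 5) for _ in range(n)] for _ in range(3))

commutative = circ_conv(a, b) == circ_conv(b, a)
associative = circ_conv(a, circ_conv(b, c)) == circ_conv(circ_conv(a, b), c)
print(commutative, associative)  # prints: True True
```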
